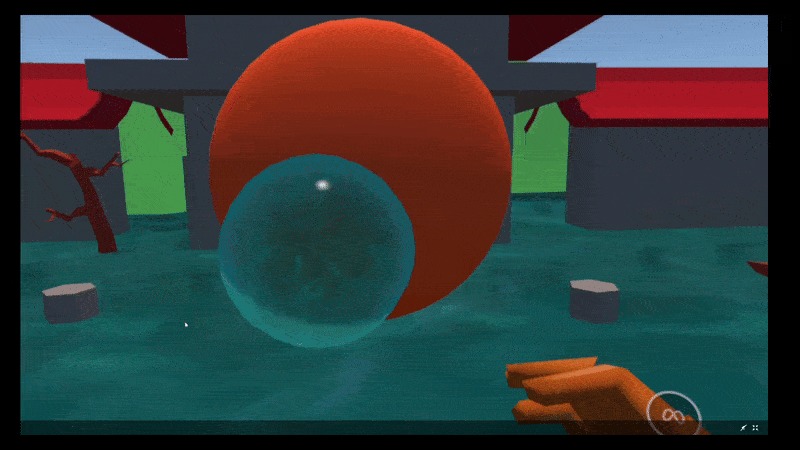

Water-bending

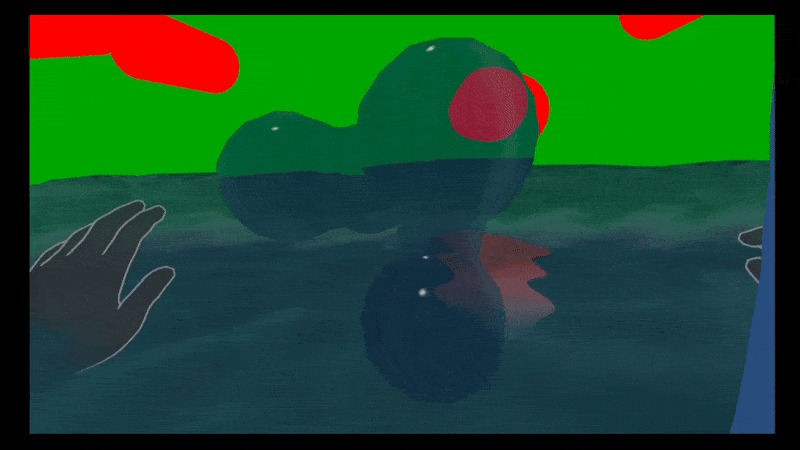

0 - My earliest "meta-water" prototype. Tiny render space (~0.5m^3). Very limited.

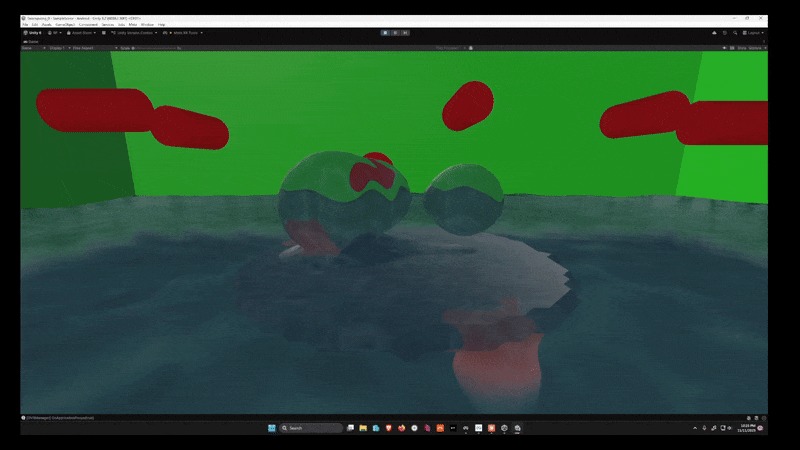

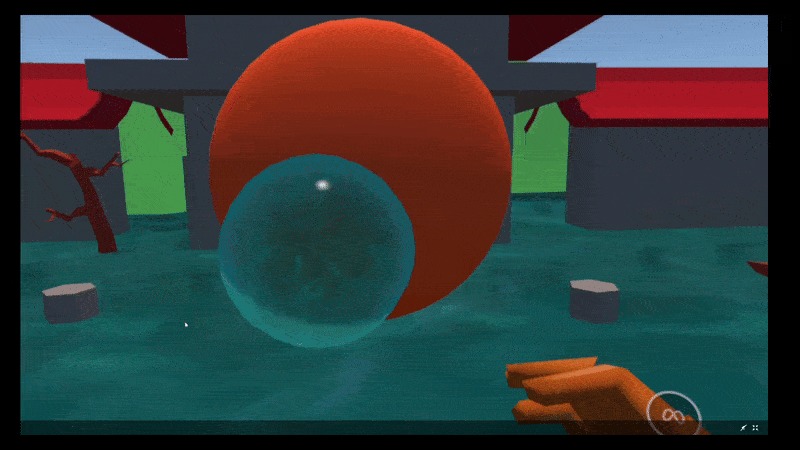

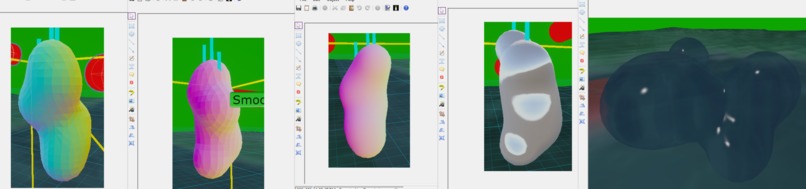

1 - "Welding" the marching cubes geometry together to get smooth normals.

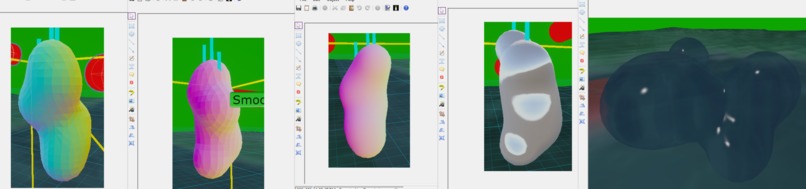

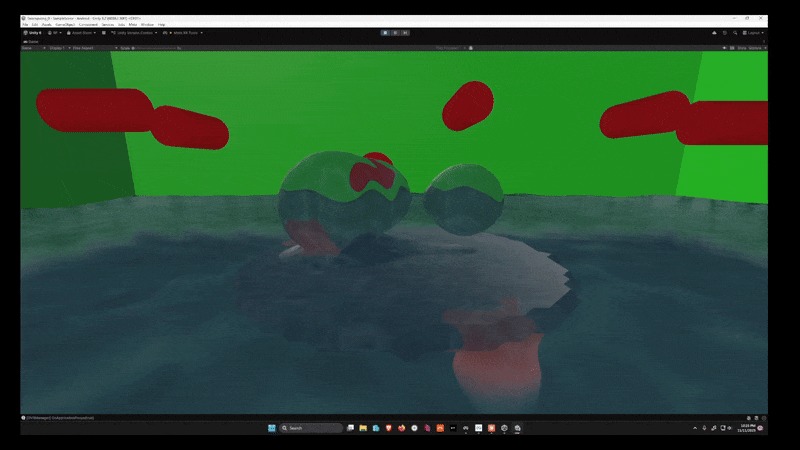

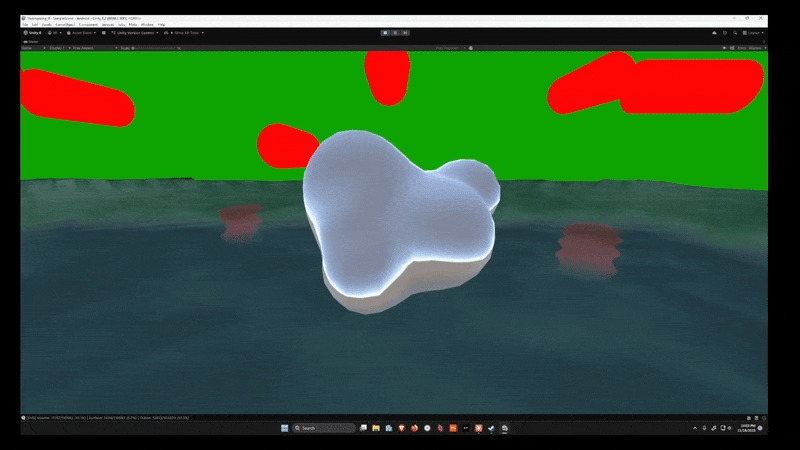

2 - smoothed, floating metawater. Still a limited rendering space (~1x1x1m).

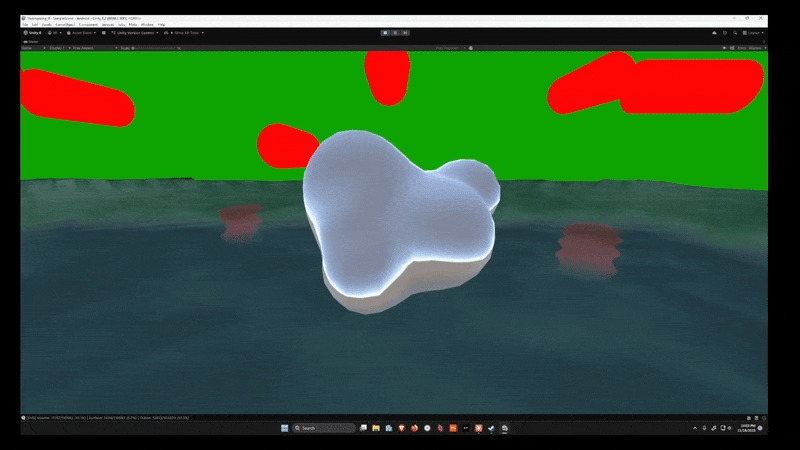

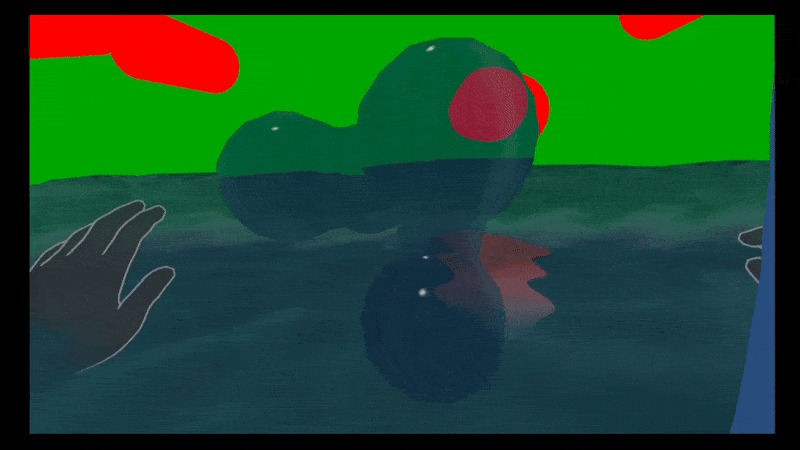

3 - Now we're cooking! No improvement in render volume limits (still stuck in a ~2m^3 box), but we can hold it.

Inspiration

I spent the first two weeks building a dynamic water system for a different idea centered around music, but the water was too expensive to run for anything fun. I gave up and started a simple "pet koi fish" app. Eventually I had a breakthrough in optimization: chunking (like in Minecraft). My water suddenly had effectively unlimited range and much more potential.
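To make the chunking idea concrete, here is a minimal, engine-agnostic sketch in Python. It is not the project's Unity code. It assumes the water is described by spherical blobs and that the world is split into fixed-size cubic chunks keyed by integer coordinates; `CHUNK_SIZE`, `chunk_key`, `chunks_for_blob`, and `active_chunks` are all hypothetical names and values chosen for illustration. The point it shows is that only chunks the water actually touches need to be sampled and meshed, so cost follows the amount of water rather than the size of the play space.

```python
import math
from typing import Iterable, Set, Tuple

Vec3 = Tuple[float, float, float]
ChunkKey = Tuple[int, int, int]

# Edge length of one cubic chunk in metres (assumed value, not from the project).
CHUNK_SIZE = 0.5


def chunk_key(p: Vec3, size: float = CHUNK_SIZE) -> ChunkKey:
    """Map a world-space point to the integer coordinates of its chunk."""
    return (math.floor(p[0] / size),
            math.floor(p[1] / size),
            math.floor(p[2] / size))


def chunks_for_blob(center: Vec3, radius: float,
                    size: float = CHUNK_SIZE) -> Set[ChunkKey]:
    """Return every chunk overlapped by the blob's axis-aligned bounding box."""
    lo = chunk_key((center[0] - radius, center[1] - radius, center[2] - radius), size)
    hi = chunk_key((center[0] + radius, center[1] + radius, center[2] + radius), size)
    return {
        (x, y, z)
        for x in range(lo[0], hi[0] + 1)
        for y in range(lo[1], hi[1] + 1)
        for z in range(lo[2], hi[2] + 1)
    }


def active_chunks(blobs: Iterable[Tuple[Vec3, float]],
                  size: float = CHUNK_SIZE) -> Set[ChunkKey]:
    """Union of chunks touched by any blob; only these would be meshed."""
    active: Set[ChunkKey] = set()
    for center, radius in blobs:
        active |= chunks_for_blob(center, radius, size)
    return active


if __name__ == "__main__":
    # Two small blobs roughly 100 m apart activate one chunk each,
    # and nothing in between is allocated.
    blobs = [((0.25, 0.25, 0.25), 0.1), ((100.25, 0.25, -3.75), 0.1)]
    print(sorted(active_chunks(blobs)))
```

In a sketch like this, the set of active chunks would be recomputed as the water moves, and a mesher (marching cubes, in this project's case) would run only on chunks that entered the set or whose contents changed.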

So I changed direction on Nov 28th to roll it into an experience about attacking koi fish using water-bending (in the style of "Fruit Ninja with depth"). I particularly want players to be able to express fluid, natural, arcing movements and land timed, aligned strikes on targets. I think that was the best part of Fruit Ninja.

What it does

It is a [hands-only] water-bending koi fish simulation. You use your conveniently articulated fin to control a dynamic volume of water and attack other, non-water-bending koi fish as they jump from the pond. It currently supports control of only a single "serpent" (dynamic water entity), but I think there's room for two in the future. For now, just touch the sphere next to you in game.

How I built it

Frantically, with Unity (Meta SDK, particularly the Body SDK and Interaction SDK). Assets were made in Blender and 3DCoat. 98% of the work was programming, though, and the workflow was heavily AI-assisted for quick translation of design concepts into half-working, often unsuitable prototypes that I iterated on.

Challenges I ran into

Mostly optimization and complexity problems, like the dynamic water mesh I mentioned, but also some around "biomechanics" in VR. When building the "water serpent" hand-control system, the player's hand would often obstruct their own view of the serpent due to how movement is mapped. My solution was to use the Body SDK to give me a wrist bone angle to measure against the hand. This allows the player to bend their wrist to nudge the serpent in any direction, so they can better "drive" it to orbit around their hand.
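The wrist-bend idea can be sketched roughly as follows, in plain Python rather than Unity C#. This is an illustration under stated assumptions, not the project's implementation: it assumes the forearm and hand directions have already been read from tracked bones as 3D vectors (no Body SDK calls are shown), and the function name `wrist_nudge`, the dead zone, and the maximum bend angle are all hypothetical. It measures the angle between the two directions and returns a nudge that points the way the wrist is bent, with strength growing as the bend increases.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _normalize(a: Vec3) -> Vec3:
    length = math.sqrt(_dot(a, a))
    if length < 1e-9:
        return (0.0, 0.0, 0.0)
    return _scale(a, 1.0 / length)


def wrist_nudge(forearm_dir: Vec3, hand_dir: Vec3,
                dead_zone_deg: float = 5.0,   # assumed: ignore tiny bends
                max_deg: float = 45.0) -> Vec3:  # assumed: bend for full strength
    """Return a nudge vector perpendicular to the forearm, with length 0..1."""
    f = _normalize(forearm_dir)
    h = _normalize(hand_dir)
    cos_angle = max(-1.0, min(1.0, _dot(f, h)))
    angle = math.degrees(math.acos(cos_angle))
    if angle <= dead_zone_deg:
        return (0.0, 0.0, 0.0)
    # The part of the hand direction that is perpendicular to the forearm
    # points in the direction the wrist is bent.
    bend_dir = _normalize(_sub(h, _scale(f, _dot(f, h))))
    strength = min(1.0, (angle - dead_zone_deg) / (max_deg - dead_zone_deg))
    return _scale(bend_dir, strength)


if __name__ == "__main__":
    forearm = (0.0, 0.0, 1.0)
    bent_up = (0.0, math.sin(math.radians(25)), math.cos(math.radians(25)))
    print(wrist_nudge(forearm, bent_up))
```

A control loop could add the returned vector, scaled by some speed, to the serpent's target position each frame. The dead zone is there so that a relaxed, nearly straight wrist does not cause drift.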

Accomplishments that I'm proud of

It's the water bending. It still isn't great, but it's a system that supports fluid motion and forms, and it runs well. A sphere seamlessly transforms into a stream of water at the player's command, shifting form in proportion to its motion.

What we learned

VR has many challenges around gauging the user's intent. For example, we might have the headset's view direction, but that's probably not exactly where the user is looking. I gave up on predictive solutions (i.e. "aim assist") and leaned into richer, more accurate input handling that will hopefully let the player express that intent without me getting involved.

What's next for Koi Fu

There are so many things I didn't have time to check, and I'm embarrassed by the state of the submission. It took well over 100 hours to stabilize the water-bending framework, so I'm definitely excited to build the easier, fun things that can now be expressed in that framework. I'll likely be ready to return to it in a week. I just need a breather.

Built With

- ai

- c#

- hands

- keyboard
