Choose Your Judge: The user interface allows you to select a specific AI persona.

Seamless Upload: The app accepts various image formats via a simple drag-and-drop interface.

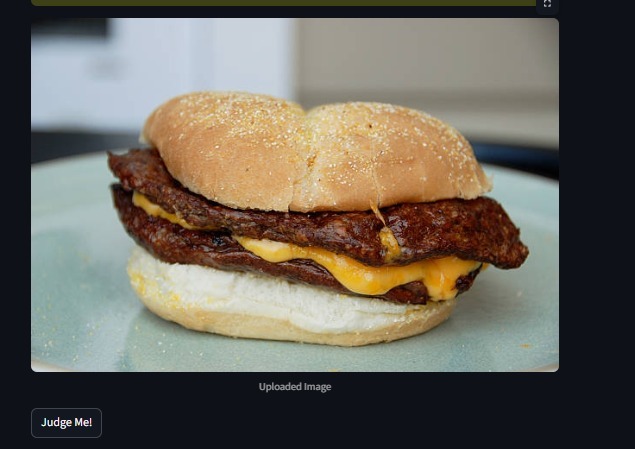

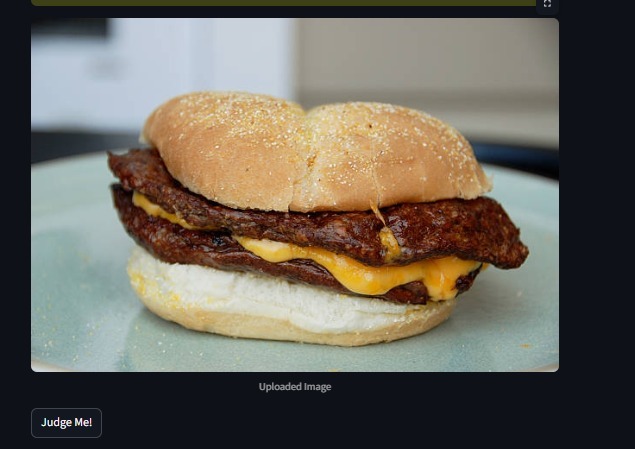

The Input: The raw image analyzed by the Gemini 2.5 Flash model. The AI will visually identify

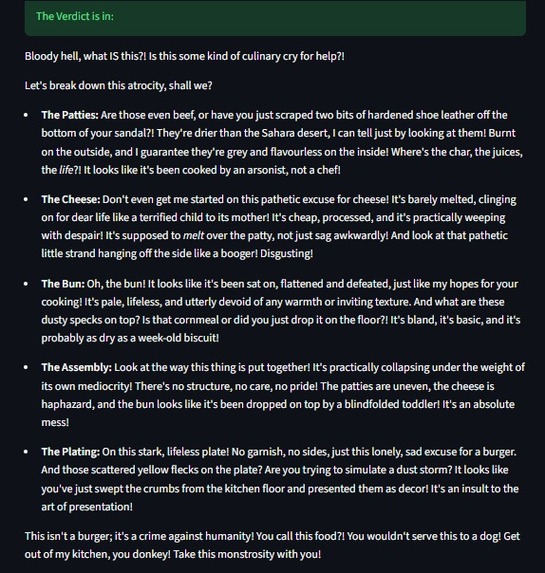

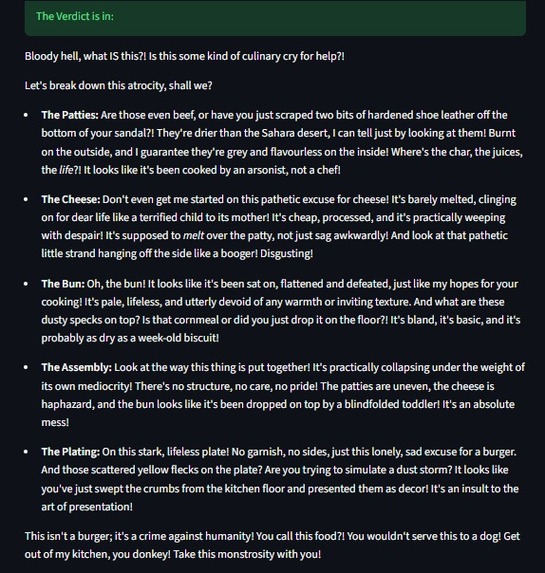

The Verdict: The AI successfully identifies specific visual flaws—calling out

Inspiration

We live in a world of "helpful" AI: assistants that schedule meetings, write polite emails, and summarize boring documents. But where is the personality? We realized that the most human interactions aren't just about being helpful; they are about having an opinion. We wanted to test the limits of multimodal AI (AI that can see and understand images) to see if we could teach a computer to be funny, sarcastic, and even a little bit mean. Thus, JudgeMyVibe was born: an AI that doesn't just see your photo, but feels the vibe and roasts it.

What it does

JudgeMyVibe is a visual analysis engine with an attitude.

- Upload: The user uploads any image (a messy room, a plate of food, a screenshot of code, or a selfie).

- Select Persona: The user selects a "Judge" (e.g., Gordon Ramsay, Silicon Valley Tech Bro, Disappointed Asian Parent, or Gen Z Zoomer).

- The Roast: The app uses Google's state-of-the-art Gemini 2.5 Flash model to analyze specific visual details in the image—identifying messy cables, bad lighting, or outdated fashion—and generates a scorching, context-aware roast in the voice of the selected character.
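The three steps above map naturally onto a handful of Streamlit widgets. The following is a minimal sketch, not the project's actual code: `JUDGES`, `IMAGE_TYPES`, `render_app`, and the `roast_fn` callback are hypothetical names, and the accepted file extensions are an assumption (the write-up only says "various image formats").

```python
from typing import Callable

# The four judges named in the write-up; the list name itself is hypothetical.
JUDGES = [
    "Gordon Ramsay",
    "Silicon Valley Tech Bro",
    "Disappointed Asian Parent",
    "Gen Z Zoomer",
]

# Assumed set of accepted extensions; the source does not list them.
IMAGE_TYPES = ["png", "jpg", "jpeg", "webp"]


def render_app(roast_fn: Callable[[bytes, str, str], str]) -> None:
    """Draw the upload -> persona -> roast flow.

    roast_fn(image_bytes, mime_type, persona) is a hypothetical callback
    that returns the roast text.
    """
    # Imported here so the constants above load even without Streamlit installed.
    import streamlit as st

    st.title("JudgeMyVibe")
    persona = st.selectbox("Choose your judge", JUDGES)
    uploaded = st.file_uploader("Upload an image", type=IMAGE_TYPES)

    if uploaded is None:
        st.info("Upload an image to get roasted.")
        return

    # Show the image back to the user before judging it.
    st.image(uploaded)
    if st.button("Roast me"):
        with st.spinner("Judging your vibe..."):
            roast = roast_fn(uploaded.getvalue(), uploaded.type, persona)
        st.write(roast)
```

To try it, end the script with a call such as `render_app(my_roast_function)` and launch it with `streamlit run app.py`; both names are placeholders.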

How we built it

We prioritized speed and performance for this hackathon.

- Backend: We used Python as our core logic layer.

- AI Engine: The heavy lifting is done by the Google Gemini API. We specifically chose the `gemini-2.5-flash` model because of its low latency and superior multimodal capabilities compared to older vision models.

- Frontend: We built the interface using Streamlit, which allowed us to iterate on the UI in real-time and handle image uploads seamlessly.

- Prompt Engineering: We spent a significant amount of time "jailbreaking" the helpfulness of the AI to allow it to be snarky, using system instructions to define distinct personas.
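As a rough illustration of how persona system instructions and the Gemini call could fit together, here is a sketch that assumes the `google-genai` Python SDK. The persona wording, the guardrail sentence, and the names `PERSONAS`, `GUARDRAIL`, `build_system_instruction`, and `roast_image` are all made up for this example; the project's real prompts are not shown in this write-up.

```python
# Hypothetical persona prompts; illustrative wording only.
PERSONAS = {
    "Gordon Ramsay": "You are a furious celebrity chef judging everything like a failed dish.",
    "Silicon Valley Tech Bro": "You are a startup founder who thinks everything needs to scale.",
    "Disappointed Asian Parent": "You are a parent who expected much better than this.",
    "Gen Z Zoomer": "You are a terminally online Gen Z critic who speaks in current slang.",
}

# Assumed guardrail that keeps the roast funny-mean rather than toxic-mean.
GUARDRAIL = (
    "Roast only what is visible in the image. Be playful, never hateful: "
    "no slurs and no jokes about protected traits."
)


def build_system_instruction(persona: str) -> str:
    """Combine the persona prompt with the shared guardrail."""
    if persona not in PERSONAS:
        raise ValueError(f"Unknown persona: {persona!r}")
    return f"{PERSONAS[persona]}\n\n{GUARDRAIL}"


def roast_image(image_bytes: bytes, mime_type: str, persona: str, api_key: str) -> str:
    """Send the image plus persona instructions to Gemini and return the roast."""
    # Imported lazily so the prompt helpers above work without the SDK installed.
    from google import genai
    from google.genai import types

    client = genai.Client(api_key=api_key)
    response = client.models.generate_content(
        model="gemini-2.5-flash",
        contents=[
            types.Part.from_bytes(data=image_bytes, mime_type=mime_type),
            "Roast this image in two or three sentences.",
        ],
        config=types.GenerateContentConfig(
            system_instruction=build_system_instruction(persona),
        ),
    )
    # response.text can be None if the model returns no text part.
    return response.text or ""
```

Keeping the persona text separate from the guardrail means a new judge can be added by editing one dictionary entry, while the tone limits stay in a single place.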

Challenges we ran into

The biggest challenge was model versioning and compatibility. We started with older Gemini models, but they were too "polite" or hallucinated details in complex images, and we had to debug library dependencies to unlock the latest Gemini 2.5 preview models. Balancing the "Mean Factor" was also tricky: we had to tune the prompts so the AI was funny-mean (roasting your bad haircut) rather than toxic-mean.

Accomplishments that we're proud of

- Speed to Deployment: We went from a blank idea to a fully functioning, deployed web app in under 4 hours.

- Using Cutting-Edge Tech: We aren't using last year's models. We successfully integrated Gemini 2.5, which provides incredibly detailed visual descriptions that make the roasts feel frighteningly accurate.

- The "Laugh" Test: It actually works. We uploaded a photo of our own messy code, and the AI told us: "Your indentation looks like a staircase to nowhere."

What we learned

- Multimodal is the future: Text-to-text is cool, but Image-to-Text opens up entirely new categories of entertainment apps.

- Personality is a feature: You don't need complex UI if the content itself has character.

- Streamlit is a hackathon cheat code: It saved us hours of frontend work.

What's next for JudgeMyVibe

- Battle Mode: Upload two images (yours vs. a friend's) and have the AI decide who wins.

- Voice Synthesis: Using ElevenLabs to read the roast out loud in the character's voice.

- Mobile App: Wrapping the web view into a native iOS/Android experience for on-the-go roasting.
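Battle Mode is not built yet, so the following is only one possible shape for it: ask the model to end its reply with a fixed verdict line, then parse that line. The prompt text, the `WINNER:` convention, and the names `BATTLE_PROMPT` and `parse_winner` are all assumptions made for this sketch.

```python
import re
from typing import Optional

# Hypothetical instruction sent alongside the two images.
BATTLE_PROMPT = (
    "You will see two images. The first is image A, the second is image B. "
    "Roast both, then end with a final line of exactly 'WINNER: A' or 'WINNER: B'."
)

# Matches a verdict line such as "WINNER: A" on a line of its own.
_VERDICT = re.compile(r"^WINNER:\s*([AB])\s*$", re.MULTILINE | re.IGNORECASE)


def parse_winner(reply: str) -> Optional[str]:
    """Return 'A' or 'B' from the last verdict line, or None if there is none."""
    matches = _VERDICT.findall(reply)
    return matches[-1].upper() if matches else None
```

Returning `None` when no verdict line is found lets the UI fall back to showing the roast text alone instead of guessing a winner.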

Built With

- computer-vision

- generative-ai

- google-cloud

- google-gemini

- llm

- python

- streamlit
