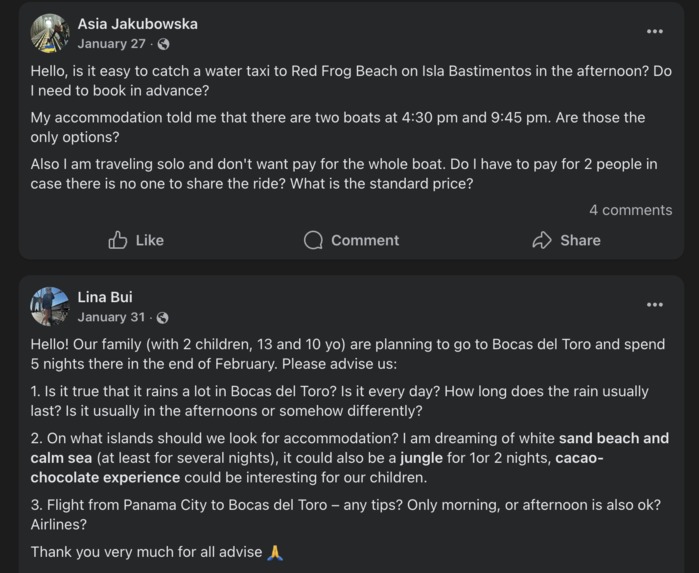

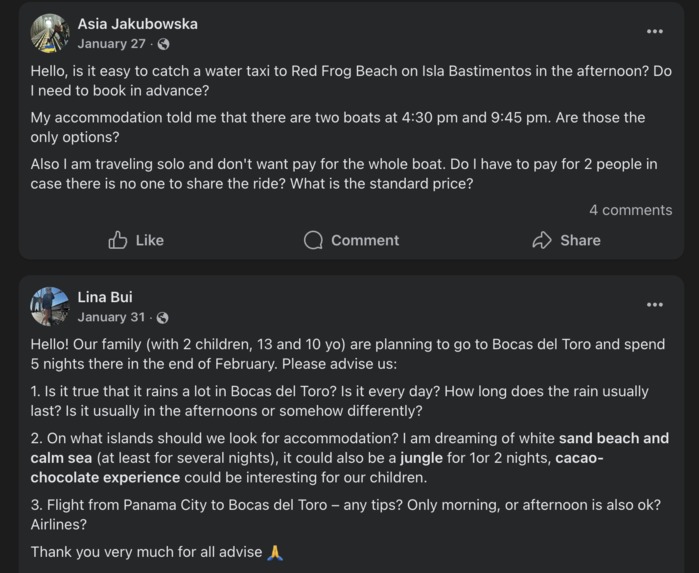

Problem 1: logistics in the Bocas del Toro archipelago can be unclear & complicated.

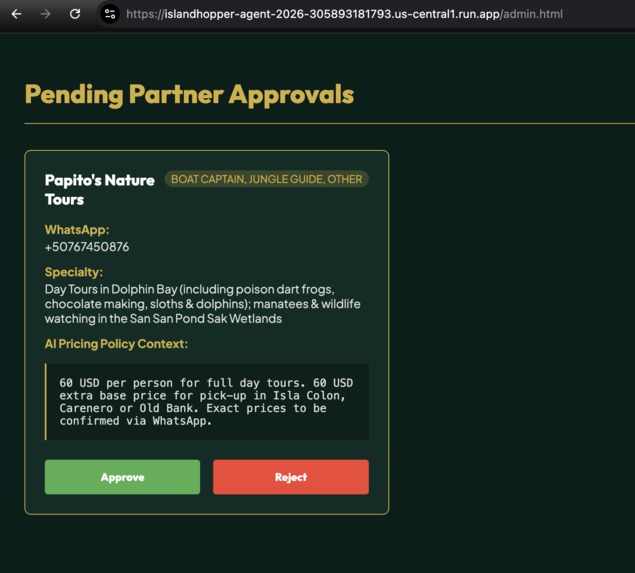

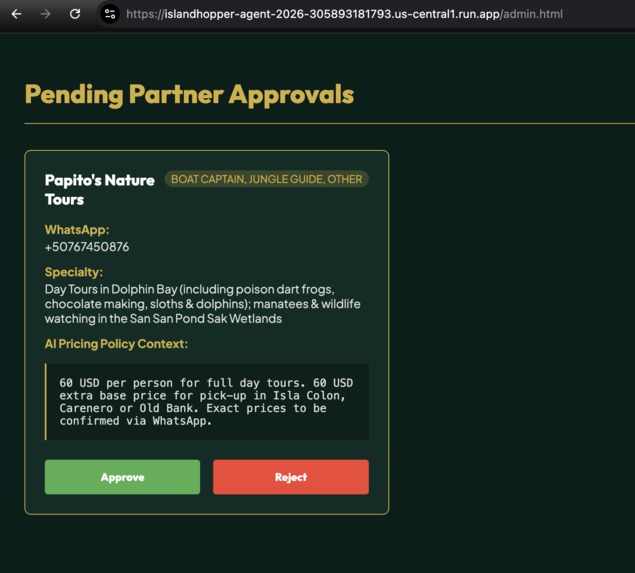

Keeping the human in the loop: we approve captain entries in the admin section.

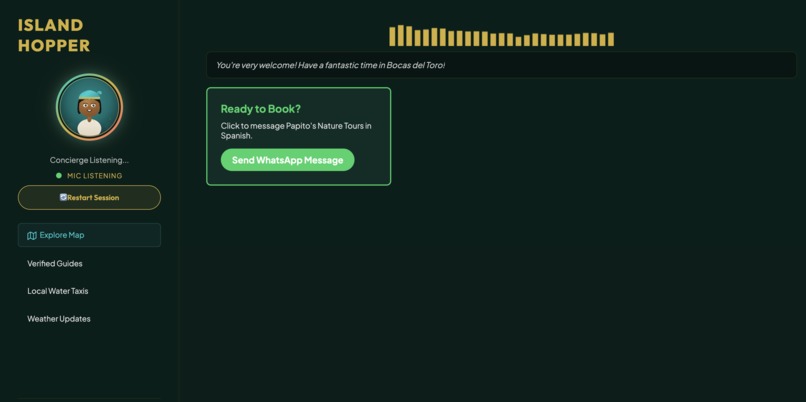

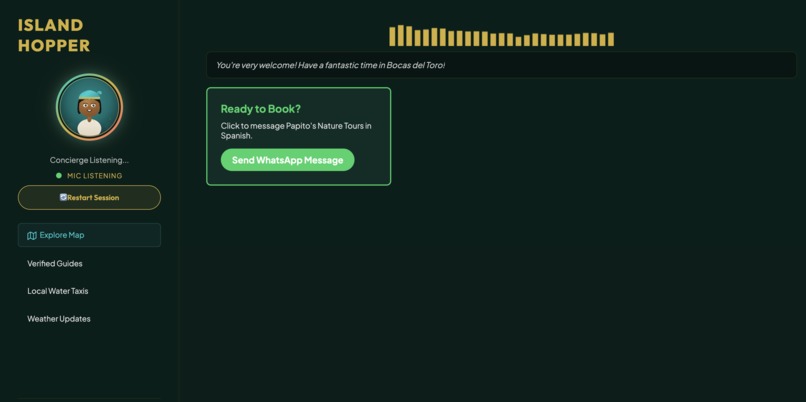

Problem 2: writing to a captain in Spanish can feel overwhelming.

Solution 2: our tool prepares the WhatsApp message

Architecture plan

Inspiration

Tourism in Bocas del Toro, Panama is broken in a specific way: the best experiences -- secret beaches, fair-priced boat captains, sloth-spotting trails -- live entirely in the heads of locals and word-of-mouth WhatsApp groups. Meanwhile, travelers land on the island and get funneled into overpriced tourist traps by hotel concierge desks that take commissions. The local captains and guides, many of whom are Afro-Caribbean or indigenous Ngäbe, have no digital presence at all. They don't have websites, they don't list on TripAdvisor, and most of them would rather talk on the phone than fill out a form.

We asked ourselves: what if an AI concierge could feel like a trusted local friend instead of a corporate chatbot? What if it could speak both English and Spanish, remember what you told it yesterday, and connect you directly to a verified captain's WhatsApp -- with your booking request already translated into Spanish and pre-filled? And what if the captains themselves could register by just talking into their phone?

That gap between "how local tourism actually works" and "what technology currently offers" is what inspired Island Hopper.

What it does

Island Hopper is a voice-first, multimodal AI concierge that sits between travelers and the local economy of Bocas del Toro.

For travelers, you open the app, tap the microphone, and start talking. The concierge greets you with a warm Afro-Caribbean personality and immediately launches a visual discovery experience -- a swipeable card deck of authentic local photos. You pick your vibe (adventure, relaxation, wildlife), swipe through spots, and the AI builds a personalized day-by-day itinerary in real time, generating images and pulling verified contacts from its local database. When you're ready to book, it translates your request into Spanish and generates a one-tap WhatsApp deep link so you can message the captain directly with zero typing and zero language barrier.
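The WhatsApp handoff can be sketched in a few lines. This is a minimal illustration, not our production code: the function name `build_whatsapp_link`, the phone number, and the sample message are placeholders. It relies only on the public `wa.me` click-to-chat format, which takes the full international number as digits and a URL-encoded `text` parameter.

```python
from urllib.parse import quote


def build_whatsapp_link(phone: str, message: str) -> str:
    """Return a wa.me deep link that opens a chat with `message` pre-filled."""
    # wa.me expects digits only: no "+", spaces, dashes, or brackets.
    digits = "".join(ch for ch in phone if ch.isdigit())
    if not digits:
        raise ValueError("phone number contains no digits")
    # Percent-encode the whole message, including spaces and accented letters.
    return f"https://wa.me/{digits}?text={quote(message, safe='')}"


# Placeholder number and message, for illustration only.
link = build_whatsapp_link(
    "+507 6000-0000",
    "Hola, quisiera reservar un tour de snorkel para 2 personas mañana.",
)
print(link)
```

Because the link is just a URL, the traveler's own WhatsApp account sends the message; nothing is sent on their behalf.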

For local captains and guides, there's a voice-native intake system. Instead of filling out forms, a captain taps a microphone button and describes their services in Spanish or English. The AI agent interviews them conversationally, extracts structured data (name, WhatsApp, pricing rules, specialties), and submits it for human review.

For administrators, there's a voice-powered review dashboard where an AI agent analyzes each submission, flags concerns (suspicious pricing, missing safety info, bad phone formats), and presents review cards with approve/reject/contact-captain actions.
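The kinds of concerns listed above can also be expressed as plain deterministic checks that run alongside the AI review. The sketch below is an assumption-laden illustration: the `CaptainSubmission` fields, the price bounds, and the phone rule (country code 507 followed by 8 digits) are hypothetical choices for the example, not the schema the app actually uses.

```python
import re
from dataclasses import dataclass, field


@dataclass
class CaptainSubmission:
    # Hypothetical record shape for a captain's intake submission.
    name: str
    whatsapp: str
    price_per_person_usd: float
    specialties: list[str] = field(default_factory=list)
    safety_notes: str = ""


def review_flags(sub: CaptainSubmission,
                 min_price: float = 5.0,
                 max_price: float = 300.0) -> list[str]:
    """Return a list of concern labels for a human reviewer to look at."""
    flags = []
    digits = re.sub(r"\D", "", sub.whatsapp)
    # Assumed rule: Panama country code 507 plus an 8-digit mobile number.
    if not re.fullmatch(r"507\d{8}", digits):
        flags.append("bad_phone_format")
    # Assumed bounds; anything outside them is flagged, not rejected.
    if not (min_price <= sub.price_per_person_usd <= max_price):
        flags.append("suspicious_pricing")
    if not sub.safety_notes.strip():
        flags.append("missing_safety_info")
    return flags


ok = CaptainSubmission("Captain A", "+507 6000-0000", 20.0,
                       ["snorkel"], "Life jackets on board")
bad = CaptainSubmission("Captain B", "12345", 900.0)
print(review_flags(ok))   # no concerns
print(review_flags(bad))  # all three concerns
```

The function only produces flags; the approve/reject decision stays with the human reviewer.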

The system also remembers returning travelers. It silently assigns a local ID, and when you disconnect, a background agent reads the conversation transcript and extracts your preferences (vegan, hates crowds, luxury budget) into a persistent profile. When you come back, the concierge already knows you.

How we built it

The backbone is a FastAPI server running on Google Cloud Run, orchestrating three distinct Gemini models through Google's Agent Development Kit (ADK).

The primary conversational engine uses Gemini Live 2.5 Flash with native audio -- this processes raw PCM audio directly without a separate speech-to-text layer, which gives us sub-second latency and the ability to detect mid-sentence language switches between English and Spanish. All three agents (Concierge, Intake, Review) run on this model over persistent WebSocket connections.

For heavy reasoning tasks like generating vetting questions for captain applications and creating cinematic itinerary summaries, we route to Gemini 3.1 Pro. For lightweight background work like extracting traveler memory from session transcripts, we use Gemini 3.1 Flash Lite.

Images come from two sources. The first is a curated multimodal RAG pipeline backed by Google Cloud Firestore, where each photo has a 768-dimensional embedding generated by Gemini Embedding 2. When a traveler asks "What does Zapatilla Island look like?", we run a cosine similarity search across the visual asset database and return a real, curated photo if the match score exceeds 0.8. The second is Imagen 3.0: if no photo clears that threshold, we fall back to generating a photorealistic 16:9 image on the fly.
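The "real photo first, generated image as fallback" decision can be sketched as follows. This is a simplified, dependency-free illustration: `pick_image` and the in-memory `assets` list are hypothetical stand-ins for the Firestore lookup, and the 3-dimensional vectors stand in for the 768-dimensional embeddings. Only the cosine similarity measure and the 0.8 threshold come from the description above.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def pick_image(query_vec, assets, threshold=0.8):
    """assets: list of (photo_id, embedding) pairs.

    Returns ("curated", photo_id, score) when the best match beats the
    threshold, otherwise ("generated", None, score) to signal the fallback.
    """
    best_id, best_score = None, -1.0
    for photo_id, embedding in assets:
        score = cosine_similarity(query_vec, embedding)
        if score > best_score:
            best_id, best_score = photo_id, score
    if best_id is not None and best_score > threshold:
        return ("curated", best_id, best_score)
    return ("generated", None, best_score)


# Toy 3-dimensional embeddings, for illustration only.
assets = [("zapatilla", [1.0, 0.0, 0.0]), ("starfish", [0.0, 1.0, 0.0])]
hit = pick_image([0.9, 0.1, 0.0], assets)
miss = pick_image([1.0, 1.0, 1.0], assets)
print(hit[0], hit[1])
print(miss[0], miss[1])
```

A linear scan like this is fine for a small curated photo set; a larger library would want a proper vector index.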

The frontend is deliberately zero-build vanilla HTML/CSS/JS -- no React, no bundler. This was a conscious decision for maximum compatibility on the low-end Android phones that most Bocas visitors carry. The swipe engine, mood selector, gesture tracker (using MediaPipe Hands for optional camera-based swiping), and real-time itinerary builder all communicate with the backend over a single WebSocket connection.

The entire stack deploys as a single Docker container to Cloud Run with one command (./deploy.sh).

Challenges we ran into

The biggest challenge was audio latency and interruption handling. Streaming raw PCM audio over WebSocket while simultaneously capturing microphone input creates a complex bidirectional audio pipeline. We had to carefully manage AudioContext scheduling, maintain a queue of active audio buffer sources, and implement instant interruption -- when the user starts talking, all queued AI audio must stop immediately. Getting this to feel natural (not choppy, not delayed, no echo) took significant iteration.

Bilingual extraction from voice was harder than expected. When a captain says "cobro veinte dólares por persona, pero si es de noche es doble, y si hay que esperar, cinco más por hora" (I charge twenty dollars per person, but at night it's double, and if there's waiting, five more per hour), the AI needs to parse that into a clean structured pricing policy. The nuance of colloquial Panamanian Spanish pricing conventions required careful prompt engineering.
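To make the target of that extraction concrete, here is one way the captain's sentence could look once structured. The `PricingPolicy` class and its field names are hypothetical, and the sketch assumes the waiting fee is a flat hourly charge for the boat (not per person, and not doubled at night), which the spoken sentence leaves ambiguous.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricingPolicy:
    # Hypothetical structured form of a captain's spoken pricing rules.
    base_per_person_usd: float
    night_multiplier: float = 1.0
    waiting_per_hour_usd: float = 0.0

    def quote(self, passengers: int, night: bool = False,
              waiting_hours: float = 0.0) -> float:
        """Total price in USD for one trip."""
        fare = self.base_per_person_usd * passengers
        if night:
            fare *= self.night_multiplier
        # Assumption: waiting is billed per hour for the whole boat.
        return fare + self.waiting_per_hour_usd * waiting_hours


# "veinte dólares por persona ... de noche es doble ... cinco más por hora"
policy = PricingPolicy(base_per_person_usd=20.0,
                       night_multiplier=2.0,
                       waiting_per_hour_usd=5.0)
print(policy.quote(3))                               # 3 people, daytime
print(policy.quote(3, night=True, waiting_hours=2))  # 3 people, night, 2 h wait
```

Ambiguities like the per-boat versus per-person waiting fee are exactly the kind of detail worth surfacing to the human reviewer rather than guessing.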

The cold-start problem was real. An AI concierge is useless without a verified database behind it. Building the voice intake pipeline and the human-in-the-loop admin review system was essential to solve this chicken-and-egg problem -- we couldn't wait to manually enter every captain.

Memory without authentication was a design constraint. We didn't want to force account creation for a casual travel tool, so we built the cognitive memory system around localStorage-based anonymous IDs and background transcript analysis. The challenge was making the memory extraction reliable enough that the agent doesn't hallucinate preferences it never heard.
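One simple guard against hallucinated preferences is to require evidence. The sketch below assumes, hypothetically, that the background extraction returns each candidate preference together with a short supporting quote, and keeps only candidates whose quote actually appears in the transcript. The function name and the `preference`/`evidence` keys are illustrative, not the app's real interface.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so matching ignores formatting."""
    return re.sub(r"\s+", " ", text).strip().lower()


def grounded_preferences(transcript: str, candidates: list[dict]) -> list[str]:
    """Keep only preferences whose evidence quote occurs in the transcript."""
    haystack = _normalize(transcript)
    kept = []
    for candidate in candidates:
        evidence = _normalize(candidate.get("evidence", ""))
        # Drop candidates with no quote or a quote the traveler never said.
        if evidence and evidence in haystack:
            kept.append(candidate["preference"])
    return kept


transcript = "Traveler: I'm vegan and I really hate crowds.\nConcierge: Noted!"
candidates = [
    {"preference": "vegan", "evidence": "I'm vegan"},
    {"preference": "luxury budget", "evidence": "money is no object"},
    {"preference": "avoids crowds", "evidence": "hate   crowds"},
]
profile = grounded_preferences(transcript, candidates)
print(profile)
```

Exact substring matching is deliberately strict: it will drop some true preferences that were paraphrased, but it never stores one the traveler did not say.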

Accomplishments that we're proud of

We built a complete two-sided marketplace agent -- not just a chatbot, but a system that serves travelers, onboards supply (captains), and has administrative quality control, all powered by voice-first AI. Most hackathon projects demo one side; we built the full loop.

The WhatsApp handoff is the feature that feels most like magic. Watching a tourist say "book that snorkeling trip" and then seeing a perfectly translated Spanish message pre-loaded in WhatsApp, addressed to the right captain, with the right price -- that's a real product moment.

The multimodal RAG pipeline prefers authentic local photos over AI-generated images. When a real photo scores above 0.8 similarity, the UI badges it as "Authentic Local Photo" in green. When it falls back to Imagen, it honestly labels the result "AI-Generated Visual." This transparency felt important for a product about authentic tourism.

The voice-native captain intake solves a real accessibility problem. These are working boat captains, not tech workers. The fact that they can register by just talking into a phone in Spanish, and the AI handles all the structured data extraction, is something we think could genuinely work in the real world.

Five Google AI models are orchestrated in a single application, each chosen for a specific capability: Gemini native audio for conversation, Gemini Pro for reasoning, Gemini Flash Lite for background processing, Imagen for visual generation, and Gemini embeddings for semantic search.

What we learned

Native audio models change the UX paradigm. The difference between "STT -> LLM -> TTS" and "audio in, audio out" is not incremental -- it's qualitative. The agent can detect hesitation, emotion, and language switches in ways that a text pipeline simply cannot. It makes the interaction feel like talking to a person.

Human-in-the-loop is not optional for trust. We initially considered auto-approving captain submissions if the AI deemed them legitimate. We quickly realized that for a system that connects real tourists with real people on boats in the ocean, there must be a human making the final call. The AI should advise, not decide.

Memory is the secret to feeling personal. The moment we added the cognitive memory system and the agent said "Welcome back! Last time you mentioned you're vegan -- I've already filtered out the seafood tours," the whole product clicked. Personalization without login is powerful.

Simple frontends win on real devices. Our zero-build vanilla JS approach meant the app worked immediately on every phone we tested -- old Samsungs, budget Xiaomis, borrowed iPhones. A React SPA with heavy dependencies would have been a liability in this context.

What's next for Island Hopper

Expand beyond Bocas del Toro. The architecture is location-agnostic. The knowledge base, intake system, and concierge agent can be cloned for any destination where local tourism is underserved digitally -- think Caribbean islands, Southeast Asian beach towns, Central American surf villages.

Real WhatsApp Business API integration. Currently we use wa.me deep links for the handoff. The next step is integrating the WhatsApp Business API so the concierge can send messages on behalf of the traveler and track booking confirmations within the app.

Captain ratings and feedback loop. After a trip, the system should follow up and ask the traveler how it went. This data feeds back into the knowledge base to surface the best captains and flag problems.

Offline mode with on-device models. Bocas del Toro has spotty internet. We want to explore caching a lightweight on-device model that can handle basic queries when connectivity drops, then sync when back online.

Revenue model through ethical commissions. Instead of hotels taking 30-40% commissions from captains, Island Hopper could charge a small transparent fee (5-10%) that both parties agree to, creating a sustainable business that actually benefits the local economy.

Built With

- docker

- fastapi

- gemini-3.1-flash-lite

- gemini-3.1-pro

- gemini-embedding-2

- gemini-live-2.5-flash-(native-audio)

- google-agent-development-kit-(adk)

- google-cloud-firestore

- google-cloud-run

- imagen-3.0

- mediapipe-hands

- python

- vanilla-html/css/javascript

- web-audio-api

- websockets
