
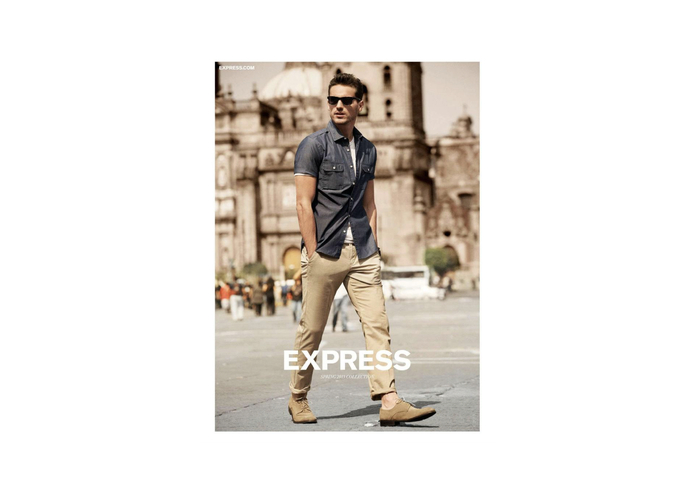

Billboard in action!


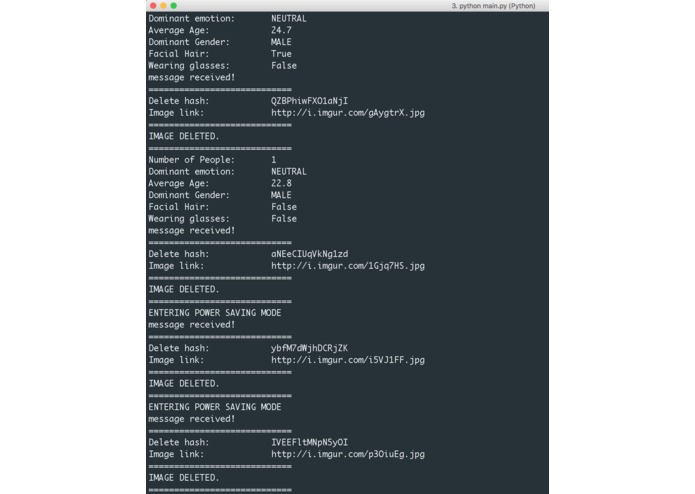

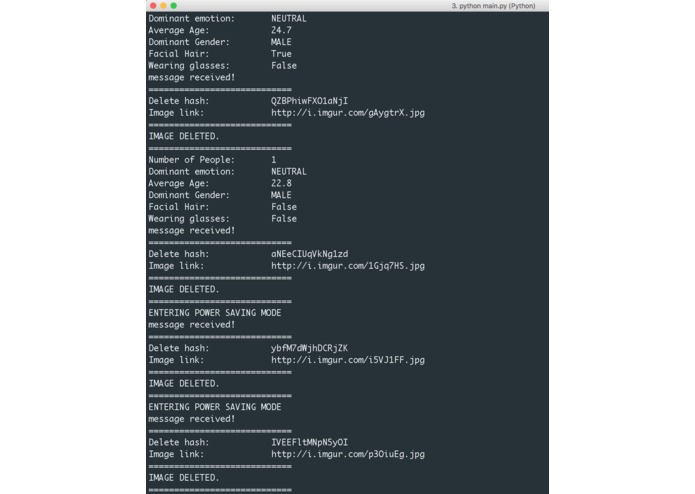

Console output for debugging


If I see a beard on you, you'll probably get this ad


Oh no! You look sad. But don't worry, help is on the way!


Oh, are you wearing glasses? Time to go retro with Google Glass


Power saving mode to save energy :)


Ladies get female clothing ads


Gentlemen get male clothing ads

Inspiration

You see a TON of digital billboards in NYC's Times Square. The problem is that many of these ads are irrelevant to most of the people who see them. Toyota ads here, Dunkin' Donuts ads there; it doesn't really make sense.

What it does

I built an interactive billboard that does more refined, targeted advertising and storytelling: it displays different ads depending on who you are (NSA 2.0?)

The billboard is equipped with a camera that periodically samples the audience in front of it. It then passes each image to a series of computer vision algorithms (thank you, Microsoft Cognitive Services), which extract several characteristics of the viewer.
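To make the "extract characteristics" step concrete, here is a minimal sketch that condenses one detected face into the traits this prototype cares about. It assumes a JSON record shaped like the Face API v1.0 `detect` response (a `faceAttributes` object with `age`, `gender`, `glasses`, `facialHair`, and `emotion` confidence scores), with the emotion scores merged in from the Emotion API. The `Viewer` class, the `summarize_face` function, and the 0.3 beard threshold are hypothetical and are not taken from the project's code.

```python
from dataclasses import dataclass

# Hypothetical threshold: a beard confidence above this counts as "has a beard".
BEARD_THRESHOLD = 0.3


@dataclass
class Viewer:
    age: float
    gender: str
    emotion: str         # emotion label with the highest confidence score
    wears_glasses: bool
    has_beard: bool


def summarize_face(face: dict) -> Viewer:
    """Condense one face record (assumed Face API v1.0-style JSON) into traits."""
    attrs = face["faceAttributes"]
    scores = attrs["emotion"]  # e.g. {"happiness": 0.9, "sadness": 0.02, ...}
    dominant = max(scores, key=scores.get)  # label with the largest score
    return Viewer(
        age=attrs["age"],
        gender=attrs["gender"],
        emotion=dominant,
        # Assumed glasses values: "NoGlasses", "ReadingGlasses", "Sunglasses", ...
        wears_glasses=attrs["glasses"] != "NoGlasses",
        has_beard=attrs["facialHair"]["beard"] > BEARD_THRESHOLD,
    )
```

Taking the highest-scoring label is the simplest way to get a single "dominant emotion" out of a vector of per-emotion confidences.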

In this prototype, the billboard analyzes the viewer's:

- Dominant emotion (from facial expression)

- Age

- Gender

- Eyesight (detects glasses)

- Facial hair (just so that it can remind you that you need a shave)

- Number of people

It then weighs all of these factors to decide which targeted ads to show.
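One simple way to "consider all of these factors" is a priority list of rules. The sketch below is an assumption, not the project's actual logic: the rules mirror the screenshots above (beard, sad expression, glasses, gender-specific clothing), and the ad identifiers such as `"razor"` and `"google_glass"` are hypothetical. For a group, it merges each viewer's picks without duplicates.

```python
from typing import List

# Hypothetical ad identifiers; the project's real ad catalog is not described.


def choose_ads(viewer: dict, max_ads: int = 3) -> List[str]:
    """Pick ads for one viewer using simple priority rules (illustrative only)."""
    ads: List[str] = []
    if viewer.get("has_beard"):
        ads.append("razor")            # "you need a shave"
    if viewer.get("emotion") == "sadness":
        ads.append("cheer_up")         # "help is on the way!"
    if viewer.get("wears_glasses"):
        ads.append("google_glass")     # "go retro with Google Glass"
    gender = viewer.get("gender")
    if gender == "female":
        ads.append("womens_clothing")
    elif gender == "male":
        ads.append("mens_clothing")
    return ads[:max_ads] or ["default"]


def choose_ads_for_group(viewers: List[dict], max_ads: int = 3) -> List[str]:
    """Merge per-viewer picks in order, dropping duplicates and placeholders."""
    picked: List[str] = []
    for viewer in viewers:
        for ad in choose_ads(viewer, max_ads):
            if ad != "default" and ad not in picked:
                picked.append(ad)
    return picked[:max_ads] or ["default"]
```

Rule order doubles as priority here, so a bearded, sad viewer sees the razor ad before the clothing ad; a weighted score per ad would be the natural next step if the rules start to conflict.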

As a bonus, the billboard saves energy by dimming the screen when there's nobody in front of the billboard! (go green!)
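The write-up doesn't say exactly when the screen dims, so the following is only a sketch under an assumed policy: dim after a few consecutive camera samples with no faces, and restore full brightness as soon as anyone appears. The `PowerSaver` class, the streak length, and the brightness levels are all hypothetical.

```python
class PowerSaver:
    """Dim the screen after several consecutive samples with no faces.

    The thresholds and brightness levels are hypothetical defaults.
    """

    def __init__(self, empty_samples_before_dim: int = 3,
                 full: float = 1.0, dimmed: float = 0.2):
        self.empty_samples_before_dim = empty_samples_before_dim
        self.full = full
        self.dimmed = dimmed
        self._empty_streak = 0  # consecutive samples with zero faces

    def update(self, face_count: int) -> float:
        """Record one camera sample and return the brightness to use (0.0 to 1.0)."""
        if face_count > 0:
            self._empty_streak = 0
            return self.full
        self._empty_streak += 1
        if self._empty_streak >= self.empty_samples_before_dim:
            return self.dimmed
        return self.full
```

Waiting for a streak of empty samples, rather than dimming on the first one, keeps the screen from flickering when a single frame happens to miss a face.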

How I built it

Here is what happens step-by-step:

- Using OpenCV, the billboard captures an image of the viewer (Python program)

- The billboard passes the image to two separate services (Microsoft Face API and Microsoft Emotion API) and gets the results back

- The billboard analyzes the results and decides which ads to serve (Python program)

- The finalized ads are sent to the billboard front-end via WebSocket

- The front-end content is served from a local web server (a Node.js server built with the Express.js framework, using Pug as the template engine)

- Repeat
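The steps above form a simple capture, analyze, decide, push loop. Here is a minimal sketch of the Python side, assuming hypothetical `capture`, `analyze`, `choose`, and `send` callables and a hypothetical JSON message shape for the front-end. In a real deployment, `capture` might wrap OpenCV's `cv2.VideoCapture(0).read()` plus `cv2.imencode(".jpg", frame)`, `analyze` would call the two Microsoft services, and `send` would write to a WebSocket connection; passing them in as functions lets the loop run without a camera or network.

```python
import json
import time
from typing import Callable, List


def build_payload(ads: List[str], brightness: float) -> str:
    """Serialize one update for the front-end (message shape is hypothetical)."""
    return json.dumps({"ads": ads, "brightness": brightness})


def run_billboard(capture: Callable[[], bytes],
                  analyze: Callable[[bytes], List[dict]],
                  choose: Callable[[List[dict]], List[str]],
                  send: Callable[[str], None],
                  iterations: int,
                  interval_s: float = 5.0) -> None:
    """Capture, analyze, choose ads, push to the front-end, then repeat."""
    for i in range(iterations):
        image = capture()        # e.g. a JPEG-encoded camera frame
        faces = analyze(image)   # e.g. merged Face/Emotion API results
        ads = choose(faces)
        brightness = 1.0 if faces else 0.2  # dim when nobody is there
        send(build_payload(ads, brightness))
        if i < iterations - 1:
            time.sleep(interval_s)  # wait before sampling the audience again
```

The `iterations` parameter is only there to keep the sketch testable; a billboard would loop indefinitely.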

Challenges I ran into

- Time constraint (I actually had a huge project due at midnight on Saturday, my fault, so I only had about 9 hours to build this. I also built it by myself, without teammates.)

- Putting many pieces of technology together while keeping the whole system consistent and robust.

Accomplishments that I'm proud of

- I didn't think I'd be able to finish! It was my first solo hackathon, and it was much harder to stay motivated without teammates.

What's next for Interactive Time Square

- This prototype was built with Microsoft's off-the-shelf computer vision services, which limits the features I can track. Training a custom convolutional neural network would let me track other relevant visual features, such as dominant color, which could let me infer the viewers' race. Combined with the billboard's location and prior knowledge of the local demographic distribution, I might then infer the language the audience speaks and automatically serve ads with translated content.

I know this sounds a bit controversial though. I hope this doesn't count as racial profiling...

Built With

- computer-vision

- express.js

- imgur

- machine-learning

- microsoft-api

- microsoft-cognitive-services

- node.js

- opencv

- pug

- python

- websockets
