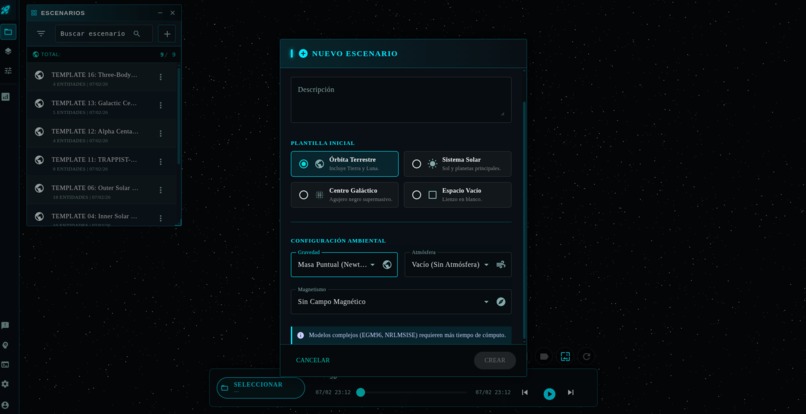

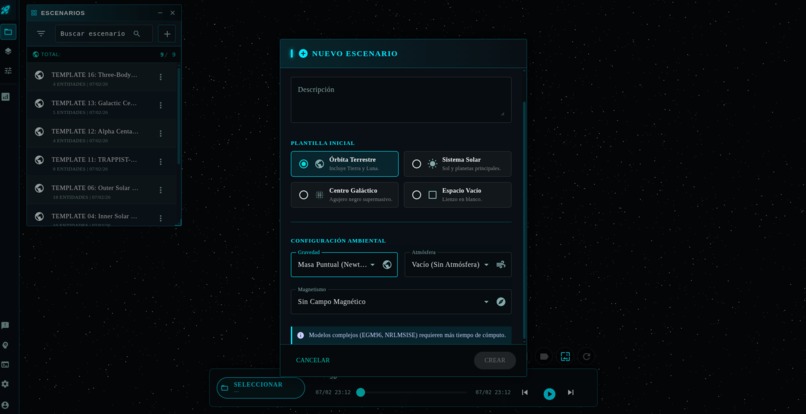

Creating a scenario with specific physics engines (gravity or atmospheric drag)


Representation of 3-body interaction.


Real time monitoring operations


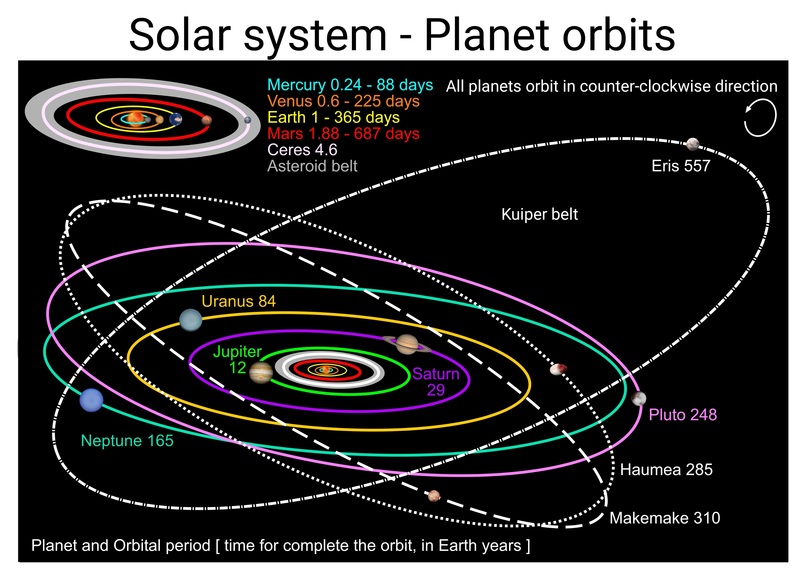

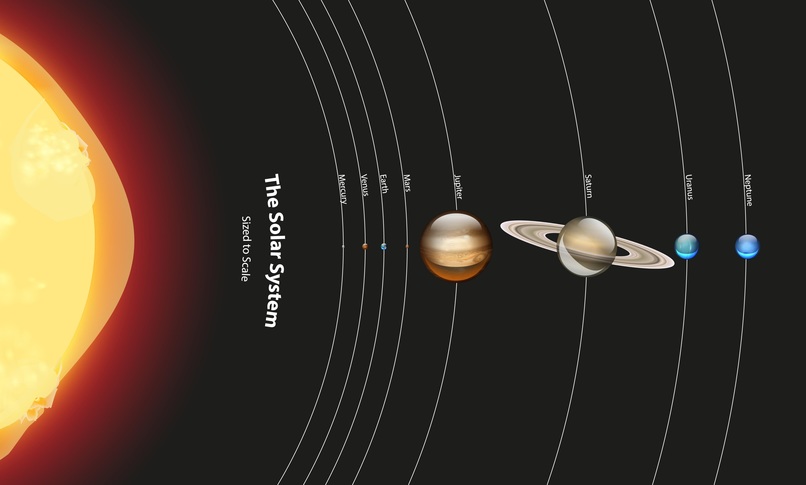

Inner Solar System


All functionalities through windows and grouping of tabs. Resizable and movable windows.


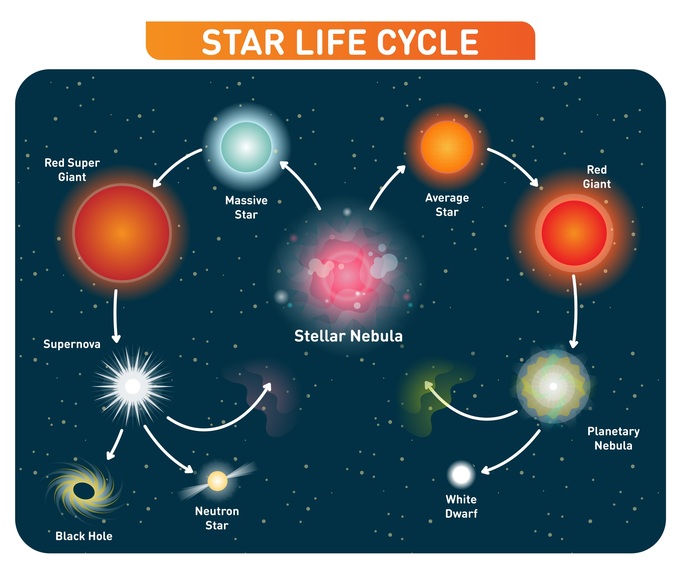

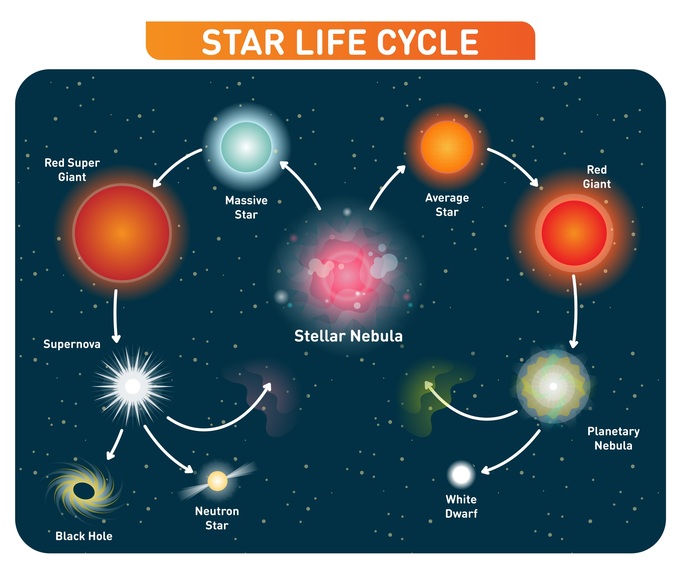

Gemini gen: Learning about nebulas examples diffs


Gemini gen: Learning about interstellar mediums diffs


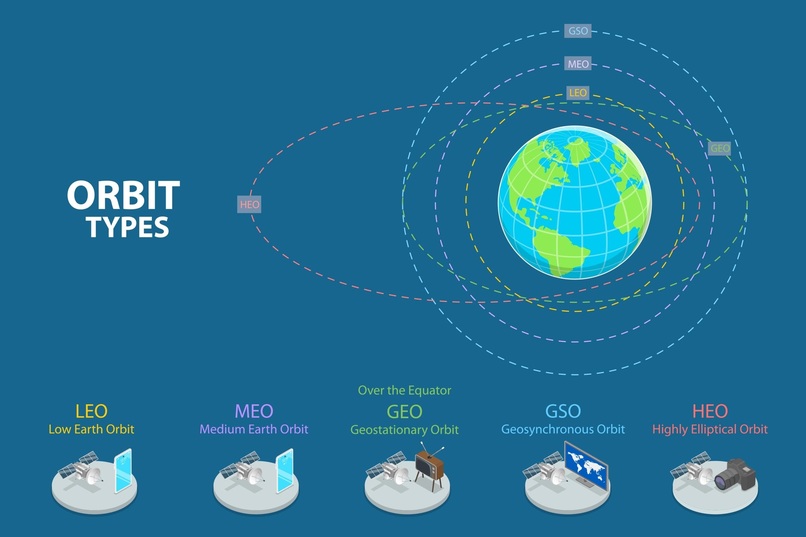

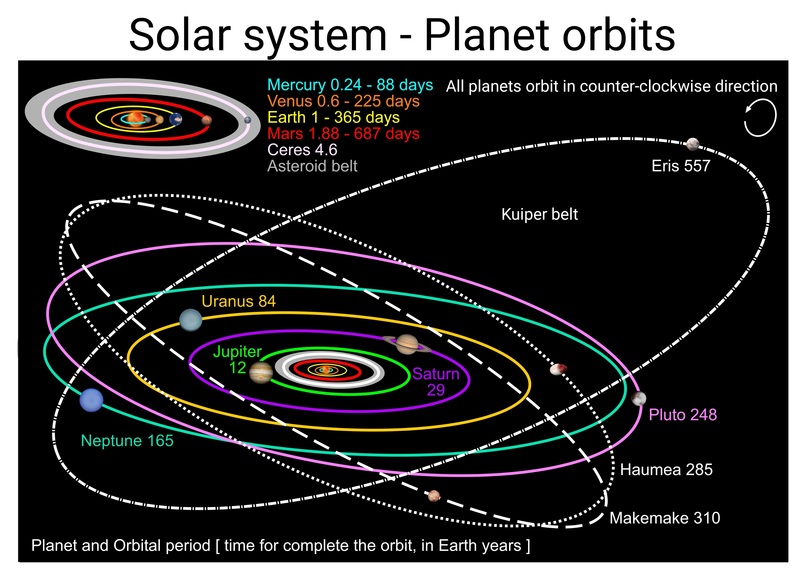

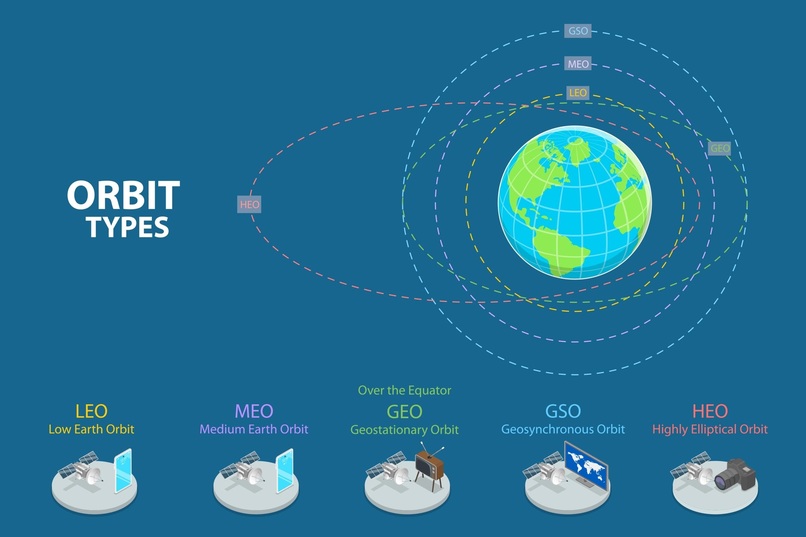

Gemini gen: Learning about system orbits


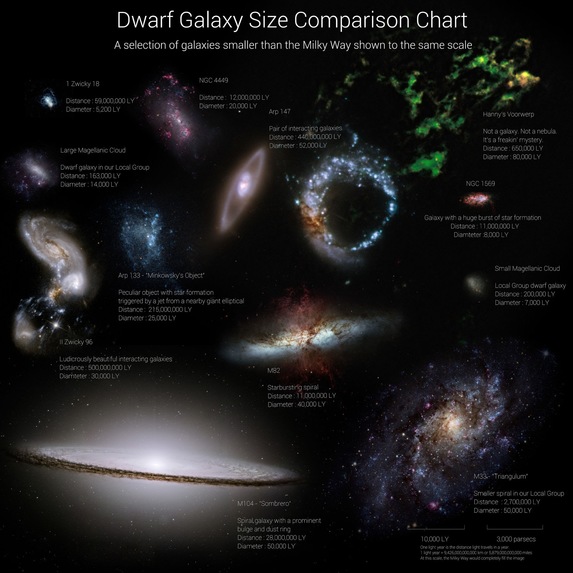

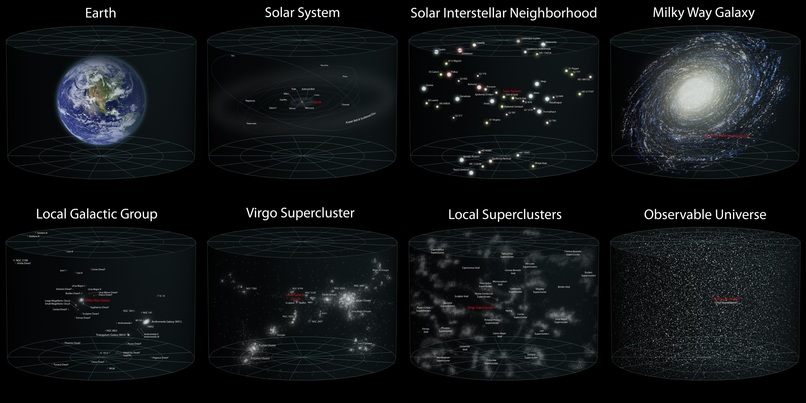

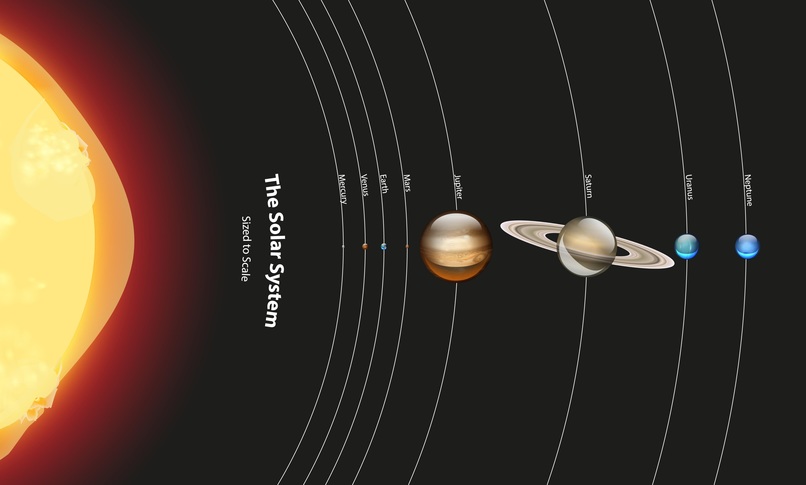

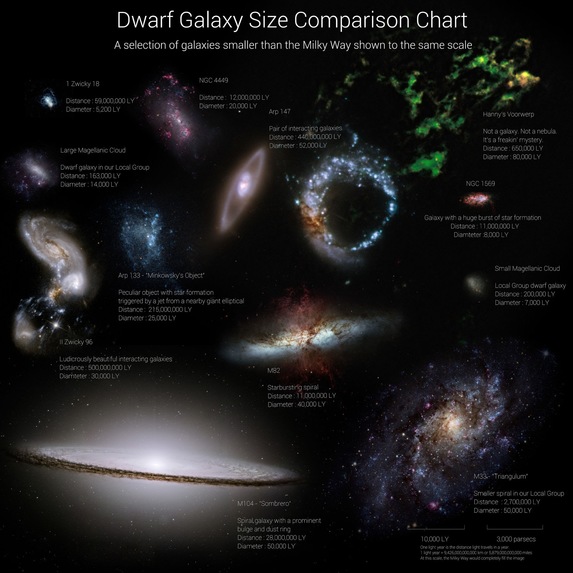

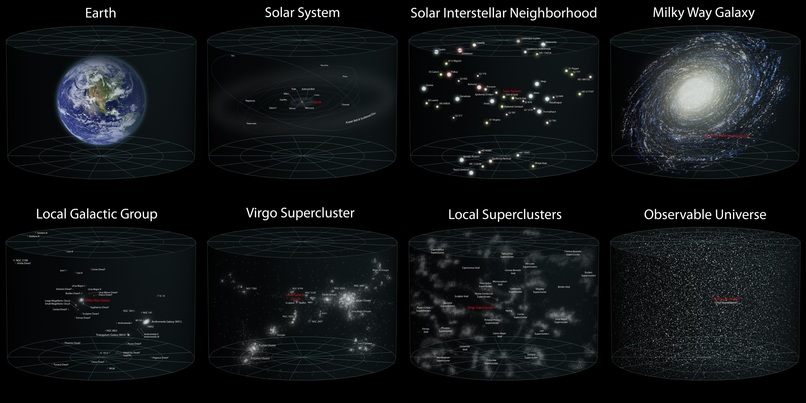

Gemini gen: Learning about system scale


Gemini gen: Learning about stellar evolution


Gemini gen: Learning about geostationary belt orbit types

About the Project

Inspiration

Space is not static, yet most simulators rely on hardcoded databases that become obsolete the moment they are written. With over 30,000 tracked debris objects and thousands of active satellites, the risk of catastrophic collisions grows daily. We asked: What if an AI "brain" could watch space in real time and react like a human operator — but faster, and never sleeping?

We were inspired by the challenge of bridging the gap between "video game physics" and engineering-grade astrodynamics. We wanted to create a system that could simulate complex scenarios — from Low Earth Orbit (LEO) traffic management to Deep Space missions like the JWST at Lagrange points — without manually inputting thousands of orbital parameters. The goal was to build a backend that acts as a living catalog, capable of self-discovery and self-correction using the same data sources professional astronomers use.

SGBrain was born from the intersection of two passions: orbital mechanics and bio-mimetic AI architecture. Instead of building yet another chatbot wrapper, we designed Synai — a cognitive system where the Gemini API becomes the neural substrate of an artificial mind, complete with brain regions, synapses, metabolism, and even fatigue.

What It Does

SGBrain is a full-stack Space Digital Twin platform that:

Autonomous Data Hydration: You simply request an entity by name (e.g., "Starlink-1007" or "James Webb"), and SGBrain's External Catalog Service intelligently queries diverse scientific APIs (JPL Horizons, CelesTrak, SIMBAD) to find the correct identity, mass, and orbital parameters.

Smart Physics Inference: The system analyzes the scenario context. If you simulate the JWST, it automatically upgrades the gravity model from a simple 2-body Keplerian orbit to a Cowell N-body integrator and adds the Sun as a perturbing body, which the Sun-Earth L2 halo orbit needs in order to be modeled correctly.

Unified Entity Factory: It abstracts the complexity of data sources. Whether the data comes from a TLE (Two-Line Element set), a state vector, or a Keplerian set, the Factory normalizes it into a unified internal physics model ready for propagation.

Streams live telemetry to a WebGL 3D viewer where operators watch satellites, debris, and celestial bodies orbit in real time at 60 FPS.

Detects threats automatically — proximity warnings and collision predictions using ephemeral future projections.

Recommends actions through Synai, a Gemini-powered cognitive AI that analyzes scenarios, calculates optimal $\Delta V$ maneuvers, and presents them for human confirmation.

Executes maneuvers by injecting velocity corrections directly into the running simulation via a Redis command bus.
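As a rough illustration of the last step, a maneuver can be expressed as a small JSON command carrying a $\Delta V$ vector that the simulation loop adds to the entity's velocity. The sketch below is an assumption about the shape of such a command, not SGBrain's actual schema: the field names, the helper functions, and the prograde convention are all hypothetical, and the Redis publish/subscribe calls are left out so the example runs standalone.

```python
import json
import math

def prograde_delta_v(velocity, magnitude_km_s):
    """Return a delta-V vector of the given magnitude along the velocity direction."""
    speed = math.sqrt(sum(c * c for c in velocity))
    if speed == 0.0:
        raise ValueError("prograde direction is undefined for zero velocity")
    return [magnitude_km_s * c / speed for c in velocity]

def build_maneuver_command(entity_id, delta_v):
    """Serialize a maneuver command (hypothetical schema) for a command bus."""
    return json.dumps(
        {"type": "MANEUVER", "entity_id": entity_id, "delta_v_km_s": delta_v}
    )

def apply_delta_v(state, command_json):
    """Apply an impulsive burn: position is unchanged, velocity is shifted."""
    command = json.loads(command_json)
    delta_v = command["delta_v_km_s"]
    return {
        "position_km": list(state["position_km"]),
        "velocity_km_s": [v + d for v, d in zip(state["velocity_km_s"], delta_v)],
    }

# A 10 m/s prograde burn on a satellite moving along +y.
state = {"position_km": [7000.0, 0.0, 0.0], "velocity_km_s": [0.0, 7.5, 0.0]}
command = build_maneuver_command(
    "sat-1", prograde_delta_v(state["velocity_km_s"], 0.01)
)
new_state = apply_delta_v(state, command)
print(new_state["velocity_km_s"])
```

Treating the burn as impulsive keeps the command tiny, and the human confirmation step can happen before the command is ever placed on the bus.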

The Synai Brain — How We Use Gemini

Synai is not a chatbot. It's a bio-mimetic neural network where:

- Each neuron is a `gemini-2.0-flash` agent with specialized system instructions (its "DNA")

- Brain regions (Frontal/Executive, Occipital/Visual, Temporal/Memory, Parietal/Spatial, Limbic/Emotional) group neurons by cognitive function

- Synapses connect neurons with weighted energy transfer: $E_{target} = (E_{source} - friction) \times weight$

- Metabolism tracks energy consumption per inference with separate costs for Flash vs Pro models

- Friction simulates cognitive fatigue — after sustained activity, neuron responses degrade naturally

- Mitosis allows neurons to self-replicate when they encounter problems beyond their specialization, using Gemini to generate new focused system instructions

- Memory Traces provide episodic RAG context, enabling neurons to reference past experiences
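The synapse rule above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Synai implementation: the class names, the choice to use the source neuron's friction, the floor at zero energy, and the fixed `FATIGUE_STEP` are all hypothetical, and no Gemini call is made.

```python
from dataclasses import dataclass

FATIGUE_STEP = 0.1  # hypothetical friction gained by a neuron each time it fires

@dataclass
class Neuron:
    name: str
    energy: float = 0.0
    friction: float = 0.0  # accumulated fatigue

@dataclass
class Synapse:
    source: Neuron
    target: Neuron
    weight: float

    def fire(self) -> float:
        """Apply E_target = (E_source - friction) * weight, floored at zero."""
        usable = max(self.source.energy - self.source.friction, 0.0)
        self.target.energy = usable * self.weight
        # Assumption: firing tires the source, so later transfers get weaker.
        self.source.friction += FATIGUE_STEP
        return self.target.energy

visual = Neuron("occipital-1", energy=1.0, friction=0.2)
executive = Neuron("frontal-1")
link = Synapse(visual, executive, weight=0.5)

first = link.fire()   # (1.0 - 0.2) * 0.5
second = link.fire()  # (1.0 - 0.3) * 0.5, weaker because friction grew
print(first, second)
```

Because friction grows with each firing, repeated activity through the same synapse delivers less energy each time, which is one simple way to model the fatigue described above.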

Brain → Regions → Neurons → Synapses

↓

GeminiNeuron (gemini-2.0-flash)

- system_instruction (DNA)

- friction (fatigue)

- uncertainty scoring

- metabolism tracking

How We Built It

Physics Core (Python/Django 5.1+):

- Orbital propagators (Cowell integrator, Kepler solver) built with Poliastro and Astropy for astrodynamics calculations, coordinate frame transformations (ICRS/GCRF), and unit conversions

- Hybrid propagation: mixing TLE-based propagation (for LEO satellites) with high-precision numerical integration (Cowell's method) for deep space objects in the same timeline

- Full perturbation modeling (J2 oblateness, atmospheric drag, N-body gravitational effects)

- Cartesian state vector initialization for N-body scenarios (Three-Body choreographies, exotic planetary systems)
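Cowell's method amounts to integrating the full acceleration (two-body gravity plus perturbations) directly in Cartesian coordinates. The sketch below shows the idea with a fixed-step RK4 integrator and the J2 oblateness term only. It is written in plain Python for illustration and does not use the Poliastro API the project relies on; the function names, step size, and test orbit are assumptions, and the constants are standard Earth values.

```python
import math

MU_EARTH = 398600.4418    # gravitational parameter, km^3/s^2
R_EARTH = 6378.137        # equatorial radius, km
J2_EARTH = 1.08262668e-3  # oblateness coefficient

def acceleration(position, include_j2=True):
    """Two-body acceleration, optionally with the J2 perturbation (km/s^2)."""
    x, y, z = position
    r2 = x * x + y * y + z * z
    r = math.sqrt(r2)
    k = -MU_EARTH / r**3
    ax, ay, az = k * x, k * y, k * z
    if include_j2:
        f = 1.5 * J2_EARTH * MU_EARTH * R_EARTH**2 / r**5
        zz = 5.0 * z * z / r2
        ax += f * x * (zz - 1.0)
        ay += f * y * (zz - 1.0)
        az += f * z * (zz - 3.0)
    return (ax, ay, az)

def derivative(state, include_j2):
    """d/dt of (x, y, z, vx, vy, vz) is (vx, vy, vz, ax, ay, az)."""
    return tuple(state[3:]) + acceleration(state[:3], include_j2)

def rk4_step(state, dt, include_j2=True):
    """Advance the state by one classical Runge-Kutta step."""
    def shifted(slope, h):
        return tuple(s + h * d for s, d in zip(state, slope))
    k1 = derivative(state, include_j2)
    k2 = derivative(shifted(k1, dt / 2), include_j2)
    k3 = derivative(shifted(k2, dt / 2), include_j2)
    k4 = derivative(shifted(k3, dt), include_j2)
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )

def propagate(state, duration, dt=10.0, include_j2=True):
    for _ in range(int(round(duration / dt))):
        state = rk4_step(state, dt, include_j2)
    return state

def radius(state):
    return math.sqrt(sum(c * c for c in state[:3]))

# Circular 7000 km orbit inclined 51.6 degrees, propagated for 6000 s.
r0 = 7000.0
v0 = math.sqrt(MU_EARTH / r0)
inc = math.radians(51.6)
initial = (r0, 0.0, 0.0, 0.0, v0 * math.cos(inc), v0 * math.sin(inc))
final_two_body = propagate(initial, 6000.0, include_j2=False)
final_j2 = propagate(initial, 6000.0, include_j2=True)
print(radius(final_two_body), radius(final_j2))
```

Drag or third-body terms would be added to `acceleration` in the same way, which is the main appeal of the method: each perturbation is just another term in the sum.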

Data Pipeline:

- Robust `ExternalCatalogService` that acts as a proxy/cache gateway for scientific APIs

- Handles the specific idiosyncrasies of legacy APIs (like JPL Horizons' CLI-based command syntax)

- Entity Factory system with hydration from external catalogs (JPL Horizons, CelesTrak, SIMBAD)

- Strict Registry + Factory Pattern ensuring physical constraint validation before persistence
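To make the Horizons quirk concrete, here is a hedged sketch of how a request URL might be assembled. The endpoint and the `format`, `COMMAND`, `OBJ_DATA`, and `MAKE_EPHEM` parameters come from the public Horizons API; the helper names and the `small_body` flag are hypothetical, and this is not the `ExternalCatalogService` code. No network request is made.

```python
from urllib.parse import urlencode

HORIZONS_API = "https://ssd.jpl.nasa.gov/api/horizons.api"

def horizons_command(designation: str, small_body: bool) -> str:
    """Wrap a designation in quotes, adding ';' to request a small-body lookup."""
    suffix = ";" if small_body and not designation.endswith(";") else ""
    return f"'{designation}{suffix}'"

def horizons_url(designation: str, small_body: bool = False) -> str:
    """Build an object-data query URL; urlencode turns ';' into %3B."""
    params = {
        "format": "json",
        "COMMAND": horizons_command(designation, small_body),
        "OBJ_DATA": "'YES'",
        "MAKE_EPHEM": "'NO'",
    }
    return f"{HORIZONS_API}?{urlencode(params)}"

mars_url = horizons_url("499")                   # major body: no semicolon
eros_url = horizons_url("433", small_body=True)  # asteroid: trailing ';'
print(mars_url)
print(eros_url)
```

Keeping the semicolon logic in one helper means callers never have to remember which catalog an ID belongs to once the entity type is known.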

Frontend (Angular 21 + TypeScript):

- THREE.js WebGL viewer rendering satellites, orbits, trails, and ghost trajectories at 60 FPS

- Real-time telemetry streaming via intelligent polling with chunk accumulation

- Unified System Console with tabbed navigation (SYNAI, Events, History, Logs)

- Interactive maneuver dialog with directional ΔV controls

- Signal-based reactive state management

AI Layer (Gemini):

- `GeminiNeuron` class wraps the `google-genai` SDK for neural processing

- Bio-mimetic metabolism with energy accounting per inference

- Cognitive degradation under sustained activity

Infrastructure:

- PostgreSQL with JSONB fields for rich, schema-less physical properties

- Redis for command injection bus and caching

- Celery + Celery Beat for async task orchestration and continuous real-time simulation

- GCP Cloud Build pipeline (7 stages) with multi-stage Docker/Nginx deployment

Challenges

The "Horizons 400" Nightmare: We spent significant time debugging why JPL Horizons rejected our queries. The API requires specific command syntax (e.g., appending `%3B`, the URL-encoded semicolon, to asteroid IDs) to distinguish between major planets and small bodies.

Keplerian Traps: Visualizing the JWST was difficult: it appeared to orbit Earth in a chaotic ellipse because the propagator treated it as a standard moon. We had to implement a "Smart Physics" layer to detect Lagrange point missions and enforce 3-body physics ($Earth + Sun + JWST$).
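A "Smart Physics" check of the kind described above could be as simple as mapping an entity's orbital regime to a propagator configuration. The sketch below is hypothetical throughout: the regime labels, the `PhysicsConfig` fields, and the propagator names are invented for illustration and are not taken from the SGBrain codebase.

```python
from dataclasses import dataclass, field

# Hypothetical regime labels for Sun-Earth Lagrange point missions.
LAGRANGE_REGIMES = {"SEL1", "SEL2"}

@dataclass
class PhysicsConfig:
    propagator: str
    perturbers: list = field(default_factory=list)

def infer_physics(regime: str) -> PhysicsConfig:
    """Pick a gravity model from the entity's orbital regime."""
    if regime.upper() in LAGRANGE_REGIMES:
        # Two-body motion about Earth cannot hold a halo orbit,
        # so switch to N-body integration with the Sun included.
        return PhysicsConfig(propagator="cowell_nbody", perturbers=["Sun"])
    return PhysicsConfig(propagator="kepler_two_body")

jwst = infer_physics("SEL2")
iss = infer_physics("LEO")
print(jwst, iss)
```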

The "NaN" Crash: Deep space simulations occasionally produced infinite values or division-by-zero errors during reentry calculations, crashing JSON serialization to Postgres. We implemented strict sanitization layers in the simulation loop.
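A sanitization layer of this kind can be sketched as a recursive pass that replaces non-finite floats before serialization. Python's `json.dumps` emits `NaN` and `Infinity` tokens by default, which are not valid JSON, so the example also passes `allow_nan=False` to fail loudly if anything slips through. The function name and the sample frame are hypothetical.

```python
import json
import math

def sanitize(value):
    """Recursively replace NaN and infinite floats with None."""
    if isinstance(value, float) and not math.isfinite(value):
        return None
    if isinstance(value, dict):
        return {key: sanitize(item) for key, item in value.items()}
    if isinstance(value, (list, tuple)):
        return [sanitize(item) for item in value]
    return value

frame = {"id": "probe", "position_km": [1.0, float("nan"), float("inf")]}
payload = json.dumps(sanitize(frame), allow_nan=False)
print(payload)  # {"id": "probe", "position_km": [1.0, null, null]}
```

Mapping bad values to `null` keeps the telemetry frame's shape intact, so downstream consumers can skip a sample instead of losing the whole record.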

Live chunk accumulation: Building a seamless streaming experience where telemetry chunks accumulate without visual artifacts or timeline resets.
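One way to accumulate chunks without duplicated samples or timeline resets is to keep only samples that are strictly newer than the last one already held. The sketch below illustrates that idea in Python for consistency with the backend examples, although the project's viewer is written in TypeScript; the sample shape and function name are assumptions.

```python
def accumulate(timeline, chunk):
    """Append samples from chunk that are strictly newer than the timeline's end."""
    last_t = timeline[-1]["t"] if timeline else float("-inf")
    for sample in sorted(chunk, key=lambda s: s["t"]):
        if sample["t"] > last_t:
            timeline.append(sample)
            last_t = sample["t"]
    return timeline

timeline = []
accumulate(timeline, [{"t": 0.0, "x": 1.0}, {"t": 1.0, "x": 2.0}])
# The next chunk overlaps the previous one and arrives out of order.
accumulate(timeline, [{"t": 2.0, "x": 3.0}, {"t": 1.0, "x": 2.0}])
print([sample["t"] for sample in timeline])  # [0.0, 1.0, 2.0]
```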

Bio-mimetic metabolism: Designing an energy system where AI inference has real "costs" that affect behavior — not just rate limiting, but actual cognitive degradation under fatigue.

Coordinate frame scaling: Rendering objects from mm-scale satellite components to AU-scale solar system orbits in the same viewer using logarithmic depth buffers.

N-body reference frames: Three-Body choreography scenarios required shared barycentric reference frames — each body creating a self-referencing attractor placed them in separate coordinate systems, solved with shared BARYCENTER entities.
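The shared-barycenter fix can be illustrated by computing the mass-weighted center of all bodies once and expressing every state relative to it. This is a minimal sketch with made-up masses and positions; the dictionary layout and function names are hypothetical and do not reflect the project's BARYCENTER entity model.

```python
def barycenter(bodies):
    """Return the mass-weighted mean position and velocity of all bodies."""
    total_mass = sum(b["mass"] for b in bodies)
    position = [
        sum(b["mass"] * b["position"][i] for b in bodies) / total_mass
        for i in range(3)
    ]
    velocity = [
        sum(b["mass"] * b["velocity"][i] for b in bodies) / total_mass
        for i in range(3)
    ]
    return position, velocity

def to_barycentric(bodies):
    """Express every body in one shared frame centered on the barycenter."""
    center_pos, center_vel = barycenter(bodies)
    return [
        {
            **b,
            "position": [p - c for p, c in zip(b["position"], center_pos)],
            "velocity": [v - c for v, c in zip(b["velocity"], center_vel)],
        }
        for b in bodies
    ]

bodies = [
    {"name": "A", "mass": 1.0, "position": [1.0, 0.0, 0.0], "velocity": [0.0, 0.5, 0.0]},
    {"name": "B", "mass": 1.0, "position": [-1.0, 0.0, 0.0], "velocity": [0.0, 0.5, 0.0]},
    {"name": "C", "mass": 2.0, "position": [0.0, 3.0, 0.0], "velocity": [0.0, -1.0, 0.0]},
]
shifted = to_barycentric(bodies)
new_center, new_drift = barycenter(shifted)
print(new_center, new_drift)
```

After the shift, the barycenter of the transformed set sits at the origin with zero net velocity, so all bodies share one coordinate system instead of each orbiting its own attractor.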

Accomplishments We're Proud Of

Self-Healing Catalog: The system takes a simulation template with just names ("ISS", "Hubble") and, within seconds, fully hydrates the simulation with the latest real-time orbital elements from NORAD and NASA.

Hybrid Propagation: Successfully running scenarios that mix TLE-based propagation (for LEO satellites) with high-precision numerical integration (Cowell's method) for deep space objects in the same timeline.

16 Simulation Templates: From Near-Earth LEO operations to Galactic Center black hole S-star orbits, TRAPPIST-1 exoplanet systems, and Three-Body figure-8 choreographies — all with real astrophysical data.

Robust Architecture: Moving from a fragile script-based approach to a solid service-oriented architecture where the Registry, Factory, and Database have clear boundaries and responsibilities.

What We Learned

Gemini's speed: The `gemini-2.0-flash` model's response time makes real-time cognitive processing genuinely viable; neuron response times are fast enough for operational decision-making.

Bio-mimetic constraints create trust: Fatigue, metabolism, and friction create emergent behaviors that make the AI more trustworthy, because operators can see why a recommendation degrades over time.

Scientific APIs are brittle: We learned to build defensive code around external services like SIMBAD and CelesTrak, implementing intelligent "skip" logic to avoid querying star catalogs for man-made satellites.

Physics is unforgiving: A small unit conversion error (AU to km) or a missing perturbing body (like the Sun) changes a stable orbit into an ejection trajectory.

Perfect domain for human-AI collaboration: Space debris collision avoidance is ideal — the AI calculates complex orbital mechanics while the human retains final authority over maneuver execution.

Demo scenarios

- 00 Real-Time Simulation & Monitoring

- 01 LEO Operations (TLEs)

- 03 Earth-Moon System (Cislunar)

- 04 Inner Solar System

- 06 Outer Solar System

- 11 TRAPPIST-1 System

- 12 Alpha Centauri System

- 13 Galactic Center (Supermassive Black Hole)

- 16 Three-Body Choreography

Built With

- Languages: Python 3.12, TypeScript

- Frameworks: Django 5.2+, Angular 21, Django REST Framework

- Scientific Libs: Poliastro, Astropy, NumPy, SciPy

- 3D Rendering: THREE.js (WebGL)

- AI: Google Gemini API (`gemini-2.0-flash` via `google-genai` SDK)

- Database: PostgreSQL (JSONB)

- Async/Queue: Redis, Celery

- Cloud: Google Cloud Platform (Cloud Build, Cloud Run)

- External APIs: NASA JPL Horizons, CelesTrak (NORAD TLEs), SIMBAD (via Astroquery)

Built With

- angular21

- astropy

- boinor

- celery

- celestrak(noradtles)

- django5

- djangorestframework

- jplhorizons

- nasajplhorizons(solarsystemdynamics)

- numpy

- poliastro

- postgresql

- python3.12

- redis

- scipy

- simbad

- simbad(stellardatabaseviaastroquery)

- tles

- typescript
