Inspiration

BarelyAtWork is inspired by the idea that technology should disappear into the background. Instead of forcing people to open apps, switch tabs, and manage tools, BarelyAtWork brings everything into a single, natural interface: your voice. It draws from the vision of ambient computing, where actions happen as you speak and think, allowing you to stay present in the real world while your workflows run seamlessly around you. It separates itself from similar tools (such as Openclaw, IFTTT, and Zapier) by being designed specifically for non-technical users. An automation tool should save hours, not cost them in debugging: one login is all any app should need.

What it does

BarelyAtWork lets you create and run multi-step workflows across real apps entirely without a screen. After a quick one-time setup that connects third-party applications through a simple OAuth flow, everything else happens through smart glasses. You simply speak, and our agent, Chad, turns natural language into automated multi-step workflows (like booking an Uber, sending messages, or updating calendars) and executes them in real time. By eliminating the need for apps, dashboards, or manual coordination, BarelyAtWork replaces traditional automation tools with a seamless, always-available interface powered purely by your voice.

How we built it

At the core of our infrastructure is a single Vultr instance that coordinates every action our app performs. It hosts its own MongoDB database, handles all audio streamed to it from the smart glasses, and executes every workflow that users define.

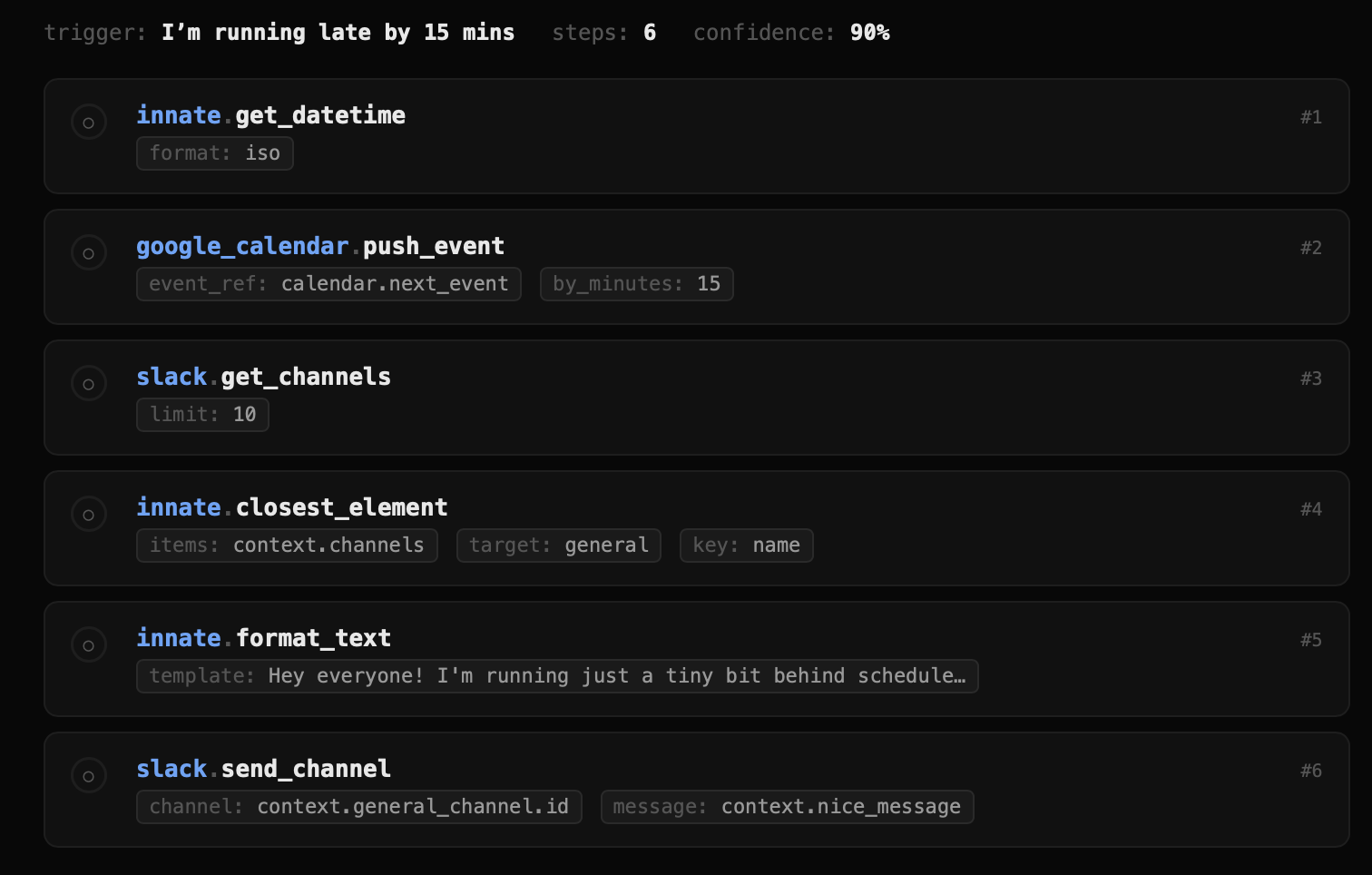

Users begin with a lightweight web app built with the Cloudinary React starter kit, where they securely connect third-party services like Google, Slack, or Notion via OAuth. Once set up, interaction shifts entirely to Ray-Ban smart glasses, which act as the primary interface through their built-in microphone and speakers. Voice input is streamed through a mobile app to a FastAPI backend, where it is transcribed into text using Deepgram. From there, our system detects trigger phrases and finds the matching workflow to execute, or uses Gemma to create a new static workflow if none exists yet.
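The trigger-phrase step described above can be sketched roughly as follows. This is only an illustration under stated assumptions, not our actual backend code: the `Workflow` shape, the substring-based matching, and the `create_workflow` callback (standing in for the Gemma call) are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Workflow:
    """Hypothetical stored workflow: a trigger phrase plus ordered step names."""
    trigger: str
    steps: list[str] = field(default_factory=list)


def normalize(text: str) -> str:
    """Lowercase and replace punctuation with spaces so transcripts compare loosely."""
    kept = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in text.lower())
    return " ".join(kept.split())


def find_workflow(transcript: str, workflows: list[Workflow]) -> Optional[Workflow]:
    """Return the workflow whose trigger phrase appears in the transcript.

    Matching is on whole words; if several triggers match, the longest
    (most specific) one wins. Returns None when nothing matches.
    """
    spoken = f" {normalize(transcript)} "
    matches = [
        w for w in workflows
        if normalize(w.trigger) and f" {normalize(w.trigger)} " in spoken
    ]
    return max(matches, key=lambda w: len(normalize(w.trigger)), default=None)


def route(
    transcript: str,
    workflows: list[Workflow],
    create_workflow: Callable[[str], Workflow],
) -> Workflow:
    """Reuse a matching workflow, or create and store a new one.

    `create_workflow` is a placeholder for the model call that generates
    a new static workflow from the transcript.
    """
    found = find_workflow(transcript, workflows)
    if found is not None:
        return found
    created = create_workflow(transcript)
    workflows.append(created)
    return created
```

Because a newly created workflow is stored, the same phrase skips the model call the next time it is spoken, which is one plausible reason to keep workflows static.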

After executing the workflow, BarelyAtWork delivers a spoken confirmation back to the user using ElevenLabs' voice synthesis, streamed directly through the glasses, closing the loop from thought to action without ever needing a screen.

Challenges we ran into

We ran into several infrastructure challenges, such as unplanned unavailability and user interface failures. However, we overcame all of these and were still able to deliver a full MVP at the end of the 36 hours.

Accomplishments that we're proud of

We're proud that we got a real-time, production-like system working end-to-end under tight constraints, and made it actually reliable, not just a demo. We handled streaming audio, low-latency processing, and consistent execution across multiple external APIs, which is where most systems like this tend to break. We built a flexible pipeline that can parse natural language into structured, multi-step workflows and resolve missing parameters on the fly. We also integrated multiple third-party platforms like Google, Notion, Slack, and even Domino's through OAuth in a way that was fast to extend and demonstrated real-world utility.
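To make "resolve missing parameters on the fly" concrete, here is a minimal sketch of one way it could work. It assumes each step declares the parameters it requires and that a follow-up question can be asked through some `ask` callback (in our case that would be a spoken prompt); the `Step` class and the action names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    """Hypothetical workflow step: an action name, the parameters it requires,
    and whatever values were extracted from the spoken request so far."""
    action: str
    required: tuple[str, ...]
    params: dict[str, str] = field(default_factory=dict)

    def missing(self) -> list[str]:
        """Names of required parameters that are absent or empty."""
        return [name for name in self.required if not self.params.get(name)]


def resolve_missing(steps: list[Step], ask: Callable[[str, str], str]) -> list[Step]:
    """Fill every missing parameter by asking for it, one question at a time.

    `ask(action, name)` is a placeholder for the follow-up prompt; it receives
    the step's action and the parameter name and returns the user's answer.
    """
    for step in steps:
        for name in step.missing():
            step.params[name] = ask(step.action, name)
    return steps
```

Only the gaps trigger a question, so a fully specified request runs without any follow-up.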

What we learned

We learned that building real-time, voice-driven systems is much more about reliability and latency than raw model capability. Getting transcription, intent parsing, and execution to work consistently in a tight loop required careful handling of streaming, edge cases, and failures across multiple services. We also learned that integrating third-party APIs is often the biggest bottleneck; OAuth flows, inconsistent docs, and handling real-world errors took significantly more effort than expected. On the modeling side, translating open-ended natural language into structured, executable steps is non-trivial and requires iteration, especially when resolving missing parameters dynamically. Overall, the biggest takeaway was that making a system feel simple and seamless requires solving a lot of difficult engineering problems under the hood.

What's next for BarelyAtWork

For next steps, we'd focus on making the system more robust and scalable. That includes improving workflow accuracy and reliability, adding better error handling and retries for third-party APIs, and expanding the set of supported integrations. We also want to refine the voice interaction loop by reducing latency, improving trigger detection, and making confirmations more natural. On the product side, we'd explore lightweight ways to manage and edit workflows without breaking the no-UI paradigm, as well as adding personalization so the system adapts over time. Longer term, we're interested in making the agent layer more autonomous, so workflows can evolve more dynamically.
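As a rough idea of what the retry handling mentioned above might look like, the sketch below wraps a third-party call in exponential backoff with jitter. It is an assumption about a possible design, not something we have built: `TransientAPIError` and `call_with_retries` are hypothetical names, and deciding which real errors count as transient (timeouts, rate limits, server errors) is left to the caller.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientAPIError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. timeouts, rate limits)."""


def call_with_retries(
    call: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`, retrying on TransientAPIError with exponential backoff.

    The wait doubles after each failure (base_delay, 2x, 4x, ...) plus up to
    50% random jitter. The final failure is re-raised to the caller.
    `sleep` is injectable so the waits can be skipped in tests.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except TransientAPIError:
            if attempt == attempts - 1:
                raise
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

For a voice interface the total wait matters as much as the success rate, so `attempts` and `base_delay` would likely need to stay small enough that the spoken confirmation still feels immediate.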
