Black boxes, black boxes everywhere. The web is coming of age: we have real-time communication at last, and audio and video elements are here, yet all we're doing with them is putting them in little black boxes. It's just TV, radio and film shoved onto the web in the same old tired, tried and tested formats.

Hyperaudio changes all that by breaking media down into its component parts. We do this by transcribing the spoken word and adding timings to it. Once media is transcribed, you can manipulate it from its transcript - it's a magical experience.
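To make the idea concrete, here is a minimal sketch of what a timed transcript makes possible. It is not the Hyperaudio.js API: the data shape and the function names `wordIndexAtTime` and `clipForSelection` are assumptions made up for illustration, and the timings are invented sample data.

```javascript
// Hypothetical timed transcript: each word carries its start and end time in seconds.
// (Assumed shape for illustration only, not Hyperaudio's actual format.)
const transcript = [
  { text: "Black", start: 0.0, end: 0.4 },
  { text: "boxes", start: 0.4, end: 0.9 },
  { text: "black", start: 1.1, end: 1.5 },
  { text: "boxes", start: 1.5, end: 2.0 },
  { text: "everywhere", start: 2.0, end: 2.8 },
];

// Text follows media: return the index of the most recently started word
// at `time`, or -1 if playback has not reached the first word yet.
// Words are assumed to be sorted by start time, so a binary search works.
function wordIndexAtTime(words, time) {
  let lo = 0;
  let hi = words.length - 1;
  let found = -1;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    if (words[mid].start <= time) {
      found = mid;   // candidate; look for a later word that has also started
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  return found;
}

// Media follows text: turn a selection of words (inclusive indices)
// into the time range of media that the selection covers.
function clipForSelection(words, firstIndex, lastIndex) {
  if (firstIndex < 0 || lastIndex >= words.length || firstIndex > lastIndex) {
    throw new RangeError("selection is outside the transcript");
  }
  return {
    start: words[firstIndex].start,
    end: words[lastIndex].end,
    text: words.slice(firstIndex, lastIndex + 1).map((w) => w.text).join(" "),
  };
}
```

In a browser, the first function could be called from a media element's `timeupdate` event to highlight the current word, and the `start` of a clip could be assigned to the element's `currentTime` to jump to the selected text. A remix is then, roughly, an ordered list of such clips.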

It all started back in 2010. We'd been working hard on a new audio library called jPlayer, which had gone viral on Twitter and received a good reception on Reddit, giving us the confidence we needed to show it off. The first Mozilla Festival's Media Fair seemed like a good place to do this, so I came up with something that synchronised transcripts with audio. I got in!

People seemed to like it. It was a very simple demo but it got the message across. Tying text to media had potential.

Enter Hyperaudio - a name coined by Henrik Moltke, a journalist working for Mozilla who contacted me the following spring. We decided to collaborate, and what followed was a demo for WNYC's Radiolab program.

Around this time I started thinking about how we could manipulate media from its transcripts.

A year later came Mozilla again and a new opportunity: I became one of the very first Knight Mozilla OpenNews fellows. We ended up testing Hyperaudio in the newsroom and built several well-received, award-winning interactive applications.

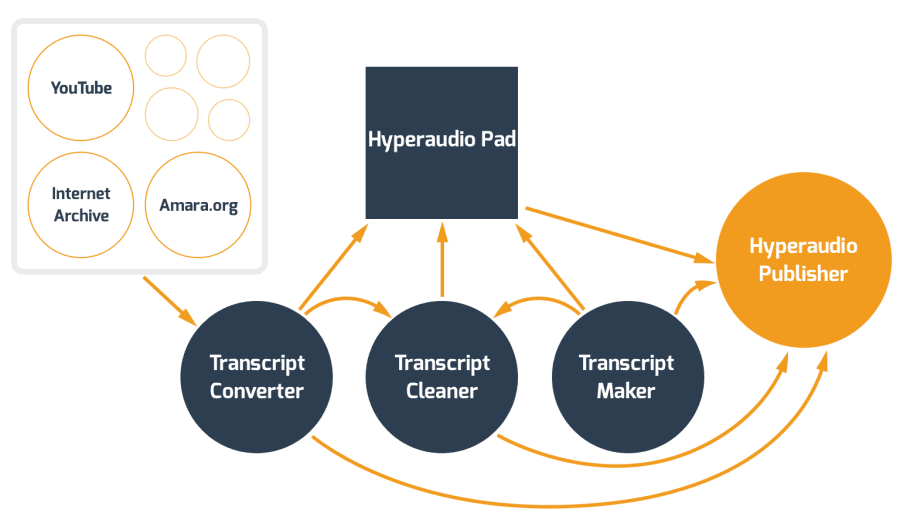

After the fellowship, I applied to the Knight Foundation to set up a non-profit, Hyperaudio Inc, and create a service around the technology. With funding from Knight and Mozilla, Hyperaud.io was born. We created a number of tools to help people transcribe audio and video, linked to a third-party service to time-align transcripts with media, and produced an editing tool called the Hyperaudio Pad. All this is backed by a public API and Hyperaudio.js, our JavaScript library.

We worked closely with libraries and schools to create customisable versions of Hyperaud.io and to encourage media literacy, self-expression and hyperlocal activities in the younger generation.

We worked hard on building the ability to share and embed into Hyperaud.io, and we're happy with how that turned out. Having created Hyperaudio.js, we're also excited that people can make their very own Hyperaudio applications. In fact, we're currently using it to create an interactive for a news site.

I'm perhaps most proud of the fact that Hyperaud.io is a completely decoupled system comprising 7 separate GitHub repos. Each element can be used independently, and the API is the glue that holds it all together. All our code is MIT licensed.

We really want to take Hyperaudio a step forward and create a platform that newsrooms can use to create interactive applications. Making remixing easy means making new forms of self-expression easy, and we want to see news being made from the bottom up with Hyperaudio.
