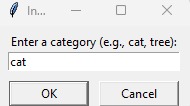

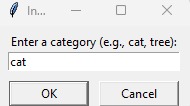

Interpretation Therapy – Visual Understanding through Drawing : Choosing Category

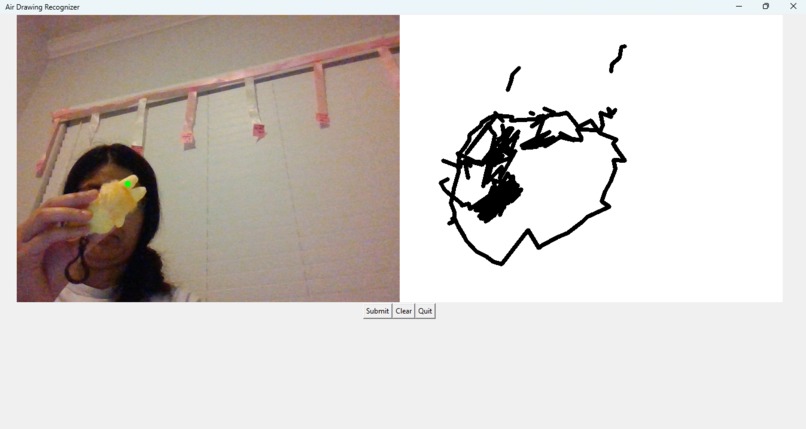

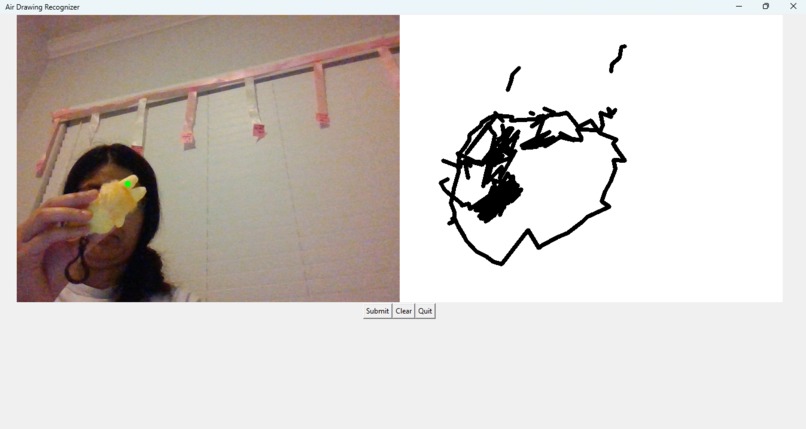

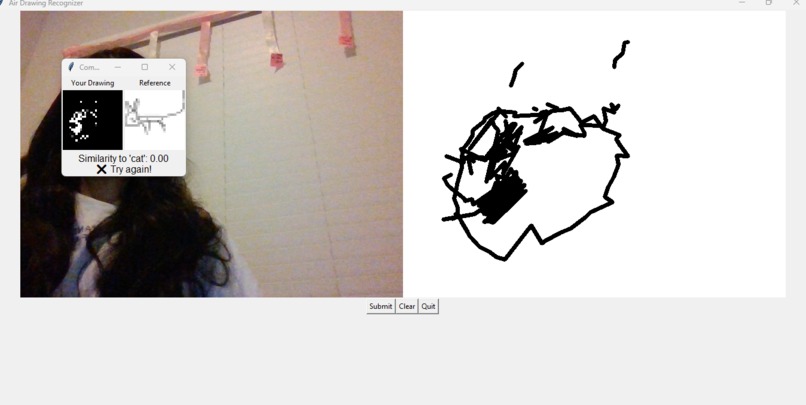

Interpretation Therapy – Visual Understanding through Drawing : Drawing Scribble

Interpretation Therapy – Visual Understanding through Drawing : Result verification

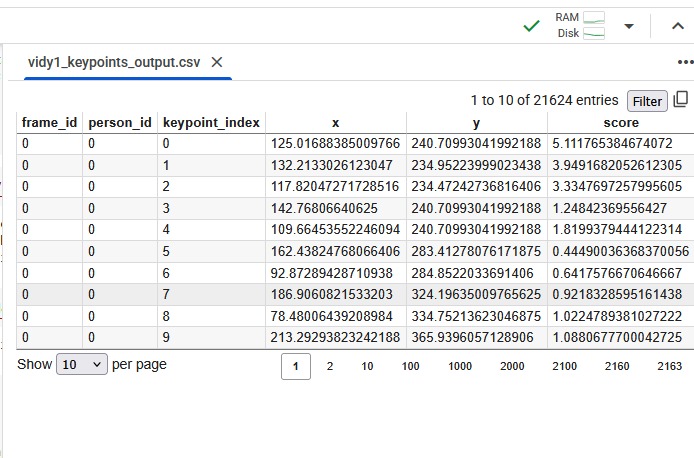

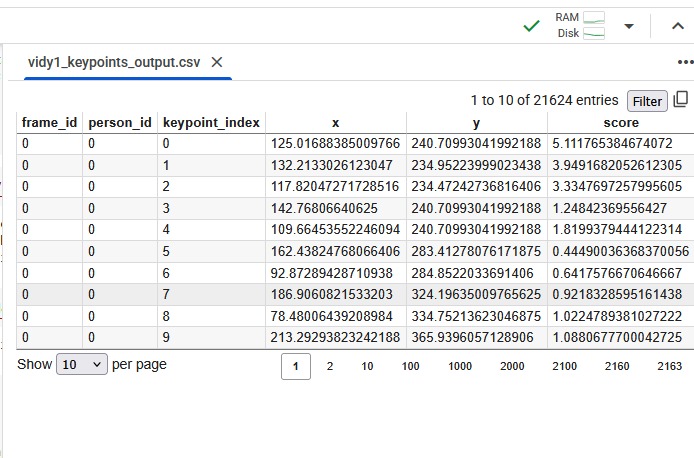

Motor Therapy – Pose Estimation : Keypoints for the first video as a CSV file

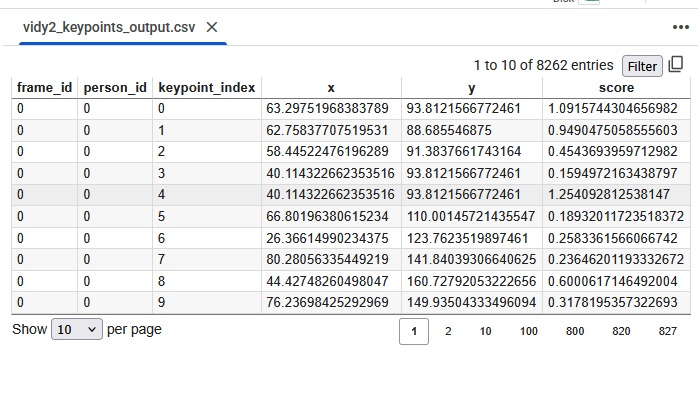

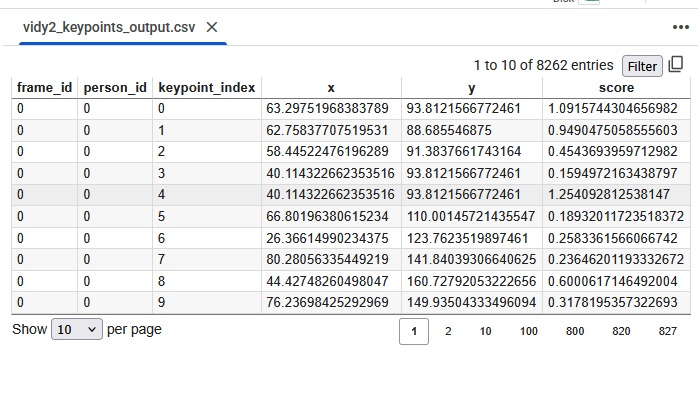

Motor Therapy – Pose Estimation : Keypoints for the second video as a CSV file

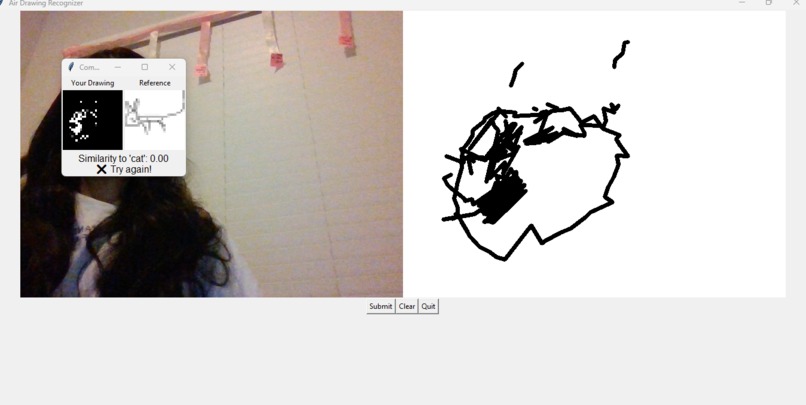

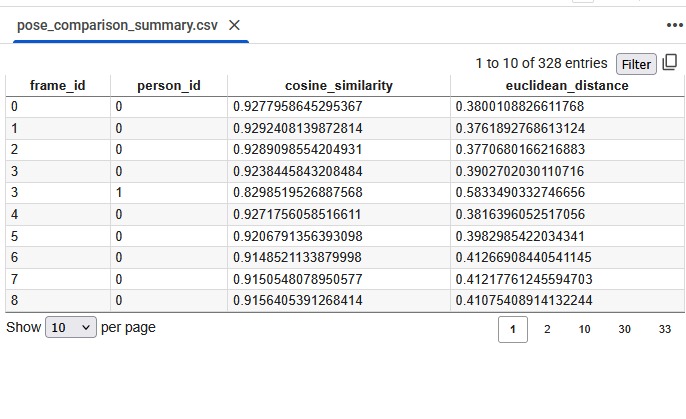

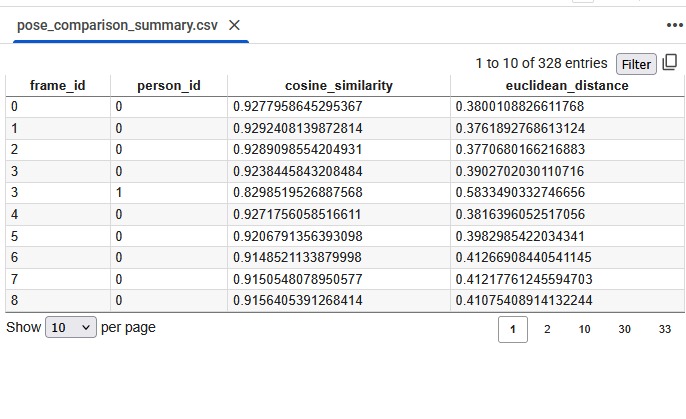

Motor Therapy – Pose Estimation : Summarized keypoints for both videos as a CSV file

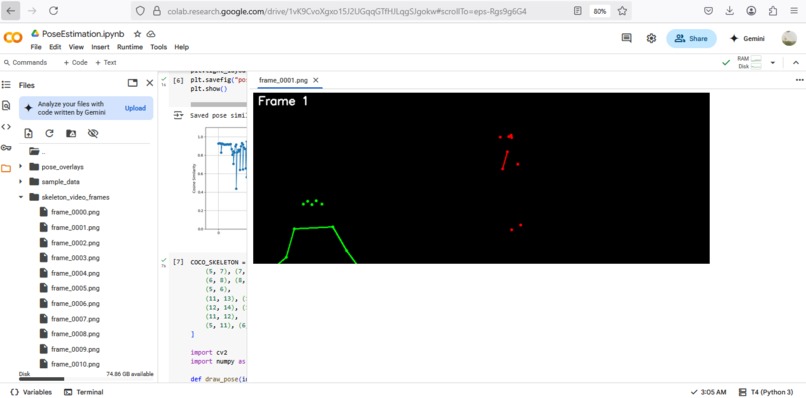

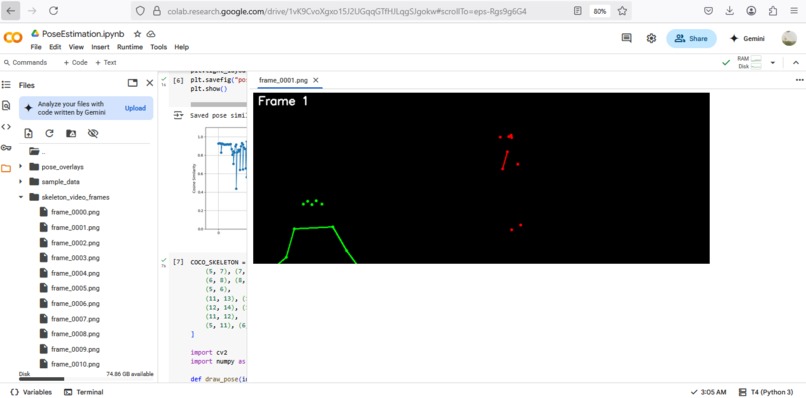

Motor Therapy – Pose Estimation : Comparing poses from individual frames of both videos in skeleton form

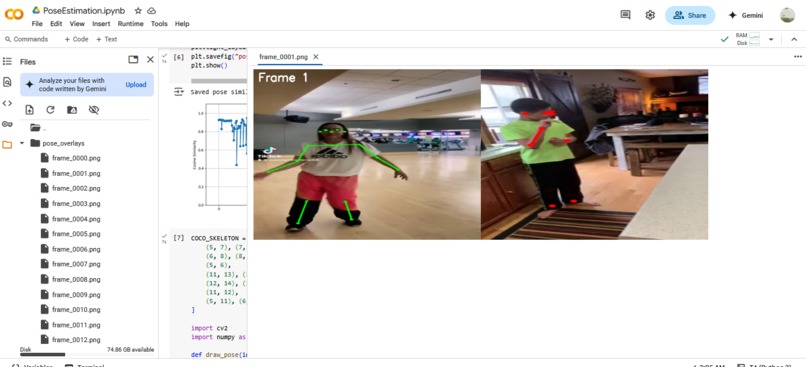

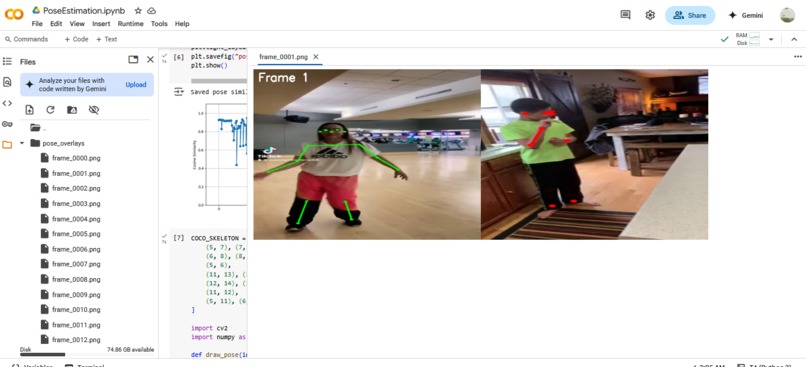

Motor Therapy – Pose Estimation : Comparing poses from individual frames of both videos

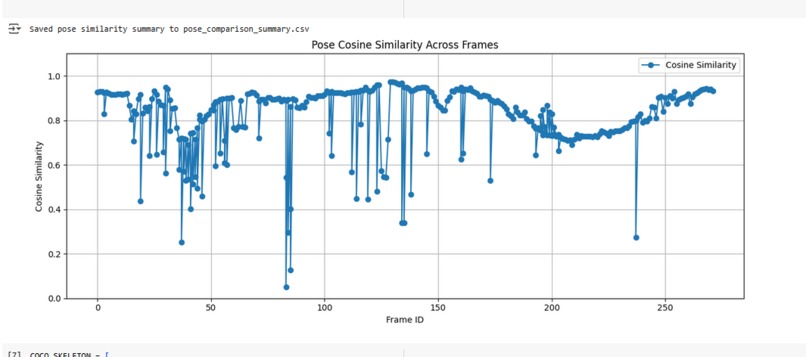

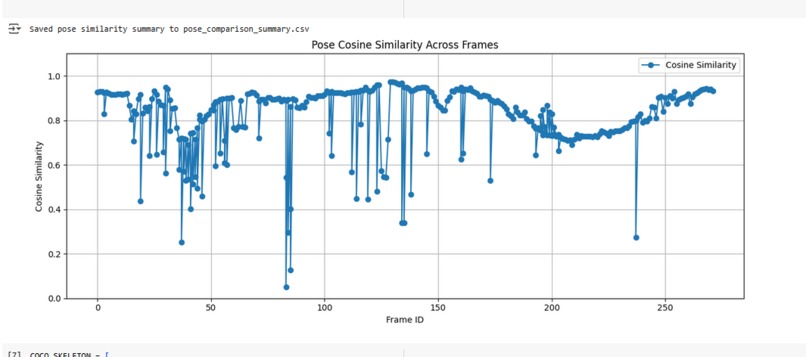

Motor Therapy – Pose Estimation : Graph of the similarity between poses in individual frames of both videos

Motor Therapy – Pose Estimation : Combined video for better analysis

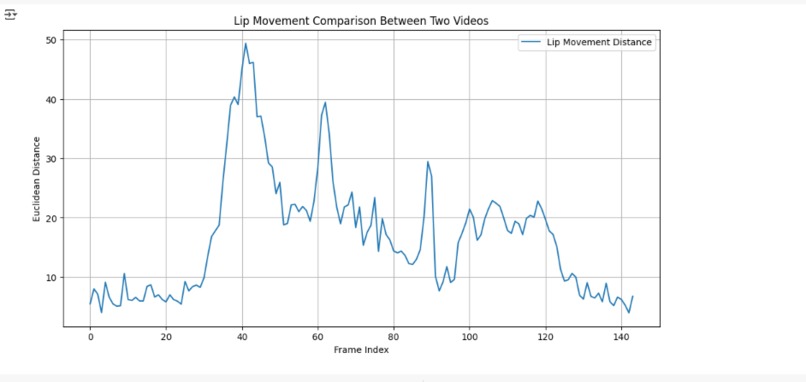

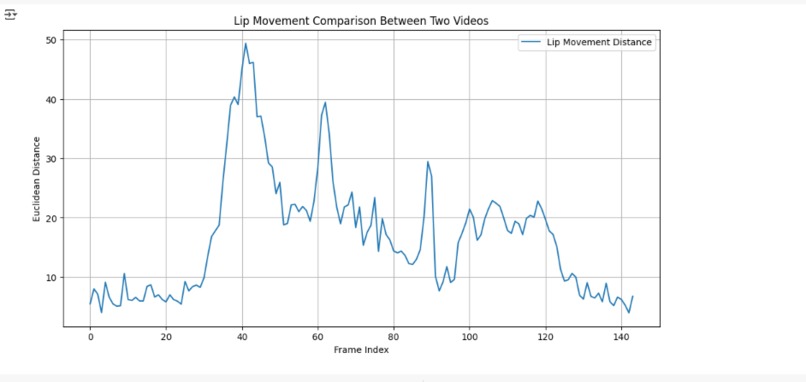

Speech Therapy – Lip Movement Analysis : Graph comparing the lip-movement distances in both videos using Euclidean distance

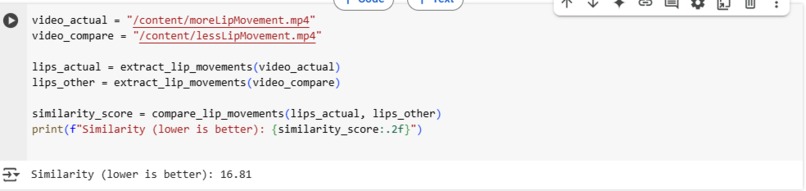

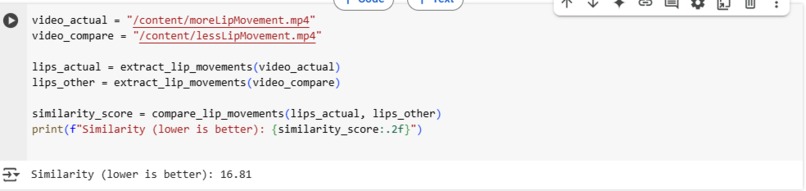

Speech Therapy – Lip Movement Analysis : Finding the overall similarity score between both videos

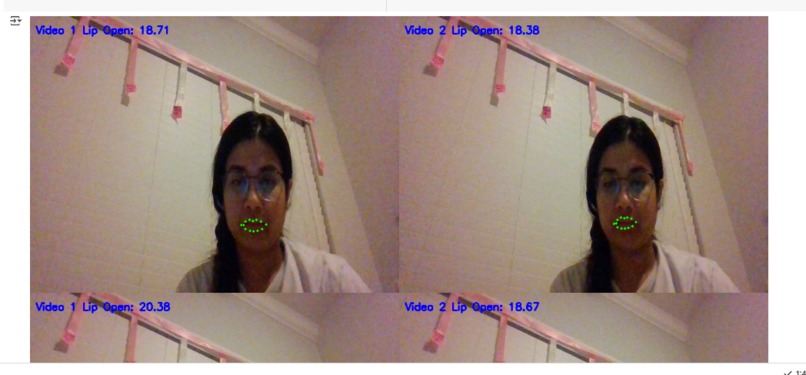

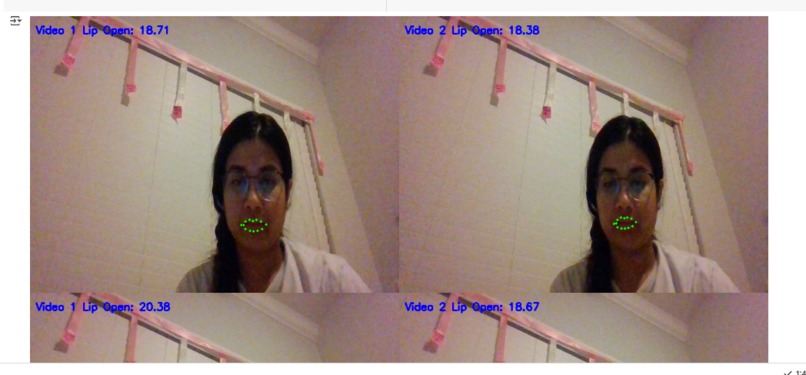

Speech Therapy – Lip Movement Analysis : Combined view of both videos with a real-time lip distance comparison

Inspiration

Driven by a deep commitment to creating inclusive technology, I center my projects around empowering individuals with disabilities. I was especially moved by the unique challenges faced by children with autism and Down syndrome, who often experience difficulty with communication, motor coordination, and self-expression. Through extensive research, I discovered a significant gap in the availability of AI-powered tools designed to support therapeutic interventions for these children. This realization inspired me to design a solution that leverages artificial intelligence to provide objective, measurable support across multiple therapy domains.

What it does

Speech Therapy – Lip Movement Analysis

In traditional speech therapy, children are guided to pronounce specific words or phrases, while therapists observe and evaluate their articulation. To digitize this process:

- Developed a system to compare a reference video (recorded by a parent or therapist) with the child’s attempt to repeat the word.

- Integrated dlib’s pretrained shape_predictor_68_face_landmarks.dat model to track facial landmarks and analyze lip movements.

- Used Euclidean distances between lip landmarks to measure how lip positions change across video frames, providing a quantitative proxy for pronunciation accuracy.

- Visualized the comparison with side-by-side video playback, dynamic graphs, and an overall similarity score, so that even minor articulation differences are easy to spot.
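
A minimal sketch of the lip-distance comparison described above. It assumes the 0-indexed 68-point (iBUG) layout used by `shape_predictor_68_face_landmarks.dat`, in which points 62 and 66 are the centres of the inner upper and lower lip, and takes the distance between them as the per-frame "lip distance". The two per-frame signals are resampled to a common length, normalised by their peaks, and compared with a Euclidean distance that is mapped to a score between 0 and 1. The choice of points 62/66, the helper names, and the `1 / (1 + d)` scoring are illustrative assumptions, not necessarily the project's exact formula.

```python
import numpy as np

# Assumed inner-lip centre points in the 0-indexed 68-point (iBUG) landmark layout.
UPPER_INNER_LIP = 62
LOWER_INNER_LIP = 66


def lip_opening(landmarks: np.ndarray) -> float:
    """Mouth opening for one frame, from a (68, 2) array of (x, y) landmark points."""
    return float(np.linalg.norm(landmarks[UPPER_INNER_LIP] - landmarks[LOWER_INNER_LIP]))


def lip_signal_from_video(video_path: str, predictor_path: str) -> np.ndarray:
    """Per-frame lip opening for the first detected face; frames without a face are skipped."""
    import cv2   # imported lazily so the NumPy-only helpers work without these packages
    import dlib

    detector = dlib.get_frontal_face_detector()
    predictor = dlib.shape_predictor(predictor_path)
    cap = cv2.VideoCapture(video_path)
    values = []
    while True:
        ok, frame = cap.read()
        if not ok:
            break
        gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
        faces = detector(gray, 0)
        if len(faces) == 0:
            continue
        shape = predictor(gray, faces[0])
        pts = np.array([(shape.part(i).x, shape.part(i).y) for i in range(68)], dtype=float)
        values.append(lip_opening(pts))
    cap.release()
    return np.array(values)


def resample(signal: np.ndarray, length: int) -> np.ndarray:
    """Linearly resample a 1-D signal to `length` samples so videos of different duration align."""
    old_x = np.linspace(0.0, 1.0, num=len(signal))
    new_x = np.linspace(0.0, 1.0, num=length)
    return np.interp(new_x, old_x, signal)


def lip_similarity(reference: np.ndarray, attempt: np.ndarray, length: int = 100) -> float:
    """Score in (0, 1]; 1.0 means the normalised lip-opening curves are identical."""
    ref = resample(np.asarray(reference, dtype=float), length)
    att = resample(np.asarray(attempt, dtype=float), length)
    # Normalise by each signal's peak so face size and camera distance matter less.
    ref = ref / (ref.max() or 1.0)
    att = att / (att.max() or 1.0)
    # Euclidean distance scaled by 1/sqrt(n), i.e. the RMS difference between the curves.
    distance = np.linalg.norm(ref - att) / np.sqrt(length)
    return float(1.0 / (1.0 + distance))
```

Normalising by the peak opening is one simple way to compare a parent's and a child's recordings when their faces appear at different sizes; other normalisations (for example by mouth width) would work as well.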

Motor Therapy – Pose Estimation

Motor therapy often involves mimicking physical activities demonstrated by a therapist. My solution automates this assessment:

- Utilized Detectron2’s Keypoint R-CNN (Mask R-CNN extended with a keypoint head) to perform pose estimation and identify key body joints.

- Recorded and compared the child’s actions with ideal movement references.

- Calculated similarity scores to quantify motion accuracy.

- Presented results using video overlays, skeleton overlays and visual graphs, enabling therapists to assess how well the child replicates movements.
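
One way to turn two sets of keypoints into the per-frame similarity scores and graph described above is sketched below. It assumes keypoints in the COCO 17-joint format produced by Detectron2's COCO keypoint models (one `(x, y, score)` row per joint), centres each pose on its mean joint so the person's position in the frame does not matter, and takes the cosine similarity of the flattened poses. The centring step, the `min_score` threshold, and the function names are assumptions for illustration; `sklearn.metrics.pairwise.cosine_similarity` would return the same cosine value as the NumPy expression used here.

```python
import numpy as np


def centre_pose(keypoints: np.ndarray) -> np.ndarray:
    """Subtract the mean joint position from the (x, y) columns of an (N, 3) keypoint array.

    Cosine similarity is already insensitive to overall scale, so only the
    translation has to be removed for body size and position not to matter.
    """
    xy = keypoints[:, :2].astype(float)
    return xy - xy.mean(axis=0)


def pose_similarity(ref_kps: np.ndarray, child_kps: np.ndarray, min_score: float = 0.0) -> float:
    """Cosine similarity between two poses, using only joints detected in both above `min_score`."""
    mask = (ref_kps[:, 2] >= min_score) & (child_kps[:, 2] >= min_score)
    if mask.sum() < 2:
        return 0.0
    a = centre_pose(ref_kps[mask]).ravel()
    b = centre_pose(child_kps[mask]).ravel()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / denom) if denom > 0 else 0.0


def framewise_similarity(ref_video: list, child_video: list) -> list:
    """Similarity for each pair of frames, truncated to the shorter video (ready to plot)."""
    n = min(len(ref_video), len(child_video))
    return [pose_similarity(ref_video[i], child_video[i]) for i in range(n)]
```

The list returned by `framewise_similarity` can be passed directly to `matplotlib.pyplot.plot` to produce a frame-by-frame similarity graph, and its mean gives a single overall score.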

Interpretation Therapy – Visual Understanding through Drawing

Many children with developmental disorders struggle with connecting words to visuals. This module evaluates their ability to interpret verbal prompts:

- Used the QuickDraw dataset and Python API to simulate real-time drawing tasks.

- Displayed a simple word prompt (e.g., “cat”) and asked the child to draw the object.

- Compared the child’s drawing with multiple reference samples from the QuickDraw dataset.

- Measured semantic alignment between the prompt and drawing, offering insight into cognitive and interpretive skills.
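
A sketch of the comparison step, assuming the child's drawing and each QuickDraw reference have already been rendered as same-sized grayscale arrays. Because `skimage.metrics.structural_similarity` appears in the project's library list, SSIM is a plausible metric; to keep the example dependency-light it computes a simplified single-window (global) SSIM in NumPy, whereas scikit-image averages SSIM over sliding local windows, so the numbers will differ. The `load_references` helper, the 64x64 size, and reporting the best match across references are assumptions.

```python
import numpy as np


def global_ssim(x: np.ndarray, y: np.ndarray, data_range: float = 255.0) -> float:
    """Single-window SSIM between two equally sized grayscale images."""
    x = x.astype(float)
    y = y.astype(float)
    c1 = (0.01 * data_range) ** 2  # standard SSIM stabilising constants
    c2 = (0.03 * data_range) ** 2
    mx, my = x.mean(), y.mean()
    vx = np.mean((x - mx) ** 2)
    vy = np.mean((y - my) ** 2)
    cov = np.mean((x - mx) * (y - my))
    num = (2 * mx * my + c1) * (2 * cov + c2)
    den = (mx ** 2 + my ** 2 + c1) * (vx + vy + c2)
    return float(num / den)


def load_references(category: str, count: int = 5, size: int = 64) -> list:
    """Fetch `count` random QuickDraw drawings of `category` as grayscale arrays (needs network)."""
    from quickdraw import QuickDrawData  # imported lazily; downloads data on first use

    qd = QuickDrawData()
    refs = []
    for _ in range(count):
        image = qd.get_drawing(category).image.convert("L").resize((size, size))
        refs.append(np.asarray(image, dtype=float))
    return refs


def drawing_score(child: np.ndarray, references: list) -> dict:
    """Compare the child's drawing with every reference and report the best and mean SSIM."""
    scores = [global_ssim(child, ref) for ref in references]
    return {"best": max(scores), "mean": float(np.mean(scores)), "all": scores}
```

Taking the best match rather than the mean rewards a drawing that resembles any one valid way of drawing the object, which suits QuickDraw's highly varied samples.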

How we built it

- Built with Python and several supporting libraries.

The core models and datasets required are:

- Detectron2 Keypoint R-CNN model (for Motor Therapy)

- dlib’s shape_predictor_68_face_landmarks.dat file (for Speech Therapy)

- QuickDraw library (for Interpretation Therapy)
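
For reference, loading a Detectron2 Keypoint R-CNN model and writing per-frame keypoints to CSV might look like the sketch below. It assumes the `COCO-Keypoints/keypoint_rcnn_R_50_FPN_3x.yaml` config from the Detectron2 model zoo, keeps only the first detected person per frame, and uses a hypothetical `x_<joint>`, `y_<joint>`, `score_<joint>` column layout; the project's actual config and CSV schema may differ.

```python
import csv
from typing import Optional

import numpy as np

# COCO keypoint order used by Detectron2's COCO-Keypoints models.
COCO_JOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]


def build_keypoint_predictor(score_thresh: float = 0.7):
    """Create a Detectron2 DefaultPredictor for Keypoint R-CNN (requires detectron2 and torch)."""
    import torch
    from detectron2 import model_zoo
    from detectron2.config import get_cfg
    from detectron2.engine import DefaultPredictor

    config_name = "COCO-Keypoints/keypoint_rcnn_R_50_FPN_3x.yaml"
    cfg = get_cfg()
    cfg.merge_from_file(model_zoo.get_config_file(config_name))
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url(config_name)
    cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST = score_thresh
    cfg.MODEL.DEVICE = "cuda" if torch.cuda.is_available() else "cpu"
    return DefaultPredictor(cfg)


def first_person_keypoints(outputs) -> Optional[np.ndarray]:
    """Return the (17, 3) keypoints of the first detected person, or None if nobody was found."""
    instances = outputs["instances"].to("cpu")
    if len(instances) == 0:
        return None
    return instances.pred_keypoints[0].numpy()


def keypoints_to_row(frame_index: int, keypoints: np.ndarray) -> dict:
    """Flatten a (17, 3) keypoint array into one CSV row keyed by joint name."""
    row = {"frame": frame_index}
    for name, (x, y, score) in zip(COCO_JOINTS, keypoints):
        row[f"x_{name}"] = float(x)
        row[f"y_{name}"] = float(y)
        row[f"score_{name}"] = float(score)
    return row


def write_keypoint_csv(rows: list, path: str) -> None:
    """Write rows produced by keypoints_to_row to a CSV file."""
    fieldnames = ["frame"] + [f"{axis}_{name}" for name in COCO_JOINTS for axis in ("x", "y", "score")]
    with open(path, "w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)
```

In a processing loop, each video frame (a BGR array from OpenCV) would be passed to the predictor, `first_person_keypoints` applied to its output, and the resulting rows collected and written once per video.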

The libraries used are: opencv-python-headless (cv2), dlib, imutils (face_utils), numpy, scipy (scipy.spatial.distance), pandas, matplotlib (matplotlib.pyplot), scikit-image (skimage.metrics.structural_similarity), scikit-learn (sklearn.metrics.pairwise.cosine_similarity), torch, detectron2 (model_zoo, detectron2.config.get_cfg, detectron2.engine.DefaultPredictor), quickdraw (QuickDrawData), moviepy (moviepy.editor.VideoFileClip, clips_array), google.colab.patches (cv2_imshow), and the standard-library modules os, time, datetime, tempfile, urllib.request and bz2.
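
The presence of `urllib.request` and `bz2` in the library list suggests the dlib landmark model is downloaded and decompressed at runtime. A minimal sketch of that step, assuming the `.bz2` archive published on dlib.net; the helper names and the caching behaviour are illustrative.

```python
import bz2
import os
import shutil
import urllib.request

# Public download location of the compressed dlib 68-point landmark model.
MODEL_URL = "http://dlib.net/files/shape_predictor_68_face_landmarks.dat.bz2"


def decompress_bz2(src_path: str, dst_path: str) -> None:
    """Stream-decompress a .bz2 file so the large model is never held fully in memory."""
    with bz2.open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        shutil.copyfileobj(src, dst)


def ensure_landmark_model(dst_path: str = "shape_predictor_68_face_landmarks.dat",
                          url: str = MODEL_URL) -> str:
    """Download and decompress the landmark model once; later calls reuse the local file."""
    if not os.path.exists(dst_path):
        archive_path = dst_path + ".bz2"
        urllib.request.urlretrieve(url, archive_path)
        decompress_bz2(archive_path, dst_path)
        os.remove(archive_path)
    return dst_path
```

Caching the decompressed file matters in Colab, where each new session would otherwise repeat the download.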

Challenges we ran into

Research Depth:

Spent considerable time analyzing existing AI applications in therapy to ensure the project was both innovative and impactful.

Library Conflicts:

Resolved compatibility issues involving MediaPipe and TensorFlow across local and Colab environments by reconfiguring dependencies and adapting workflows.

Data Handling:

Transitioned from large, cumbersome .npy files to a lightweight, modular integration using the QuickDraw Python library.

Video Processing:

Overcame Colab's lack of webcam support by engineering a workaround that allowed for in-memory video recording and analysis.

Deployment Limitations:

Explored platforms like Tkinter, Gradio, and Streamlit for unified deployment, but due to compatibility and time constraints, opted for individual, structured Jupyter notebooks.

Resource Accessibility:

Faced challenges in sourcing appropriate datasets, particularly for lip movement comparison. In the absence of real-world data, I recorded my own sample videos with varied lip motions. Unfortunately, due to a lack of access to children with special needs, I was unable to collect direct feedback, which I consider a future goal.

Accomplishments that we're proud of

Learning about new models and libraries was valuable, but being able to use that knowledge to create something that supports children with special needs made the experience truly fulfilling.

What we learned

- Gained hands-on experience in applying AI and computer vision to address real-world challenges in therapeutic care.

- Explored advanced tools such as dlib, OpenCV, Detectron2, and the QuickDraw Python API.

- Developed a deeper understanding of the therapist’s workflow and the limitations of manual assessment methods.

- Learned how to convert behavioral observations into quantifiable data for clearer analysis and decision-making.

What's next for HopeNest AI

- Deploying on a public platform such as Streamlit or Gradio with an improved UI for easier use.

- Finding the right audience to try the app.

- Adding more real-time analysis methods.

Built With

- bz2

- datetime

- detectron2 (model_zoo, get_cfg, DefaultPredictor)

- dlib

- google.colab.patches

- imutils (face_utils)

- matplotlib (pyplot)

- moviepy.editor (VideoFileClip, clips_array)

- numpy

- opencv-python-headless (cv2)

- os

- pandas

- python

- quickdraw (QuickDrawData)

- scipy (scipy.spatial.distance)

- skimage.metrics (structural_similarity)

- sklearn.metrics.pairwise (cosine_similarity)

- tempfile

- time

- torch

- urllib.request
