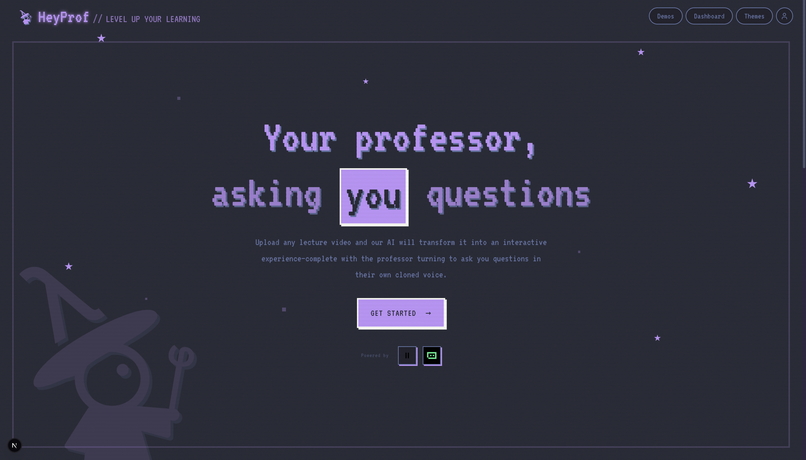

Welcome to Home page

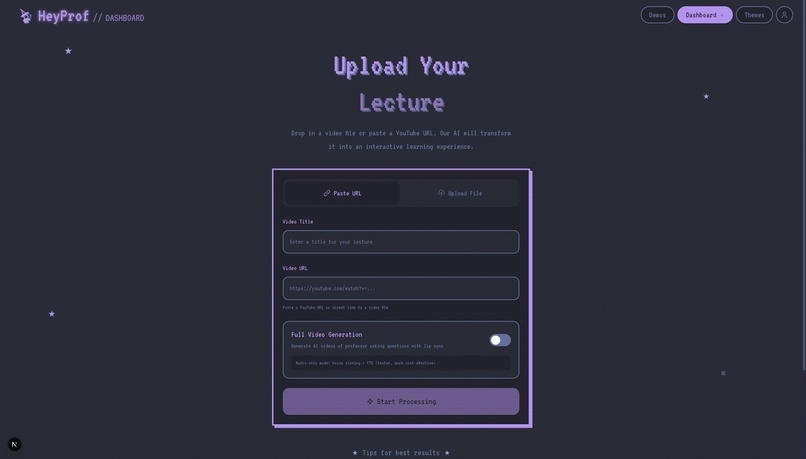

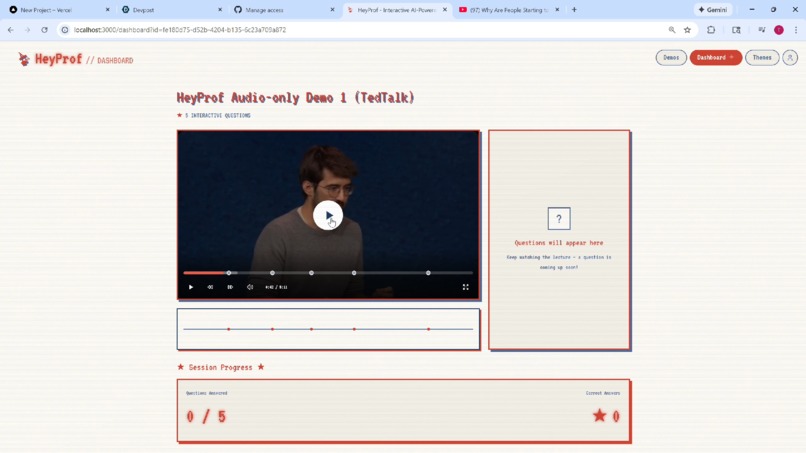

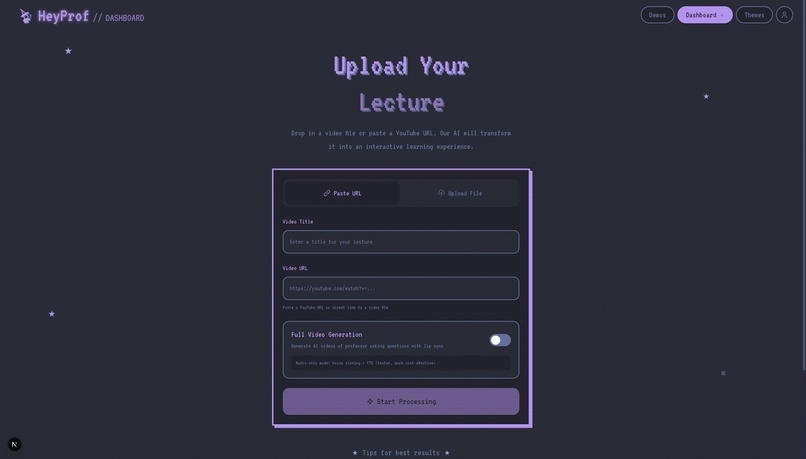

Dashboard - Upload a video file or paste a YouTube link, choose options, and let our AI transform it into an interactive experience.

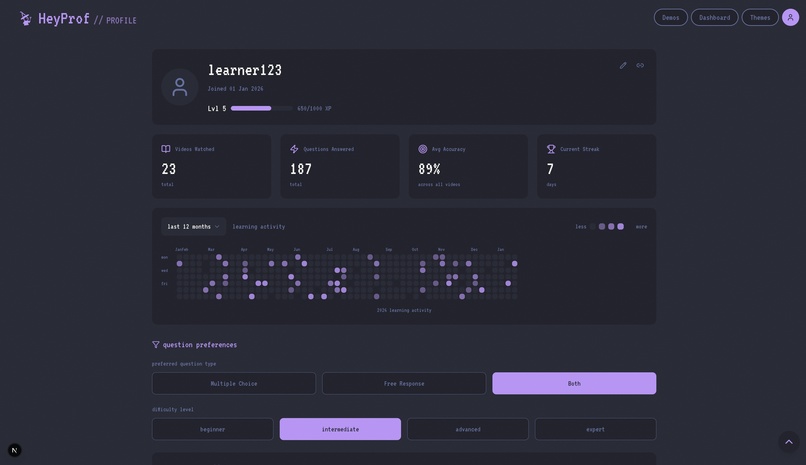

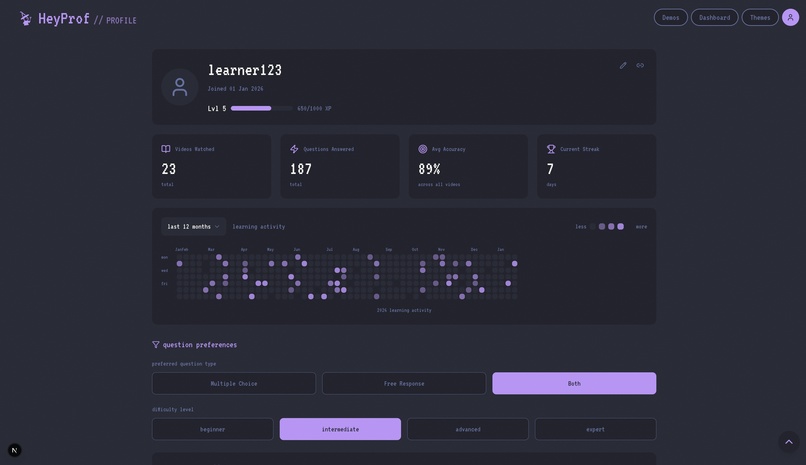

UserPage - Track progress with levels, accuracy, streaks, and activity history; toggle question settings.

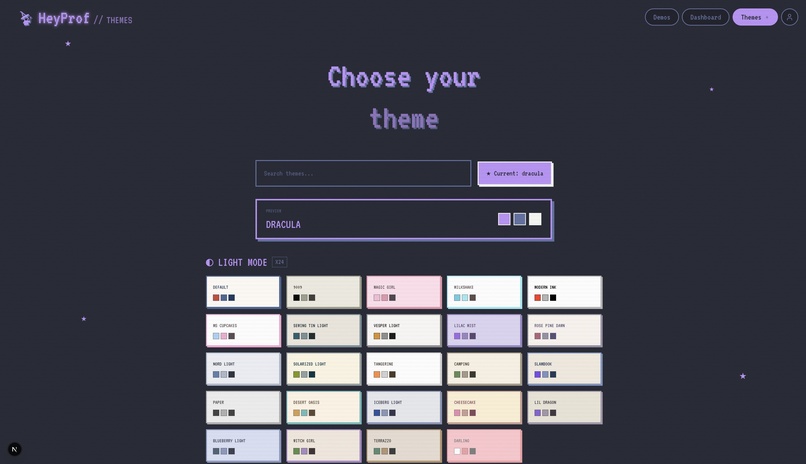

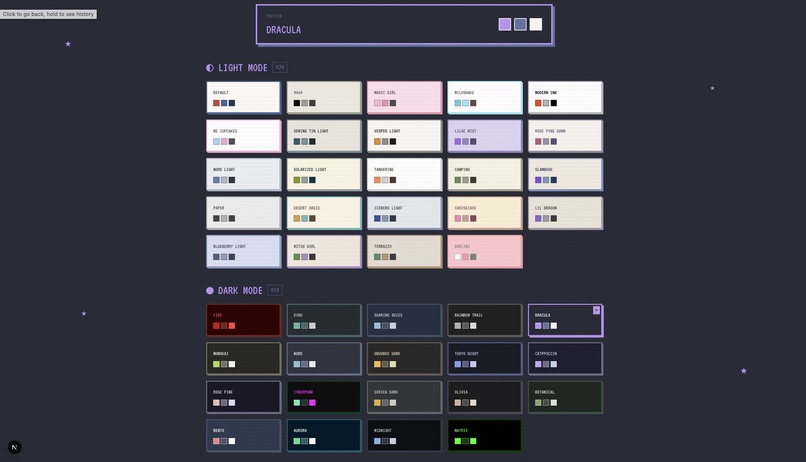

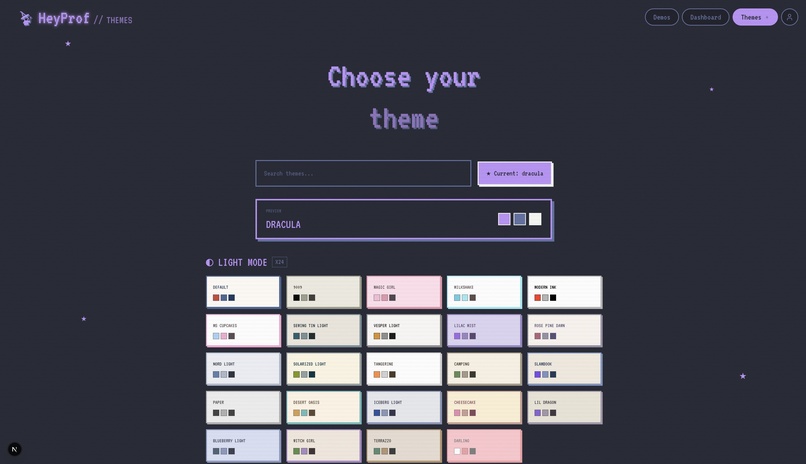

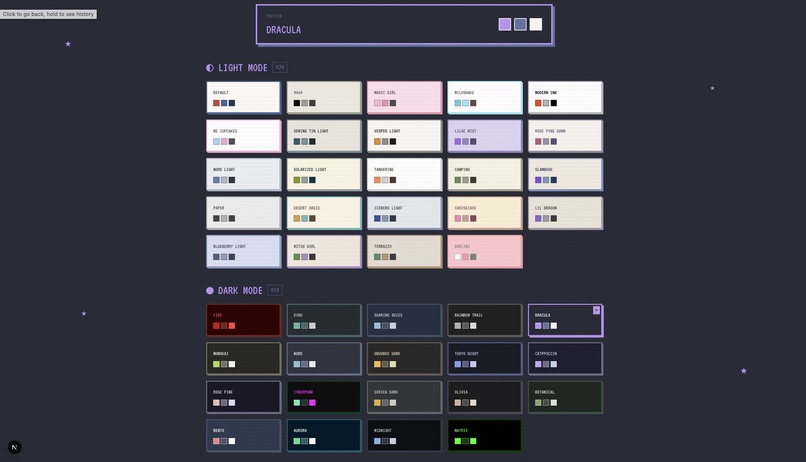

Themes - Rich theme library with light and dark modes, preview palettes, and instant switching for personalized learning.

Themes - Customize the learning experience with dozens of themes, letting students learn in an interface that fits their style.

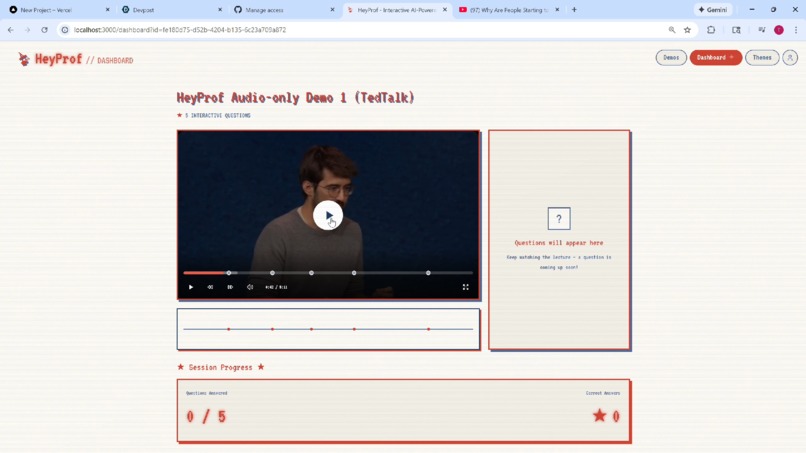

Video - Watching a Lecture

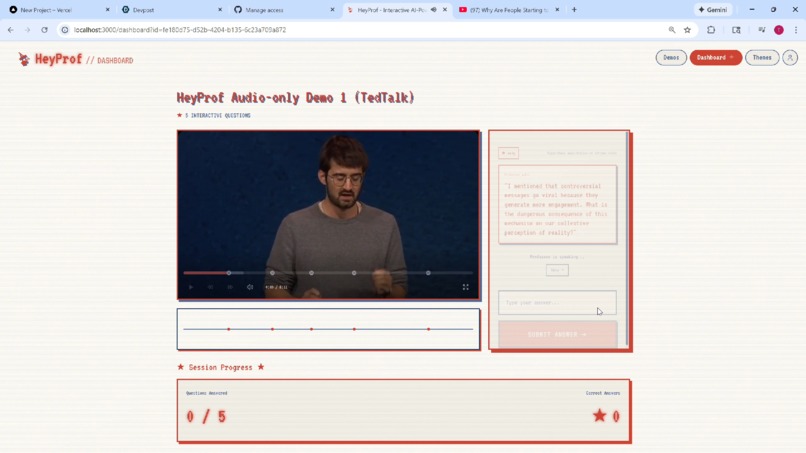

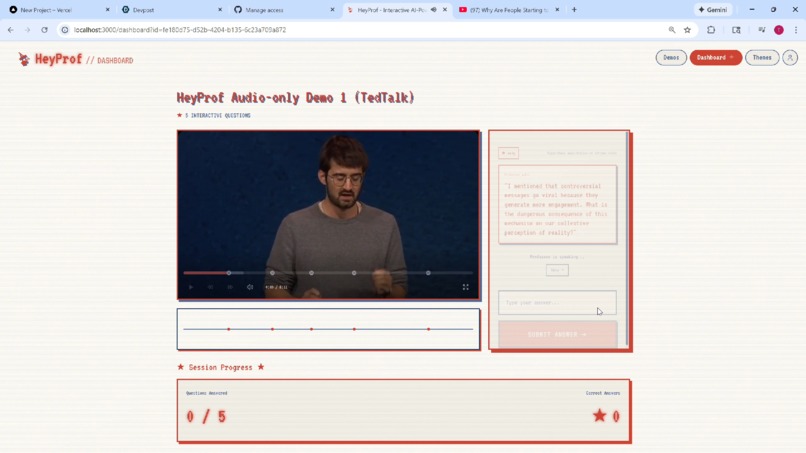

Video - A Question is Prompted

Inspiration

We've all been there. You forgot to set your phone alarm and woke up after your morning math lecture was already over. And when you try to catch up later by watching the recording online, it's useless. It's too easy to zone out, scroll Instagram reels, and let the video play in the background while your mind wanders.

The problem is that watching lectures is passive learning. There's no accountability, no feedback, and no one checking if you actually understood what was just explained. We built Hey Prof to transform passive learning into active learning, where the professor stops, looks at you, and asks you questions, as if you were in the class.

What it does

Hey Prof takes any boring video content and transforms it into an interactive experience.

- Deep Understanding: It uses Gemini 1.5 Pro to watch and "understand" the lecture, identifying key concepts and optimal moments to check for understanding.

- Voice & Video Cloning: It extracts the professor's voice to create a high-fidelity clone using ElevenLabs. It then uses Kling AI to generate realistic video segments of the professor turning away from the whiteboard to look directly at the camera (you).

- Perfect Lip Sync: It generates audio questions in the professor's voice and uses Sync.so to perfectly lip-sync the generated video to this new audio.

- Interactive Quizzing: The result is a seamless video player where the professor periodically stops teaching, turns to "look" at the student, and asks a relevant question. If the student answers incorrectly, the AI professor explains the concept again and offers encouragement in their own voice.
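The pause-and-ask behavior described above can be sketched as a small pure function. This is only an illustration under assumptions: the `Checkpoint` shape and the `dueCheckpoint` name are hypothetical and not taken from the Hey Prof codebase.

```typescript
// Hypothetical shape for a question checkpoint (an assumption for this sketch).
interface Checkpoint {
  id: string;
  atSeconds: number; // playback time at which the lecture should pause
}

// Returns the earliest unanswered checkpoint that playback has already reached,
// or null when nothing is due yet.
function dueCheckpoint(
  checkpoints: Checkpoint[],
  currentTime: number,
  answered: ReadonlySet<string>,
): Checkpoint | null {
  const due = checkpoints
    .filter((c) => c.atSeconds <= currentTime && !answered.has(c.id))
    .sort((a, b) => a.atSeconds - b.atSeconds);
  return due[0] ?? null;
}
```

In a browser player, a function like this could be called from the video element's `timeupdate` event, pausing playback and swapping in the generated professor clip whenever it returns a checkpoint.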

How we built it

The application is built on Next.js 16 with a Firebase backend that orchestrates a multi-stage AI pipeline.

- Gemini API serves as the brain, analyzing video transcripts and visuals to generate pedagogically meaningful, context-aware questions and personalized feedback.

- FFmpeg handles video processing, such as extracting audio samples for voice cloning and keyframes for video generation.

- yt-dlp enables direct downloading of YouTube lecture content.

- ElevenLabs provides high-fidelity voice cloning from extracted audio samples.

- Kling AI generates the "turning" video transitions from static lecture frames.

- Sync.so performs lip-synchronization to make the generated video speak the generated audio naturally.

- The frontend uses React 19, Tailwind CSS 4, and Radix UI primitives for a polished, accessible interface.
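As a rough illustration of the FFmpeg step, the two extractions could be expressed as argument lists like the ones below. The helper names, file names, mono channel layout, and 44.1 kHz sample rate are assumptions made for this sketch, not settings confirmed by the project.

```typescript
// Builds ffmpeg arguments that cut a short mono WAV sample for voice cloning.
// Mono and 44100 Hz are illustrative choices, not known requirements.
function audioSampleArgs(
  input: string,
  startSec: number,
  durationSec: number,
  output: string,
): string[] {
  return [
    "-y",                      // overwrite the output file if it exists
    "-ss", String(startSec),   // seek to the start of the sample
    "-t", String(durationSec), // limit how much of the input is read
    "-i", input,
    "-vn",                     // drop the video stream
    "-ac", "1",                // downmix to one audio channel
    "-ar", "44100",            // resample to 44.1 kHz
    output,
  ];
}

// Builds ffmpeg arguments that grab a single still frame at a timestamp.
function keyframeArgs(input: string, atSec: number, output: string): string[] {
  return ["-y", "-ss", String(atSec), "-i", input, "-frames:v", "1", output];
}
```

Either list could then be handed to `spawn("ffmpeg", args)` from Node's `child_process` module. Keeping the argument construction separate from process spawning makes it easy to unit test.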

Challenges we ran into

Hallucination compounding: When you chain multiple AI models together, hallucinations don't just add up, they multiply. A small error in Gemini's understanding leads to a bad question, which leads to confusing feedback. We spent significant time on prompt engineering and validation layers between each stage to catch and correct errors before they propagate.
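A validation layer between stages might look something like the sketch below. It assumes multiple-choice questions with a timestamp, which the write-up does not confirm. The `GeneratedQuestion` shape and the specific checks are hypothetical.

```typescript
// Hypothetical shape of a question coming out of the generation stage.
interface GeneratedQuestion {
  prompt: string;
  choices: string[];
  correctIndex: number;
  atSeconds: number; // where in the lecture the question should appear
}

// Returns a list of problems; an empty list means the question may move on
// to the next stage (for example, speech synthesis).
function validateQuestion(q: GeneratedQuestion, videoDurationSec: number): string[] {
  const problems: string[] = [];
  if (q.prompt.trim().length === 0) problems.push("empty prompt");
  if (q.choices.length < 2) problems.push("fewer than two choices");
  const distinct = new Set(q.choices.map((c) => c.trim().toLowerCase()));
  if (distinct.size !== q.choices.length) problems.push("duplicate choices");
  if (
    !Number.isInteger(q.correctIndex) ||
    q.correctIndex < 0 ||
    q.correctIndex >= q.choices.length
  ) {
    problems.push("correctIndex out of range");
  }
  if (!(q.atSeconds >= 0 && q.atSeconds <= videoDurationSec)) {
    problems.push("timestamp outside video");
  }
  return problems;
}
```

Structural checks like these only catch malformed output. Catching a question that is well-formed but factually wrong would need something more, such as a second model pass against the transcript.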

Processing time: The full pipeline (transcription, question generation, voice cloning, video generation, and lip sync) takes considerable time. Optimizing for quality while keeping wait times reasonable required careful tradeoffs.

Frame selection for video generation: Not every frame works well as a source for Kling's turning animation. We had to identify frames where the professor's posture, lighting, and positioning would translate naturally to a head turn.
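One way to think about frame selection is as filtering and then ranking candidates. The sketch below is purely illustrative: the per-frame measurements (`personVisible`, `brightness`, `centering`), the 0.3 brightness cutoff, and the equal weights are all assumptions, and the write-up does not say how the team actually scored frames.

```typescript
// Hypothetical per-frame measurements, assumed to come from an upstream analysis step.
interface FrameCandidate {
  atSeconds: number;
  personVisible: boolean;
  brightness: number; // 0..1, higher means better lit
  centering: number;  // 0..1, 1 means the professor is centered in frame
}

// Discards unusable frames, then returns the highest-scoring one (or null).
function pickSourceFrame(frames: FrameCandidate[]): FrameCandidate | null {
  let best: FrameCandidate | null = null;
  let bestScore = -Infinity;
  for (const f of frames) {
    // Hard filters: no visible person or a very dark frame is never a candidate.
    if (!f.personVisible || f.brightness < 0.3) continue;
    // Illustrative equal weighting of lighting and positioning.
    const score = 0.5 * f.brightness + 0.5 * f.centering;
    if (score > bestScore) {
      best = f;
      bestScore = score;
    }
  }
  return best;
}
```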

Accomplishments that we're proud of

When we first saw the generated professor turn around and ask a question in their own voice, it genuinely felt like magic. The illusion really works! It feels like the professor is actually checking in on you.

What we learned

This project touched vision, language, speech synthesis, voice cloning, video generation, and lip synchronization. Seeing how all these capabilities can be composed was really damn cool.

Prompt engineering is honestly an art. Small changes in how we instructed Gemini dramatically affected the quality and relevance of generated questions. Getting the AI to think like a teacher, not a quiz bot, took iteration.

What's next for Hey Prof

The current pipeline is slow. We want to parallelize stages, add caching, and explore faster model alternatives to reduce processing time significantly.
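To see where parallelism might help, the stages can be grouped into "waves" that have no dependencies on each other. The sketch below is a minimal illustration: the stage names and the dependency graph are inferred from the description on this page and are assumptions, not the project's actual job graph.

```typescript
// Maps each stage to the stages it must wait for.
type StageGraph = Record<string, string[]>;

// Groups stages into waves; every stage in a wave depends only on earlier waves,
// so the stages within one wave could run concurrently.
function planWaves(graph: StageGraph): string[][] {
  const done = new Set<string>();
  const waves: string[][] = [];
  let remaining = Object.keys(graph);
  while (remaining.length > 0) {
    const ready = remaining.filter((s) => (graph[s] ?? []).every((d) => done.has(d)));
    if (ready.length === 0) throw new Error("cycle or missing dependency");
    ready.forEach((s) => done.add(s));
    waves.push(ready);
    remaining = remaining.filter((s) => !done.has(s));
  }
  return waves;
}

// Assumed dependency graph for illustration only.
const assumedStages: StageGraph = {
  transcribe: [],
  extractAudio: [],
  extractKeyframes: [],
  generateQuestions: ["transcribe"],
  cloneVoice: ["extractAudio"],
  generateTurnVideo: ["extractKeyframes"],
  synthesizeQuestionAudio: ["generateQuestions", "cloneVoice"],
  lipSync: ["generateTurnVideo", "synthesizeQuestionAudio"],
};
```

Under these assumptions, voice cloning and turn-video generation land in the same wave, so they could be started together with `Promise.all` rather than one after the other. Caching each stage's output by a hash of its inputs would be a separate, complementary optimization.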

Built With

- ffmpeg

- firebase

- gemini-api

- kling-ai

- next.js

- sync.so

- tailwind-css

- typescript
