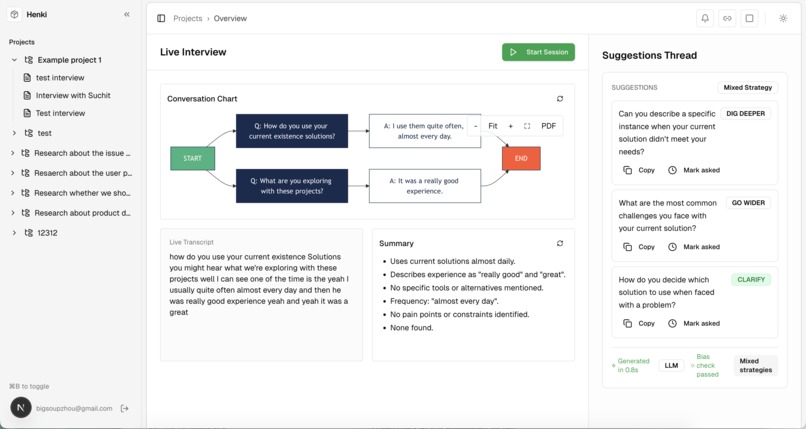

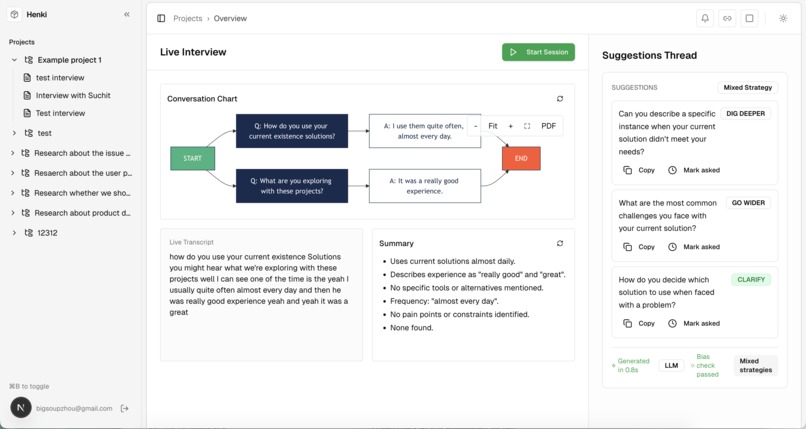

Inspiration

Interviews happen everywhere, sometimes over Zoom, sometimes across the table. In both cases, the same problems surface: note-taking distracts from listening, and missed follow-ups lead to shallow insights. We wanted a copilot that lowers cognitive load so interviewers can stay fully present while still capturing richer, more reliable data.

Screenshot

Henki is deployed live! Give it a try: https://hack2025-sr2p.vercel.app/

What it does

Henki acts as a real-time interview copilot:

Live capture: Streams transcription from either a shared browser tab (remote interviews) or your device microphone (in-person).

Smart follow-ups: Generates follow-up prompts on the fly, each tagged with a category (probe, deep, quantify, or clarify).

Fast interaction: Quickly insert, copy, like/dislike, or regenerate suggestions without breaking flow.

Organized workspace: Interviews are grouped by project, with a clean, keyboard-first UI designed for speed.
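As an illustration of what "categorized" follow-ups could look like in code, here is a minimal sketch of a typed suggestion and a defensive parser for model output. The four category names come from the description above; the `Suggestion` field names, the JSON-array response format, and `parseSuggestions` are assumptions for illustration, not Henki's actual schema.

```typescript
// Hypothetical shape for a follow-up suggestion; field names are assumptions.
type SuggestionCategory = "probe" | "deep" | "quantify" | "clarify";

interface Suggestion {
  category: SuggestionCategory;
  text: string;
  rationale?: string;
}

const CATEGORIES: SuggestionCategory[] = ["probe", "deep", "quantify", "clarify"];

function isCategory(value: unknown): value is SuggestionCategory {
  return (
    typeof value === "string" &&
    CATEGORIES.indexOf(value as SuggestionCategory) !== -1
  );
}

// Parse a JSON array returned by the model, keeping only well-formed entries.
// Malformed JSON or unknown categories are dropped rather than thrown,
// so a bad response never interrupts the interviewer.
function parseSuggestions(raw: string): Suggestion[] {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return [];
  }
  if (!Array.isArray(data)) return [];

  const out: Suggestion[] = [];
  for (const item of data) {
    if (typeof item !== "object" || item === null) continue;
    const rec = item as Record<string, unknown>;
    const category = rec["category"];
    const text = typeof rec["text"] === "string" ? (rec["text"] as string).trim() : "";
    if (!isCategory(category) || text === "") continue;
    const suggestion: Suggestion = { category, text };
    const rationale = rec["rationale"];
    if (typeof rationale === "string") suggestion.rationale = rationale;
    out.push(suggestion);
  }
  return out;
}
```

Dropping invalid entries silently is a design choice suited to a live session; a stricter variant could log them for later prompt tuning.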

How we built it

Frontend: Next.js 15, React 19, TypeScript, Tailwind CSS, shadcn/ui, Lucide.

Live capture:

Remote: Screen/tab audio via getDisplayMedia + MediaRecorder, with embedded video preview.

In-person: Microphone capture via getUserMedia, with chunked upload/streaming fallback.

Transcription: FastAPI WebSocket proxy to Deepgram (Nova-2/Nova-3), handling both interim and final segments.

AI suggestions: OpenAI prompts tuned for concise, actionable follow-ups.

Data & Auth: Supabase (Postgres + Auth + Storage).

Infra: pnpm, ESLint/TS, Vercel (frontend), Python backend service.
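To make the interim/final handling in the transcription step concrete, here is a minimal sketch of a client-side transcript reducer. It assumes the proxy forwards each result as a `{ text, isFinal }` object; that message shape and all names below (`TranscriptSegment`, `applySegment`, `renderTranscript`) are hypothetical, and the real payload may differ.

```typescript
// Hypothetical message shape forwarded by the proxy; the real payload may differ.
interface TranscriptSegment {
  text: string;
  isFinal: boolean;
}

interface TranscriptState {
  committed: string[]; // final segments, never rewritten once added
  interim: string;     // latest provisional text, replaced on every update
}

const emptyTranscript: TranscriptState = { committed: [], interim: "" };

// Pure reducer: returns a new state instead of mutating the old one.
function applySegment(
  state: TranscriptState,
  seg: TranscriptSegment
): TranscriptState {
  const text = seg.text.trim();
  if (seg.isFinal) {
    // A final result supersedes whatever interim text was showing.
    return {
      committed: text === "" ? state.committed : state.committed.concat(text),
      interim: "",
    };
  }
  // Interim results overwrite each other instead of accumulating.
  return { committed: state.committed, interim: text };
}

// Text shown in the composer: all final segments plus the current interim tail.
function renderTranscript(state: TranscriptState): string {
  const parts =
    state.interim === ""
      ? state.committed
      : state.committed.concat(state.interim);
  return parts.join(" ");
}
```

Keeping interim text in its own slot means each provisional update replaces the previous one, which is one way to avoid duplicated words when the final segment arrives.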

Challenges we ran into

Handling browser quirks across tab audio vs. mic input, MIME support, and HTTPS/permission flows.

Managing interim vs. final text without flicker or duplication.

Tackling echo/noise for in-room interviews while avoiding invalid MediaRecorder states.

Designing a UX that feels seamless across both live mode and manual editing.
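Two of the challenges above, MIME support and invalid MediaRecorder states, lend themselves to small guard helpers. The sketch below is illustrative only: the candidate MIME list, the `RecorderLike` interface, and the helper names are assumptions, not the project's code. The support check is injected so the logic can be tested outside a browser.

```typescript
// Minimal structural view of MediaRecorder so the helpers can be unit-tested
// outside a browser; a real MediaRecorder instance satisfies this interface.
interface RecorderLike {
  readonly state: "inactive" | "recording" | "paused";
  start(timeslice?: number): void;
  stop(): void;
}

// Candidate list is an assumption; adjust it to what the backend accepts.
const CANDIDATE_MIME_TYPES: string[] = [
  "audio/webm;codecs=opus",
  "audio/webm",
  "audio/mp4",
];

// Returns the first supported type, or undefined to let the browser choose.
// In the browser, pass (t) => MediaRecorder.isTypeSupported(t).
function pickMimeType(
  isSupported: (type: string) => boolean,
  candidates: string[] = CANDIDATE_MIME_TYPES
): string | undefined {
  for (const type of candidates) {
    if (isSupported(type)) return type;
  }
  return undefined;
}

// Start only from "inactive"; starting an active recorder is an invalid state.
function safeStart(rec: RecorderLike, timesliceMs: number): boolean {
  if (rec.state !== "inactive") return false;
  rec.start(timesliceMs);
  return true;
}

// Stop only when something is actually recording or paused.
function safeStop(rec: RecorderLike): boolean {
  if (rec.state === "inactive") return false;
  rec.stop();
  return true;
}
```

Returning a boolean instead of throwing lets UI handlers (for example a double-clicked record button) treat a redundant call as a no-op.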

Accomplishments that we're proud of

Built one unified flow that works for both remote and in-person interviews with minimal setup.

Delivered resilient live transcription directly into the composer, with graceful error handling.

Designed polished suggestion docks: rationale included, quick insert/copy, lightweight feedback controls.

Created a fast, professional, keyboard-first interface that feels built for practitioners.

What we learned

The practical realities of media APIs across browsers, from tab audio to microphones to WebSocket streaming.

How small UX details, like read-only states, resume behaviors, and subtle visual cues, determine whether live features feel usable.

Prompt engineering patterns that consistently generate strong, evidence-focused interview follow-ups.

Techniques for building noise- and echo-resilient transcription in real-world environments.

What's next for Henki

Language & voice: Multi-language support and speaker diarization.

IRL upgrades: Advanced noise suppression, echo cancellation, and mic device selection.

Deeper insights: Analytics (themes, summaries, exports) to accelerate synthesis.

Integrations: Zoom, Meet, Teams, and direct calendar/notes syncing.

Feedback loop: Use like/dislike signals to continuously refine suggestion quality.

Enterprise readiness: Privacy, compliance, and on-premise deployment options.
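For the IRL upgrades, one possible starting point is the browser's built-in audio processing hints plus an explicit device ID. The sketch below builds a constraints object that could be passed to `getUserMedia`; the `MicOptions` type and `buildMicConstraints` helper are hypothetical names, and defaulting both filters to on is an assumption.

```typescript
// Hypothetical options for in-person capture.
interface MicOptions {
  deviceId?: string;          // from navigator.mediaDevices.enumerateDevices()
  echoCancellation?: boolean; // defaults to true
  noiseSuppression?: boolean; // defaults to true
}

// Structural subset of the browser's MediaStreamConstraints type.
interface AudioConstraints {
  audio: {
    echoCancellation: boolean;
    noiseSuppression: boolean;
    deviceId?: { exact: string };
  };
  video: false;
}

function buildMicConstraints(opts: MicOptions = {}): AudioConstraints {
  const audio: AudioConstraints["audio"] = {
    // Treat "not specified" as enabled; only an explicit false turns it off.
    echoCancellation: opts.echoCancellation !== false,
    noiseSuppression: opts.noiseSuppression !== false,
  };
  // `exact` makes the request fail rather than silently use another microphone.
  if (opts.deviceId) audio.deviceId = { exact: opts.deviceId };
  return { audio, video: false };
}
```

In a browser this would be used as `navigator.mediaDevices.getUserMedia(buildMicConstraints({ deviceId }))`. The echo and noise settings are requests to the browser rather than guarantees, so more advanced suppression would still need dedicated processing.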

Built With

- deepgram

- fastapi

- openai

- python

- react

- typescript
