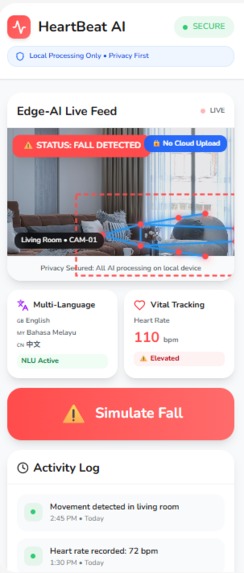

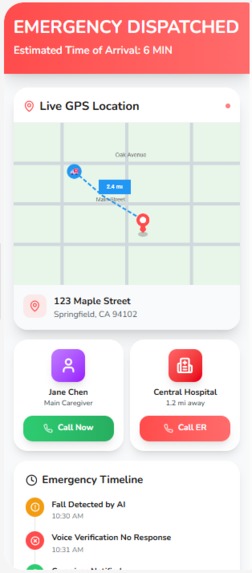

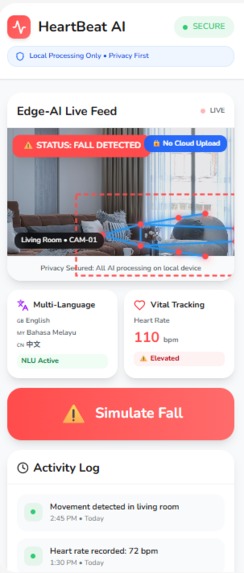

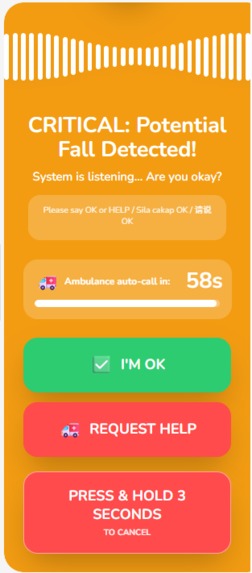

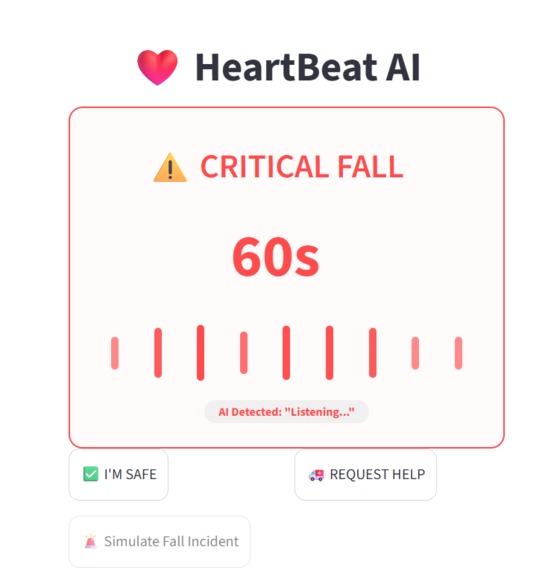

UI Dashboard upper portion

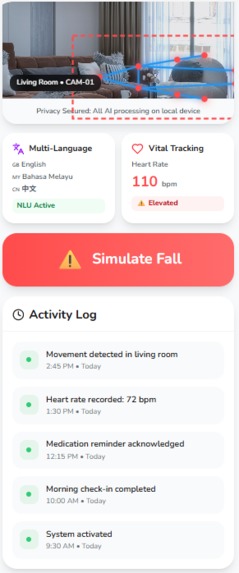

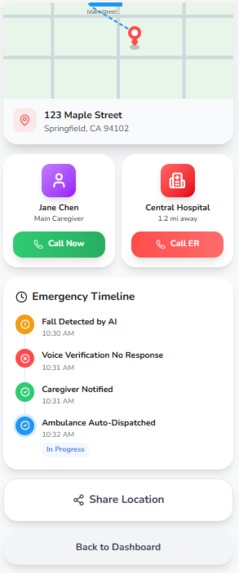

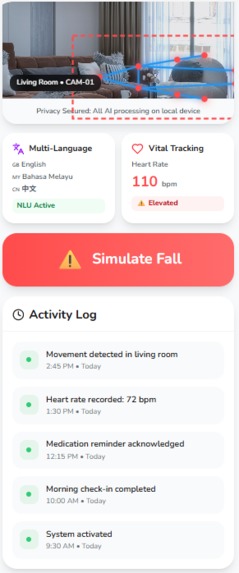

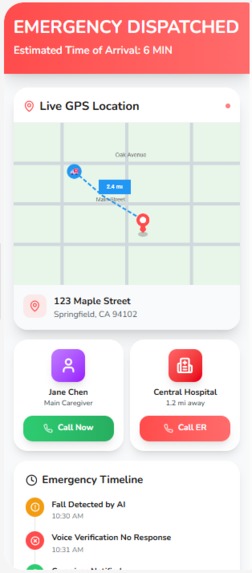

UI Dashboard bottom portion

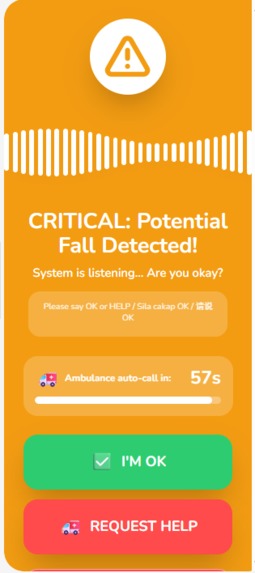

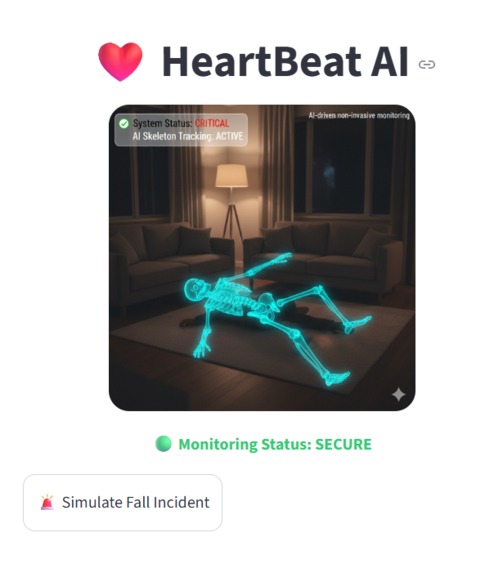

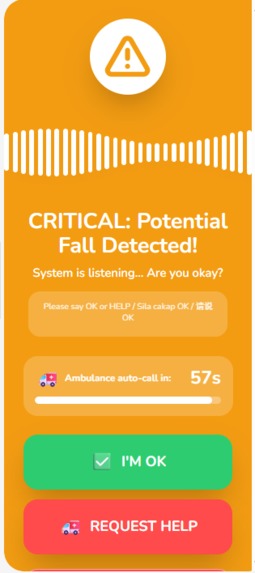

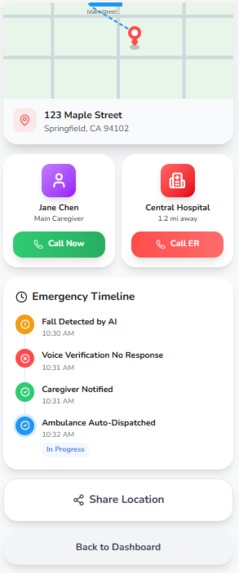

Fall detected page upper portion

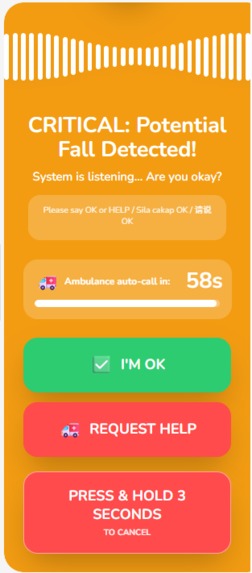

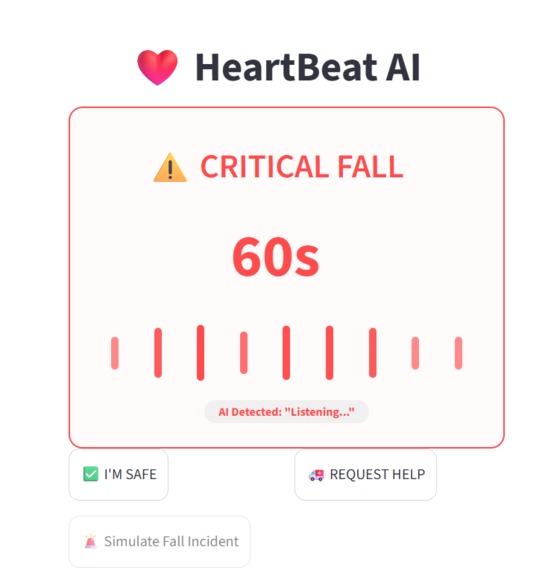

Fall detected page bottom portion

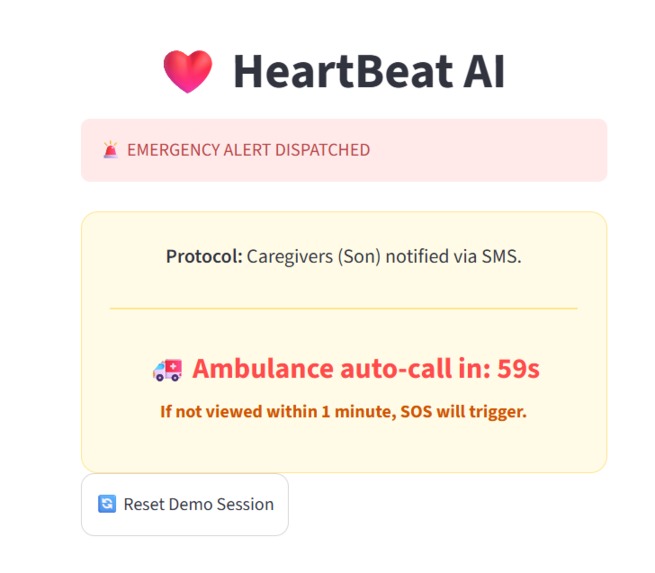

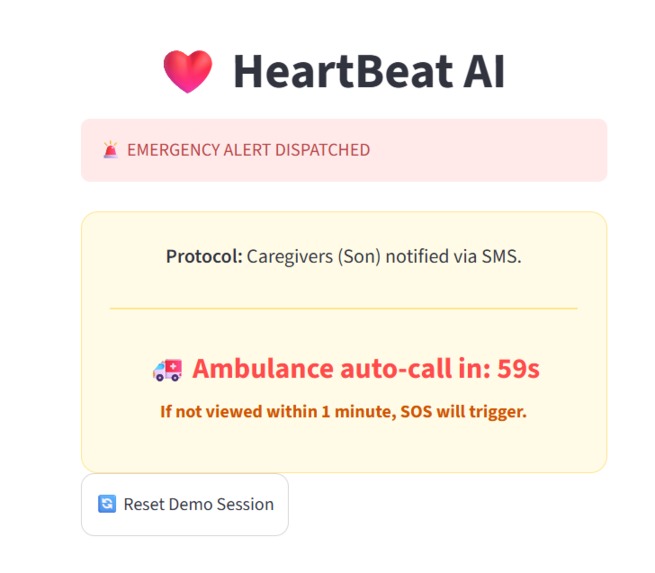

Request Help page upper portion

Request Help page bottom portion

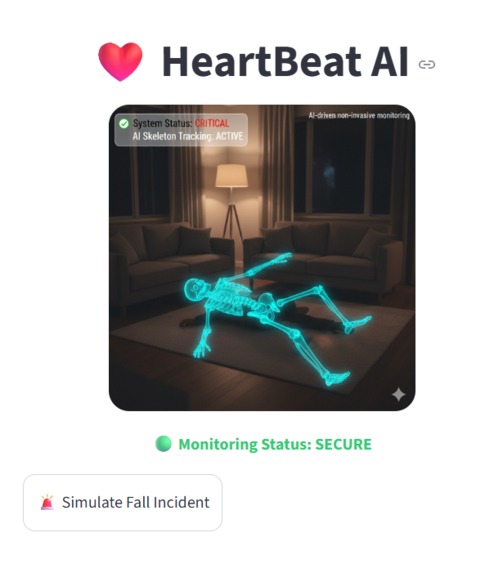

Python Streamlit app simulation

Python Streamlit app voice simulation

Python Streamlit app request help voice simulation

Inspiration

I was looking at my grandma’s living room CCTV and realized something haunting: if she fell right now, that camera would just record her lying there for hours. It’s a "silent witness": technically perfect, but humanly useless. We don't have a hardware problem; we have an action problem. The world is covered in 1 billion cameras that "see" everything but "understand" nothing. I wanted to build the brain that these cameras were missing. I wanted to turn a passive video stream into a proactive guardian that actually asks, "Are you okay?" before it’s too late.

What it does

HeartBeat AI is a software layer that sits on top of existing CCTV infrastructure. It monitors the "physics of a fall" using skeletal data.

But detection is only half the battle. When a fall is detected, the app takes over. It speaks through the local speakers: "I've detected a fall. Are you okay?" Then it listens. If the senior responds "I'm okay," the system stands down to avoid a false alarm. If there is no response, it starts a 60-second countdown. Once the timer hits zero, it bypasses the need for a phone and automatically triggers a multi-tier rescue protocol, alerting family and dispatching emergency services.
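The confirmation flow described above can be sketched as a small function. This is a minimal illustration, not the project's actual code: `confirm_or_escalate`, the `speak`, `listen`, and `escalate` callbacks, the `OK_PHRASES` list, and the 2-second polling interval are all hypothetical names and values, and the sketch assumes the system keeps listening for the whole 60-second countdown.

```python
import time
from typing import Callable, Optional

# Hypothetical phrases that count as "I'm okay"; a real system would tune these.
OK_PHRASES = ("i'm okay", "i am okay", "i'm ok", "i am ok")


def confirm_or_escalate(
    speak: Callable[[str], None],
    listen: Callable[[float], Optional[str]],
    escalate: Callable[[], None],
    countdown_s: float = 60.0,
    poll_s: float = 2.0,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Ask whether the user is okay; escalate if the countdown expires.

    speak(text)      plays a prompt (assumed TTS wrapper)
    listen(timeout)  returns a transcript or None (assumed STT wrapper)
    escalate()       starts the rescue protocol (assumed)
    """
    speak("I've detected a fall. Are you okay?")
    deadline = clock() + countdown_s
    while clock() < deadline:
        # Never ask the listener to wait past the deadline.
        remaining = max(0.0, deadline - clock())
        heard = listen(min(poll_s, remaining))
        if heard:
            # Normalize curly apostrophes before matching.
            text = heard.lower().replace("\u2019", "'")
            if any(phrase in text for phrase in OK_PHRASES):
                return "stood_down"
    escalate()
    return "escalated"
```

Passing the clock and the three callbacks in as parameters keeps the flow testable without a microphone, a speaker, or a real 60-second wait.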

How we built it

The Vision Core: I used MediaPipe for high-precision 2D skeletal tracking. By calculating the vertical velocity of the torso and monitoring the spatial relationship between the hip and the floor plane, we can distinguish a true fall from someone simply sitting down.

The Backbone: To ensure it works with the hardware people already own, I implemented RTSP and ONVIF protocols. This allows the app to "hijack" raw streams from professional security cams and $20 baby monitors alike.

The Dialogue: We integrated real-time STT (speech-to-text) and TTS (text-to-speech) engines. This turns a cold computer vision algorithm into a conversational assistant that can assess a crisis in the user's native language.

Privacy-First Architecture: All AI inference happens at the edge. We don't stream video to the cloud; we only process anonymous mathematical coordinates, so privacy is never sacrificed for security.
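To make the Vision Core idea concrete, here is a rough sketch of a velocity-plus-floor check on two consecutive pose samples. It assumes normalized image coordinates where y grows downward (as MediaPipe's normalized landmarks do) and a floor line estimated elsewhere. `PoseSample`, `looks_like_fall`, and every threshold are hypothetical and untuned; this is not the project's actual detector.

```python
from dataclasses import dataclass


@dataclass
class PoseSample:
    t: float           # timestamp in seconds
    shoulder_y: float  # normalized image y (0 = top of frame, 1 = bottom)
    hip_y: float       # normalized image y of the hip midpoint


def torso_y(sample: PoseSample) -> float:
    """Vertical position of the torso, taken as the shoulder/hip midpoint."""
    return (sample.shoulder_y + sample.hip_y) / 2.0


def looks_like_fall(
    prev: PoseSample,
    curr: PoseSample,
    floor_y: float = 0.95,       # assumed floor line in the image
    min_speed: float = 0.8,      # assumed threshold, frame heights per second
    floor_margin: float = 0.15,  # assumed "hip is near the floor" distance
) -> bool:
    """Flag a fall when the torso drops fast AND the hip ends near the floor."""
    dt = curr.t - prev.t
    if dt <= 0:
        return False
    # Positive speed means the torso is moving down the image.
    speed = (torso_y(curr) - torso_y(prev)) / dt
    hip_near_floor = (floor_y - curr.hip_y) <= floor_margin
    return speed >= min_speed and hip_near_floor


# Sitting down: slow descent, hip stays well above the floor line.
sitting = looks_like_fall(PoseSample(0.0, 0.30, 0.55), PoseSample(1.0, 0.45, 0.70))
# Falling: fast descent, hip ends close to the floor line.
falling = looks_like_fall(PoseSample(0.0, 0.30, 0.55), PoseSample(0.3, 0.80, 0.85))
```

Requiring both conditions is what separates the two cases: sitting is slow and stops at chair height, while a fall is fast and ends near the floor.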

Challenges we ran into

The biggest hurdle was the "Privacy vs. Presence" paradox. In my Figma wireframes, I kept asking: How do I make a senior feel safe without feeling watched? This led to the technical decision to move away from raw video and focus purely on skeletal coordinates.

On the Python side, concurrency was a nightmare. I realized that running a live pose-estimation loop while simultaneously "listening" for a voice response would cause the script to hang. I had to learn how to handle threading so that the AI could "hear" the user's voice without freezing the camera feed. Fine-tuning the speech recognition to ignore background TV noise and only trigger on specific "life-sign" keywords was a grueling process of trial and error.
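One common way to get this behavior is to run the blocking speech call in a background thread and hand results to the camera loop through a queue. The sketch below uses only the standard library. `transcribe` and `process_frame` stand in for the real STT and pose-estimation calls, and `LIFE_SIGN_KEYWORDS`, `match_keyword`, and `run_monitor` are hypothetical names. Simple keyword matching like this does not solve the background-noise problem by itself.

```python
import queue
import re
import threading
from typing import Callable, Optional, Tuple

# Hypothetical "life-sign" keywords; the real list is not given in the writeup.
LIFE_SIGN_KEYWORDS = ("okay", "ok", "help")


def match_keyword(transcript: str) -> Optional[str]:
    """Return the first life-sign keyword found in the transcript, if any."""
    for word in re.findall(r"[a-z']+", transcript.lower()):
        if word in LIFE_SIGN_KEYWORDS:
            return word
    return None


def listener_worker(transcribe, results: queue.Queue, stop: threading.Event) -> None:
    """Run the blocking STT call in a loop and queue any keyword hits."""
    while not stop.is_set():
        text = transcribe()  # blocking call; assumed to return str or None
        keyword = match_keyword(text) if text else None
        if keyword:
            results.put(keyword)


def run_monitor(
    process_frame: Callable[[], None],
    transcribe: Callable[[], Optional[str]],
    max_frames: int,
) -> Tuple[Optional[str], int]:
    """Process frames on the main thread while a worker thread listens."""
    results: queue.Queue = queue.Queue()
    stop = threading.Event()
    worker = threading.Thread(
        target=listener_worker, args=(transcribe, results, stop), daemon=True
    )
    worker.start()
    heard, frames = None, 0
    try:
        while frames < max_frames and heard is None:
            process_frame()  # the pose-estimation step never waits on audio
            frames += 1
            try:
                heard = results.get_nowait()  # non-blocking check for a reply
            except queue.Empty:
                pass
    finally:
        stop.set()
        worker.join(timeout=1.0)
    return heard, frames
```

The camera loop only ever calls `get_nowait()`, so a slow or silent microphone cannot stall frame processing.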

Accomplishments that we're proud of

I’m proud of creating a seamless bridge between UI and Logic. Seeing my Figma vision come to life in a functional Python script, where a detected "fall" actually triggers a real-time voice dialogue, felt like a massive win.

I also managed to design the system to be hardware-agnostic in concept. Whether it’s a high-def webcam or a basic IP stream, the logic remains the same: it treats the human body as mathematical points, not a video file. Successfully implementing the "Voice-Confirmation Loop" is what I'm most proud of. It’s the difference between an annoying false alarm and a respectful, intelligent guardian.

What we learned

I learned that in a crisis, resilience beats complexity. I spent a lot of time researching RTSP and ONVIF protocols, realizing that the key to scaling this globally isn't fancy new hardware, but hijacking the "old-school" infrastructure we already have.

I also discovered the importance of Human-in-the-loop design. Initially, I just wanted the app to call 911. But through testing, I realized that giving the user a chance to say "I'm OK" preserves their dignity and prevents "alarm fatigue." A great developer doesn't just solve a technical problem; they solve a human one.

What's next for HeartBeat AI

The goal is to move from Reactive to Predictive.

Full Protocol Integration: Moving from local webcam testing to full RTSP/ONVIF support for professional security cameras.

Advanced Vitals: I’m researching Eulerian Video Magnification, a technique that amplifies subtle changes in video and could make it possible to detect a pulse from micro-color changes in a person’s skin through the lens.

Cross-Platform Deployment: Transitioning the Streamlit prototype into a lightweight background service that runs on a Raspberry Pi or a home NAS.
