(Note: this project is still a work in progress)

(Installation instructions are coming! Keep an eye out for the next few days!)

Inspiration

COVID-19 has affected our everyday lives, and it's time we put an end to it. The pandemic challenges each of us to find ways to combat it. Social distancing and reducing crowding in dense areas is one way to do so. However, there is currently little monitoring of social distancing or area density. We therefore set out to provide this information to the student body by creating HealthEye.

What it does

HealthEye is an IoT system that lets you monitor the number of people and social distancing violations in a given area. It uses a Raspberry Pi and camera to count the people in a specific area and the social distancing violations among them. It can also forecast both metrics for a future time that the user selects.

We also believe HealthEye can remain a viable product after COVID-19 with only slight modifications, since it could serve as a video analytics platform for businesses or organizations (more on this below).

As a proof of concept, the system also accepts video files in addition to a live camera feed.

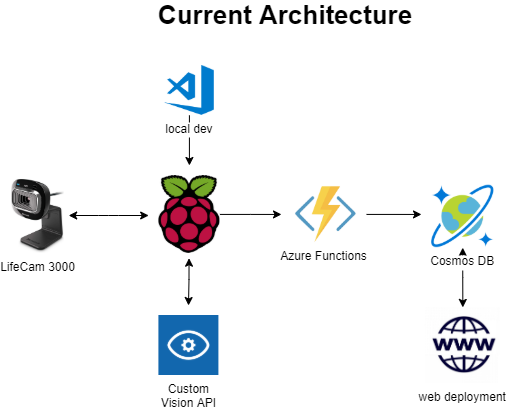

How we built it

Computer Vision

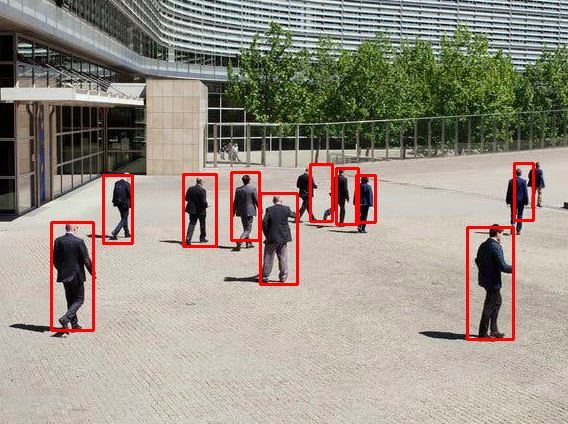

We used the Azure Custom Vision API to detect people and their locations in each video frame. We chose Custom Vision over the Object Detection API because we wanted to train our own model on a diverse dataset of images of libraries, classrooms, and outdoor scenes.
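To make the detection step concrete, here is a minimal sketch that turns a Custom Vision object-detection prediction response into pixel-space person boxes. It assumes the JSON shape returned by the Custom Vision prediction API: a `predictions` list whose entries carry `tagName`, `probability`, and a `boundingBox` with `left`, `top`, `width`, `height` normalized to the 0-1 range. The tag name `"person"`, the 0.5 probability cutoff, and the names `PersonBox` and `parse_detections` are hypothetical, not taken from the HealthEye code.

```python
import json
from dataclasses import dataclass


@dataclass
class PersonBox:
    """A detected person, in pixel coordinates of the analyzed frame."""
    left: int
    top: int
    width: int
    height: int
    probability: float


def parse_detections(response_json, frame_width, frame_height,
                     tag_name="person", min_probability=0.5):
    """Convert a Custom Vision detection response into pixel-space boxes.

    Assumption: boundingBox values are normalized (0-1), so they are scaled
    by the frame size. The tag name and threshold are hypothetical defaults.
    """
    boxes = []
    for pred in json.loads(response_json).get("predictions", []):
        # Keep only confident detections of the (assumed) person tag.
        if pred.get("tagName") != tag_name:
            continue
        if pred.get("probability", 0.0) < min_probability:
            continue
        bb = pred["boundingBox"]
        boxes.append(PersonBox(
            left=round(bb["left"] * frame_width),
            top=round(bb["top"] * frame_height),
            width=round(bb["width"] * frame_width),
            height=round(bb["height"] * frame_height),
            probability=pred["probability"],
        ))
    return boxes
```

For a 640x480 frame, a box with normalized `left=0.1, top=0.2, width=0.25, height=0.5` becomes `PersonBox(64, 96, 160, 240, ...)`; lower-confidence detections are dropped before any distance calculation.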

Using Custom Vision:

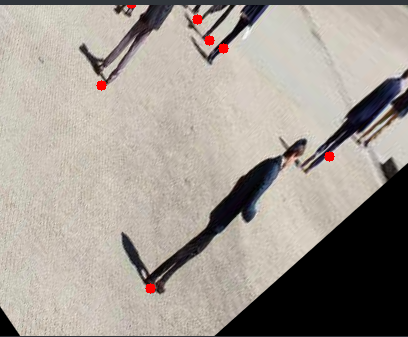

Social Distance Calculations

In addition to the Custom Vision model, we used the OpenCV (cv2) library to estimate the real-world distance between people from the bounding boxes produced by the vision model, applying image transformations to the camera frame to do so.

Transforming image to find distance between people:
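A minimal sketch of this step, under loudly stated assumptions: the four points the user selects on the frame are mapped to a rectangle of known real-world size (here in metres), each person's ground position is taken as the bottom-center of their bounding box, and a violation is any pair of people closer than a threshold. In OpenCV this mapping is what `cv2.getPerspectiveTransform` and `cv2.perspectiveTransform` compute; the sketch re-derives the same 3x3 homography in plain Python so it runs without OpenCV. All function names are hypothetical.

```python
import itertools
import math


def solve_linear(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def perspective_matrix(src, dst):
    """3x3 homography mapping 4 image points (src) to 4 ground points (dst).

    Plays the same role as cv2.getPerspectiveTransform: solves for h0..h7
    with h8 fixed to 1, from u = (h0 x + h1 y + h2) / (h6 x + h7 y + 1) and
    v = (h3 x + h4 y + h5) / (h6 x + h7 y + 1).
    """
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def to_ground(matrix, point):
    """Project one image point onto the ground plane (homogeneous divide)."""
    x, y = point
    u, v, w = (row[0] * x + row[1] * y + row[2] for row in matrix)
    return u / w, v / w


def count_violations(boxes, matrix, min_distance):
    """Count pairs of people closer than min_distance on the ground plane.

    Each box is (left, top, width, height) in pixels; the bottom-center of
    the box is assumed to be where the person stands.
    """
    feet = [to_ground(matrix, (l + w / 2, t + h)) for l, t, w, h in boxes]
    return sum(1 for p, q in itertools.combinations(feet, 2)
               if math.dist(p, q) < min_distance)
```

For example, if a 100x100-pixel region is mapped to a 10x10 m square, two people whose feet are 10 px apart in the frame are 1 m apart on the ground, so with a 2 m threshold they count as one violation.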

Web

We stored our data in Azure Cosmos DB and used Azure Functions to wrap the code that reads from and writes to the Cosmos DB instance. We built the website with Python Flask and ReactJS and deployed it as an Azure Web App.
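The write-up doesn't show the stored data, so the following is a hypothetical per-frame record, sketched only to make the Cosmos DB / Azure Functions split concrete. Every field name, and the idea of using `deviceId` as the partition key, is an assumption; the one requirement taken from Cosmos DB itself is that each item carries a string `id`.

```python
import json
import uuid
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class FrameMetrics:
    """One analyzed frame, as it might be stored in Cosmos DB (hypothetical schema)."""
    id: str                  # Cosmos DB items need a unique string "id"
    deviceId: str            # hypothetical partition key: one camera / Raspberry Pi
    timestamp: str           # ISO 8601, UTC
    peopleCount: int
    violationCount: int
    distanceThreshold: float


def make_record(device_id, people_count, violation_count, threshold, when=None):
    """Build a record for one frame; defaults the timestamp to now (UTC)."""
    if when is None:
        when = datetime.now(timezone.utc)
    return FrameMetrics(
        id=str(uuid.uuid4()),
        deviceId=device_id,
        timestamp=when.isoformat(),
        peopleCount=people_count,
        violationCount=violation_count,
        distanceThreshold=threshold,
    )


def to_json(record):
    """Serialize a record to the JSON body an Azure Function could write to Cosmos DB."""
    return json.dumps(asdict(record))
```

Keeping the schema in one place like this lets the Raspberry Pi, the Azure Functions, and the Flask backend agree on field names without sharing database code.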

Time Series Prediction (in progress)

We used a vector autoregression (VAR) model, a multivariate time series model, to predict the number of people in a given location and the number of social distancing violations for a user-specified distance threshold. The model is trained on the same metrics, extracted from video frames and stored in our Cosmos DB database.
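A VAR model regresses each metric on the lagged values of all metrics, so the people count and the violation count can inform each other's forecasts. A library implementation such as the `VAR` class in statsmodels would normally be used; the sketch below instead fits a lag-1 VAR, y_t = c + A y_{t-1} + noise, by ordinary least squares in plain Python so the mechanics are visible. The lag order of 1 and the two-column input `[people_count, violation_count]` are assumptions, not details from the project.

```python
def solve_linear(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_var1(series):
    """Fit y_t = c + A @ y_{t-1} by OLS, one equation per variable.

    series: list of observations, each a list of k values
    (e.g. [people_count, violation_count]). Returns (c, A).
    """
    k = len(series[0])
    xs = [[1.0] + list(prev) for prev in series[:-1]]  # intercept + lagged values
    ys = series[1:]
    p = k + 1
    # Normal equations: (X^T X) beta = X^T y, shared X^T X for all equations.
    xtx = [[sum(x[i] * x[j] for x in xs) for j in range(p)] for i in range(p)]
    c, a = [], []
    for eq in range(k):
        xty = [sum(x[i] * y[eq] for x, y in zip(xs, ys)) for i in range(p)]
        beta = solve_linear(xtx, xty)
        c.append(beta[0])
        a.append(beta[1:])
    return c, a


def forecast_var1(c, a, last, steps):
    """Iterate the fitted model forward from the last observation."""
    out, y = [], list(last)
    for _ in range(steps):
        y = [c[i] + sum(a[i][j] * y[j] for j in range(len(y))) for i in range(len(y))]
        out.append(y)
    return out
```

Because forecasts are produced by iterating the fitted equations, predicting further ahead (a later time the user selects) just means more `steps`; uncertainty grows with the horizon, which this sketch does not quantify.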

Challenges we ran into

Besides juggling midterm exams while working on this, we ran into quite a few challenges building the system!

Training the Vision Model

After experimenting with Azure Object Detection, we wanted better precision in detecting people, so we trained our own model with Azure Custom Vision.

Object detection:

Training the model to achieve high precision and accuracy proved rather challenging. We soon realized we would have to curate our data specifically for our needs. Because the model would be analyzing video footage, that meant including low-quality images, for example ones containing blur.

After training on a wider dataset, we saw signs of overfitting. Even so, we believed Custom Vision gave slightly better results than Object Detection, which is why we kept it.

Custom Vision

Social Distancing Calculations

Another difficulty was calibration: the user had to select a boundary area on the image whose edges were more or less parallel for the CV2 image transformation algorithms to work. To account for this, a parallelogram had to be inscribed within the region the user selects so that the image is better calibrated for the algorithm.

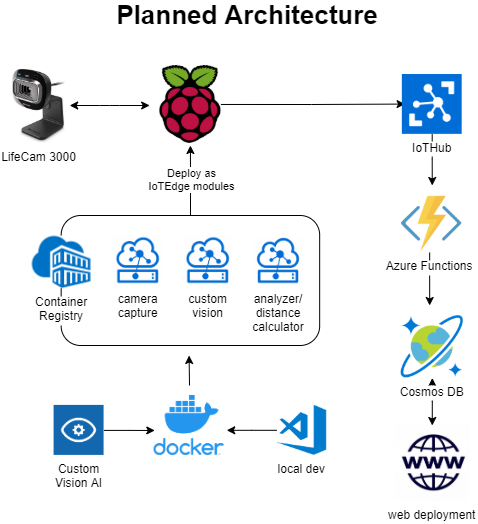

Azure IoTHub and IoTEdge setup

Originally, we wanted to use Azure IoT Hub and IoT Edge, as these services provide exactly the scalability we were hoping for (discussed below). However, we had to set them aside as a side project, because we repeatedly failed to install IoT Edge on our Raspberry Pi 4. Given more time and experimentation (for example, by starting the project earlier), we would have used IoT Hub and IoT Edge in our final project.

Accomplishments that we're proud of

Demo Product

From the start, we all thought this was a cool idea, but we knew we would face many challenges along the way. We're proud that we completed a minimum viable product before the deadline, and we hope HealthEye serves as a proof of concept for other potential solutions in the future.

What we learned

Shelly: I learned a lot about MS Azure Custom Vision and the different services MS Azure has to offer. I also had fun experimenting with image transformation algorithms and implementing the VAR model.

Vincent: The IoT Hub and IoT Edge system was super fun to learn about, though sadly I could not get it working on the Raspberry Pi 4. I also learned a lot about Azure Functions and Cosmos DB and the many services Azure offers to make software developers' lives easier.

What's next for HealthEye

There's a lot more to HealthEye than COVID-19. We believe this system can serve as a basis for video analytics systems that give insight into how people interact and move through a space. This could be useful for businesses and organizations that want to know how customers interact with their services. To bring this system to production, however, we would need several improvements.

Architecture Modification

Our originally planned architecture consisted of this:

We envisioned a scalable and modular solution in which it would be easy to add a new Raspberry Pi (or any other device) to the system and thus add more data. Azure IoT Hub and IoT Edge offer this kind of scalability, and we wanted to use them to their full potential. After failing to install IoT Edge on the Raspberry Pi 4, we set this aside as a project for after the hackathon demo. We still plan to use it to make the entire system more scalable and modular.

Custom Vision

We hope to refine our Azure Custom Vision model to be more accurate and to handle a wider range of data.

Web

We'd like to improve the website's user experience. Most of our work focused on the backend, which left the interface awkward to use. If this were going into production, the design and UX would have to improve drastically.
