Home page for the application.

Generated summary of the sample one-page PDF about LLMs.

Generated equations (none in current PDF) and explanations.

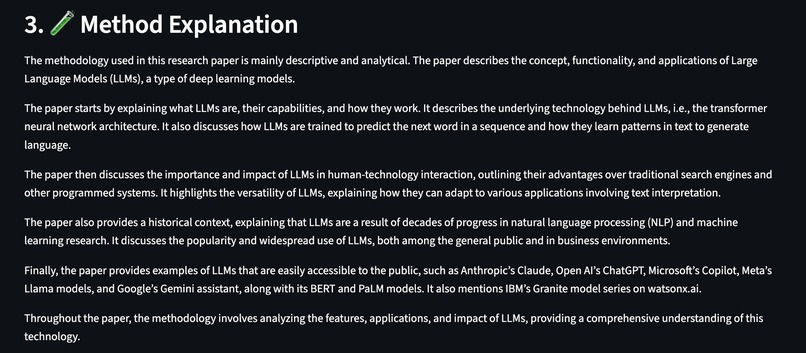

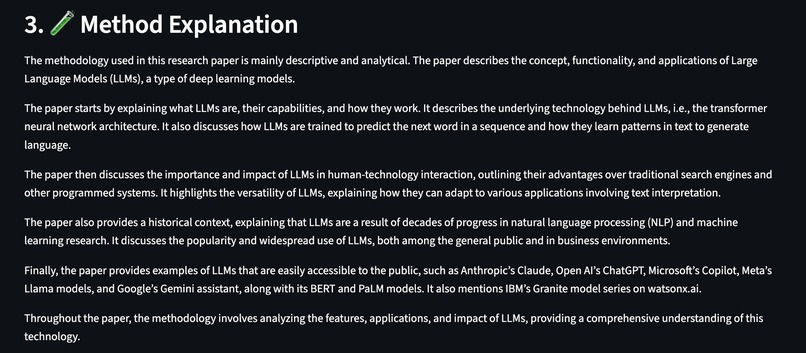

Generated method explanations.

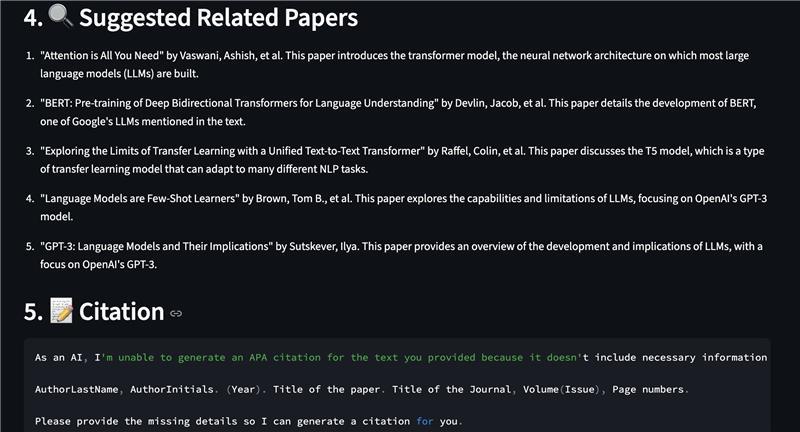

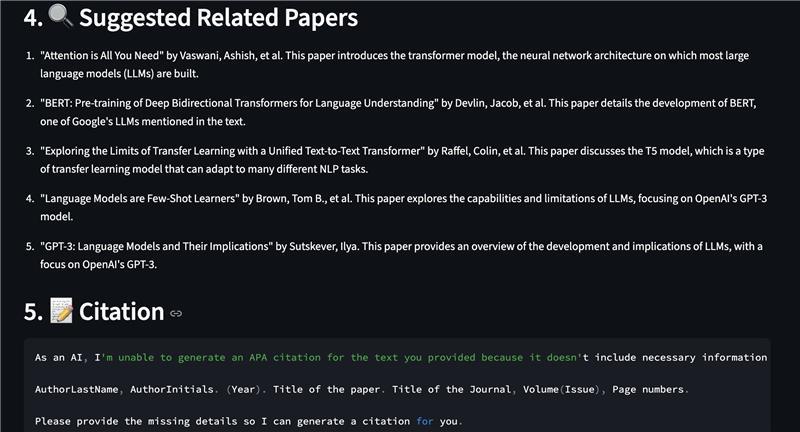

Generated related papers and citations.

Generated conference insights from video transcripts (a 1-minute video on LLMs taken from YouTube).

Inspiration

Reading research papers is often confusing, tough, and time-consuming. This is a common problem for students, who would like to spend less time on it.

What it does

This project doesn't just extract a summary of a research paper; it also extracts methods, identifies key findings, and suggests related works. It is more than a regular document summarizer: it adds human insights drawn from conferences where the paper has been presented, mentioned, or discussed. This enriches the value the AI Scholar Assistant adds to any student's attempt to understand research papers.

How we built it

We built HASH# AI Scholar by combining AI-driven natural language processing, document parsing, and interactive web technologies in a single Streamlit app.

- Frontend & UI: Built with Streamlit, providing a responsive, interactive interface where researchers upload PDFs and transcripts and explore summaries, equations, and AI insights in real time.

- Text Extraction Layer: PyPDF2 and custom parsing logic in utils/pdf_parser.py extract clean, structured text from academic PDFs. Transcript parsing is handled by utils/transcript_parser.py, enabling multi-format input.

- Summarization & NLP Core: Implemented with OpenAI GPT models (v1 API) and Transformers (Hugging Face) for context-aware summarization, section-wise breakdown, and conference insights. The summarizer condenses dense academic writing into concise, human-readable explanations.

- Mathematical & Method Analysis: Regex-based and transformer-assisted equation extraction from complex LaTeX patterns. The method explainer module uses LLM reasoning to interpret research methods and experimental design.

- Voice Narration: Integrated ElevenLabs to automatically narrate summaries for accessibility and for auditory learners.

- Recommendation Engine: Related paper suggestions powered by semantic similarity, using the OpenAI text embeddings API and cosine similarity scoring.

- Deployment: Hosted on Streamlit Cloud, using containerized Python environments with dependency management via requirements.txt. Fully serverless: users only need a browser to access AI Scholar.
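The cosine similarity scoring behind the recommendation engine can be sketched as follows. This is a minimal illustration, not the project's actual code: the function names `cosine_similarity` and `rank_related` and the toy three-dimensional vectors are assumptions made for the example. In the real app the vectors would come from the OpenAI text embeddings API and would be much longer.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        # A zero vector has no direction; treat it as unrelated.
        return 0.0
    return dot / (norm_a * norm_b)


def rank_related(query_vec, candidates, top_k=3):
    """Rank candidate papers by similarity to the query embedding.

    candidates maps a paper title to its embedding (hypothetical layout).
    Returns up to top_k (title, score) pairs, highest score first.
    """
    scored = [
        (title, cosine_similarity(query_vec, vec))
        for title, vec in candidates.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy embeddings standing in for real API output (assumed values).
query = [1.0, 0.0, 1.0]
papers = {
    "Paper A": [1.0, 0.0, 1.0],
    "Paper B": [0.0, 1.0, 0.0],
    "Paper C": [1.0, 1.0, 0.0],
}
ranked = rank_related(query, papers, top_k=2)
for title, score in ranked:
    print(f"{title}: {score:.2f}")
```

Cosine similarity compares direction rather than magnitude, so a long paper and a short abstract on the same topic can still score close to each other.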

Challenges we ran into

- PDF Parsing Complexity: Research papers often have non-linear layouts (columns, equations, tables), making accurate text extraction and segmentation challenging.

- Token Management: Handling large document contexts with a limited model input size required chunking and summarization strategies. We could only parse 10,000 tokens with our OpenAI API key, so we had to use a one-page PDF and a one-page transcript text file.

- Latency & Performance: Keeping the interface responsive while generating AI outputs (summaries, related papers, explanations) required caching.

- Audio Integration: Ensuring smooth playback of dynamically generated MP3 audio across browsers required custom HTML embedding and base64 encoding.
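The chunking idea mentioned under token management can be sketched as follows. This is an illustrative sketch under stated assumptions, not the project's implementation: the function name `chunk_words` and its parameters are hypothetical, and whitespace-separated words are used as a rough stand-in for model tokens. A real pipeline would count tokens with the model's own tokenizer.

```python
def chunk_words(text, max_words=200, overlap=20):
    """Split text into overlapping chunks of at most max_words words.

    Words are only a rough proxy for model tokens (an assumption of this
    sketch). The overlap repeats the tail of each chunk at the head of the
    next so that sentences cut at a boundary keep some context.
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be in [0, max_words)")

    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


# Small demonstration with ten placeholder words.
sample = " ".join(f"w{i}" for i in range(10))
pieces = chunk_words(sample, max_words=4, overlap=1)
for piece in pieces:
    print(piece)
```

Each chunk can then be summarized on its own and the partial summaries combined, which keeps every request under the model's input limit.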

Accomplishments that we're proud of

- Attempted to build a fully functional AI research assistant that can read, summarize, explain, and even speak research papers.

- Designed a modular architecture (utils/ package) allowing easy extension and maintenance.

- Tried to develop a clean, professional Streamlit interface that feels like a scholar’s digital companion.

- Combined multiple AI capabilities (summarization, explanation, recommendation, and narration) into a single cohesive app.

What we learned

We learned about the latest trends and technologies in generative AI, including RAG-based agentic AI, prompt engineering, the Crossref API, semantic vector search and generation, BERT, LLMs, Mathpix, the ElevenLabs API, and Streamlit.

What's next for HASH# AI SCHOLAR

We can fine-tune the RAG pipeline to reduce LLM hallucinations and scale the application to process larger documents.

Built With

- artificial-intelligence

- backend

- elevenlabs-api

- flask

- frontend

- gen-ai

- langchain

- machine-learning

- openai-api

- python

- rag

- streamlit-ui
