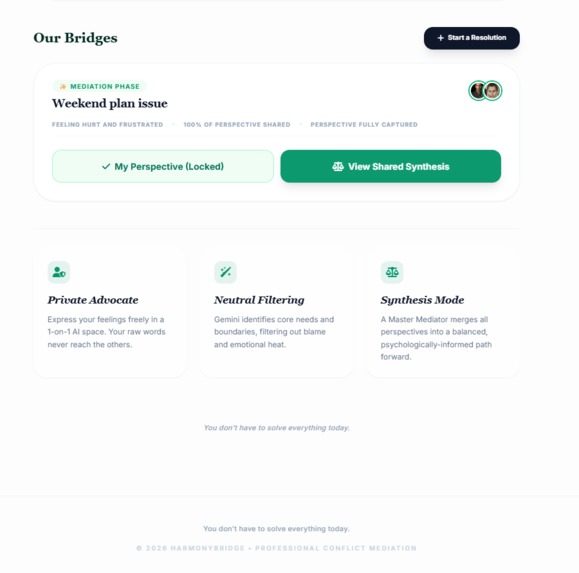

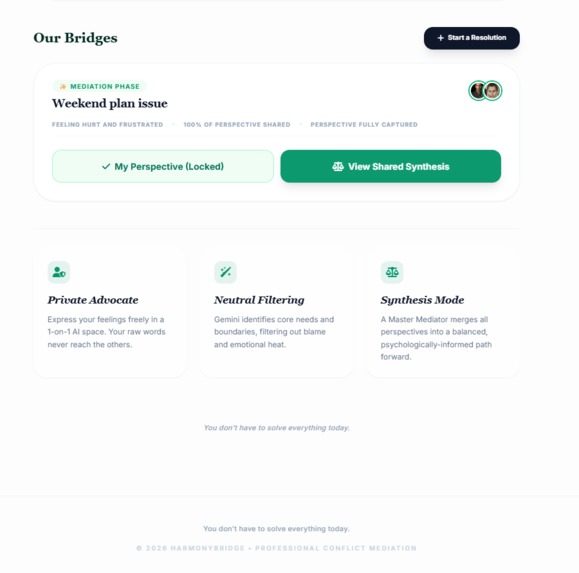

Start resolution

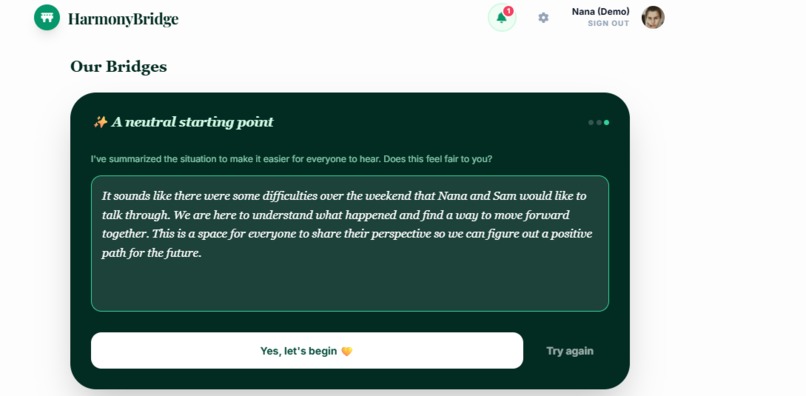

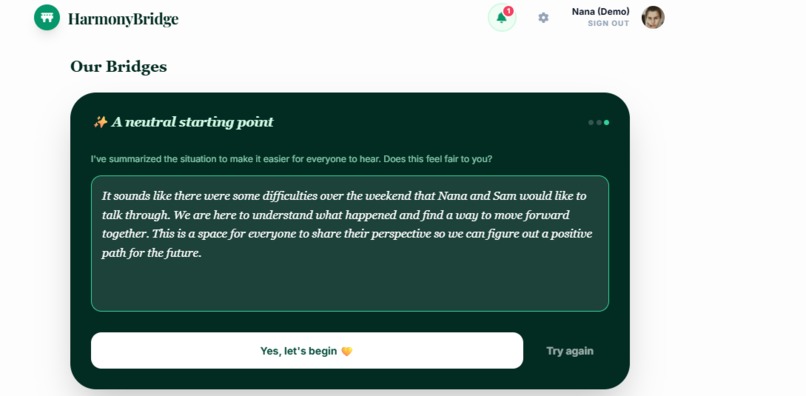

Create the context for starting the space room

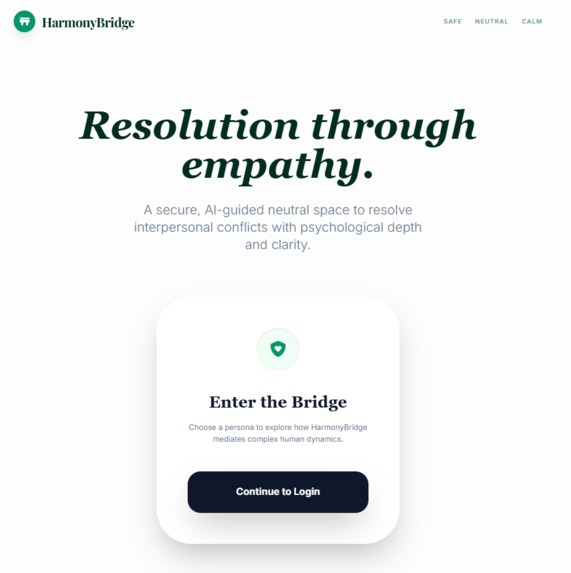

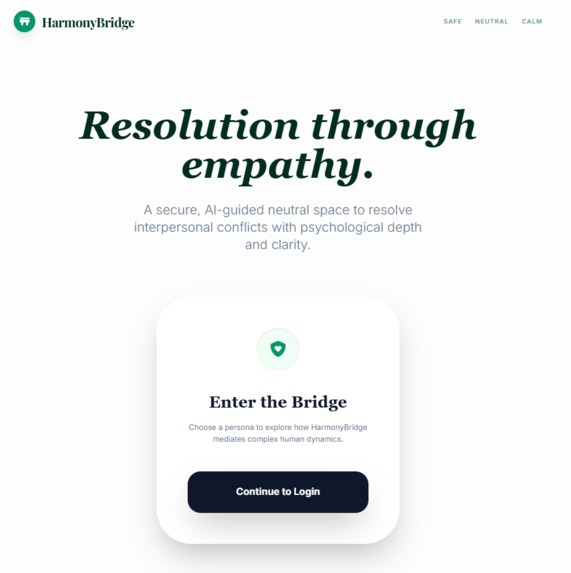

Login page

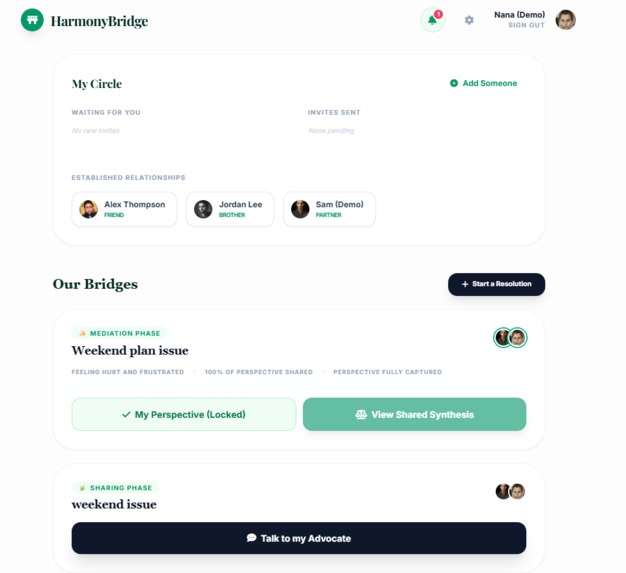

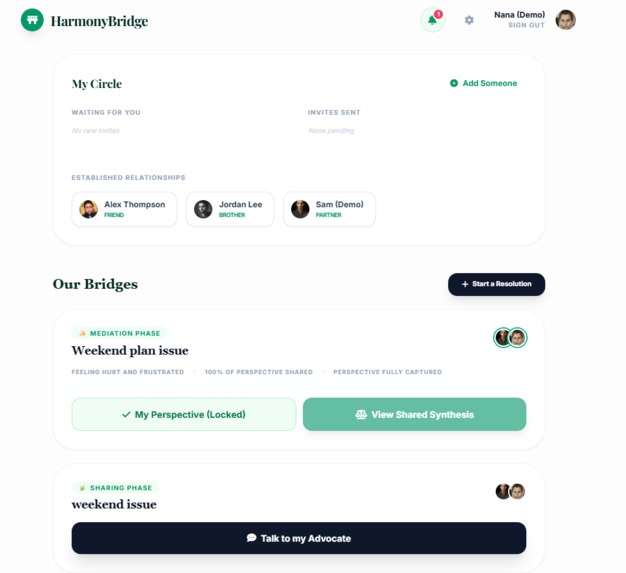

Home page

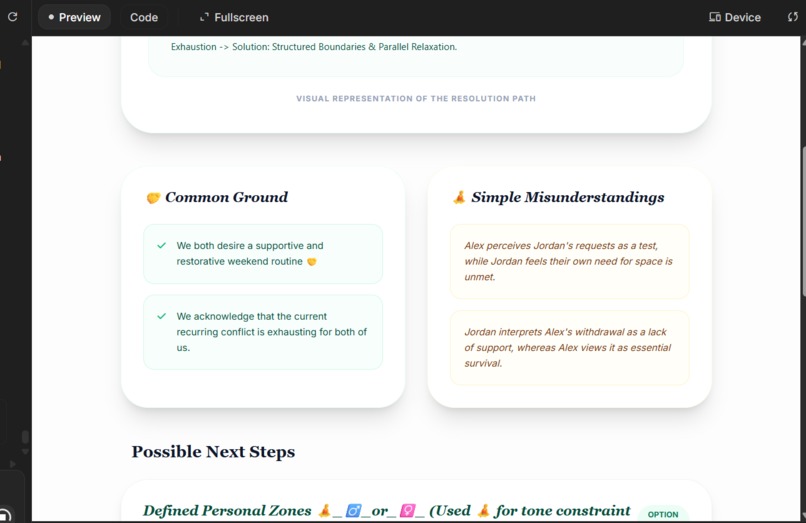

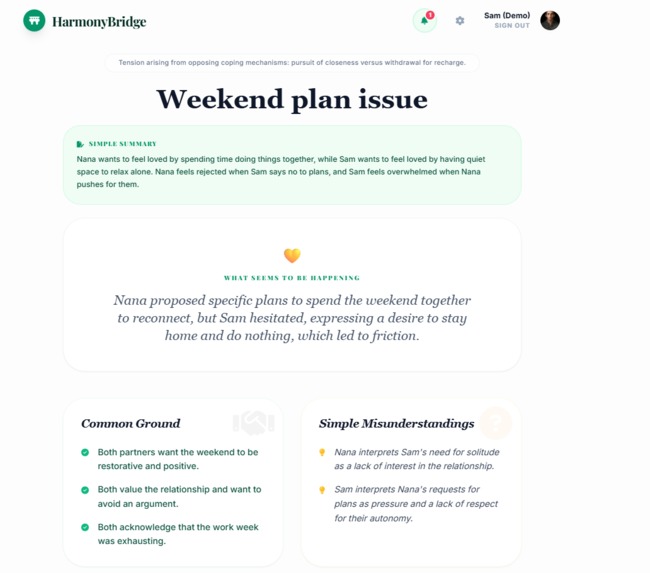

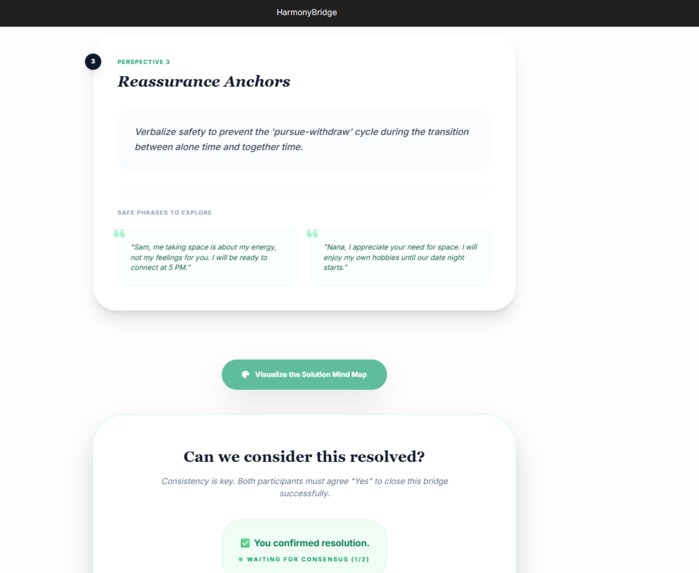

Synthesizing Perspectives with mind map image

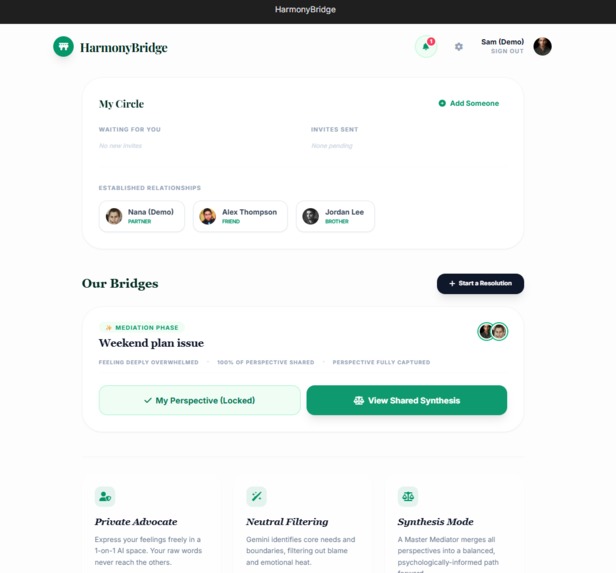

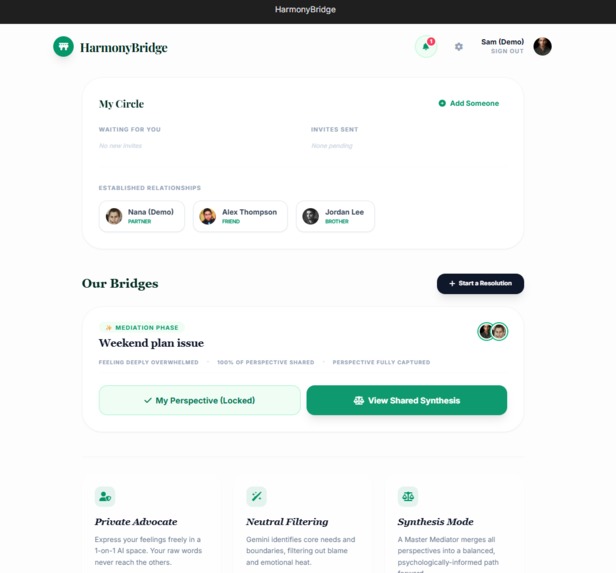

Home page after the resolution has been generated

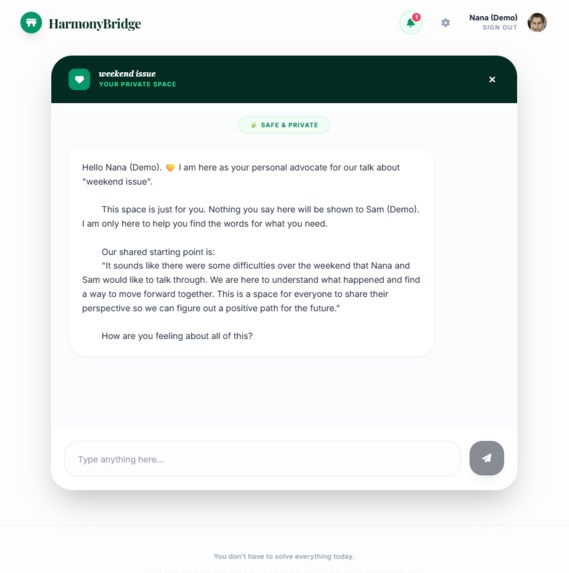

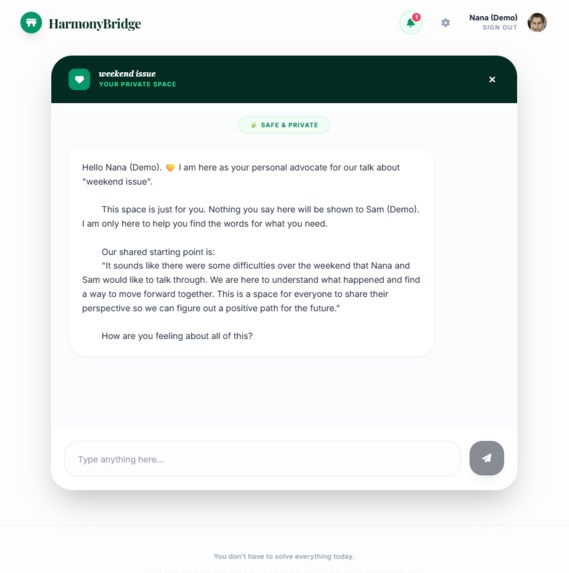

Chatting with the first mediator agent to understand the issue

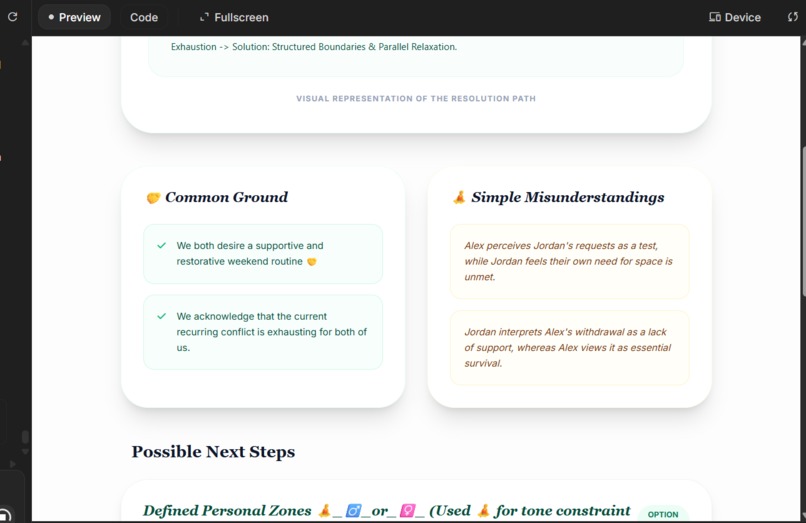

Synthesizing Perspectives

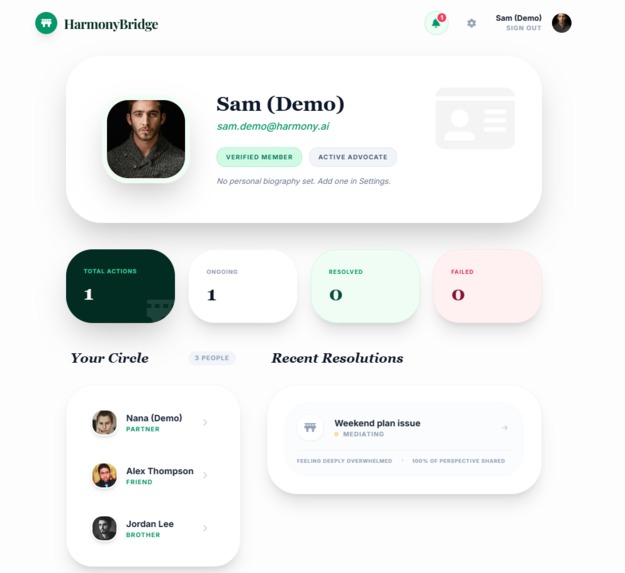

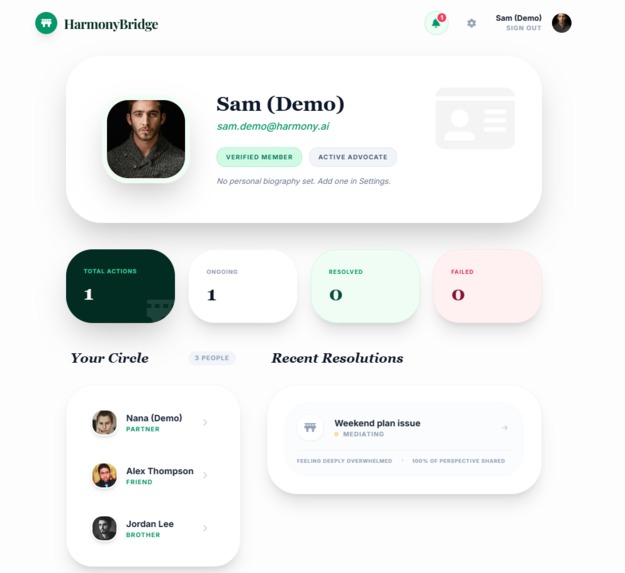

User dashboard with connections

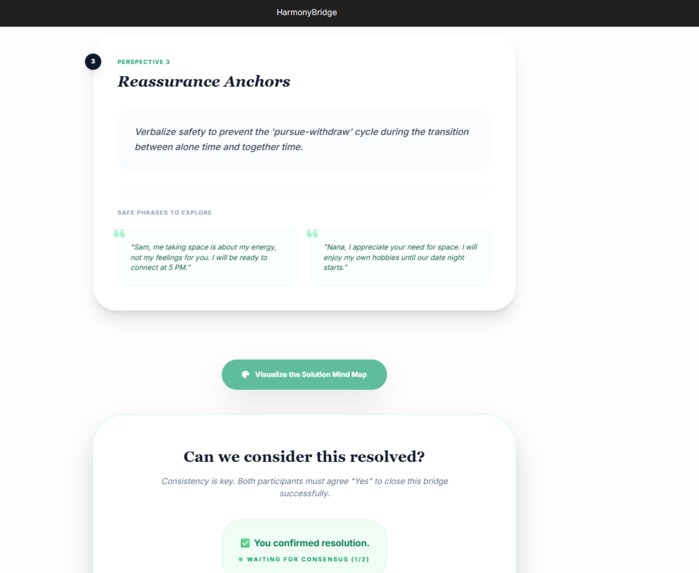

Synthesizing Perspectives with the mediator AI agent

HarmonyBridge — Project Story

Inspiration

HarmonyBridge was born from a simple but uncomfortable observation: when emotions run high, direct conversation is not always safe or productive.

In conflicts between friends, partners, or collaborators, people are often pushed to “talk it out” immediately, even when they are not emotionally ready. This pressure can escalate misunderstandings, create defensive reactions, or permanently damage relationships.

I wanted to explore a different approach:

What if AI could create a protective buffer — a space where people can think, slow down, and feel understood before facing each other again?

Not to replace human connection, but to support it.

What the Project Does

HarmonyBridge is an AI-mediated space for conflict resolution.

Each participant interacts privately with their own AI agent, allowing them to express thoughts freely without fear of judgment or interruption.

A dedicated mediator agent then:

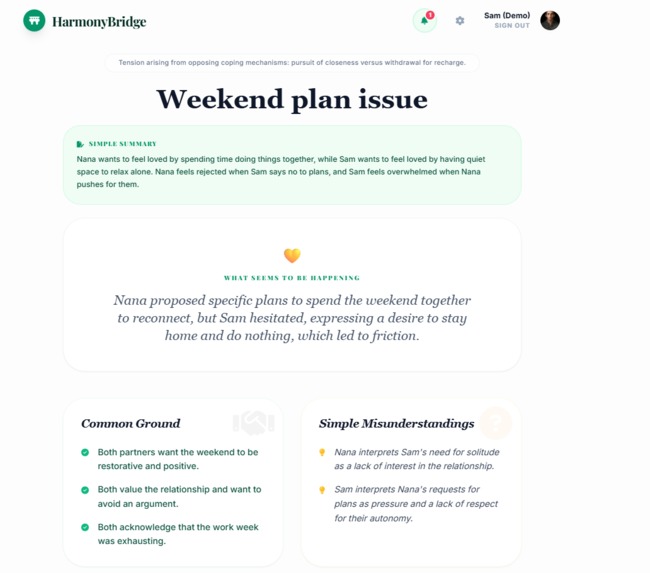

- Synthesizes shared context (without exposing private raw messages)

- Identifies common ground and gaps in perspective

- Proposes a calm, consent-based resolution path

Instead of forcing quick solutions, HarmonyBridge prioritizes:

- Emotional safety

- Clarity over speed

- Low-pressure, step-by-step reconnection

How I Built It

The application is built using Gemini 3 with deliberate model orchestration.

Model Usage

- Gemini 3 Flash powers real-time conversations for speed and responsiveness.

- Gemini 3 Pro can be activated during mediation phases that require deeper reasoning, synthesis, visual mind maps, and structured resolution paths.

I experimented with different thinking levels of Gemini Flash:

- Low: fast but sometimes too shallow

- High: overly verbose and prone to overthinking

- Medium: the best compromise between clarity, empathy, and control

This medium setting is used by default.
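The routing described above could look roughly like the sketch below. It is illustrative only: the model ID strings are placeholders rather than confirmed API identifiers, and `ModelChoice`, `choose_model`, and `needs_deep_reasoning` are hypothetical names. The thinking level for Pro is left unset because the experiments above only cover Flash.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder identifiers; the real API model IDs may differ.
FLASH_MODEL = "gemini-3-flash"
PRO_MODEL = "gemini-3-pro"

# Medium was the best compromise in the Flash experiments described above.
DEFAULT_FLASH_THINKING = "medium"


@dataclass(frozen=True)
class ModelChoice:
    model: str
    thinking_level: Optional[str]  # None means "use the model's own default"


def choose_model(phase: str, needs_deep_reasoning: bool = False) -> ModelChoice:
    """Pick a model for a phase.

    Real-time chat always goes to Flash. Pro is only considered during
    mediation, and only when deeper reasoning is explicitly requested.
    """
    if phase == "mediation" and needs_deep_reasoning:
        return ModelChoice(model=PRO_MODEL, thinking_level=None)
    return ModelChoice(model=FLASH_MODEL, thinking_level=DEFAULT_FLASH_THINKING)
```

Keeping the decision in one small function makes the default explicit and gives a single place to change it if the trade-off between speed and depth shifts.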

Prompt Engineering & Tools

Prompt engineering was central to the project’s quality and safety:

- Chain-of-thought prompting for structured mediation logic

- Few-shot examples to stabilize tone and mediator behavior

- Clear role separation between participant agents and mediator agent
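One way to picture the role separation is sketched below, under stated assumptions: `ParticipantAgent`, `shareable_summary`, and `build_mediator_prompt` are hypothetical names, and `summarize` stands in for whatever model call condenses a private conversation. The point it illustrates is that the mediator's input is assembled from summaries only, never from raw messages.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ParticipantAgent:
    """Private agent for one participant; raw messages stay inside it."""
    participant: str
    _raw_messages: list[str] = field(default_factory=list, repr=False)

    def receive(self, message: str) -> None:
        self._raw_messages.append(message)

    def shareable_summary(self, summarize: Callable[[list[str]], str]) -> str:
        # `summarize` is a stand-in for a model call that produces a neutral
        # summary; only its output is ever passed on to the mediator.
        return summarize(self._raw_messages)


def build_mediator_prompt(summaries: dict[str, str]) -> str:
    """Assemble the mediator's input from per-participant summaries only."""
    lines = ["You are a neutral mediator. Work only from the summaries below."]
    for name, summary in sorted(summaries.items()):
        lines.append(f"- {name}: {summary}")
    lines.append(
        "Identify common ground, gaps in perspective, "
        "and a calm, consent-based next step."
    )
    return "\n".join(lines)
```

Because `build_mediator_prompt` accepts only a mapping of summaries, there is no code path by which a raw message can reach the mediator by accident.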

I also used grounding tools during development to explore external references and refine prompt structure and mediation logic.

During the mediation phase:

- The mediator generates a resolution path (step-by-step guidance)

- A visual mind map summarizing this path is generated using gemini-3-pro-image-preview, helping users understand progress without cognitive overload
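As a rough illustration of how a resolution path might be turned into input for the image model, the sketch below builds a plain text prompt from a list of steps. `ResolutionStep` and `mind_map_prompt` are hypothetical names, the prompt wording is invented for the example, and the actual call to the image model is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class ResolutionStep:
    title: str
    done: bool = False


def mind_map_prompt(topic: str, steps: list[ResolutionStep]) -> str:
    """Turn a step-by-step resolution path into a text prompt for an image model."""
    lines = [
        f"Draw a simple, calm mind map with '{topic}' at the center.",
        "Add one branch per step, in this order:",
    ]
    for number, step in enumerate(steps, start=1):
        status = "completed" if step.done else "upcoming"
        lines.append(f"{number}. {step.title} ({status})")
    # Keeping the map shallow is meant to limit cognitive load.
    lines.append("Use short labels and at most one level of sub-branches.")
    return "\n".join(lines)
```

A usage example: `mind_map_prompt("Missed deadline", [ResolutionStep("Acknowledge each view", done=True), ResolutionStep("Agree on next check-in")])` yields a five-line prompt with one numbered line per step.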

Emotional Awareness (Without Profiling)

To help users reflect without pressure, HarmonyBridge introduces three subtle session indicators:

- Stress level (e.g., “Feeling calm”, “Slightly stressed”)

- Conflict progress (e.g., “50% of mediator suggestions implemented”)

- Interaction clarity (e.g., “Clear and understandable”)

These indicators are:

- Non-judgmental

- Session-scoped only

- Never stored as permanent user traits

No emotional diagnosis, scoring, or labeling is used.
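A minimal sketch of what "session-scoped only" could mean in code is shown below. It assumes an in-memory store that is cleared when a session ends; `SessionIndicators`, `SessionIndicatorStore`, and their fields are hypothetical names, not the project's actual data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionIndicators:
    stress_label: str           # e.g. "Feeling calm"
    clarity_label: str          # e.g. "Clear and understandable"
    suggestions_total: int = 0
    suggestions_done: int = 0

    def progress_label(self) -> str:
        if self.suggestions_total == 0:
            return "No mediator suggestions yet"
        percent = round(100 * self.suggestions_done / self.suggestions_total)
        return f"{percent}% of mediator suggestions implemented"


class SessionIndicatorStore:
    """Holds indicators in memory, keyed by session, never by user profile."""

    def __init__(self) -> None:
        self._by_session: dict[str, SessionIndicators] = {}

    def update(self, session_id: str, indicators: SessionIndicators) -> None:
        self._by_session[session_id] = indicators

    def get(self, session_id: str) -> Optional[SessionIndicators]:
        return self._by_session.get(session_id)

    def end_session(self, session_id: str) -> None:
        # Dropping the entry is the whole retention policy in this sketch.
        self._by_session.pop(session_id, None)
```

The indicators are plain labels and counts, so there is nothing in the structure that could accumulate into a long-term emotional profile.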

Security & Responsibility

HarmonyBridge follows OWASP security best practices, including:

- Input sanitization on all user inputs

- Rate limiting on API endpoints

- Secure handling of API keys via environment variables

- Parameterized queries and backend validation
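To make a few of these practices concrete, here is a small sketch using only the Python standard library. It assumes a SQLite table named `messages`, an environment variable named `GEMINI_API_KEY`, and a 2000-character limit. All three are illustrative choices, and the project's real storage layer and variable names may differ.

```python
import os
import sqlite3

MAX_MESSAGE_LENGTH = 2000  # hypothetical limit for this example


def sanitize_message(text: str) -> str:
    """Drop non-printable control characters, trim whitespace, cap the length."""
    cleaned = "".join(ch for ch in text if ch.isprintable() or ch in "\n\t")
    return cleaned.strip()[:MAX_MESSAGE_LENGTH]


def save_message(conn: sqlite3.Connection, session_id: str, text: str) -> None:
    # The ? placeholders keep user input out of the SQL string itself,
    # so quotes or SQL keywords in the message are stored as plain text.
    conn.execute(
        "INSERT INTO messages (session_id, body) VALUES (?, ?)",
        (session_id, sanitize_message(text)),
    )
    conn.commit()


def load_api_key() -> str:
    """Read the API key from the environment instead of hard-coding it."""
    key = os.environ.get("GEMINI_API_KEY")
    if not key:
        raise RuntimeError("GEMINI_API_KEY is not set")
    return key
```

Sanitization here is intentionally conservative: it removes control characters but does not rewrite what the user said, since the parameterized query already handles SQL safety.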

Security is treated as a foundational requirement, not an afterthought — especially for a product dealing with sensitive conversations.

What I Learned

This project taught me that:

- Model orchestration matters more than raw model power

- Prompt design is a form of ethical design

- Emotional AI systems must prioritize what not to infer or store

I also learned how Gemini can be used not just to generate text, but to:

- Mediate perspectives

- Structure resolution paths

- Support emotional safety without surveillance

Challenges Faced

The main challenges involved balancing technical control with emotional responsibility:

- Designing emotional awareness signals without profiling users or storing emotional traits

- Remaining supportive without drifting into “therapy-like” or diagnostic behavior

- Controlling overthinking in high-reasoning models, which sometimes led to verbosity or hallucinated assumptions

- Deciding how much mediator logic should be visible to users to maintain trust without overwhelming them

- Handling practical constraints such as rate limiting (HTTP 429 errors) during high-frequency interactions

These challenges led to important design decisions, including favoring medium reasoning levels, simplifying outputs, and prioritizing stability and clarity over maximal model expressiveness.
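For the HTTP 429 constraint specifically, a common mitigation is retrying with exponential backoff and jitter. The sketch below shows that general technique, not necessarily what HarmonyBridge does. `RateLimitError` is a hypothetical exception standing in for a 429 response, and `call_with_backoff` and its parameters are illustrative names.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical exception standing in for an HTTP 429 response."""


def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `request` on rate-limit errors, doubling the wait each time."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller decide what to show
            # Exponential delay plus random jitter so retries do not align.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
    raise ValueError("max_attempts must be at least 1")
```

The `sleep` parameter exists so the waiting behavior can be replaced in tests without actually pausing.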

Why It Matters

HarmonyBridge demonstrates how Gemini can be used responsibly to support human connection.

It shows that AI does not need to analyze people deeply to help them meaningfully.

Sometimes, the most impactful intervention is simply creating a space where people feel safe enough to keep talking.

What’s Next

The next steps for HarmonyBridge focus on turning a strong prototype into a responsible, scalable product.

From a product perspective, the priority is improving long-term continuity: allowing users to revisit past resolution bridges, track progress over time, and understand how communication patterns evolve without creating emotional profiles or labels.

On the AI side, future work includes refining mediator orchestration with adaptive reasoning levels, dynamically selecting Gemini Flash or Pro based on conflict complexity and user consent. Additional safeguards will be added to further reduce hallucinations and ensure emotional neutrality at scale.

The project will also expand beyond one-to-one conflicts to support small groups, families, and close social circles, with clearer role-based mediation flows.

Finally, HarmonyBridge aims to integrate stronger privacy controls, clearer user consent boundaries, and optional visual summaries (mind maps and resolution timelines) to make outcomes easier to understand and act upon.

The long-term vision is to make AI-mediated conflict resolution accessible, ethical, and emotionally safe for everyday human relationships.
