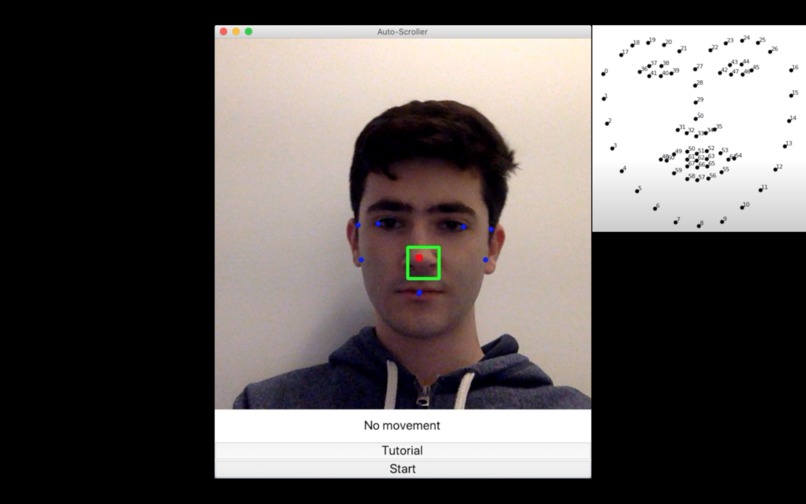

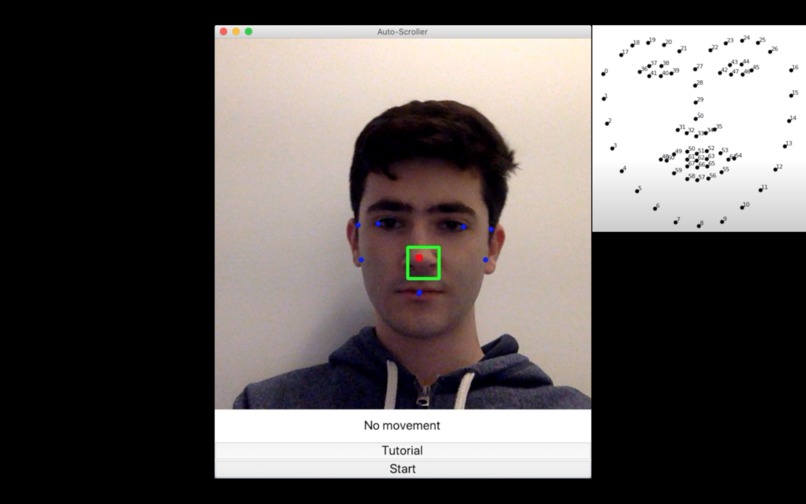

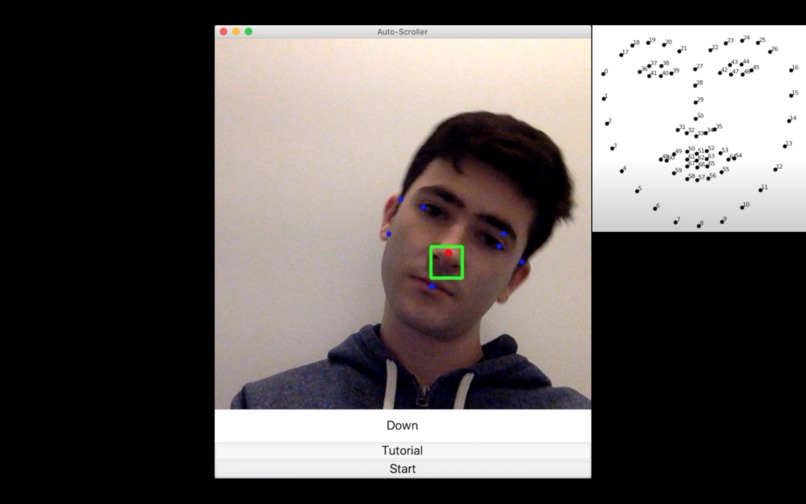

No movement visualization

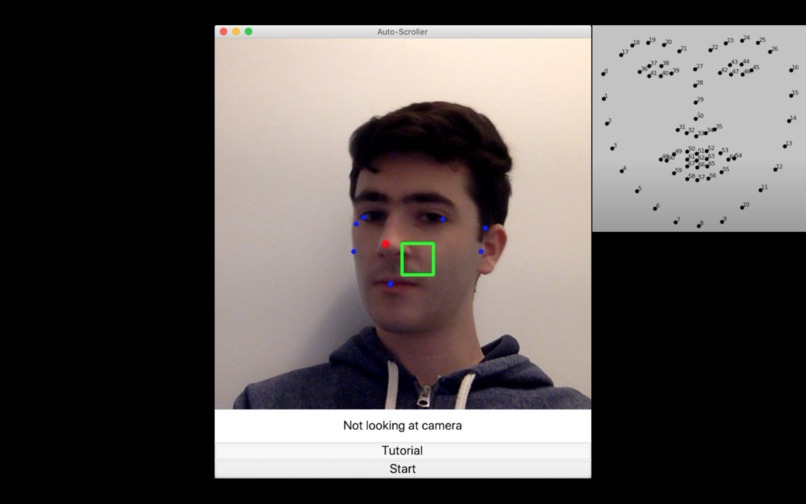

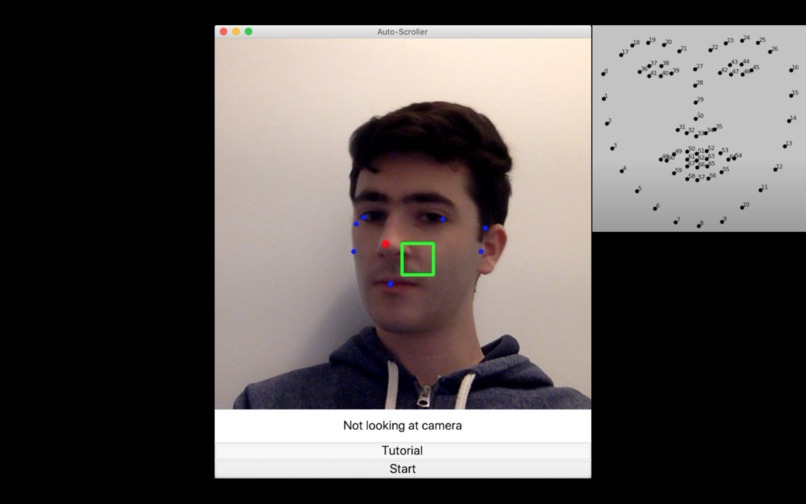

Not looking at camera visualization

Scrolling up visualization

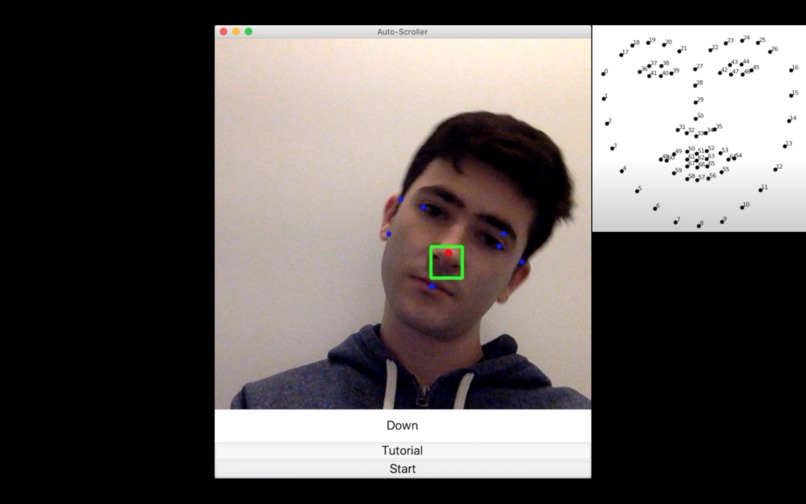

Scrolling down visualization

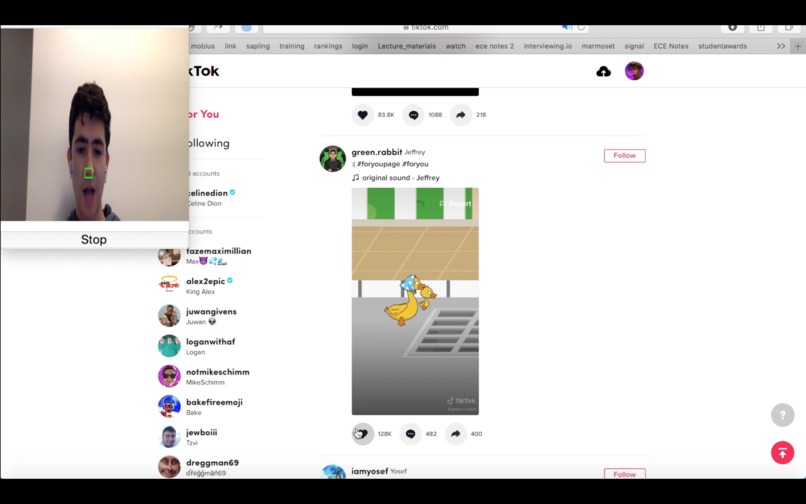

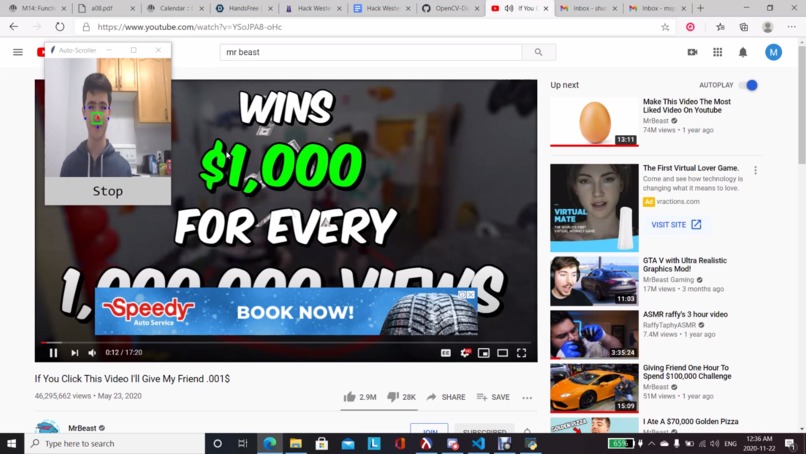

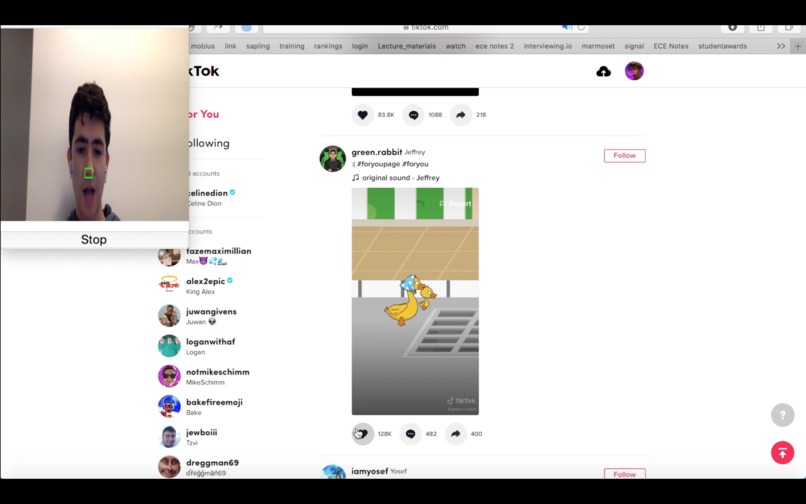

HandsFree clicking (opening mouth to click)

HandsFree cursor movement (moving nose to move cursor)

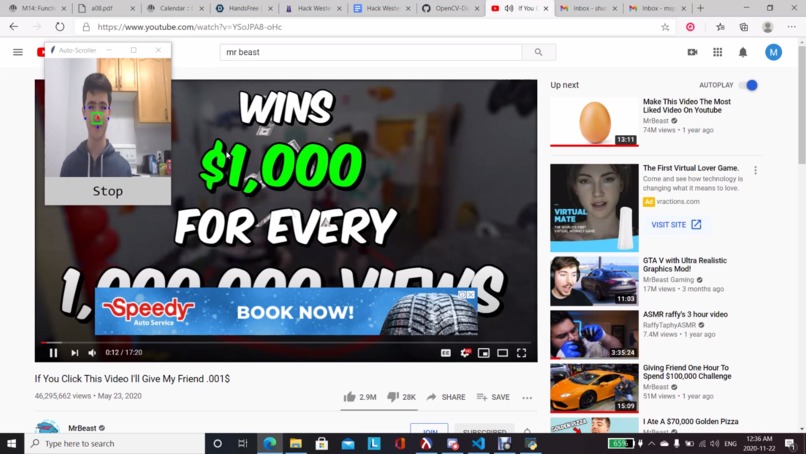

HandsFree cursor movement and click

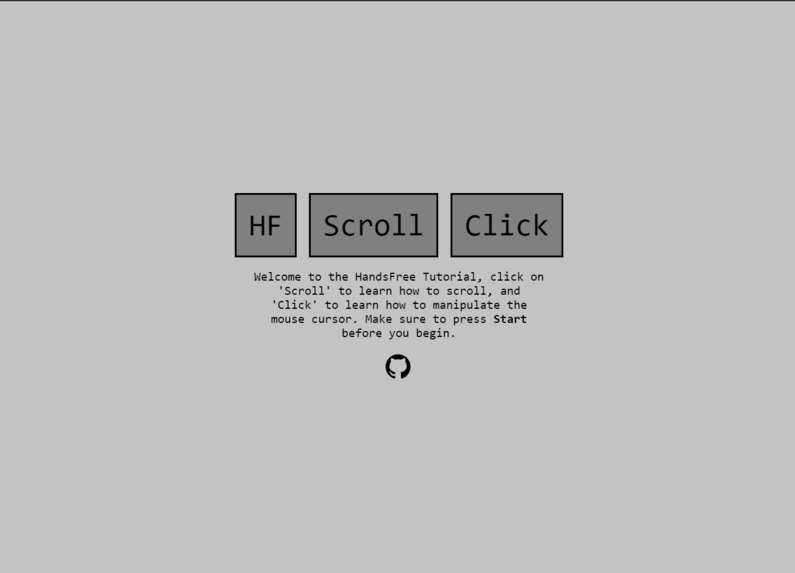

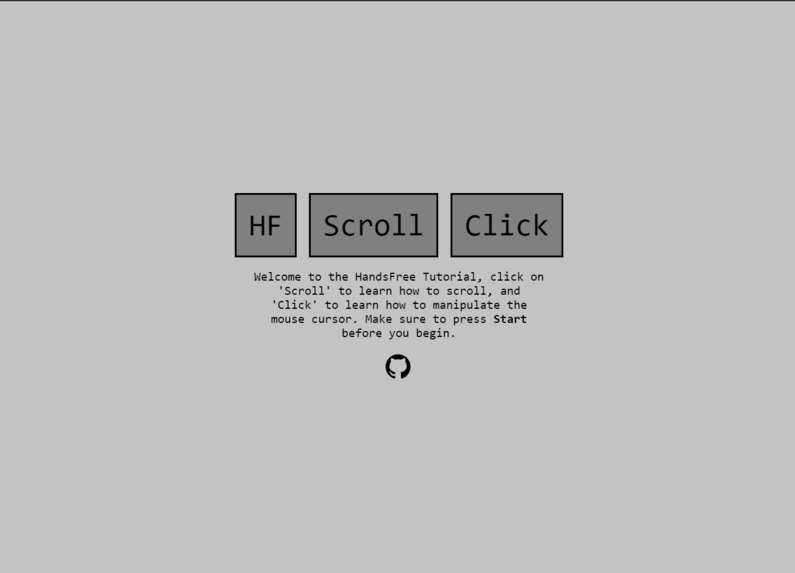

Tutorial Site

Inspiration

The inspiration for this project was a frustration the whole group shared: scrolling through a feed while your hands are dirty or in use is nearly impossible. We wanted to create a program that lets us scroll through windows without touching the computer while eating, doing chores, or at any other time when touching it is inconvenient. The idea grew into moving the cursor around the screen and interacting with windows hands-free, which makes boring tasks, such as chores, more interesting and fun.

What it does

HandsFree allows users to control their computer without touching it. By tilting their head, moving their nose, or opening their mouth, the user can control scrolling, clicking, and cursor movement. This allows users to use their device while doing other things with their hands, such as doing chores around the house. Because HandsFree gives users complete touchless control, they’re able to scroll through social media, like posts, and do other tasks on their device, even when their hands are full.

How we built it

We used a Dlib facial feature tracking model to locate points on the face and compare their positions relative to one another as the face moves.

To determine whether the user was looking at the screen, we compared two distances: the distance between the outer edge of the left eye and the left edge of the face, and the distance between the outer edge of the right eye and the right edge of the face. We noticed that one of these distances was noticeably larger than the other when the user's head was tilted away from the screen. Once one side's distance exceeded the other by a set amount, the scroll feature was disabled and the user got a message saying "not looking at camera."
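The comparison above can be sketched as a small pure function. This is only an illustration, not the project's code: the function name, the pixel-difference threshold, and its default value are assumptions, and the points are plain `(x, y)` tuples rather than Dlib objects.

```python
from typing import Tuple

Point = Tuple[float, float]  # (x, y) in image pixels


def is_looking_at_camera(
    face_left: Point,
    left_eye_outer: Point,
    right_eye_outer: Point,
    face_right: Point,
    max_difference: float = 20.0,  # hypothetical threshold, in pixels
) -> bool:
    """Return True when the eye-to-face-edge gaps on both sides are similar."""
    # Horizontal gap between each eye's outer corner and the face edge beside it.
    left_gap = abs(left_eye_outer[0] - face_left[0])
    right_gap = abs(face_right[0] - right_eye_outer[0])
    # A large imbalance suggests the head is angled away from the screen.
    return abs(left_gap - right_gap) <= max_difference


if __name__ == "__main__":
    facing = is_looking_at_camera((100, 200), (130, 200), (230, 200), (260, 200))
    away = is_looking_at_camera((100, 200), (110, 200), (200, 200), (260, 200))
    print(facing, away)
```

A pixel threshold depends on how far the user sits from the camera, so a real implementation might instead scale the threshold by the face width.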

To determine when and in which direction to scroll the page, we compared the left edge of the face with the right edge. When the right edge was significantly higher than the left edge, the page would scroll up. When the left edge was significantly higher than the right edge, the page would scroll down. If both edges had roughly the same Y coordinate, the page wouldn't scroll at all.
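A minimal sketch of this tilt rule follows. The function name, the return convention (`1` for up, `-1` for down, `0` for no scroll), and the threshold value are assumptions made for illustration. Note that in image coordinates Y grows downward, so a "higher" edge has a smaller Y value.

```python
from typing import Tuple

Point = Tuple[float, float]  # (x, y) in image pixels, y grows downward


def scroll_direction(
    face_left: Point,
    face_right: Point,
    tilt_threshold: float = 15.0,  # hypothetical dead zone, in pixels
) -> int:
    """Return 1 to scroll up, -1 to scroll down, 0 to stay still."""
    # Positive when the right edge sits higher in the image than the left edge.
    right_is_higher_by = face_left[1] - face_right[1]
    if right_is_higher_by > tilt_threshold:
        return 1   # right edge significantly higher: scroll up
    if right_is_higher_by < -tilt_threshold:
        return -1  # left edge significantly higher: scroll down
    return 0       # edges roughly level: no scrolling


if __name__ == "__main__":
    print(scroll_direction((100, 220), (260, 190)))  # right edge higher
    print(scroll_direction((100, 190), (260, 220)))  # left edge higher
    print(scroll_direction((100, 200), (260, 205)))  # roughly level
```

The returned sign could be multiplied by a user-configurable scroll speed before being handed to whatever scrolling call the application uses.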

To determine the cursor movement, we tracked the tip of the nose. We created an adjustable bounding box in the center of the user's face (based on the average values of the edges of the face). Whenever the nose left the box, the cursor would move at a constant speed in the direction of the nose's position relative to the center.
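One way to express the bounding-box rule is shown below. It is a sketch under stated assumptions: the function name, the box half-sizes, and the speed are hypothetical defaults, and the face edges are passed in as plain coordinates.

```python
import math
from typing import Tuple


def cursor_step(
    nose: Tuple[float, float],
    face_left_x: float,
    face_right_x: float,
    face_top_y: float,
    face_bottom_y: float,
    box_half_width: float = 20.0,   # hypothetical, user-adjustable
    box_half_height: float = 15.0,  # hypothetical, user-adjustable
    speed: float = 10.0,            # pixels per frame, hypothetical
) -> Tuple[float, float]:
    """Return the (dx, dy) cursor movement for one frame."""
    # Box center is the average of the opposite face edges.
    center_x = (face_left_x + face_right_x) / 2
    center_y = (face_top_y + face_bottom_y) / 2
    dx = nose[0] - center_x
    dy = nose[1] - center_y
    # Inside the box: the cursor stays where it is.
    if abs(dx) <= box_half_width and abs(dy) <= box_half_height:
        return (0.0, 0.0)
    # Outside the box: move at constant speed along the nose's direction.
    length = math.hypot(dx, dy)
    return (speed * dx / length, speed * dy / length)


if __name__ == "__main__":
    print(cursor_step((100, 100), 40, 160, 30, 170))  # nose at center
    print(cursor_step((130, 140), 40, 160, 30, 170))  # nose outside the box
```

Normalizing the offset keeps the speed constant no matter how far the nose is from the box, which matches the constant-speed behavior described above.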

To determine a click, we compared the top lip Y coordinate to the bottom lip Y coordinate. Whenever they moved apart by a certain distance, a click was activated.
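The lip comparison can be sketched as follows. The class name and threshold are assumptions, and so is the choice to fire only once per mouth opening: the write-up does not say how repeated clicks were avoided while the mouth stays open.

```python
class MouthClickDetector:
    """Fires one click each time the mouth goes from closed to open."""

    def __init__(self, open_threshold: float = 18.0) -> None:
        # Hypothetical lip gap, in pixels, that counts as an open mouth.
        self.open_threshold = open_threshold
        self._was_open = False

    def update(self, top_lip_y: float, bottom_lip_y: float) -> bool:
        """Feed one frame of lip coordinates; return True when a click fires."""
        # Image Y grows downward, so the bottom lip has the larger Y value.
        is_open = (bottom_lip_y - top_lip_y) >= self.open_threshold
        # Only the closed-to-open transition triggers a click.
        should_click = is_open and not self._was_open
        self._was_open = is_open
        return should_click


if __name__ == "__main__":
    detector = MouthClickDetector()
    frames = [(300, 305), (300, 325), (300, 326), (300, 304), (300, 330)]
    print([detector.update(top, bottom) for top, bottom in frames])
```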

To reset the program, the user can look away from the camera so that the program can no longer track a face. This resets the cursor to the middle of the screen.
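The reset behavior might look like the sketch below. The function name, the clamping to the screen bounds, and the example screen size are all assumptions added for illustration.

```python
from typing import Tuple


def next_cursor_position(
    current: Tuple[int, int],
    step: Tuple[float, float],
    face_found: bool,
    screen_size: Tuple[int, int],
) -> Tuple[int, int]:
    """Return the cursor position for the next frame."""
    width, height = screen_size
    # No face tracked: reset the cursor to the middle of the screen.
    if not face_found:
        return (width // 2, height // 2)
    # Otherwise apply the movement and keep the cursor on screen (assumption).
    x = min(max(int(round(current[0] + step[0])), 0), width - 1)
    y = min(max(int(round(current[1] + step[1])), 0), height - 1)
    return (x, y)


if __name__ == "__main__":
    screen = (1920, 1080)
    print(next_cursor_position((100, 100), (10.0, 0.0), True, screen))
    print(next_cursor_position((100, 100), (10.0, 0.0), False, screen))
```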

For the GUI, we used the Tkinter module, Python's interface to the Tk GUI toolkit, to generate the application's front-end interface. The tutorial site was built using simple HTML and CSS.

Challenges we ran into

We ran into several problems while working on this project. First, we had trouble developing a way to judge whether a face had moved enough to move the cursor or scroll the screen. Second, we had to calibrate the system and its movements for different faces. Third, users could not tell whether their faces were balanced. For the first problem, we spent a lot of time looking into various mathematical relationships between the different points of someone's face. To handle calibration, we ran a large number of tests with different faces, distances from the screen, and face angles relative to the screen. To counter the last challenge, we added a box to the window displaying the user's face, which visualizes how far they need to move to move the cursor. We used the calibration tests to come up with default values for this box, but we made the constants customizable so users can set the box according to their preferences. Users can also customize the scroll speed and mouse movement speed to their liking.

Accomplishments that we're proud of

We are proud that we created a finished product and expanded on our idea beyond what we had originally planned. Additionally, the project worked much better than expected, and using it felt like a superpower.

What we learned

We learned how to use facial recognition libraries in Python, how they work, and how they’re implemented. For some of us, this was our first experience with OpenCV, so it was interesting to create something new on the spot. Additionally, we learned how to use many new Python libraries, and some of us learned about Python class structures.

What's next for HandsFree

The next step is getting this software on mobile. Most users browse social media on their phones, so porting HandsFree to Android and iOS is the natural next step. This would reach a much wider audience and let users use the service across many different devices. Additionally, implementing this technology as a Chrome extension would make HandsFree more widely accessible.
