The home screen lets users open the camera right away and watch the machine learning model classify the image in real time.

After pressing the photo button, you see the machine learning results and pick the match from what the image recognition detected.

Quickly see plant information such as conservation status, scientific name, nativity, and more.

An example of an endangered species.

An example of an alarmingly rare species.

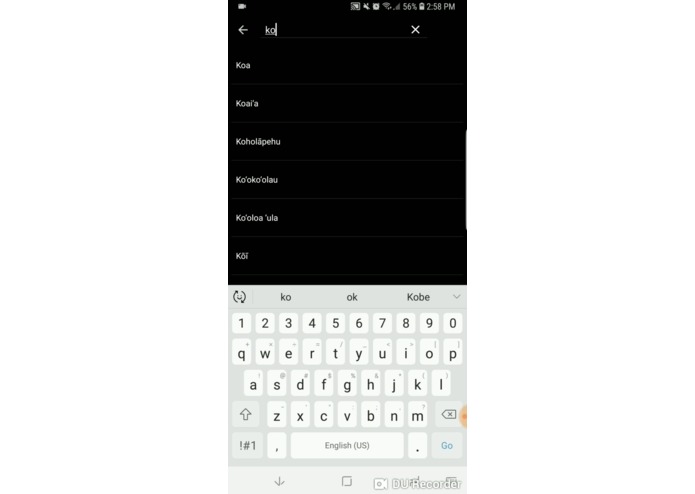

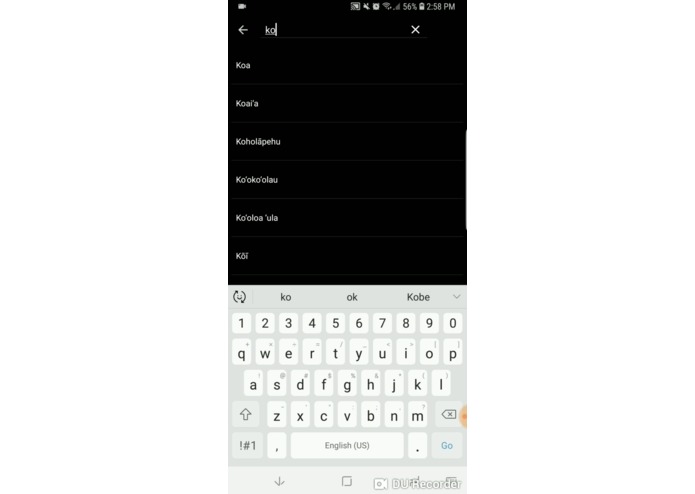

Search through all the native Hawaiian plants.

What we built

We built a mobile app driven by Google's TensorFlow machine learning library that allows users to identify native and invasive plants within seconds. One might expect such an app to be massive, given how much data it takes to train the model, but in reality our application takes up less than 15 MB of space!

Some of the problems that we set out to solve when we created this app include:

- No definitive way to identify plants out in the field

- No way to keep track of plant sightings out in the field

- No way to cross-reference plants found in the wild with reference material

- No pre-existing comprehensive mobile resource of native plant information

- No sustainable way to introduce information to newcomers or the public

To fix these problems, we implemented:

- A way to identify plants using machine learning software

- A scalable database and data hosting system to keep track of plant sightings

- User image submissions, allowing the user to report back to the Hawaii Department of Land and Natural Resources (DLNR) with information such as plant appearance and sighting location

- Fast database searching, empowering users to quickly cross-reference plants with pictures already in our database

- Compressed machine learning models and images in order to allow for a small, portable application size

How we built it

We thoroughly researched, vetted, and implemented technologies that best fit our goals of a scalable, efficient, machine learning application. We ensured these technologies are sustainable to help modernize Hawaii’s software.

We started our development process with designs and mockups of the user interface, and went through many iterations before reaching our current build. Our main considerations were a streamlined user interface and a hassle-free user experience.

The technologies we ultimately decided to use include:

- TensorFlow Lite (Open-source machine learning library for mobile devices)

- Firebase (Scalable document-driven database)

- Elasticsearch (State-of-the-art full-text document search)

- Gradle (Open-source build automation system)

Why these technologies?

These technologies are at the forefront of development and are expected to be supported for a long time into the future, which helps ensure our application's longevity and relevance.

We used TensorFlow Lite to handle our machine learning capabilities. It has the performance, low latency, and portability that we need for a mobile application. TensorFlow Lite does not require the training images to be on the device at all. This means that we can train the machine learning models in the cloud and then push them to every device that has the application. This way every user has the most up-to-date version of the model, trained on the best data we have for identifying plants.
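As an illustration of what happens after the model has run on a camera frame, here is a minimal sketch of how the classifier's output could be turned into the short list of candidates shown to the user. It assumes the model yields one score per label, which is common for image classifiers but not confirmed for this project. The `TopLabels` class, the `topK` method, the score threshold, and the sample labels and scores are all hypothetical, and no TensorFlow Lite call is shown.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical helper (not from the project): ranks per-label scores
// and keeps the best few candidates to show to the user.
class TopLabels {

    // One candidate: a label and the score the model assigned to it.
    static final class Candidate {
        final String label;
        final float score;

        Candidate(String label, float score) {
            this.label = label;
            this.score = score;
        }
    }

    // Returns at most k candidates with score >= minScore, highest score first.
    static List<Candidate> topK(String[] labels, float[] scores, int k, float minScore) {
        if (labels.length != scores.length) {
            throw new IllegalArgumentException("labels and scores must have the same length");
        }
        if (k < 0) {
            throw new IllegalArgumentException("k must not be negative");
        }
        List<Candidate> kept = new ArrayList<>();
        for (int i = 0; i < scores.length; i++) {
            if (scores[i] >= minScore) {
                kept.add(new Candidate(labels[i], scores[i]));
            }
        }
        // Sort in descending order of score.
        kept.sort(Comparator.comparingDouble((Candidate c) -> c.score).reversed());
        return new ArrayList<>(kept.subList(0, Math.min(k, kept.size())));
    }

    public static void main(String[] args) {
        // Made-up labels and scores, for illustration only.
        String[] labels = {"Koa", "Ohia lehua", "Strawberry guava"};
        float[] scores = {0.12f, 0.81f, 0.07f};
        for (Candidate c : topK(labels, scores, 2, 0.10f)) {
            System.out.println(c.label + " " + c.score);
        }
    }
}
```

Filtering by a minimum score before ranking keeps very unlikely labels off the results screen, so the user only chooses among plausible matches.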

Our application needed a backend to store both documents and images, which is why we chose Google's Firebase. Firebase is a readily scalable backend with fast data storage and retrieval. It also lets us save photos of potentially unknown flowers sent in by users, which makes our application user driven.
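To make the idea of a user submission concrete, the sketch below builds a plain key-value document for one sighting. It is an assumption about how such a record might be shaped, not the project's actual schema: the `SightingDocument` class, the field names, and the sample values are hypothetical, and no Firebase API is called. A map of this kind is the sort of structure a document database can typically store.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not from the project): assembles one sighting
// record as a plain map, ready to hand to a document database client.
class SightingDocument {

    static Map<String, Object> build(String plantLabel, double latitude, double longitude,
                                     String imagePath, long timestampMillis) {
        // Reject coordinates that cannot be a real location.
        if (latitude < -90.0 || latitude > 90.0) {
            throw new IllegalArgumentException("latitude out of range: " + latitude);
        }
        if (longitude < -180.0 || longitude > 180.0) {
            throw new IllegalArgumentException("longitude out of range: " + longitude);
        }
        Map<String, Object> doc = new LinkedHashMap<>();
        doc.put("plantLabel", plantLabel);        // e.g. "unknown" for unidentified plants
        doc.put("latitude", latitude);            // sighting location
        doc.put("longitude", longitude);
        doc.put("imagePath", imagePath);          // where the uploaded photo is stored
        doc.put("timestampMillis", timestampMillis);
        return doc;
    }

    public static void main(String[] args) {
        // Example values only; the path and coordinates are made up.
        Map<String, Object> doc = build("unknown", 21.3, -157.8,
                "images/example.jpg", System.currentTimeMillis());
        System.out.println(doc);
    }
}
```

Validating the coordinates before the record is created keeps obviously bad sightings out of the database, which matters when the data is meant to be reported onward.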

Challenges we ran into

Finding images and information on plants and flowers to train our model wasn't easy. To make our app as accurate as possible, we needed to scrape the web for hundreds of images and then make sure that these images were suitable for training.

For many of us, this was our first time using Android Studio, so there was quite a steep learning curve in the beginning.

One of our biggest challenges was limiting the scope of our application's functionality due to time constraints. While we are satisfied with progress throughout the development period, there is so much more potential that can come out of our application, and more importantly, us.

Future enhancements

- Continue to add photos to train our machine learning model and make it more accurate

- Develop cross-platform applications and functionality

- Continue to improve the UI and make it more appealing to a larger base of people

- Add more client-side and server-side features

Built With

- android-studio

- firebase

- gradle

- tensorflow-lite
