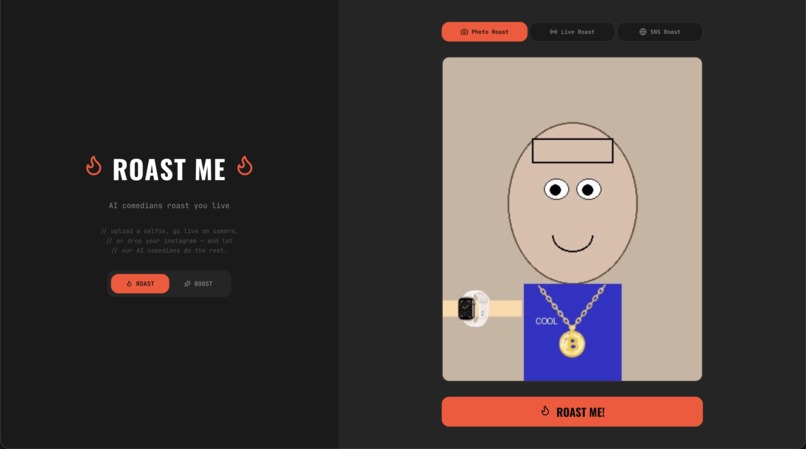

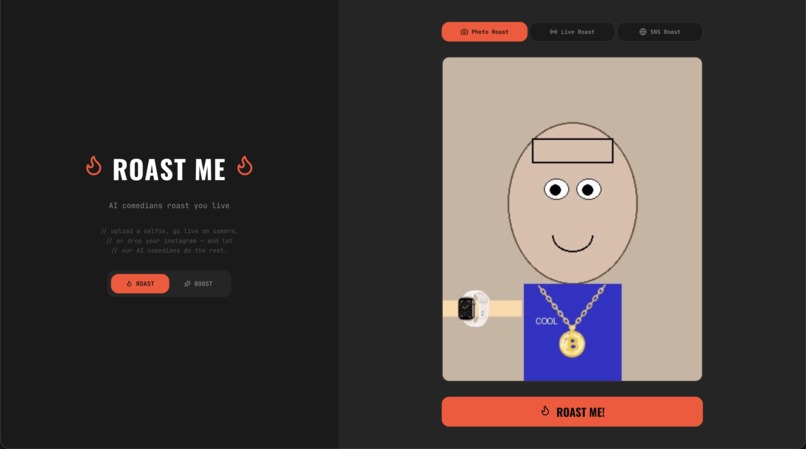

GRL home screen and Photo Roast mode: upload a selfie, choose a Roast or Boost tone, and AI comedians analyze your look to deliver a comedy act.

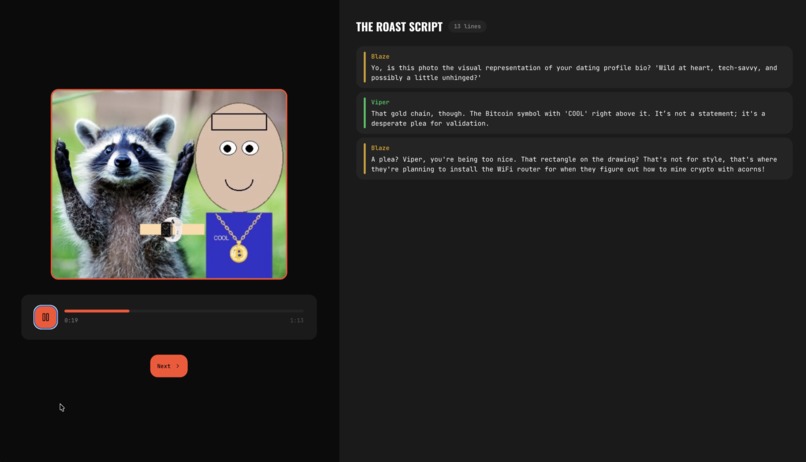

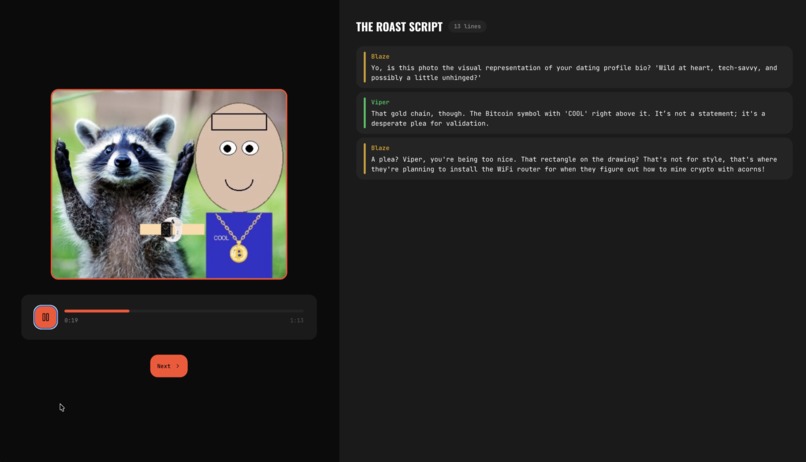

Photo Roast result: Blaze and Viper perform a 13-line duo comedy script with synced audio playback, roasting your uploaded selfie in real time.

Mobile-responsive Photo Roast on Cloud Run. Upload a selfie, tap Roast Me, and two AI comedians deliver a personalized comedy act.

Preparing Your Roast: Gemini analyzes the photo and writes a duo script. All 10 personas react while two are selected for the act.

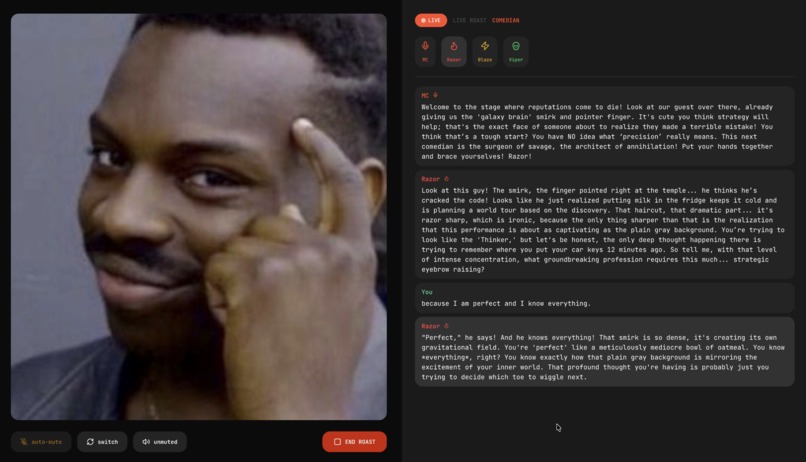

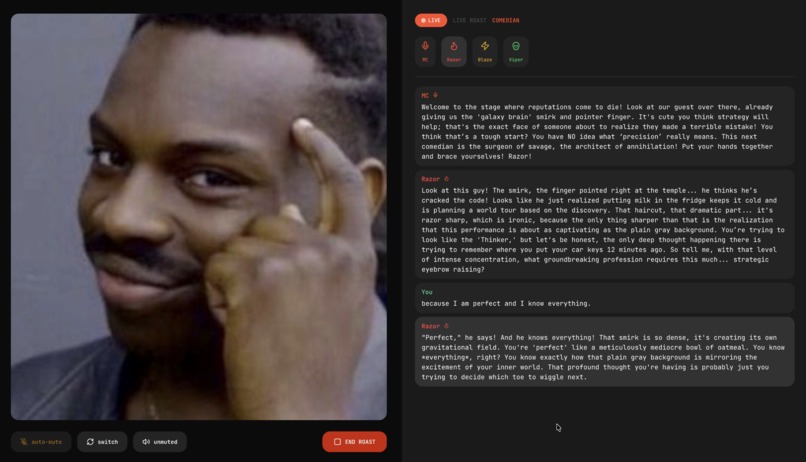

Live Roast Show: MC introduces Razor on stage. The comedian sees you through the camera, roasts you live, and riffs off comebacks.

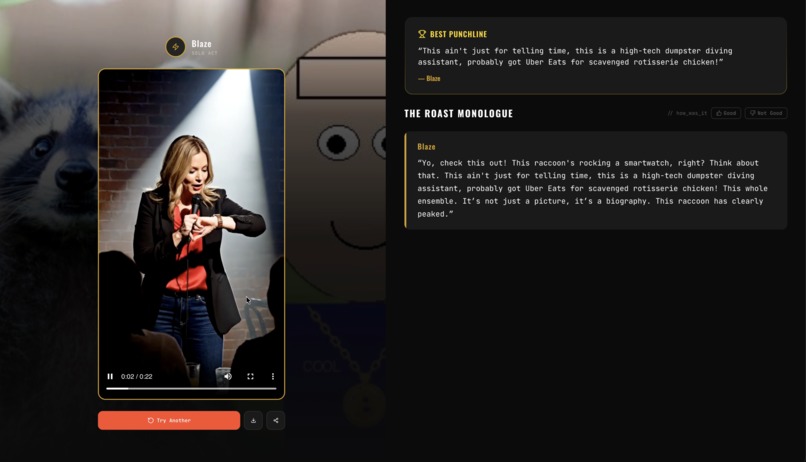

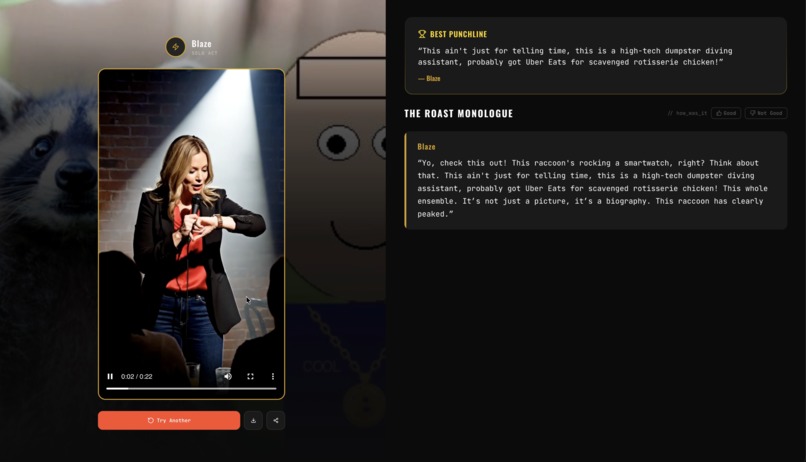

SNS Roast result: Blaze performs a solo act as a Veo 3.1 comedy video with the full monologue script and best punchline highlight.

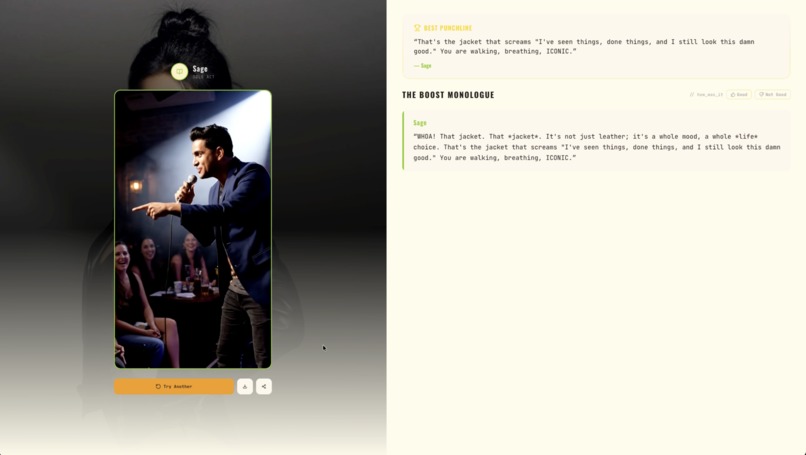

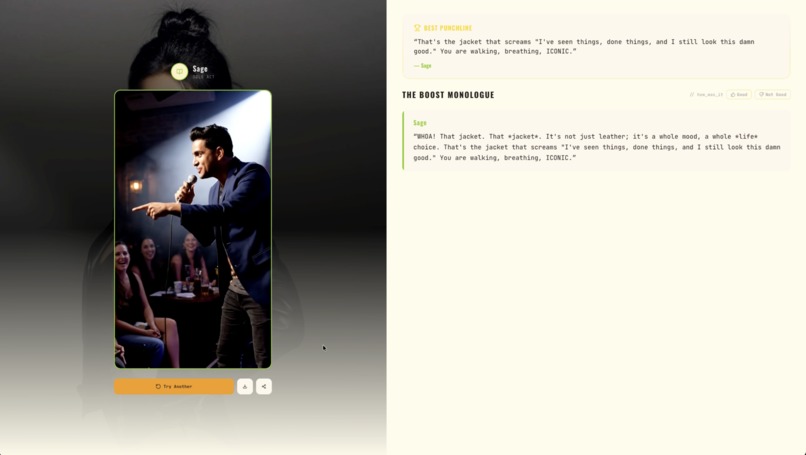

Boost mode in SNS Roast: Sage hypes you up in a Veo 3.1 video. The golden theme turns savage comedy into genuine praise and style love.

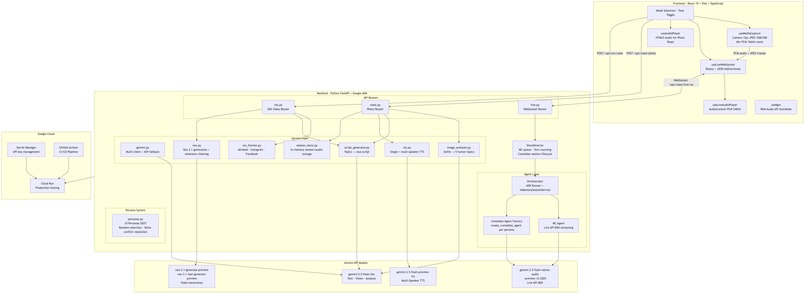

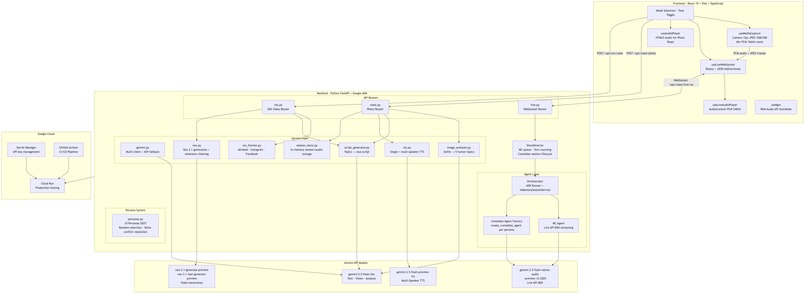

System architecture: a React frontend streams camera and mic via WebSocket to a FastAPI backend with ADK agents and five Gemini models, deployed on Cloud Run.

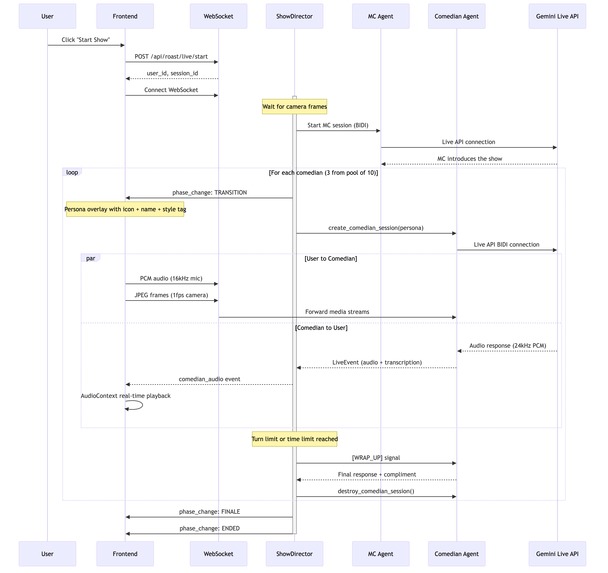

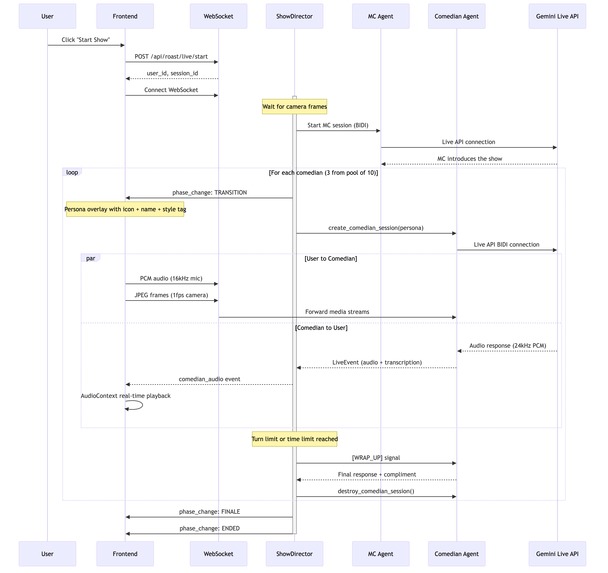

Live Show sequence: the MC opens a BIDI session, and the ShowDirector spawns an independent session per comedian while camera and mic streams are forwarded to it.

Inspiration

Stand-up comedy depends on timing, reading the room, and real interaction with the audience. I wanted to see what happens when AI takes the stage.

Most voice AI demos are chatbots or assistants. I wanted to build something people actually want to watch and participate in: a live comedy roast show where AI comedians analyze you through your camera, roast you in real time, and respond when you talk back. The roast format is a good test for the Gemini Live API because comedy demands fast responses, consistent character voice, and the ability to riff off audience reactions.

I also wanted to explore what "multimodal" means in practice. This app combines live camera feeds, real-time voice conversation, image analysis, multi-speaker audio synthesis, and AI video generation across three distinct modes.

What it does

GRL: Gemini Roast LIVE is an AI comedy platform with three modes:

1. Live Roast Show (flagship feature): A real-time interactive comedy show. An AI MC hosts the show, analyzes your camera feed, and introduces comedians one by one from a roster of 10 unique personas. Each comedian gets their own independent Gemini Live API session with a distinct voice and comedy style. They roast what they see on camera, and you can talk back. The comedian hears you and responds in real time. You can also flip to the rear camera and point it at a friend to get them roasted instead. The MC manages transitions between acts, wraps up each set, and closes the show.

2. Photo Roast: Upload a selfie and two AI comedians (randomly selected from the persona pool) perform a scripted duo roast. Gemini Flash analyzes your photo for comedy material, generates an alternating two-person script, and renders it as multi-speaker audio with synchronized script highlighting.

3. SNS Roast: Upload a photo and the app analyzes it with Gemini Flash to find comedy material, generates a roast script in a randomly selected persona's style, and produces a comedy video using Veo 3.1 with extension chaining to create clips longer than the 8-second single-generation limit. Direct SNS URL fetching to automatically pull profile and feed content is planned.

All three modes support a Roast/Boost toggle. Roast mode (dark theme) delivers savage comedy with 95% roasting and a compliment at the end. Boost mode (light theme) flips it to 90% genuine praise with 10% humor. The toggle changes the UI theme, agent prompts, and comedy tone across the entire app.

How I built it

Frontend: React 19 + Vite + TypeScript. Custom hooks handle camera capture (1fps JPEG), microphone input (PCM 16kHz), WebSocket communication, and real-time PCM audio playback through the Web Audio API.
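The microphone hook itself is TypeScript; as a language-neutral illustration, the following Python sketch shows the conversion such a hook typically performs before sending audio. It assumes Web Audio float samples in [-1.0, 1.0] that have already been resampled to 16 kHz mono, and targets 16-bit little-endian PCM, the input format the Gemini Live API documents. The function names are hypothetical.

```python
import struct

SAMPLE_RATE_HZ = 16_000  # Live API audio input rate
BYTES_PER_SAMPLE = 2     # 16-bit PCM


def float_to_pcm16(samples: list[float]) -> bytes:
    """Convert float samples in [-1.0, 1.0] to 16-bit little-endian PCM bytes."""
    ints = []
    for s in samples:
        s = max(-1.0, min(1.0, s))  # clip out-of-range values
        # Negatives scale by 32768 and positives by 32767 so both ends fit in int16.
        ints.append(int(s * 32768) if s < 0 else int(s * 32767))
    return struct.pack(f"<{len(ints)}h", *ints)


def chunk_duration_ms(pcm: bytes) -> float:
    """Duration of a mono PCM16 chunk at 16 kHz, in milliseconds."""
    return len(pcm) * 1000 / (BYTES_PER_SAMPLE * SAMPLE_RATE_HZ)
```

At this format, one second of microphone audio is 16,000 samples × 2 bytes = 32,000 bytes on the WebSocket.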

Backend: Python FastAPI with Google ADK for agent orchestration.

Live Show architecture: The ShowDirector class orchestrates the entire show. The MC runs on a Gemini Live API BIDI session. When the MC calls a comedian's name (detected via dynamic regex on transcription text), the ShowDirector creates a new independent Live API session for that comedian. Camera frames and microphone audio are forwarded to the active comedian's session. Each comedian has a turn limit and a time limit. After the set, the session is destroyed, and the next comedian is introduced. This was necessary because the Live API allows only one voice per session, and I needed distinct voices for each character.
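A minimal sketch of the ShowDirector's switching logic, assuming a hypothetical synchronous `LiveSession` interface with `send()` and `close()` (real Live API sessions in the google-genai SDK are async and have a different API). The default turn and time limits are made-up values, and the MC's own session is omitted for brevity.

```python
import time
from dataclasses import dataclass
from typing import Callable, Protocol


class LiveSession(Protocol):
    """Hypothetical stand-in for one comedian's Live API session."""
    def send(self, chunk: bytes) -> None: ...
    def close(self) -> None: ...


@dataclass
class ComedianSet:
    name: str
    session: LiveSession
    started_at: float
    max_turns: int
    max_seconds: float
    turns: int = 0

    def is_over(self, now: float) -> bool:
        # A set ends when either the turn limit or the time limit is reached.
        return self.turns >= self.max_turns or now - self.started_at >= self.max_seconds


class ShowDirector:
    def __init__(self, open_session: Callable[[str], LiveSession],
                 max_turns: int = 4, max_seconds: float = 90.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._open_session = open_session
        self._max_turns = max_turns
        self._max_seconds = max_seconds
        self._clock = clock
        self.current: ComedianSet | None = None

    def bring_on(self, comedian: str) -> None:
        """Destroy the previous comedian's session and open a fresh one (new voice)."""
        self.end_set()
        session = self._open_session(comedian)
        self.current = ComedianSet(comedian, session, self._clock(),
                                   self._max_turns, self._max_seconds)

    def forward(self, chunk: bytes) -> None:
        """Route a camera frame or mic chunk to whoever is on stage."""
        if self.current is not None:
            self.current.session.send(chunk)

    def on_turn_complete(self) -> bool:
        """Count a finished turn; return True if the set should end."""
        if self.current is None:
            return False
        self.current.turns += 1
        return self.current.is_over(self._clock())

    def end_set(self) -> None:
        if self.current is not None:
            self.current.session.close()
            self.current = None
```

Injecting the clock keeps the time limit testable without real waiting.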

Photo Roast pipeline: Gemini 2.5 Flash Lite analyzes the selfie and extracts comedy material. A second call generates an 8-12 line alternating script for two randomly selected personas. Gemini Multi-Speaker TTS renders the script as a single WAV file with two distinct voices.
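The Gemini multi-speaker TTS model reads a transcript in which each line is prefixed with a speaker name matching one of its configured speaker voices, and, per the Gemini API speech-generation docs, returns raw 16-bit mono PCM at 24 kHz that needs a WAV header. A minimal sketch of the two local steps around the API call (which is omitted here), assuming the script is held as hypothetical `(speaker, line)` tuples:

```python
import io
import wave

TTS_SAMPLE_RATE_HZ = 24_000  # assumption: Gemini TTS returns 24 kHz mono PCM16


def build_duo_transcript(script: list[tuple[str, str]]) -> str:
    """Turn [(speaker, line), ...] into a 'Speaker: line' transcript for multi-speaker TTS."""
    if not 8 <= len(script) <= 12:
        raise ValueError(f"expected 8-12 lines, got {len(script)}")
    if len({speaker for speaker, _ in script}) != 2:
        raise ValueError("a duo script needs exactly two speakers")
    for (prev, _), (cur, _) in zip(script, script[1:]):
        if prev == cur:  # the script must strictly alternate
            raise ValueError(f"{cur} speaks twice in a row")
    return "\n".join(f"{speaker}: {line}" for speaker, line in script)


def pcm_to_wav(pcm: bytes, sample_rate: int = TTS_SAMPLE_RATE_HZ) -> bytes:
    """Wrap raw 16-bit mono PCM in a WAV container."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(sample_rate)
        wav.writeframes(pcm)
    return buf.getvalue()
```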

SNS Roast pipeline: The user uploads a photo (e.g., a screenshot of an SNS profile or feed). Gemini Flash analyzes it and generates a short comedy script (40-55 words) in the selected persona's style. Veo 3.1 generates an initial 8-second clip, then chains up to 2 extensions using the original Veo file URI to produce roughly 22 seconds of video. I alternate between Veo model variants across extensions to distribute RPM quota.
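The "roughly 22 seconds" follows from 8 s + 2 × 7 s, assuming each Veo 3.1 extension adds about 7 seconds. A minimal sketch of planning the chain with model variants alternated step by step; the model names here are placeholders, not real model IDs.

```python
from itertools import cycle

INITIAL_CLIP_S = 8    # single-generation limit
EXTENSION_CLIP_S = 7  # assumption: each Veo 3.1 extension adds ~7 s


def plan_veo_chain(models: list[str], extensions: int = 2) -> list[tuple[str, str]]:
    """Assign a model variant to each generation step, alternating to spread RPM quota."""
    if not models:
        raise ValueError("need at least one model variant")
    steps = ["initial"] + [f"extension {i + 1}" for i in range(extensions)]
    return list(zip(steps, cycle(models)))  # zip stops after the last step


def estimated_length_s(extensions: int) -> int:
    return INITIAL_CLIP_S + EXTENSION_CLIP_S * extensions
```

With two placeholder variants, the plan is `initial` on the first, `extension 1` on the second, and `extension 2` back on the first, so no single model sees every request in a chain.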

Persona system: 10 comedian personas defined in a central registry (personas.py). Each has a unique stage name, comedy style, voice, color, icon, and full system prompts for both roast and boost modes. Every show randomly selects from this pool, so no two shows are exactly alike.

Deployment: Docker multi-stage build (Node.js for frontend, Python for backend), deployed to Google Cloud Run with API keys managed through Google Cloud Secret Manager. Deployment automation is included: a deploy.sh script and a GitHub Actions workflow (deploy.yml).

Challenges I ran into

One voice per Live API session. The Gemini Live API assigns a single voice to each session and cannot change it mid-conversation. To give each comedian a unique voice, I had to build a session-switching system. The ShowDirector creates and destroys independent Live API sessions for each comedian while maintaining the show's continuity by forwarding the same camera and microphone streams.

FunctionTool not working on the 12-2025 model. I initially planned to use ADK FunctionTool for the MC to programmatically call comedians on stage. This works on the 09-2025 native audio model, but on 12-2025, the model generates thinking text about calling tools without ever emitting an actual function call. I switched to natural language text marker detection instead: the MC says the comedian's name, and a dynamic regex on the transcription text triggers the session switch. This ended up being more reliable anyway.

Veo extension URI handling. Veo 3.1 extension requires the original file URI that Veo generated. The download URI (with the :download?alt=media suffix) does not work. Re-uploading via the Files API also fails because the Veo metadata is lost. I had to strip the suffix and pass the exact original URI. This was not documented, and I found it through trial and error.
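The suffix stripping is a one-liner; this sketch uses a made-up file ID in the example URI, and the helper name is hypothetical.

```python
DOWNLOAD_SUFFIX = ":download?alt=media"


def extension_source_uri(uri: str) -> str:
    """Recover the original Veo file URI from a download URI (no-op if already original)."""
    return uri.removesuffix(DOWNLOAD_SUFFIX)  # str.removesuffix needs Python 3.9+
```

For example, `https://generativelanguage.googleapis.com/v1beta/files/abc123:download?alt=media` becomes `https://generativelanguage.googleapis.com/v1beta/files/abc123`, and an already-clean URI passes through unchanged.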

Gemini model version differences for comedy. I initially used gemini-3.1-flash-lite-preview for text generation, but found its RAI filter was too conservative for roast comedy. Switching to gemini-2.5-flash-lite produced noticeably funnier and more daring observational roasts while still respecting the safety guardrails I defined (no body-type, skin color, disability, or sexual references).

Comedy tone calibration across multiple iterations. Making AI comedy funny required many rounds of prompt tuning. Generic instructions like "be funny" produce generic results. I ended up specifying concrete comedy techniques per persona (misdirection setups, callback stacking, deadpan delivery) and went through several rounds of adjusting the roast intensity from the initial 70/30 roast/praise ratio up to 95/5.

429 rate limits with multiple concurrent sessions. Running an MC session plus comedian sessions means multiple simultaneous Gemini API calls. I implemented a dual-key fallback: if the primary API key hits a 429, the request automatically retries with a secondary key. Veo uses a round-robin approach across all available clients.
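A minimal sketch of both strategies. In the google-genai SDK a 429 surfaces as an API error carrying that status code; here a hypothetical `RateLimited` exception stands in for it, and `call` is any function that performs one request with a given key.

```python
import itertools
from typing import Callable, Generic, TypeVar

T = TypeVar("T")
C = TypeVar("C")


class RateLimited(Exception):
    """Hypothetical stand-in for the SDK error raised on HTTP 429."""


def with_key_fallback(call: Callable[[str], T], primary_key: str, secondary_key: str) -> T:
    """Try the primary key; on a 429, retry the same request once with the secondary key."""
    try:
        return call(primary_key)
    except RateLimited:
        return call(secondary_key)


class RoundRobin(Generic[C]):
    """Hand out clients in turn, spreading requests (e.g. Veo jobs) across them."""

    def __init__(self, clients: list[C]) -> None:
        if not clients:
            raise ValueError("need at least one client")
        self._cycle = itertools.cycle(clients)

    def pick(self) -> C:
        return next(self._cycle)
```

The fallback only retries on rate-limit errors, so other failures still propagate to the caller.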

Accomplishments I'm proud of

Bidirectional voice conversation with multiple AI personas in a single show. Each comedian has their own Live API session with a unique voice and personality. Users can talk back, and the comedian responds to what they say in real time.

10 comedian personas with distinct comedy styles. Each persona uses specific comedy techniques (precision strikes, weaponized dad jokes, self-deprecation that flips into attacks, deadpan intellectual wit, surrealist non-sequiturs, etc.) rather than generic "be funny" instructions.

Veo extension chaining for longer comedy videos. Scripts are split into word-count-based segments, and Veo generation calls are chained to produce videos beyond the 8-second single-clip limit. Failed extensions fall back to the last successful clip.
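A minimal sketch of word-count segmentation plus fallback chaining, with `generate` and `extend` as hypothetical callables wrapping the actual Veo requests. The near-equal split is one reasonable reading of "word-count-based segments", not necessarily the exact rule the app uses.

```python
from typing import Callable, TypeVar

Clip = TypeVar("Clip")


def split_by_words(script: str, parts: int) -> list[str]:
    """Split a script into `parts` consecutive segments of near-equal word count."""
    words = script.split()
    if parts < 1 or len(words) < parts:
        raise ValueError("need at least one word per segment")
    base, extra = divmod(len(words), parts)
    segments, start = [], 0
    for i in range(parts):
        size = base + (1 if i < extra else 0)  # spread the remainder over the first segments
        segments.append(" ".join(words[start:start + size]))
        start += size
    return segments


def chain_clips(segments: list[str],
                generate: Callable[[str], Clip],
                extend: Callable[[Clip, str], Clip]) -> Clip:
    """Generate the first clip, then extend it; on any failure keep the last good clip."""
    clip = generate(segments[0])  # the initial clip must succeed
    for segment in segments[1:]:
        try:
            clip = extend(clip, segment)
        except Exception:
            break  # fall back to the last successful clip
    return clip
```

For a 50-word script split three ways (initial clip plus two extensions), the segments get 17, 17, and 16 words.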

Session-switching architecture that turns a constraint into a feature. The one-voice-per-session limitation led to each comedian having their own independent Live API session, which actually makes them sound more distinct and natural.

What I learned

ADK's InMemorySessionService uses deepcopy internally. The session object returned from `create_session()` is a deep copy, not a reference, so modifying it after creation does not affect the stored session. I had to pass all necessary state in the initial `create_session()` call.

Natural language detection can beat formal FunctionTool. Having the MC say a comedian's name and detecting it via regex was more reliable than FunctionTool, especially across model versions where tool-calling behavior differs.

Model version matters for comedy quality. Gemini 3.1 Flash Lite's more conservative RAI filtering made roasts too tame. 2.5 Flash Lite hit the right balance of funny and safe for this use case.

Dual-key API rotation handles rate limits well. A simple primary/fallback pattern with automatic 429 detection covers most rate limit situations without complex queuing.

Comedy prompt engineering is specific. Each persona needed concrete comedy techniques, example patterns, and delivery instructions. The difference between "roast them" and "start with something small you see, blow it up to absurd proportions, then stack 2-3 more jokes on the same target" is the difference between generic and funny.

What's next for GRL: Gemini Roast LIVE

- Podcast roast mode: Two comedian agents having a freeform conversation about the user, like a comedy podcast. The user could jump in at any point, and the comedians would react and riff off the interruption (just like in NotebookLM). This requires multi-voice support within a single Live API session; if that becomes available, it would open up a much more dynamic group conversation format.

- Direct SNS URL fetching: Currently, SNS Roast works with uploaded photos. The next step is direct URL input, where the app automatically fetches profile images and feed posts from Instagram, Facebook, and other platforms via oEmbed APIs. The comedians would then roast not just how you look, but what you post, what you caption, and the patterns in your feed.

- Social sharing of generated roast videos and audio clips

- Multi-language comedian personas

Built With

- fastapi

- gemini-2.5-flash

- gemini-live-api

- gemini-multi-speaker-tts

- github-action

- google-adk

- google-cloud-run

- google-cloud-secret-manager

- google-genai-sdk

- python

- react

- typescript

- veo-3.1
