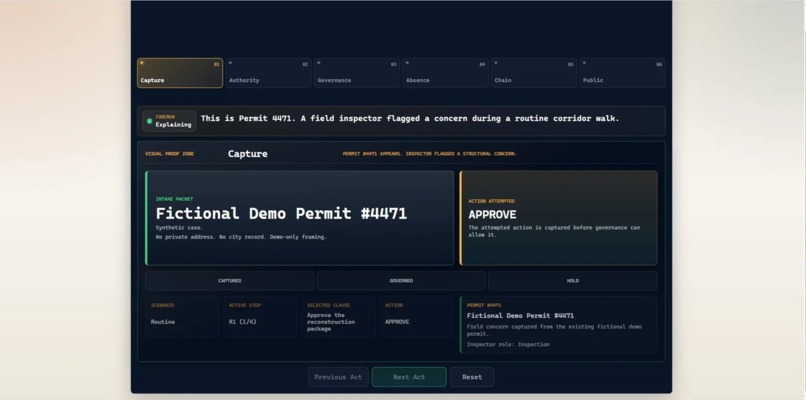

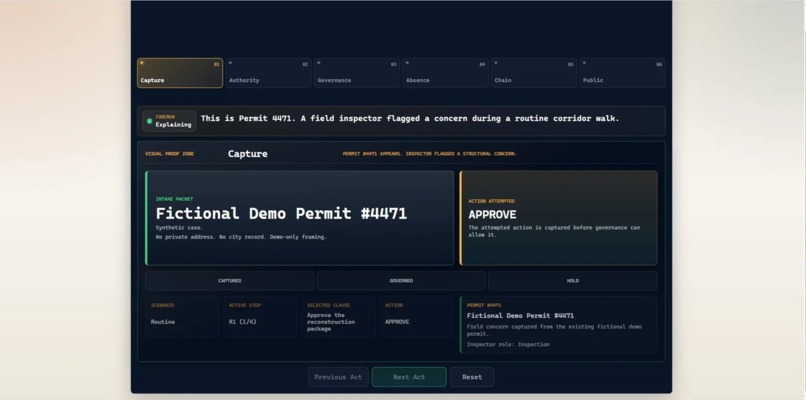

Capture — Permit #4471 arrives. The AI attempted to approve. Meridian captures the action first.

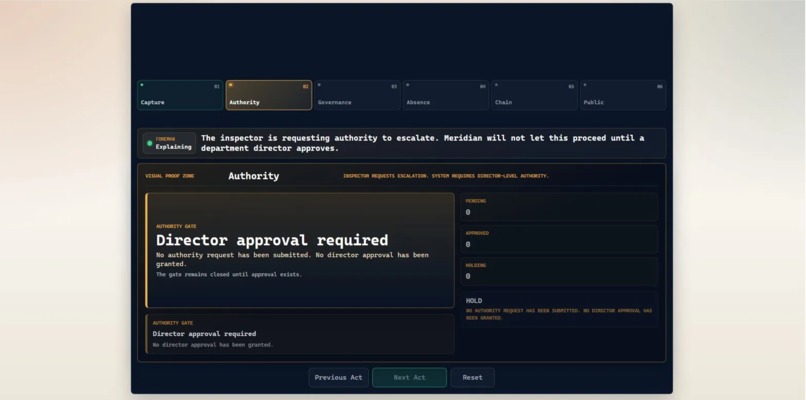

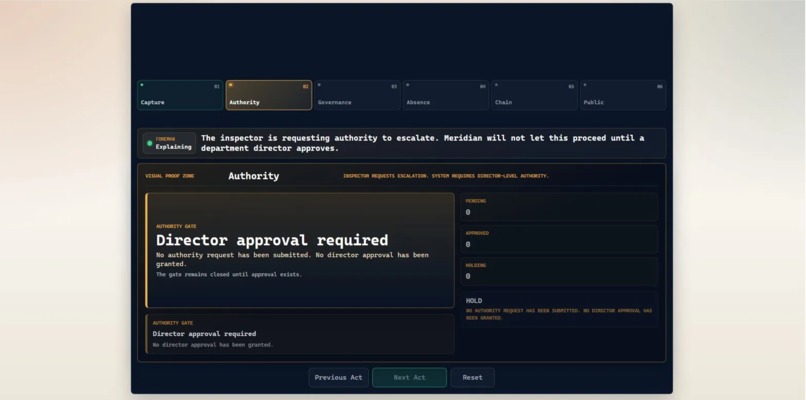

Authority — Director approval required. No request submitted. The gate stays closed.

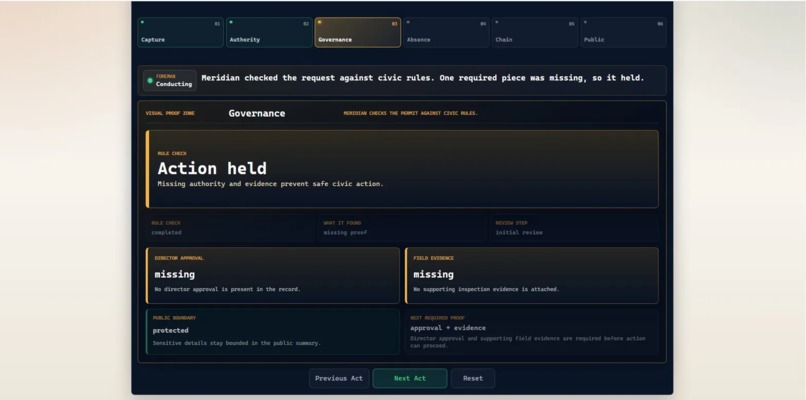

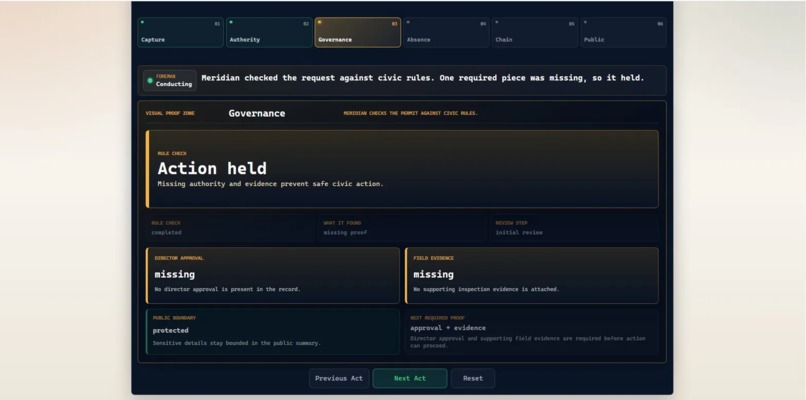

Governance — Deterministic engines find two missing requirements. The action is held.

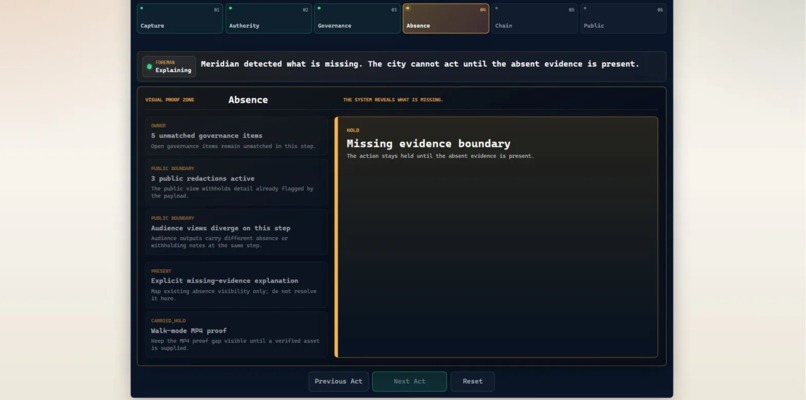

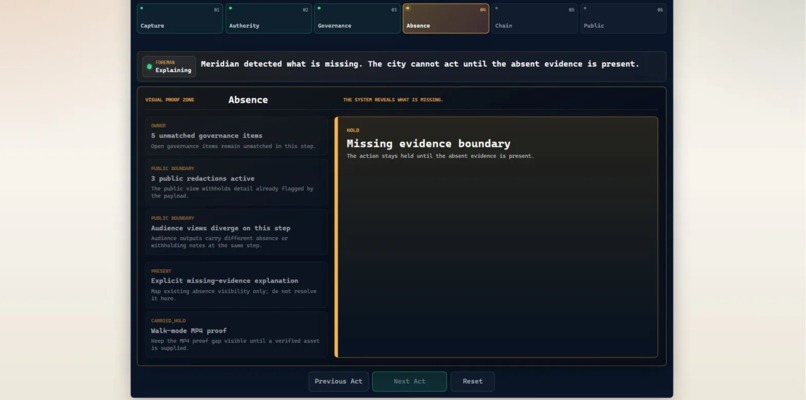

Absence — The system reveals what is missing. The HOLD blocks action until proof exists.

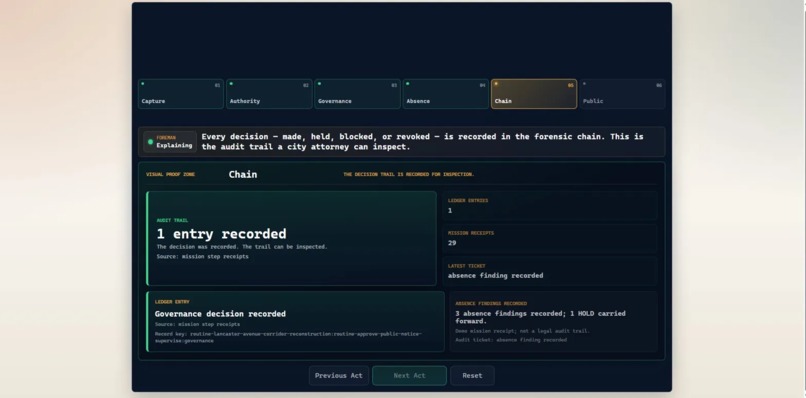

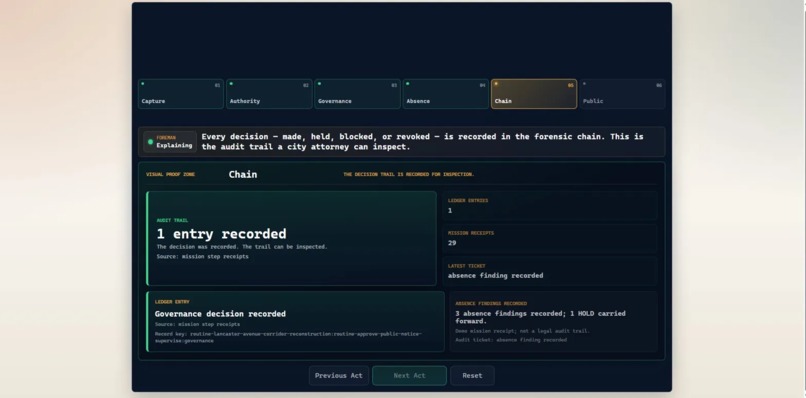

Chain — Every decision is recorded. The audit trail is inspectable.

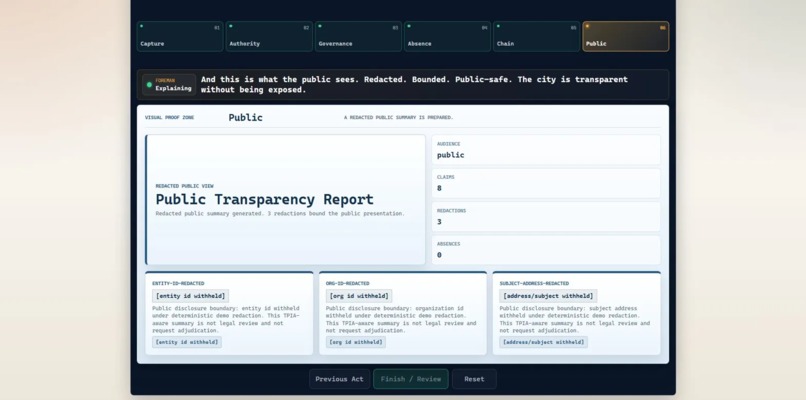

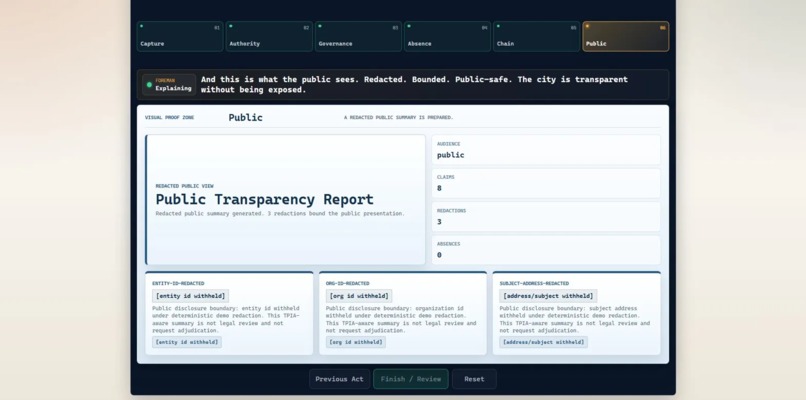

Public — A redacted transparency report. The city is accountable without being exposed.

Inspiration

I spent five years as a construction project manager in Texas. Every week I watched the same failure pattern: decisions moved forward before the authority, evidence, or scope was clear. In construction, a confident wrong answer can become a $40,000 liability.

AI makes that same failure mode faster.

A model can summarize a meeting, draft an approval, or recommend an action. But in the real world, especially around permits, inspections, infrastructure, and public records, the question is not just whether AI can produce an answer.

The question is whether it is allowed to act.

That is the thread behind Meridian.

HoldPoint captures decisions and risks from construction meetings. My governance plugin puts deterministic enforcement underneath AI agents. Meridian brings that work into a civic permit scenario where authority, evidence, and public accountability have to come before action.

Meridian governs AI actions on city permits, refuses to proceed when authority or evidence is missing, and records every decision in an auditable chain.

The rule is simple:

No authority, no action. No evidence, no approval. Every decision recorded. Every hold explained.

What it does

Meridian is a governed civic intelligence pipeline. It captures, governs, records, and renders AI-assisted permit decisions.

Capture - AI ensemble pipelines, adapted from HoldPoint (a construction meeting intelligence system), ingest unstructured civic data: meeting transcripts, permit filings, inspection reports, field notes, and council sessions. The AI extracts structured decisions, risks, commitments, evidence status, and action items from raw civic input.

Govern - When an AI agent tries to act on captured intelligence - approving a permit, scheduling an inspection, escalating a concern - deterministic JavaScript governance engines evaluate the action against authority, evidence, and disclosure rules. No language model decides what is allowed. The engines decide. If required authority is missing, the action holds. If required evidence is missing, the action holds.

Record - Every governance decision is recorded in an append-only audit/proof chain: what was attempted, what was present, what was missing, and why the action was held.
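One way an append-only chain like this could work is to link each entry to the hash of the entry before it, so that any later edit is detectable. The sketch below is an illustration under that assumption, not Meridian's actual chain format; the function names, the `GENESIS` marker, and the record fields are hypothetical.

```javascript
const { createHash } = require("node:crypto");

// Hash the record together with the previous entry's hash.
// Note: JSON.stringify depends on key insertion order, which is stable
// for the same object but is a simplification for a real system.
function hashEntry(prevHash, record) {
  return createHash("sha256")
    .update(prevHash + JSON.stringify(record))
    .digest("hex");
}

// Return a new chain with the record appended; the input array is not mutated.
function appendRecord(chain, record) {
  const prevHash = chain.length ? chain[chain.length - 1].hash : "GENESIS";
  return [...chain, { record, prevHash, hash: hashEntry(prevHash, record) }];
}

// Recompute every link; an edited or reordered entry fails verification.
function verifyChain(chain) {
  let prevHash = "GENESIS";
  for (const entry of chain) {
    if (entry.prevHash !== prevHash) return false;
    if (entry.hash !== hashEntry(prevHash, entry.record)) return false;
    prevHash = entry.hash;
  }
  return true;
}

let chain = [];
chain = appendRecord(chain, {
  attempted: "approve_permit",
  permitId: "4471",
  present: [],
  missing: ["director authority", "structural evidence"],
  decision: "HOLD",
});
chain = appendRecord(chain, {
  attempted: "render_public_report",
  permitId: "4471",
  decision: "ALLOW",
});

console.log(verifyChain(chain)); // true
```

A reviewer who changes an earlier record without recomputing every later hash would cause `verifyChain` to return `false`, which is the property that makes the trail inspectable.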

Render - Different roles see different views of the same governance truth. An inspector sees inspection context. A director sees authority and approval status. A council member reviews civic status. The public sees a redacted transparency report with sensitive details withheld.
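A minimal sketch of role-aware rendering, assuming each role maps to an allow-list of fields. The role names follow the description above; the field names, the `VIEW_FIELDS` table, and `renderView` are hypothetical and only illustrate the idea of one record with several bounded views.

```javascript
// Hypothetical allow-lists: each role sees only the fields named here.
const VIEW_FIELDS = {
  inspector: ["permitId", "decision", "inspectionNotes"],
  director: ["permitId", "decision", "missing", "approvalStatus"],
  council: ["permitId", "decision", "missing"],
  public: ["permitId", "decision"],
};

// Copy only allowed fields into the view. Unknown roles fall back to
// the most restricted (public) view rather than the full record.
function renderView(record, role) {
  const allowed = VIEW_FIELDS[role] ?? VIEW_FIELDS.public;
  const view = {};
  for (const field of allowed) {
    if (field in record) view[field] = record[field];
  }
  return view;
}

const record = {
  permitId: "4471",
  decision: "HOLD",
  missing: ["director authority", "structural evidence"],
  approvalStatus: "not requested",
  inspectionNotes: "Structural concern flagged during corridor walk.",
};

console.log(renderView(record, "public")); // { permitId: '4471', decision: 'HOLD' }
```

Using an allow-list instead of a block-list means a newly added internal field stays hidden from the public view until someone deliberately exposes it.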

The demo applies this pipeline to a fictional Fort Worth-style permit scenario: Permit #4471. A field inspector flags a structural concern during a corridor reconstruction walk. An AI agent tries to approve the reconstruction package. Meridian stops the action because two required conditions are missing:

- director-level authority has not been granted;

- structural evidence has not been supplied.

The demo walks through that refusal in six acts:

Act 1: Capture - The permit concern and attempted action enter the system.

Act 2: Authority - The system checks who is allowed to approve. Director-level approval is required and has not been granted.

Act 3: Governance - Deterministic engines evaluate the action. Two requirements are missing: director approval and structural evidence. The action is held.

Act 4: Absence - The system goes quiet and shows what is missing. Missing authority and missing evidence become structured HOLDs that block the action.

Act 5: Chain - The governance decision is recorded in the audit/proof chain. A reviewer can inspect what happened, when, and why.

Act 6: Public - The system produces a public-safe view. Sensitive details stay protected. The public sees the shape of the decision without seeing the internal proof.

An AI guide called the Foreman walks through the six acts with voice narration. The Foreman explains the record in plain language, but it does not create governance truth or override the deterministic engines.

How I built it

Meridian is built around a clean separation:

AI captures and explains. Deterministic engines govern.

The capture layer uses AI ensemble pipelines adapted from HoldPoint, a construction meeting intelligence system I built. The AI ingests unstructured civic data and extracts structured decisions, risks, and action items. This is where the ML/AI processing lives.

The governance layer is deterministic JavaScript. No language model decides what is allowed. If authority is missing, the action holds. If evidence is missing, the action holds. If the public view needs redaction, internal proof and public disclosure stay separated.
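To make the idea concrete, here is a rough sketch of what a deterministic check of this kind could look like. It is not Meridian's actual engine: the `RULES` table, the action shape, and the `evaluateAction` function are illustrative assumptions.

```javascript
// Hypothetical rule table: the authority and evidence each action type requires.
const RULES = {
  approve_permit: {
    requiredAuthority: "director",
    requiredEvidence: ["structural_report"],
  },
};

// Pure function: the same input always yields the same decision.
// No language model is consulted anywhere in this path.
function evaluateAction(action) {
  const rule = RULES[action.type];
  if (!rule) {
    // Unknown action types are held rather than allowed by default.
    return { decision: "HOLD", missing: [{ kind: "rule", detail: action.type }] };
  }

  const missing = [];
  if (!action.grantedAuthority.includes(rule.requiredAuthority)) {
    missing.push({ kind: "authority", detail: rule.requiredAuthority });
  }
  for (const item of rule.requiredEvidence) {
    if (!action.evidence.includes(item)) {
      missing.push({ kind: "evidence", detail: item });
    }
  }

  return { decision: missing.length === 0 ? "ALLOW" : "HOLD", missing };
}

// The demo scenario: an approval attempt with no authority and no evidence.
const attempt = {
  type: "approve_permit",
  permitId: "4471",
  grantedAuthority: [],
  evidence: [],
};
const result = evaluateAction(attempt);
console.log(result.decision, result.missing.length); // HOLD 2
```

Because the function only reads its input and a static rule table, the decision can be replayed and unit-tested without any model in the loop.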

The dashboard is a React/Vite application deployed on Vercel. It includes a guided six-act walkthrough, role-aware Auth0 demo proof, a Foreman guide with voice narration, authority handoff, absence visualization, proof spotlighting, audit-chain views, and a public disclosure preview.

The Foreman guide uses ElevenLabs for voice narration, speaking in the builder's cloned voice through a serverless endpoint. Voice transport is opt-in only - the system is silent by default.

Development followed a governed relay workflow. Every meaningful change was scoped, executed, verified, and closed out. The repo has 600+ dashboard tests, 700+ repo-wide JavaScript tests, and 30+ governed closeouts documenting what shipped, what did not ship, and what remains intentionally out of scope.

Meridian is not presented as a production city system. It is a deployed proof cockpit that demonstrates how AI-assisted civic decisions can be governed before they are allowed to move forward.

The operating difference

Meridian focuses on a narrow but important question:

Should this AI action be allowed at all?

If the authority is missing, the action holds. If the evidence is missing, the action holds. If the public view needs redaction, the disclosure is bounded. If the decision is challenged, the proof chain remains inspectable.

Meridian treats missing information as a first-class governance signal.

A missing approval is not just a blank field. A missing inspection photo is not something AI should smooth over. Missing evidence becomes a structured HOLD that blocks action until the right proof exists.
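As an illustration of what "structured HOLD" could mean in code, the sketch below models a HOLD as a record that names the gap and stays blocking until a proof reference is attached. The field names and the `createHold`, `resolveHold`, and `isBlocked` helpers are hypothetical, not taken from Meridian's source.

```javascript
// Build a HOLD that names what is missing and explains the block.
function createHold(kind, requirement) {
  return {
    status: "HOLD",
    kind, // "authority" or "evidence"
    requirement,
    explanation: `Required ${kind} "${requirement}" is absent; the action cannot proceed.`,
    resolvedBy: null,
  };
}

// A HOLD only resolves when a proof reference is supplied.
// Without one, the original HOLD is returned unchanged.
function resolveHold(hold, proof) {
  if (!proof || !proof.reference) return hold;
  return { ...hold, status: "RESOLVED", resolvedBy: proof.reference };
}

// The action stays blocked while any HOLD is unresolved.
function isBlocked(holds) {
  return holds.some((h) => h.status === "HOLD");
}

let holds = [
  createHold("authority", "director approval"),
  createHold("evidence", "structural report"),
];
console.log(isBlocked(holds)); // true

// Supplying proof for only one gap is not enough to unblock the action.
holds = [resolveHold(holds[0], { reference: "approval-record-001" }), holds[1]];
console.log(isBlocked(holds)); // true
```

The point of the shape is that a gap is represented explicitly, with its own explanation, instead of being an empty field that downstream code might silently ignore.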

That is the system's main behavior: not just producing an answer, but refusing to proceed when the required conditions are absent.

Challenges I ran into

The hardest challenge was making the engineering legible.

Meridian has deep governance logic underneath it: deterministic engines, authority checks, absence detection, audit-chain records, role-aware views, disclosure boundaries, a Foreman guide, scenario playback, and a large test floor. Early versions looked too much like an internal engineering control room.

A judge does not have time to decode an engineering wall.

So the final build challenge was turning the system into a clean story:

A permit. A risky action. Missing authority. Missing evidence. A refusal. A proof chain. A public-safe explanation.

That became the six-act walkthrough.

Voice integration was also difficult. The Foreman needed to speak only during the guided mission and stay silent everywhere else. Getting that right required multiple patches around audio source handling, playback timing, and voice transport boundaries.
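The gating rule itself can be stated in a few lines. This is a sketch of the condition only, with hypothetical flag names; it does not show the audio or ElevenLabs integration, which is where the actual difficulty was.

```javascript
// Silent by default: both flags must be explicitly true before any audio plays.
function shouldSpeak({ voiceOptIn = false, guidedMissionActive = false } = {}) {
  return voiceOptIn === true && guidedMissionActive === true;
}

console.log(shouldSpeak()); // false
console.log(shouldSpeak({ voiceOptIn: true })); // false
console.log(shouldSpeak({ voiceOptIn: true, guidedMissionActive: true })); // true
```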

The final challenge was building alone. There was no team sitting next to me to gut-check every architecture choice at 2 AM. I used different AI models for strategy, implementation, and validation, with gates between each handoff so no single model could ship unchecked work.

What I learned

I learned that the most useful AI safety behavior is not always better generation. Sometimes it is governed refusal.

AI systems are good at producing plausible output. Civic workflows need more than plausible output. They need the right authority, the right evidence, the right record, and the right public boundary.

I also learned that missing information can be treated as a computable signal.

When required evidence is absent, the system should not pretend the gap is harmless. It should name the gap, explain why guessing is risky, and block the action until the gap is resolved.

In Meridian, the HOLD is not a bug. It is the product.

What's next for Meridian

The long-term goal is simple:

Cities will use AI. Meridian makes sure AI does not act without authority, evidence, and a public record.

The AI tried to act. Meridian refused. The Foreman explained why. The chain proves it. The city is safer.

Built With

- auth0

- css

- elevenlabs

- javascript

- nats

- node.js

- openai

- react

- vercel

- vite
