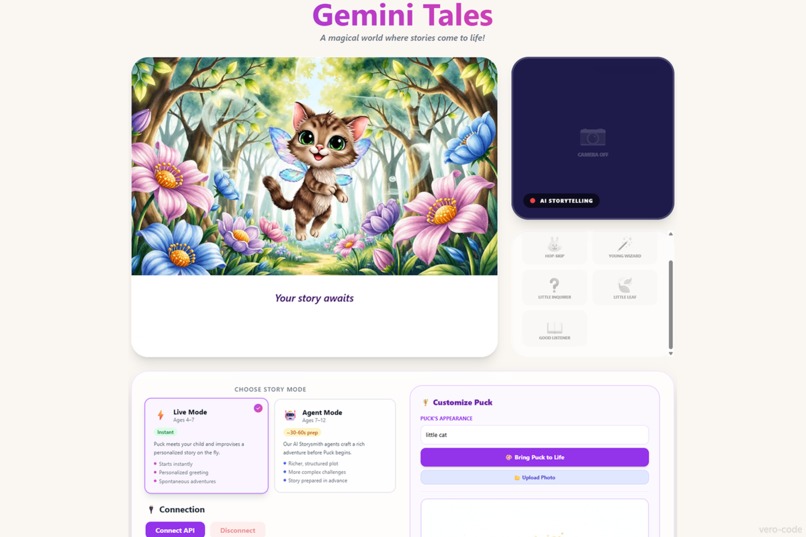

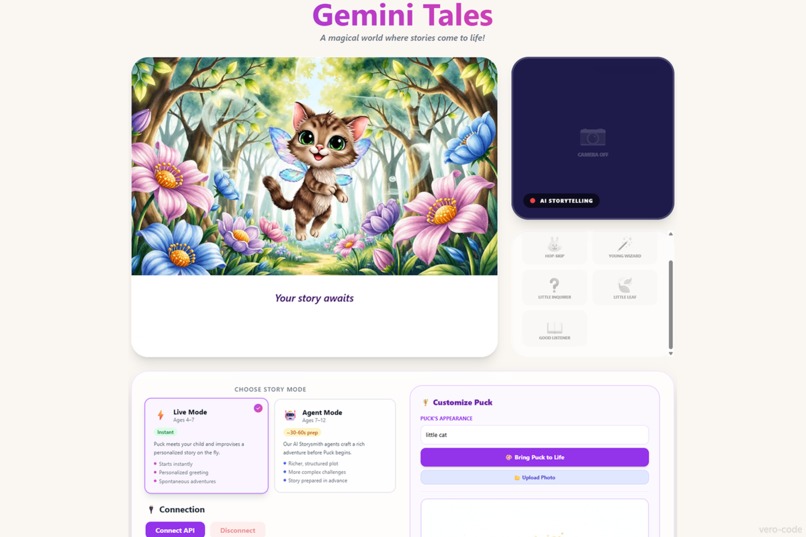

Magic Mirror Control Center: A unified React 19 dashboard managing real-time Gemini Live sessions, multimodal device inputs, and progress.


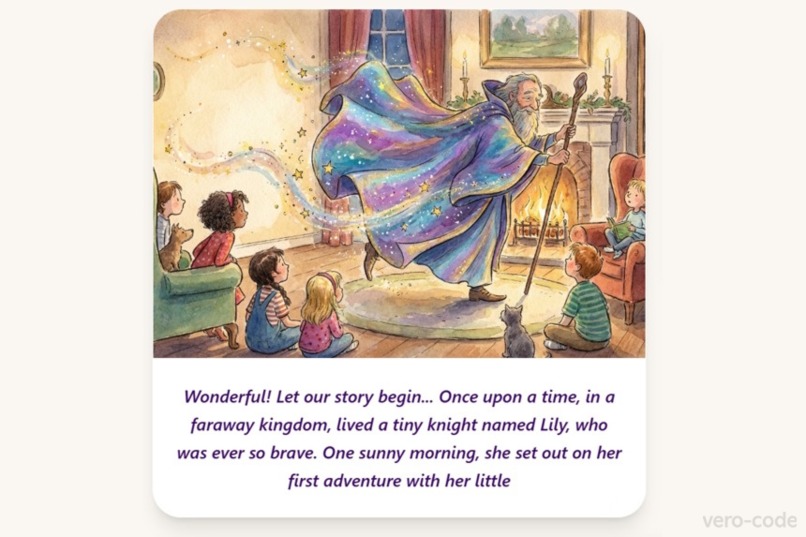

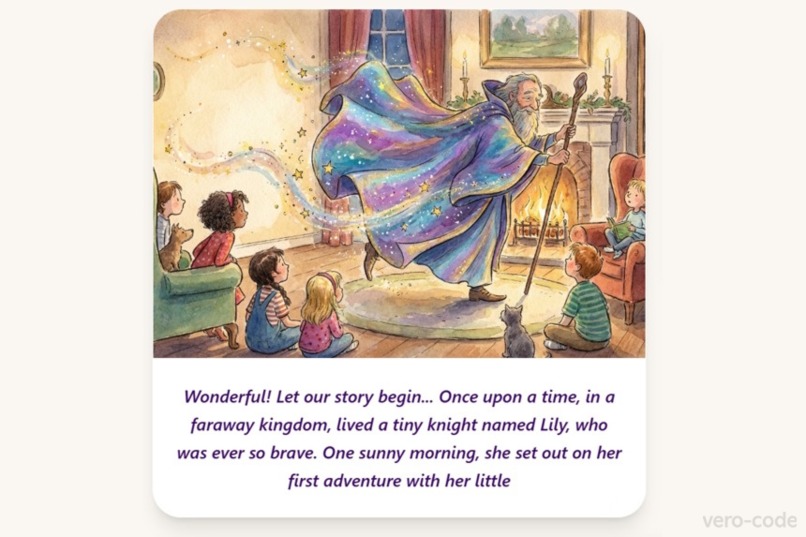

Seamless Interleaved Output: High-fidelity watercolor scene generation that evolves dynamically with the AI-driven narrative.


Immersive Visual Storytelling: Children embarking on a digital adventure with high-quality, scene-specific illustrations generated in real time.


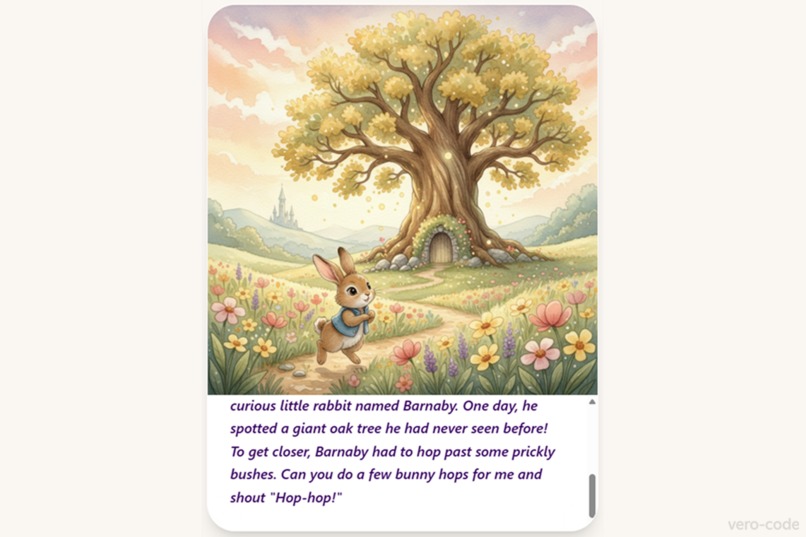

Movement-Driven Progression: Puck assigns physical challenges and uses Gemini Vision at 1 FPS to verify completion before the story continues.


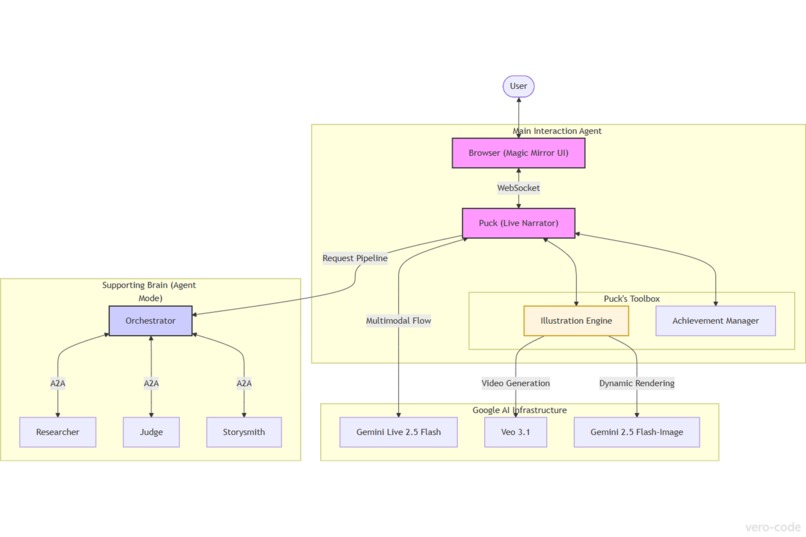

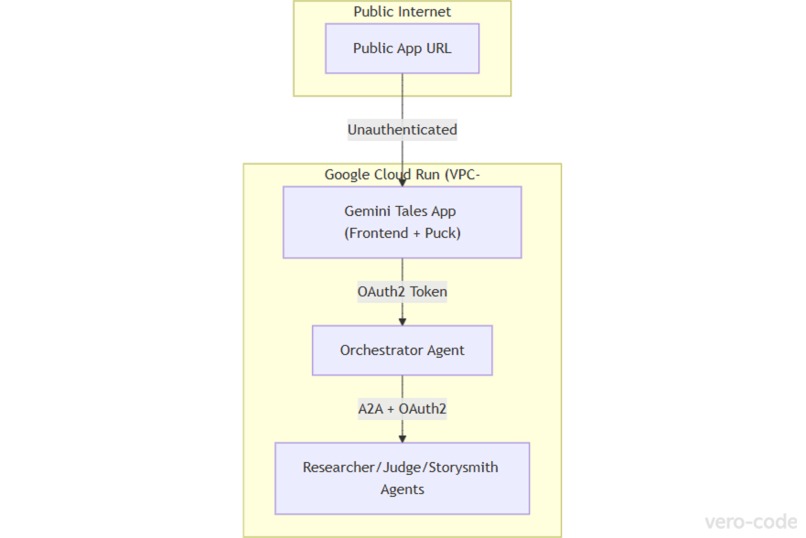

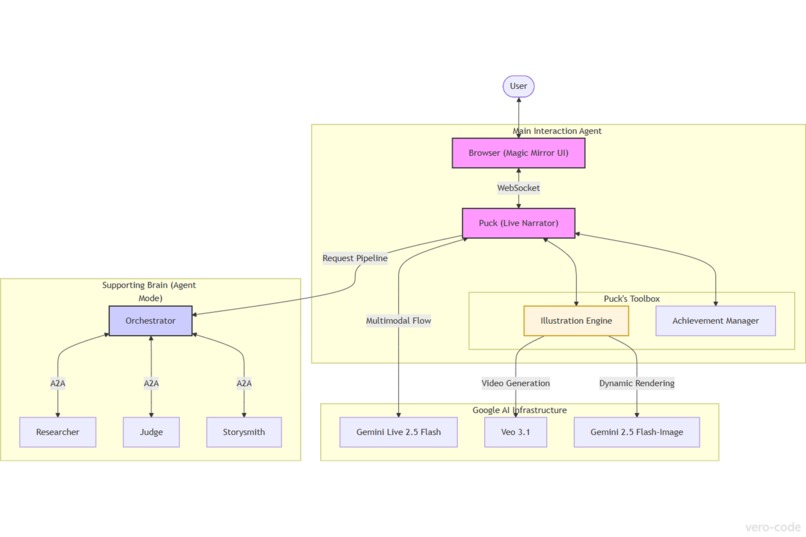

System Design: A multi-agent orchestration layer (ADK) that connects Gemini Live, which handles interaction, with specialized agents via the A2A protocol.


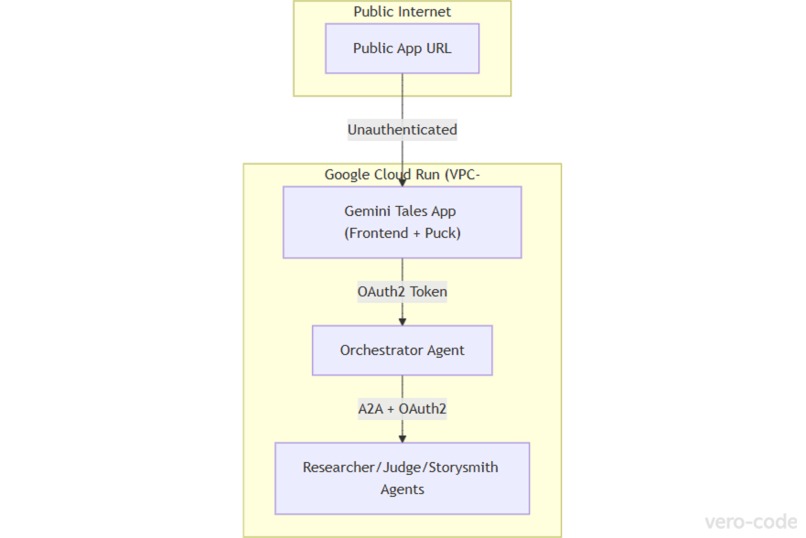

Production Infrastructure: A containerized microservice architecture deployed on Google Cloud Run with OAuth2-secured communication.


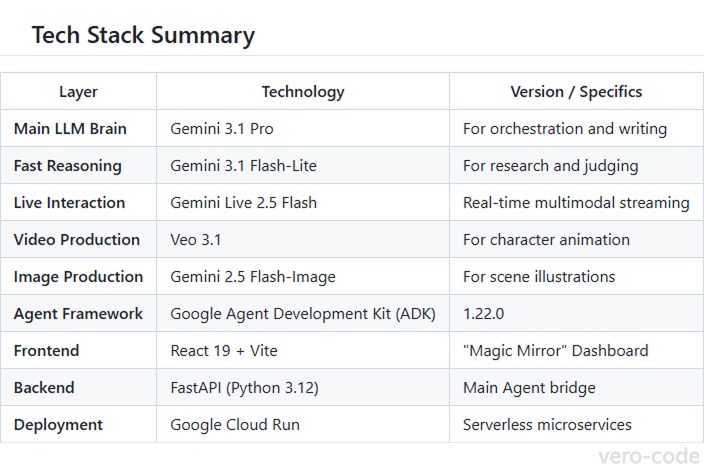

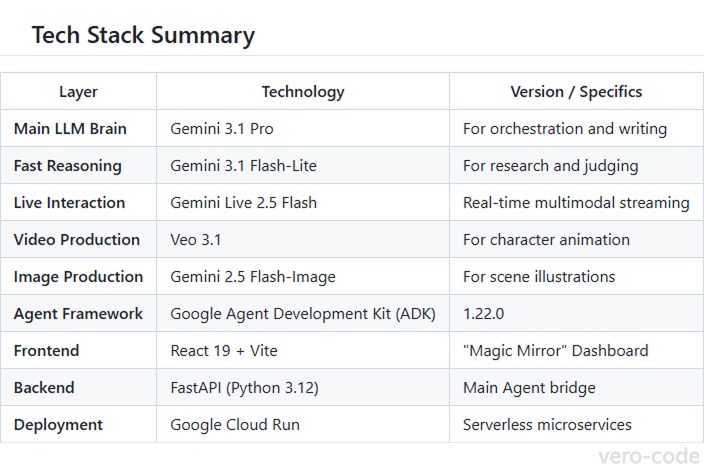

Cutting-edge Stack: Leveraging Gemini 3.1 Pro for reasoning, Gemini Live for multimodal streaming, and Veo 3.1 for character animation.

Inspiration

The Problem: 80% of children today don't move enough. As physical activity drops, technology is often blamed. My Solution: I use technology to solve the problem it created. Gemini Tales doesn't just tell stories — it asks children to ACT, transforming the living room into a magical quest where progress is powered by movement.

Kids spend hours passively consuming content. We wanted to flip that — what if the story literally couldn't continue unless the child got up and moved? That question became Gemini Tales: an AI nanny that doesn't just tell stories, it watches, listens, and waits for the child to act.

The most rewarding moment of the entire build? Seeing a child leap off the couch when the AI asked them to "show me how you jump." Screen time transformed from sedentary consumption into active play.

Technology is often the villain in this story. But what if it could be the hero? Gemini Tales is built on a simple belief: with the right architecture and intention, AI can entertain, engage, activate, and educate — all at once.


What it does

Gemini Tales is entered in the Creative Storyteller ✍️ category. It is built around Gemini's native interleaved output: voice narration, watercolor illustrations, and vision-based verification woven into a single, uninterrupted stream. There is no "turn"; the experience is continuous and context-aware.

Gemini Tales is an interactive fairytale experience built around Puck — an AI storyteller that breaks the traditional "text box" paradigm. It offers two modes:

Live Mode: Puck improvises a story in real time using Gemini Live 2.5 Flash, reacting to the child's voice and camera feed with low latency.

Agent Mode: A background multi-agent pipeline (Researcher → Judge → Storysmith) pre-generates a researched, safety-checked narrative. Puck then narrates it live with an expressive voice and auto-generated watercolor illustrations.

Key mechanics:

- Stories only begin when Puck sees the Magic Sign (two fingers up via camera)

- Puck issues physical challenges ("hop like a bunny") and verifies completion via Gemini Vision before continuing; simply saying "I did it" cannot bypass the check (anti-cheat)

- Children earn badges for completing physical tasks

- Upload a photo → get transformed into a watercolor fairytale character (Gemini multimodal likeness transfer)

- Characters are animated with Veo 3.1 cinematic previews
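The "story only moves when the child moves" rule can be read as a small state machine. Below is a minimal sketch, assuming the vision model reports a recognized action as a plain string. The names ChallengeGate, on_voice, and on_vision are hypothetical. In the real app Puck's own tools (do_physical_exercise, awardBadge) and Gemini Vision make these decisions.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import List, Optional


class Phase(Enum):
    NARRATING = auto()      # story is flowing
    AWAITING_MOVE = auto()  # story is paused until a movement is seen


@dataclass
class ChallengeGate:
    """Hypothetical gate: the story resumes only on visual confirmation."""
    phase: Phase = Phase.NARRATING
    challenge: Optional[str] = None
    badges: List[str] = field(default_factory=list)

    def assign(self, challenge: str) -> None:
        # Pause narration and remember which movement we are waiting for.
        self.phase = Phase.AWAITING_MOVE
        self.challenge = challenge

    def on_voice(self, transcript: str) -> bool:
        # A spoken claim ("I did it!") never unlocks the story by itself.
        return False

    def on_vision(self, detected_action: str) -> bool:
        # Only the requested action, seen on camera, resumes the story
        # and earns a badge (assumed exact-match on an action label).
        if self.phase is Phase.AWAITING_MOVE and detected_action == self.challenge:
            self.badges.append(f"badge:{self.challenge}")
            self.phase = Phase.NARRATING
            self.challenge = None
            return True
        return False
```

Keeping the voice path as a hard False makes the anti-cheat rule explicit rather than relying on the prompt alone.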

How I built it

This project started as a journey through the Build Multi-Agent Systems with ADK track. Next, I finished the Way Back Home series, featured in the official resources of the Gemini Live Agent Challenge. Last but not least came the official Build your agent with ADK tutorial. I took the core architectural patterns from all three and pivoted toward something bigger.

Frontend: React 19 + TypeScript + Vite + Tailwind CSS — a "Magic Mirror" dashboard with real-time device management, dual-stream chat, and a debug console.

Main Agent (Puck) is a Google ADK Agent with its own tool set: say_hello, do_physical_exercise, draw_story_scene, and awardBadge.

Puck runs on Gemini Live with native audio and receives the live video feed. It uses these tools to greet the child, verify the Magic Sign visually, trigger watercolor scene illustrations, and assign physical challenges. It awards badges only when it sees the action completed on camera, not merely when it hears the child claim it. The FastAPI layer handles WebSocket proxying and OAuth2 token management for the Vertex AI connection, but Puck's behavior is fully agent-driven, not scripted.
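The proxy's core job is to pass frames through untouched in both directions while credentials stay on the server. Below is a minimal sketch of that pattern, assuming each side is exposed as an async recv callable, which returns None when the socket closes, and an async send callable. The names pump and proxy are hypothetical. In the real service these callables would wrap the FastAPI WebSocket and the upstream Live API connection.

```python
import asyncio
from typing import Awaitable, Callable, Optional, Union

Frame = Union[bytes, str]  # binary audio/video chunks or JSON text frames
Recv = Callable[[], Awaitable[Optional[Frame]]]
Send = Callable[[Frame], Awaitable[None]]


async def pump(recv: Recv, send: Send) -> int:
    """Forward frames one way until the source closes (recv returns None)."""
    forwarded = 0
    while (frame := await recv()) is not None:
        # Frames are forwarded as-is, so bytes stay bytes and text stays text;
        # the proxy never needs to parse the Live API protocol.
        await send(frame)
        forwarded += 1
    return forwarded


async def proxy(client_recv: Recv, client_send: Send,
                upstream_recv: Recv, upstream_send: Send) -> tuple:
    # Run both directions concurrently and report frames forwarded per direction.
    return tuple(await asyncio.gather(
        pump(client_recv, upstream_send),   # browser -> Gemini Live
        pump(upstream_recv, client_send),   # Gemini Live -> browser
    ))
```

A production proxy would also cancel the opposite direction and close both sockets when either side disconnects. That cleanup is omitted here.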

Multi-agent Brain (Google ADK):

- Adventure Seeker (gemini-3.1-flash-lite + google_search): researches story facts and lore

- Guardian of Balance (gemini-3.1-flash-lite): evaluates safety and physical activity density

- Storysmith (gemini-3.1-pro): writes the final Markdown narrative

- Orchestrator (SequentialAgent + custom EscalationChecker): coordinates the pipeline with a quality loop (research → judge → escalate or retry, up to 3 iterations)
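The quality loop's control flow is: research, judge, then either stop because the judge approved, or retry with the judge's feedback, at most three times. ADK's LoopAgent accepts a max_iterations limit and stops when a sub-agent emits an escalate action. The plain-Python sketch below mirrors that flow without depending on ADK. The Verdict shape ("pass"/"fail" plus feedback) and the function names are assumptions, not the project's actual schema.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    status: str          # assumed schema: "pass" or "fail"
    feedback: str = ""   # critique fed back to the researcher on "fail"


def run_story_pipeline(
    research: Callable[[str, Optional[str]], str],
    judge: Callable[[str], Verdict],
    write_story: Callable[[str], str],
    topic: str,
    max_iterations: int = 3,
) -> str:
    """Research -> judge loop, then hand the findings to the storysmith."""
    feedback: Optional[str] = None
    findings = ""
    for _ in range(max_iterations):
        findings = research(topic, feedback)
        verdict = judge(findings)
        if verdict.status == "pass":
            # The escalation checker's role: leave the loop once approved.
            break
        feedback = verdict.feedback  # retry, guided by the judge's critique
    # Assumption: if no attempt passes, the last findings still go to the writer,
    # which is what happens when a loop simply runs out of iterations.
    return write_story(findings)
```

Passing feedback back into research is what makes each retry different from the last, rather than a blind re-roll.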

Each of the cloud-deployed agents is structured around 5 fundamental prompt engineering patterns:

- Identity — who the agent is

- Mission — what it exists to accomplish

- Methodology — how it approaches its task

- Boundaries — what it must never do

- Few-shot examples — concrete input/output pairs to anchor behavior

This pattern made each agent's behavior predictable in isolation, which was critical when debugging a distributed A2A pipeline where a single misaligned agent could corrupt the entire story context.
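The five-part structure lends itself to a small template so every agent's system instruction has the same shape. Below is a minimal sketch. The AgentPrompt class, the Markdown section headers, and the sample Guardian of Balance text are illustrative assumptions, not the project's actual prompts.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AgentPrompt:
    identity: str                     # who the agent is
    mission: str                      # what it exists to accomplish
    methodology: List[str]            # how it approaches its task
    boundaries: List[str]             # what it must never do
    examples: List[Tuple[str, str]] = field(default_factory=list)  # few-shot pairs

    def render(self) -> str:
        """Assemble the sections in a fixed order into one system instruction."""
        parts = [
            f"# Identity\n{self.identity}",
            f"# Mission\n{self.mission}",
            "# Methodology\n" + "\n".join(f"- {m}" for m in self.methodology),
            "# Boundaries\n" + "\n".join(f"- NEVER {b}" for b in self.boundaries),
        ]
        if self.examples:
            shots = "\n\n".join(f"Input: {i}\nOutput: {o}" for i, o in self.examples)
            parts.append("# Examples\n" + shots)
        return "\n\n".join(parts)


guardian = AgentPrompt(
    identity="You are the Guardian of Balance.",
    mission="Judge story drafts for child safety and movement density.",
    methodology=["Check every scene for safety", "Count physical challenges"],
    boundaries=["approve violent content"],
    examples=[("A draft with no movement", '{"status": "fail"}')],
)
```

Fixing the section order means a misbehaving agent can be debugged by checking one section at a time.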

Agents communicate via the A2A protocol as standalone microservices. Session state acts as the shared memory bus between them.
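Treating session state as a memory bus means each stage reads its inputs from shared keys and writes its result under its own key. ADK's LlmAgent offers a similar mechanism through output_key and {placeholder} instruction templating. Below is a plain-Python sketch of the idea. The StageAgent class, the key names research_findings and story, and the model callables standing in for remote A2A calls are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class StageAgent:
    name: str
    instruction: str                 # may contain {placeholders} filled from state
    output_key: str                  # state key this agent writes its result to
    model: Callable[[str], str]      # stand-in for the remote agent call

    def run(self, state: Dict[str, str]) -> None:
        prompt = self.instruction.format_map(state)   # read from the shared bus
        state[self.output_key] = self.model(prompt)   # write back to the bus


def run_pipeline(agents: List[StageAgent], state: Dict[str, str]) -> Dict[str, str]:
    # Each agent only knows the keys it reads and writes, not the other agents.
    for agent in agents:
        agent.run(state)
    return state
```

A missing key raises KeyError from format_map, which surfaces a broken handoff between stages immediately instead of letting an empty value flow downstream.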

Media Factory: Veo 3.1 for character animation, Gemini 2.5 Flash-Image for scene illustrations.

Deployment: All five services are containerized and deployed to Google Cloud Run with OAuth2 bearer token auth via a custom authenticated_httpx.py wrapper. Observability via OpenTelemetry → Google Cloud Trace.
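A wrapper like authenticated_httpx.py typically needs to attach a bearer token to each request and avoid fetching a new token every call. The project's wrapper is not shown here. Below is a minimal sketch of just the caching idea, keyed per target service (audience). For Cloud Run service-to-service calls, fetch could wrap google.oauth2.id_token.fetch_id_token(request, audience) from the google-auth library, with the target service URL as the audience. That function returns only the token string, so the expiry would have to come from the token's exp claim. The CachedBearerToken class and the 60-second refresh margin are assumptions.

```python
import time
from typing import Callable, Dict, Tuple


class CachedBearerToken:
    """Reuse a token per audience until shortly before expiry, then refetch."""

    def __init__(self, fetch: Callable[[str], Tuple[str, float]],
                 skew_seconds: float = 60.0,
                 clock: Callable[[], float] = time.time) -> None:
        self._fetch = fetch          # returns (token, expires_at in epoch seconds)
        self._skew = skew_seconds    # refresh this long before the real expiry
        self._clock = clock
        self._cache: Dict[str, Tuple[str, float]] = {}

    def headers(self, audience: str) -> Dict[str, str]:
        cached = self._cache.get(audience)
        if cached is None or self._clock() >= cached[1] - self._skew:
            cached = self._fetch(audience)
            self._cache[audience] = cached
        return {"Authorization": f"Bearer {cached[0]}"}
```

The returned dict can be passed as the headers argument of an HTTP client call to the target agent's URL.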

Grounding & Safety: Adventure Seeker uses google_search grounding to anchor story facts in real-world lore. Guardian of Balance enforces a structured safety verdict before any content reaches the child. Puck falls back to camera-check prompts if the Magic Sign is absent, and to chat input if audio is unavailable.
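The two fallbacks can be expressed as a tiny decision function. Below is a minimal sketch with hypothetical labels. The precedence is an assumption: stories only begin once the Magic Sign is seen, so here the camera check wins over the input-channel fallback.

```python
def plan_turn(magic_sign_seen: bool, audio_available: bool) -> str:
    """Choose Puck's next interaction mode (hypothetical labels)."""
    # Assumed precedence: no story without the Magic Sign, so check it first.
    if not magic_sign_seen:
        return "camera_check_prompt"
    # With the sign confirmed, fall back to text chat when audio is unavailable.
    if not audio_available:
        return "chat_input"
    return "voice_narration"
```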

The full source code — including ADK orchestration logic, deployment scripts, and frontend — is available on GitHub: vero-code/gemini-tales, with a comprehensive ARCHITECTURE.md for deep-dives.

For judges: The Architecture Diagram is included in the image carousel. Cloud deployment proof (a Cloud Run console recording) is shown in the video. Full spin-up instructions are in the README.

Challenges I ran into

- Starting from zero with ADK: The biggest challenge was the sheer volume of new material. I built agent by agent, learning as I went, and the repository history reflects this with 111+ commits. Early versions used the raw Google Live API for multimodality, with 5 agents built and deployed from scratch before Puck was introduced into the stack as the root agent.

- Late-stage architectural pivot: Four days before the deadline, after completing the Way Back Home course and the official Build your agent with ADK tutorial, I rewrote the core agent layer entirely in ADK. It was risky this close to submission, but the result was a cleaner architecture in which Puck, as root_agent, communicates with the five previously cloud-deployed agents via A2A.

- The $10.13 Lesson (Infrastructure Malpractice): While auditing the Way Back Home workshop scripts, I discovered a dangerous automation pattern in billing-enablement.py. If the script fails to detect a workshop-specific billing account, it silently defaults to open_accounts[0]. It then renames the personal billing profile without consent, detaches the project from any credit-funded account, and links it to a personal card. For me this resulted in $10.13 in unauthorized charges. There are no input() prompts, so there is no chance to intercept it. I had to manually patch the files with exit(0) to stop further damage. The educational content was 10/10; the infrastructure automation was a harsh reminder to always audit third-party setup scripts before running them.

- Gemini Live proxying: Keeping credentials server-side while maintaining low-latency bidirectional audio/video required a custom FastAPI WebSocket proxy that pipes binary and JSON frames through transparently.

- A2A on Cloud Run: Authenticated inter-service communication between independently deployed agents required handling OAuth2 bearer tokens automatically — especially tricky with Application Default Credentials on Windows.

- Physical challenge verification: Getting the AI to reliably pause narration, wait for the child to act, and resume only after visual confirmation — without false positives from voice alone.

- Pydantic Schema Validation & ADK Compatibility: One of the most subtle bugs we faced was a strict validation error between Pydantic and the ADK's internal event loop. We had to carefully align our output schemas for the Guardian of Balance (Judge) to ensure that the AI's structured feedback correctly serialized across the A2A protocol without triggering type-mismatch errors during the loop escalation process.

- Unpredictable agent behavior: An LLM-based agent is not a deterministic algorithm. Puck doesn't always follow the script — sometimes skipping steps, sometimes improvising beyond the prompt. There's no way to fully control this, and that's a fundamental property of the system. I learned to work with it rather than against it: treating unpredictability as a creative constraint and designing the instruction set to guide rather than force behavior.
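The billing incident above comes down to one unsafe line: falling back to open_accounts[0]. Below is a minimal sketch of a safer selection step that never defaults silently and always asks a human before linking. The function, the displayName field on each account dict, and the matching rule are assumptions, not the workshop's actual billing-enablement.py.

```python
from typing import Callable, Dict, List, Optional


def choose_billing_account(
    open_accounts: List[Dict[str, str]],
    is_workshop_account: Callable[[Dict[str, str]], bool],
    confirm: Callable[[str], bool],
) -> Optional[Dict[str, str]]:
    """Return the account to link, or None to abort. Never silently default."""
    matches = [a for a in open_accounts if is_workshop_account(a)]
    if not matches:
        # The unsafe pattern fell back to open_accounts[0] here; abort instead.
        return None
    # Even a single match needs explicit human confirmation before billing changes.
    for account in matches:
        if confirm(f"Link project to billing account '{account['displayName']}'?"):
            return account
    return None
```

Interactively, confirm could be lambda msg: input(msg + " [y/N] ").strip().lower() == "y". In tests it is a plain function, which keeps the guard itself easy to verify.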

Accomplishments that I'm proud of

- A genuinely novel interaction pattern: a story that physically waits for you

- Full dual-mode architecture (spontaneous Live Mode + structured Agent Mode) in a single app

- End-to-end multi-agent pipeline with safety review baked in before any content reaches a child

- Cinematic character animation from a single text description

- Auto-generated, narratively consistent watercolor illustrations throughout the story

- Clean production deployment: five independent Cloud Run services, authenticated and orchestrated

- Completed three ADK courses mid-hackathon and applied each directly to the project: Build Multi-Agent Systems with ADK, Way Back Home (waybackhome.dev), and the official Build your agent with ADK tutorial — each one visibly shifting the architecture forward

What I learned

- ADK changes how you think about agents: The Way Back Home course and official ADK tutorial reframed the entire architecture mid-build. The pivot was late and uncomfortable, but it produced a fundamentally better system — and proved that ADK's abstractions are worth the learning curve even under deadline pressure.

- Specialization over monoliths: The system became dramatically more reliable the moment I stopped relying on one giant prompt and started treating agents like a specialized team with distinct responsibilities.

- The Power of A2A Protocol: Once inter-agent communication clicked, the elegance of distributed, independently deployable agents became clear. The Orchestrator only needs to know the agent card URL — not the implementation.

- Infrastructure is code — and risk: Debugging the billing scripts was as much a learning experience as building the agents. It reinforced a hard rule: interactive confirmation in automated DevOps pipelines is not optional.

- Movement changes everything: Physical verification via vision is achievable at 1 FPS. The latency is acceptable for a children's interaction loop — and the behavioral result is genuinely different from passive screen time.
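Sending the camera feed to vision at 1 FPS means discarding most frames a webcam produces. How the client throttles the feed is not described here. Below is a minimal sketch, assuming each frame arrives with a timestamp in seconds. The sample_frames helper is hypothetical.

```python
from typing import Iterable, Iterator, Optional, Tuple, TypeVar

T = TypeVar("T")


def sample_frames(frames: Iterable[Tuple[float, T]],
                  fps: float = 1.0) -> Iterator[Tuple[float, T]]:
    """Yield at most `fps` frames per second from (timestamp_seconds, frame) pairs."""
    interval = 1.0 / fps
    next_due: Optional[float] = None
    for ts, frame in frames:
        # Keep the first frame, then only frames at or after the next due time.
        if next_due is None or ts >= next_due:
            yield ts, frame
            next_due = ts + interval
```

For a 30 FPS camera this keeps roughly one frame in thirty, which is the trade-off that makes continuous vision checks affordable during a children's interaction loop.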

What's next for Gemini Tales

If this project wins the Gemini Live Agent Challenge, here's what I'm committing to build:

Phase 1: Educator Adoption (Months 1-2)

- Educator Dashboard: Teachers configure story themes, movement goals, and age-appropriate challenges per session

- School Deployment Pack: A simplified Cloud Run setup guide + Docker image for schools to self-host

- Movement Metrics: Track physical activity data per child (with parental consent) to prove the "screen time → move time" transformation

- Free Tier for Non-profits: Educational institutions get free Cloud Run quota for one academic year

Phase 2: Global Scale (Months 2-4)

- Multiplayer Mode: Two children, one story, coordinated physical challenges. Puck asks "Can you BOTH hop together?" and uses vision to verify synchronized movement

- Multilingual Support: Core stories in 10 languages

- Cultural Localization: Agent Mode story themes adapt to regional legends, holidays, and cultural values

- Mobile App: Native iOS/Android for living-room play without a laptop (React Native port of the "Magic Mirror")

Phase 3: Premium Tier (Month 4+)

- Gemini Tales Premium: Parent dashboard exposing the raw agent pipeline (Researcher, Judge, Storysmith working in real-time) so adults can see exactly how each story was crafted

- Custom Character Library: Upload your own character art (pet, stuffed animal, superhero OC) and have Puck transform it into the main character

- Extended Story Packs: Professionally written, multi-session adventures (The Dragon's Lair, The Enchanted Forest, The Lost Temple) with persistent progression across sessions

- Gamification API: Developers can integrate their own movement tracking devices (Fitbit, Apple Watch, smart scales) to unlock story-specific achievements

Phase 4: Research & Impact (Ongoing)

- Peer-Reviewed Study: Partner with pediatricians and child psychologists to measure sedentary reduction and cognitive engagement metrics

- Open Data Initiative: Anonymized, aggregated movement data shared with research institutions studying childhood activity

- ADK Extensibility: Document the multi-agent orchestration pattern as a reusable template for other child-safe AI applications

- Google Cloud Starter Kit: Contribute a "Gemini Tales Architecture" as an official Google Cloud Solution template for educational AI

The Bigger Vision 🌍

If we can prove that AI can inspire movement instead of sedentary consumption, we unlock a new category of tech: AI that optimizes for human health, not screen time.

Winning this challenge means the resources to show that Gemini Tales is reproducible, scalable, and genuinely life-changing for kids. It's not just a hackathon project—it's a proof-of-concept for the next generation of responsible AI.

The goal: 10,000 children moving more because of this app by end of 2026.

Built With

- a2a

- adk

- agent-development-kit

- agent-to-agent

- antigravity

- fastapi

- gemini

- google-ai-studio

- google-cloud

- google-cloud-run

- google-genai-sdk

- google-live-api

- opentelemetry

- python

- react

- tailwind-css

- typescript

- veo

- vertex-ai

- vite

- websockets
