EcoSense initialization: launch the auditor with a single “Initialize Protocol” entry point (demo-ready, zero setup).

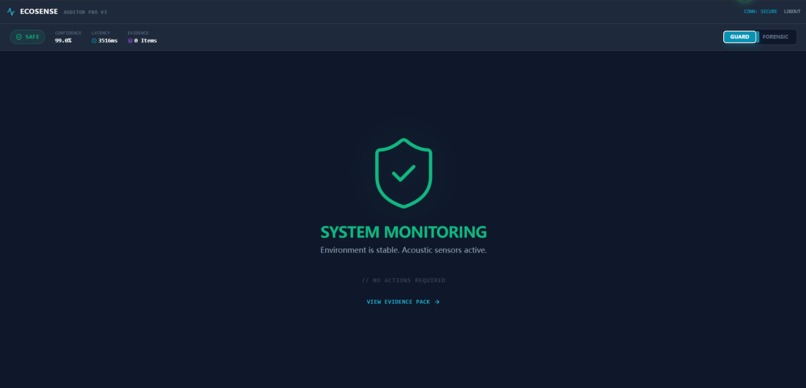

Minimal monitoring view: SAFE shield + key metrics, with “View Evidence Pack” to open audit details.

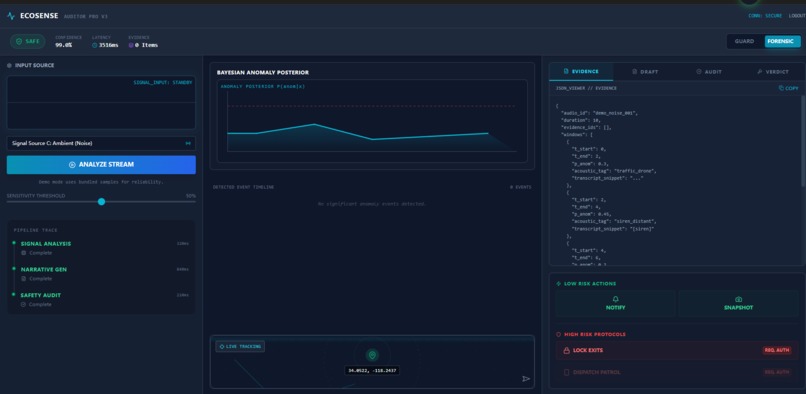

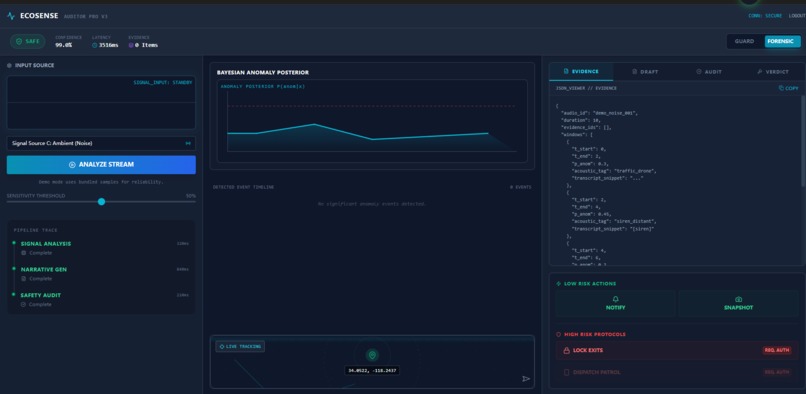

Transparent pipeline: posterior chart + ledger tabs + step trace, even when no incident is detected.

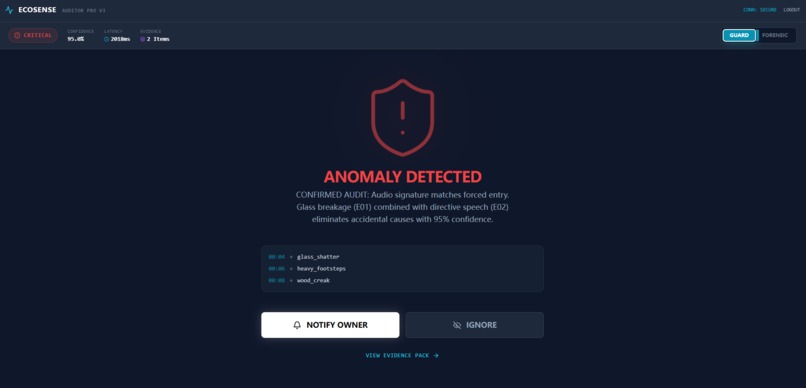

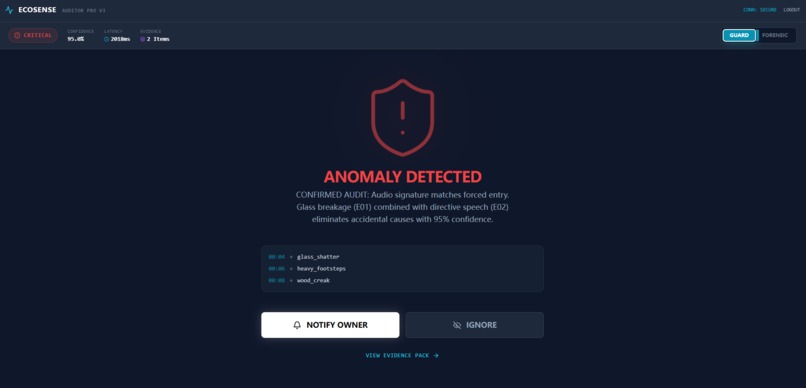

Critical alert with a short incident narrative and only two actions: Notify Owner or Ignore.

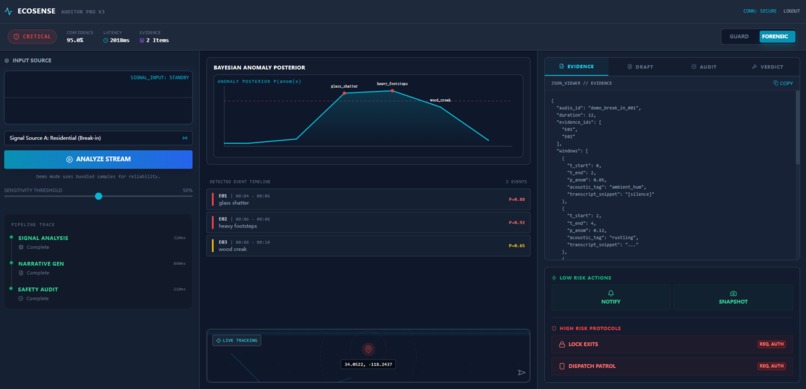

Evidence-linked audit: posterior spikes → E01–E03 timeline → JSON ledger; high-risk actions locked until CONFIRM.

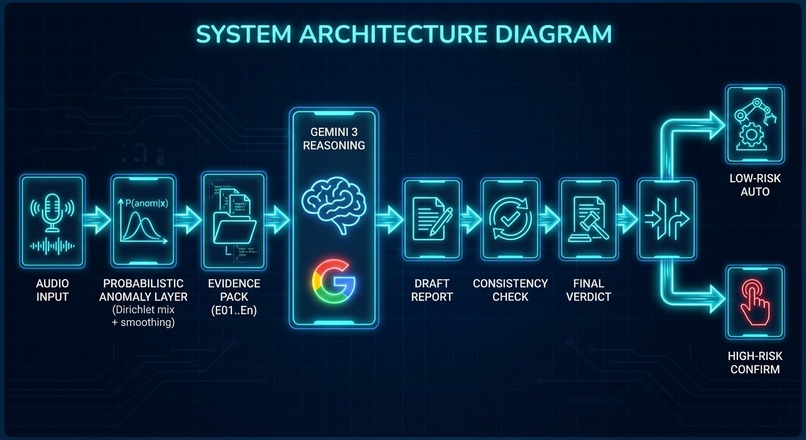

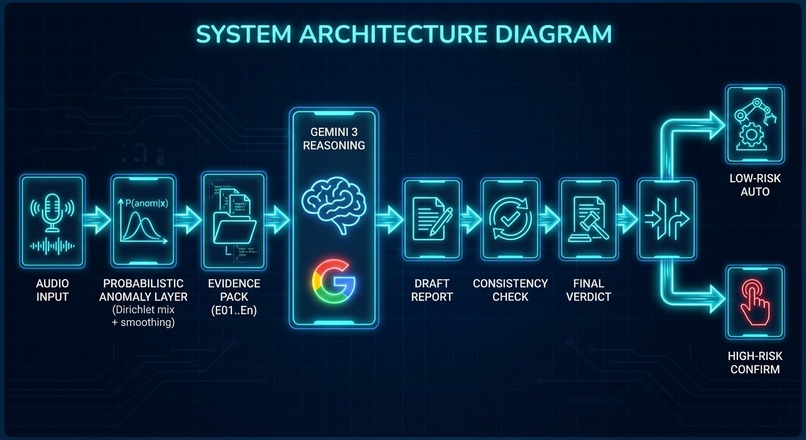

Audio → anomaly posterior → evidence pack → Gemini 3 draft + audit → final verdict → low-risk auto / high-risk confirm

Inspiration

EcoSense grew out of two personal stories about how fragile everyday safety can be.

The first is my grandmother's. She lives alone, and her health is declining. One afternoon she went to get a drink of water, but her trembling hands dropped the glass, and it shattered on the floor. Because of her poor eyesight she couldn't see the shards clearly; she slipped while trying to clean them up and fell. She lay there, unable to get up or reach her phone, until a neighbor eventually heard her faint calls. I keep asking myself: why couldn't we have known the moment that glass shattered?

The second is my own. As a woman who lives alone and often stays in hotels, I carry an underlying anxiety, a need to be "always on." I wanted a digital guardian that doesn't sleep, one that can tell a noisy TV from a breaking window and give me peace of mind.

I built EcoSense to bridge this gap: to be the ears for the elderly who can't move quickly, and the shield for those living alone in an unpredictable world.

What it does

EcoSense is an Environmental Semantic Agent that turns any device with a microphone into an intelligent security system. Unlike traditional alarms that react only to loud noise, EcoSense uses Google Gemini 3 to understand the context of sounds.

Semantic Perception: It listens to audio streams and identifies specific acoustic events (e.g., "Glass Shatter," "Heavy Thud," "Footsteps") in real time.

Narrative Reconstruction: It doesn't just detect sounds; it reconstructs the story behind them. A "Thud" followed by "Silence" might indicate a fall (Grandma's scenario), while "Glass Break" followed by "Aggressive Voices" suggests a break-in.

Dual-Mode Interface: Two views of the same analysis. Guard Mode is a high-contrast, large-text interface designed for the elderly, focused on clear status updates. Forensic Mode is a detailed dashboard for technical analysis that visualizes probability curves and audit logs.

Automated Protocols: In critical situations, it simulates locking perimeters or notifying emergency contacts, based on a "High Risk" logic gate.
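To make the Narrative Reconstruction idea concrete, here is a minimal rule-based sketch of reading a sequence of events rather than isolated ones. In EcoSense this reasoning is delegated to Gemini through prompting, so the code below is only an illustration, not the shipped logic. The event labels, the `TimedEvent` shape, and the 30-second gap are all assumptions made for the example.

```typescript
// Hypothetical labels: the write-up names only some of these events.
type AcousticEvent =
  | "glass_shatter"
  | "heavy_thud"
  | "footsteps"
  | "aggressive_voices"
  | "silence";

type Narrative = "possible_fall" | "possible_break_in" | "unclear";

interface TimedEvent {
  label: AcousticEvent;
  atSeconds: number; // offset from the start of the clip
}

// True when `second` occurs after some `first`, no more than `gapSeconds` later.
function followedBy(
  events: TimedEvent[],
  first: AcousticEvent,
  second: AcousticEvent,
  gapSeconds: number
): boolean {
  return events.some(
    (a) =>
      a.label === first &&
      events.some(
        (b) =>
          b.label === second &&
          b.atSeconds > a.atSeconds &&
          b.atSeconds - a.atSeconds <= gapSeconds
      )
  );
}

// The 30-second default gap is an arbitrary illustrative value.
function reconstructNarrative(events: TimedEvent[], gapSeconds = 30): Narrative {
  if (followedBy(events, "glass_shatter", "aggressive_voices", gapSeconds)) {
    return "possible_break_in";
  }
  if (followedBy(events, "heavy_thud", "silence", gapSeconds)) {
    return "possible_fall";
  }
  return "unclear";
}
```

With these rules a lone "heavy_thud" stays "unclear"; only the thud-then-silence pattern is read as a possible fall.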

How we built it

We engineered EcoSense as a serverless, client-side application for privacy and low latency, using current web technologies.

Core Stack: Built with React 19 and TypeScript for a robust, type-safe frontend. We used Tailwind CSS to create a professional "Cyber-Physical" aesthetic.

AI Engine: We used the Google GenAI SDK (@google/genai) with the gemini-3-flash-preview model.

The 3-Stage Reasoning Pipeline:

Signal Analysis: Slicing audio into time windows to calculate a Bayesian anomaly probability for each window.

Narrative Drafting: The AI acts as a forensic reporter, generating a draft incident report based on the acoustic evidence.

Safety Audit: A self-correction layer where the AI critiques its own report to prevent hallucinations and false alarms.

Visualization: We implemented HTML5 Canvas for real-time waveform rendering and Recharts to visualize the anomaly probability timeline.
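The Signal Analysis stage can be sketched as follows. The write-up does not give the exact formula, so this assumes a simple two-hypothesis Bayes rule per window: posterior = P(e|anomaly)·prior / (P(e|anomaly)·prior + P(e|normal)·(1 − prior)), where e is the window's RMS energy and both likelihoods are Gaussians. The RMS feature, the Gaussian parameters, and every function name here are assumptions for illustration.

```typescript
interface Gaussian {
  mean: number;
  std: number;
}

// Split samples into consecutive fixed-size windows; a trailing partial window is dropped.
function sliceWindows(samples: number[], windowSize: number): number[][] {
  if (windowSize <= 0) throw new Error("windowSize must be positive");
  const windows: number[][] = [];
  for (let start = 0; start + windowSize <= samples.length; start += windowSize) {
    windows.push(samples.slice(start, start + windowSize));
  }
  return windows;
}

// Root-mean-square energy of one window.
function rms(window: number[]): number {
  const sumSquares = window.reduce((acc, x) => acc + x * x, 0);
  return Math.sqrt(sumSquares / window.length);
}

function gaussianPdf(x: number, g: Gaussian): number {
  const z = (x - g.mean) / g.std;
  return Math.exp(-0.5 * z * z) / (g.std * Math.sqrt(2 * Math.PI));
}

// One posterior anomaly probability per window, each computed from the same prior.
function anomalyPosteriors(
  samples: number[],
  windowSize: number,
  prior: number,
  normal: Gaussian,
  anomalous: Gaussian
): number[] {
  return sliceWindows(samples, windowSize).map((w) => {
    const energy = rms(w);
    const pAnomaly = gaussianPdf(energy, anomalous) * prior;
    const pNormal = gaussianPdf(energy, normal) * (1 - prior);
    const evidence = pAnomaly + pNormal;
    // If both likelihoods underflow to zero, fall back to the prior.
    return evidence === 0 ? prior : pAnomaly / evidence;
  });
}
```

The resulting array has one value per window, which is the kind of series a timeline chart of anomaly probability would plot.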

Challenges we ran into

The "Context" Dilemma: Distinguishing between a harmless accident (dropped cup) and a hostile threat (break-in) was difficult. We solved this by implementing the "Narrative Layer," instructing the model to analyze the sequence of sounds rather than isolated events.

Browser Audio Handling: Managing raw audio streams and converting them to Base64 for the Gemini API without causing memory leaks or UI freezes required optimizing our React.useEffect hooks and canvas rendering loops.

Demo Reliability: For the competition, we couldn't physically break windows to test the app live. We built a robust Implicit Demo Mode (Factory Pattern) that seamlessly injects pre-validated acoustic datasets (Break-in, Accident, Noise) when live inputs aren't available, so judges see the full capability of the system without a complex hardware setup.
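A factory for the Implicit Demo Mode might look roughly like this. It is a sketch under assumptions: the `AudioSource` interface, the `createAudioSource` name, and the placeholder sample arrays are hypothetical, and the real datasets are recorded clips rather than a few numbers.

```typescript
type Scenario = "break_in" | "accident" | "noise";

interface AudioSource {
  kind: "live" | "demo";
  label: string;
  read(): number[];
}

// Placeholder samples standing in for the pre-validated demo recordings.
const DEMO_CLIPS: Record<Scenario, number[]> = {
  break_in: [0.02, 0.95, 0.6, 0.7],
  accident: [0.02, 0.85, 0.01, 0.01],
  noise: [0.3, 0.28, 0.31, 0.29],
};

// Returns a live source when microphone samples exist, otherwise a demo source.
// Callers use the same interface either way, so the fallback stays implicit.
function createAudioSource(
  liveSamples: number[] | null,
  fallback: Scenario
): AudioSource {
  if (liveSamples !== null && liveSamples.length > 0) {
    const samples: number[] = liveSamples;
    return { kind: "live", label: "microphone", read: () => samples };
  }
  return { kind: "demo", label: fallback, read: () => DEMO_CLIPS[fallback] };
}
```

Because the rest of the pipeline only sees `AudioSource`, nothing downstream needs to know whether it is analyzing a microphone capture or a canned scenario.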

Accomplishments that we're proud of

The Audit Layer: We implemented a "Chain of Thought" workflow in which the AI audits its own draft report. This significantly reduced false positives, making the system trustworthy enough for security contexts.

Inclusive Design: We are proud of Guard Mode. Designing an interface specifically for my grandmother, one that she could actually see and understand without confusion, was a proud moment.

Real-time Visualization: The Bayesian Probability Chart gives immediate visual feedback on how the AI is interpreting the environment.
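One way to picture how a draft and its audit combine into a final verdict, and how that verdict gates actions, is the sketch below. The model calls themselves are omitted. `DraftReport` and `AuditResult` are hypothetical shapes for what the two stages return, evidence IDs such as "E01" follow the timeline labels in the screenshots, and the rule of downgrading a high-risk draft that cites unsupported evidence is an assumption, not a description of the actual prompts.

```typescript
type Risk = "low" | "high";

interface DraftReport {
  summary: string;
  risk: Risk;
  citedEvidence: string[]; // e.g. ["E01", "E02"]
}

interface AuditResult {
  supportedEvidence: string[]; // evidence IDs the audit found backed by the audio
}

interface Verdict {
  risk: Risk;
  summary: string;
  unsupported: string[];
}

// Keep "high" only when the draft cites evidence and the audit supports all of it.
function finalVerdict(draft: DraftReport, audit: AuditResult): Verdict {
  const unsupported = draft.citedEvidence.filter(
    (id) => audit.supportedEvidence.indexOf(id) === -1
  );
  const fullySupported =
    draft.citedEvidence.length > 0 && unsupported.length === 0;
  const risk: Risk = draft.risk === "high" && fullySupported ? "high" : "low";
  return { risk, summary: draft.summary, unsupported };
}

type Decision = "run_low_risk_actions" | "hold_for_confirm" | "run_high_risk_actions";

// Low-risk verdicts proceed automatically; high-risk ones wait for a typed CONFIRM.
function gate(verdict: Verdict, userInput: string | null): Decision {
  if (verdict.risk === "low") return "run_low_risk_actions";
  return userInput === "CONFIRM" ? "run_high_risk_actions" : "hold_for_confirm";
}
```

Keeping the gate as a pure function separate from the model output makes the "locked until CONFIRM" behavior easy to check without calling the API.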

What we learned

Multimodal AI Power: We learned that multimodal models like Gemini can work as capable signal processors. They can "hear" nuances in audio, such as the difference between an angry shout and a joyful one, that traditional DSP (Digital Signal Processing) code cannot easily define.

Empathy-Driven Engineering: Building for a specific person (my grandma) clarified every feature decision. If it didn't help her stay safe, we didn't build it.

State Management: Managing the complex state between "Analyzing," "Drafting," and "Auditing" taught us valuable lessons in React state machines.
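The "Analyzing," "Drafting," and "Auditing" flow can be modeled as a small state machine. The reducer below is a sketch with assumed phase and event names; it has the `(state, action) => state` shape that React's `useReducer` expects, but it is plain TypeScript and not the project's actual code.

```typescript
type Phase = "idle" | "analyzing" | "drafting" | "auditing" | "done" | "failed";

type PipelineEvent =
  | { type: "START" }
  | { type: "STAGE_OK" }
  | { type: "STAGE_FAILED" }
  | { type: "RESET" };

function isRunning(phase: Phase): boolean {
  return phase === "analyzing" || phase === "drafting" || phase === "auditing";
}

// Pure transition function: invalid events leave the phase unchanged.
function pipelineReducer(phase: Phase, event: PipelineEvent): Phase {
  switch (event.type) {
    case "RESET":
      return "idle";
    case "START":
      return phase === "idle" ? "analyzing" : phase;
    case "STAGE_FAILED":
      return isRunning(phase) ? "failed" : phase;
    case "STAGE_OK":
      if (phase === "analyzing") return "drafting";
      if (phase === "drafting") return "auditing";
      if (phase === "auditing") return "done";
      return phase;
    default:
      return phase;
  }
}
```

Routing every stage through one transition function means a late response from an earlier stage cannot skip the audit: a `STAGE_OK` received while "idle" or "done" is simply ignored.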

What's next for EcoSense — Environmental Semantic Auditor

IoT Integration: We plan to connect EcoSense to smart home APIs (Philips Hue, SmartThings) to flash lights red or auto-lock doors when a threat is confirmed.

On-Device Privacy: Moving the inference to Gemini Nano (local model) so the system works offline and audio data never leaves the user's house.

Fall Detection 2.0: Integrating accelerometer data from the user's phone or watch alongside the audio analysis to create a multi-sensor fall detection system for the elderly.
