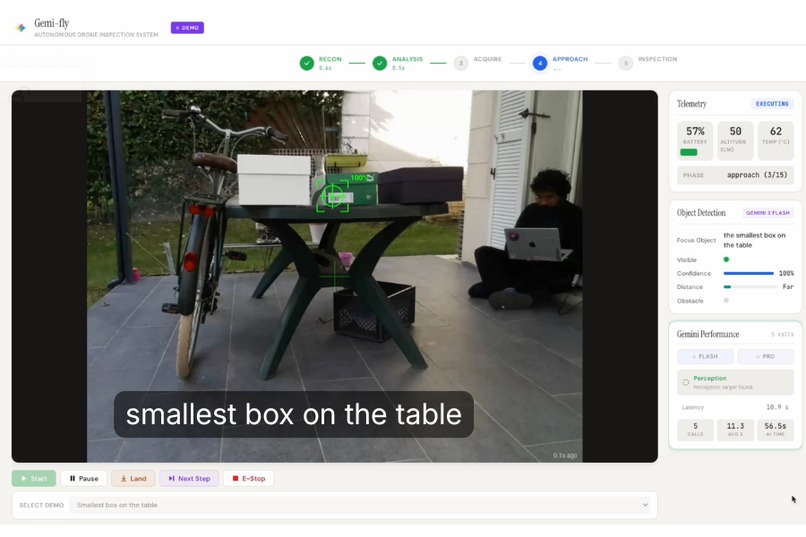

Query: "smallest box on the table" — Gemini compares visible objects, identifies the target, and guides the drone autonomously

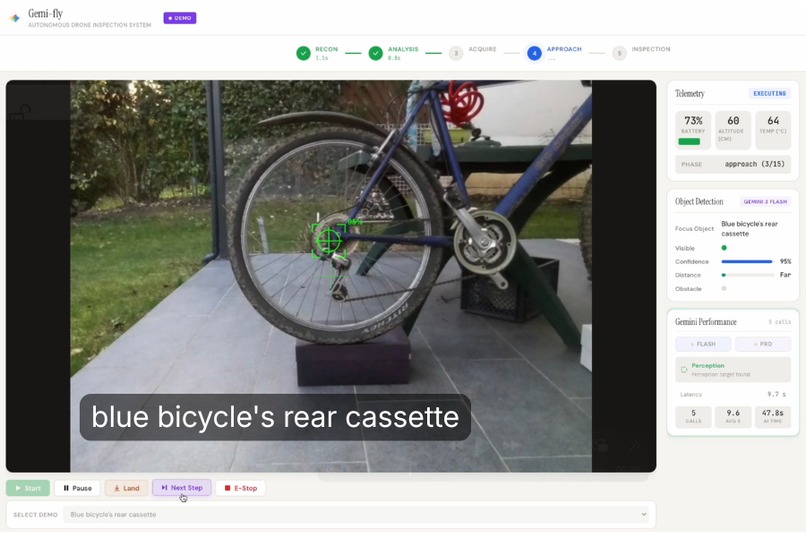

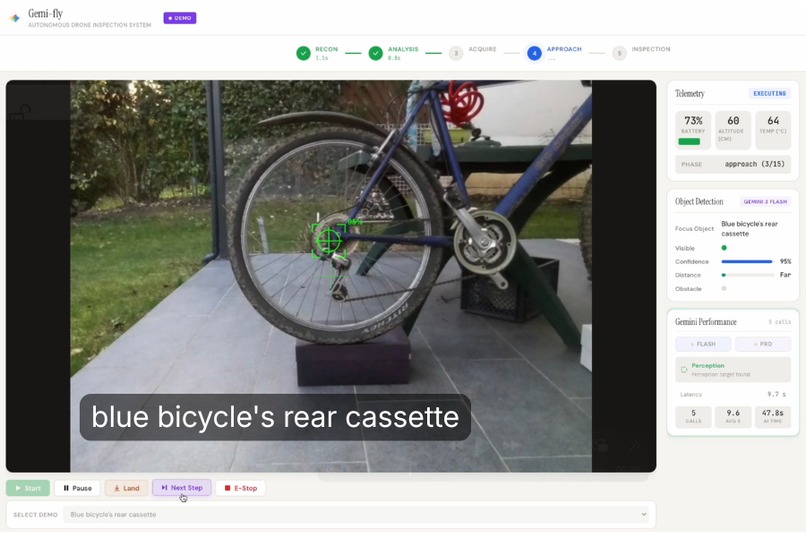

Query: "blue bicycle's rear cassette" — Gemini understands bike anatomy and flies the drone to inspect it. No manual control.

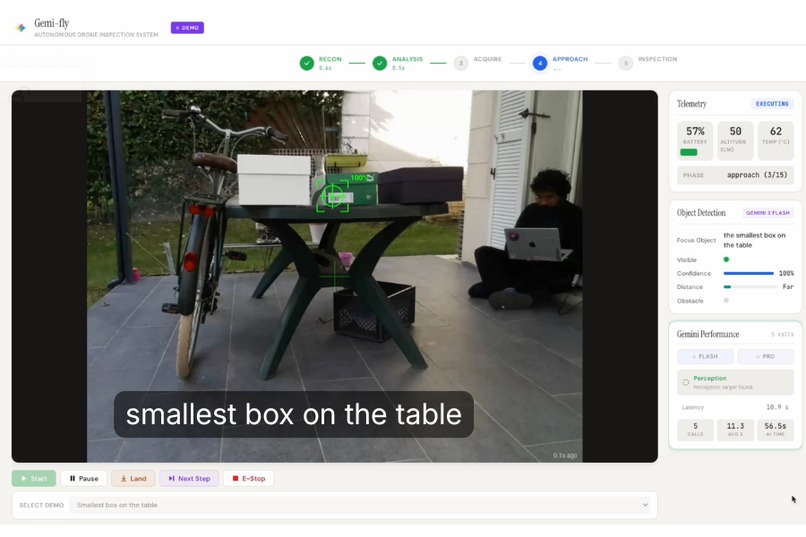

Dashboard view: Gemini 3 guides drone to target, inspects it, and generates a detailed report — fully autonomous

Gemini 3 guides the drone to its target using vision-based navigation

Inspiration

I've always been fascinated by autonomous vehicles and multimodal AI. The idea of machines that can see, reason, and act in the physical world feels like science fiction coming to life. So when the Gemini 3 hackathon was announced, I asked myself: what if I could control a drone entirely with AI?

I had never flown a drone before, but that made it even more exciting. I wanted something programmable and affordable, which led me to the DJI Tello — a small, hackable drone with a Python SDK. Finding one to buy took about a week, but once it arrived, the real adventure began.

What I Built

Gemi-fly is an autonomous drone inspection system powered by Google Gemini 3. You give it a target — like "red bottle" or "bicycle cassette" — and the drone takes off, explores the environment, locates the object, approaches it, and generates a visual inspection report. No manual piloting required.

The system uses a dual-model architecture:

- Gemini 3 Flash for fast perception — analyzing video frames to detect objects and estimate positions

- Gemini 3 Pro for strategic planning and inspection — spatial positioning, target label refinement, and generating detailed inspection reports from close-up frames
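
The split between the two models can be pictured as a small routing table. This is a minimal sketch, not the project's actual code: the `Task` names, the `pick_model` helper, and the model identifier strings are all placeholders invented for illustration (they are not real API model IDs).

```python
from enum import Enum


class Task(Enum):
    PERCEPTION = "perception"   # per-frame detection and position estimates
    PLANNING = "planning"       # spatial positioning, target label refinement
    INSPECTION = "inspection"   # report generation from close-up frames


# Placeholder identifiers (assumptions); substitute the real model IDs.
FAST_MODEL = "flash-model-placeholder"
DEEP_MODEL = "pro-model-placeholder"


def pick_model(task: Task) -> str:
    """Send latency-sensitive perception to the fast tier, the rest to the deep tier."""
    return FAST_MODEL if task is Task.PERCEPTION else DEEP_MODEL
```

Keeping the routing in one place makes it easy to move a task between tiers if latency or quality turns out differently than expected.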

A web dashboard shows the live video feed, AI perception overlay, telemetry, and mission progress. At the end, you can export an inspection report with annotated frames and findings.

Why This Is Different: AI That Understands Intent

Unlike simple object detection, Gemi-fly interprets natural language queries through visual reasoning:

- "Find the smallest box on the table" → Gemini sees multiple boxes, compares their sizes, and identifies the correct target

- "Inspect the bicycle cassette" → Gemini understands bike anatomy: locate bicycle → find rear wheel → identify cassette → approach

- "Check the red bottle" → Gemini scans the scene, distinguishes objects by color and shape, locks on

This isn't template matching; it's multimodal reasoning, the same way you'd interpret the request yourself.

Challenges

Working with physical hardware in the real world is humbling. I quickly discovered that drones don't behave like simulators:

- Wind — Even light air currents caused the drone to drift and struggle to stabilize

- Tight spaces — Narrow rooms limited maneuverability and made testing stressful

- Low light — Without enough ambient light, the Tello's optical flow sensor couldn't track position, causing unpredictable drift

Each of these forced me to adapt — finding the right indoor environment, waiting for calm conditions, and ensuring proper lighting. It was frustrating at times, but solving real-world constraints made the project feel tangible in a way pure software projects don't.

How I Built It

I leaned on AI tools throughout development, using the Gemini CLI to accelerate coding and iteration.

One key insight was getting predictable control from a non-deterministic model. LLMs can be unpredictable, but drones need precise commands. My solution was to use Pydantic schemas to constrain Gemini's output: the model returns structured JSON that maps directly to drone actions, eliminating fragile regex parsing.
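
To make the schema idea concrete, here is a minimal sketch of what such a constraint could look like. The `DroneCommand` fields, the action names, and the `parse_command` helper are assumptions made for illustration; the project's real schema may differ. The 500 cap is also an assumed bound, not a value taken from the project.

```python
import json
from typing import Literal, Optional

from pydantic import BaseModel, Field, ValidationError


class DroneCommand(BaseModel):
    """Hypothetical schema for a single model-issued action."""

    action: Literal["forward", "back", "left", "right", "up", "down",
                    "rotate_cw", "rotate_ccw", "hover", "land"]
    # Centimeters for moves, degrees for rotations; bounded so a wild
    # value from the model is rejected instead of flown.
    amount: int = Field(default=0, ge=0, le=500)
    reason: str = ""


def parse_command(raw: str) -> Optional[DroneCommand]:
    """Return a validated command, or None if the model output is unusable."""
    try:
        return DroneCommand(**json.loads(raw))
    except (json.JSONDecodeError, ValidationError, TypeError):
        # Malformed JSON, a non-object payload, or a schema violation.
        return None
```

With this shape, anything that fails validation (an unknown action, an out-of-range distance, or text that is not JSON) comes back as `None`, so the caller can fall back to a safe default such as hovering.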

The dual-model architecture emerged naturally: Gemini 3 Flash handles fast perception during approach loops, while Gemini 3 Pro handles tasks requiring deeper reasoning — spatial positioning, target label refinement, and final inspection reports.

What I Learned

Beyond the technical skills, this project taught me patience. Hardware doesn't forgive bugs the way software does — a bad command means a crashed drone, not just a stack trace. I gained a deeper appreciation for robotics engineers who deal with this daily.

I also learned that multimodal AI is genuinely capable of spatial reasoning. Watching Gemini analyze a video frame and correctly estimate that a target is "slightly left, about 80cm away" felt like witnessing something new.
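
An estimate like that still has to become a flight command. The sketch below shows one way that step could work, assuming the model reports a numeric lateral offset and distance in centimeters. `TargetEstimate`, `plan_step`, and the 50 cm standoff are hypothetical; the 20 to 500 cm bounds match the range the Tello SDK accepts for move commands.

```python
from dataclasses import dataclass
from typing import List, Tuple

MIN_MOVE_CM = 20    # Tello move commands accept 20 to 500 cm
MAX_MOVE_CM = 500
STANDOFF_CM = 50    # assumed inspection distance, not from the project


@dataclass
class TargetEstimate:
    lateral_cm: int    # negative = left of center, positive = right
    distance_cm: int   # estimated range to the target


def plan_step(est: TargetEstimate) -> List[Tuple[str, int]]:
    """Turn one estimate into a short list of (direction, cm) moves."""
    moves = []
    # Skip lateral corrections smaller than the drone can execute.
    if abs(est.lateral_cm) >= MIN_MOVE_CM:
        direction = "left" if est.lateral_cm < 0 else "right"
        moves.append((direction, min(abs(est.lateral_cm), MAX_MOVE_CM)))
    # Approach only as far as the standoff distance.
    remaining = est.distance_cm - STANDOFF_CM
    if remaining >= MIN_MOVE_CM:
        moves.append(("forward", min(remaining, MAX_MOVE_CM)))
    return moves
```

An empty list means the drone is already within tolerance and can hold position for inspection.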

Accomplishments that I'm proud of

The first time the drone took off, scanned the room, locked onto a target, and flew toward it completely on its own — I just stood there in disbelief. No joystick. No manual input. Just AI reasoning over pixels and issuing commands.

It was one of those rare moments where the thing you imagined actually works. I felt incredibly grateful to have built something that genuinely surprised me.

What's next for Gemi-fly

More capable hardware. The DJI Tello is great for prototyping, but its limitations became clear: wind sensitivity, optical flow drift in low light, and short battery life. The next step is adapting the system for a more stable drone with GPS, better sensors, and longer flight time.

New mission types. Right now, Gemi-fly only does inspection — find a target, approach it, report what it sees. But the underlying architecture (perception loop → planning → execution) could support other missions: perimeter patrol, inventory counting, search and rescue, or agricultural monitoring.

SDK extraction. The goal is to package the core pattern — frame capture, AI reasoning, structured command output, safety checks — into a reusable SDK. This would let developers build autonomous drone applications without reinventing the control loop each time.
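
As a rough picture of that core pattern, the sketch below wires the four pieces together with injected callables so it runs without a drone or an API key. Everything here is hypothetical: `run_mission`, the dictionary command shape, the `"done"` action, and the battery and step limits are illustrative choices, not the planned SDK's interface.

```python
from typing import Callable, Optional


def run_mission(
    capture_frame: Callable[[], bytes],
    decide: Callable[[bytes], Optional[dict]],
    execute: Callable[[dict], None],
    battery_percent: Callable[[], int],
    max_steps: int = 30,
    min_battery: int = 20,
) -> str:
    """Run capture -> reason -> act until done or a safety limit trips."""
    for _ in range(max_steps):
        # Safety check first: never start a step on a low battery.
        if battery_percent() < min_battery:
            execute({"action": "land"})
            return "aborted_low_battery"

        frame = capture_frame()
        command = decide(frame)  # None means the model output was unusable

        if command is None:
            execute({"action": "hover"})
            continue
        if command["action"] == "done":
            execute({"action": "land"})
            return "completed"
        execute(command)

    # Step budget exhausted without reaching the target.
    execute({"action": "land"})
    return "aborted_step_limit"
```

Passing the camera, model, and drone in as functions keeps the loop testable with fakes, which matters when a real bug means a crashed drone.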

The vision: make autonomous drone development as simple as writing a prompt.

Built With

- css

- dji-tello-sdk-(djitellopy)

- fastapi

- google-gemini-3-api

- html

- javascript

- opencv

- pydantic

- python

- websockets
