Hold the camera in front of an instrument to hear sample audio and explore details in AR.

Audio plays to give an idea of what the instrument sounds like.

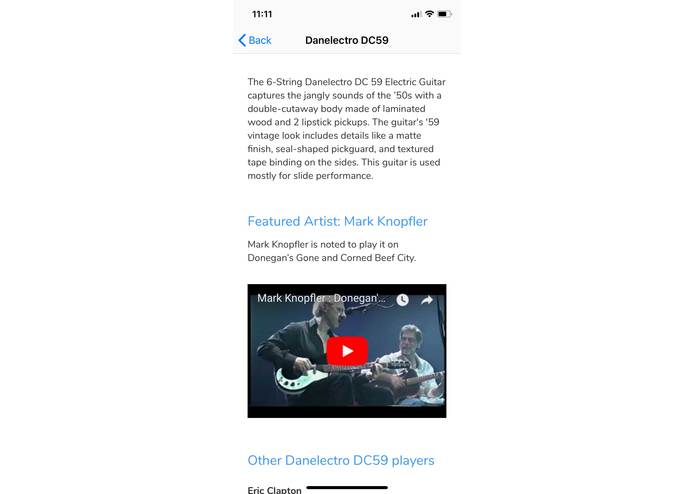

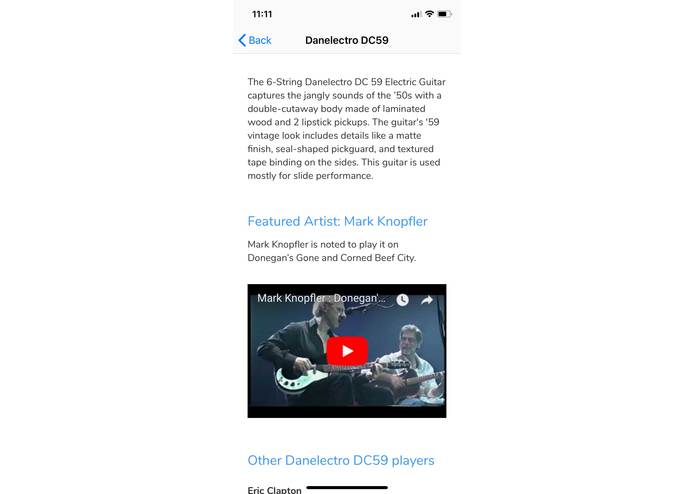

Learn more about the instrument: which artists use it, videos of it being played, and how it sounds with other amp setups.

See details about components of the instrument.

Inspiration

When you’re buying musical gear in a retail shop, the sales representatives are often busy, which makes it hard to get advice. And if you’re a beginner, the sheer number of options can quickly become overwhelming. It’s hard to know what an instrument sounds like just by looking at it, especially if you can’t play it yet. Musical gear is expensive, so we want to empower users to make educated decisions.

What it does

geAR is an app that automatically recognizes musical gear and overlays additional information in augmented reality. Users hear a sound sample of an instrument as soon as they point the camera at it. If they want to learn more, they can view additional details about the item, including videos of the gear in use and real audio samples from artists who use it. They can also hear the gear in different setups, e.g. a guitar with different types of amps or pedals. Plus, we envision an integration with existing virtual amp apps like Jamup Pro, so an experienced player can step into a rehearsal room, plug the guitar into their iPhone, and try a specific amp setting themselves.

From the perspective of a store owner, the app provides a new way to engage with customers. It also sends analytics data back to the owner about which products customers viewed, giving them more insight into how to optimize their business; owners view these analytics on a separate web-based dashboard. The app also lets shop owners 1) relieve their sales reps of some of their usual load and 2) offer an impressive, immersive shopping experience that an online store cannot, helping them compete with online sales channels. The app is easy for owners to configure for their stores and leverages existing warehouse management systems. All they need to do is take a picture of each item they want recognized in AR and upload it on the web dashboard.

How we built it

geAR uses the image recognition introduced in the ARKit 1.5 beta to recognize items from the photos owners upload and to accurately position information tags in AR. The iOS app is built with Swift and the native iOS SDK.

The backend is built with Meteor, MongoDB, and AWS. We use the 7digital API to pull information about artists and their music.

Challenges we ran into

Augmented reality has become much more powerful and accessible with the introduction of ARKit, but it still presents some challenges. Rendering nodes in AR space can be tricky, especially because the camera’s tracking state changes at runtime (tracking can become limited during fast motion or in poorly lit, featureless scenes). We’re happy that we got the AR experience working reliably.
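Handling tracking states largely comes down to deciding what the overlay should do as tracking quality changes. The following is a hedged sketch, not geAR’s actual code: it uses a local enum that mirrors the cases of ARKit’s `ARCamera.TrackingState` (including the `.relocalizing` reason added in ARKit 1.5) so the logic runs without a device, and `TagPresentation`, `presentation(for:)`, and the hint strings are hypothetical. The assumed policy is to hide information tags whenever tracking is not `.normal`.

```swift
// Local mirror of ARKit's ARCamera.TrackingState, so this sketch runs
// without ARKit. In an app you would switch on camera.trackingState directly.
enum TrackingState {
    enum Reason {
        case initializing, excessiveMotion, insufficientFeatures, relocalizing
    }
    case notAvailable
    case limited(Reason)
    case normal
}

// Hypothetical decision for the information-tag overlay.
enum TagPresentation: Equatable {
    case show
    case hide(hint: String)
}

// Assumed policy: only show tags when tracking is fully normal,
// because limited tracking can leave anchored content misplaced.
func presentation(for state: TrackingState) -> TagPresentation {
    switch state {
    case .normal:
        return .show
    case .notAvailable:
        return .hide(hint: "AR is not available right now.")
    case .limited(.initializing):
        return .hide(hint: "Move the phone slowly to start.")
    case .limited(.excessiveMotion):
        return .hide(hint: "Slow down.")
    case .limited(.insufficientFeatures):
        return .hide(hint: "Point at a brighter, more detailed area.")
    case .limited(.relocalizing):
        return .hide(hint: "Resuming the AR session.")
    }
}
```

In the app, the same switch would run on the `ARCamera` passed to the `session(_:cameraDidChangeTrackingState:)` observer callback. Because ARKit’s real `Reason` enum is imported from Objective-C, a production switch may also need an `@unknown default` case.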

Accomplishments that we're proud of

The app is truly AR in the sense that it’s augmenting reality. Many AR apps simply display a 3D model with a live camera feed behind it. Our app recognizes existing objects in the scene and provides contextual information relevant to the user.

We’re really happy with our custom audio waveform visualization. We use the AudioKit framework to analyze the audio that’s playing and gather the data that powers the waveform. The waveform is drawn with Core Animation and a custom path smoothing algorithm that makes the waves look fluid.
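The write-up doesn’t describe the custom smoothing algorithm itself. As a stand-in, here is a uniform Catmull-Rom spline, a common way to draw a smooth curve through a series of amplitude samples; it is not necessarily what geAR uses. `Point` and `catmullRomSmooth(_:samplesPerSegment:)` are hypothetical names, and plain `Double` coordinates keep the sketch free of UIKit and CoreGraphics.

```swift
// A 2D point with plain Doubles so the sketch runs without CoreGraphics.
struct Point: Equatable {
    var x: Double
    var y: Double
}

// Samples a uniform Catmull-Rom spline through `points`, emitting
// `samplesPerSegment` points per segment plus the final input point.
// The curve passes through every input point.
func catmullRomSmooth(_ points: [Point], samplesPerSegment: Int = 8) -> [Point] {
    guard points.count >= 2, samplesPerSegment > 0 else { return points }
    var result: [Point] = []
    for i in 0..<(points.count - 1) {
        // At the ends, repeat the first/last point as the missing neighbour.
        let p0 = points[max(i - 1, 0)]
        let p1 = points[i]
        let p2 = points[i + 1]
        let p3 = points[min(i + 2, points.count - 1)]
        for s in 0..<samplesPerSegment {
            let t = Double(s) / Double(samplesPerSegment)
            let t2 = t * t
            let t3 = t2 * t
            // Standard uniform Catmull-Rom basis; at t = 0 it returns b exactly.
            func blend(_ a: Double, _ b: Double, _ c: Double, _ d: Double) -> Double {
                return 0.5 * (2 * b
                    + (-a + c) * t
                    + (2 * a - 5 * b + 4 * c - d) * t2
                    + (-a + 3 * b - 3 * c + d) * t3)
            }
            result.append(Point(x: blend(p0.x, p1.x, p2.x, p3.x),
                                y: blend(p0.y, p1.y, p2.y, p3.y)))
        }
    }
    result.append(points[points.count - 1])
    return result
}
```

On iOS, the returned points could be added to a `UIBezierPath` with `addLine(to:)` and assigned to a `CAShapeLayer`’s `path` via `cgPath`. Because a Catmull-Rom curve passes through every sample, the waveform’s peaks stay where the audio data puts them (unlike a moving average, which flattens them), at the cost of slight overshoot between samples.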

We put a large emphasis on the user experience, and our user tests show that the app is intuitive to use.

What we learned

AR is powerful. While the effect is impressive on a mobile device, we believe the future is in “AR glasses” that provide a passive AR experience. Building apps like this makes the possibilities of widespread augmented reality a little easier to imagine.

What's next for geAR

We would like to enable a “mixed reality” experience by letting the app dictate which amplifiers to use with a setup. Users could plug their phone into the setup and simulate the amplifiers through the app. This would let musicians discover new combinations in an experience that mixes the real and digital worlds.
