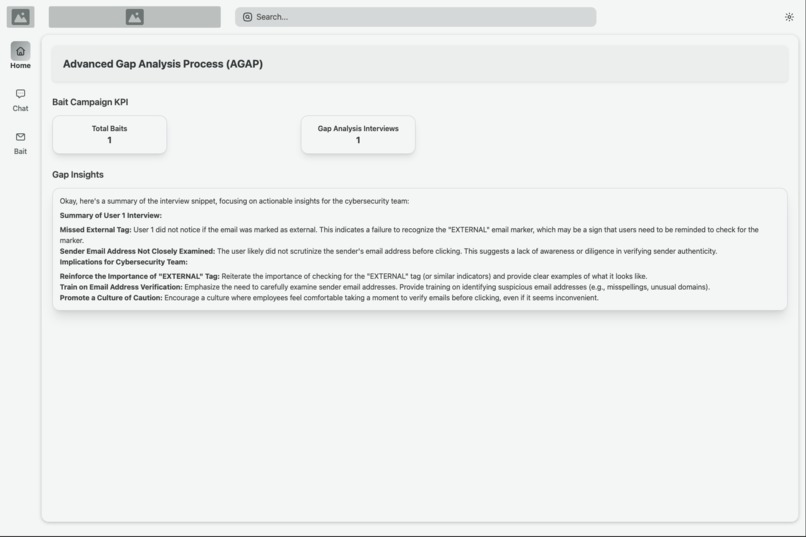

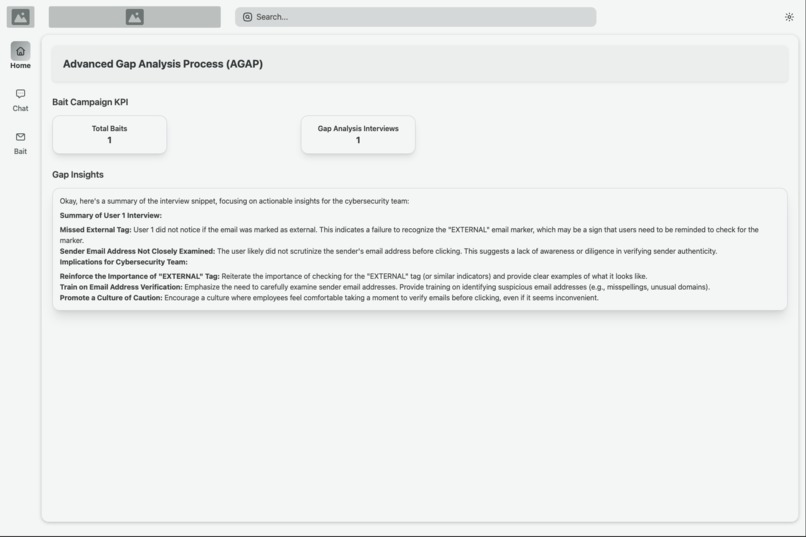

AGAP’s Gap Insights highlights key metrics and interview findings from the phishing campaign.


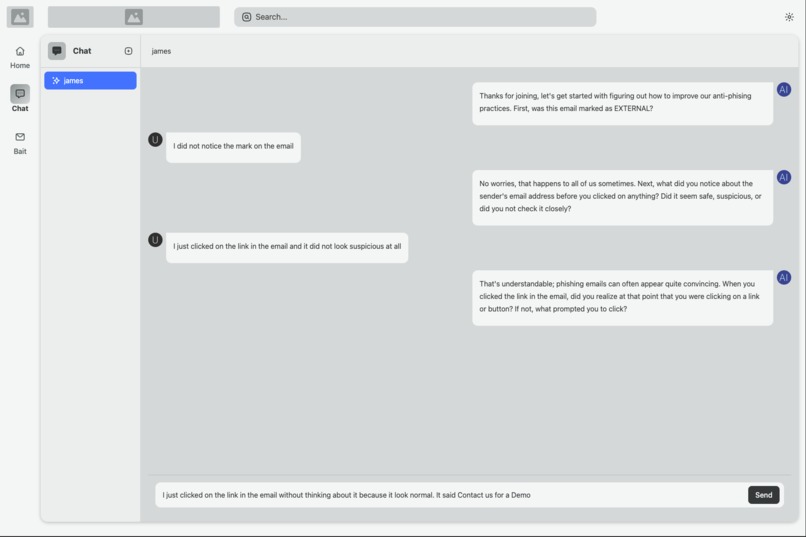

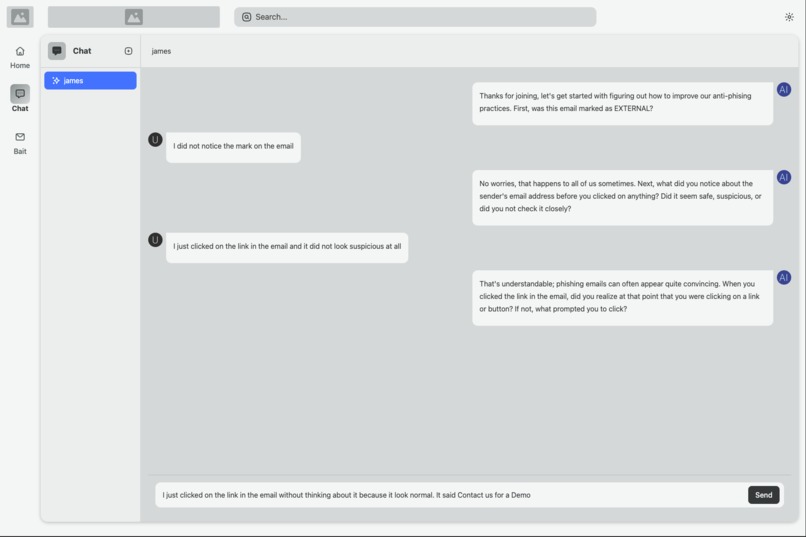

AGAP’s chat interface interviews a target about phishing behavior, uncovering insights for enhanced cyber defenses.


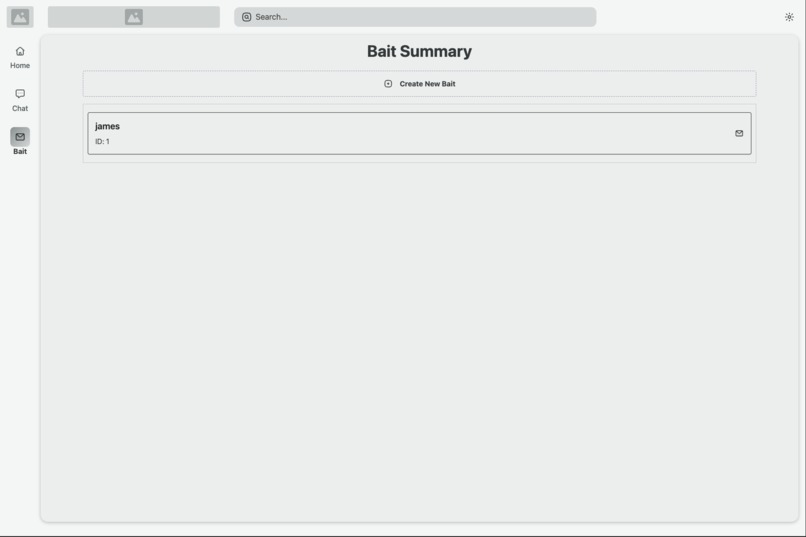

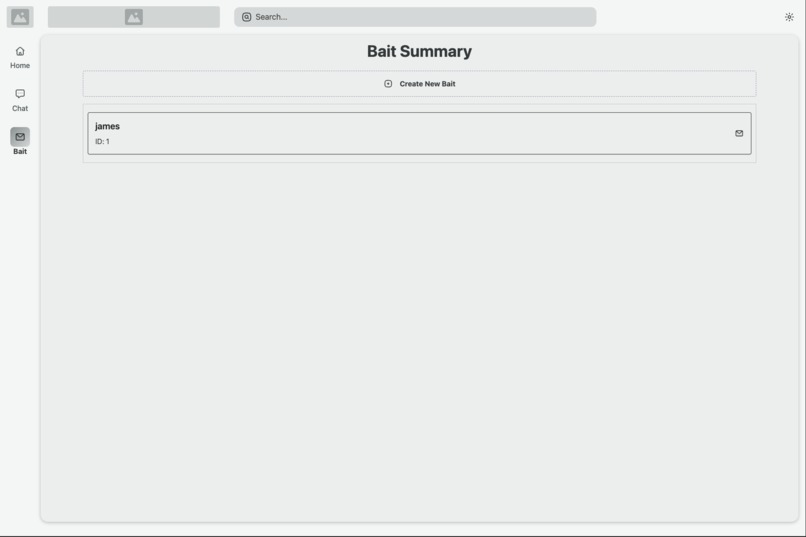

AGAP’s Bait Summary organizes phishing targets, streamlining campaign management for cybersecurity teams.


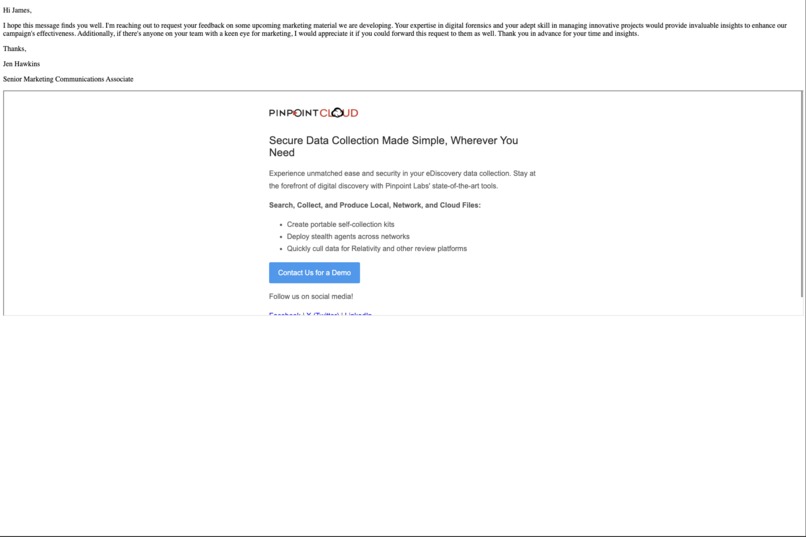

AGAP’s spear phishing email template uses targeted branding and a call-to-action link to entice recipients into clicking.


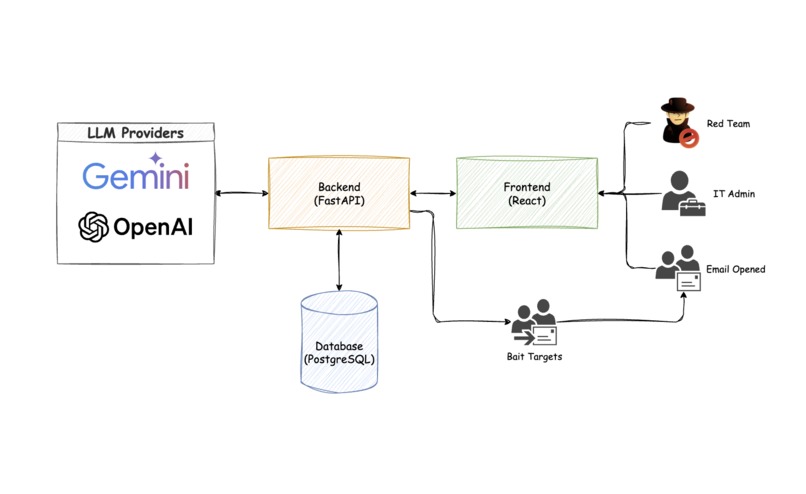

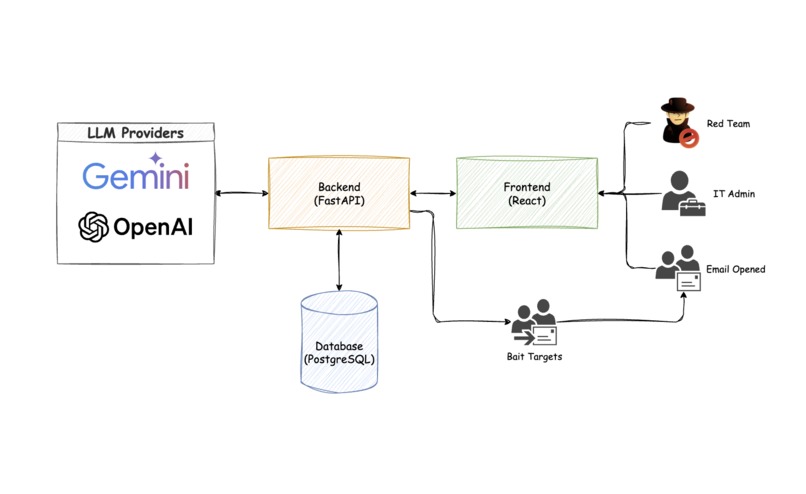

AGAP architecture: LLM providers, FastAPI backend, React frontend, and PostgreSQL, coordinating automated phishing tasks.

Inspiration

Tools like KnowBe4 and GoPhish provide valuable insights to cybersecurity professionals. Our solution, Advance Gap Analysis Process (AGAP), demonstrates how AI agents can add value to these systems with AI-driven strategies.

Phase 1 - AI Enhanced Bait

To start, the red team selects the named targets for whom bait will be generated. AGAP uses AI to parse the HTML of each target's LinkedIn profile and company webpage. Based on those insights, AGAP generates a personalized bait email for the target that matches their company's branding, styling, and communication style.

Phase 2 - AI Driven Interviews

Once a target clicks the bait email, they are redirected to AGAP’s Interview page. Here, the AI agent automatically loads the clicked email into its chat history and conducts a dynamic interview. The conversation is designed to uncover details such as whether the target noticed email markings, how they handle forwarding, whether they checked the sender address for authenticity, and whether they spotted any suspicious links. This AI-guided dialogue not only engages the target but also extracts critical information that can be used to refine the bait campaign.
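As a rough illustration of loading the clicked email into the chat history, the sketch below seeds a conversation with the email before the first question is asked. The role/content message layout is the convention used by many chat-style LLM APIs and is an assumption here, as are the function name `seed_chat_history` and its parameters; AGAP's actual message format is not described above.

```python
def seed_chat_history(system_prompt: str, email_subject: str, email_body: str) -> list[dict]:
    """Build the opening chat history for an interview (hypothetical helper).

    The clicked email is placed in the history so the interviewer model can
    refer to its subject and body when asking follow-up questions.
    """
    email_context = (
        "The target clicked the following simulated phishing email.\n"
        f"Subject: {email_subject}\n"
        f"Body:\n{email_body}"
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "system", "content": email_context},
    ]


history = seed_chat_history(
    system_prompt="You are conducting a short security-awareness interview.",
    email_subject="Action required: update your profile",
    email_body="Please review your details using the link below.",
)
```

Each later turn of the interview would then be appended to `history` before the next model call.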

Phase 3 - AI Powered Summary & Analytics

Manually reviewing all interviews from Phase 2 would be resource-intensive for a large organization. To streamline this, AGAP uses AI to aggregate and analyze the interview data. It then delivers a concise summary along with key performance indicators (KPIs) that assess the bait campaign’s effectiveness, empowering the cybersecurity team with actionable insights.
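The aggregation step can be pictured with a small sketch. It assumes the AI has already reduced each interview to a structured record; the `InterviewRecord` fields and the KPI names below are hypothetical and chosen only for illustration, since the exact KPIs AGAP reports are not listed here.

```python
from dataclasses import dataclass


@dataclass
class InterviewRecord:
    """One target's outcome (all field names are illustrative assumptions)."""
    target_id: str
    clicked: bool
    completed_interview: bool
    checked_sender: bool
    forwarded_email: bool


def _rate(numerator: int, denominator: int) -> float:
    """Return a ratio, or 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def campaign_kpis(records: list[InterviewRecord]) -> dict[str, float]:
    """Aggregate per-target records into campaign-level KPIs."""
    clicked = [r for r in records if r.clicked]
    interviewed = [r for r in clicked if r.completed_interview]
    return {
        "click_rate": _rate(len(clicked), len(records)),
        "interview_completion_rate": _rate(len(interviewed), len(clicked)),
        "checked_sender_rate": _rate(
            sum(r.checked_sender for r in interviewed), len(interviewed)
        ),
        "forwarded_rate": _rate(
            sum(r.forwarded_email for r in interviewed), len(interviewed)
        ),
    }


records = [
    InterviewRecord("t1", True, True, False, True),
    InterviewRecord("t2", True, False, False, False),
    InterviewRecord("t3", False, False, False, False),
    InterviewRecord("t4", False, False, False, False),
]
kpis = campaign_kpis(records)
```

Rates for interview findings are computed only over targets who completed the interview, so unanswered interviews do not dilute them.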

How we built it

Our system is built on a robust stack that includes:

- Postgres: for storing chat logs and bait emails

- Python with FastAPI: powering the backend and integrating our AI modules for text analysis and dynamic interactions

- React: forming the client interface

By embedding AI components throughout the stack, we ensure that data-driven decision-making is at the core of our cybersecurity strategy.

Challenges we ran into

Large Webpage Analysis: Extensive webpages such as LinkedIn profiles strain large language models (LLMs), which often perform poorly on them. In practice, the raw HTML is too large for anything but very-large-context models such as Google's Gemini Flash. We mitigated this by running a string comparison between the target's HTML and a baseline LinkedIn page, then prompting the AI only on the differences.
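One way to implement this kind of baseline comparison is with Python's standard `difflib` module. The sketch below is a minimal line-level version under that assumption; the write-up does not say which comparison method AGAP uses, and the function name `page_specific_lines` is hypothetical.

```python
import difflib


def page_specific_lines(target_html: str, baseline_html: str) -> list[str]:
    """Return the lines of the target page that do not appear in the baseline.

    Shared markup (navigation, footers, scripts) matches the baseline and is
    dropped, leaving only the content specific to the target page.
    """
    target = [ln.strip() for ln in target_html.splitlines() if ln.strip()]
    baseline = [ln.strip() for ln in baseline_html.splitlines() if ln.strip()]
    matcher = difflib.SequenceMatcher(a=baseline, b=target, autojunk=False)
    unique: list[str] = []
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # "insert" and "replace" mark spans present only in the target page.
        if tag in ("insert", "replace"):
            unique.extend(target[j1:j2])
    return unique


baseline_page = "<html>\n<nav>Home</nav>\n<footer>Footer</footer>\n</html>"
target_page = (
    "<html>\n<nav>Home</nav>\n<h1>Jane Doe</h1>\n"
    "<p>Engineer at Acme</p>\n<footer>Footer</footer>\n</html>"
)
diff_lines = page_specific_lines(target_page, baseline_page)
```

Only the lines in `diff_lines` would then be placed in the prompt, which keeps the input far smaller than the full page.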

Guiding AI During Interviews: Keeping the LLM on rails during the target interviews requires a prompt that balances curiosity and structure. Curiosity lets the interview uncover the deeper reasons why targets took the bait, while structure keeps it focused on the questions of chief importance to the cybersecurity team. We iterated on the prompt to strike the right balance, ensuring that the interview process remained both investigative and efficient.
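A simple way to picture the curiosity/structure trade-off is a prompt that lists the topics still to be covered and caps the number of open-ended follow-ups. The sketch below is an assumption-laden illustration, not AGAP's actual prompt: the topic list is taken from the Phase 2 description, while the keyword matching, the function names, and the `max_follow_ups` parameter are hypothetical.

```python
# Required topics (from the Phase 2 description) mapped to crude keyword hints.
# Keyword matching is a stand-in for whatever coverage check a real system uses.
REQUIRED_TOPICS = {
    "email markings": ("marking", "banner"),
    "forwarding behavior": ("forward",),
    "sender address authenticity": ("sender",),
    "suspicious links": ("link", "url"),
}


def uncovered_topics(transcript: list[str]) -> list[str]:
    """Return required topics not yet mentioned anywhere in the transcript."""
    text = " ".join(transcript).lower()
    return [
        topic
        for topic, keywords in REQUIRED_TOPICS.items()
        if not any(keyword in text for keyword in keywords)
    ]


def build_interview_prompt(remaining: list[str], max_follow_ups: int = 2) -> str:
    """Compose a system prompt: structure via topics, curiosity via follow-ups."""
    topics = "\n".join(f"- {topic}" for topic in remaining) or "- (all topics covered)"
    return (
        "You are interviewing an employee who clicked a simulated phishing email "
        "in an authorized security-awareness exercise.\n"
        f"Topics still to cover:\n{topics}\n"
        f"Ask at most {max_follow_ups} open-ended follow-up questions per topic "
        "to understand why they acted as they did, then move on."
    )


transcript = ["I did not look at the sender.", "I forwarded it to a colleague."]
remaining = uncovered_topics(transcript)
prompt = build_interview_prompt(remaining)
```

Raising `max_follow_ups` favors curiosity; lowering it favors a shorter, more structured interview.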

Built With

- fastapi

- gemini-flash-2.0

- gpt-4o

- postgresql

- python

- react

- rspack

- tanstack-query

- tanstack-router
