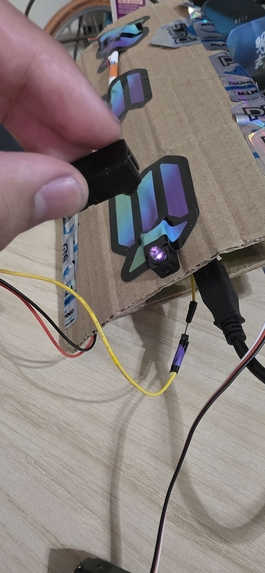

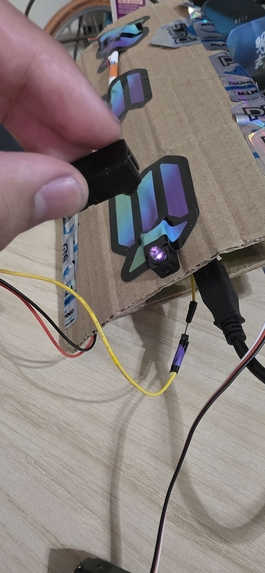

IR sensor emitter

IR sensor receiver (in hand) and IR sensor receiver on the bottom

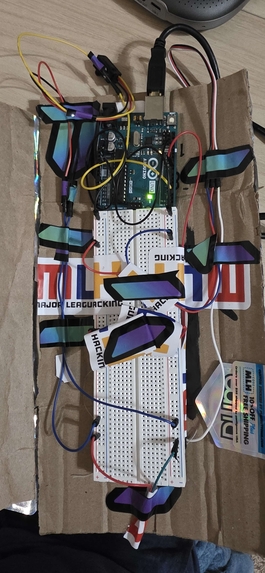

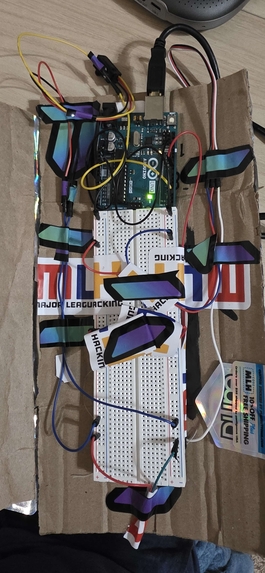

The Arduino

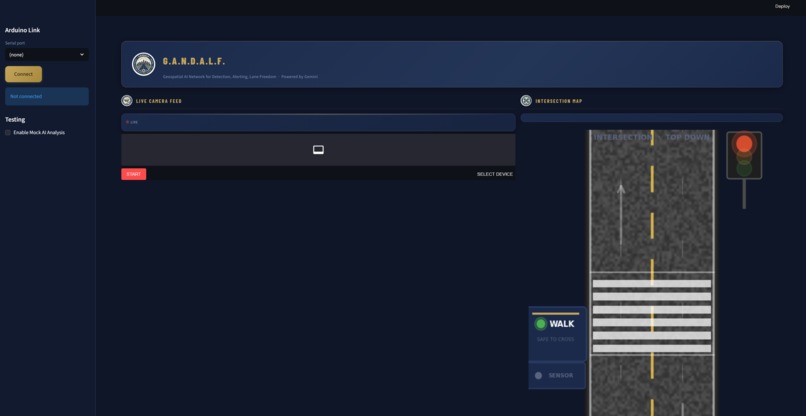

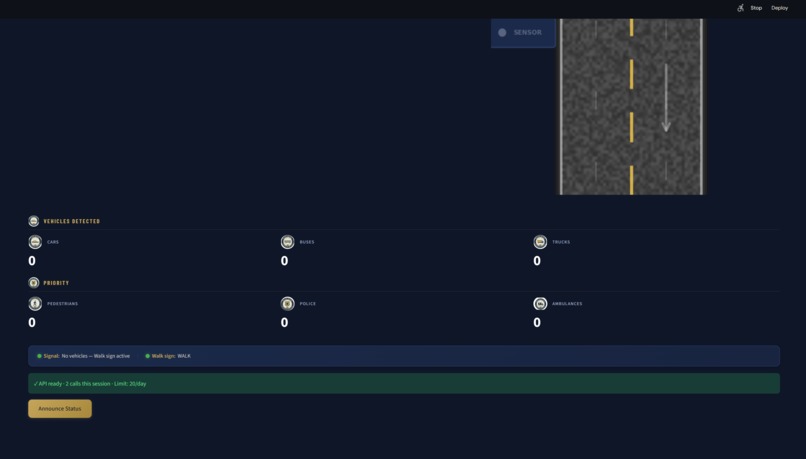

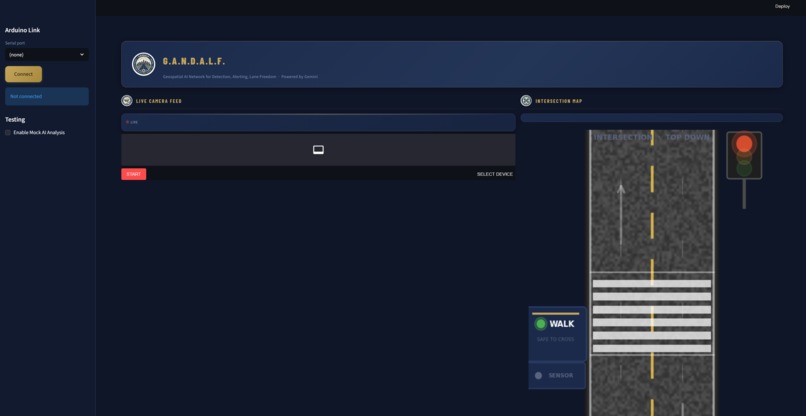

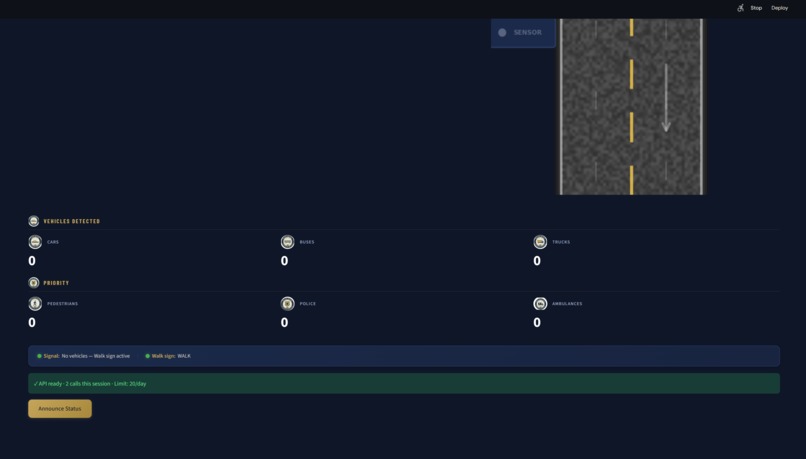

Home page (every time a type of vehicle such as a car, police car, or ambulance appears, the system recognizes it and displays it in the app)

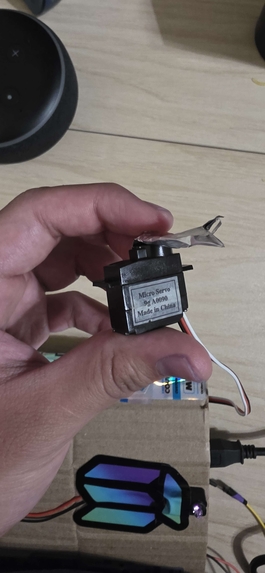

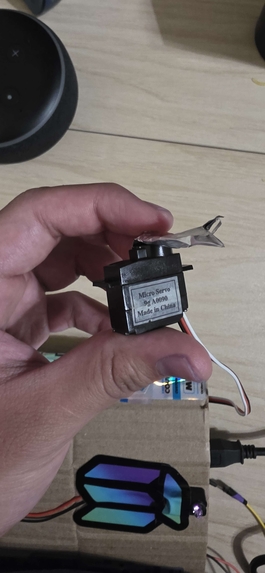

Micro servo that displays a Walk and/or Don't Walk sign for pedestrians.

Echo Dot for highway patrol (Walk, Stop, etc.)

Counter that identifies each vehicle, collecting data to help reduce road congestion

Inspiration

Every minute an ambulance is stuck in traffic, the survival rate for cardiac arrest victims decreases by 10%. Emergency responders lose an average of 2 minutes per trip to traffic congestion, and in some urban areas that figure rises to as much as 5 minutes. Enterprise solutions exist: Los Angeles' 4,850 adaptive signals save 9.5 million driver hours annually, and the City Brain in Hangzhou, China reduced emergency response times by 50%. The problem is cost. Upgrading a single intersection costs about $10,000 in hardware, plus $5,000 for a GPS transponder in each vehicle, and companies like Miovision, Econolite, and Kimley-Horn sell this technology exclusively to well-funded metro governments that can afford it. Rolla, Missouri is not going to have a budget like Pittsburgh's anytime soon, so we asked ourselves: "What if we could do the same job as that $10 million solution for the price of a $100 webcam?" What if we could deploy technology that any community could afford, with no transponders, no custom models, and no special hardware? That's GANDALF, the Gemini Analysis Network for Dynamic Adaptive Lane Flow.

What it does

GANDALF is a real-time AI traffic analysis and emergency vehicle preemption system. GANDALF sees, thinks, speaks, and acts.

Sees: A webcam streams a live feed to the multimodal vision API of Google Gemini 2.5 Flash. Classification is zero-shot, with no training data and no custom models. Detected classes include cars, trucks, buses, motorcycles, ambulances, fire trucks, police cars, pedestrians, and cyclists. IR breakbeam sensors provide ground-truth vehicle counts for cross-modal verification.

Thinks: A structured decision engine applies confidence-weighted logic to the JSON returned by the Gemini API. An emergency vehicle detected at 30% confidence or higher triggers a soft alert telling all traffic to yield; at 80% or higher, the entire intersection locks down. Temporal analysis across sequential frames tracks congestion trends, and sensor fusion logic cross-checks the AI's visual vehicle count against the IR sensor counts.

Speaks: ElevenLabs Flash v2.5 generates dynamic voice alerts in under 150 milliseconds. The alerts are not pre-recorded; they are synthesized in real time from text, for example: "Attention: Ambulance detected approaching from the north. All vehicles yield immediately." Each alert is broadcast through a Google Nest Mini.

Acts: GANDALF controls the physical infrastructure autonomously. Micro servo barriers block or clear roads, and a Grove LCD changes color and displays real-time text. Every 60 seconds, Gemini also produces a natural-language traffic intelligence briefing, something a conventional computer vision detector cannot do, which ElevenLabs then reads aloud.

GANDALF's local logic runs entirely on a Raspberry Pi connected to the webcam, with vision and speech handled by cloud APIs. The total hardware cost is less than $100.
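The two-tier confidence logic in "Thinks" can be sketched as a small pure function. This is a minimal illustration, assuming Gemini's JSON has already been parsed into a list of emergency detections, each carrying a confidence between 0 and 1. The class, field, and state names (`EmergencyDetection`, `decide_action`, `"soft_alert"`, `"lockdown"`) are hypothetical; only the 30% and 80% thresholds come from the description above.

```python
from dataclasses import dataclass
from typing import List

# Thresholds from the description; everything else here is hypothetical.
SOFT_ALERT_THRESHOLD = 0.30
LOCKDOWN_THRESHOLD = 0.80


@dataclass
class EmergencyDetection:
    vehicle_type: str   # e.g. "ambulance", "fire_truck", "police_car"
    direction: str      # e.g. "north"
    confidence: float   # 0.0-1.0, as reported in the parsed model output


def decide_action(detections: List[EmergencyDetection]) -> str:
    """Map the highest-confidence emergency detection to an intersection state."""
    if not detections:
        return "normal"
    top = max(d.confidence for d in detections)
    if top >= LOCKDOWN_THRESHOLD:
        return "lockdown"    # close every approach and clear the intersection
    if top >= SOFT_ALERT_THRESHOLD:
        return "soft_alert"  # voice/LCD warning asking traffic to yield
    return "normal"
```

Keeping the decision in a pure function like this makes it easy to unit-test the thresholds without a camera or network connection.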

How we built it

The core architecture is a Python event loop running on the Raspberry Pi that manages the following subsystems:

Vision Pipeline: OpenCV captures a frame from the webcam every 5-6 seconds and sends it to Google Gemini 2.5 Flash through the google-genai SDK. We wrote a detailed system prompt (codename: ATLAS, the Advanced Traffic Logic and Analysis System) that instructs Gemini to return JSON conforming to a Pydantic schema. Each response includes vehicle types and directions, emergency vehicles with confidence values, pedestrian locations, congestion level, weather and visibility, and hardware control commands.

Voice Synthesis: ElevenLabs Flash v2.5 converts the voice scripts written by Gemini into speech using the "Daniel" voice, which is deep and authoritative, like a BBC news anchor. The audio is streamed over Bluetooth to a Google Nest Mini smart speaker.

Sensor Fusion: Three IR breakbeam sensors connected to the Raspberry Pi's GPIO pins count vehicles approaching the intersection. The decision engine compares these counts with Gemini's visual counts, adding a layer of verification.

Physical Actuation: Three micro servos (on GPIO 18, 13, and 19) move three barrier arms made from popsicle sticks, driven by the hardware control commands in Gemini's JSON output.

Dashboard: A Streamlit web interface displays the current traffic state and vehicle data in real time.

Development: We completed the entire project in 36 hours, developing on laptops first and then migrating to the Raspberry Pi for hardware integration.
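Because each Gemini reply drives real hardware, it helps to validate it before acting on it. The team used a Pydantic schema; the sketch below is a stdlib-only stand-in that shows the same idea of rejecting malformed or out-of-range replies. The field names (`vehicle_count`, `congestion_level`, `emergency_confidence`, `servo_commands`) and the allowed congestion values are hypothetical, not ATLAS's actual schema.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, Optional

# Hypothetical enum; the real schema's values may differ.
ALLOWED_CONGESTION = {"low", "moderate", "high"}


@dataclass
class FrameAnalysis:
    vehicle_count: int
    congestion_level: str
    emergency_confidence: float
    servo_commands: Dict[str, str] = field(default_factory=dict)


def parse_analysis(raw: str) -> Optional[FrameAnalysis]:
    """Return a validated FrameAnalysis, or None if the reply is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # malformed JSON: skip this frame or re-query
    if not isinstance(data, dict):
        return None
    try:
        analysis = FrameAnalysis(
            vehicle_count=int(data["vehicle_count"]),
            congestion_level=str(data["congestion_level"]),
            emergency_confidence=float(data["emergency_confidence"]),
            servo_commands=dict(data.get("servo_commands", {})),
        )
    except (KeyError, TypeError, ValueError):
        return None  # missing field or wrong type
    if analysis.congestion_level not in ALLOWED_CONGESTION:
        return None
    if not 0.0 <= analysis.emergency_confidence <= 1.0:
        return None
    return analysis
```

Returning `None` instead of raising lets the event loop simply keep the previous state when a frame's reply fails validation.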

Challenges we ran into

Gemini Latency vs. Real-Time Requirements: Multimodal API calls take 1.5-3 seconds to complete. At 40 mph (about 58.7 ft/s), a vehicle travels roughly 90-175 feet in that time. We worked around this by using Gemini for overall intersection awareness while relying on the IR sensors to detect the presence of individual cars.

Structured Output Reliability: Getting Gemini to return well-formed JSON matching the Pydantic schema took significant prompt engineering. Initially, about 15% of responses were malformed JSON. Including examples of the expected JSON in the system prompt fixed this.

Bluetooth Audio Pairing: Pairing the Google Nest Mini to the Raspberry Pi as a Bluetooth speaker was unexpectedly difficult. We wrote a reconnection watchdog that automatically re-pairs the device when audio output fails.

Servo Jitter: With the standard GPIO library's PWM functions, the servos jittered even at rest. Switching to hardware-timed PWM from the pigpiod daemon, through gpiozero's pigpio pin factory, eliminated the jitter completely.

ElevenLabs API Rate Limits: The ElevenLabs API plan we used has a monthly limit of 10,000 credits, and credits are consumed per character of synthesized text, so generating dynamic audio alerts every few seconds would exhaust them quickly. We implemented a cache keyed by a hash of the alert text that reuses previously generated audio, reducing API calls by 70%.
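The audio cache can be sketched as a dictionary keyed by a SHA-256 hash of the alert text, so a repeated alert never triggers a second TTS call. This is an illustrative in-memory version: the `synthesize` callable stands in for whatever wraps the ElevenLabs request, and the whitespace/case normalization is an assumption added here so trivially different strings share one entry. A disk-backed variant would also survive restarts.

```python
import hashlib
from typing import Callable, Dict


class AlertAudioCache:
    """Reuse synthesized audio for alert text that has been spoken before."""

    def __init__(self, synthesize: Callable[[str], bytes]) -> None:
        self._synthesize = synthesize  # hypothetical wrapper around the TTS API
        self._audio: Dict[str, bytes] = {}
        self.api_calls = 0

    @staticmethod
    def key(text: str) -> str:
        # Collapse whitespace and ignore case before hashing (an assumption).
        normalized = " ".join(text.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_audio(self, text: str) -> bytes:
        k = self.key(text)
        if k not in self._audio:
            # Cache miss: this is the only path that spends API credits.
            self._audio[k] = self._synthesize(text)
            self.api_calls += 1
        return self._audio[k]
```

Since GANDALF's alerts come from a fairly small set of situations (vehicle type, direction, action), many of them repeat verbatim, which is what makes a text-keyed cache effective.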

Accomplishments that we're proud of

GANDALF is a full-stack AI system that bridged the digital-physical divide in 36 hours. Most hackathon projects live entirely on a screen. GANDALF's output is multi-sensory: you can see, hear, and even feel it as the servos move, the LCD glows in color, and the speaker announces alerts.

Our zero-shot emergency vehicle detection requires no training images at all. Conventional detectors such as YOLOv8, SSD, and Faster R-CNN need thousands of images labeled "ambulance" to learn that class. We replaced that entire machine learning pipeline with a carefully crafted natural-language prompt.

Our sensor fusion architecture combines Gemini's AI vision with infrared breakbeam sensors as ground truth, providing a level of verification that neither system can achieve on its own.

Our periodic traffic intelligence briefing is the secret sauce. Every 60 seconds, GANDALF sends its last 10 analyses to Gemini and asks for a natural-language summary, for example: "Traffic has increased 40% on northbound approach over the last 5 minutes, likely due to school dismissal. Recommend extending green phase by 15 seconds." That is beyond what a detector like YOLO can provide: it shows AI delivering intelligence, not just bounding boxes.
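The briefing mechanism boils down to a fixed-size history plus a timer. The sketch below keeps the last 10 analyses in a `collections.deque` and builds a summary prompt at most once every 60 seconds; both numbers come from the text. The class name, the prompt wording, and the injectable `clock` (used only to make the timing testable) are hypothetical, and sending the prompt to Gemini is left to the caller.

```python
import json
import time
from collections import deque
from typing import Callable, Deque, Optional

BRIEFING_INTERVAL_S = 60  # "every 60 seconds"
HISTORY_SIZE = 10         # "the last 10 analyses"


class BriefingScheduler:
    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._history: Deque[dict] = deque(maxlen=HISTORY_SIZE)
        self._last_briefing = clock()

    def record(self, analysis: dict) -> None:
        # With maxlen set, the oldest analysis is dropped automatically.
        self._history.append(analysis)

    def maybe_build_prompt(self) -> Optional[str]:
        """Return a briefing prompt if the interval has elapsed, else None."""
        now = self._clock()
        if now - self._last_briefing < BRIEFING_INTERVAL_S or not self._history:
            return None
        self._last_briefing = now
        return (
            "You are a traffic analyst. Summarize trends in these "
            f"{len(self._history)} sequential intersection analyses and "
            "recommend signal timing changes:\n"
            + json.dumps(list(self._history), indent=2)
        )
```

Using a monotonic clock avoids spurious or skipped briefings if the Pi's wall-clock time is adjusted by NTP.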

What we learned

Prompt engineering is the new model training. We spent more time crafting our Gemini prompt than on any other part of the codebase. The difference between a loose prompt and a well-constructed one was the difference between arbitrary free-text responses and well-structured JSON with actionable hardware commands. For multimodal AI, the prompt is the model.

Physical computing humbles you very quickly. Software developers tend to take deterministic execution for granted. Hardware has timing constraints, electrical noise, mechanical tolerances, and failure modes that no amount of well-written code can abstract away. Servo jitter, Bluetooth dropouts, and I2C addressing issues taught us more about systems engineering in one weekend than a semester of lectures.

The gap between a prototype and a production codebase is real, and that's perfectly fine. Our cloud-dependent architecture would never be suitable for a production traffic safety system because of its latency, internet dependency, and energy costs, but that's not the point of the project. The point is to demonstrate that zero-shot multimodal AI can replace both traditional detection model training and traditional hardware detection solutions in solving the preemption problem.

Cross-modal verification was a big part of the project, too. When the IR sensors counted 5 vehicles but Gemini detected only 3, we were forced to think about failure modes such as occlusion. A real-world safety-critical system would need redundancy, but even a very simple fusion layer taught us a lot and gave us a deep appreciation for why autonomous vehicles (AVs) combine lidar, radar, and cameras.
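A very simple fusion layer of the kind described can be sketched as a count comparison that trusts the IR breakbeam as ground truth and flags disagreements. The one-vehicle tolerance and the explanatory notes are assumptions for illustration; the function and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FusionResult:
    ir_count: int
    vision_count: int
    agreed: bool
    fused_count: int
    note: str


def fuse_counts(ir_count: int, vision_count: int, tolerance: int = 1) -> FusionResult:
    """Cross-check the visual vehicle count against the IR breakbeam count."""
    diff = ir_count - vision_count
    if abs(diff) <= tolerance:
        return FusionResult(ir_count, vision_count, True, ir_count, "counts agree")
    if diff > 0:
        # The beam counted more vehicles than the camera saw: likely occlusion.
        note = "vision undercount (possible occlusion)"
    else:
        # The camera saw more: vehicles queued before the beam, or a false positive.
        note = "IR undercount (vehicles not yet past the beam, or vision false positive)"
    # Keep the IR count as ground truth but flag the disagreement for review.
    return FusionResult(ir_count, vision_count, False, ir_count, note)
```

For the 5-versus-3 case above, this returns a disagreement flagged as a possible occlusion, with a fused count of 5.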

What's next for G.A.N.D.A.L.F.

Edge deployment: Migrate the vision pipeline from the cloud Gemini API to a locally deployed model (Gemma 3 4B or Qwen2.5-VL 7B) that needs no internet connection, with the goal of cutting latency from seconds to milliseconds. The cloud API would then act as a higher-level coordinator across multiple intersections.

Multi-intersection coordination: Network GANDALF nodes so that upstream intersections notify downstream intersections of an approaching ambulance, creating a rolling green wave before the ambulance arrives.

Real traffic validation: Work with the civil engineering department at Missouri S&T to validate GANDALF's performance on real intersection camera feeds.

Vehicle-to-Everything (V2X) integration: Add V2X as an additional input that complements, rather than replaces, computer vision. Not every vehicle will carry a transponder, but every vehicle in view can be seen by the camera.

Open-source release: Release GANDALF as a turnkey system that any city can deploy with a Raspberry Pi and a webcam, not just cities that can afford enterprise solutions. Smart traffic infrastructure should not be limited to those who can afford it; it should be available to every community.
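The green-wave idea mostly reduces to arithmetic: given the road distance to each downstream node and the ambulance's speed, an upstream node can estimate arrival times and tell each node when to start clearing traffic. None of this exists in GANDALF yet; the sketch below is a hypothetical illustration, and the node IDs, the 10-second lead time, and the message shape are all assumptions.

```python
from dataclasses import dataclass
from typing import List

MPH_TO_FT_PER_S = 5280 / 3600  # 1 mph is about 1.467 ft/s


@dataclass
class Downstream:
    node_id: str
    distance_ft: float  # road distance from the notifying intersection


@dataclass
class PreemptNotice:
    node_id: str
    eta_s: float       # expected seconds until the ambulance arrives
    clear_at_s: float  # when the node should begin clearing traffic


def plan_green_wave(
    nodes: List[Downstream], speed_mph: float, lead_time_s: float = 10.0
) -> List[PreemptNotice]:
    """Compute per-node arrival estimates, nearest intersection first."""
    if speed_mph <= 0:
        raise ValueError("speed must be positive")
    speed_fps = speed_mph * MPH_TO_FT_PER_S
    notices = []
    for node in sorted(nodes, key=lambda n: n.distance_ft):
        eta = node.distance_ft / speed_fps
        # Start clearing lead_time_s before arrival, but never in the past.
        notices.append(PreemptNotice(node.node_id, eta, max(0.0, eta - lead_time_s)))
    return notices
```

At 40 mph, an intersection 1,760 feet (one third of a mile) downstream would get an ETA of 30 seconds and be told to start clearing at 20 seconds.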

Built With

- c+

- claude

- elevenlabs

- gem

- python

- raspberry-pi
