-

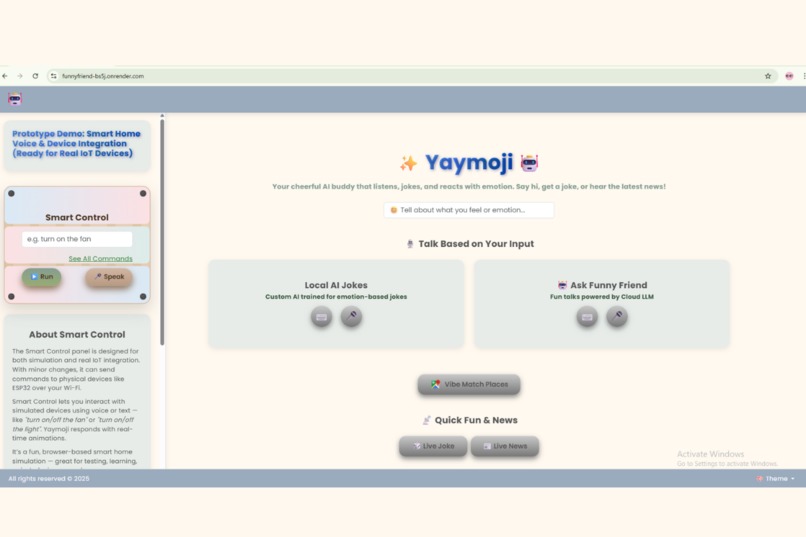

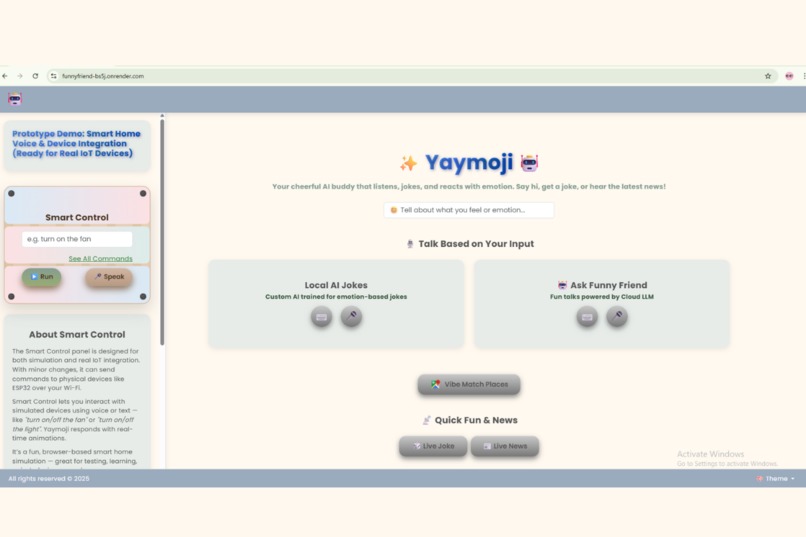

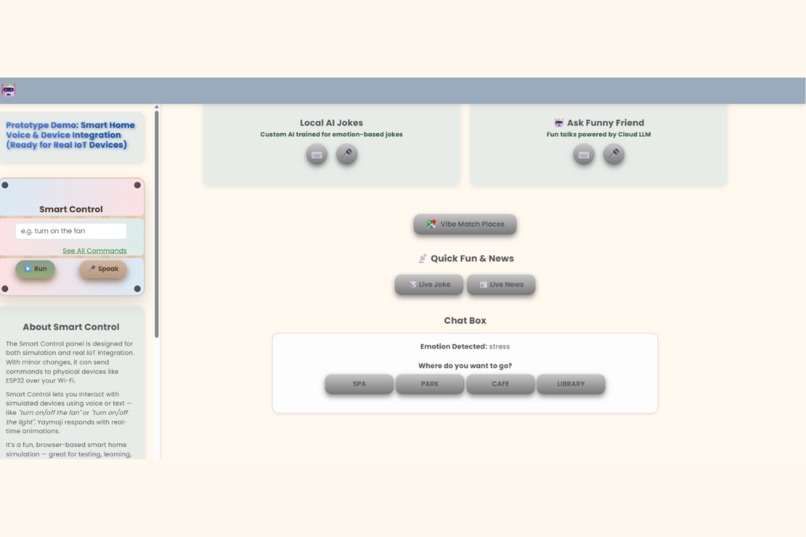

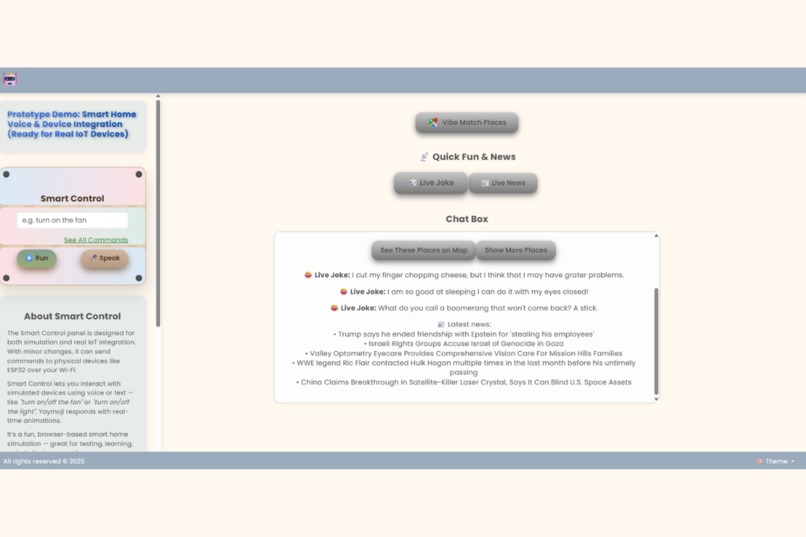

Homepage UI (Browser Version) "Yaymoji’s browser-based assistant welcomes users with voice or text — ready to detect emotions and help."

-

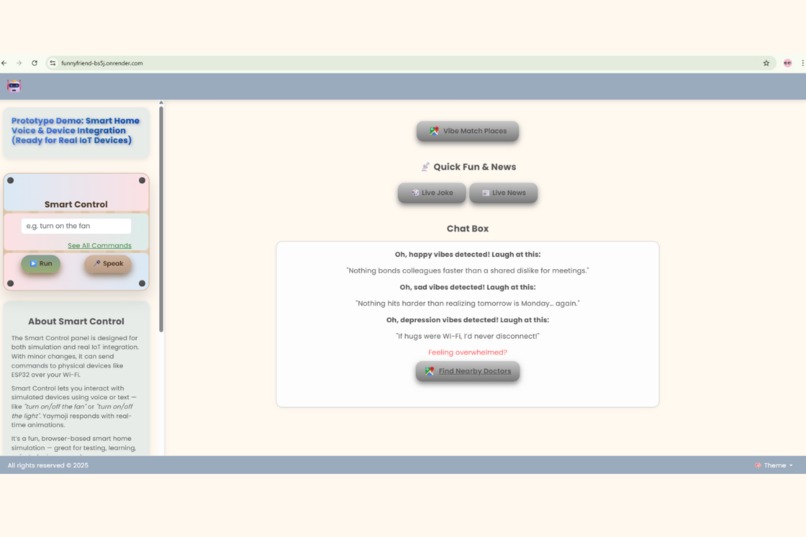

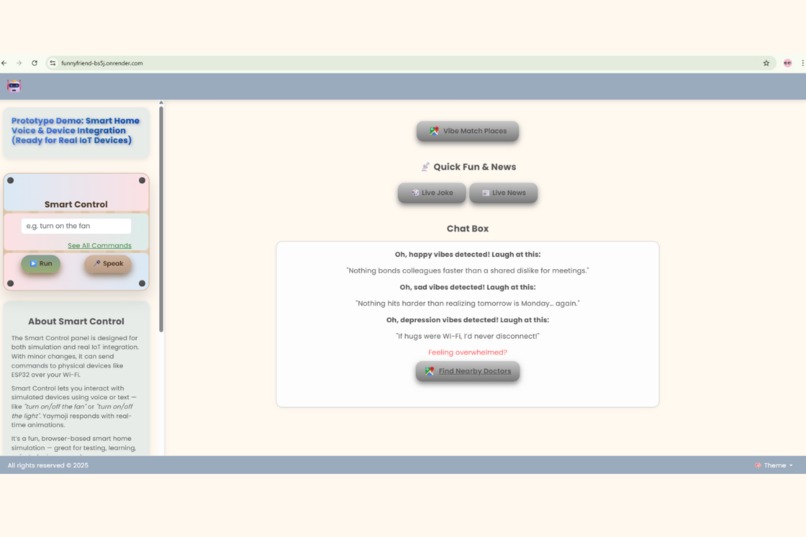

Jokes & Doctor Suggestions by Emotion "Based on your mood, Yaymoji offers empathetic jokes and suggests nearby doctors using Google Maps."

-

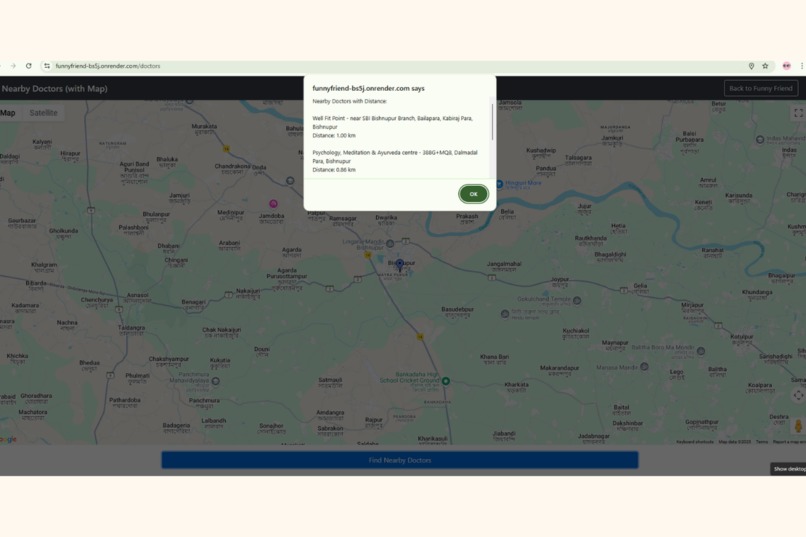

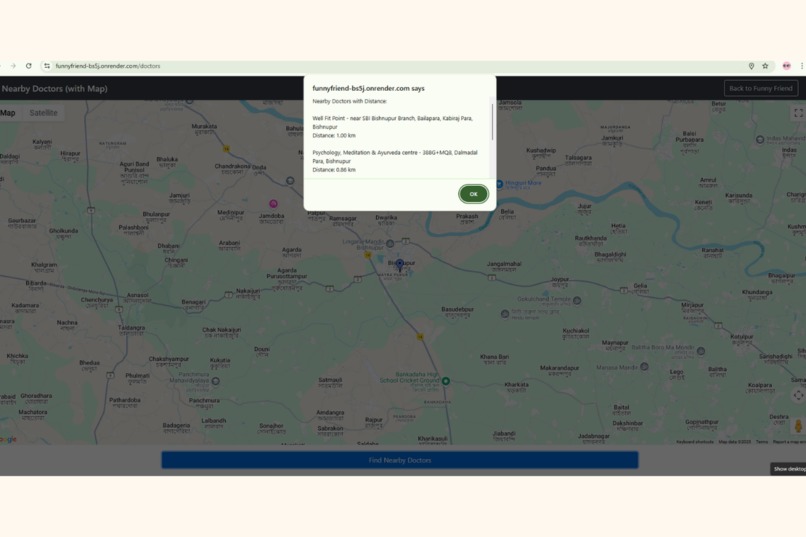

Map View of Nearby Doctors "Google Maps integration lets users see nearby doctors based on their emotional state."

-

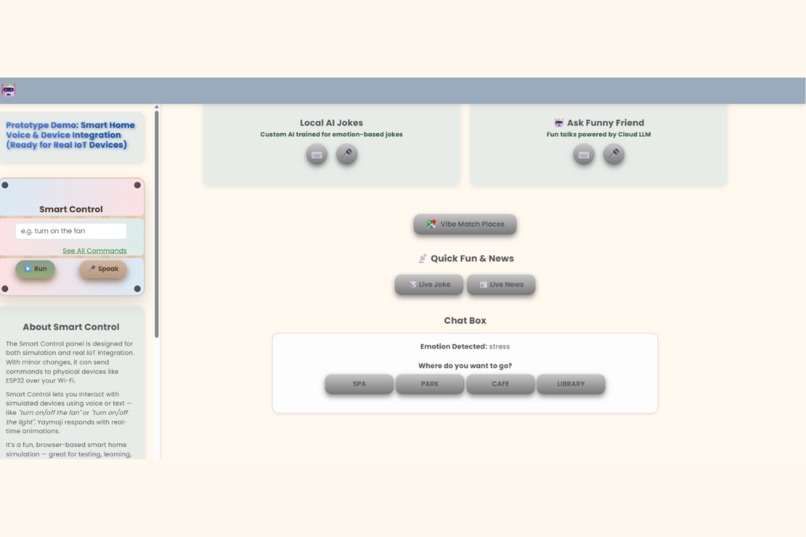

Place Suggestions by Emotion "Just type how you feel — and Yaymoji finds vibe-matching places nearby with Google Maps integration."

-

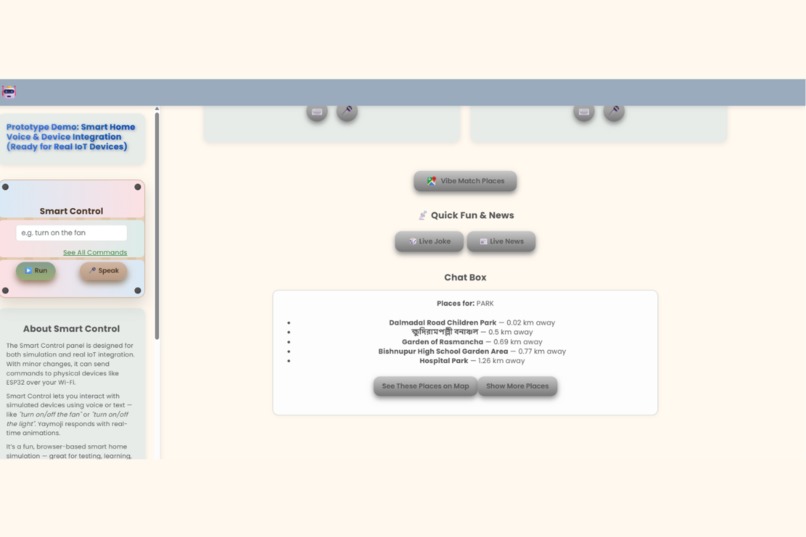

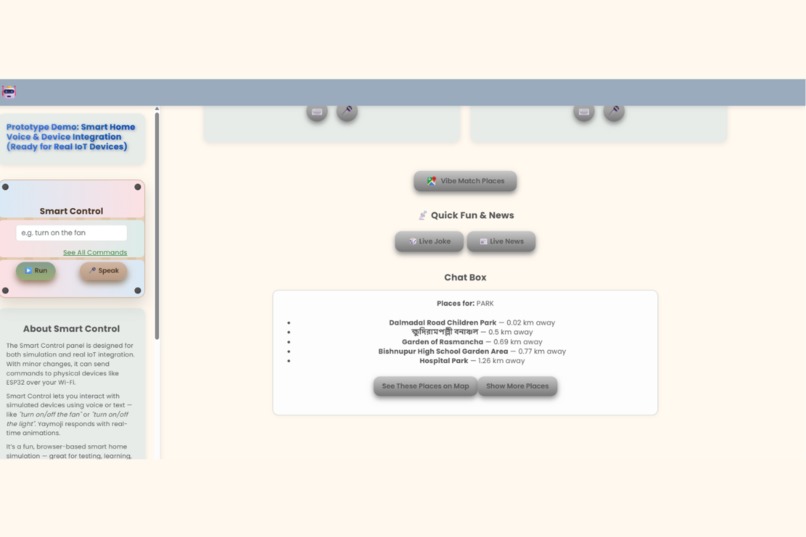

Vibe-Matching Places List "A curated list of nearby parks, spas, and more — tailored to your mood, shown directly in the browser."

-

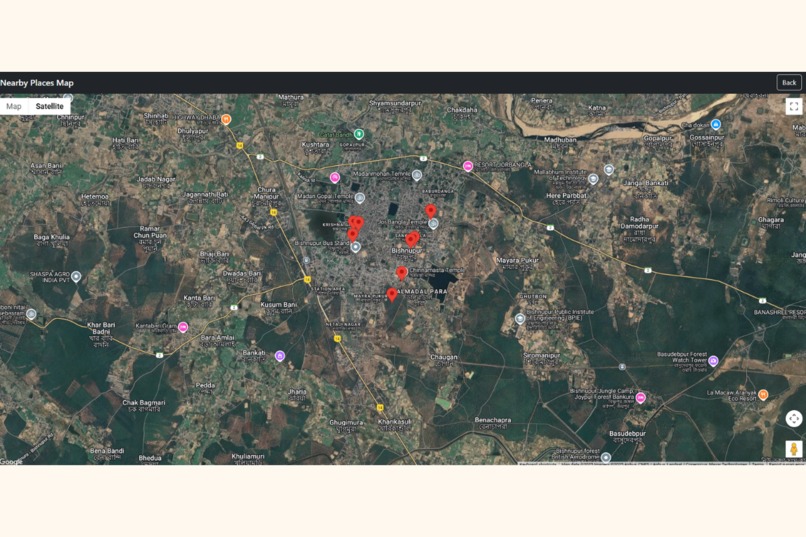

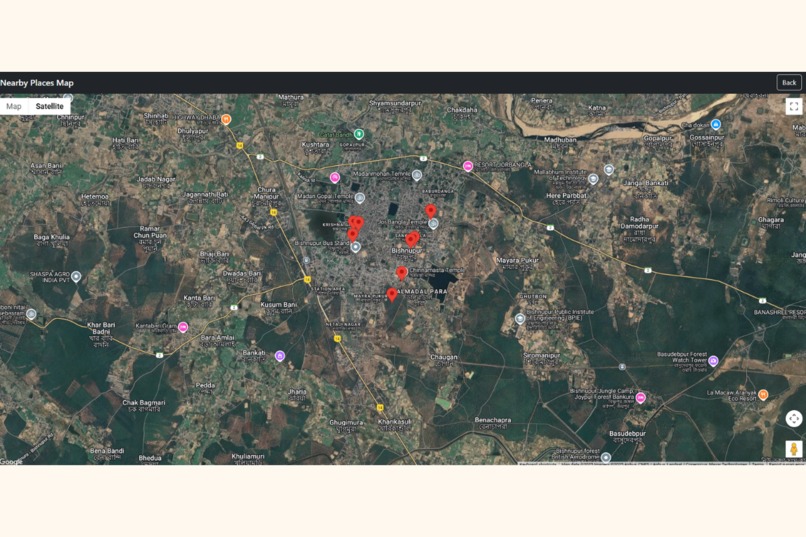

Map View of Nearby Places "Emotion-filtered nearby spots shown directly on Google Maps — just click and explore."

-

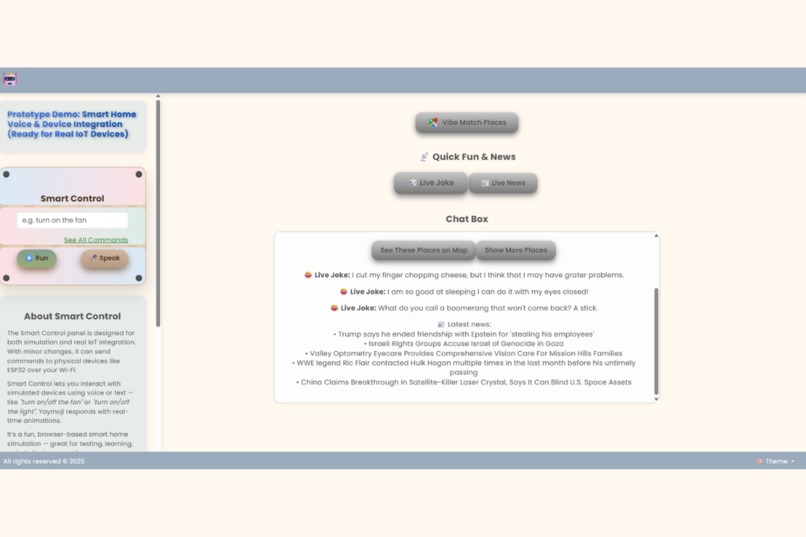

Jokes & News Clickable Feature "Get jokes and the latest news with a single click — designed to cheer up users instantly."

-

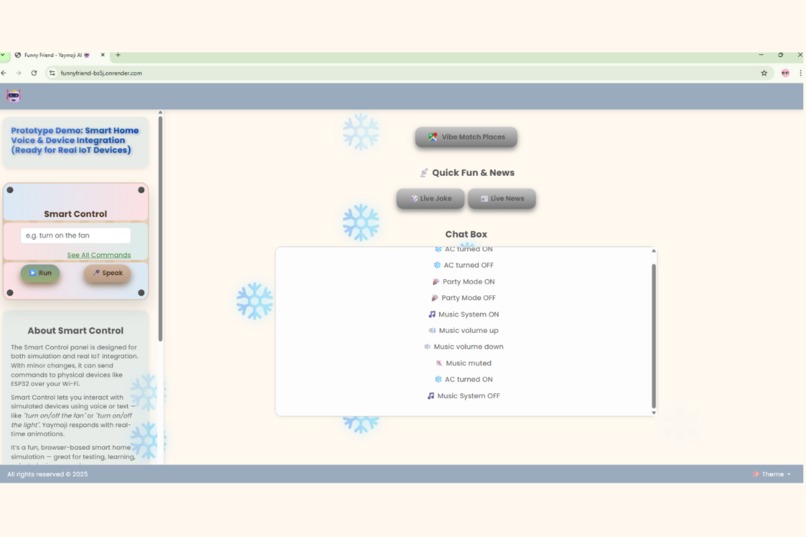

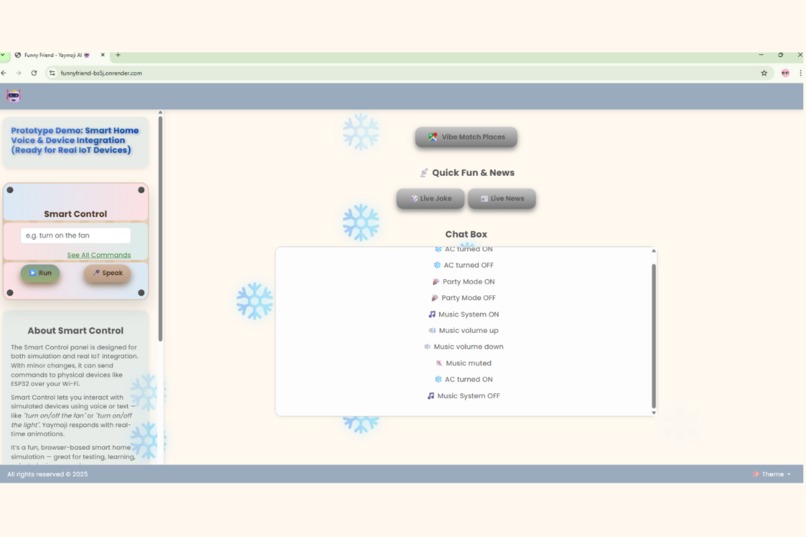

Smart Device Control "Control the fan, lights, AC, and music with simple voice or text commands. Smart home integration included."

-

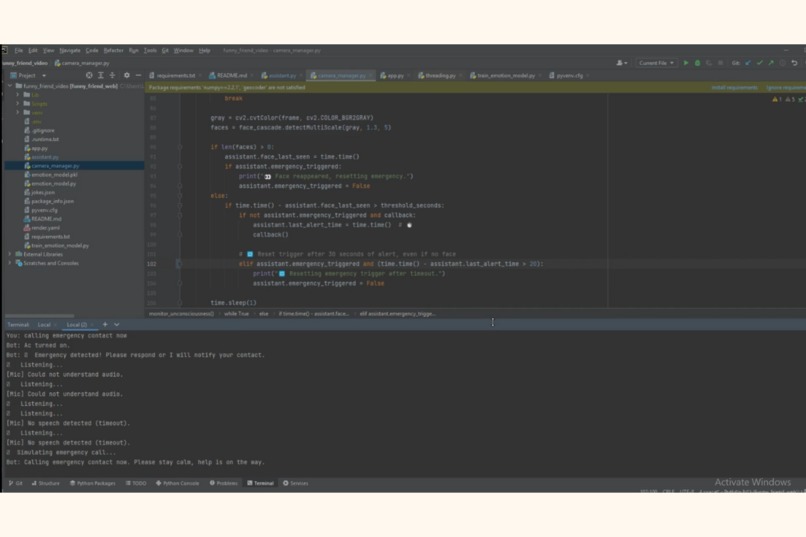

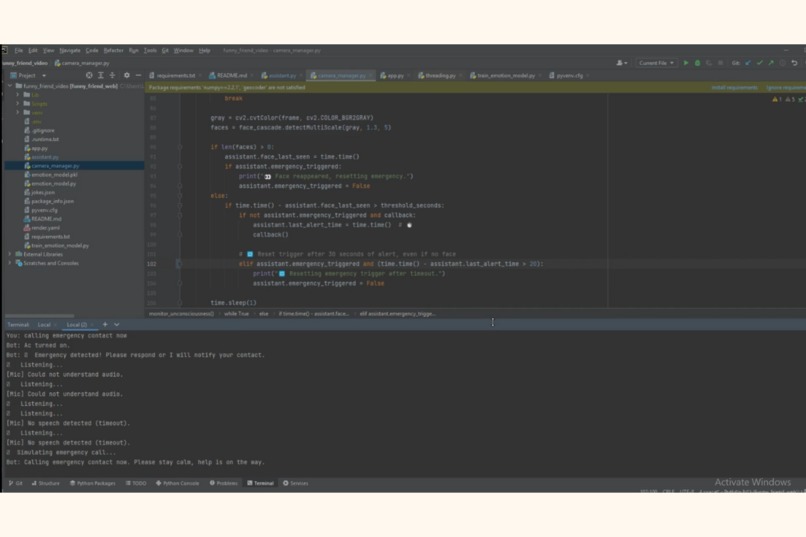

Emergency Alert in Prototype (Camera-Based Assistant) "The prototype detects when the user disappears from the camera view and triggers an emergency call."

-

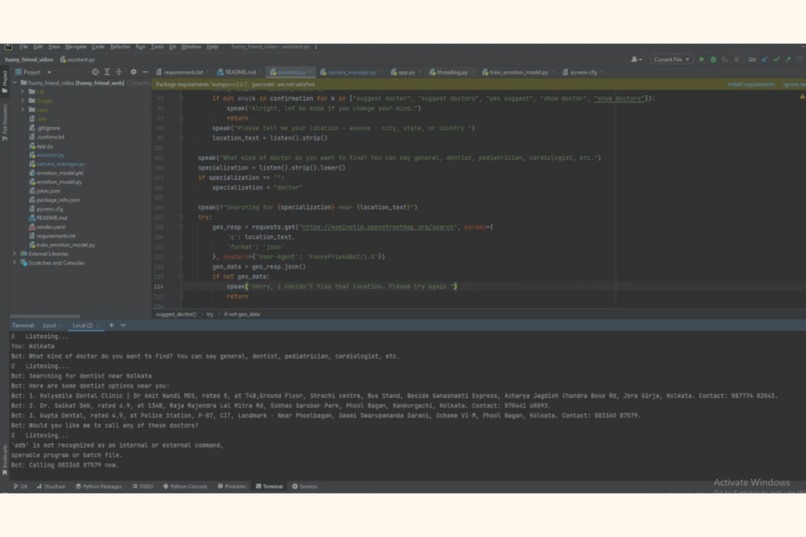

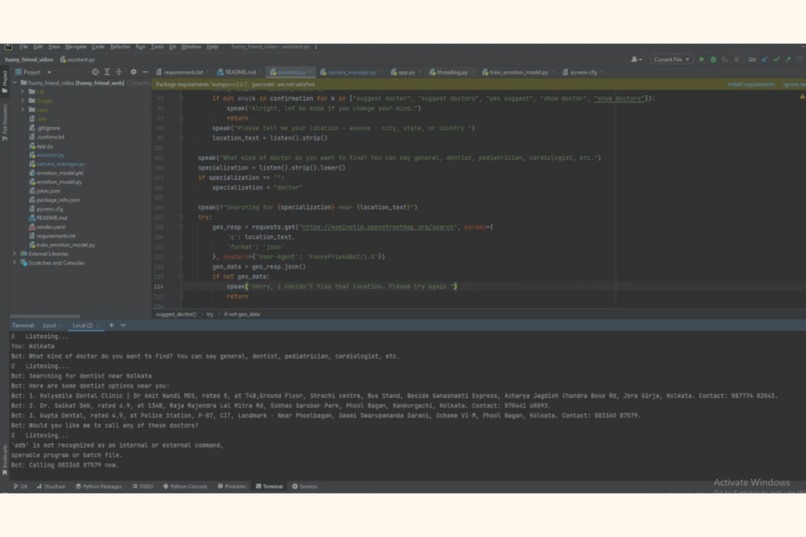

Doctor Suggestion (Video-Based Assistant) "The video-based prototype suggests nearby doctors using Google Maps when the user seems unwell or low."

Inspiration

Yaymoji was inspired by the need for emotional support tools designed for people who are elderly, alone, or unwell.

What it does

Yaymoji is an emotion-aware voice assistant built for the Google Maps Platform Awards. The browser-based version detects your mood through text or voice, responds with jokes or LLM-powered chat, suggests nearby doctors and vibe-matching places using Google Maps, and controls smart home devices through voice or text.
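
The writeup does not say how mood is detected. Purely as an illustration, here is a minimal Python sketch of a keyword-based detector. It assumes voice input has already been transcribed to text (for example with the Web Speech API listed under Built With). The mood labels, the keyword lists in `MOOD_KEYWORDS`, and the `detect_mood` helper are all hypothetical.

```python
import re

# Hypothetical mood labels and keywords; not the project's documented detector.
# Dict order matters: earlier moods win when several match.
MOOD_KEYWORDS = {
    "unwell": ("sick", "fever", "pain", "headache", "dizzy"),
    "low": ("sad", "lonely", "tired", "down", "upset"),
    "stressed": ("stressed", "anxious", "worried", "overwhelmed"),
    "happy": ("happy", "great", "excited", "good"),
}


def detect_mood(text: str) -> str:
    """Return the first mood whose keyword appears in the text, else 'neutral'."""
    # Lowercase and split into word tokens so "Sick!" still matches "sick".
    words = set(re.findall(r"[a-z']+", text.lower()))
    for mood, keywords in MOOD_KEYWORDS.items():
        if words.intersection(keywords):
            return mood
    return "neutral"
```

Checking "unwell" before "low" means a message like "I feel sick and sad" is routed toward doctor suggestions. A fuller version might instead ask the LLM to classify the mood, since keyword matching misses negation ("not good").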

Its extended prototype goes even further — using the camera to detect emergencies when the user is not visible, offering real-time doctor suggestions, and speaking empathetically like a trusted companion.

How we built it

The frontend is built using HTML, CSS, and JavaScript (with Bootstrap for responsiveness). It communicates with a Python Flask backend that handles the application logic and all API calls.

We used OpenRouter (with Mistral-7B) to generate empathetic or witty one-liner replies depending on the user's mood. The Google Maps Places API is integrated to show nearby doctors and vibe-matching locations based on emotional input.
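
A minimal sketch of how the backend might turn a detected mood into the two outbound requests described above: an OpenRouter chat-completions request for a one-line reply, and a Places API Nearby Search URL. It assumes the legacy Nearby Search endpoint (`/maps/api/place/nearbysearch/json`) and the OpenRouter model slug `mistralai/mistral-7b-instruct`. The mood-to-tone and mood-to-place-type tables and all helper names are hypothetical, and nothing is sent over the network here.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
NEARBY_SEARCH_URL = "https://maps.googleapis.com/maps/api/place/nearbysearch/json"

# Hypothetical tables mapping a mood label to a reply tone and a place type.
TONE_BY_MOOD = {
    "low": "Reply with one gentle, empathetic sentence.",
    "unwell": "Reply with one caring sentence and suggest seeing a doctor.",
    "happy": "Reply with one witty, upbeat one-liner.",
}
PLACE_TYPE_BY_MOOD = {"unwell": "doctor", "low": "park", "stressed": "spa", "happy": "cafe"}


def build_chat_payload(mood: str, user_text: str) -> dict:
    """Build an OpenAI-style chat payload whose system prompt depends on mood."""
    tone = TONE_BY_MOOD.get(mood, "Reply with one friendly one-liner.")
    return {
        "model": "mistralai/mistral-7b-instruct",  # assumed OpenRouter model slug
        "messages": [
            {"role": "system", "content": f"You are Yaymoji, a kind companion. {tone}"},
            {"role": "user", "content": user_text},
        ],
        "max_tokens": 60,  # one-liners only
    }


def build_chat_request(payload: dict, api_key: str) -> Request:
    """Wrap the payload in a POST request; call urlopen() on it to send."""
    return Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def build_nearby_search_url(mood: str, lat: float, lng: float, api_key: str,
                            radius_m: int = 2000) -> str:
    """Build a Nearby Search URL for the place type matching the mood."""
    params = {
        "location": f"{lat},{lng}",
        "radius": radius_m,
        "type": PLACE_TYPE_BY_MOOD.get(mood, "park"),
        "key": api_key,
    }
    return f"{NEARBY_SEARCH_URL}?{urlencode(params)}"
```

OpenRouter follows the OpenAI response shape, so the reply text would be read from `choices[0]["message"]["content"]`, and Nearby Search returns matches under `results`. Mapping each mood to a single place type keeps the request simple; the `keyword` parameter could refine it further.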

For smart home control, Dialogflow handles voice commands and triggers webhooks connected to the backend, which then sends commands to virtual devices such as the fan, lights, AC, and music player.
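
A minimal sketch of the webhook fulfillment logic, assuming Dialogflow ES. Its webhook request carries the matched intent at `queryResult.intent.displayName` and the extracted parameters at `queryResult.parameters`, and it accepts a JSON reply with a `fulfillmentText` field. The intent name `device.control`, the `device` and `state` parameters, and the in-memory `DEVICES` table standing in for the virtual devices are hypothetical.

```python
# Hypothetical in-memory state standing in for the virtual devices.
DEVICES = {"fan": "off", "lights": "off", "ac": "off", "music": "off"}
LABELS = {"ac": "AC"}  # display names where the key is not readable as-is


def handle_webhook(request_json: dict) -> dict:
    """Apply a device command from a Dialogflow ES webhook request and reply."""
    query = request_json.get("queryResult", {})
    intent = query.get("intent", {}).get("displayName", "")
    params = query.get("parameters", {})

    if intent != "device.control":  # hypothetical intent name
        return {"fulfillmentText": "Sorry, I can't help with that yet."}

    device = str(params.get("device", "")).lower()
    state = str(params.get("state", "")).lower()
    if device not in DEVICES or state not in ("on", "off"):
        # Ask a follow-up question instead of guessing.
        return {"fulfillmentText": "Which device should I switch, and on or off?"}

    DEVICES[device] = state
    return {"fulfillmentText": f"Okay, I turned the {LABELS.get(device, device)} {state}."}
```

In the Flask backend, this function could sit behind a POST route that returns `jsonify(handle_webhook(request.get_json()))`. Dialogflow then speaks or displays the `fulfillmentText` back to the user.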

The extended prototype uses OpenCV to access the webcam and monitor whether the user has disappeared from the frame, triggering emergency alerts and phone calls if needed. All features come together in a single lightweight assistant that runs in the browser or by voice.
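
One way to turn per-frame face detection into an emergency trigger is a timeout: alert only after no face has been seen for several seconds, so a single missed detection or a brief turn away does not place a call. A minimal sketch, assuming each webcam frame has already been reduced to a yes/no "face found" result (for example, a non-empty result from OpenCV's `cv2.CascadeClassifier.detectMultiScale`). The `AbsenceMonitor` class and the 10-second default are hypothetical, and the actual alert or phone call is left to the caller.

```python
import time


class AbsenceMonitor:
    """Signal an alert once no face has been seen for `timeout_s` seconds."""

    def __init__(self, timeout_s: float = 10.0, clock=time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock  # injectable so the logic can be tested without waiting
        self.last_seen = clock()
        self.alerted = False

    def update(self, face_found: bool) -> bool:
        """Feed one frame's result; return True only on the frame that triggers."""
        now = self.clock()
        if face_found:
            # User is visible again: reset the timer and re-arm the alert.
            self.last_seen = now
            self.alerted = False
            return False
        if not self.alerted and now - self.last_seen >= self.timeout_s:
            self.alerted = True  # fire once per absence, not on every frame
            return True
        return False
```

In the capture loop, the prototype would call `monitor.update(len(faces) > 0)` for each frame and start the emergency call when it returns `True`.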

Challenges we ran into

Integrating voice, camera, and multiple APIs into a smooth browser-based experience was technically challenging, and handling real-time inputs without lag required careful design. Because the entire project was built on free-tier APIs and local tools, performance and speed were sometimes limited; paid API tiers and hosted infrastructure would allow a more robust version.

Accomplishments we're proud of

We created a fully browser-based assistant that responds with empathy, suggests doctors when you're feeling low, and shows vibe-based nearby places using Google Maps. It also tells jokes, shares news, and controls smart home devices, all through simple voice or text commands.

Its extended version goes further: it can detect emergencies when the user is not visible on camera, offering help in critical moments. Even young children helped shape the design and colors, adding a sense of warmth and trust.

What we learned

We learned how to bring together voice, vision, maps, and emotion detection into one cohesive assistant. It also taught us the importance of designing with empathy — not just features.

What's next for Funny Friend: Yaymoji

We're working toward a more advanced version of Yaymoji — a video-based voice assistant that can detect real emergencies beyond just face disappearance. The goal is to build deeper emotional intelligence and smarter safety responses, using Google Maps to suggest immediate local help when it matters most.

We're also exploring:

- Memory-based personalization, so Yaymoji remembers preferences and patterns

- Telemedicine and helpline integration, to connect users with real support

- Deployment on smart displays like Google Nest, combining voice, vision, and Maps for helpful companionship in real-world settings

Built With

- bootstrap

- css

- dialogflow

- flask

- google-maps

- google-places

- html

- javascript

- mistral-7b

- opencv

- openrouter

- python

- text-to-speech

- web-speech-api