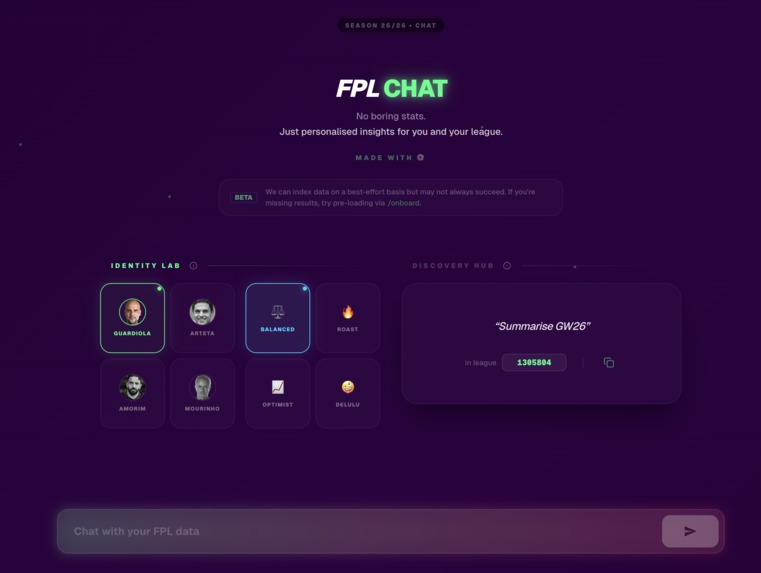

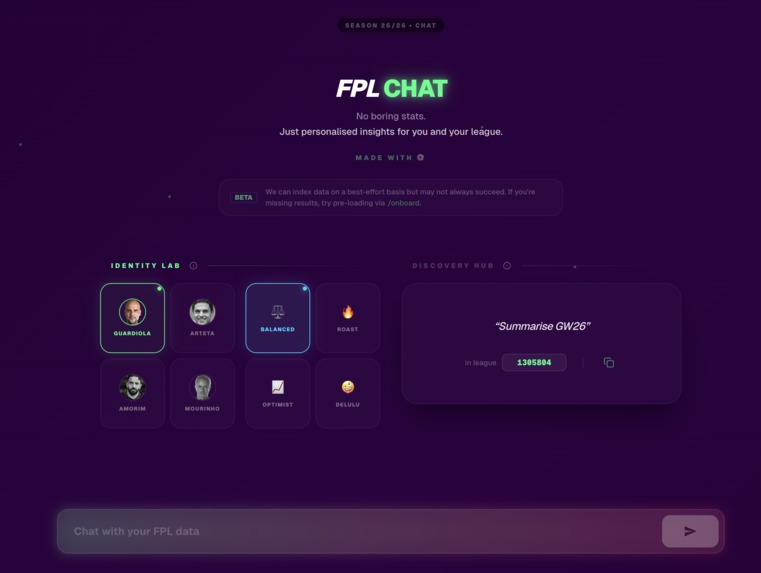

FPL Chat - Landing page

Dynamic data queries and custom charts

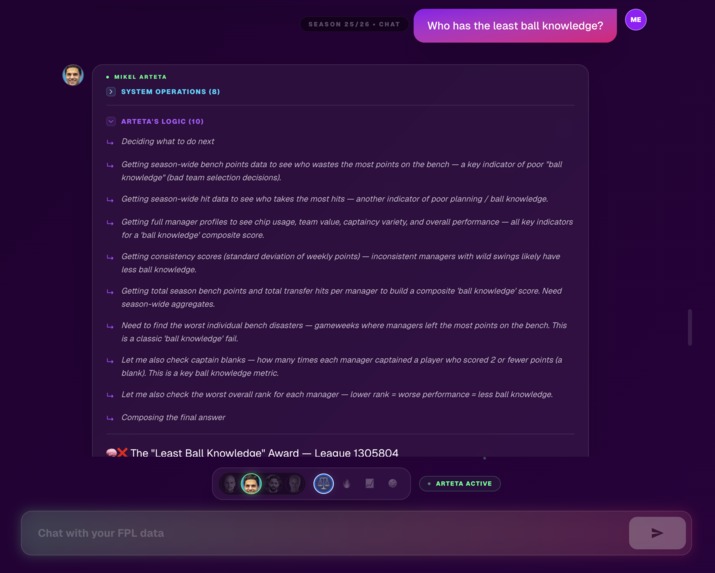

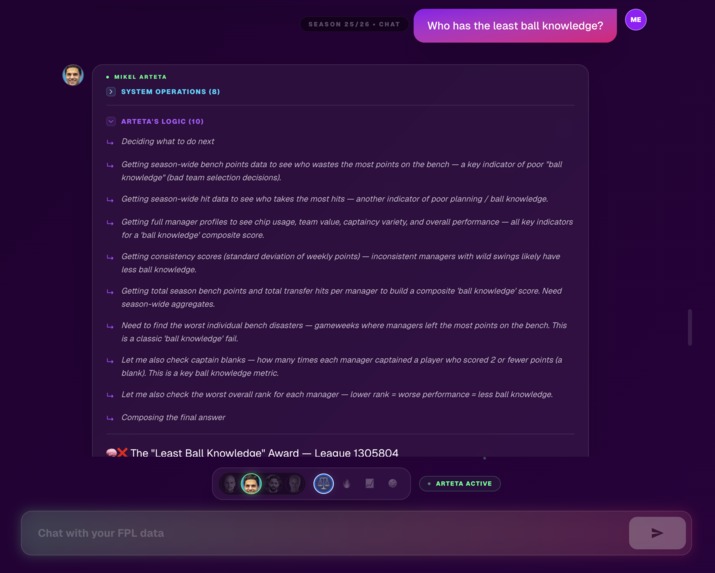

Explainable AI - Chain of thought reasoning

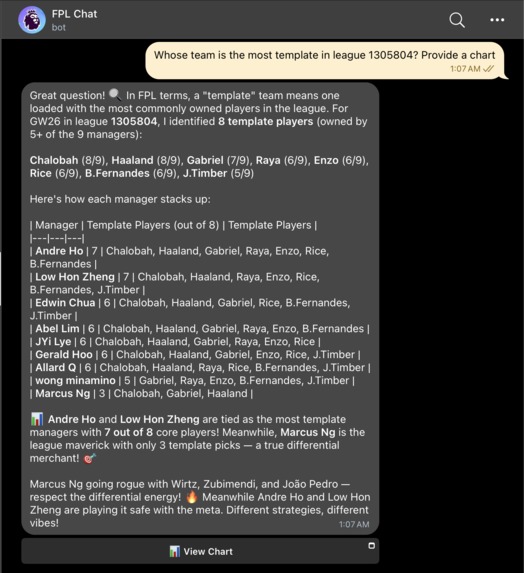

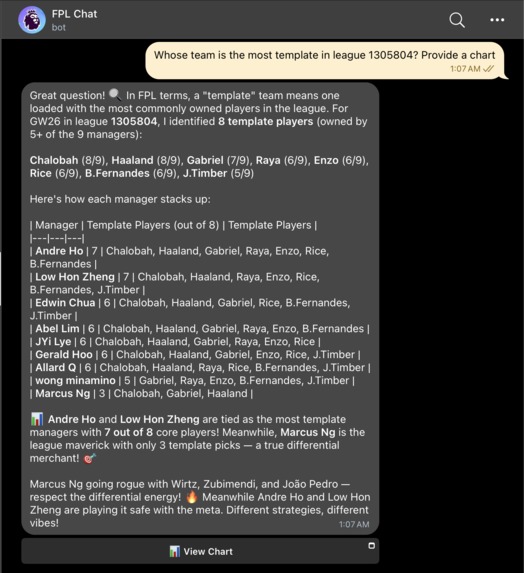

Embedded Agent - Telegram integration

Native Telegram chart visualization

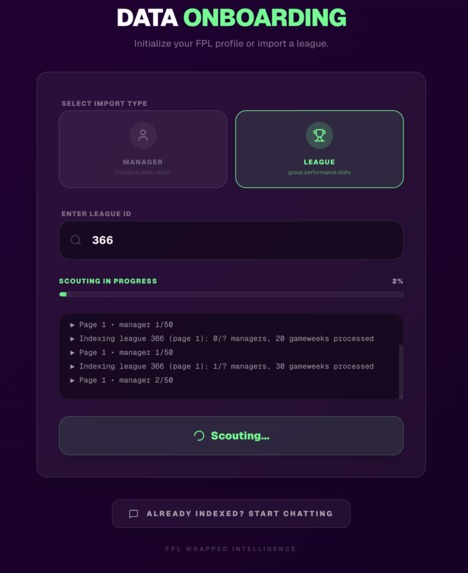

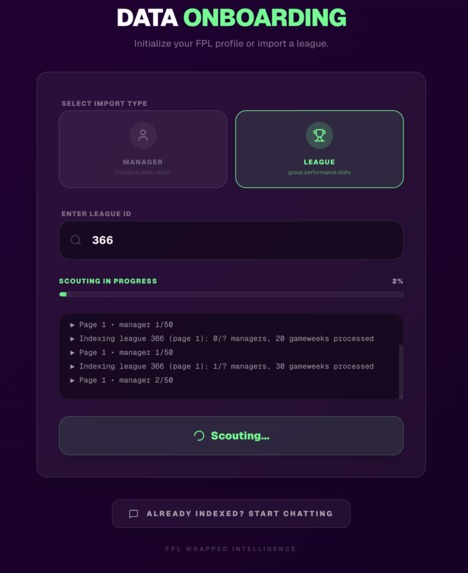

Onboarding (data indexing)

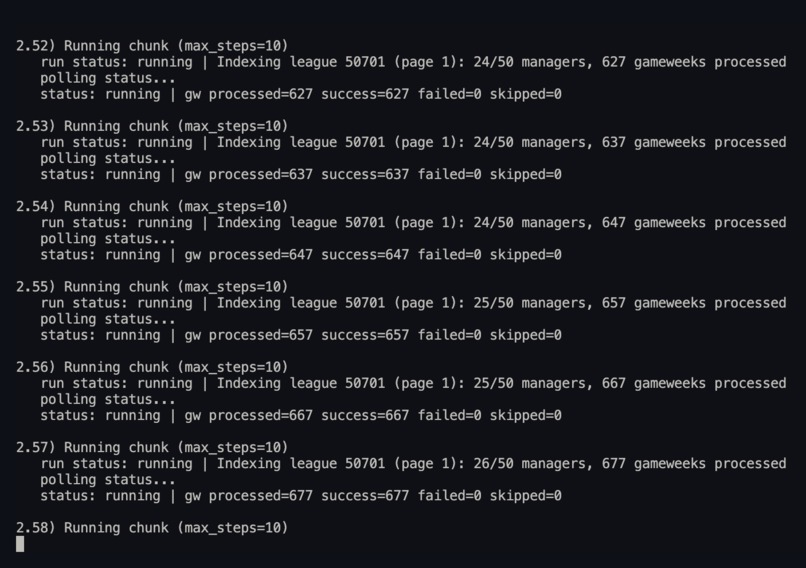

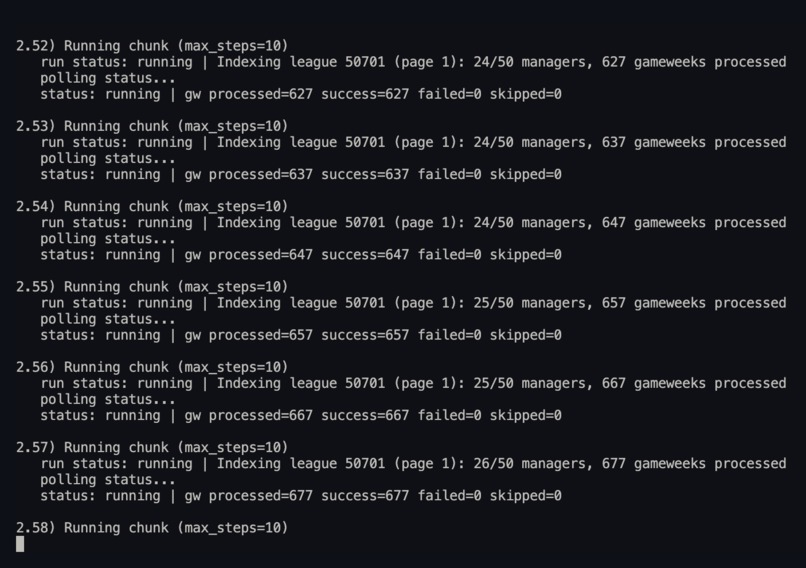

Data indexing logs

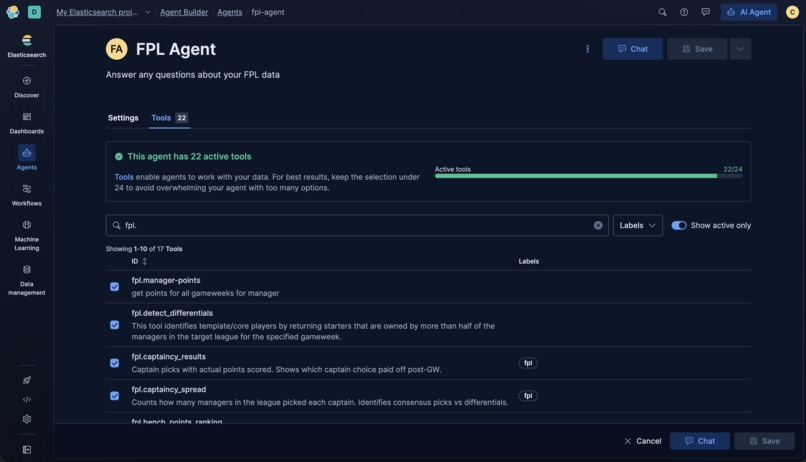

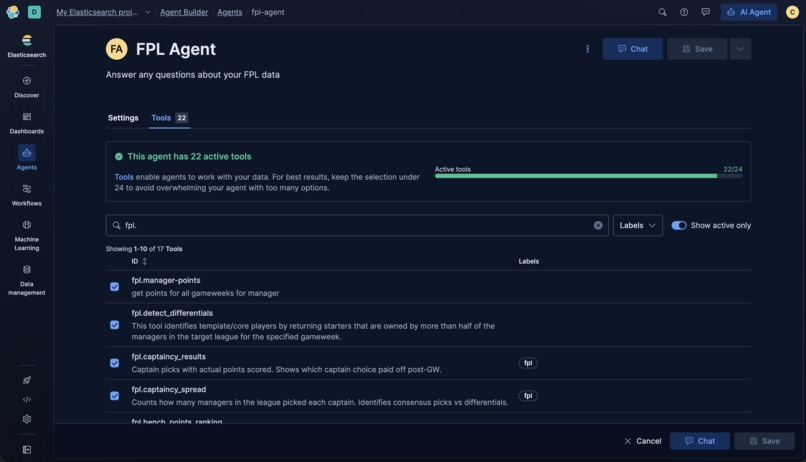

Elastic Agent Builder - Agent & Tools

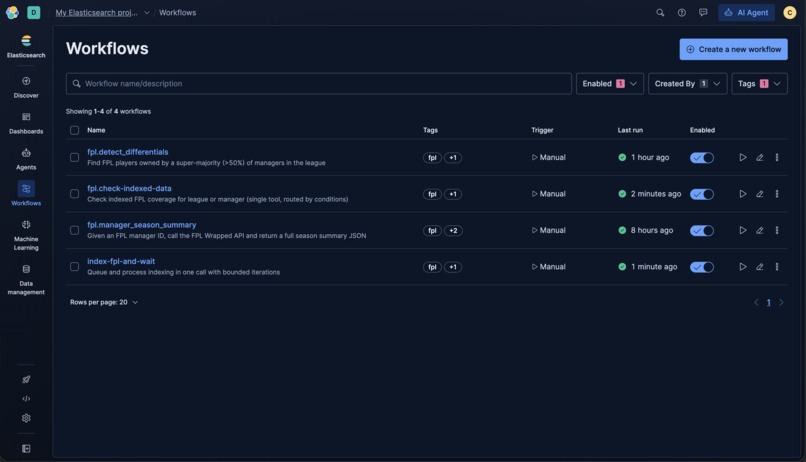

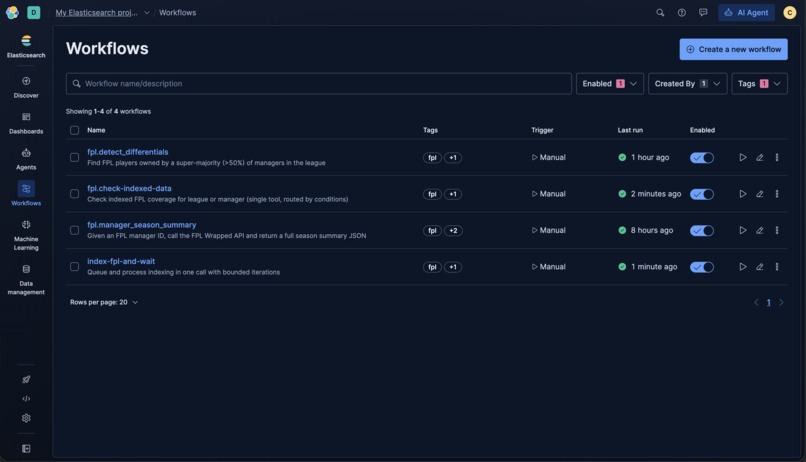

Elastic Agent Builder - Workflows

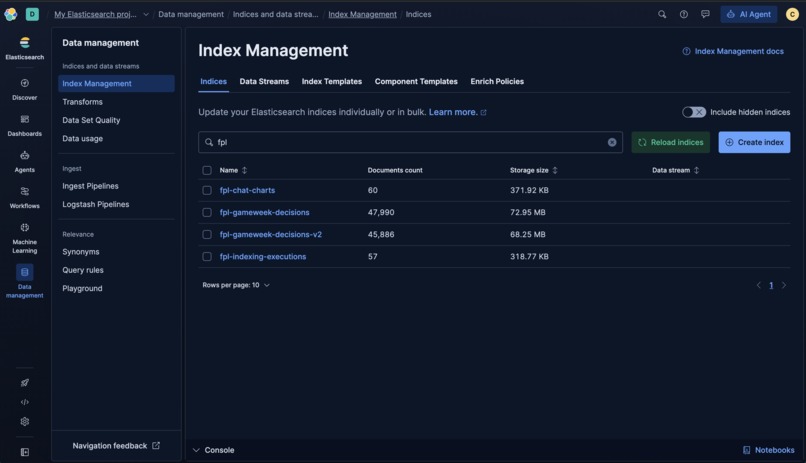

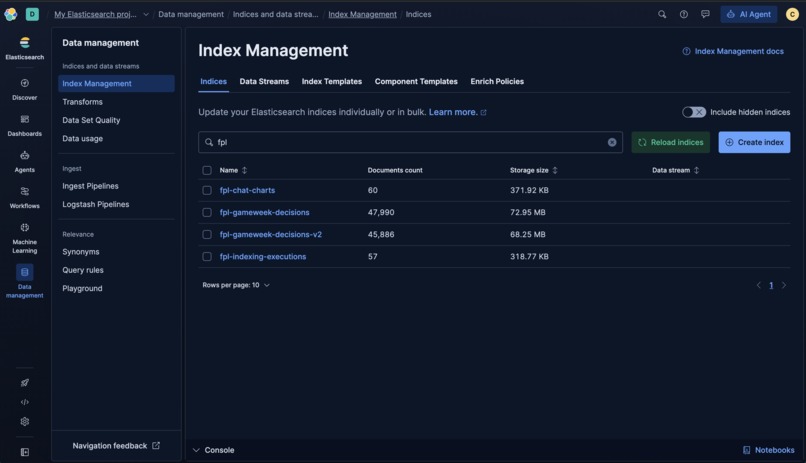

Elasticsearch - Index Management

Inspiration

As a Fantasy Premier League (FPL) manager in many mini-leagues, I spend hours every week struggling to keep up with each gameweek's events: before the games, checking competitors' transfers, team selections, and chip usage; during and after the games, checking the latest points scored by my competitors.

What it does

What if there was a way for managers like myself to get all the answers they want at their fingertips, in a timely manner, with interactive visualizations and engaging, personalised storylines, all without having to get their hands dirty? That's FPL Wrapped Chat.

Powered by Elastic Agent Builder, FPL Wrapped Chat is a conversational agent that uses 20+ tools and tailored workflows to execute the right Elasticsearch actions, including safe on-demand indexing, and returns explainable, data-driven, interactive storylines to users.

Key Features

- On-demand, natural-language chat for mini-league / manager / Gameweek questions (captaincy spreads, chips, hits, differentials, points trends).

- Generates custom data visualisations

- Personalises responses with manager personas and tone customisations (e.g. Delusional Amorim)

- Auditable trails: see what queries and data are accessed via "System Operations"

- Explainable insights: follow the chain of thought reasoning via "Manager Logic"

- Meet users where they are: embeddable in Web UI or Telegram for native workflows with end-to-end functionality supported via slash commands

- Automated indexing of FPL data (best-effort)
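
To make the "custom data visualisations" feature concrete, here is a minimal sketch of a chart specification for a captaincy spread, written for Vega-Lite (the charting library named under "How we built it"). The function name `captaincyChartSpec`, the `CaptainCount` shape, and the field names are assumptions for illustration; the project's actual specs are generated by the agent and are not shown in this write-up.

```typescript
// Hypothetical row shape: how many managers in a mini-league captained each player.
interface CaptainCount {
  captain: string;
  managers: number;
}

// Builds a Vega-Lite v5 bar chart spec with tooltips.
// The field names ("captain", "managers") are illustrative assumptions.
function captaincyChartSpec(rows: CaptainCount[]) {
  return {
    $schema: "https://vega.github.io/schema/vega-lite/v5.json",
    description: "Captaincy spread for one gameweek",
    data: { values: rows },
    mark: "bar",
    encoding: {
      // Sort bars by descending count so the most popular captain comes first.
      x: { field: "captain", type: "nominal", sort: "-y", title: "Captain" },
      y: { field: "managers", type: "quantitative", title: "Managers" },
      tooltip: [
        { field: "captain", type: "nominal" },
        { field: "managers", type: "quantitative" },
      ],
    },
  };
}

// Usage with placeholder data (not real league results).
const exampleSpec = captaincyChartSpec([
  { captain: "Player A", managers: 7 },
  { captain: "Player B", managers: 3 },
]);
console.log(JSON.stringify(exampleSpec, null, 2));
```

The resulting object is plain JSON, so the same spec could be rendered by a web chat UI or inside a Telegram Mini App without platform-specific changes.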

How we built it

- Leveraging Elastic Agent Builder capabilities, we built ~20 tools, 4 workflows, and 1 expert agent

- Elasticsearch for indexed gameweek and manager documents, generated interactive charts, and indexing progress/states (3 indices)

- Elastic Agent Builder Tools using ES|QL for custom, domain-specific use cases, and Workflows for orchestrated indexing, fetching proprietary data sources for data enrichment, and performing repeated analytical steps

- Elasticsearch Pipelines for cleaning messy data and preparing data for more optimized and accurate results

- Proprietary agent prompt that was iteratively optimized using meta-prompting to be more robust

- On-demand Elastic indexing API and /onboard UI that runs chunked, resumable indexing with preflight coverage checks and execution logs for provenance.

- telegraf (modern Telegram bot framework) for Telegram agent integration

- Vercel for Web UI and Telegram bot deployment

- Vega-Lite for interactive visualizations, and Telegram Mini Apps (TMA) to support such visualizations on Telegram

- Live streaming of responses, including chain-of-thought reasoning, to the chat UI to support explainable AI features
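
The chunked, resumable indexing mentioned in the list above can be sketched roughly as follows. This is a simplified illustration, not the project's implementation: `IndexingState`, `chunkIds`, and `runResumable` are hypothetical names, the state is kept in memory rather than in an Elasticsearch index, and the `indexChunk` callback is synchronous here, whereas a real one would make asynchronous API calls.

```typescript
// Hypothetical progress record; the project stores indexing state in Elasticsearch.
interface IndexingState {
  leagueId: number;
  totalChunks: number;
  completedChunks: number[]; // indices of chunks that were already indexed
}

// Split a list of manager IDs into fixed-size chunks.
function chunkIds(ids: number[], size: number): number[][] {
  if (size <= 0) throw new Error("chunk size must be positive");
  const chunks: number[][] = [];
  for (let i = 0; i < ids.length; i += size) {
    chunks.push(ids.slice(i, i + size));
  }
  return chunks;
}

// Process at most `maxChunksThisRun` pending chunks, skipping completed ones,
// and return the updated state so a later run can pick up where this one stopped.
function runResumable(
  managerIds: number[],
  chunkSize: number,
  state: IndexingState,
  indexChunk: (ids: number[]) => void,
  maxChunksThisRun: number,
): IndexingState {
  const chunks = chunkIds(managerIds, chunkSize);
  const completed = state.completedChunks.slice();
  let processed = 0;
  for (let i = 0; i < chunks.length && processed < maxChunksThisRun; i++) {
    if (completed.indexOf(i) !== -1) continue; // indexed in an earlier run
    indexChunk(chunks[i]);
    completed.push(i);
    processed++;
  }
  completed.sort((a, b) => a - b);
  return { leagueId: state.leagueId, totalChunks: chunks.length, completedChunks: completed };
}

// True once every chunk has been indexed.
function isComplete(state: IndexingState): boolean {
  return state.totalChunks > 0 && state.completedChunks.length === state.totalChunks;
}
```

Capping the work per run keeps each invocation short, which is one way to stay under serverless timeouts. A production version would likely persist the state after every chunk so that a crash mid-run loses at most one chunk of progress.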

Challenges we ran into

- Indexing scale: handling larger leagues (40+ managers) was non-trivial due to issues relating to timeouts (especially on free tiers), chunking, rate-limits, and retries.

- Platform reusability and scalability: To support embeddable agents where users are (e.g. Web, Telegram, etc.), functionality and features across all platforms had to be carefully built in a reusable and scalable manner, while isolating platform specific handling such as display of interactive charts

- Handling model provider downtime: while using the Elastic-enabled LLMs, the main model, Claude Opus, unexpectedly went down with no fallback LLM in place, leaving a dilemma over whether to spend time and resources on a fix or wait for the model to recover

- Trial constraints: As a new user, it was not clear how the existing project on Elastic Serverless Cloud could be seamlessly migrated to a new trial account with minimal downtime on the production application

Accomplishments that we're proud of

- Built a (decently) robust, multi-step agent from scratch as a beginner to Elastic Agent Builder

- Successfully expanded beyond traditional Web UI to native Telegram integration, providing the groundwork for future deployments in more platforms such as Discord, X, etc.

- Implemented resumable, auditable indexing so the agent can expand its dataset safely on request, with minimal manual intervention only as a fallback

- Reached hundreds of users in the span of a few days by sharing the product on various social media outlets and received valuable user feedback for future iterations

- Seeing the product through end-to-end, from conceptualization to launch, given limited time and resources

What we learned

- Explainable AI is vital for user trust: showing tool traces and model reasoning helps users make sense of edge cases when responses do not match their expectations

- Sometimes less is more. Including more agents just to satisfy trends such as "multi-agent orchestration" may not necessarily improve performance or quality; there must be a real value add or use case rather than trying to force-fit a solution

- Building where users already are is crucial for adoption. FPL is a global experience, and its users in different regions use different platforms (e.g. Discord, X, Telegram), so support from a platform-agnostic agent could be key to the product gaining traction

- Expert knowledge is important in supporting testing of domain-specific concepts. For instance, to determine players' overall points and rank, the agent needs to be taught to deduct or account for hit (penalty) points

- Denormalization of arrays to increase support for queries

- Building custom Agent Builder Workflows to index data on-demand and handling pagination

- Customization options for charts by using prompt engineering to support features like tooltips and various chart types

- Elasticsearch does NOT guarantee array order preservation for search operations (surprising as a first-time Elastic user)

- Programmatic index updates and index data migrations especially given quick iterations during a hackathon
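
Two of the lessons above, accounting for hit points and denormalizing arrays, can be illustrated with a small sketch. All names here (`ManagerGameweekDoc`, `PickRow`, `netPoints`, `denormalizePicks`) and the document shape are assumptions for illustration; the project's real mappings are not shown in this write-up. The idea is to write one row per squad pick with an explicit `position` field, so queries never have to rely on array order being preserved.

```typescript
// Hypothetical source document: one manager's squad for one gameweek.
interface ManagerGameweekDoc {
  managerId: number;
  gameweek: number;
  grossPoints: number;  // points scored before hits are deducted
  transferCost: number; // hit (penalty) points, 4 per extra transfer in FPL
  picks: { playerId: number; isCaptain: boolean }[];
}

// Hypothetical denormalized row: one per pick, with its order made explicit.
interface PickRow {
  managerId: number;
  gameweek: number;
  playerId: number;
  isCaptain: boolean;
  position: number; // 1-based slot in the squad, stored as data rather than implied by array index
}

// Net gameweek points after deducting hits.
function netPoints(doc: ManagerGameweekDoc): number {
  return doc.grossPoints - doc.transferCost;
}

// Flatten the picks array into standalone rows.
function denormalizePicks(doc: ManagerGameweekDoc): PickRow[] {
  return doc.picks.map((pick, index) => ({
    managerId: doc.managerId,
    gameweek: doc.gameweek,
    playerId: pick.playerId,
    isCaptain: pick.isCaptain,
    position: index + 1,
  }));
}
```

With rows like these, a question such as "who did each manager captain?" becomes a simple filter on `isCaptain`, and the squad order can be recovered by sorting on `position`.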

What's next for FPL Wrapped Chat

- Orchestrate a multi-agent system: Add an indexing agent to handle verification of latest data onboarded and long-running indexing tasks, and a persona agent that takes the raw outputs and refines them into more personalised and engaging responses based on user preferences (e.g. manager persona and tone selections)

- Run stress tests for large-league indexing and implement retry mechanism in view of timeouts, connectivity issues, rate-limiting, etc.

- Collect metrics: answer success rate, avg time-to-answer, indexing throughput, and reduction in manual steps

- Improve UI/UX: observe actual user behaviour and inputs to identify areas where friction could be reduced or processes could be sped up

- Scalable deployment: consider most cost-effective Elastic subscription, LLM providers for integration, as well as pricing tiers for deployments on Vercel, Render, etc.
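
As a rough idea of what the planned retry mechanism could look like, the sketch below retries a failing asynchronous task with exponential backoff. It is an assumption about one possible design, not something already in the project: `backoffDelayMs` and `retryWithBackoff` are hypothetical helpers, and the `sleep` parameter is injectable only so the behaviour can be checked without real waiting.

```typescript
// Delay before the next attempt: base * 2^attempt, capped at capMs.
function backoffDelayMs(attempt: number, baseMs: number, capMs: number): number {
  return Math.min(capMs, baseMs * Math.pow(2, attempt));
}

// Default sleep based on setTimeout.
function defaultSleep(ms: number): Promise<void> {
  return new Promise<void>((resolve) => setTimeout(resolve, ms));
}

// Run `task` up to `maxAttempts` times, waiting between failures.
// Rethrows the last error if every attempt fails.
async function retryWithBackoff<T>(
  task: () => Promise<T>,
  maxAttempts: number,
  baseMs: number,
  capMs: number,
  sleep: (ms: number) => Promise<void> = defaultSleep,
): Promise<T> {
  if (maxAttempts < 1) throw new Error("maxAttempts must be at least 1");
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await task();
    } catch (err) {
      lastError = err;
      // Only wait if another attempt is coming.
      if (attempt < maxAttempts - 1) {
        await sleep(backoffDelayMs(attempt, baseMs, capMs));
      }
    }
  }
  throw lastError;
}
```

A call such as `retryWithBackoff(() => indexOneChunk(ids), 5, 500, 8000)`, where `indexOneChunk` stands in for whatever function performs the indexing request, would wait 500 ms, 1 s, 2 s, and 4 s between its five attempts. Adding random jitter to each delay is a common refinement when many clients hit the same rate limit.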

Built With

- elastic

- elastic-agent-builder

- elasticsearch

- esql

- node.js

- typescript

- vega-lite
