-

Our final logo!

-

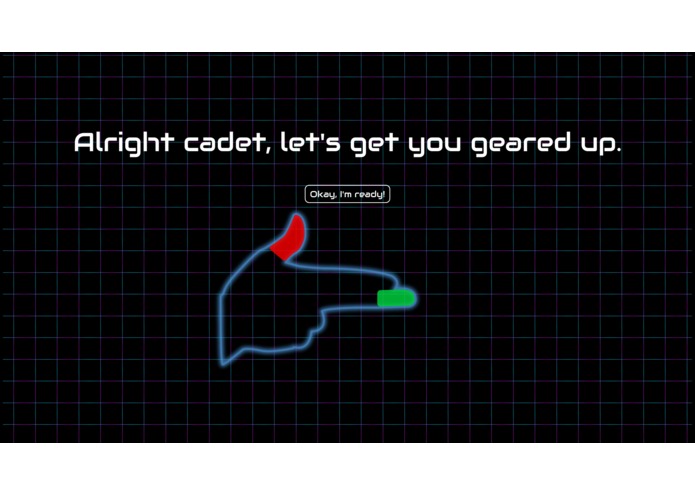

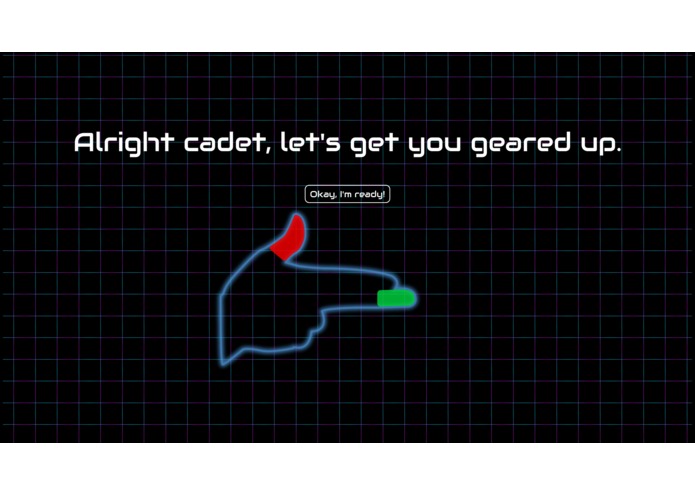

This screen shows the player how to wear the vision targets so our system can detect their fingers.

-

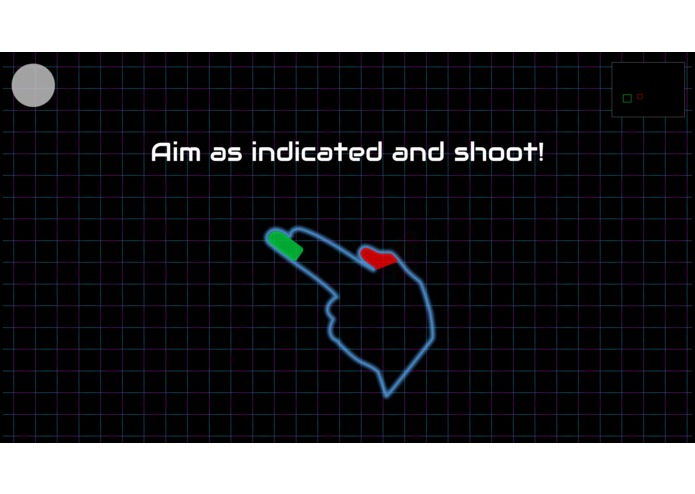

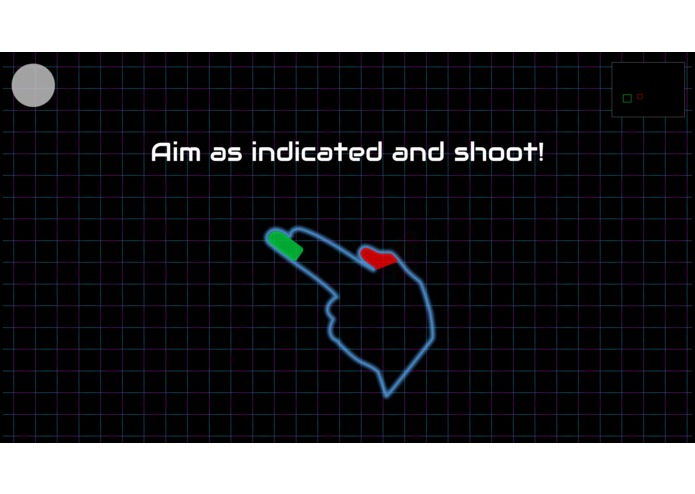

Our calibration screen adjusts the parameters used to detect aiming and firing.

-

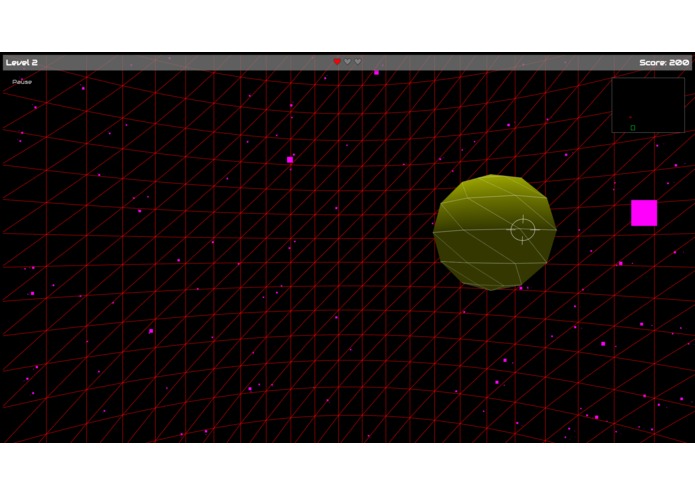

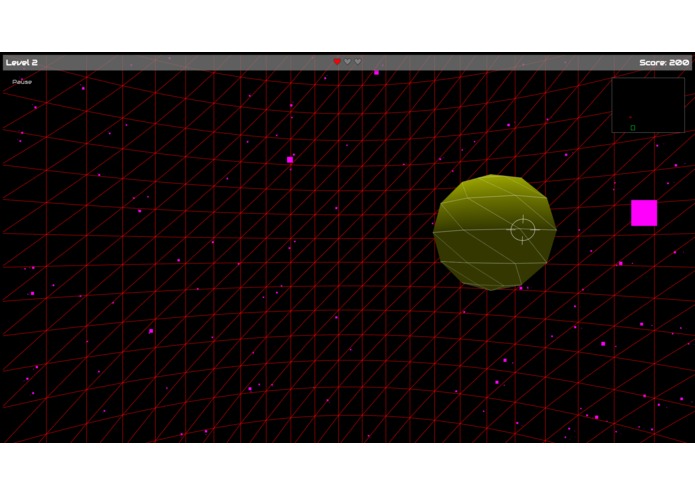

The in-game view, with image recognition feedback in the top-right corner.

-

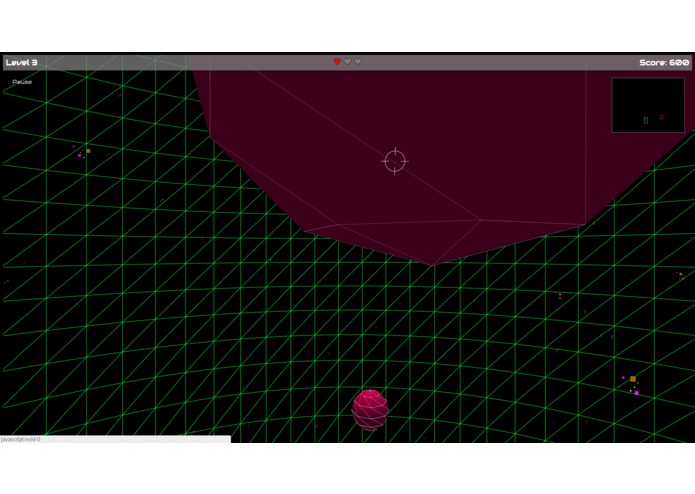

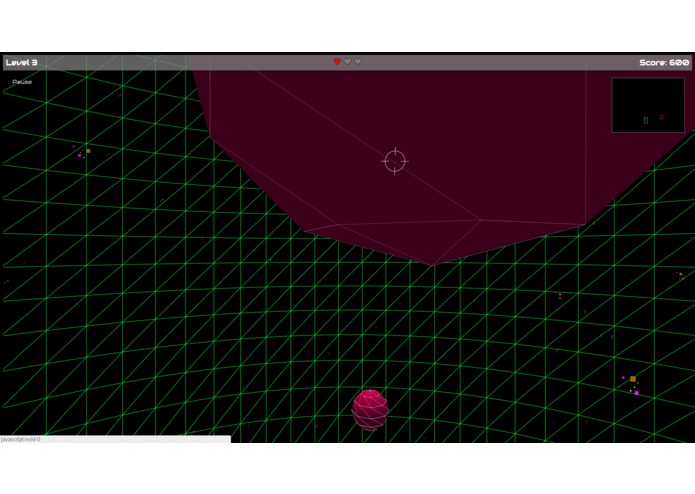

Another shot of the in-game view

-

The official Fingrr Trackers, constructed with pinpoint accuracy and precision in mind.

-

All assets were edited in Illustrator and saved as laser-sharp SVGs.

Inspiration

When our team saw the title "Dare to Venture," we immediately thought of the one place everyone wishes they could go in their lifetime: SPACE! On top of that, we wanted to end the world hunger for real-life finger guns. But… is it possible to do both in only 36 hours? Well, friend, we introduce to you Fingrr, a state-of-the-art solution to the dreaded lack of virtual bullets emitting from your fingertips… in space.

What it does

Fingrr uses optical object recognition to detect your fingertips while immersing you in a dangerous asteroid shower plummeting towards Earth. It's up to you and your handy dandy finger gun to save humanity and destroy all the asteroids. Are you up to the task, cadet?

How we built it

The number one thing we wanted this project to represent was accessibility. That's why, to run Fingrr, you need NOTHING but a laptop (NOT EVEN AN INTERNET CONNECTION!). No more clunky external hardware to feed your optical-recognition frustration: all you need is your built-in webcam.

Using webcam optical recognition and tracking.js, we were able to successfully track all the necessary components of your deadly finger gun. We start by filtering the captured webcam image to a range of HSV values and detecting groups of pixels within the filtered image, identifying the coordinates of the vision targets on the webcam image. To go from these coordinates to the angle of the gun in-game, we need to know where the edges of the screen are. For this reason, we ask the player to calibrate the gun by shooting at the top-left and bottom-right corners of the screen. At the same time, we calibrate the trigger motion by initially detecting the shot with very low thresholds and using the two calibration shots as a reference for what an "actual" shot looks like, adjusting our finger velocity thresholds accordingly.
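The calibration step described above could be sketched roughly as follows. This is a minimal illustration, not Fingrr's actual code: the function names (`makeScreenMapper`, `calibrateTriggerThreshold`), the linear mapping between the two corner shots, and the `fraction` and `floor` parameters are all assumptions made for the example.

```javascript
// Build a mapper from vision-target coordinates on the webcam image to
// normalized screen coordinates (0..1), using the two calibration shots.
// ASSUMPTION: a simple linear interpolation between the two corners.
function makeScreenMapper(topLeftShot, bottomRightShot) {
  const spanX = bottomRightShot.x - topLeftShot.x;
  const spanY = bottomRightShot.y - topLeftShot.y;
  if (spanX === 0 || spanY === 0) {
    throw new Error("Calibration shots must differ on both axes");
  }
  const clamp01 = (t) => Math.min(1, Math.max(0, t));
  // A negative span (for example, a mirrored webcam image) is handled by
  // the division, so no special case is needed.
  return (target) => ({
    u: clamp01((target.x - topLeftShot.x) / spanX),
    v: clamp01((target.y - topLeftShot.y) / spanY),
  });
}

// Derive a finger-velocity threshold from the peak speeds seen during the
// calibration shots.
// ASSUMPTION: the threshold is a fixed fraction of the weakest calibration
// shot, never lower than a small floor that rejects jitter.
function calibrateTriggerThreshold(peakSpeeds, fraction = 0.6, floor = 5) {
  if (peakSpeeds.length === 0) {
    throw new Error("Need at least one calibration shot");
  }
  const weakest = Math.min(...peakSpeeds);
  return Math.max(floor, weakest * fraction);
}

// Example usage with made-up webcam coordinates (pixels) and speeds.
const toScreen = makeScreenMapper({ x: 100, y: 50 }, { x: 500, y: 350 });
const aim = toScreen({ x: 300, y: 200 }); // halfway between the corners
const threshold = calibrateTriggerThreshold([40, 50], 0.5);
console.log(aim, threshold);
```

The normalized `u` and `v` values can then be scaled to whatever the game needs, such as a pixel position or an aiming angle inside the locked field of view.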

For the immersion factor, we built our asteroid shower with three.js. Using everything from spheres and lights to orbits and sound buffers, we truly took a deep dive into the environment. The software itself throws you into a mesh sphere in which your view is locked to 60 degrees vertically, with a width that depends on your screen size. The game slowly increases the speed and frequency of the asteroids as the player progresses through the levels. As the player shoots down asteroids, they accumulate points. The game ends once the player loses all three lives, that is, once three asteroids hit the player.
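The progression and life system described above could look something like the sketch below. Every constant here (base speed, spawn interval, growth rates, points per asteroid) is a hypothetical value chosen for illustration, since the write-up does not give the real numbers; only the three lives come from the description.

```javascript
// Hypothetical tuning constants; the real values are not stated.
const BASE_SPEED = 1.0;              // asteroid speed at level 1 (arbitrary units)
const BASE_SPAWN_INTERVAL_MS = 2000; // time between asteroids at level 1
const MIN_SPAWN_INTERVAL_MS = 400;   // cap so the game stays playable
const STARTING_LIVES = 3;            // from the write-up: three hits end the game

// Asteroids get faster and more frequent as the level increases.
function difficultyForLevel(level) {
  const steps = Math.max(0, level - 1);
  return {
    speed: BASE_SPEED * (1 + 0.15 * steps),
    spawnIntervalMs: Math.max(
      MIN_SPAWN_INTERVAL_MS,
      BASE_SPAWN_INTERVAL_MS * Math.pow(0.9, steps)
    ),
  };
}

function createGameState() {
  return { score: 0, lives: STARTING_LIVES, over: false };
}

// Shooting down an asteroid adds points, unless the game has already ended.
function onAsteroidDestroyed(state, points = 10) {
  if (state.over) return state;
  return { ...state, score: state.score + points };
}

// An asteroid reaching the player costs one life; zero lives ends the game.
function onAsteroidHitPlayer(state) {
  if (state.over) return state;
  const lives = state.lives - 1;
  return { ...state, lives, over: lives <= 0 };
}
```

Keeping the state updates as pure functions that return a new object makes the rules easy to test separately from the rendering loop.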

All sounds are royalty-free and acquired legally. We simply downloaded WAV and MP3 files and loaded them into audio buffers so they can play in-game both simultaneously and individually.

Challenges we ran into

Given our ideal vision of using no external hardware or APIs, tracking and trigger reads were probably our biggest problems.

Color detection was also a significant issue, since we could not simply track a single hex code under varying lighting. This required us to filter on HSV values; however, since we didn’t want to use external APIs or libraries (besides three.js and tracking.js), we ended up writing our own functions, which involved LOTS of mathematical transformations and logic. Though it was a hassle, it allowed us to detect a much wider range of reds and greens than a single reference hex code would.
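To give a feel for the kind of math involved, here is a minimal sketch of an RGB-to-HSV conversion with a range check. The conversion is the standard formula; the `RED` and `GREEN` ranges and the function names are assumptions for illustration, not the values Fingrr actually uses.

```javascript
// Convert 8-bit RGB to HSV: h in [0, 360), s and v in [0, 1].
function rgbToHsv(r, g, b) {
  const rn = r / 255, gn = g / 255, bn = b / 255;
  const max = Math.max(rn, gn, bn);
  const min = Math.min(rn, gn, bn);
  const delta = max - min;
  let h = 0; // grays have no hue; report 0 by convention
  if (delta !== 0) {
    if (max === rn) h = 60 * (((gn - bn) / delta) % 6);
    else if (max === gn) h = 60 * ((bn - rn) / delta + 2);
    else h = 60 * ((rn - gn) / delta + 4);
  }
  if (h < 0) h += 360;
  const s = max === 0 ? 0 : delta / max;
  return { h, s, v: max };
}

// Check whether an HSV color falls inside a range. A range with
// hMin > hMax wraps around 360 degrees, which is needed for red.
function inHsvRange(hsv, range) {
  const hueOk = range.hMin <= range.hMax
    ? hsv.h >= range.hMin && hsv.h <= range.hMax
    : hsv.h >= range.hMin || hsv.h <= range.hMax;
  return hueOk && hsv.s >= range.sMin && hsv.v >= range.vMin;
}

// ASSUMPTION: example ranges only; real thresholds depend on the patches
// and the lighting.
const RED = { hMin: 340, hMax: 20, sMin: 0.5, vMin: 0.3 };
const GREEN = { hMin: 90, hMax: 150, sMin: 0.4, vMin: 0.3 };

// A dim red and a bright red both pass, which a single hex code cannot do.
console.log(inHsvRange(rgbToHsv(200, 30, 40), RED));  // bright red patch
console.log(inHsvRange(rgbToHsv(110, 20, 25), RED));  // same patch in shadow
console.log(inHsvRange(rgbToHsv(40, 200, 60), GREEN)); // green patch
```

tracking.js accepts custom color predicates through `tracking.ColorTracker.registerColor`, so a check like `inHsvRange` could be plugged in there; the write-up does not say whether Fingrr wires it up that way.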

Accomplishments that we're proud of

We’re definitely proud of how complete the game experience ended up feeling, right from the initial website load. We weren’t sure we would have time for a life system and game-over mechanic, or a proper introductory sequence to explain the game rules with helpful graphics, but we managed to fit it all in. Overall, we came in thinking we could build a partially functional game but instead ended up building a nearly fully fleshed-out immersive gaming experience.

As previously stated, we are also immensely proud of our ability to incorporate all functionality with practically no external hardware and no third-party APIs or libraries for hand tracking. Keeping it simple yet making the biggest impact possible: that was our motto for the entire hackathon. We raised our own baby without any help from those pesky nanny APIs or libraries.

What we learned

As with any good hackathon project, we encountered one learning experience after another. Exploring how three.js generates 3D objects using just an HTML canvas was new territory for all of us, and it required research on webcam integration and HSV detection, particle physics for explosion animations, and the quirks of the three.js library. Overall, we learned that with JavaScript and a well-written library, anything is possible!

What's next for Fingrr

There are many directions we can take this project. One option we considered was incorporating the Spotify API to retrieve the tempo and energy level of a song. With this, we could adjust the rate of asteroid creation and color intensity to match what the player is listening to, almost like a rhythm game. We also considered using OpenCV for JS to achieve more complex image detection, potentially allowing us to ditch our finger color patches entirely by recognizing a human hand.
