Inspiration

Companies expect 70% of their employees to rely heavily on data to make decisions by 2025, a jump of over 40% since 2018 [Tableau, 2021]. Yet 74% of employees report feeling overwhelmed when working with data [Accenture, 2020]. While a majority of business decisions are influenced by financial data, only 30% of employees from non-financial departments feel confident in their ability to understand and use financial information effectively [Deloitte, 2021]. In the USA alone, the lack of data and financial literacy is estimated to cost companies over $100B per year [Accenture, 2020].

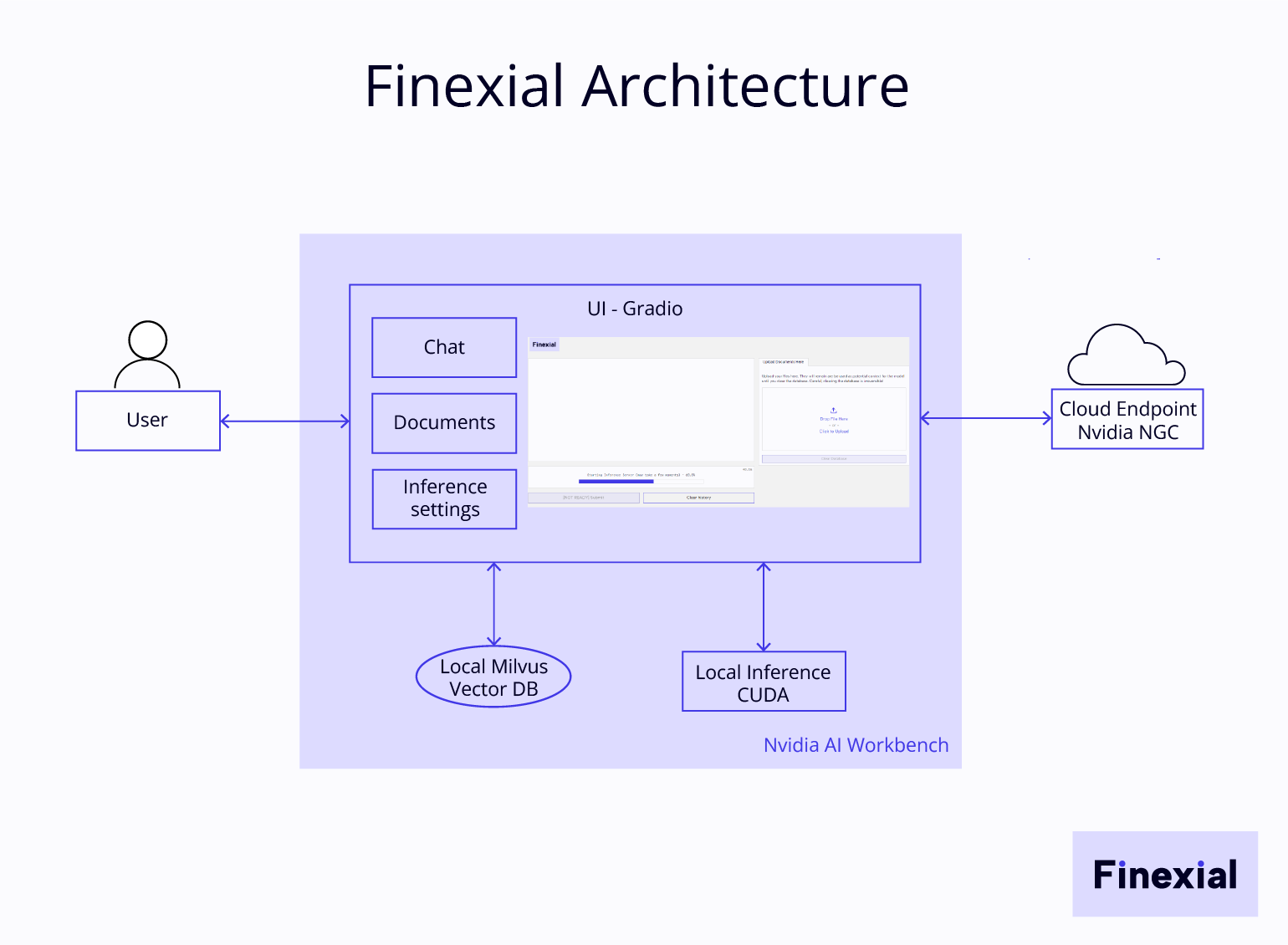

That's why, for this competition, I created Finexial, an AI-powered tool designed to help employees from non-financial departments make the most of their financial data and work more confidently with large volumes of data-heavy documents. Finexial relies on a Large Language Model (LLM) and Retrieval-Augmented Generation (RAG) so that users can query their financial documents and reports in natural language.

Requirements

Finexial is a tool for corporate employees. Here are the essential requirements I set for the project:

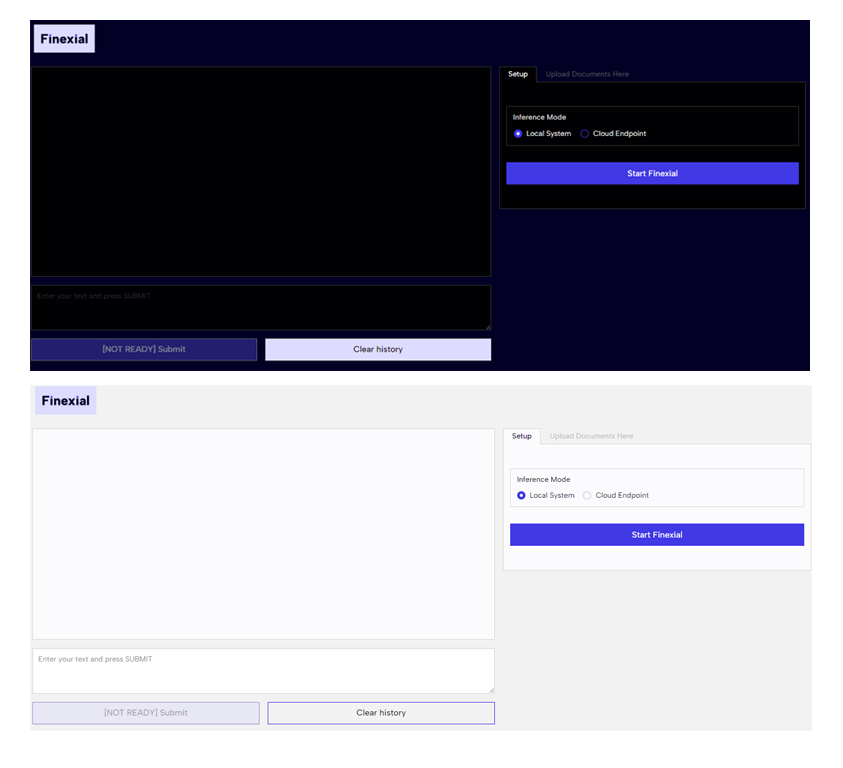

- Accessibility: Finexial needs to be easy to use for non-tech-savvy users, so the interface, built with Gradio, has to be as simple as possible.

- Privacy: Many companies do not allow their employees to use AI technologies for privacy reasons. That's why Finexial runs locally by default, so no document or data is shared with any third party. Users can also choose to run inference in the cloud through an Nvidia cloud endpoint.
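The local-by-default behavior can be sketched as a small backend selector. This is a hypothetical illustration, not Finexial's actual code: the `InferenceBackend` class, the `resolve_backend` function and the `NGC_API_KEY` environment variable name are all assumptions. Local mode is the default and never needs a key; cloud mode is opt-in and refuses to start without one.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


# Hypothetical settings object; field names are illustrative, not Finexial's real code.
@dataclass(frozen=True)
class InferenceBackend:
    mode: str                      # "local" or "cloud"
    sends_data_off_machine: bool   # True only when prompts leave the user's computer
    api_key: Optional[str] = None


def resolve_backend(mode: str = "local", env: Optional[Mapping[str, str]] = None) -> InferenceBackend:
    """Pick an inference backend, defaulting to local so no document leaves the machine."""
    env = os.environ if env is None else env
    if mode == "local":
        # Local inference: documents and prompts stay on the user's own GPU.
        return InferenceBackend(mode="local", sends_data_off_machine=False)
    if mode == "cloud":
        # Cloud inference is opt-in and needs an NGC key (NGC_API_KEY is an assumed name).
        key = env.get("NGC_API_KEY")
        if not key:
            raise ValueError("Cloud mode requires an NGC API key (NGC_API_KEY is not set).")
        return InferenceBackend(mode="cloud", sends_data_off_machine=True, api_key=key)
    raise ValueError(f"Unknown inference mode: {mode!r}")
```

Making local the default argument means a user who never touches the setting never sends documents off the machine.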

How it works

Thanks to Nvidia AI Workbench, Finexial is designed to be easy both to run and to use. It relies on the non-gated nvidia/Llama3-ChatQA-1.5-8B model to converse with your documents.
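A RAG system ultimately hands the model a single text prompt that combines a system instruction, passages retrieved from the user's documents, and the conversation so far. The sketch below assembles such a prompt following the plain-text `System:` / `User:` / `Assistant:` layout shown on the Llama3-ChatQA-1.5 Hugging Face model card; the exact wording should be checked against that card, and the system text and `build_prompt` helper here are hypothetical, not Finexial's actual prompt.

```python
from typing import Dict, List

# Hypothetical system prompt discouraging made-up figures; not Finexial's real prompt.
SYSTEM = (
    "System: This is a chat between a user and an AI assistant that answers questions "
    "about financial documents using only the context provided. If the answer is not "
    "in the context, the assistant says so instead of guessing."
)


def build_prompt(messages: List[Dict[str, str]], retrieved_chunks: List[str]) -> str:
    """Assemble a ChatQA-style prompt: system text, retrieved context, then dialogue turns."""
    context = "\n\n".join(retrieved_chunks)
    turns = [
        ("User: " if m["role"] == "user" else "Assistant: ") + m["content"]
        for m in messages
    ]
    # The prompt ends with an open "Assistant:" turn for the model to complete.
    conversation = "\n\n".join(turns) + "\n\nAssistant:"
    return SYSTEM + "\n\n" + context + "\n\n" + conversation
```

For example, `build_prompt([{"role": "user", "content": "What was total revenue for the quarter?"}], ["<passage retrieved from the uploaded report>"])` returns the string that would be sent to the model.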

How to get started

- Set up Nvidia AI Workbench by following the instructions on Finexial's GitHub and Nvidia's guides.

- Clone/import the Finexial project from GitHub.

- Add your own Hugging Face token and Nvidia NGC token to the project to run locally or in the cloud.

- Launch the project via Nvidia AI Workbench; a Gradio UI will open in a web browser. Both a light and a dark mode are available, and the default follows the user's browser settings.

- Choose your inference mode (local or cloud endpoint).

- Click on "Start Finexial". Once the model is ready, you can upload your financial document(s).

- Ask Finexial anything about the uploaded document(s) in natural language.
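Before uploaded documents can be queried, a RAG pipeline typically splits them into smaller overlapping chunks that can be embedded and retrieved individually. A minimal sketch, assuming word-based windows (production pipelines usually count tokens instead) and a hypothetical `chunk_text` helper whose default sizes are illustrative, not taken from Finexial:

```python
from typing import List


def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> List[str]:
    """Split text into overlapping word windows so each piece fits the retriever and prompt."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the document
    return chunks
```

The overlap keeps a sentence that straddles a chunk boundary fully present in at least one chunk, at the cost of storing some text twice.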

Example

As an example, I am using Nvidia's official Financial Results for Q3 2024.

How I built it

- To facilitate access to AI for non-technical users, Finexial relies heavily on Nvidia AI Workbench to manage AI development environments.

- Local inference uses Nvidia CUDA; I run it on a desktop computer equipped with an Nvidia RTX 3090.

- The local vector database is Milvus.

- Cloud inference can be selected in the interface; however, it requires an NGC account and token.

- The user interface is built with Gradio.
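Milvus stores an embedding vector for each chunk and, at query time, returns the chunks whose vectors are closest to the question's embedding. The sketch below imitates that search with a brute-force loop and a toy bag-of-words `embed` function standing in for a real embedding model; `embed`, `cosine` and `top_k` are hypothetical helpers that illustrate the idea only and do not use the Milvus API.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would use a neural embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(query: str, chunks: List[str], k: int = 3) -> List[Tuple[float, str]]:
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(c)), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]
```

A vector database replaces this linear scan with indexed (often approximate) nearest-neighbour search, which keeps retrieval fast as the number of chunks grows.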

Challenges I overcame

While setting up Nvidia AI Workbench was quite smooth, I ran into hardware limitations with local inference: the Nvidia RTX 2080 Ti I had was not sufficient to run the model. Thanks to support from the community on Discord and Twitter, the problem was quickly identified, and I borrowed an RTX 3090 from a friend.
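The exact cause of the RTX 2080 Ti limitation is not stated above, but a back-of-the-envelope memory estimate suggests one likely explanation. Assuming roughly 8 billion parameters loaded in 16-bit precision (2 bytes each), the weights alone need about 15 GiB, more than the 11 GB on an RTX 2080 Ti but well within the 24 GB of an RTX 3090. This sketch counts weights only and ignores the KV cache and activations, which add further overhead; `weight_memory_gib` is a hypothetical helper.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the model weights, in GiB (ignores KV cache and activations)."""
    return num_params * bytes_per_param / 2**30


PARAMS = 8.0e9  # approximate parameter count of an 8B model
GPUS_GIB = {"RTX 2080 Ti": 11, "RTX 3090": 24}  # on-board memory of each card

for label, nbytes in [("16-bit", 2), ("8-bit", 1), ("4-bit", 0.5)]:
    need = weight_memory_gib(PARAMS, nbytes)
    fits = {gpu: need < vram for gpu, vram in GPUS_GIB.items()}
    print(f"{label}: ~{need:.1f} GiB of weights, fits: {fits}")
```

The 8-bit and 4-bit rows show why quantization, much like choosing a smaller model, could bring local inference within reach of cards with less memory.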

Limitations

- Hallucination is a common problem with LLMs. The nvidia/Llama3-ChatQA-1.5-8B model used by Finexial sometimes makes up data despite a system prompt designed to prevent it.

- Finexial works with financial reports, but it struggles with table-only documents such as companies' P&L statements or some spreadsheets.

Future work for Finexial

- Future work planned for Finexial includes a more in-depth evaluation of the model's performance and further testing of its ability to understand and explain financial data. To that end, I plan to compile a set of financial documents with predefined questions and ground-truth answers. This would help me improve the system prompt, establish an evaluation baseline, and could also serve as training data for fine-tuning the model.

- Since Finexial is designed to run primarily locally on a wide range of hardware, I would also like to explore using a smaller model, either through distillation or simply by selecting a different base model.
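A question set with ground-truth answers, as planned above, could be scored with the exact-match and token-level F1 metrics commonly used for extractive question answering. A minimal sketch, where `EvalItem`, `evaluate` and the `ask` callable (standing in for a full Finexial query) are hypothetical names:

```python
import re
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalItem:
    document: str  # hypothetical identifier of the financial document
    question: str
    answer: str    # ground-truth answer


def normalize(text: str) -> List[str]:
    """Lowercase and keep alphanumeric tokens (decimals such as 18.1 stay whole)."""
    return re.findall(r"[a-z0-9]+(?:\.[0-9]+)?", text.lower())


def token_f1(prediction: str, truth: str) -> float:
    """Harmonic mean of token precision and recall between prediction and ground truth."""
    pred, gold = normalize(prediction), normalize(truth)
    common = sum((Counter(pred) & Counter(gold)).values())
    if common == 0:
        return 0.0
    precision, recall = common / len(pred), common / len(gold)
    return 2 * precision * recall / (precision + recall)


def evaluate(items: List[EvalItem], ask: Callable[[str, str], str]) -> dict:
    """Run every question through ask(document, question) and average EM and F1."""
    em = f1 = 0.0
    for item in items:
        pred = ask(item.document, item.question)
        em += float(normalize(pred) == normalize(item.answer))
        f1 += token_f1(pred, item.answer)
    n = max(len(items), 1)
    return {"exact_match": em / n, "f1": f1 / n}
```

Token F1 gives partial credit when an answer is phrased differently from the ground truth, which matters for free-form LLM answers; exact match is stricter but easier to interpret when tracking a baseline.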
