Inspiration

The idea is inspired by the way federated authentication and single sign-on (SSO) providers (e.g. Okta, Auth0) implement security. Applied to data, the same model would let individual APIs or services treat data protection as a cross-cutting concern and trust a central system/service with the responsibility of protecting all data exchange.

Another inspiration comes from service meshes and data meshes, and from how something like mutual TLS (mTLS) is applied almost 'automatically' to each service that joins such a mesh, with very little additional configuration. I envision this project enabling data protection and compliance verification in a similarly 'automatic' way, through configuration alone, for each new service that registers itself in the mesh.

What it does

The project is divided into 2 components:

- A centralized server/service called the HIPAA-Verifier, responsible for codifying the following:

  - Registration of all services that will be governed

  - Specific mapping of API endpoints and the type of data that will be exchanged

  - For these specific types of data:

    - Identify which fields need to be protected and how.

    - Identify which fields need to be verified and how.

- A sample service that acts as a data owner and interacts with the above HIPAA-Verifier to protect and verify any outgoing data.

The proposal is described in more detail at this Gist

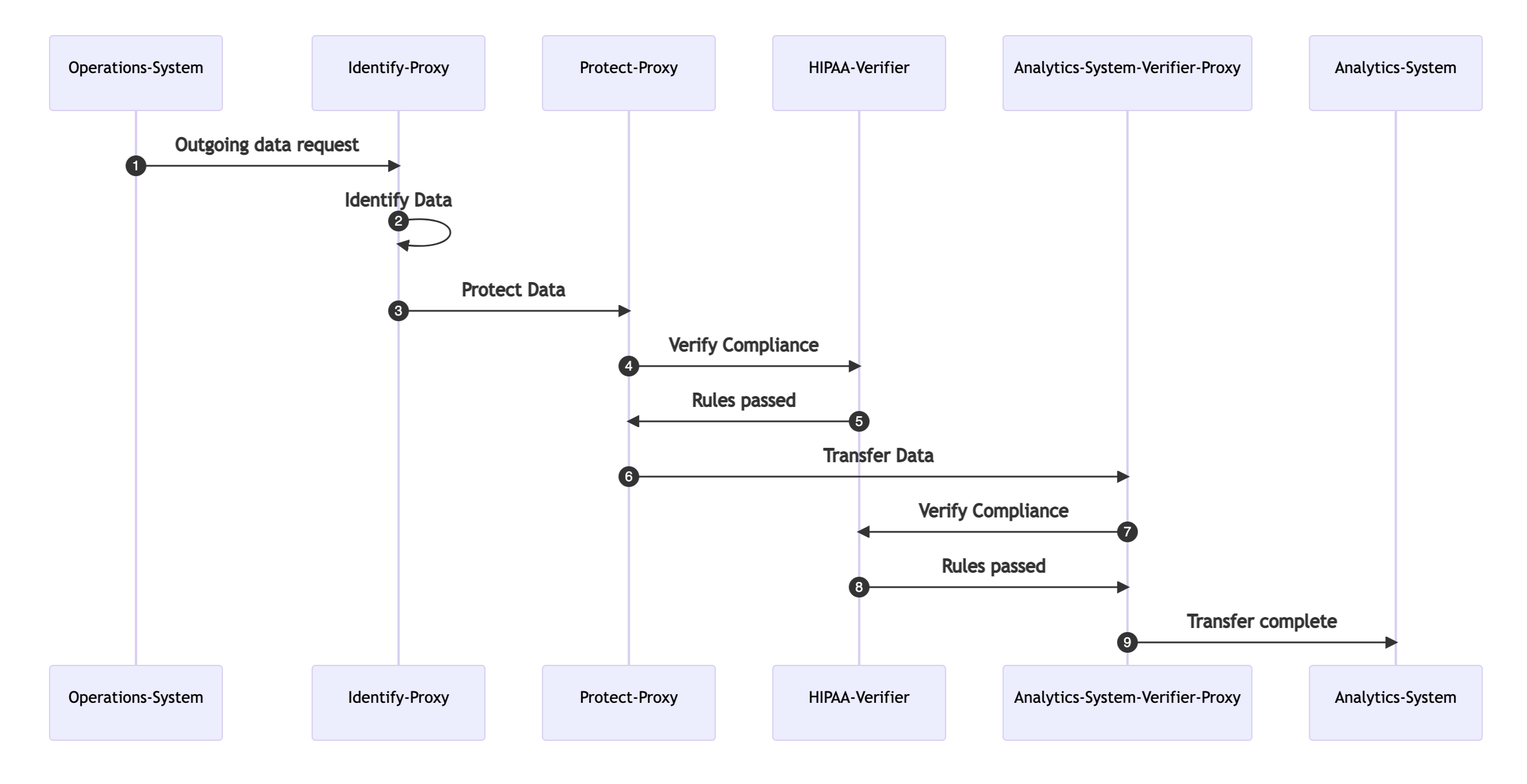

Example data flow

The outgoing data flow from the "data owner" is essentially proxied through 2 stages, where the HIPAA-Verifier will:

- "Identify and Protect" the outgoing data based on the rules already published for the specific data type and the destination of the outgoing data.

- "Identify and Verify" the protected data to make sure nothing was missed.
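The two stages can be sketched in Go (the project's language) as follows. This is a minimal sketch, not the project's code: `Record`, `protectStage`, `verifyStage` and `proxyOutgoing` are hypothetical names, records are assumed to be flat string maps, and every protect-rule is assumed to be a simple mask.

```go
package main

import (
	"errors"
	"fmt"
	"sort"
	"strings"
)

// Record is a hypothetical flat representation of an outgoing data record.
type Record map[string]string

// protectStage stands in for "Identify and Protect": it copies the record and
// replaces every field named in the rules with that field's mask.
func protectStage(r Record, rules map[string]string) Record {
	out := Record{}
	for k, v := range r {
		out[k] = v
	}
	for field, mask := range rules {
		if _, ok := out[field]; ok {
			out[field] = mask
		}
	}
	return out
}

// verifyStage stands in for "Identify and Verify": it fails if any ruled
// field still differs from its expected mask.
func verifyStage(r Record, rules map[string]string) error {
	var missed []string
	for field, mask := range rules {
		if v, ok := r[field]; ok && v != mask {
			missed = append(missed, field)
		}
	}
	if len(missed) > 0 {
		sort.Strings(missed) // deterministic error message
		return errors.New("unprotected fields: " + strings.Join(missed, ","))
	}
	return nil
}

// proxyOutgoing runs both stages; data is released only if verification passes.
func proxyOutgoing(r Record, rules map[string]string) (Record, error) {
	protected := protectStage(r, rules)
	if err := verifyStage(protected, rules); err != nil {
		return nil, err
	}
	return protected, nil
}

func main() {
	rules := map[string]string{"SSN": "XXX-XXX-XXXX"}
	out, err := proxyOutgoing(Record{"name": "Jane", "SSN": "123-45-6789"}, rules)
	fmt.Println(out["SSN"], err)
}
```

Releasing data only after the second stage passes means a missed or misapplied rule blocks the response instead of leaking data.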

How we built it

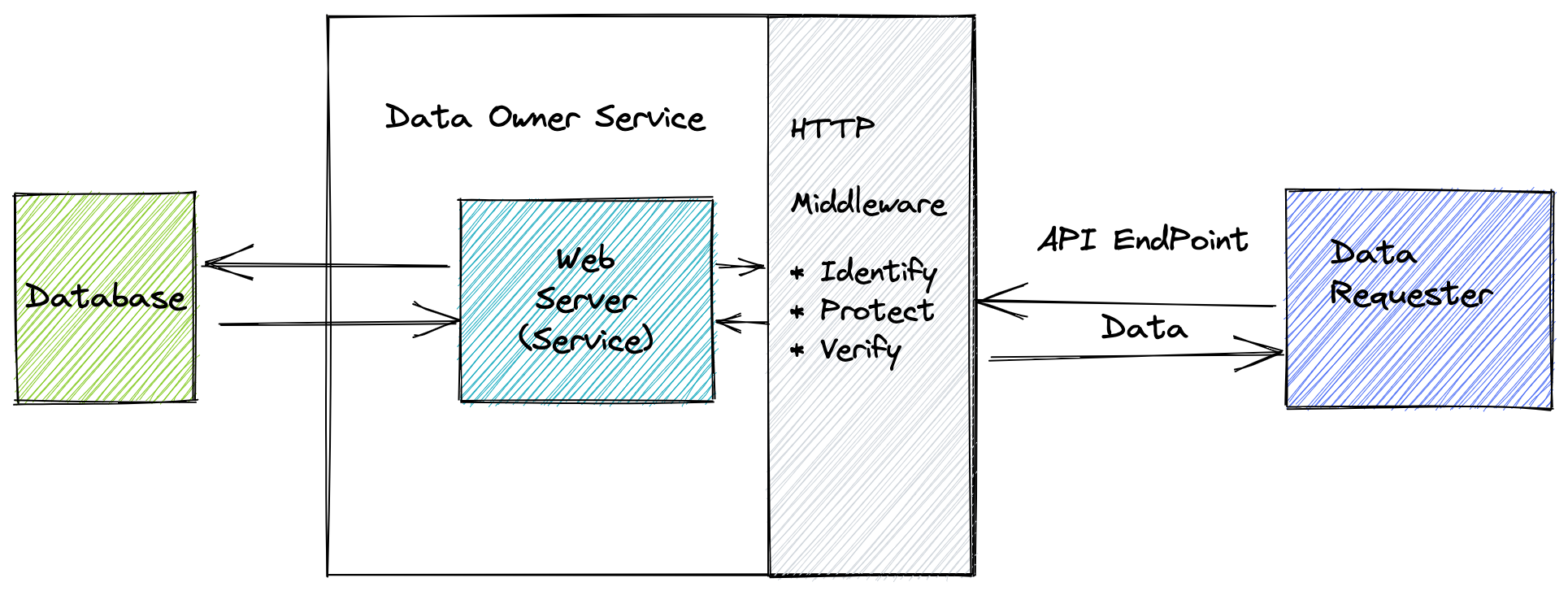

Initially, I approached this project with the "data owner" doing everything. All the logic to:

- identify which fields in a given data type are to be protected,

- decide how they will be protected, and finally,

- verify the protection

was done inside the service itself, as an HTTP middleware that calls functions in the same code base.

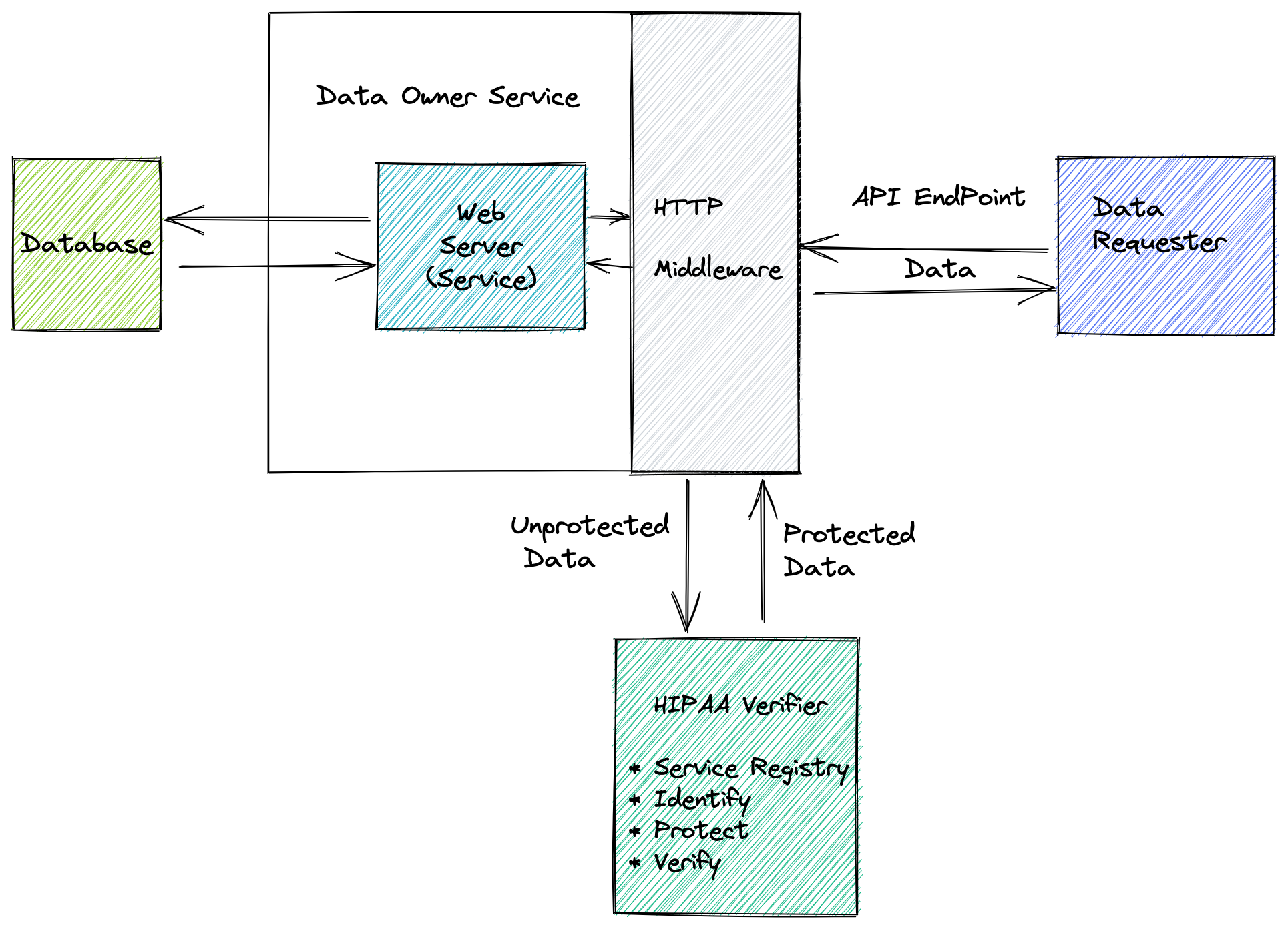

The second step was to move all the rules and associated functions into the HIPAA-Verifier abstraction, which offers an API endpoint for data protection and verification.

This differs from the previous step only in who performs the data protection and verification, and in how the HIPAA-Verifier knows which "data owner" is talking to which "data requester", along with the corresponding entry in its "registry" that specifies which data type to expect, so that it can apply the right set of rules.

To identify which rules to apply, we look at the source (data owner) and the destination (data requester) and then search the existing entries in our registry for the right set of protect-rules and verify-rules.
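A minimal sketch of that lookup, assuming the registry is an in-memory map keyed by the (source, destination) pair. `RouteKey`, `RegistryEntry`, `Registry` and the service names are hypothetical.

```go
package main

import "fmt"

// RouteKey identifies an exchange by source (data owner) and destination (data requester).
type RouteKey struct {
	Source      string
	Destination string
}

// RegistryEntry is a hypothetical registry entry: the expected data type plus
// the names of the protect-rules and verify-rules that apply to it.
type RegistryEntry struct {
	DataType     string
	ProtectRules []string
	VerifyRules  []string
}

// Registry maps each registered route to its entry.
type Registry map[RouteKey]RegistryEntry

// Lookup returns the entry for a source/destination pair, or an error if the
// pair was never registered, so unregistered exchanges can be rejected.
func (r Registry) Lookup(source, destination string) (RegistryEntry, error) {
	e, ok := r[RouteKey{source, destination}]
	if !ok {
		return RegistryEntry{}, fmt.Errorf("no registry entry for %s -> %s", source, destination)
	}
	return e, nil
}

func main() {
	reg := Registry{
		{"ehr-service", "claims-service"}: {DataType: "EHR", ProtectRules: []string{"SSN"}, VerifyRules: []string{"SSN"}},
	}
	e, err := reg.Lookup("ehr-service", "claims-service")
	fmt.Println(e.DataType, err)
	_, err = reg.Lookup("ehr-service", "unknown-service")
	fmt.Println(err)
}
```

Keying on the ordered pair means the same owner can expose different rules to different requesters.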

An example of a protect-rule is:

```json
{
  "SSN": {
    "functionType": "Mask",
    "functionName": "MaskingFunction",
    "Arguments": ["XXX-XXX-XXXX"]
  }
}
```

This rule applies a masking function, using the specified pattern, to the SSN field in a data record.
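The protect-rule could be decoded and dispatched by its `functionName` roughly as follows. This sketch assumes flat string records, a hypothetical in-process table of named functions, and a `MaskingFunction` that replaces the whole value with its pattern argument; it is not the project's actual implementation.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// ProtectRule mirrors the JSON protect-rule format.
type ProtectRule struct {
	FunctionType string   `json:"functionType"`
	FunctionName string   `json:"functionName"`
	Arguments    []string `json:"Arguments"`
}

// protectFuncs is a hypothetical table of named protect functions.
var protectFuncs = map[string]func(value string, args []string) (string, error){
	// MaskingFunction replaces the value with the pattern given as its only argument.
	"MaskingFunction": func(value string, args []string) (string, error) {
		if len(args) != 1 {
			return "", fmt.Errorf("MaskingFunction expects 1 argument, got %d", len(args))
		}
		return args[0], nil
	},
}

// applyProtectRules applies each field's rule and returns a new record.
func applyProtectRules(record map[string]string, rules map[string]ProtectRule) (map[string]string, error) {
	out := make(map[string]string, len(record))
	for k, v := range record {
		out[k] = v
	}
	for field, rule := range rules {
		v, ok := out[field]
		if !ok {
			continue // field not present in this record
		}
		fn, ok := protectFuncs[rule.FunctionName]
		if !ok {
			return nil, fmt.Errorf("unknown function %q for field %s", rule.FunctionName, field)
		}
		pv, err := fn(v, rule.Arguments)
		if err != nil {
			return nil, err
		}
		out[field] = pv
	}
	return out, nil
}

func main() {
	raw := `{"SSN": {"functionType": "Mask", "functionName": "MaskingFunction", "Arguments": ["XXX-XXX-XXXX"]}}`
	var rules map[string]ProtectRule
	if err := json.Unmarshal([]byte(raw), &rules); err != nil {
		panic(err)
	}
	out, err := applyProtectRules(map[string]string{"SSN": "123-45-6789"}, rules)
	fmt.Println(out["SSN"], err)
}
```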

For each field (e.g. SSN) inside a data record type (e.g. an EHR record), we specify a path that the protect-rule uses to locate the field and apply its logic. Similarly, different fields can have different logic applied to them (e.g. hashing, encryption, redaction, scrubbing), depending on what 'protect' means for that field.
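One way to resolve such a field path in a nested record is sketched below. The dot-separated path syntax (e.g. `patient.SSN`) and the `applyAtPath` and `redact` helpers are assumptions for illustration; the project's actual path format is not specified here.

```go
package main

import (
	"fmt"
	"strings"
)

// applyAtPath walks a dot-separated path (a hypothetical syntax) through nested
// JSON-like maps and replaces the leaf value, in place, with fn(leaf).
// It reports whether the path was found.
func applyAtPath(rec map[string]any, path string, fn func(any) any) bool {
	parts := strings.Split(path, ".")
	cur := rec
	for _, p := range parts[:len(parts)-1] {
		next, ok := cur[p].(map[string]any)
		if !ok {
			return false // intermediate key missing or not an object
		}
		cur = next
	}
	leaf := parts[len(parts)-1]
	v, ok := cur[leaf]
	if !ok {
		return false
	}
	cur[leaf] = fn(v)
	return true
}

// redact is one example of a per-field protect function.
func redact(any) any { return "[REDACTED]" }

func main() {
	rec := map[string]any{
		"patient": map[string]any{"name": "Jane", "SSN": "123-45-6789"},
	}
	found := applyAtPath(rec, "patient.SSN", redact)
	fmt.Println(found, rec["patient"].(map[string]any)["SSN"])
}
```

Swapping `redact` for a hashing or encryption function changes what 'protect' means for a field without changing how the field is located.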

In the same way, verify-rules specify how to check that an individual field in a given data record was 'protected' correctly. The format is similar to a protect-rule, except that the function returns true or false based on the value of that field in the record. In the SSN example, the verify-rule returns true if the SSN field matches the value XXX-XXX-XXXX, and false otherwise.
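A verify-rule could be evaluated with the same dispatch pattern, except that each named function returns a bool. `VerifyRule`, `verifyRecord` and `MaskVerifyFunction` are hypothetical names; this sketch fails closed, treating a missing field or an unknown function as a verification failure.

```go
package main

import (
	"fmt"
	"sort"
)

// VerifyRule mirrors the protect-rule format, but its named function returns a bool.
type VerifyRule struct {
	FunctionName string   `json:"functionName"`
	Arguments    []string `json:"Arguments"`
}

// verifyFuncs is a hypothetical table of named verify functions.
var verifyFuncs = map[string]func(value string, args []string) bool{
	// MaskVerifyFunction passes only if the value equals the expected mask exactly.
	"MaskVerifyFunction": func(value string, args []string) bool {
		return len(args) == 1 && value == args[0]
	},
}

// verifyRecord returns the sorted names of fields that failed verification;
// an empty result means every rule passed. Missing fields and unknown
// functions count as failures (fail closed).
func verifyRecord(record map[string]string, rules map[string]VerifyRule) []string {
	var failed []string
	for field, rule := range rules {
		value, present := record[field]
		fn, known := verifyFuncs[rule.FunctionName]
		if !present || !known || !fn(value, rule.Arguments) {
			failed = append(failed, field)
		}
	}
	sort.Strings(failed)
	return failed
}

func main() {
	rules := map[string]VerifyRule{
		"SSN": {FunctionName: "MaskVerifyFunction", Arguments: []string{"XXX-XXX-XXXX"}},
	}
	fmt.Println(verifyRecord(map[string]string{"SSN": "XXX-XXX-XXXX"}, rules)) // []
	fmt.Println(verifyRecord(map[string]string{"SSN": "123-45-6789"}, rules))  // [SSN]
}
```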

Assumptions of this implementation

- The biggest assumption is that the HIPAA-Verifier is a trusted party and is already compliant with any standards it verifies others against.

- This project only looks in detail at outgoing data (requested by somebody), not at data-at-rest or incoming data; these could be examined in a similar way as future work.

- The fields addressed in this implementation are a very small subset of what might be considered PHI, chosen purely to reduce implementation complexity.

Challenges we ran into

The challenge for me was to simplify the idea/abstraction to a point where I could reasonably think about implementing it and see how it would work at a small scale or on a smaller scope. I started with FHIR EHR and Claims data and tried to think about what privacy would mean when these data sets are looked at together. To simplify, I then narrowed the scope to a single data/record type, EHR data, and examined it more closely.

Accomplishments that we're proud of

I am quite happy that this hackathon gave me a chance to build on my idea and find related resources to learn from. Writing it down and visualizing it gave me a better sense of the pros and cons of such an idea compared to what's already out there.

What we learned

Along the way, I found other significant work that has already been done in this area:

- Kodex - https://heykodex.com/docs/ - a toolkit to codify security and privacy rules to protect data exchange

- Privacy-first data-mesh - https://www.thoughtworks.com/en-us/insights/articles/privacy-first-data-via-data-mesh

I can learn from these more mature implementations to see how to take this idea further.

What's next for Federated/centralized data protection and compliance

- Around data, this currently only supports running simple functions at the field level of a data record. This could be extended to run over a list of data records and perform more complex techniques like k-anonymity or aggregation.

- To ease adoption, this could be offered either as a centralized server/service or as a language library that can be used directly in code without a network call.

- Service registration with the central HIPAA-Verifier is hard-coded right now. This could be replaced by dynamic registration, where each new data-endpoint that is exposed automatically registers with the HIPAA-Verifier by sending its metadata (source, destination, data-type, field-identification-paths), allowing the HIPAA-Verifier to protect and verify the outgoing data.

- Adopt a more advanced technique like differential privacy, possibly using Google's SDK, to apply such logic to a list of outgoing data records.

- Use a data exchange format like protobuf to define the service registry and record types, so that it's easier to apply strong type checking without having to duplicate record types in both places.
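The dynamic registration idea could start from a payload and an in-memory registry like the following. It is only a sketch under assumptions: the JSON field names, `Registration`, `DynamicRegistry` and the service names are hypothetical, and both the transport (e.g. an HTTP endpoint on the HIPAA-Verifier) and authentication of registering services are left out.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"sync"
)

// Registration is a hypothetical payload a new data-endpoint could send,
// carrying source, destination, data type and field-identification paths.
type Registration struct {
	Source      string            `json:"source"`
	Destination string            `json:"destination"`
	DataType    string            `json:"dataType"`
	FieldPaths  map[string]string `json:"fieldIdentificationPaths"` // field name -> path in the record
}

// DynamicRegistry stores registrations keyed by (source, destination); safe for concurrent use.
type DynamicRegistry struct {
	mu      sync.RWMutex
	entries map[[2]string]Registration
}

func NewDynamicRegistry() *DynamicRegistry {
	return &DynamicRegistry{entries: map[[2]string]Registration{}}
}

// Register decodes and validates a JSON payload, then stores it,
// replacing any earlier entry for the same route.
func (d *DynamicRegistry) Register(body []byte) error {
	var reg Registration
	if err := json.Unmarshal(body, &reg); err != nil {
		return err
	}
	if reg.Source == "" || reg.Destination == "" || reg.DataType == "" {
		return errors.New("source, destination and dataType are required")
	}
	d.mu.Lock()
	defer d.mu.Unlock()
	d.entries[[2]string{reg.Source, reg.Destination}] = reg
	return nil
}

// Get returns the registration for a route, if any.
func (d *DynamicRegistry) Get(source, destination string) (Registration, bool) {
	d.mu.RLock()
	defer d.mu.RUnlock()
	reg, ok := d.entries[[2]string{source, destination}]
	return reg, ok
}

func main() {
	payload, _ := json.Marshal(Registration{
		Source: "ehr-service", Destination: "claims-service", DataType: "EHR",
		FieldPaths: map[string]string{"SSN": "patient.SSN"},
	})
	d := NewDynamicRegistry()
	fmt.Println(d.Register(payload))
	reg, ok := d.Get("ehr-service", "claims-service")
	fmt.Println(ok, reg.FieldPaths["SSN"])
}
```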

Built With

- fiber

- golang
