🪞 Mirr.AI

Sometimes the future isn't about doing something new—it's about finally making something we've dreamed of for 30 years. We're bringing the wardrobe from Clueless (1995) to life.

The Problem

Every year, online shoppers return $890 billion worth of merchandise—and fashion is the worst offender.

The numbers are brutal:

- 30-40% of online clothing purchases get returned, compared to just 8% in stores

- Size and fit issues cause 53% of all apparel returns

- Retailers lose an average of $21-24 per returned item in processing costs alone

- The total cost to process fashion returns? $38 billion annually in the US

Meanwhile, the environmental toll is staggering:

- E-commerce returns generate 24 million metric tons of CO2 emissions every year

- Clothing returns alone release emissions equivalent to 3 million cars on US roads

- 9.5 billion pounds of returned products went straight to landfills in 2022

- Up to 44% of returned fashion items never reach another customer—they're destroyed, liquidated, or dumped

The root cause is painfully simple: you can't try clothes on through a screen.

So shoppers "bracket"—ordering the same item in multiple sizes, keeping one, returning the rest. 51% of Gen Z does this routinely. It's not their fault. It's the only rational response to a broken system.

Retailers have tried everything: stricter return policies (customers just shop elsewhere), better photos (still can't show fit), size charts (nobody trusts them). The solutions treat symptoms, not the disease.

The fashion e-commerce market is worth $781 billion globally. But it's hemorrhaging money, destroying the planet, and frustrating customers—all because of one unsolved problem: visualization.

That's where Mirr.AI comes in.

The Solution

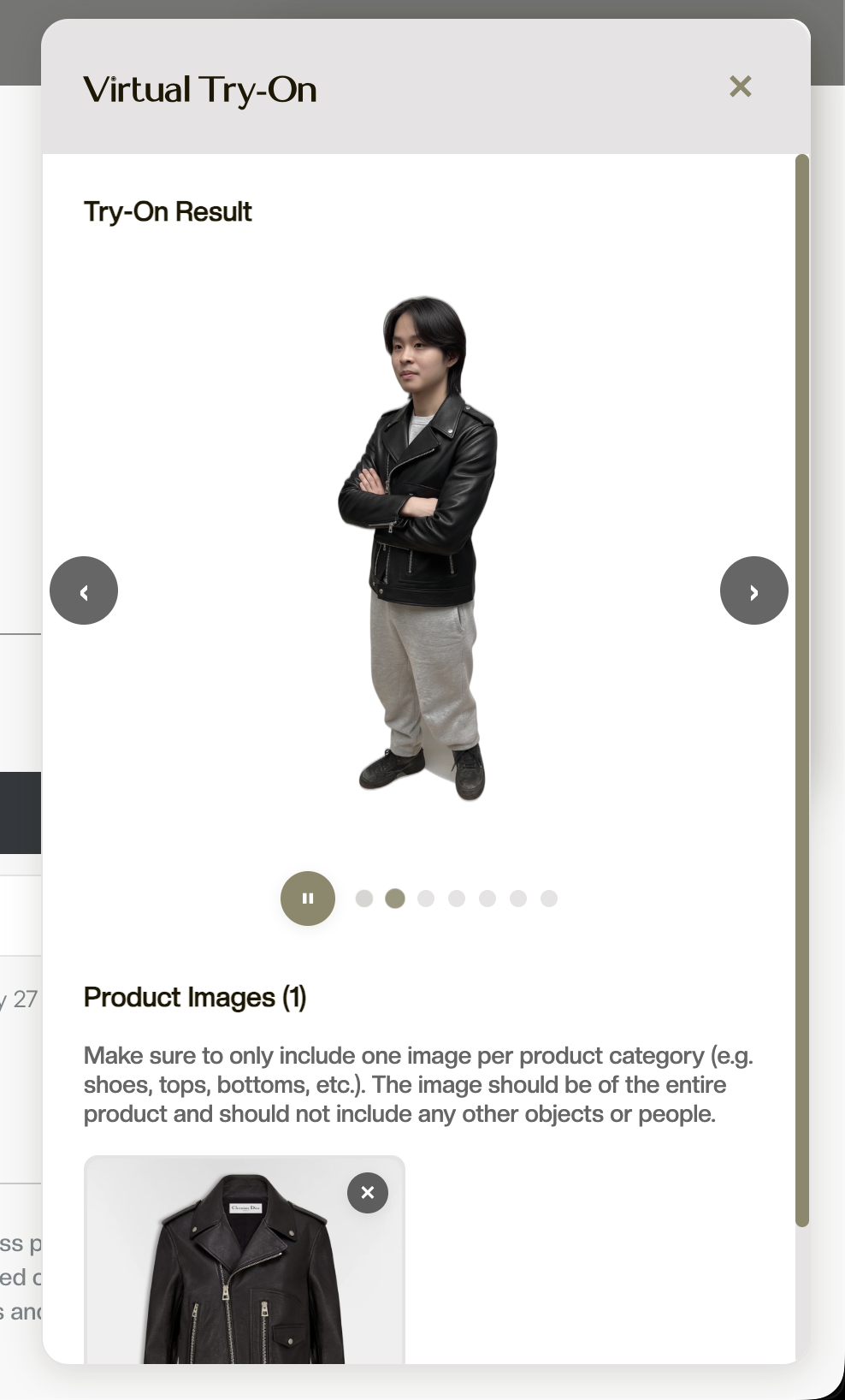

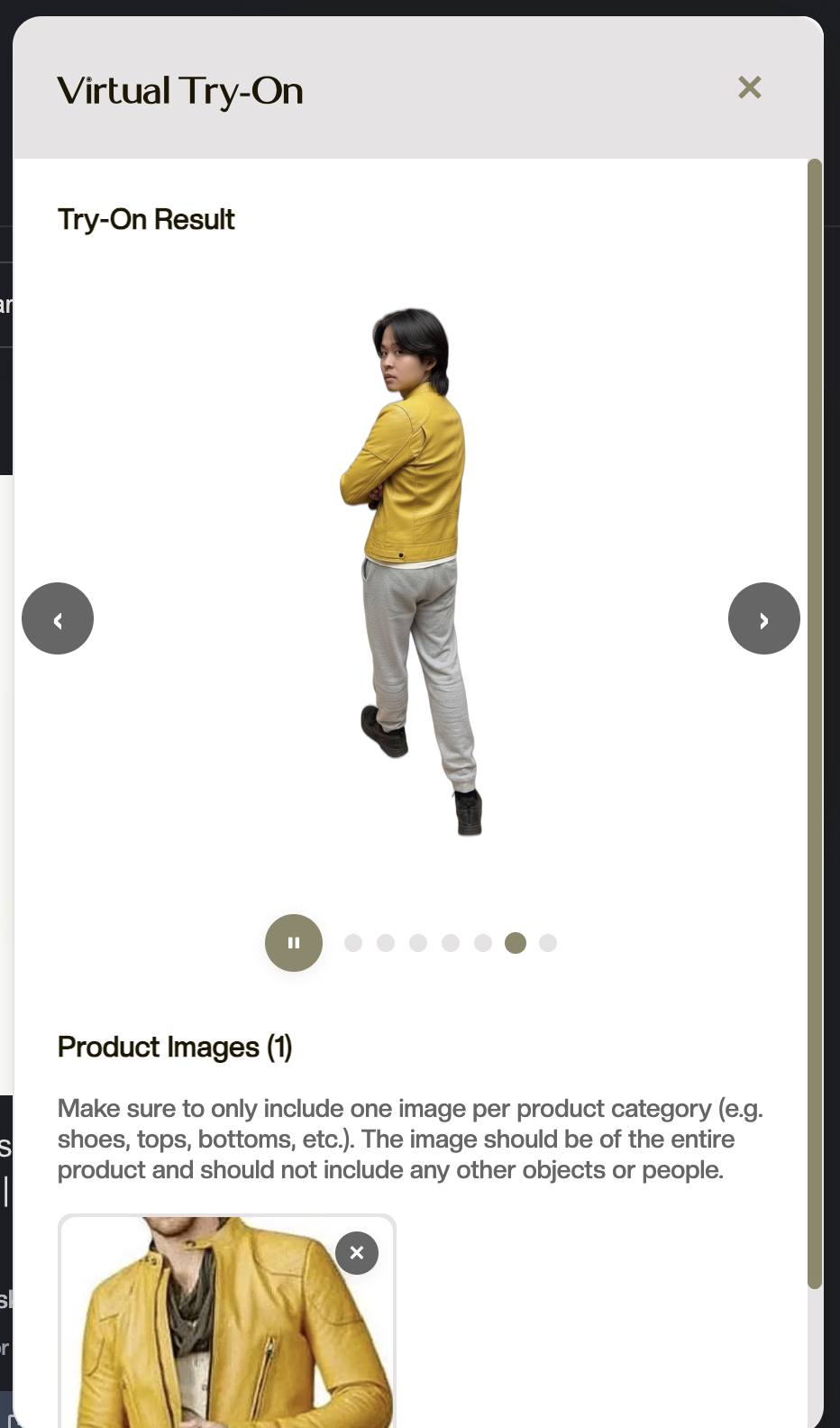

Mirr.AI is a free Chrome extension that lets you virtually try on any clothing item from any online store, see yourself from 7 different angles, and get AI-powered outfit recommendations—all from a single photo.

Inspired by the futuristic wardrobe from the opening scene of the 1995 comedy Clueless, Mirr.AI finally makes that 30-year-old vision real.

Here's how it works:

1. Upload once, try on forever. Take a single photo. Our pipeline uses Google's Vertex AI diffusion models to generate a photorealistic avatar that preserves your body, pose, and proportions.

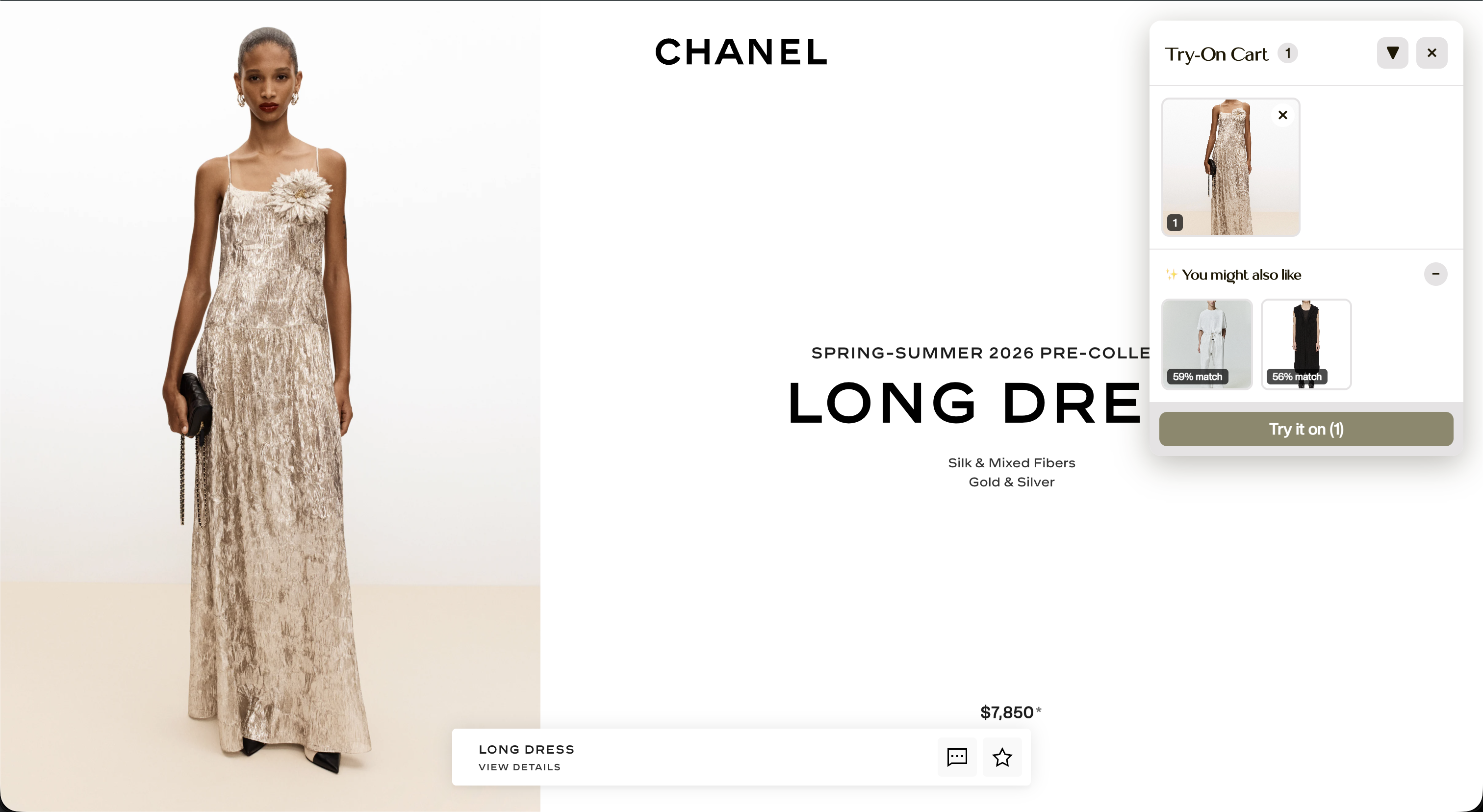

2. Shop anywhere, try on anything. Browse your favorite stores normally (Amazon, Zara, H&M, ASOS, anywhere). Hover over any clothing item, click the Mirr.AI button, and watch as our AI fits that exact garment onto your avatar in real time.

3. See yourself from every angle. Not just a flat image: our Gumloop-orchestrated distributed inference pipeline dispatches 7 parallel Gemini 2.5 Flash workers to synthesize camera views from 0° to 270°. See how that jacket drapes from behind, or how those jeans look from the side, in ~4 seconds instead of 21.

4. Build complete outfits. Layer multiple pieces. Mix a top from Zara with pants from H&M. See the full look before you buy anything. Track your total cost in real time.

5. Get AI-powered style recommendations. Found a top that looks great but not sure what bottoms match? Our FashionSigLIP-powered recommendation engine analyzes your cart and suggests complementary pieces from your browsing history, based on visual style rather than keywords.

How We Built It

The AI Pipeline

We built a cascaded generative system that processes your photo through multiple AI stages:

- U2-Net Segmentation — Isolates you from the background with precise alpha matting

- Google Vertex AI Virtual Try-On — Diffusion-based garment transfer conditioned on body pose and garment segmentation masks

- Cloudinary CDN — Uploads the composite to a global edge network for low-latency access

- Gumloop Agentic Orchestration — Dispatches 7 parallel Gemini 2.5 Flash workers through a DAG execution engine

- Marqo FashionSigLIP Embeddings — 768-dimensional fashion vectors enabling semantic similarity search for outfit recommendations
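
The last stage can be pictured as a nearest-neighbor search over embedding vectors. The following is a minimal sketch, assuming the embeddings have already been computed: toy 4-dimensional vectors stand in for the 768-dimensional FashionSigLIP ones, and `cosine_similarity`, `recommend`, and the catalog entries are hypothetical names chosen for illustration, not part of the project's code.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def recommend(query_vec, catalog, top_k=2):
    """Return the names of the top_k catalog items most similar to query_vec."""
    scored = [(cosine_similarity(query_vec, vec), name) for name, vec in catalog.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]

# Toy vectors (assumption): real embeddings would come from the model.
catalog = {
    "floral sundress": [0.9, 0.1, 0.0, 0.2],
    "botanical midi dress": [0.8, 0.2, 0.1, 0.3],
    "leather biker jacket": [0.0, 0.9, 0.4, 0.1],
}
query = [0.85, 0.15, 0.05, 0.25]
print(recommend(query, catalog))
```

With these toy vectors the two dresses rank above the jacket, which is the behavior a style-based (rather than keyword-based) recommender is after.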

Why Gumloop for Distributed Inference?

Sequential Gemini API calls for 7 camera angles would take ~21 seconds. That's unacceptable for real-time try-on.

We use Gumloop's agentic workflow orchestration to achieve true parallelism:

| Method | Total Latency | Parallelism |
|---|---|---|
| Sequential Gemini API | ~21s (7 × 3s) | 1x |
| Async Python (rate-limited) | ~12s | ~2x |
| Gumloop Distributed | ~4s | 7x |

Gumloop's DAG execution engine dispatches all 7 inference tasks simultaneously, handles automatic retries with exponential backoff, and aggregates results only when all workers complete (barrier synchronization).
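
The fan-out, retry, and barrier pattern described here can be sketched with plain `asyncio`. This is not Gumloop's API. It is a local stand-in for the same idea, under stated assumptions: `render_view` is a hypothetical placeholder that sleeps instead of calling a model, the 45° spacing between views is assumed from "7 views from 0° to 270°", and the example URL is made up.

```python
import asyncio
import random

ANGLES = [0, 45, 90, 135, 180, 225, 270]  # 7 views, assuming even 45-degree steps

async def render_view(angle, image_url):
    """Hypothetical stand-in for one model call; sleeps instead of hitting an API."""
    await asyncio.sleep(0.01)
    return {"angle": angle, "source": image_url}

async def with_retries(task_factory, max_attempts=3, base_delay=0.01):
    """Await task_factory(), retrying failures with exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return await task_factory()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            delay = base_delay * (2 ** attempt) * (1 + random.random())
            await asyncio.sleep(delay)

async def render_all(image_url):
    """Start all views concurrently and wait until every one has finished."""
    tasks = [
        with_retries(lambda a=angle: render_view(a, image_url))
        for angle in ANGLES
    ]
    # gather acts as the barrier: it returns only when all tasks are done,
    # and it preserves the order of ANGLES in the result list.
    return await asyncio.gather(*tasks)

views = asyncio.run(render_all("https://example.com/avatar.png"))
print([v["angle"] for v in views])
```

In this sketch total latency is bounded by the slowest single call plus any retries, rather than the sum of all seven calls.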

The Extension

The extension is built with Plasmo, React 18, and TypeScript. Its content scripts inject try-on buttons directly into the DOM of any e-commerce page. The popup interface manages your avatar, shopping cart, and outfit gallery. We use the Chrome Storage API for persistent state across sessions.

The Backend

Flask serves as our API gateway, handling multipart form uploads and SSE streaming. MongoDB Atlas stores session data, garment metadata, and pre-computed embedding vectors for sub-100ms recommendation queries. Cloudinary stages assets on a global CDN before dispatching to Gumloop.
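
To make the SSE part concrete, here is a small sketch of how progress updates could be serialized in the server-sent events wire format. The event names, payload fields, and stage labels are assumptions for illustration. In Flask, a generator like `tryon_progress` can be streamed by wrapping it in a `Response` with `mimetype="text/event-stream"`; the sketch itself uses only the standard library so it runs on its own.

```python
import json

def format_sse(data, event=None):
    """Serialize one server-sent event: optional 'event:' line, a 'data:' line, then a blank line."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # json.dumps emits a single line by default, so one 'data:' line is enough.
    lines.append(f"data: {json.dumps(data)}")
    return "\n".join(lines) + "\n\n"

def tryon_progress(stages):
    """Yield one 'progress' event per pipeline stage, then a final 'done' event."""
    for index, stage in enumerate(stages, start=1):
        yield format_sse({"stage": stage, "step": index, "of": len(stages)}, event="progress")
    yield format_sse({"status": "complete"}, event="done")

# Hypothetical stage labels, loosely following the pipeline list above.
stages = ["segmentation", "try-on", "cdn-upload", "multi-view"]
for message in tryon_progress(stages):
    print(message, end="")
```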

Challenges We Ran Into

1. Sequential inference bottleneck. Generating 7 camera angles sequentially was unacceptably slow (~21s). We solved this by integrating Gumloop's distributed workflow engine, reducing total latency to ~4 seconds through true parallel execution.

2. Sequential garment composition. Layering multiple clothing items (top + bottom + jacket) required careful ordering through the diffusion pipeline. Naive approaches created artifacts where garments occluded incorrectly. We solved this by iteratively updating the pose reference after each garment pass.

3. Maintaining image quality across AI passes. Running an image through segmentation → diffusion → CDN upload → distributed inference → segmentation again introduced cumulative quality loss. We implemented careful aspect ratio handling, lossless CDN encoding, and bilinear resize strategies to preserve resolution.

4. Novel view consistency. Generating 7 camera angles that look like the same person wearing the same outfit, rather than 7 slightly different people, required extensive prompt engineering with Gemini 2.5 Flash and consistent conditioning on the reference image URL from Cloudinary.

5. Universal product detection. Amazon structures product pages completely differently from Zara, which differs from independent Shopify stores. We built a multi-strategy detection system using meta tags, Open Graph data, structured data schemas, and fallback DOM traversal.

6. Gumloop integration complexity. Coordinating asset staging (Cloudinary), workflow triggering (Gumloop), and result aggregation required careful error handling. We implemented retry logic and fallback to sequential generation if the distributed pipeline fails.
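
The multi-strategy product detection from challenge 5 can be illustrated as an ordered chain of fallbacks. The sketch below is written in Python for consistency with the other examples (the extension itself would do this in a content script), and the strategy order, class name, and sample page are assumptions rather than the project's actual logic.

```python
import json
from html.parser import HTMLParser

class ProductScanner(HTMLParser):
    """Collects Open Graph tags, JSON-LD blocks, and <img> sources in one pass."""

    def __init__(self):
        super().__init__()
        self.og = {}
        self.json_ld = []
        self.images = []
        self._in_json_ld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("property") or "").startswith("og:"):
            self.og[attrs["property"]] = attrs.get("content") or ""
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []
        elif tag == "img" and attrs.get("src"):
            self.images.append(attrs["src"])

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.json_ld.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed structured data: fall through to other strategies

def detect_product_image(html):
    """Try Open Graph, then JSON-LD Product data, then the first <img>; None if all fail."""
    scanner = ProductScanner()
    scanner.feed(html)
    if scanner.og.get("og:image"):
        return ("open-graph", scanner.og["og:image"])
    for block in scanner.json_ld:
        if isinstance(block, dict) and block.get("@type") == "Product" and block.get("image"):
            image = block["image"]
            first = image[0] if isinstance(image, list) else image
            return ("json-ld", first)
    if scanner.images:
        return ("dom-fallback", scanner.images[0])
    return None

# Made-up page with no Open Graph tags, so the JSON-LD strategy is the one that matches.
page = (
    '<html><head><script type="application/ld+json">'
    '{"@type": "Product", "image": ["https://shop.example/jacket.jpg"]}'
    '</script></head><body><img src="/logo.png"></body></html>'
)
print(detect_product_image(page))
```

Returning the name of the strategy that matched alongside the URL makes it easier to see which sites rely on the weaker fallbacks.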

Accomplishments We're Proud Of

24 hours, fully functional product — From concept to working Chrome extension with a complete multi-model distributed AI pipeline in one hackathon sprint

5x faster multi-view generation — Gumloop's parallel DAG execution reduced 7-angle synthesis from 21s to ~4s

Works on any site — Universal compatibility with Amazon, Zara, H&M, ASOS, Shopify stores, and virtually any e-commerce platform

True 360° visualization — Not just a single flat image, but 7 synthesized camera angles for genuine try-on confidence

Production-quality photorealism — Results that actually help you make purchase decisions, not uncanny valley artifacts

Semantic outfit intelligence — AI recommendations based on visual style understanding through FashionSigLIP, not keyword matching

Intuitive UX — Zero learning curve. Hover, click, try on. Shopping stays as fast as it always was.

Exceptional teamwork — Seamless collaboration across frontend, backend, and ML workstreams under extreme time pressure

What We Learned

Distributed inference is a game-changer. Sequential API calls are the silent killer of real-time AI applications. Gumloop's agentic workflows let us achieve true parallelism without managing infrastructure.

CDN staging matters for distributed systems. Passing large images directly to workers creates bandwidth bottlenecks. Staging on Cloudinary's global edge network ensures all 7 Gemini workers can fetch the reference image with minimal latency.

Diffusion models are powerful, but sequencing matters. The order in which you composite garments dramatically affects the final output. A jacket must "know" there's a shirt underneath.
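
The sequencing idea can be sketched as a fold over garments sorted from inner to outer layer, where each pass's output becomes the reference for the next. `apply_garment` is a hypothetical stand-in for a diffusion try-on pass (here it only builds a label), and the `LAYER_ORDER` ranking is an assumption, not the ordering the pipeline actually uses.

```python
from dataclasses import dataclass

# Assumed inner-to-outer ordering; the real pipeline's categories may differ.
LAYER_ORDER = {"bottom": 0, "top": 1, "jacket": 2}

@dataclass(frozen=True)
class Garment:
    name: str
    category: str

def apply_garment(reference, garment):
    """Hypothetical stand-in for one try-on pass; returns a label for the new image."""
    return f"{reference}+{garment.name}"

def compose_outfit(avatar, garments):
    """Apply garments from innermost to outermost layer, chaining the reference image."""
    reference = avatar
    for garment in sorted(garments, key=lambda g: LAYER_ORDER[g.category]):
        # The result of this pass is what the next garment gets fitted onto,
        # so an outer layer is always generated on top of the inner ones.
        reference = apply_garment(reference, garment)
    return reference

outfit = [
    Garment("denim-jacket", "jacket"),
    Garment("white-tee", "top"),
    Garment("black-jeans", "bottom"),
]
print(compose_outfit("avatar", outfit))  # avatar+black-jeans+white-tee+denim-jacket
```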

Fashion-domain embeddings beat general-purpose CLIP. Marqo's FashionSigLIP, trained on 300M+ fashion image-text pairs, understands that a "floral sundress" is semantically similar to a "botanical print midi dress" in ways vanilla CLIP doesn't.

The hardest part isn't the ML—it's the integration. Getting Vertex AI, Cloudinary, Gumloop, Gemini, U2-Net, FashionSigLIP, SSE streaming, Chrome extension APIs, and React state management to work together seamlessly required more debugging than any individual model.

The Impact

Virtual try-on technology has been reported to reduce return rates by 20-40% and increase conversion rates by 30%.

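If Mirr.AI achieved even a 25% reduction in returns, applying that cut to the figures cited above gives a rough sense of scale:

- $9.5 billion saved in processing costs annually (25% of the $38 billion US fashion figure)

- 6 million metric tons of CO2 emissions prevented (25% of the 24 million tons attributed to all e-commerce returns)

- Roughly 2.4 billion pounds of returned products diverted from landfills (25% of the 9.5 billion pounds landfilled in 2022)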
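
The arithmetic behind these estimates is a flat 25% of the baseline figures quoted in The Problem section. A short sketch makes the derivation explicit; the dictionary keys are illustrative names, and the calculation assumes the reduction applies uniformly to each baseline, which is a simplification.

```python
REDUCTION = 0.25  # the 25% scenario from the text

# Baselines as quoted earlier in this writeup.
baseline = {
    "processing_cost_usd_billion": 38.0,  # US fashion return processing cost per year
    "co2_million_metric_tons": 24.0,      # CO2 from e-commerce returns per year
    "landfill_billion_pounds": 9.5,       # returned products landfilled in 2022
}

# Assumes the reduction scales each baseline linearly.
savings = {name: value * REDUCTION for name, value in baseline.items()}

for name, value in savings.items():
    print(f"{name}: {value:.3f}")
```

The landfill figure comes out to 2.375 billion pounds, which the list above rounds to 2.4.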

And the market is ready. According to research, 85% of apparel retailers either currently use or plan to implement virtual try-on technology. The virtual try-on market is projected to grow from $15 billion in 2025 to $48 billion by 2030.

We're not building for a hypothetical future. We're building for a market that's actively searching for this solution.

What's Next for Mirr.AI

Short-term improvements:

- Higher fidelity avatars with sharper detail and reduced edge artifacts

- Better handling of oversized and loose-fitting garments

- Support for accessories: hats, bags, shoes, jewelry, sunglasses

Medium-term roadmap:

- Swimwear and athletic wear support

- True garment layering (jackets over shirts, coats over sweaters)

- Body measurement estimation for proactive size recommendations

- Mobile app with AR mirror mode

Long-term vision:

- Social features—share outfits with friends for feedback before purchasing

- Integration with retailer inventory systems for real-time availability

- Cross-brand outfit building from a unified interface

Mirr.AI: Try before you buy—from anywhere on the web.

Because the best way to reduce returns isn't stricter policies. It's giving people confidence before they click "Add to Cart."

Built With

- flask

- gemini

- google-cloud

- mongodb

- node.js

- plasmo

- react

- typescript

- vertex-ai
