Inspiration

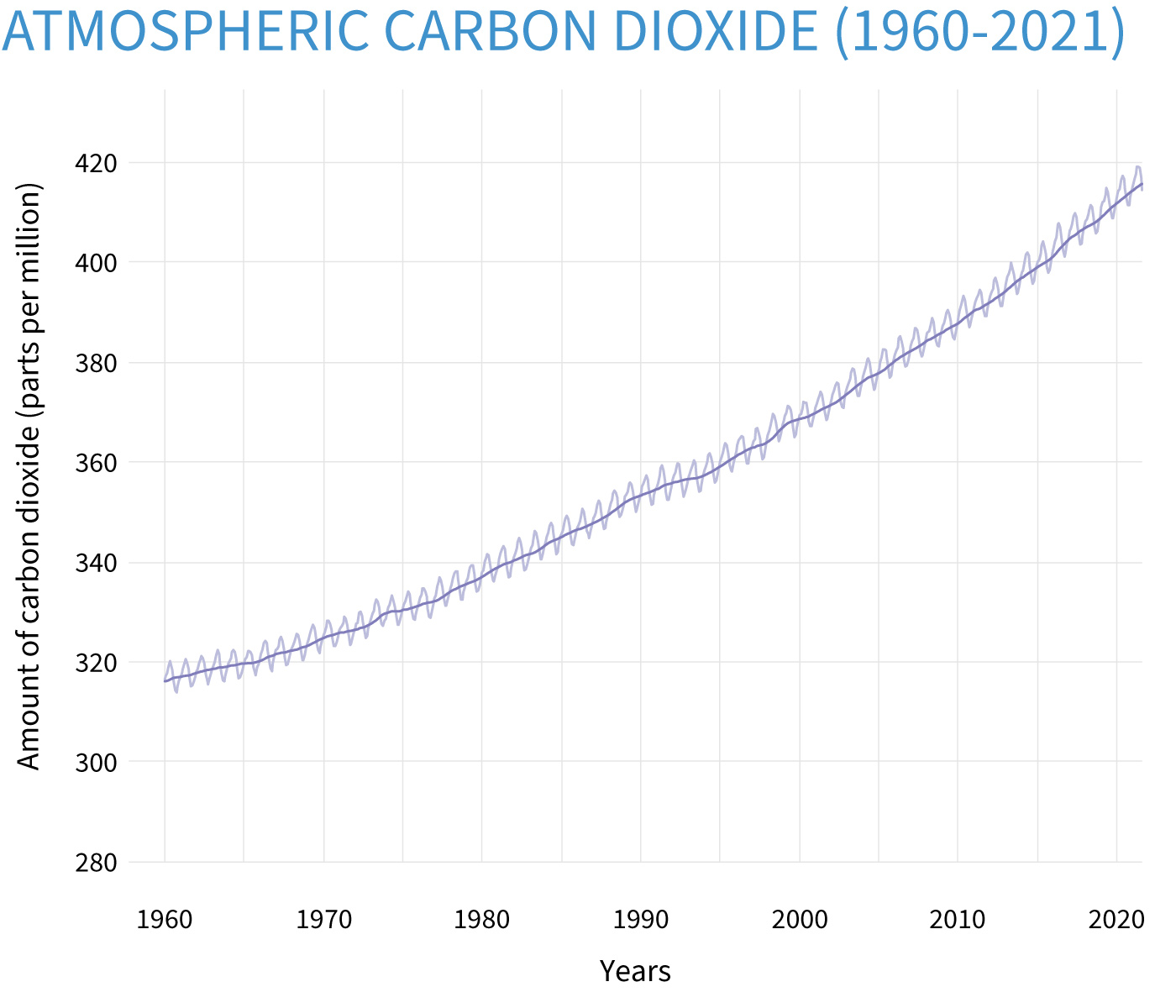

Nowadays, people depend on their smartphones wherever they are. That dependence can lead to good or bad outcomes, depending on how we, as the agents of creativity, steer smartphone usage. Meanwhile, based on analysis from NOAA’s Global Monitoring Lab, the global average atmospheric carbon dioxide was 414.72 parts per million (“ppm” for short) in 2021, setting a new record high despite the continued economic drag from the COVID-19 pandemic.

Trees are natural carbon capture and storage machines: they absorb carbon dioxide (CO2) from the atmosphere through photosynthesis and then lock it up for centuries. That is why planting trees is touted as one of the key solutions to saving the planet.

Combining these facts, I made a campaign that uses Snapchat AR technology, which is accessible anytime and anywhere, to show people in a fun way how important planting trees is. Hopefully, people become more aware of the importance of planting trees when they use the lens.

What it does

It simulates planting and growing trees in the real world with the help of AR technology. The lens aims to get people used to planting and growing their own trees in the real world to help remove carbon dioxide from the air.

Usage flow:

- Activate the EZ Plant lens.

- Click anywhere to start planting. A pile of dirt will spawn at the location you clicked.

- Click anywhere to shoot fertilizer. After you place the pile of dirt, you will be prompted to aim the fertilizer at it and wait for 3 seconds.

- Click anywhere to shoot water. Aim the water at your plant to make it bigger. Congratulations, you've just contributed to saving our planet.

- Repeat those steps and plant as many trees as you want :)
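The usage flow above can be read as a small state machine. The following is only a sketch in plain JavaScript, not the lens's actual script: the stage identifiers, the `REQUIRED_TOOL` mapping, and the `createPlant` and `applyTool` helpers are hypothetical names introduced for illustration, assuming that fertilizer advances the pile and water advances the small plant.

```javascript
// Growth stages, in order. The write-up names them "pile", "small plant",
// and "big plant"; the identifiers below are assumed for illustration.
const STAGES = ["pile", "smallPlant", "bigPlant"];

// Assumed mapping from the usage flow: which tool advances which stage.
const REQUIRED_TOOL = {
  pile: "fertilizer",
  smallPlant: "water",
};

// A freshly planted tree starts as a pile of dirt.
function createPlant() {
  return { stage: "pile" };
}

// Returns the plant in its next stage if the right tool was used,
// otherwise returns the plant unchanged (wrong tool or fully grown).
function applyTool(plant, tool) {
  if (REQUIRED_TOOL[plant.stage] !== tool) {
    return plant;
  }
  const nextStage = STAGES[STAGES.indexOf(plant.stage) + 1];
  return { stage: nextStage };
}
```

Keeping the transition rules in one table makes it easier to add more growth steps later without touching the rest of the logic.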

How I built it

I used several great Lens Studio features, such as World Mesh Detection, Tap to Spawn, Raycast, and VFX Particle, among others.

- World Mesh Detection was used to analyze the real-world environment and find where objects can be spawned in the lens.

- Tap to Spawn is a template that I used to instantiate new objects in the lens, placed on the real-world environment mapped by World Mesh Detection.

- Raycast detects an instantiated object in front of the camera. Once the raycast detects the object, it sends a trigger to update the plant mesh based on the current state (pile, small plant, or big plant).

- VFX Particle was used to animate the fertilizer and water shooters.
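To illustrate the idea behind the raycast step, here is a minimal sketch of a hit test in plain JavaScript. It is a stand-in, not the Lens Studio raycast API: it assumes each planted object is approximated by a bounding sphere, and the `raySphereHit` and `pickNearestPlant` functions and the plant object shape are hypothetical.

```javascript
function dot(a, b) {
  return a.x * b.x + a.y * b.y + a.z * b.z;
}

// Distance along the ray to the sphere, or null on a miss.
// `direction` is assumed to be a unit vector.
function raySphereHit(origin, direction, center, radius) {
  const oc = {
    x: origin.x - center.x,
    y: origin.y - center.y,
    z: origin.z - center.z,
  };
  const b = dot(oc, direction);
  const c = dot(oc, oc) - radius * radius;
  const discriminant = b * b - c;
  if (discriminant < 0) {
    return null; // the ray passes beside the sphere
  }
  const root = Math.sqrt(discriminant);
  const near = -b - root;
  if (near >= 0) {
    return near;
  }
  const far = -b + root; // origin is inside the sphere
  return far >= 0 ? far : null;
}

// Returns the closest plant the camera ray points at, or null.
function pickNearestPlant(origin, direction, plants) {
  let best = null;
  let bestDistance = Infinity;
  for (const plant of plants) {
    const distance = raySphereHit(origin, direction, plant.center, plant.radius);
    if (distance !== null && distance < bestDistance) {
      best = plant;
      bestDistance = distance;
    }
  }
  return best;
}
```

In the lens itself, the ray would start at the camera and point along its view direction, and the returned plant would be the one whose mesh gets updated.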

Challenges I ran into

Of course, I ran into a lot of challenges while developing this lens. A few of them were detecting an instantiated object, making VFX particles for the fertilizer and water shooters, dealing with the global state, creating a delay after detecting an instantiated object, and fixing the many bugs that I also count as challenges.
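One way to approach the delay after detection is a dwell timer that fires only after the object has been aimed at continuously for a set time. The sketch below is plain JavaScript under stated assumptions, not the code used in the lens: `createDwellTrigger` is a hypothetical helper, and the caller is assumed to pass in whether the raycast currently hits the plant, along with the time since the last frame.

```javascript
// Creates an update function to call once per frame.
// `holdSeconds` is how long the aim must be held (3 in the usage flow).
// `onTrigger` runs once each time the hold completes.
function createDwellTrigger(holdSeconds, onTrigger) {
  let elapsed = 0;
  let fired = false;

  return function update(isAimedAtTarget, deltaSeconds) {
    if (!isAimedAtTarget) {
      // Losing the target resets the timer and re-arms the trigger.
      elapsed = 0;
      fired = false;
      return;
    }
    elapsed += deltaSeconds;
    if (!fired && elapsed >= holdSeconds) {
      fired = true; // avoid firing again on every later frame
      onTrigger();
    }
  };
}
```

The `fired` flag matters here: without it, the plant would keep advancing on every frame once the hold time has passed.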

Accomplishments that I am proud of

This is my very first time using Lens Studio, and I am very proud that I was able to learn it and actually build something with it. One of the moments I'm proudest of is when I finally figured out how to use a raycast to detect the instantiated object and update its mesh using a global state. I had fun using Lens Studio to build the EZ Plant lens.

What I learned

I learned many things while developing the EZ Plant lens. First of all, I learned how to use Lens Studio to build interactive and fun augmented reality apps without having to know the low-level details of AR. It opened up a lot of possibilities for AR apps that actually solve real-world problems in a fun way.

What's next for EZ Plant

I have a lot of plans for the next stage of development of the EZ Plant lens. Here are the main points:

- Add geotagging for objects so that planted trees persist every time I load the EZ Plant lens

- Add more tree variations

- Add more steps to grow the plant

- Collaborative Plant

- Improve performance

Built With

- javascript

- lens

- snapchat

- studio
