Eye5G

Eye5G is an experimental aid for people with visual impairments featuring low-latency, real-time object detection using 5G Edge Computing. Source code and more details available at: https://github.com/SilentByte/eye5g

Website: https://eye5g.silentbyte.com

Inspiration

An estimated 100 million people worldwide have moderate or severe distance vision impairment or blindness [1]. It is important to understand that blindness is a spectrum [2] and each person's experience is unique. Visual impairments include blurry vision, tunnel vision, difficulties with depth perception or object detection, etc. Some people are left with only light perception or are completely blind.

We decided to investigate ways to alleviate the effects of vision impairment and to develop a system for submission to this challenge.

What it does

In consultation with a doctor, we have created a system that is able to detect 80 different everyday objects (indoors & outdoors) in real-time and announce them verbally. Eventually, through a prototype wearable IoT device, visual feedback can be given in the form of light flashes. The following points should be addressed:

The system should...

- ...be able to detect a range of objects

- ...be customizable to address varying needs

- ...provide real-time feedback, verbal and visual

- ...be simple & convenient to use

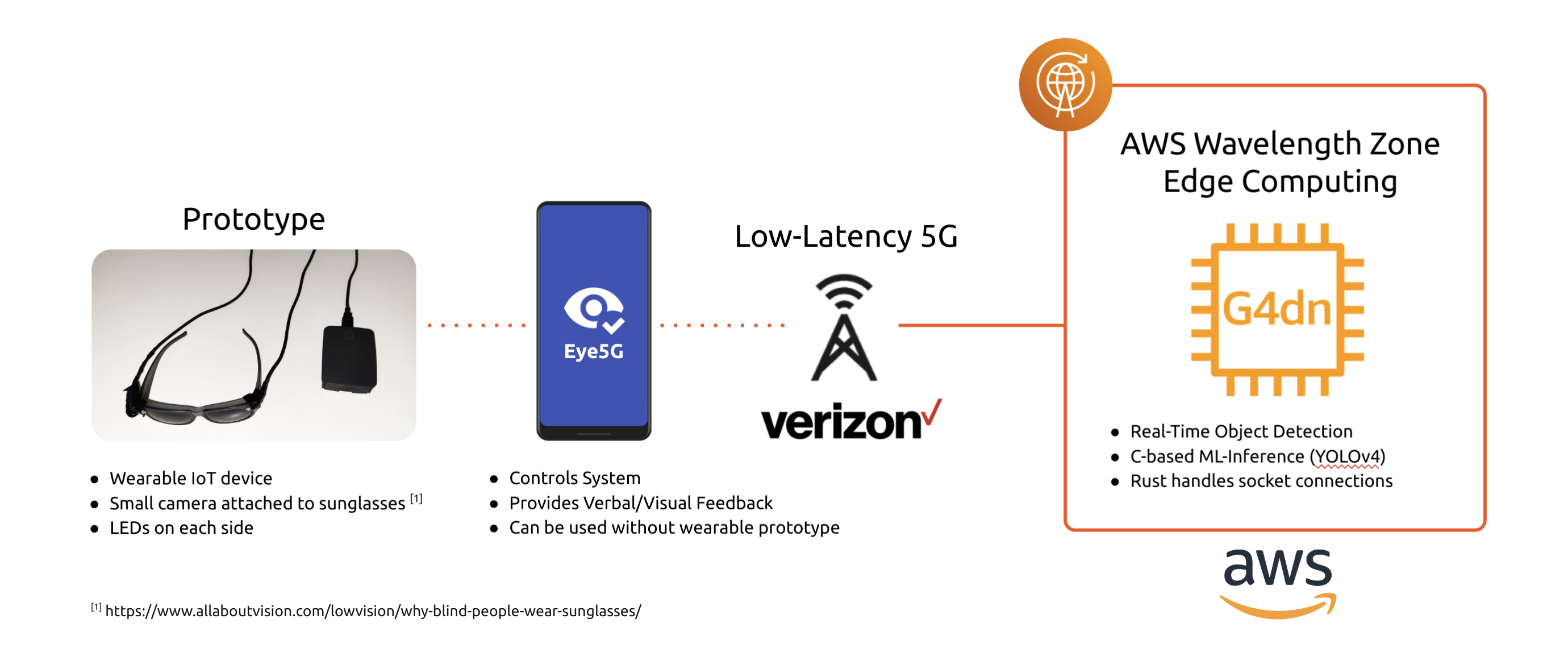

This lead to the architectural overview below:

The optional prototype smart-glasses with camera and LED attachments are intended to be controlled by an Android app. The app forwards a video stream over 5G using the Verizon network to an EC2 G4dn instance sitting in an AWS Wavelength zone. The EC2 instance performs real-time object detection and sends the results back to the phone which then announces the objects to the user verbally.

For our use case, AWS Wavelength is a good fit: offloading processing to the edge reduces battery usage on the devices and lets us deploy a powerful and accurate ML model with high performance.

How we built it

Back-End ML Inference

The back-end of the system is designed to run inside an AWS Wavelength Zone on an EC2 G4 instance optimized for Machine Learning. Incoming connections are handled by a WebSocket server written in Rust that decodes received frames and forwards them to the ML model. For object detection, we have incorporated the excellent C-based Darknet & YOLOv4.

By utilizing the GPUs available on EC2 G4 instances, we are able to perform real-time object detection in ~0.05 seconds per frame.
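As a quick sanity check on what that figure means for throughput, the arithmetic can be sketched in Python. The function name is illustrative, and the calculation assumes frames are processed one at a time with no batching or pipelining; network transfer and frame decoding are not included.

```python
def max_frames_per_second(seconds_per_frame: float) -> float:
    """Upper bound on throughput when frames are processed one after another."""
    if seconds_per_frame <= 0:
        raise ValueError("seconds_per_frame must be positive")
    return 1.0 / seconds_per_frame


# ~0.05 s per frame is the inference time quoted above.
fps = max_frames_per_second(0.05)
print(f"{fps:.0f} frames per second")
```

Under these assumptions, inference alone allows roughly 20 frames per second.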

Android App

The user-facing part of the system is an Android app that accesses the camera and forwards the video feed to the back-end server for real-time object detection. Frames are properly resized and compressed to decrease bandwidth requirements.
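The resizing step can be illustrated with a small calculation. This is a minimal sketch, not the app's actual code: the function name and the 608-pixel limit are assumptions chosen for illustration, since the target resolution is not stated here.

```python
def fit_within(width: int, height: int, max_side: int) -> tuple[int, int]:
    """Scale (width, height) down so the longer side is at most max_side,
    preserving the aspect ratio. Frames that already fit are left unchanged."""
    if width <= 0 or height <= 0 or max_side <= 0:
        raise ValueError("dimensions must be positive")
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    scale = max_side / longest
    # Round to the nearest pixel, but never collapse a side to zero.
    return max(1, round(width * scale)), max(1, round(height * scale))


# A 1920x1080 camera frame limited to a hypothetical 608-pixel longest side.
new_w, new_h = fit_within(1920, 1080, 608)
pixel_ratio = (1920 * 1080) / (new_w * new_h)
print(new_w, new_h, f"about {pixel_ratio:.1f}x fewer pixels before compression")
```

Shrinking the frame before compressing it reduces the number of pixels that have to be encoded and sent, which is where most of the bandwidth saving comes from.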

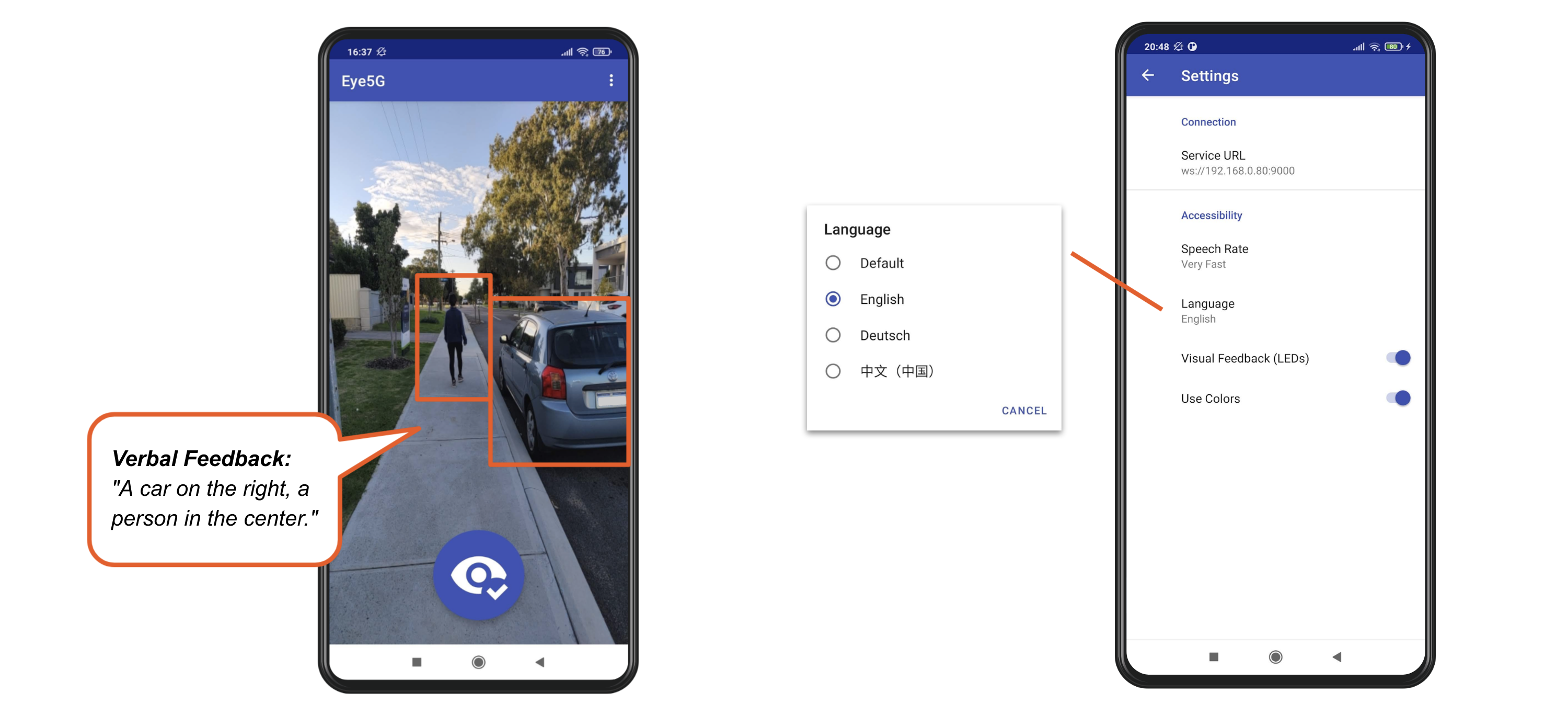

Detected objects returned by the server are then prioritized and grouped. For example, a car is more important and potentially more dangerous to the user than a person. Such objects are announced first and will eventually affect the blink-rate/colors of the LEDs when using the wearable IoT device. When scanning the scene illustrated below, the phone will verbally inform the user: "A car on the right, a person in the center."
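The prioritization and grouping described above might look roughly like the following. This is a hedged sketch rather than the app's implementation: the `Detection` type, the priority values, and the left/center/right boundaries at one third and two thirds of the frame width are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical priorities: a lower number is announced earlier.
PRIORITY = {"car": 0, "person": 1}
DEFAULT_PRIORITY = 99


@dataclass(frozen=True)
class Detection:
    label: str
    x_center: float  # horizontal center, normalized: 0.0 (left) to 1.0 (right)


def location_of(x_center: float) -> str:
    """Map a normalized horizontal position to a rough location phrase."""
    if x_center < 1 / 3:
        return "on the left"
    if x_center > 2 / 3:
        return "on the right"
    return "in the center"


def announce(detections: list[Detection]) -> str:
    """Build one sentence, most important objects first, duplicates merged."""
    ordered = sorted(detections, key=lambda d: PRIORITY.get(d.label, DEFAULT_PRIORITY))
    phrases: list[str] = []
    for d in ordered:
        phrase = f"a {d.label} {location_of(d.x_center)}"
        if phrase not in phrases:  # group repeated label/location pairs
            phrases.append(phrase)
    if not phrases:
        return ""
    sentence = ", ".join(phrases) + "."
    return sentence[0].upper() + sentence[1:]


print(announce([Detection("person", 0.5), Detection("car", 0.9)]))
```

For simplicity the sketch always uses the article "a" and merges identical label/location pairs instead of counting them; a fuller version would handle "an" and plurals.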

To address the needs of people with different types and degrees of visual impairment, the app features accessibility settings such as the speech rate (how fast object announcements are spoken), the language to use (currently English, German, and Chinese are supported), and settings related to the visual feedback that will be used once integration with the wearable device is established.

Challenges we ran into

Video Encoding

As we examined a few different approaches to streaming video, including RTSP, we discovered that available implementations were buffering frames to reduce lag/stutter. Unfortunately, this generally resulted in a delay of about two seconds (depending on buffer size), which is too long for our use case. Reducing the buffer size would inevitably result in noticeable compression artefacts that negatively affected the object detection. We eventually decided to resize and compress frames manually and were able to achieve great results.
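The relationship between buffer size and delay is simple arithmetic, sketched below. The frame rate and buffer size are illustrative assumptions, not measurements taken from the streaming implementations mentioned above.

```python
def buffering_delay_seconds(buffered_frames: int, frames_per_second: float) -> float:
    """Delay added by a player that waits for its buffer to fill before playback."""
    if frames_per_second <= 0:
        raise ValueError("frames_per_second must be positive")
    return buffered_frames / frames_per_second


# Assumed values: a 60-frame buffer on a 30 fps stream.
delay = buffering_delay_seconds(60, 30.0)
print(f"{delay:.1f} s of added delay")
```

With these assumed numbers the buffer alone accounts for a two-second delay, which is why sending individually compressed frames is attractive for a real-time aid.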

Testing

One of the main challenges we ran into was testing the system. AWS Wavelength is currently only available in select cities in the United States and can only be accessed through the Verizon network. As we are based in Perth, Australia, we are far away from the AWS Wavelength Zones and thus experienced high latency. Access to the Nova Testing Platform, kindly provided by Verizon for the duration of this challenge, was helpful and allowed us to test the Android app in a 5G environment that is able to access AWS Wavelength.

For general testing during development, we mainly resorted to using a Local Area Network environment and deployed onto a test system running on a standard AWS EC2 instance in the Asia Pacific region. This turned out to be a good compromise because once the system is running properly in a regular AWS region, it can easily be deployed into an AWS Wavelength zone.

Accomplishments that we're proud of

We are excited that we managed to get accurate real-time object detection working reliably and that we are able to announce objects using text-to-speech in different languages tailored to different needs. We hope that this app can be useful for people who live with visual impairment.

What we learned

During the course of this project, we had the chance to try out various things that were new to us:

1) AWS Wavelength and 5G technology, which gave us access to ultra-low-latency connections and high bandwidth.

2) Real-time object detection using Machine Learning on GPU-enabled EC2 G4 instances.

3) A deep-dive into video capture and encoding on Android.

What's next for Eye5G

We are aiming to further improve verbal object announcements and make them even smarter. Currently, objects are sorted by priority and grouped by their rough location. While this works well, it can be rather chatty. Implementing object persistence (keeping track of objects across multiple frames as they move) would allow us to reduce noise and make announcements clearer.
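One simple way to approximate object persistence is to remember what was seen in recent frames and stay silent about it until it disappears. The sketch below assumes that approach; the class name, the (label, location) key, and the frame-count timeout are hypothetical and not part of the current app. A real tracker would also match objects by position as they move.

```python
class AnnouncementFilter:
    """Suppress repeat announcements for objects seen in recent frames."""

    def __init__(self, forget_after_frames: int = 3) -> None:
        self.forget_after_frames = forget_after_frames
        self._frames_since_seen: dict[tuple[str, str], int] = {}

    def update(self, current: set[tuple[str, str]]) -> list[tuple[str, str]]:
        """Return only the (label, location) pairs that are new in this frame."""
        new_items = sorted(item for item in current if item not in self._frames_since_seen)
        # Age every known object, then reset the ones visible right now.
        for item in list(self._frames_since_seen):
            self._frames_since_seen[item] += 1
        for item in current:
            self._frames_since_seen[item] = 0
        # Forget objects that have been missing for too long.
        for item, age in list(self._frames_since_seen.items()):
            if age > self.forget_after_frames:
                del self._frames_since_seen[item]
        return new_items


tracker = AnnouncementFilter(forget_after_frames=1)
car = ("car", "right")
person = ("person", "center")
print(tracker.update({car}))          # the car is new
print(tracker.update({car, person}))  # only the person is new
print(tracker.update({car, person}))  # nothing new
```

Only the items returned by `update` would be passed on to text-to-speech, so an object that stays in view is announced once rather than on every frame.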

Also, we would like to improve and properly integrate the wearable IoT device with the app so that they can communicate with each other.

Development & Building

Server & ML Inference

The content in the server/ folder is a Rust project that can be built by running cargo build --release. The binary requires access to the YOLOv4 model weights that can be downloaded here: https://github.com/AlexeyAB/darknet/releases/download/darknet_yolo_v3_optimal/yolov4.weights

The folder contains two convenience scripts:

1) ./download_weights.sh will automatically download the model weights if they have not been downloaded before.

2) ./dev_server.sh automatically downloads the model weights, builds the Rust project using Cargo, and executes the server with a default configuration listening on 0.0.0.0:9000.

The server binary accepts the following flags:

Usage: eye5g_server [--host <host>] [--port <port>] --model-cfg <model-cfg> --model-labels <model-labels> --model-weights <model-weights> [--objectness-threshold <objectness-threshold>] [--class-prob-threshold <class-prob-threshold>]

Eye5G Server.

Options:

--host the hostname on which the server listens.

--port the server's port.

--model-cfg the model configuration file (*.cfg).

--model-labels the label definition file (*.names).

--model-weights the model weights file (*.weights).

--objectness-threshold the objectness threshold (default: 0.9).

--class-prob-threshold the class probability threshold (default 0.9).

--help display usage information
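For illustration, the flags above could be assembled into a command line as follows. This is a sketch only: the file names `yolov4.cfg` and `coco.names` are placeholders (only the file extensions and the `yolov4.weights` download are given here), and the binary path assumes Cargo's default release output directory.

```python
import shlex


def server_command(
    model_cfg: str,
    model_labels: str,
    model_weights: str,
    host: str = "0.0.0.0",
    port: int = 9000,
    binary: str = "./target/release/eye5g_server",  # assumed build output path
) -> list[str]:
    """Assemble an argument list using the flags from the usage text."""
    return [
        binary,
        "--host", host,
        "--port", str(port),
        "--model-cfg", model_cfg,
        "--model-labels", model_labels,
        "--model-weights", model_weights,
    ]


# File names below are placeholders; substitute the paths on your system.
cmd = server_command("yolov4.cfg", "coco.names", "yolov4.weights")
print(shlex.join(cmd))
```

The resulting list can be passed directly to `subprocess.run`, and the two optional threshold flags can be appended in the same way if the defaults need to be changed.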

If you would like to build Eye5G without GPU support, modify Cargo.toml and remove the CUDA and cuDNN features.

Test Client

Once the server is running, it can be tested using the Python test client located at server/test_client/test_client.py. Run python test_client.py test_image.jpg to send the specified test image to the server for inference. The result is returned as JSON and printed in the terminal.

Dependencies

The following dependencies are required to build Eye5G:

- Build essentials

- cmake

- Clang

- NVIDIA CUDA & cuDNN

On AWS EC2 G4, installing nvidia-toolkit simplifies the process.

Android App

The Eye5G app is located in the app/ folder and is set up as an Android Studio project. Once opened in Android Studio, simply build and run the project. Ensure that Gradle has been synchronized correctly and that it has automatically downloaded all dependencies successfully.
