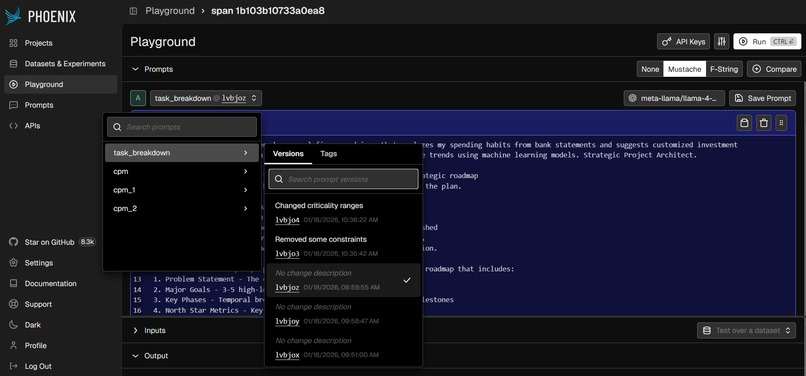

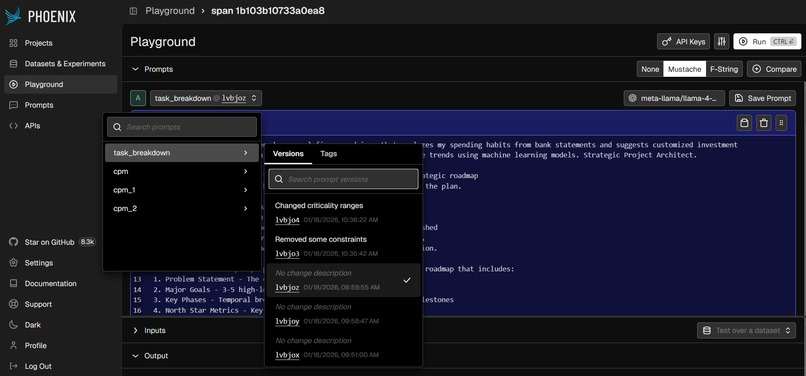

Prompt Engineering using Arize Phoenix Playground

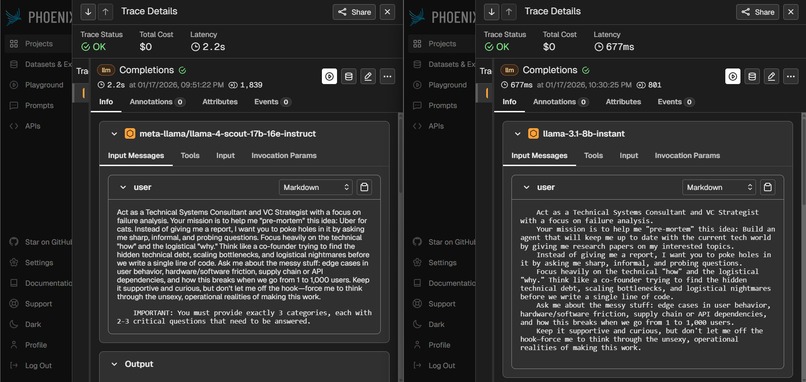

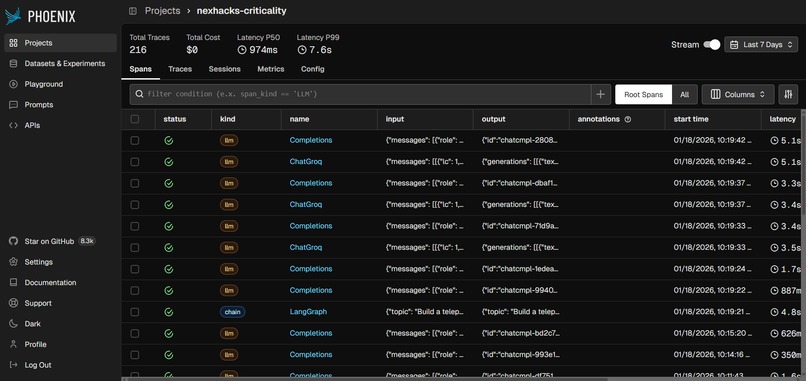

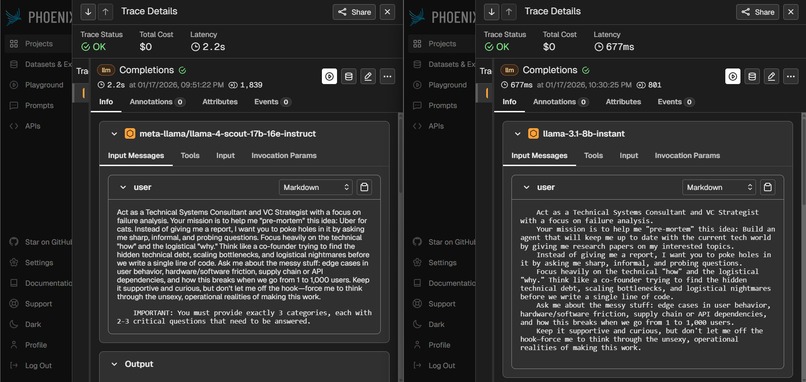

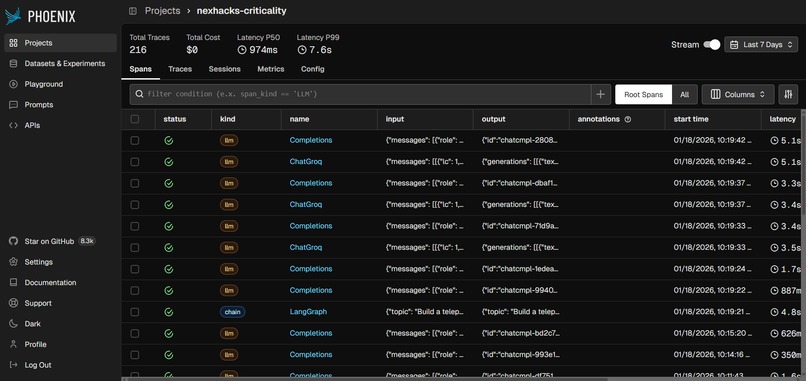

Massively Improved Latency and Token Reduction achieved through Arize Phoenix Tracing

Using the Arize Phoenix Playground effectively during development

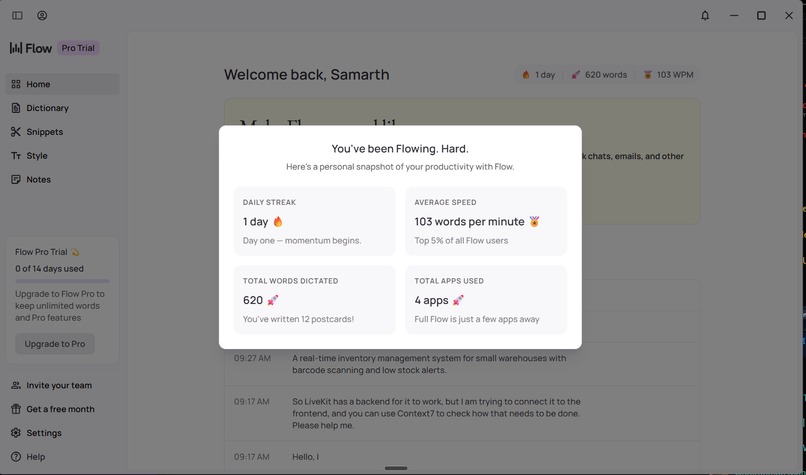

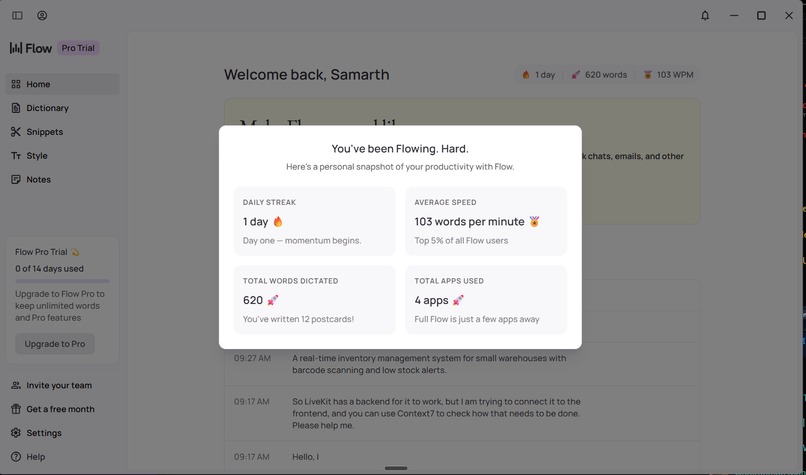

Hitting our Flow State with Wispr Flow

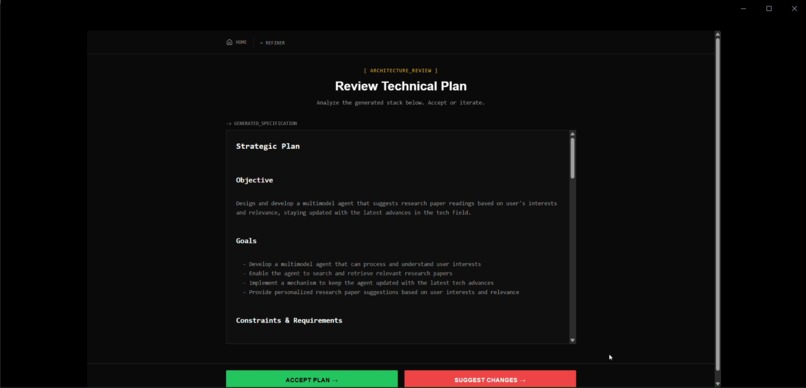

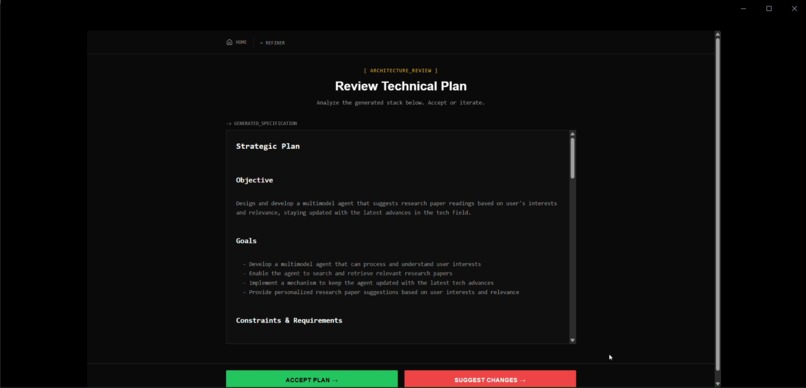

Review Implementation Plan View

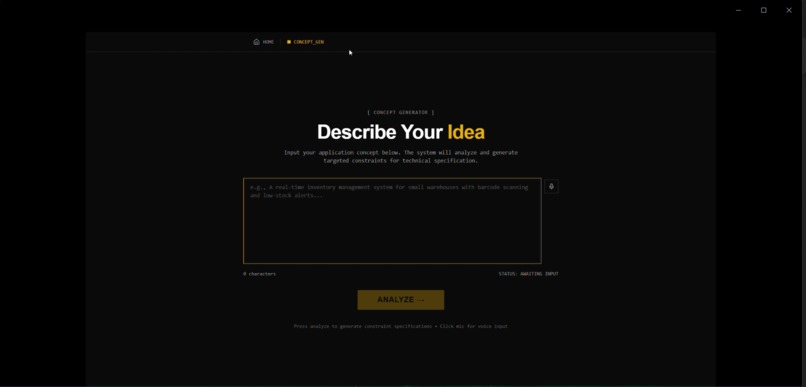

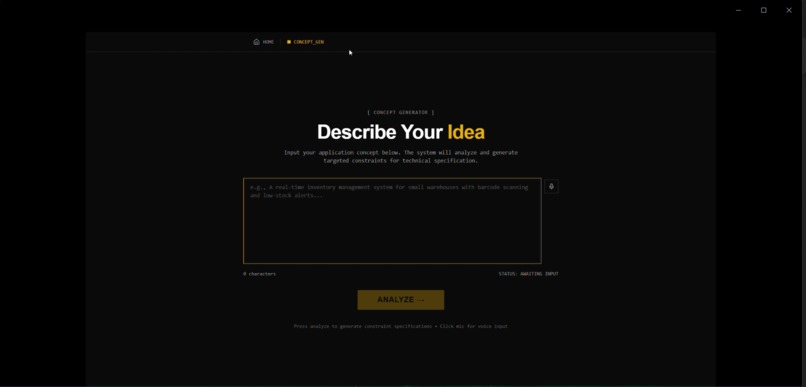

Home Page View

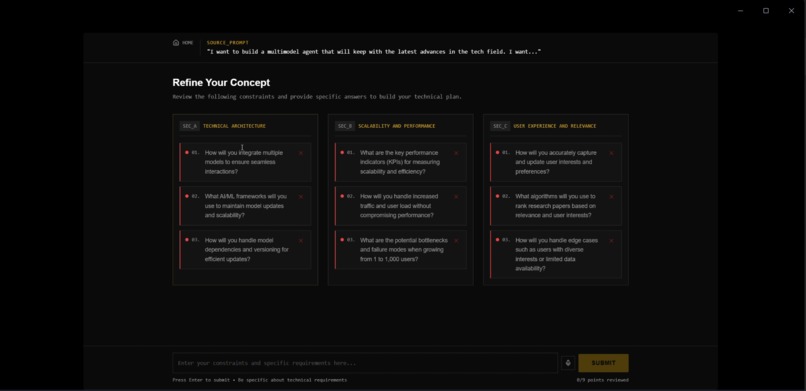

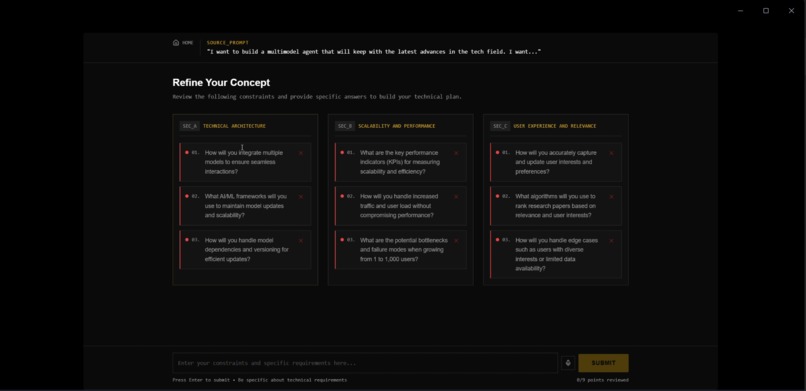

Constraints List View

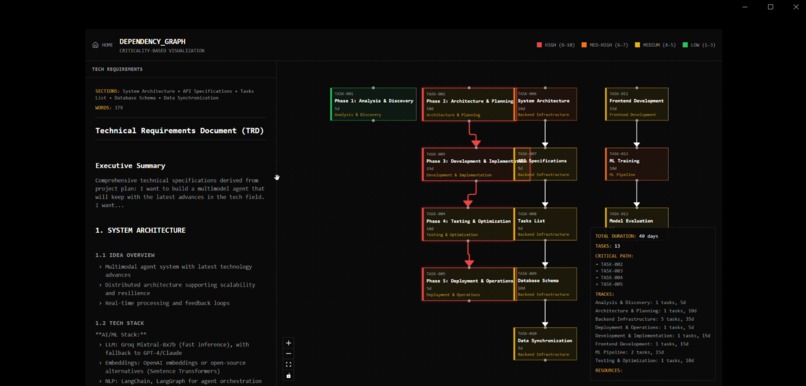

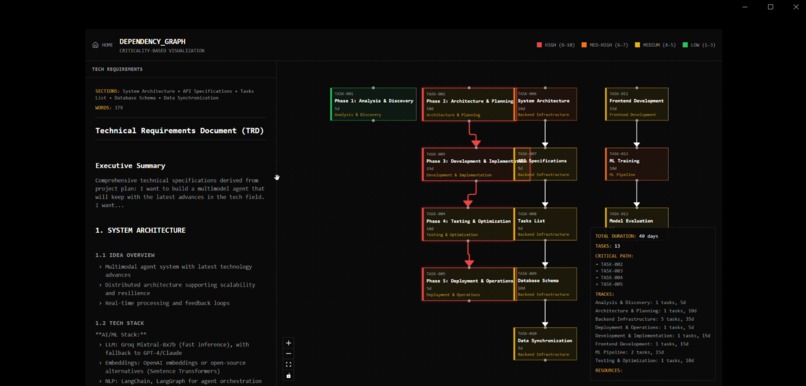

Critical Path Graph View

Eureka: Architect First. Code Second.

Inspiration

"The Saturday Night Crisis."

We have spent the last three years organizing RevolutionUC, watching hundreds of brilliant teams pitch their hearts out. But for every team that demos a polished product, there are three that crash and burn at 3:00 AM on Sunday.

The reason is rarely a lack of coding skill. It is almost always a lack of planning. Teams jump into VS Code immediately, write code for 15 hours, and then realize their database schema doesn't support their feature set or their scope is impossibly wide.

We built Eureka to solve the problem we've seen destroy so many potential winners. We wanted to build the tool we wish every hackathon team (and every engineering lead) had before they wrote a single line of code.

What it does

Eureka is an AI Project Architect that turns raw ideas into rigorous, executable engineering blueprints. It is not a "yes-man" generator. It is a constraint-based system that pushes back on your assumptions.

- Voice-Integrated Brainstorming: Users describe their abstract idea through a natural voice interface powered by LiveKit.

- Architectural Stress Test: Instead of blindly generating code, Eureka identifies gaps in the logic (e.g., "How will you handle real-time auth with that database?") and forces the user to define constraints.

- Tech Stack Validation: Based on the constraints, it recommends a tailored stack (e.g., Next.js + Supabase vs. Python + Redis).

- The Critical Path: The core output is a generated Critical Path Method (CPM) graph. This visualizes the project as a directed acyclic graph (DAG), showing exactly which tasks are dependencies and the optimal order of operations to ship on time.
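To make the "pushes back" behavior concrete, here is a minimal sketch of how plan generation could be gated on unanswered constraints. The `Constraint` and `GateResult` types and the `checkConstraints` function are hypothetical names chosen for illustration; they are not taken from Eureka's codebase.

```typescript
// Hypothetical data model for illustration only; not Eureka's actual schema.
interface Constraint {
  id: string;
  question: string; // e.g. "How will you handle real-time auth with that database?"
  answer?: string;  // filled in once the user has responded
}

interface GateResult {
  ready: boolean;          // true only when every constraint has an answer
  openQuestions: string[]; // questions the user still has to address
}

// Collect every constraint whose answer is missing or blank.
// A plan should only be generated when `ready` is true.
function checkConstraints(constraints: Constraint[]): GateResult {
  const openQuestions = constraints
    .filter((c) => c.answer === undefined || c.answer.trim() === "")
    .map((c) => c.question);
  return { ready: openQuestions.length === 0, openQuestions };
}
```

In a flow like this, the open questions would be sent back to the user (by voice or text) and the check repeated until `ready` is true, rather than letting the model fill the gaps with guesses.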

How we built it

We architected Eureka as a high-performance pipeline connecting multi-agent orchestration, voice intent, and graph theory.

- The Interface: We built the frontend with Next.js and Tailwind CSS, adhering to a strict "Industrial Blueprint" design system to keep the focus on structure.

- Voice Pipeline: We utilized a LiveKit Agent to handle the real-time audio stream. The agent performs low-latency Speech-to-Text (STT) and manages the conversation flow, allowing users to "dump" their brain without typing.

- Reasoning Engine: We used Llama models prompted with strict personas to implement a multi-turn critique loop in which the model must validate constraints before generating the plan.

- Graph Generation: The Task Dependency Graph is rendered using React Flow (@xyflow/react). We used the dagre layout algorithm to automatically organize task nodes into a hierarchical tree based on their dependencies.

- Observability & Latency Tuning: We integrated Arize AI to trace the LLM's decision-making process. Beyond just explainability, we used these traces to identify bottlenecks in the agent hand-off process, allowing us to reduce AI response-to-graph latency.

- Development Velocity: We used Wispr Flow throughout the weekend to dictate documentation and boilerplate code, allowing us to maintain a high development velocity despite the complex UI elements and multi-agent architecture.
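For the Graph Generation step above, the core idea of a hierarchical layout is that a task sits one level below its deepest dependency. The sketch below illustrates only that ranking idea with a simple grid placement. It is a dependency-free stand-in, not dagre's actual algorithm (dagre additionally minimizes edge crossings and computes finer coordinates), and the names `LayoutEdge`, `layeredPositions`, `xGap`, and `yGap` are hypothetical.

```typescript
// Simplified stand-in for a hierarchical layout; NOT dagre's implementation.
interface LayoutEdge {
  source: string; // task that must finish first
  target: string; // task that depends on `source`
}

interface Position {
  x: number;
  y: number;
}

function layeredPositions(
  nodeIds: string[],
  edges: LayoutEdge[],
  xGap = 200, // assumed horizontal spacing between nodes in the same rank
  yGap = 100, // assumed vertical spacing between ranks
): Map<string, Position> {
  // Map each node to the list of nodes it depends on.
  const preds = new Map<string, string[]>();
  for (const id of nodeIds) preds.set(id, []);
  for (const e of edges) preds.get(e.target)?.push(e.source);

  const rank = new Map<string, number>();
  const visiting = new Set<string>();

  // rank(node) = 0 for roots, otherwise 1 + the largest rank among its dependencies.
  const rankOf = (id: string): number => {
    const cached = rank.get(id);
    if (cached !== undefined) return cached;
    if (visiting.has(id)) throw new Error(`cycle detected at ${id}`);
    visiting.add(id);
    let r = 0;
    for (const p of preds.get(id) ?? []) r = Math.max(r, rankOf(p) + 1);
    visiting.delete(id);
    rank.set(id, r);
    return r;
  };

  // Place nodes left to right within each rank, in input order.
  const placedPerRank = new Map<number, number>();
  const positions = new Map<string, Position>();
  for (const id of nodeIds) {
    const r = rankOf(id);
    const col = placedPerRank.get(r) ?? 0;
    placedPerRank.set(r, col + 1);
    positions.set(id, { x: col * xGap, y: r * yGap });
  }
  return positions;
}
```

The resulting positions have the `{ x, y }` shape that a graph renderer typically expects for each node, which is why a layout pass like this usually runs once before the nodes are handed to the view.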

Challenges we ran into

1. The "Yes-Man" Problem: Initially, the LLM just wanted to agree with the user. If the user said, "I want to build Facebook in a weekend," the AI would say, "Great! Here's a plan." We had to rigorously engineer the system prompts to force the AI to be critical and identify scope creep.

2. Visualizing Dependencies: Generating a text list of tasks is easy; generating a mathematically valid dependency graph is hard. We had to wrestle with the dagre auto-layout engine to ensure the nodes didn't overlap and that the "Critical Path" was visually distinct from non-blocking tasks.
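"Mathematically valid" here means, at minimum, that every dependency refers to a real task and that the graph has no cycles. One common way to check both is Kahn's algorithm for topological sorting, sketched below. This is an illustration under assumptions, not the project's actual validation code: the `PlannedTask` shape and the `topologicalOrder` function are hypothetical, and task ids are assumed to be unique.

```typescript
// Hypothetical task shape; assumes every id is unique.
interface PlannedTask {
  id: string;
  dependsOn: string[]; // ids of tasks that must be completed first
}

// Returns a valid execution order, or null if the task list is not a valid DAG
// (a dependency points at an unknown task, or the dependencies form a cycle).
function topologicalOrder(tasks: PlannedTask[]): string[] | null {
  const ids = new Set(tasks.map((t) => t.id));
  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();
  for (const t of tasks) {
    indegree.set(t.id, 0);
    dependents.set(t.id, []);
  }

  for (const t of tasks) {
    for (const dep of t.dependsOn) {
      if (!ids.has(dep)) return null; // dangling reference
      indegree.set(t.id, (indegree.get(t.id) ?? 0) + 1);
      dependents.get(dep)?.push(t.id);
    }
  }

  // Start with tasks that have no prerequisites, then release their dependents.
  const queue = tasks.filter((t) => indegree.get(t.id) === 0).map((t) => t.id);
  const order: string[] = [];
  while (queue.length > 0) {
    const id = queue.shift() as string;
    order.push(id);
    for (const next of dependents.get(id) ?? []) {
      const remaining = (indegree.get(next) ?? 0) - 1;
      indegree.set(next, remaining);
      if (remaining === 0) queue.push(next);
    }
  }

  // If a cycle exists, its tasks never reach indegree 0 and are left out.
  return order.length === tasks.length ? order : null;
}
```

Running a check like this on model output before rendering means a malformed plan can be rejected or regenerated instead of being passed to the layout engine.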

Accomplishments that we're proud of

- Real-Time Voice Latency: Achieving a "near-human" response time with the LiveKit integration. It feels like talking to a co-founder, not a bot.

- The Critical Path Algorithm: We successfully implemented the sorting logic to calculate the longest path in the DAG. This allows us to determine the most critical tasks required to complete and ship the project on time.

- DevTools Fit: Building a tool that we actually want to use. We are already using Eureka to plan our next side project.
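As a rough illustration of the longest-path calculation mentioned in the list above, the sketch below computes each task's earliest finish time and walks back from the latest-finishing task to recover the critical path. It is a minimal sketch, not the team's implementation: the `TimedTask` shape, the per-task `hours` estimate, and the `criticalPath` function are assumptions made for the example.

```typescript
// Hypothetical task shape; the `hours` estimate is an assumption for this example.
interface TimedTask {
  id: string;
  hours: number;       // estimated duration of the task
  dependsOn: string[]; // ids of tasks that must finish first
}

interface CriticalPathResult {
  totalHours: number; // length of the longest dependency chain
  path: string[];     // task ids on that chain, in execution order
}

function criticalPath(tasks: TimedTask[]): CriticalPathResult {
  const byId = new Map<string, TimedTask>();
  for (const t of tasks) byId.set(t.id, t);

  const finish = new Map<string, number>();     // earliest finish time per task
  const via = new Map<string, string | null>(); // predecessor on the longest chain
  const inProgress = new Set<string>();

  // earliestFinish(task) = task.hours + the latest finish among its dependencies.
  const earliestFinish = (id: string): number => {
    const known = finish.get(id);
    if (known !== undefined) return known;
    const task = byId.get(id);
    if (!task) throw new Error(`unknown task: ${id}`);
    if (inProgress.has(id)) throw new Error(`cycle detected at ${id}`);
    inProgress.add(id);
    let start = 0;
    let prev: string | null = null;
    for (const dep of task.dependsOn) {
      const f = earliestFinish(dep);
      if (f > start) {
        start = f;
        prev = dep;
      }
    }
    inProgress.delete(id);
    finish.set(id, start + task.hours);
    via.set(id, prev);
    return start + task.hours;
  };

  // The project ends when the latest-finishing task ends.
  let endId: string | null = null;
  let totalHours = 0;
  for (const t of tasks) {
    const f = earliestFinish(t.id);
    if (f > totalHours) {
      totalHours = f;
      endId = t.id;
    }
  }

  // Walk predecessor links back from the end task to rebuild the path.
  const path: string[] = [];
  let cur: string | null = endId;
  while (cur !== null) {
    path.unshift(cur);
    cur = via.get(cur) ?? null;
  }
  return { totalHours, path };
}
```

Tasks on the returned `path` have no slack: delaying any of them delays the whole project, which is what makes them worth highlighting separately from non-blocking tasks.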

What we learned

- Constraint is Clarity: The more we forced the user to answer hard questions upfront, the higher the quality of the final output.

- Voice is Data-Dense: Users provide about 3x more context when speaking versus typing into a form. LiveKit was essential for capturing the nuance of a complex project idea.

- Observability is Non-Negotiable: With complex agentic workflows, using Arize AI to see why the model failed or succeeded was the only way we could debug the "brain" of the application.

What's next for Eureka!

- Continuous Workflows: Our vision goes beyond static planning to continuous, human-in-the-loop workflows.

- Dynamic Scoping: Imagine real-time scope negotiation, trading features against deadlines dynamically.

- Active Learning: The AI learns from your expertise and preferences to become a better architect with every project.
