EthiFlow AI: Multi-Agent Governance System

Ensuring Responsible AI Governance, Data Privacy, and Ethical AI Usage.

Table of Contents

- Introduction

- Future Enhancements

- Key Features

- Cost Optimization Strategy

- Best Practices in Agent Design

- Dynamic Policy Enforcement

- Comprehensive Auditing & Transparency

- Basic User Role Simulation

- Compliance-Driven Design

- Technical Stack

- Getting Started

- Usage

- Testing Instructions

Note on Resource Optimization: This prototype is intentionally deployed with minimal AWS Lambda resource configurations (e.g., 128MB memory, 3-second timeout) due to hackathon-specific resource constraints. This deliberate choice keeps operating costs low. Because memory and timeout are function configuration settings rather than code, they can be raised in a production environment without any code changes to improve responsiveness and throughput.
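Raising these limits is a configuration call, not a code change. The sketch below assumes boto3's Lambda client, whose `update_function_configuration` accepts `FunctionName`, `MemorySize`, and `Timeout`. The `apply_profile` helper is hypothetical, and so are any function names and production values you pass to it; they are not taken from this project.

```python
from typing import Any, Dict, Iterable, List

# Limits AWS Lambda enforces on these two settings.
MIN_MEMORY_MB, MAX_MEMORY_MB = 128, 10240
MIN_TIMEOUT_S, MAX_TIMEOUT_S = 1, 900


def apply_profile(lambda_client: Any, function_names: Iterable[str],
                  memory_mb: int, timeout_s: int) -> List[Dict[str, Any]]:
    """Set memory and timeout on each function; deployed code is left untouched.

    `lambda_client` is expected to be boto3.client("lambda") or any object
    exposing update_function_configuration with the same keyword arguments.
    """
    if not MIN_MEMORY_MB <= memory_mb <= MAX_MEMORY_MB:
        raise ValueError(f"memory_mb must be between {MIN_MEMORY_MB} and {MAX_MEMORY_MB}")
    if not MIN_TIMEOUT_S <= timeout_s <= MAX_TIMEOUT_S:
        raise ValueError(f"timeout_s must be between {MIN_TIMEOUT_S} and {MAX_TIMEOUT_S}")
    responses = []
    for name in function_names:
        # One configuration update per function; the handler package is not redeployed.
        responses.append(lambda_client.update_function_configuration(
            FunctionName=name, MemorySize=memory_mb, Timeout=timeout_s))
    return responses
```

With boto3 installed, a call such as `apply_profile(boto3.client("lambda"), ["prompt_guard", "llm_agent"], memory_mb=512, timeout_s=30)` would move two (hypothetically named) functions to a larger profile.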

Note on Policy Management UI: In this prototype version, we have developed a comprehensive Policy Management screen to allow administrators to view and edit policies in real-time. Due to time and resource constraints of this solo-developer hackathon, we haven't fully integrated this UI with the backend APIs yet. However, the underlying logic and the design of dynamic policy enforcement have been fully developed and are accessible via the API endpoints. This demonstrates our vision for a complete, user-friendly AI governance platform.

Introduction

In the rapidly evolving landscape of Artificial Intelligence, ensuring compliance, data privacy, and ethical usage is paramount. EthiFlow AI is a cutting-edge prototype of a Multi-Agent AI Governance System designed to address these critical challenges. Developed as a hackathon project, its primary goal is to demonstrate a robust and transparent framework for managing AI interactions through dynamic policy enforcement and comprehensive auditing.

Future Enhancements

EthiFlow AI is designed with scalability and extensibility in mind. Here are some key areas for future development:

- Advanced AI/NLP Capabilities: Full integration with Amazon Bedrock LLMs for generative capabilities, and more sophisticated PII/PHI detection and handling using advanced NLP services.

- Dedicated User Interface: A dedicated UI for comprehensive policy creation and management.

- Strengthened Security Posture & User Management: Implement robust secret management (e.g., AWS Secrets Manager) for sensitive credentials, enhance input validation to mitigate advanced attack vectors, and develop a full-fledged user authentication and authorization system.

- Enhanced System Resilience: Implement advanced retry mechanisms with exponential backoff, circuit breaker patterns, and Dead Letter Queues (DLQ) for robust error handling and fault tolerance in distributed environments.

- Optimized Performance & Scalability: Integrate asynchronous processing with message queues (e.g., SQS) for non-blocking operations, implement caching strategies for frequently accessed data (e.g., policies), and explore advanced infrastructure scaling solutions.

- Advanced Observability: Introduce distributed tracing (e.g., OpenTelemetry) to monitor request flows across agents, establish comprehensive metrics and dashboards for real-time system health monitoring, and provide real-time streaming with advanced analytics for audit logs.

- Improved Maintainability & Testability: Develop a comprehensive suite of automated unit and integration tests, and refine code organization for clearer interface contracts and modularity.

- Dynamic Configuration Management: Explore solutions like AWS AppConfig for dynamic updates of application configurations without service restarts.

Key Features

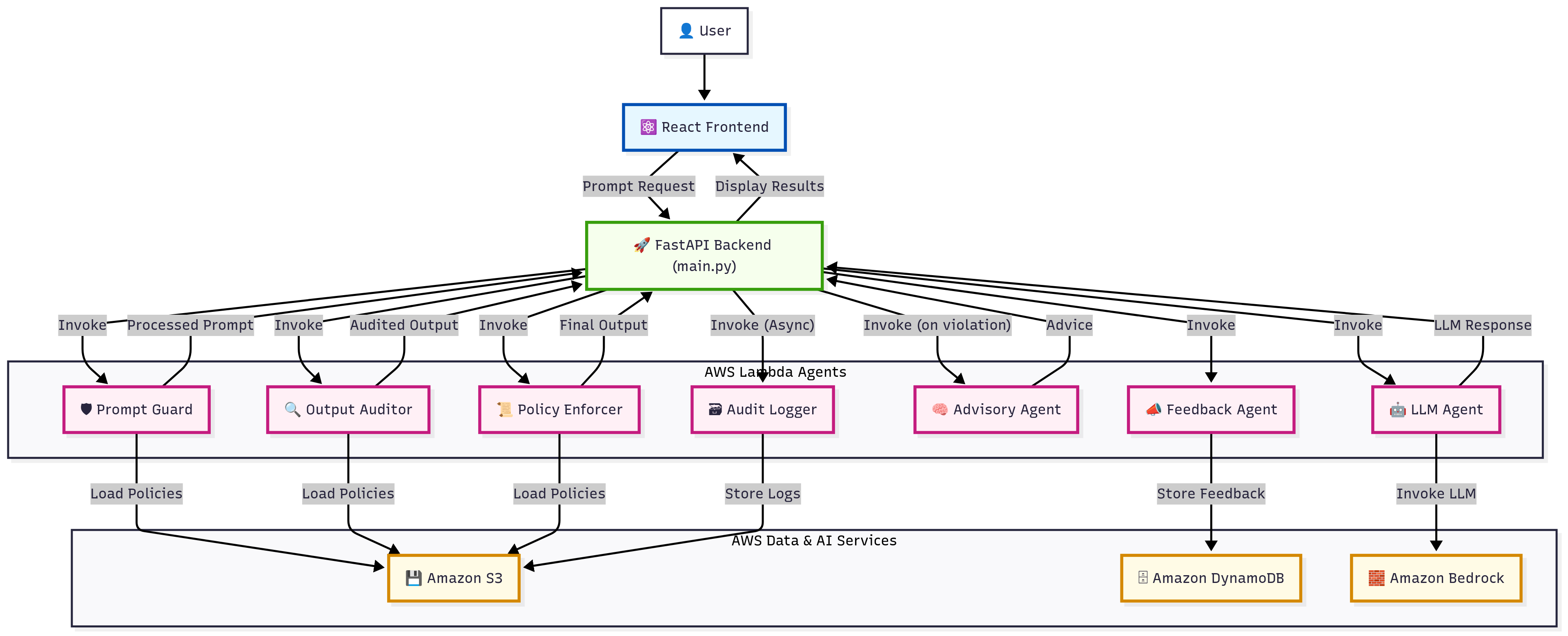

EthiFlow AI is built upon a modular multi-agent architecture, where each agent specializes in a specific aspect of AI governance:

- Prompt Guard: Filters and validates incoming user prompts against predefined policies, detecting and mitigating risks such as offensive language or Personally Identifiable Information (PII).

- LLM Agent: Directly invokes Large Language Models (LLMs) on Amazon Bedrock to generate responses based on processed prompts.

- Output Auditor: Inspects and processes the LLM-generated outputs, performing crucial tasks like PII detection and redaction, and flagging unverified or sensitive information.

- Policy Enforcer: Applies a layer of governance rules and controls to the final processed outputs, ensuring strict adherence to organizational and regulatory compliance frameworks.

- Advisory Agent: Provides transparent, contextual, and actionable guidance to users when their prompts or generated outputs are blocked, modified, or flagged due to policy violations.

- Audit Logger: A dedicated agent responsible for meticulously recording all significant activities and decisions made by the agents for comprehensive auditing and compliance reporting.

- Feedback Agent: Collects user feedback on the system's performance and policy enforcement, facilitating continuous improvement and user satisfaction.

Cost Optimization Strategy

EthiFlow AI is designed with a strong emphasis on cost-effectiveness, especially crucial in a hackathon context where resources are self-funded. While the system is fully scalable, the current prototype leverages minimal AWS Lambda configurations:

- Minimal Lambda Resources: Lambda functions are configured with the lowest possible memory (128MB) and a short timeout (3 seconds). This significantly reduces operational costs.

- Performance vs. Cost Trade-off: This configuration might introduce higher latency, particularly due to cold starts and network overhead between microservices. However, this is a deliberate trade-off to demonstrate cost optimization. In a production environment, these resources can be easily scaled up to meet performance demands without code changes.

- Strategic Service Selection: Utilizing cost-effective services like Amazon S3 for storage and DynamoDB with on-demand capacity for feedback further optimizes the overall solution cost.
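Lambda compute is billed in GB-seconds (configured memory in GB × billed duration in seconds), plus a per-request charge, which is why the 128MB setting matters. A minimal sketch of that arithmetic follows. Prices are parameters because they vary by region and architecture; look up current AWS pricing rather than relying on any figure here. Free tier, storage, and data transfer are ignored.

```python
def gb_seconds(memory_mb: int, billed_duration_ms: int, invocations: int) -> float:
    """Compute usage in GB-seconds; Lambda counts 1 GB as 1024 MB."""
    return (memory_mb / 1024) * (billed_duration_ms / 1000) * invocations


def estimate_lambda_cost(memory_mb: int, billed_duration_ms: int, invocations: int,
                         price_per_gb_second: float,
                         price_per_million_requests: float) -> float:
    """Rough compute + request cost for one function."""
    compute = gb_seconds(memory_mb, billed_duration_ms, invocations) * price_per_gb_second
    requests = (invocations / 1_000_000) * price_per_million_requests
    return compute + requests
```

For 1,000 invocations that each bill 500 ms, 128MB consumes 62.5 GB-seconds while 1024MB consumes 500, eight times as much at the same duration. Lambda also allocates CPU in proportion to memory, so duration often drops when memory rises; the trade-off is worth measuring rather than assuming.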

The system operates with a clear separation of concerns, following a microservices pattern:

- The React Frontend provides the user interface for submitting prompts and viewing results.

- User requests are sent to the FastAPI Backend (`main.py`), which acts as the central API gateway.

- The FastAPI backend invokes specialized AWS Lambda functions (e.g., Prompt Guard, LLM Agent, Output Auditor, Policy Enforcer) via their Function URLs in a sequential workflow.

- Each Lambda function performs its specific governance task, interacting with Amazon S3 for policy retrieval and Amazon DynamoDB for metadata/state management.

- All significant events are logged to the Audit Logger Lambda, which then stores them in Amazon S3.

- When policies are violated, the Advisory Agent Lambda is invoked to provide contextual guidance.

- The final processed output and the detailed audit trail are returned to the FastAPI backend, which then sends them back to the React frontend for display.
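The sequential flow described above can be sketched as a small orchestrator. This is an illustration under assumptions, not the project's `main.py`: the agent keys reuse the directory names from `src/agents/`, while the JSON fields (`prompt`, `text`, `status`, `advice`) and the `success`/`blocked` status values are hypothetical. Agents are reached through an injected `invoke` function, so the HTTP transport (a JSON POST to each Function URL, assuming auth type NONE; URLs protected by AWS_IAM require SigV4-signed requests) can be swapped for a stub in tests.

```python
import json
import logging
import urllib.request
from typing import Any, Callable, Dict, List

Payload = Dict[str, Any]
Invoke = Callable[[str, Payload], Payload]
log = logging.getLogger("orchestrator")


def http_invoker(urls: Dict[str, str], timeout_s: float = 10.0) -> Invoke:
    """Return invoke(agent, payload) that POSTs JSON to that agent's Function URL."""
    def invoke(agent: str, payload: Payload) -> Payload:
        request = urllib.request.Request(
            urls[agent],
            data=json.dumps(payload).encode("utf-8"),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(request, timeout=timeout_s) as response:
            return json.loads(response.read().decode("utf-8"))
    return invoke


def run_pipeline(prompt: str, role: str, invoke: Invoke) -> Payload:
    """Prompt Guard -> LLM Agent -> Output Auditor -> Policy Enforcer, with advice on blocks."""
    trail: List[Payload] = []

    def step(agent: str, payload: Payload) -> Payload:
        result = invoke(agent, payload)
        trail.append({"agent": agent, "status": result.get("status", "unknown")})
        try:
            # Best-effort audit: an Audit Logger outage should not fail the user's request.
            invoke("audit_logger", {"agent": agent, "role": role, "result": result})
        except Exception:
            log.warning("audit logging failed for %s", agent, exc_info=True)
        return result

    def blocked(stage: str, details: Payload) -> Payload:
        advice = step("advisory_agent", {"stage": stage, "details": details, "role": role})
        return {"status": "blocked", "output": None,
                "advice": advice.get("advice"), "audit_trail": trail}

    guard = step("prompt_guard", {"prompt": prompt, "role": role})
    if guard.get("status") == "blocked":
        return blocked("prompt", guard)
    generated = step("llm_agent", {"prompt": guard.get("prompt", prompt)})
    audited = step("output_auditor", {"text": generated.get("text", ""), "role": role})
    enforced = step("policy_enforcer", {"text": audited.get("text", ""), "role": role})
    if enforced.get("status") == "blocked":
        return blocked("output", enforced)
    return {"status": "success", "output": enforced.get("text"),
            "advice": None, "audit_trail": trail}
```

Errors from the governance agents themselves propagate to the caller, where a FastAPI route could translate them into an error response; only audit logging is treated as best-effort.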

Best Practices in Agent Design

Each agent in EthiFlow AI is developed following AWS Lambda best practices to ensure robustness and efficiency:

- Global Clients: Utilizing global Boto3 clients (e.g., for S3, Lambda, DynamoDB, Bedrock) to optimize for warm starts and reduce invocation latency.

- Robust Error Handling: Comprehensive try-except blocks and consistent error response formatting to ensure graceful degradation and clear debugging.

- Structured Logging: Implementing structured logging with Python's `logging` module for better observability and easier analysis in CloudWatch.
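The three practices above can be combined in one handler. The following is a minimal Prompt Guard-style sketch, not the project's code. It assumes the event shape Lambda Function URLs deliver (`body`, `isBase64Encoded`) and the `ETHI_FLOW_S3_POLICY_BUCKET_NAME`/`ETHI_FLOW_S3_POLICY_KEY` variables from Getting Started. The policy shape (`blocked_terms`), the e-mail check standing in for PII detection, and all helper names are hypothetical. The S3 client is a module-level global created on first use and then reused by warm invocations.

```python
import base64
import json
import logging
import os
import re
from typing import Any, Dict, Optional


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object for CloudWatch Logs Insights."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {"level": record.levelname, "logger": record.name, "message": record.getMessage()}
        entry.update(getattr(record, "fields", {}))
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry, default=str)


logger = logging.getLogger("prompt_guard")
if not logger.handlers:
    _handler = logging.StreamHandler()
    _handler.setFormatter(JsonFormatter())
    logger.addHandler(_handler)
    logger.propagate = False  # avoid duplicate lines via the runtime's root handler
logger.setLevel(logging.INFO)

_s3_client: Optional[Any] = None  # global client, reused across warm invocations


def get_s3() -> Any:
    global _s3_client
    if _s3_client is None:
        import boto3  # deferred so the pure helpers below run without AWS libraries
        _s3_client = boto3.client("s3")
    return _s3_client


def load_policy() -> Dict[str, Any]:
    obj = get_s3().get_object(Bucket=os.environ["ETHI_FLOW_S3_POLICY_BUCKET_NAME"],
                              Key=os.environ["ETHI_FLOW_S3_POLICY_KEY"])
    return json.loads(obj["Body"].read())


EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


def evaluate_prompt(prompt: str, policy: Dict[str, Any]) -> Dict[str, Any]:
    lowered = prompt.lower()
    violations = [t for t in policy.get("blocked_terms", []) if t.lower() in lowered]
    if EMAIL.search(prompt):
        violations.append("pii:email")
    return {"status": "blocked" if violations else "success",
            "violations": violations, "prompt": prompt}


def response(status_code: int, body: Dict[str, Any]) -> Dict[str, Any]:
    """Consistent response shape that Lambda Function URLs return as HTTP."""
    return {"statusCode": status_code, "headers": {"Content-Type": "application/json"},
            "body": json.dumps(body)}


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    try:
        raw = event.get("body") or "{}"
        if event.get("isBase64Encoded"):
            raw = base64.b64decode(raw).decode("utf-8")
        data = json.loads(raw)
        prompt = data.get("prompt") if isinstance(data, dict) else None
        if not isinstance(prompt, str) or not prompt.strip():
            logger.warning("request rejected", extra={"fields": {"reason": "missing prompt"}})
            return response(400, {"status": "error", "error": "Field 'prompt' is required."})
        result = evaluate_prompt(prompt, load_policy())
        # Log the decision, not the prompt text, so PII does not end up in CloudWatch.
        logger.info("prompt evaluated", extra={"fields": {"status": result["status"],
                                                           "violations": result["violations"]}})
        return response(200, result)
    except json.JSONDecodeError:
        return response(400, {"status": "error", "error": "Request body must be valid JSON."})
    except Exception:
        logger.exception("prompt guard failed")
        return response(500, {"status": "error", "error": "Internal error in Prompt Guard."})
```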

Dynamic Policy Enforcement

EthiFlow AI features a dynamic policy management system, allowing for real-time adjustments to governance rules without requiring code redeployment:

- S3-Backed Policies: All core policies (for Prompt Guard, Output Auditor, Policy Enforcer, and Advisory Agent configurations) are centrally stored and managed in Amazon S3 buckets.

- API-Driven Updates: The FastAPI backend exposes dedicated API endpoints (`/policies/main`, `/policies/advisory`) that enable administrators to retrieve and update these policies on the fly. This ensures that the system always operates with the most current set of rules.
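Behind those endpoints, the work is one read and one write against S3. The following is a minimal sketch of that storage layer. It assumes the two endpoints map onto the two S3 keys listed under Getting Started, and that agents re-read the policy object on each invocation rather than caching it. The `PolicyStore` class and the `main`/`advisory` names are hypothetical, and the actual `main.py` handlers may differ.

```python
import json
from typing import Any, Dict, Optional

# Keys from the setup section (ETHI_FLOW_S3_POLICY_KEY, ETHI_FLOW_ADVISORY_AGENT_ADVICE_KEY).
DEFAULT_KEYS = {"main": "policies/initial_policies.json",
                "advisory": "policies/advice_config.json"}


class PolicyStore:
    """Read and replace JSON policy documents in S3.

    `s3` is expected to be boto3.client("s3") or any object with the same
    get_object(Bucket=, Key=) and put_object(Bucket=, Key=, Body=, ContentType=) calls.
    """

    def __init__(self, s3: Any, bucket: str, keys: Optional[Dict[str, str]] = None) -> None:
        self.s3 = s3
        self.bucket = bucket
        self.keys = dict(DEFAULT_KEYS if keys is None else keys)

    def _key(self, name: str) -> str:
        if name not in self.keys:
            # A web layer could map this to HTTP 404.
            raise KeyError(f"unknown policy set: {name!r}")
        return self.keys[name]

    def get(self, name: str) -> Dict[str, Any]:
        obj = self.s3.get_object(Bucket=self.bucket, Key=self._key(name))
        return json.loads(obj["Body"].read())

    def put(self, name: str, policy: Dict[str, Any]) -> None:
        # Validate before overwriting, so a malformed update never replaces live rules.
        if not isinstance(policy, dict):
            raise ValueError("a policy document must be a JSON object")
        body = json.dumps(policy, indent=2).encode("utf-8")
        self.s3.put_object(Bucket=self.bucket, Key=self._key(name), Body=body,
                           ContentType="application/json")
```

In FastAPI, a `GET /policies/main` route could return `store.get("main")` and the matching update route could call `store.put("main", body)`.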

Comprehensive Auditing & Transparency

Transparency and accountability are at the core of EthiFlow AI:

- Detailed Audit Trails: Every interaction and decision made by the agents is meticulously logged, creating a comprehensive audit trail.

- Enhanced Frontend Visualization: The React-based frontend provides an intuitive dashboard to visualize these audit logs:

  - Timeline View: Presents a clear, step-by-step timeline of agent interactions, showing the flow of data and decisions.

  - Audit Summary: Offers a quick overview of the processing, including the total number of agents involved and counts of successful, blocked, and failed operations.
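The timeline and summary counts are a straightforward aggregation over audit entries. A minimal Python sketch follows, assuming a hypothetical entry shape with `agent`, `status` (`success`, `blocked`, or `error`), and an ISO-8601 UTC `timestamp`. "Agents involved" is read here as the number of distinct agents. Where this aggregation actually runs (backend or React frontend) is not specified.

```python
from collections import Counter
from typing import Any, Dict, List


def summarize_audit(entries: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Build timeline lines and summary counts from audit entries."""
    # Same-format UTC ISO-8601 timestamps sort correctly as plain strings.
    ordered = sorted(entries, key=lambda entry: entry["timestamp"])
    counts = Counter(entry["status"] for entry in ordered)
    return {
        "agents_involved": len({entry["agent"] for entry in ordered}),
        "successful": counts["success"],
        "blocked": counts["blocked"],
        "errors": counts["error"],
        # One line per step, oldest first, for the timeline view.
        "timeline": [f'{entry["timestamp"]}  {entry["agent"]}: {entry["status"]}'
                     for entry in ordered],
    }
```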

Basic User Role Simulation

To demonstrate robust Data Access Controls and differentiated policy enforcement, the system includes a basic user role simulation:

- Users can select a role (e.g., "Admin", "Standard User", "Guest") from a dropdown in the frontend.

- This selected role is passed to the backend, allowing for the conceptual application of role-based policies (e.g., an "Admin" might see less redacted information than a "Guest").
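One way the selected role could drive redaction is a per-role allow-list of PII types that may be shown unredacted. The regex patterns, the role-to-visibility mapping, and the `[REDACTED_…]` labels below are illustrative assumptions. Regexes like these miss many PII formats, which is why more sophisticated detection is listed under Future Enhancements. Unknown roles fall back to the most restrictive treatment.

```python
import re
from typing import Dict, Pattern, Set

# Illustrative patterns only; real PII detection needs a dedicated service.
PII_PATTERNS: Dict[str, Pattern[str]] = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical policy: which PII types each role may see unredacted.
ROLE_VISIBLE: Dict[str, Set[str]] = {
    "Admin": {"EMAIL", "PHONE"},
    "Standard User": {"EMAIL"},
    "Guest": set(),
}


def redact_for_role(text: str, role: str) -> str:
    visible = ROLE_VISIBLE.get(role, set())  # unknown role -> redact everything
    for label, pattern in PII_PATTERNS.items():
        if label not in visible:
            text = pattern.sub(f"[REDACTED_{label}]", text)
    return text
```

For example, given "Contact jane@example.com or 555-123-4567, SSN 123-45-6789", an Admin keeps the e-mail and phone number, while a Guest sees all three replaced.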

Compliance-Driven Design

EthiFlow AI is designed with a strong emphasis on regulatory compliance and ethical AI principles, incorporating considerations from various frameworks:

- FISMA Security Controls

- GDPR Data Sensitivity Levels

- EU AI Act Risk Categories

- Digital Services Act

- NIS2 Directive

- ISO/IEC 42001

- IEEE Ethics Guidelines

Technical Stack

EthiFlow AI leverages a modern and scalable technology stack:

- Backend: Python; a FastAPI API gateway (`main.py`) orchestrating agent microservices deployed as AWS Lambda functions.

- Frontend: React, TypeScript, Vite, ShadCN/UI, Tailwind CSS.

- Cloud Platform: Amazon Web Services (AWS).

- Key AWS Services: AWS Lambda, Amazon S3, Amazon DynamoDB, Amazon Bedrock (direct integration for LLM Agent).

Getting Started

To set up and run the EthiFlow AI demo, follow these steps:

Prerequisites

- Python 3.8+

- Node.js 18+ & npm

- AWS CLI: Configured with credentials that have permissions to create/manage Lambda functions, S3 buckets, and DynamoDB tables.

- AWS Account: An active AWS account.

Backend Setup (FastAPI & Lambda Functions)

Clone the Repository:

```bash
git clone <YOUR_REPOSITORY_URL>
cd EthiFlow-AI
```

Install Python Dependencies:

```bash
pip install -r requirements.txt
```

Create S3 Bucket: This bucket will store your policies and audit logs. Replace `your-unique-bucket-name` with a globally unique name.

```bash
aws s3 mb s3://your-unique-bucket-name --region <your-aws-region>
```

Create DynamoDB Table:

```bash
aws dynamodb create-table \
  --table-name EthiFlowFeedback \
  --attribute-definitions AttributeName=item_id,AttributeType=S \
  --key-schema AttributeName=item_id,KeyType=HASH \
  --billing-mode PAY_PER_REQUEST \
  --region <your-aws-region>
```

Deploy Lambda Functions:

- Deploy the Python code for `prompt_guard`, `output_auditor`, `policy_enforcer`, `advisory_agent`, `llm_agent`, `audit_logger`, and `feedback_agent` as AWS Lambda functions (their code is in `src/agents/`).

- Crucially, configure a Function URL for each Lambda.

- Environment Variables: Set the following environment variables for your `main.py` (FastAPI) application and for the Lambda functions themselves:
  - `ETHI_FLOW_PROMPT_GUARD`: Function URL of Prompt Guard Lambda
  - `ETHI_FLOW_OUTPUT_AUDITOR`: Function URL of Output Auditor Lambda
  - `ETHI_FLOW_POLICY_ENFORCER`: Function URL of Policy Enforcer Lambda
  - `ETHI_FLOW_ADVISORY_AGENT`: Function URL of Advisory Agent Lambda
  - `ETHI_FLOW_LLM_AGENT`: Function URL of LLM Agent Lambda
  - `ETHI_FLOW_FEEDBACK_AGENT`: Function URL of Feedback Agent Lambda
  - `ETHI_FLOW_AUDIT_LOGGER`: Function URL of Audit Logger Lambda
  - `ETHI_FLOW_S3_POLICY_BUCKET_NAME`: Your S3 bucket name (e.g., `your-unique-bucket-name`)
  - `ETHI_FLOW_S3_POLICY_KEY`: `policies/initial_policies.json`
  - `ETHI_FLOW_ADVISORY_AGENT_ADVICE_KEY`: `policies/advice_config.json`
  - `AUDIT_BUCKET_NAME`: Your S3 bucket name (for Audit Logger)
  - `ETHI_FLOW_FEEDBACK_TABLE_NAME`: Your DynamoDB table name (for Feedback Agent)
  - `ETHI_FLOW_BEDROCK_MODEL_ID`: `amazon.titan-text-express-v1` (for LLM Agent)
  - `AWS_REGION`: Your AWS Region (for LLM Agent). Inside Lambda, the runtime sets this variable automatically and reserves the key, so it cannot be set in the function configuration; set it manually only when running code outside Lambda.

- Upload Initial Policies: Upload `src/policies/initial_policies.json` and `src/policies/advice_config.json` to your S3 bucket under the respective keys (`policies/initial_policies.json` and `policies/advice_config.json`).
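A missing or mistyped variable from the list above tends to surface only as a failed agent call, so a quick pre-flight check can help. The sketch below uses the variable names exactly as listed. Grouping them by component is an assumption based on the "(for …)" notes, and `check_config` is a hypothetical helper. Inside Lambda, `AWS_REGION` is provided by the runtime, so the `llm_agent` check passes there without any manual setting.

```python
import os
from typing import Dict, List, Mapping, Optional

FUNCTION_URL_VARS = [
    "ETHI_FLOW_PROMPT_GUARD", "ETHI_FLOW_OUTPUT_AUDITOR", "ETHI_FLOW_POLICY_ENFORCER",
    "ETHI_FLOW_ADVISORY_AGENT", "ETHI_FLOW_LLM_AGENT", "ETHI_FLOW_FEEDBACK_AGENT",
    "ETHI_FLOW_AUDIT_LOGGER",
]

# Assumed grouping of variables by the component that reads them.
REQUIRED: Dict[str, List[str]] = {
    "fastapi_backend": FUNCTION_URL_VARS + [
        "ETHI_FLOW_S3_POLICY_BUCKET_NAME", "ETHI_FLOW_S3_POLICY_KEY",
        "ETHI_FLOW_ADVISORY_AGENT_ADVICE_KEY",
    ],
    "audit_logger": ["AUDIT_BUCKET_NAME"],
    "feedback_agent": ["ETHI_FLOW_FEEDBACK_TABLE_NAME"],
    "llm_agent": ["ETHI_FLOW_BEDROCK_MODEL_ID", "AWS_REGION"],
}


def check_config(component: str, env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return human-readable problems for one component; an empty list means ready."""
    env = os.environ if env is None else env
    problems = []
    for name in REQUIRED[component]:
        value = env.get(name, "").strip()
        if not value:
            problems.append(f"{name} is not set")
        elif name in FUNCTION_URL_VARS and not value.startswith("https://"):
            # Lambda Function URLs are served over HTTPS.
            problems.append(f"{name} should be an https:// Lambda Function URL")
    return problems
```

Calling `check_config("fastapi_backend")` before starting `main.py` lists anything still missing.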

Frontend Setup (React Application)

Navigate to Frontend Directory:

```bash
cd frontend
```

Install Node.js Dependencies:

```bash
npm install
```

Configure API Base URL: Create a `.env` file in the `frontend/` directory and add your FastAPI backend URL:

```
# Or your deployed FastAPI URL
VITE_API_BASE_URL=http://localhost:8000
```

Run Development Server:

```bash
npm run dev
```

This will start the React development server, typically accessible at `http://localhost:5173` (or similar).

Usage

- Submit a Prompt: On the homepage, enter your prompt in the text area.

- Select User Role: Choose a user role from the dropdown to simulate different access levels.

- Process: Click "Process Prompt" to initiate the multi-agent workflow.

- Review Results: Observe the "Processing Results" section, including the final output, policy status, and the detailed audit trail presented in a timeline view.

- Provide Feedback: Use the feedback card to submit your thoughts on the system's performance.

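- Manage Policies: Navigate to the `/policies` route to view and dynamically update the governance policies stored in S3. As noted at the top of this page, this screen is not yet fully integrated with the backend APIs in the prototype; the `/policies/main` and `/policies/advisory` endpoints can be called directly instead.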

Testing Instructions

To verify the functionality of EthiFlow AI, please follow the usage steps above. The system's comprehensive audit logs (visible in the UI and CloudWatch) provide detailed insights into each agent's operation and policy enforcement. Each agent is designed with robust error handling and logging to ensure transparency and debuggability.
