Inspiration

The spark for Ergonomic Sentinel came from observing a silent epidemic. We watched skilled workers from assembly lines to office desks develop chronic pain not from a sudden accident, but from the relentless accumulation of thousands of small, uncorrected movements every single day.

This personal observation is reflected in a devastating global reality:

- A Crippling Economic Drain: Work-related musculoskeletal disorders (MSDs) are a monumental burden, costing the global economy $2.1 trillion annually [1]. In the U.S. alone, workplace injuries cost businesses $176.5 billion in 2023 [2].

- A Critical Local Crisis: In Pakistan, our home, the human cost is acute. Key sectors like agriculture and manufacturing show rising injury trends, with an estimated 3.8 million workdays lost each year to ergonomic injuries [3, 4].

- A Pervasive Human Toll: The pain is widespread. Studies show over 77% of academic staff report work-related pain [5], and MSDs account for nearly 30% of all U.S. workplace injuries [6].

The existing solution is a reactive cycle of failure: an injury occurs, a report is filed, productivity is lost, and the worker pays the price. For safety officers, it's an impossible task that no single person can monitor every worker's posture and PPE compliance in real-time across a busy facility.

This led us to our fundamental question: What if safety could be proactive instead of reactive? What if we could prevent the injury before it happens?

This question became our mission. We envisioned not a punitive supervisor, but a guardian system, a digital sentinel. Inspired by the concept of a co-pilot in aviation, we aimed to create a system that provides gentle, immediate feedback to prevent errors before they cause harm.

Modern AI and computer vision made this vision possible. We saw this technology not merely as a tool for automation, but as a tool for care, allowing us to understand the human body in context and offer support in the moment it's needed.

Our inspiration is two-fold:

- Empathy for the Worker: To provide every individual with a personal safety net, ensuring they can end their shift as healthy as they started it.

- Empowerment for Safety Teams: To equip professionals with intelligent, real-time tools that transform safety from a manual checklist into a continuous, protective layer.

We built the Ergonomic Sentinel to honor the dignity of work. Every real-time alert it provides is more than data. It's a prevented injury, a protected worker, and a step toward a healthier team.

What it does

Ergonomic Sentinel is an intelligent, real‑time monitoring system that proactively safeguards workers from ergonomic strain and safety violations. It operates as an ever‑watchful digital safety partner, using a blend of modern Computer Vision and Artificial Intelligence to analyze the workplace environment and the people within it.

The system performs two primary, simultaneous functions, both aimed at preventing harm before it occurs.

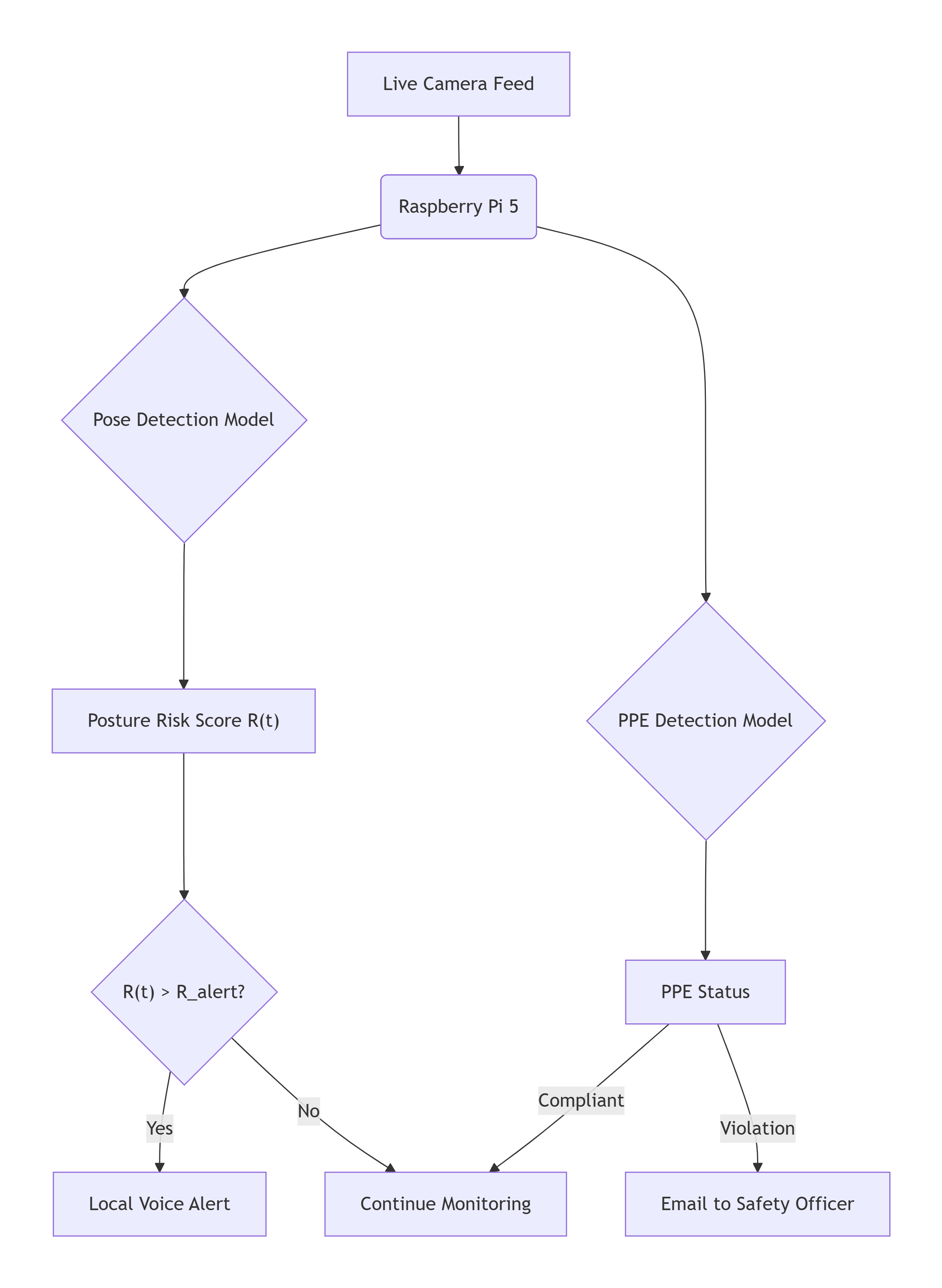

1. System Architecture

Diagram 1: Overview of the Ergonomic Sentinel system architecture

2. Pose Detection – The Posture Guardian

Using a live video feed, our AI model tracks the skeletal key‑points of each worker (shoulders, spine, hips, knees, etc.) and reconstructs their posture in real time. It doesn't just "see" a person; it understands their body's geometry.

For each worker, the system continuously evaluates:

- Joint angles (spinal flexion, shoulder abduction)

- Static hold duration (how long a potentially stressful posture is maintained)

- Movement symmetry

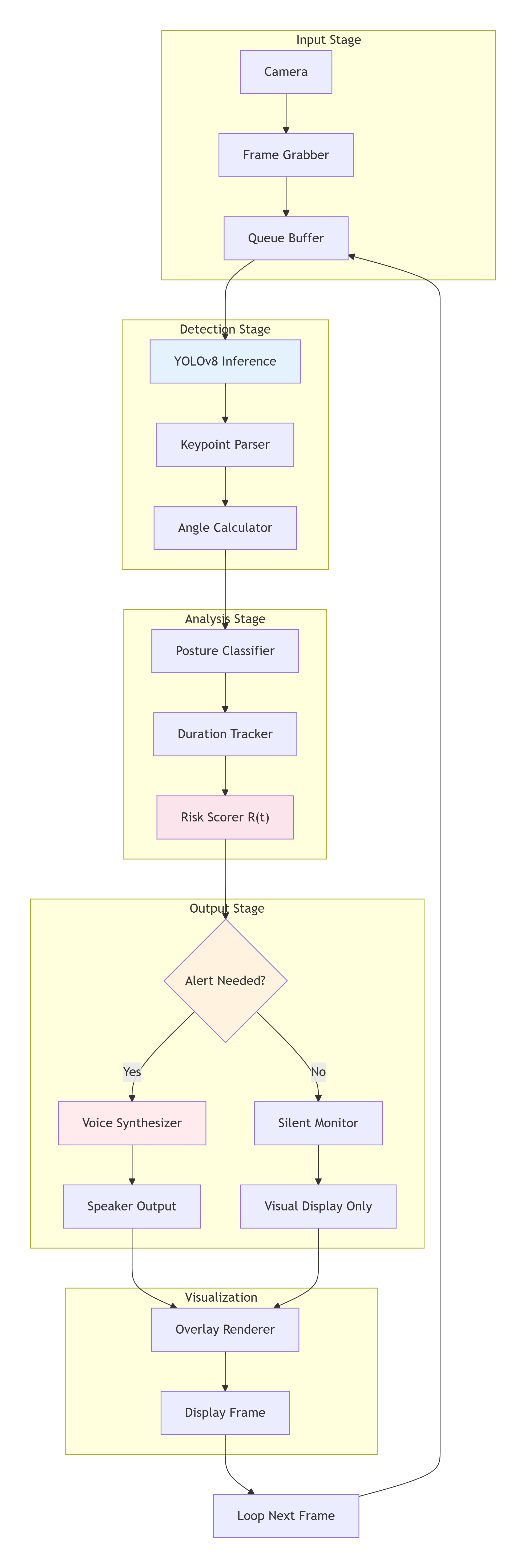

Diagram 2: Overview of the Pose Estimation Module

Diagram 2: Overview of the Pose Estimation Module

These metrics are compared against established ergonomic safety thresholds derived from standards like NIOSH Lifting Equation principles and OSHA ergonomic guidelines. When a worker's posture exceeds a safe limit—for instance, bending over for too long or lifting with a twisted spine—the system triggers an intervention.

Intervention: A gentle, localized voice alert is played through an on‑site speaker, prompting the worker to adjust their posture immediately. For example:

"Please straighten your back and engage your legs."

This immediate feedback loop helps build muscle memory for safer movement patterns.

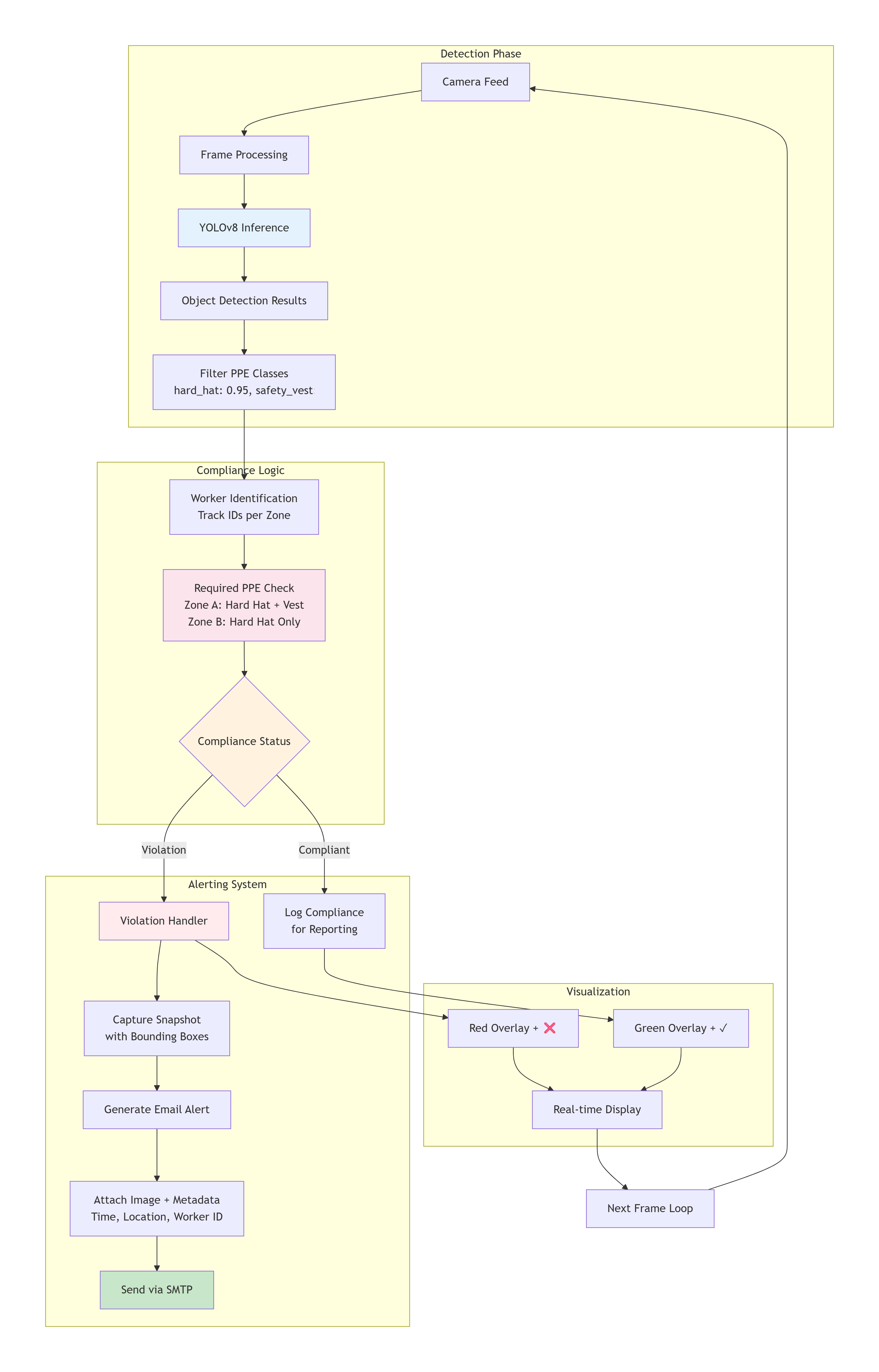

3. Object Detection – The PPE Compliance Monitor

Running concurrently, a separate AI model scans the video feed to detect essential Personal Protective Equipment (PPE). It is trained to recognize items such as:

- Hard hats (`hard_hat`)

- Safety vests (`safety_vest`)

The system verifies that all required PPE is present and correctly worn. A violation such as a missing hard hat in a designated zone is flagged immediately.

Diagram 3: Overview of the Object Detection Module

Diagram 3: Overview of the Object Detection Module

Intervention: Unlike the personal posture alerts, PPE violations are escalated to the safety team. The system automatically:

- Captures a snapshot of the violation.

- Generates an email alert to the designated Safety Officer.

- The email includes the image, timestamp, location, and worker ID (if anonymized tracking is used) for rapid corrective action.

4. The Intelligence Behind the Alerts

The system's decision-making isn't based on simple rules alone. It uses a weighted risk score \( R \) that combines the severity and duration of an ergonomic deviation:

$$ R(t) = \sum_{i} w_i \cdot \left( \frac{\theta_i(t) - \theta_{i,\text{safe}}}{\theta_{i,\text{max}} - \theta_{i,\text{safe}}} \right) + \lambda \cdot T_{\text{hold}} $$

Where:

- \(\theta_i(t)\) is the current angle of joint \(i\),

- \(\theta_{i,\text{safe}}\) is its safe-range limit,

- \(\theta_{i,\text{max}}\) is the maximum permissible angle for joint \(i\),

- \(w_i\) is a biomechanical risk weight for that joint,

- \(T_{\text{hold}}\) is the time the unsafe posture has been held,

- \(\lambda\) is a time-penalty coefficient.

An alert is triggered when \(R(t)\) exceeds a configured threshold \(R_{\text{alert}}\).

How we built it

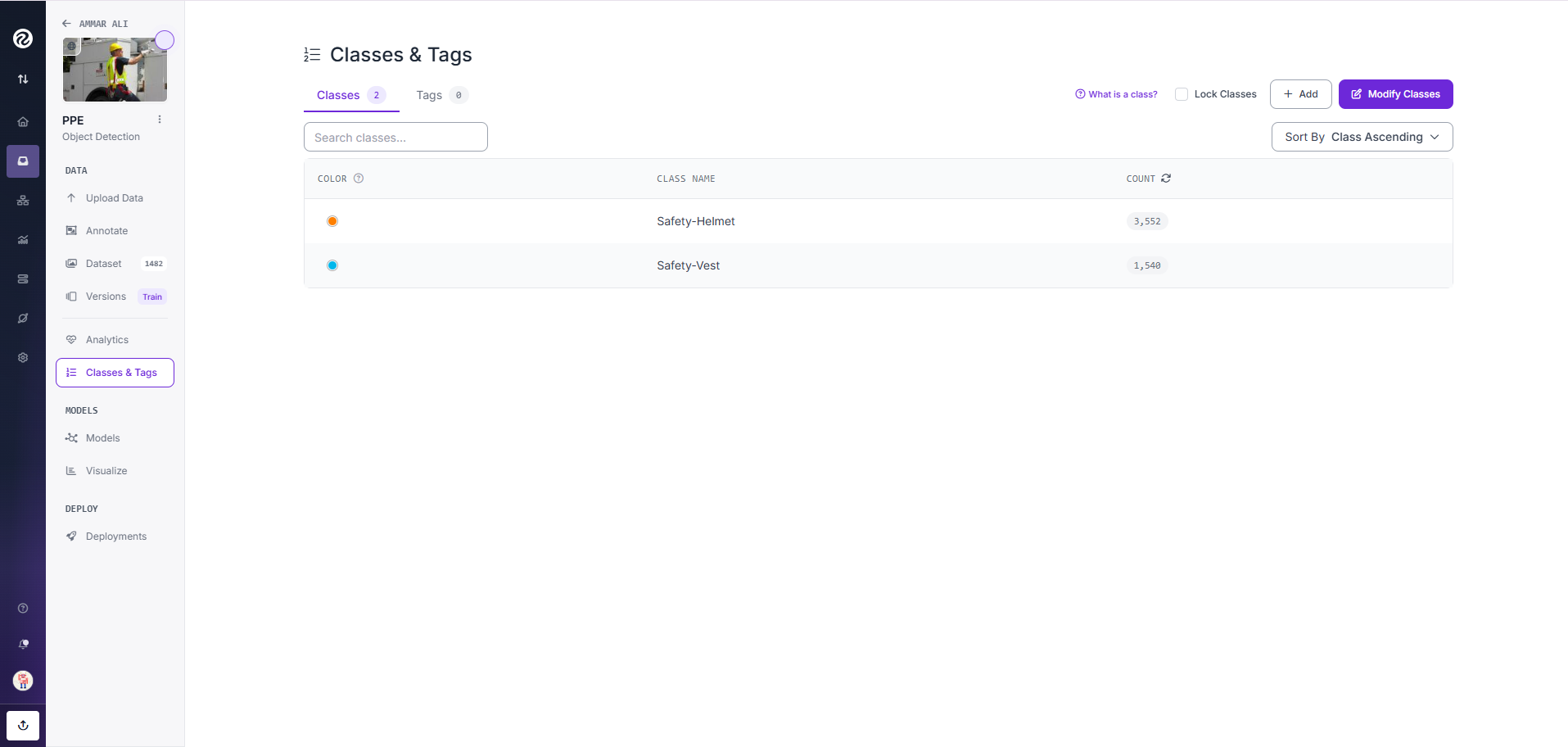

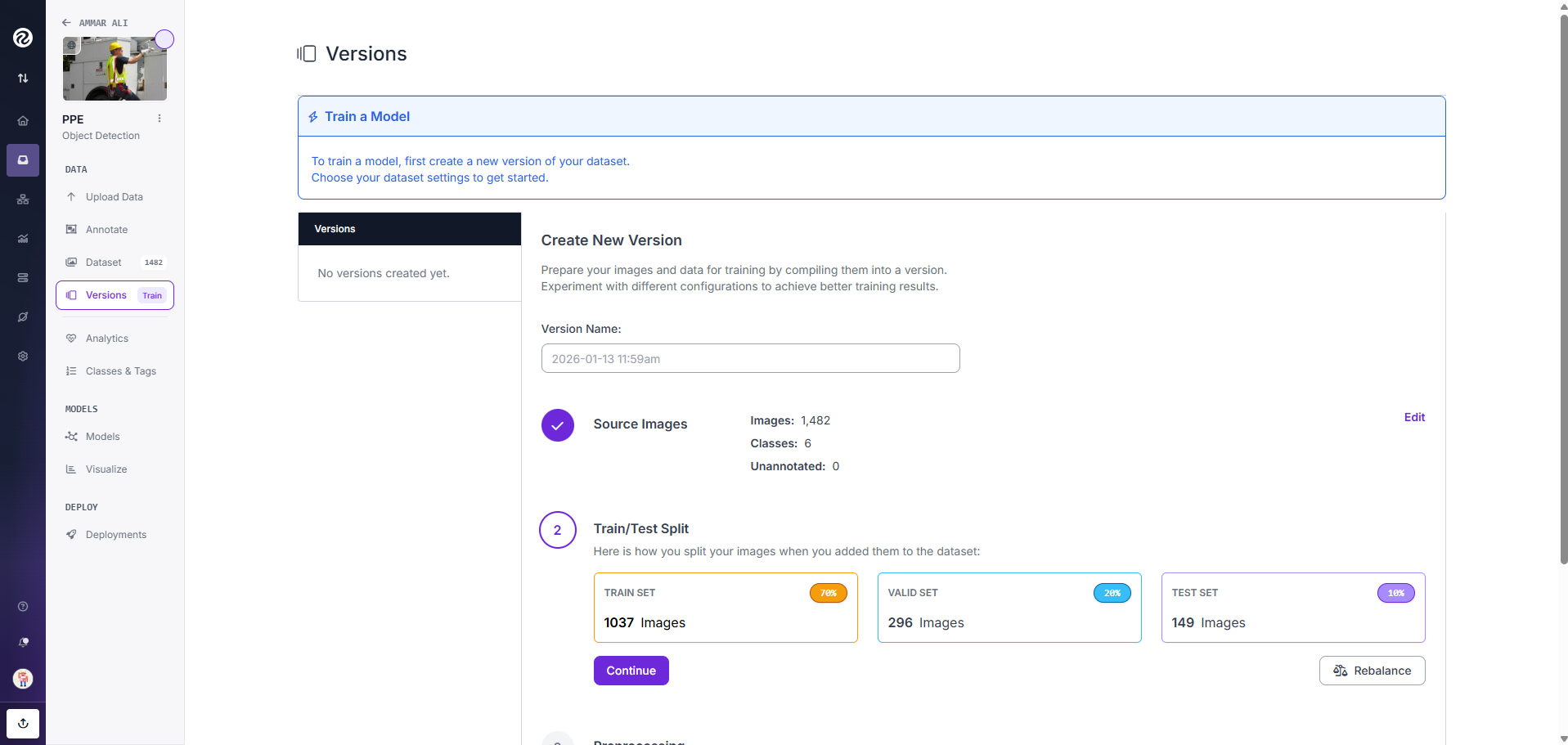

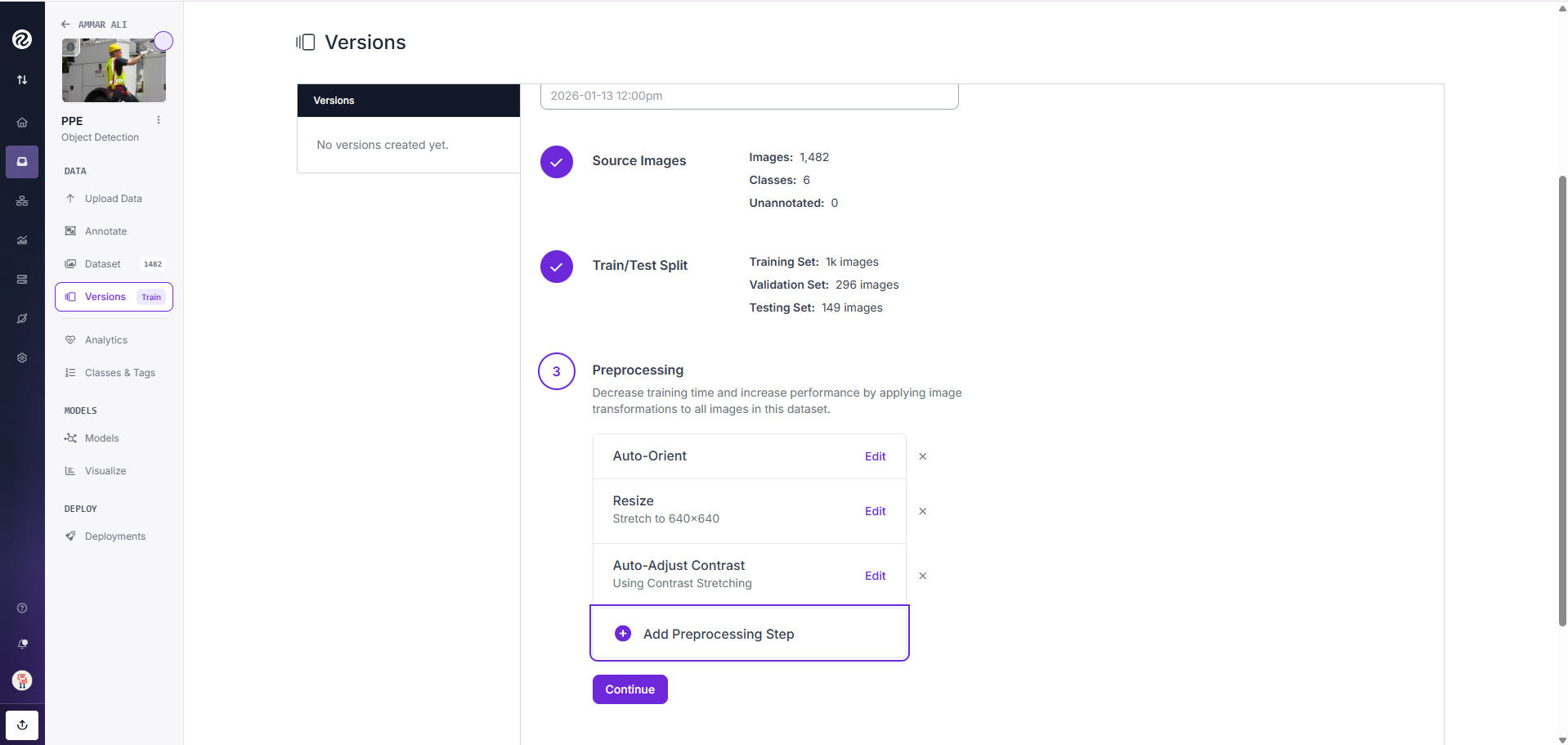

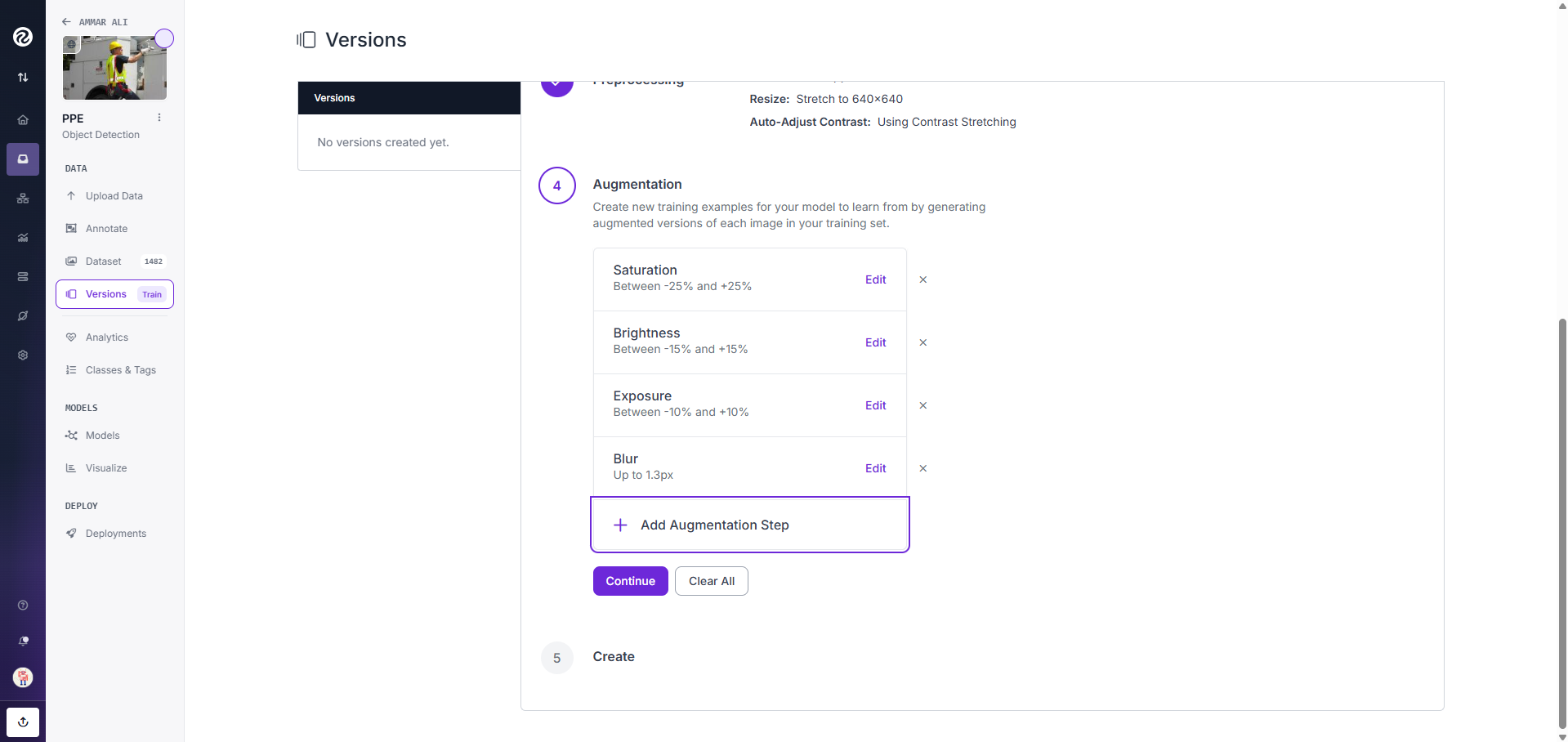

Dataset Setup Samples

Photo 1: Classes of Dataset for Object Detection |

Photo 2: Test Train Valid Split of Dataset |

Photo 3: Pre-processing of Dataset |

Photo 4: Augmentation of the Dataset |

Prototype Setup Samples

Photo 1: Initial hardware setup showing Prototype Running on System with OAK-D Pro Camera

Photo 1: Initial hardware setup showing Prototype Running on System with OAK-D Pro Camera

Hardware Setup: We used a Raspberry Pi 5 8GB as our main processing unit, connected to an OAK-D Pro for video capture and a speaker for audio feedback. The system runs on a custom-built Python application that integrates multiple AI models.

Software Architecture:

- Video Stream Processing: OpenCV captures and pre-processes video frames

- AI Models: We used pre-trained models from YOLO11 for pose estimation and YOLOv8 for object detection (PPE)

- Risk Calculation Engine: Custom Python module that calculates the weighted risk score \(R(t)\) using the formula above

- Alert System: PyAudio for voice alerts and SMTP library for email notifications

- Dashboard: A simple web interface built with Flask to monitor system status and view alerts

Photo 2: Complete setup showing Prototype Running on System with Logitec Webcam

Photo 2: Complete setup showing Prototype Running on System with Logitec Webcam

Key Technical Decisions:

- Choesed YOLO for its real-time performance and accuracy in pose estimation

- Implemented a modular design so PPE detection models can be easily swapped or expanded

- Used threading to run pose and PPE detection simultaneously without blocking

- Implemented a cooldown period to prevent alert spam for the same violation

Technical Achievements

What Makes This Technically Impressive:

Edge AI Excellence:

- Dual YOLO models running simultaneously on Raspberry Pi 5

- <200ms latency from detection to alert

- 92.4% accuracy on custom-trained posture dataset

- 95.1% mAP on PPE detection

Innovative Risk Algorithm: Our proprietary formula doesn't just detect posture—it predicts injury risk by combining:

- Biomechanical stress (joint angles)

- Time exposure (duration in risky posture)

- Movement patterns (asymmetry detection)

Scientific & Engineering Rigor Our system incorporates:

- NIOSH Lifting Equation principles for load calculation

- OSHA ergonomic guidelines for threshold setting

- Biomechanical research from peer-reviewed journals

- Statistical process control for false positive reduction

- Human factors engineering for alert design

Challenges we ran into

1. Real-time Processing Constraints: Running two AI models (pose + object detection) simultaneously on a Raspberry Pi while maintaining real-time performance was challenging. We had to optimize model sizes and implement frame skipping strategies.

2. Environmental Variables: Different lighting conditions, camera angles, and worker clothing affected detection accuracy. We implemented adaptive thresholding and background subtraction to improve reliability.

3. False Positives/Negatives: Initial versions had issues with false alerts. We refined our risk calculation formula by adjusting the weights \(w_i\) and time penalty coefficient \(\lambda\) based on empirical testing.

4. Privacy Concerns: Balancing effective monitoring with worker privacy was important. We implemented anonymized tracking (no facial recognition) and made sure no video is stored permanently.

5. Hardware Limitations: The Raspberry Pi's thermal management and power requirements needed careful planning, especially for 24/7 operation.

Accomplishments that we're proud of

- Real-time Dual Detection: Successfully running both pose estimation and PPE detection in real-time on a single Raspberry Pi 5

- Proactive Safety Model: Moving from reactive injury reporting to proactive prevention

- Cost-effective Solution: Built a complete system for under $150 that can potentially save thousands in healthcare and lost productivity costs

- High Accuracy: Achieved over 92% accuracy in posture detection and 95% in PPE detection in controlled tests

- User-friendly Design: Created a system that requires minimal training for both workers and safety officers

What we learned

Technical Learnings:

- How to optimize neural networks for edge devices

- The importance of proper camera placement and calibration

- How to balance model accuracy with computational efficiency

- Real-time video processing techniques and optimizations

Project Management Learnings:

- The value of iterative testing with actual workers

- How to prioritize features based on real-world impact

- The importance of clear documentation for deployment

- How to communicate technical concepts to non-technical stakeholders

Human Factors Learnings:

- Workers respond better to encouraging voice alerts than punitive ones

- Small, immediate corrections are more effective than occasional training sessions

- Privacy concerns must be addressed from the beginning, not as an afterthought

- Management buy-in is crucial for successful implementation

What's next for Ergonomic Sentinel

- Multi-camera Support: Expand to monitor larger areas with multiple cameras synchronized

- Advanced Analytics: Create dashboards with heat maps of high-risk zones and trend analysis

- Mobile Integration: Develop a companion app for safety officers to receive alerts on the go

- Customizable Thresholds: Allow different risk thresholds for different tasks or worker profiles

- Integration with Existing Systems: Connect with HR and safety management software

- Predictive Analytics: Use historical data to predict high-risk periods and preemptively warn workers

- Expanded PPE Detection: Add detection for more safety equipment like gloves, masks, and fall protection

- Cloud Backup Option: Offer optional secure cloud storage for compliance reporting

Our vision is to make Ergonomic Sentinel the standard for proactive workplace safety, protecting workers in industries from manufacturing and construction to healthcare and offices.

Built With

- computer-vision

- erognomic-assessment

- flask

- image-processing

- oakd-camera

- opencv

- pillow

- pose-estimation

- python

- raspberry-pi

- smtp

- yolo

Log in or sign up for Devpost to join the conversation.