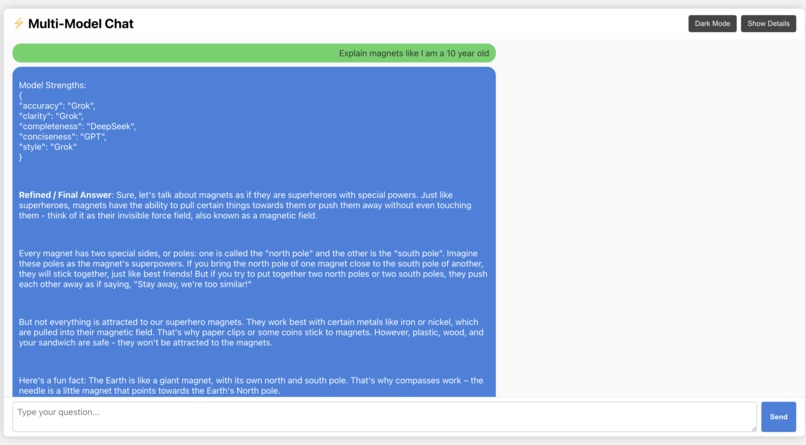

"A dark-themed interface featuring smooth auto-scrolling and animated typing effects for an immersive conversational experience."

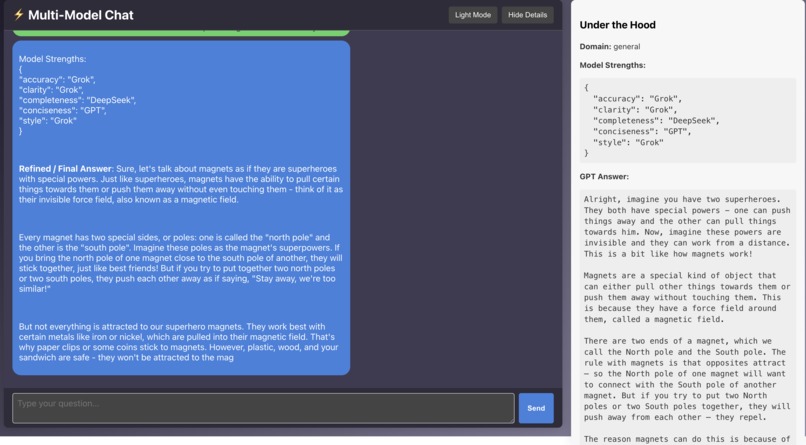

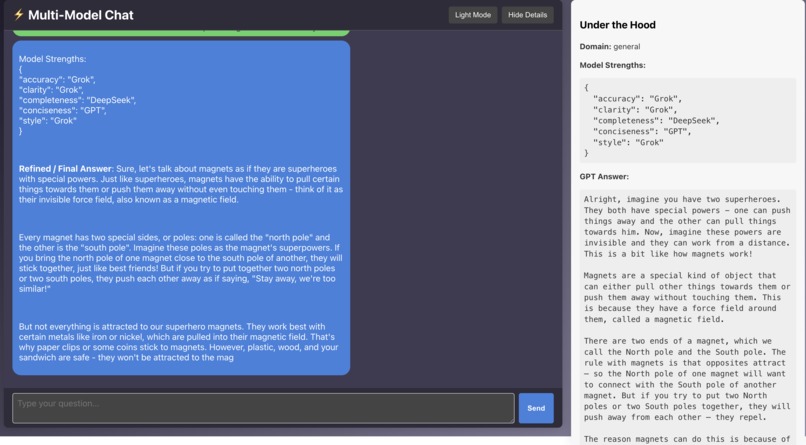

Live debug panel: shows domain detection, model outputs, and refinement stages for complete AI orchestration transparency.

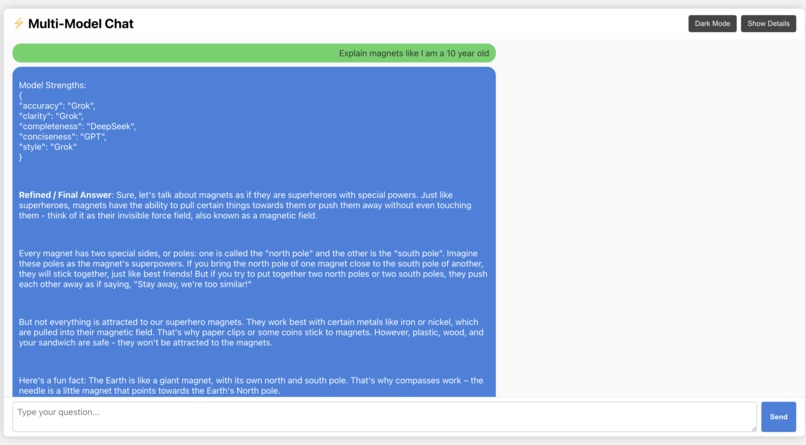

"A clean, modern chat interface in light mode that clearly distinguishes user queries from AI responses with distinct colours and styling."

Inspiration

I was inspired by the rapid evolution of large language models and the realisation that no single AI can excel in every domain. Having observed that each model has its own strengths, I envisioned a platform that could route queries to the most suitable AI and then refine the outputs into a single optimised answer. This insight led to the creation of Ensemble Chat.

What it does

Ensemble Chat is a multi-model orchestration platform that routes user queries to the best-suited AI models and then synthesises their responses into one refined, context-aware answer. It also features a live debug panel that transparently displays the backend process, including stages such as domain classification, model selection, and refinement, so that users can see what happens behind the scenes.

How I built it

Frontend:

I developed a hackathon-ready React interface with a two-panel layout. The left panel displays the chat conversation with user queries and AI responses aligned, ensuring a clear visual separation. The right panel shows live debug information, including domain detection, raw model outputs, and the final refined answer. I also incorporated dark mode, auto-scrolling, and subtle animations to provide a polished and engaging user experience.

Backend:

The backend is built with FastAPI. It accepts the entire conversation history, converts it into the format each AI model requires (such as GPT and DeepSeek), and uses domain classification to determine which model should handle a query. A synthesis engine then refines the model outputs into a single, coherent answer.
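The routing step described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the keyword-based classifier, the `ROUTES` table, and the names `Turn`, `to_chat_messages`, `classify_domain`, and `route` are all assumptions made for the example, and the real classifier may well be model-based.

```python
from dataclasses import dataclass

# Hypothetical keyword lists; the real classifier may be an LLM call instead.
DOMAIN_KEYWORDS = {
    "legal": ("contract", "liability", "statute", "court"),
    "code": ("python", "function", "bug", "compile"),
}

# Hypothetical routing table (domain -> model label), for illustration only.
ROUTES = {"legal": "deepseek", "code": "gpt"}
DEFAULT_MODEL = "gpt"


@dataclass
class Turn:
    speaker: str  # "user" or "assistant"
    text: str


def to_chat_messages(history):
    """Convert stored turns into the role/content list that chat APIs commonly accept."""
    return [{"role": t.speaker, "content": t.text} for t in history]


def classify_domain(query):
    """Return the domain with the most keyword hits, or 'general' if none match."""
    lowered = query.lower()
    scores = {
        domain: sum(kw in lowered for kw in keywords)
        for domain, keywords in DOMAIN_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "general"


def route(history):
    """Classify the latest user turn and build a request plan for the chosen model."""
    last_user = next(t for t in reversed(history) if t.speaker == "user")
    domain = classify_domain(last_user.text)
    return {
        "domain": domain,
        "model": ROUTES.get(domain, DEFAULT_MODEL),
        "messages": to_chat_messages(history),
    }
```

Calling `route([Turn("user", "Is this contract enforceable in court?")])` would produce a plan with the `legal` domain and the full history converted to chat messages. Keeping the routing table as plain data makes it easy to adjust as new evaluation results come in.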

Additionally, I conducted a thorough evaluation of each model’s performance across different domains. For example, in the legal domain I measured metrics such as accuracy, clarity, completeness, conciseness, and style. I found that GPT excelled in accuracy and conciseness, whereas DeepSeek was superior in clarity, completeness, and style. This evaluation informed the model routing and refinement process, ensuring that the final answer leverages the best attributes of each model.
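One way such an evaluation could feed into routing is sketched below. The per-metric winners for the legal domain are taken from the findings above; the weighting scheme, the weights themselves, and the `pick_model` helper are hypothetical and only show the idea.

```python
# Per-metric winners for the legal domain, as reported in the evaluation above.
LEGAL_WINNERS = {
    "accuracy": "gpt",
    "conciseness": "gpt",
    "clarity": "deepseek",
    "completeness": "deepseek",
    "style": "deepseek",
}


def pick_model(winners, weights):
    """Sum the weights of the metrics each model won and return the top model.

    `weights` maps metric name -> importance; it is a hypothetical input,
    not a value measured in the project.
    """
    totals = {}
    for metric, model in winners.items():
        totals[model] = totals.get(model, 0.0) + weights.get(metric, 0.0)
    return max(totals, key=totals.get)


# Equal weights favour the model that won more metrics.
equal = {metric: 1.0 for metric in LEGAL_WINNERS}

# Weighting accuracy heavily shifts the choice.
accuracy_first = dict(equal, accuracy=3.0)
```

With equal weights `pick_model(LEGAL_WINNERS, equal)` selects `deepseek` (three metrics to two), while `pick_model(LEGAL_WINNERS, accuracy_first)` selects `gpt`.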

Challenges I ran into

- Multi-AI Integration: Coordinating several AI services with differing input and output formats was challenging, particularly in ensuring that the system fully capitalised on each model's strengths.

- Real-Time Debugging: Streaming updates from the backend required a deeper understanding of asynchronous programming and event-driven architectures.

- Scalability: Ensuring that the system could handle multi-turn conversations and remain responsive under increasing loads presented a significant technical challenge.
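To illustrate the real-time debugging challenge, here is a minimal sketch of streaming one update per pipeline stage from an async generator. It assumes Server-Sent Events as the transport, which the write-up does not confirm (WebSockets would be another option); the stage functions `fake_classify` and `fake_refine` are stand-ins for the real model calls.

```python
import asyncio
import json


def sse_event(stage, payload):
    """Format one Server-Sent Events message with a named event and a JSON body."""
    return f"event: {stage}\ndata: {json.dumps(payload)}\n\n"


async def run_pipeline(query, stages):
    """Run (name, coroutine function) stages in order, yielding a message after each."""
    for name, step in stages:
        result = await step(query)
        yield sse_event(name, result)


async def fake_classify(query):
    # Stand-in for the real domain classifier.
    await asyncio.sleep(0)
    return {"domain": "legal"}


async def fake_refine(query):
    # Stand-in for the real refinement step.
    await asyncio.sleep(0)
    return {"answer": f"refined answer to: {query}"}


async def collect(query):
    """Gather every streamed message into a list (useful for tests)."""
    stages = [("domain", fake_classify), ("refined", fake_refine)]
    return [msg async for msg in run_pipeline(query, stages)]


if __name__ == "__main__":
    for message in asyncio.run(collect("Is this clause enforceable?")):
        print(message, end="")
```

In a FastAPI route, a generator like `run_pipeline` could be returned through `StreamingResponse` with `media_type="text/event-stream"`, so the debug panel receives each stage as soon as it finishes instead of waiting for the final answer.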

Accomplishments that I'm proud of

- Intelligent Query Routing: I successfully implemented an algorithm that dynamically directs queries to the most appropriate AI models, enhancing the overall quality of responses.

- Refinement Engine: Ensemble Chat does more than simply merge outputs; it intelligently refines them into a unified, high-quality answer.

- Live Debug Transparency: The integration of a debug panel that displays backend processing has been a standout achievement, offering both transparency and valuable insights into the system's inner workings.

- Polished, Hackathon-Ready UI: The final interface, with its two-panel layout, dark mode, and smooth auto-scrolling, provides a professional and engaging user experience.

What I learned

Working on Ensemble Chat showed me just how powerful combining different AI models can be. I found that routing queries to the right specialised system really boosts the quality of the responses. The project was also a crash course in asynchronous web development, real-time data streaming, and integrating multiple APIs. Plus, I learned about designing user-friendly interfaces and refining them through trial and error to create a smooth, modern experience.

What's next for Ensemble Chat

Moving forward, I plan to:

- Expand Model Integration: Incorporate additional AI models (e.g., Anthropic, Grok) to further enhance the platform’s capabilities. The system is designed to be highly scalable and will only improve as more questions are asked and more models are integrated.

- Enhance Live Debugging: Improve the real-time debug panel to provide even more detailed, step-by-step insights into the backend processing.

- Implement Persistent Storage: Add database-backed conversation storage so users can review past interactions.

- Advanced Customisation: Allow users to tailor the AI response style and adjust the weighting of different models.

- Optimise for Scalability: Refine the backend architecture to handle increased loads and ensure seamless performance as the platform grows.

Ensemble Chat is an evolving project, and I am excited to continue pushing the boundaries of multi-model AI integration while making the system even more transparent, customisable, and robust.

Built With

- anthropics-api

- css

- deepseek-api

- fastapi

- grok

- httpx

- openai-api

- python

- react

- typescript
