Inspiration

Each of us came into the hackathon with experience in a different area, so we decided to combine an ML model with a full-stack project. That way we could work together, learn from each other, and bring the project to life!

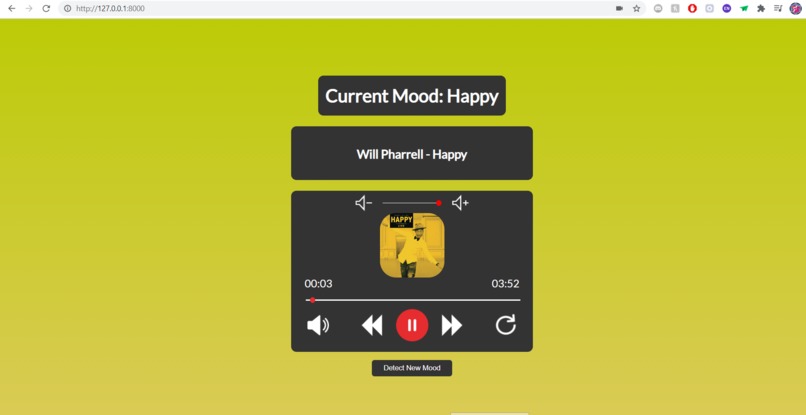

What it does

The music player is hosted on a website, which sends a request to the Django backend to capture a snapshot of the user's face from their webcam. The backend first locates the face using the Haar Cascade machine learning algorithm, then classifies the facial expression with a trained convolutional neural network (CNN). The CNN was trained on an open-source Kaggle dataset from the competition "Challenges in Representation Learning: Facial Expression Recognition Challenge," and it classifies four emotions: happy, sad, angry, and calm. If no emotion is detected, the music player defaults to calm. Based on the detected mood, the player then recommends an appropriate song.
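The detect, classify, and recommend flow can be sketched in Python as follows. This is a minimal sketch, not the project's code: the face detector and the CNN classifier are passed in as plain functions (in the project these roles are filled by the Haar Cascade and the trained CNN), and `detect_mood`, `recommend_song`, and the `SONGS` playlist with its placeholder filenames are hypothetical.

```python
import random
from typing import Any, Callable, Dict, List, Optional, Sequence, Tuple

# The four moods the CNN is described as predicting; "calm" doubles as the fallback.
MOODS = ("happy", "sad", "angry", "calm")
DEFAULT_MOOD = "calm"

# Hypothetical playlist: mood -> song files (placeholder names, not the project's songs).
SONGS: Dict[str, List[str]] = {
    "happy": ["happy_1.mp3", "happy_2.mp3"],
    "sad": ["sad_1.mp3", "sad_2.mp3"],
    "angry": ["angry_1.mp3"],
    "calm": ["calm_1.mp3", "calm_2.mp3"],
}

Box = Tuple[int, int, int, int]  # (x, y, width, height) of a detected face


def detect_mood(
    frame: Any,
    detect_faces: Callable[[Any], Sequence[Box]],
    classify_face: Callable[[Any, Box], Dict[str, float]],
) -> str:
    """Locate a face, classify its expression, and fall back to calm."""
    faces = detect_faces(frame)
    # len() instead of truthiness, so a NumPy array of boxes also works.
    if len(faces) == 0:
        return DEFAULT_MOOD  # no face in this frame
    # If several faces are found, use the largest (assumed closest to the webcam).
    face = max(faces, key=lambda box: box[2] * box[3])
    scores = classify_face(frame, face)
    if not scores:
        return DEFAULT_MOOD  # classifier produced no emotion
    mood = max(scores, key=scores.get)
    # Any label outside the four supported moods also falls back to calm.
    return mood if mood in MOODS else DEFAULT_MOOD


def recommend_song(mood: str, rng: Optional[random.Random] = None) -> str:
    """Pick a song for the mood, using the calm playlist for unknown moods."""
    rng = rng or random.Random()
    playlist = SONGS.get(mood) or SONGS[DEFAULT_MOOD]
    return rng.choice(playlist)
```

With OpenCV, `detect_faces` could wrap `CascadeClassifier.detectMultiScale`, which returns face rectangles as `(x, y, w, h)`; checking `len(faces)` keeps the fallback working whether the detector returns a tuple or a NumPy array.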

How we built it

Before coding, we planned out the idea, deciding which songs to use, which facial expressions to detect, and which frameworks to incorporate. Once we started coding, we worked on the frontend and backend separately. On the frontend, we got the music player working in JavaScript and then styled the UI; in parallel, we trained the ML model and built the backend routes. Finally, we connected the frontend and the backend. We ran into some frontend issues afterwards, but we worked through them and finished the project.

Challenges we ran into

The biggest challenge we faced was integrating the Django backend with the CSS and JavaScript frontend. In particular, we struggled to get the frontend's static files working with the backend, and we spent most of our time resolving those issues.

Accomplishments that we're proud of

We're proud of the UI we designed and coded, and of completing the project in such a short time with little prior experience.

What we learned

The main thing we learned was how to properly integrate CSS and JavaScript with a backend framework such as Django. This let us build a website that serves a static frontend while running machine learning through a dynamic backend.
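On the Django side, static CSS and JavaScript are usually wired up through the `staticfiles` app. The following is a minimal sketch of a `settings.py` fragment, assuming Django's default project layout and a hypothetical top-level `static/` folder holding the CSS and JavaScript; the template lines are shown as comments because they are Django template syntax rather than Python, and the file names `css/player.css` and `js/player.js` are placeholders.

```python
# settings.py (fragment): a sketch assuming Django's default project layout.
from pathlib import Path

BASE_DIR = Path(__file__).resolve().parent.parent

INSTALLED_APPS = [
    # Provides the {% static %} template tag and, with DEBUG = True,
    # lets `runserver` serve static files during development.
    "django.contrib.staticfiles",
    # ... the project's other apps go here
]

# URL prefix under which static files are served.
STATIC_URL = "static/"

# Extra directories Django searches for static files (hypothetical "static" folder).
STATICFILES_DIRS = [BASE_DIR / "static"]

# Destination for `python manage.py collectstatic` when deploying.
STATIC_ROOT = BASE_DIR / "staticfiles"

# In a template, reference assets through the static tag rather than hard-coded paths:
#   {% load static %}
#   <link rel="stylesheet" href="{% static 'css/player.css' %}">
#   <script src="{% static 'js/player.js' %}"></script>
```

Going through `{% static %}` means the generated URLs follow `STATIC_URL`, so the same templates keep working if static files are later served from a different location.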

What's next for Emotion-Based Music Player

Now that the backend functions properly, the next step would be to integrate more complex machine learning and deep learning models. The Haar Cascade algorithm is a classical machine learning approach rather than a deep learning one, and as a result it occasionally fails to detect the user's face in a single webcam frame. Implementing a deep learning object detection algorithm such as SSD or YOLO could improve the user experience by detecting the face more reliably.
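To illustrate the distinction: a Haar Cascade (the Viola–Jones detector) does not learn its features. It evaluates hand-designed rectangle features, each a difference between the pixel sums of adjacent rectangles, computed in constant time from a summed-area table (integral image), and passes them through a boosted cascade of simple classifiers. CNN-based detectors such as SSD and YOLO instead learn their features from data. The sketch below covers only the feature computation in plain Python; it is an illustration, not OpenCV's implementation, and the function names are hypothetical.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of pixel intensities


def integral_image(img: Image) -> List[List[int]]:
    """Summed-area table padded with a zero row/column:
    ii[y][x] is the sum of all pixels above and to the left of (x, y)."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Sum of the w-by-h rectangle with top-left corner (x, y), using four lookups."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def two_rect_feature(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Two-rectangle Haar-like feature: top half minus bottom half (h must be even).
    Large values indicate a bright region above a dark one, e.g. cheeks under eyes
    would give the opposite sign."""
    half = h // 2
    return rect_sum(ii, x, y, w, half) - rect_sum(ii, x, y + half, w, half)
```

In a full detector, boosting selects a small set of such features at many positions and scales, and the cascade rejects most non-face windows after only a few cheap checks.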
