-

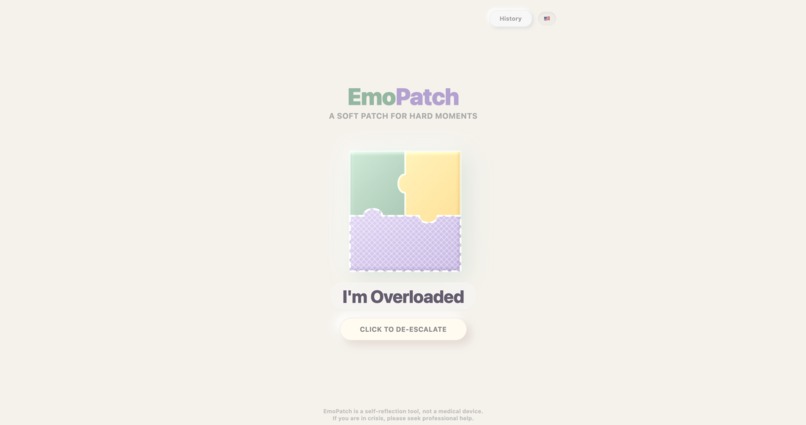

Home Screen: "A Soft Patch for Hard Moments" with a one-click trigger to de-escalate anxiety.

-

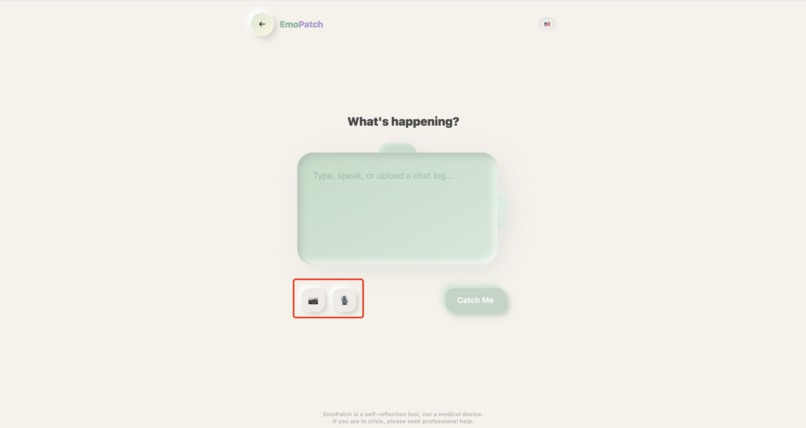

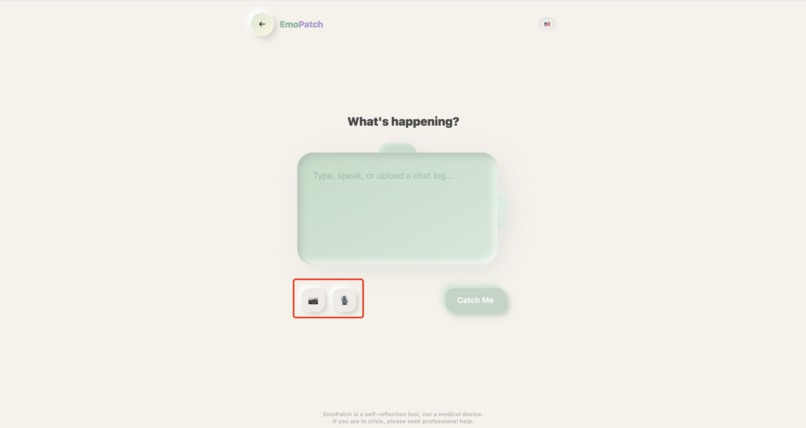

The Multi-modal Entry: A seamless interface allowing users to "dump" their raw thoughts and feelings through voice, text, or image uploads.

-

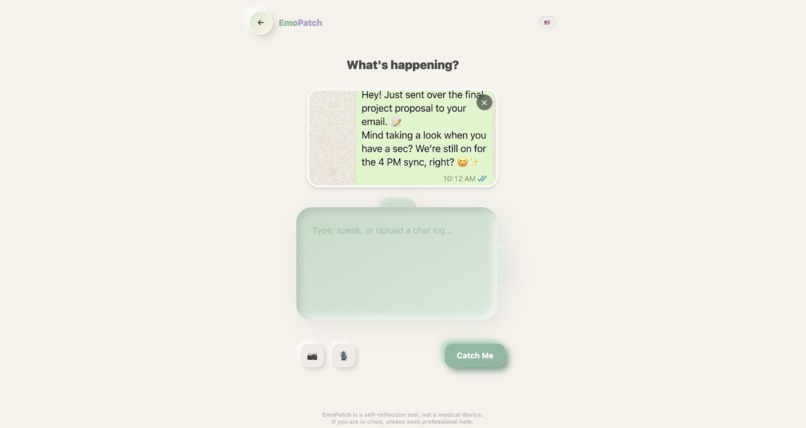

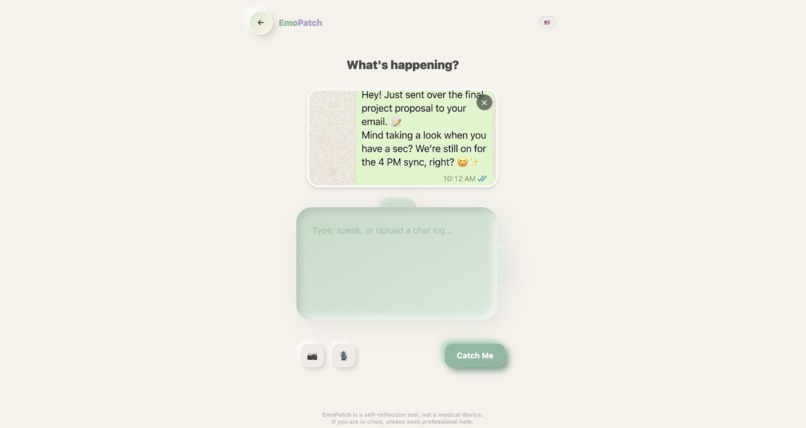

Native Vision Processing: Uploading social media screenshots for Gemini 3 to decode hidden visual cues like response delays.

-

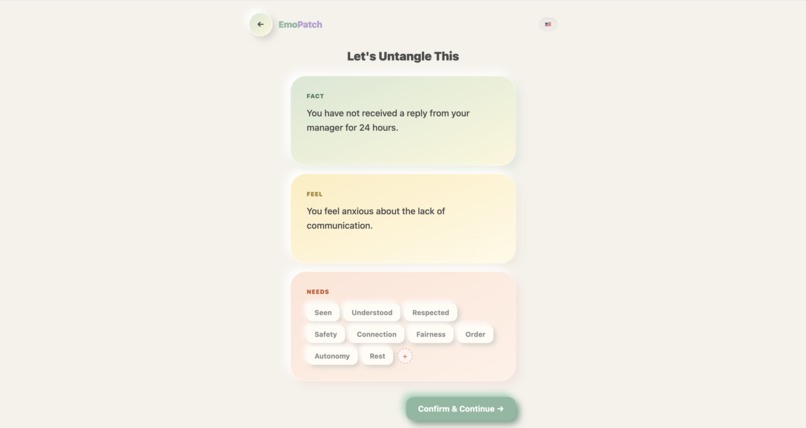

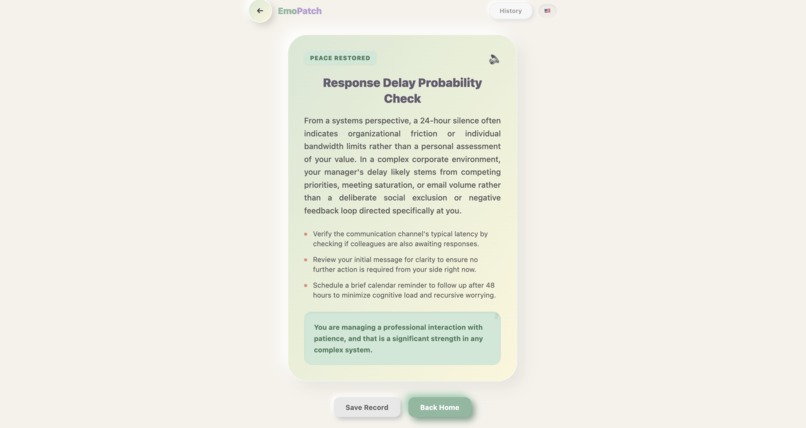

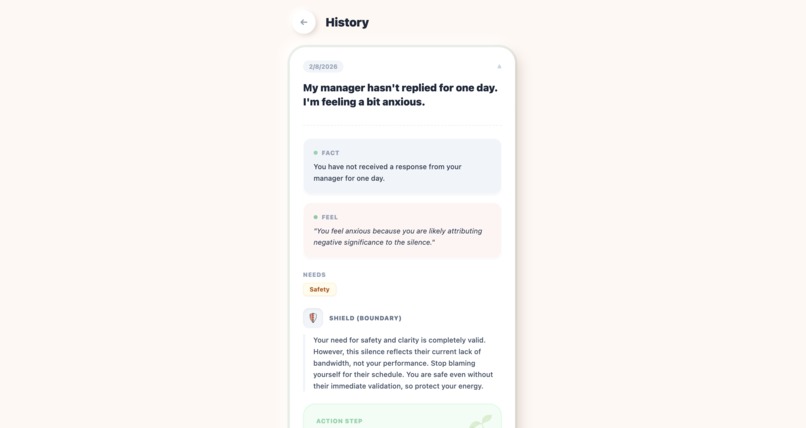

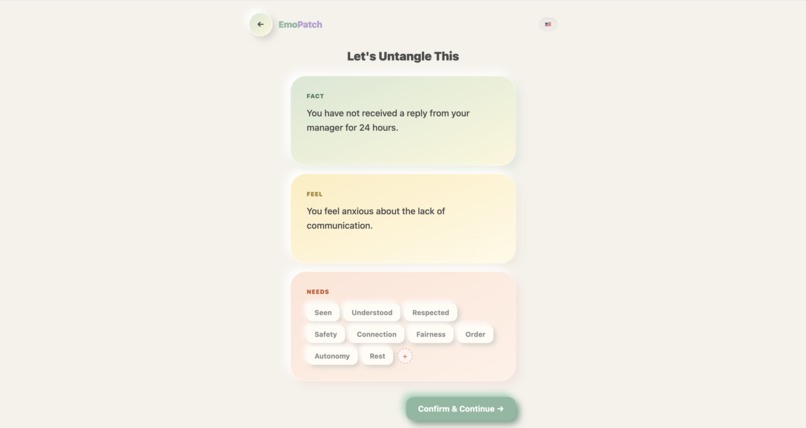

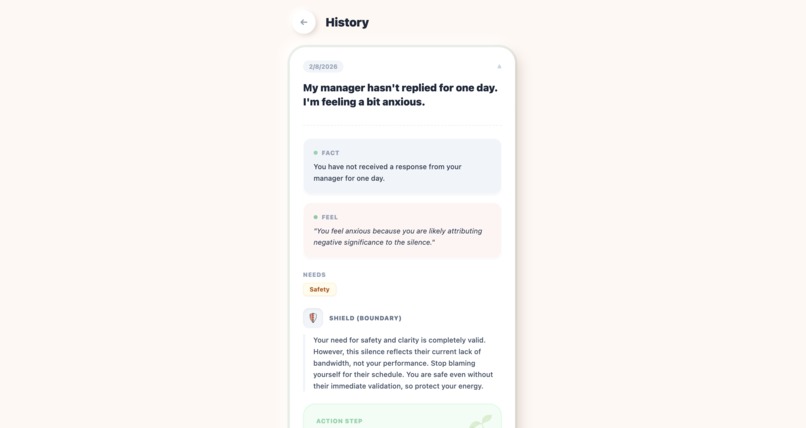

"Cognitive Untangling": Gemini 3 automatically separates objective Facts from subjective Feelings and Needs.

-

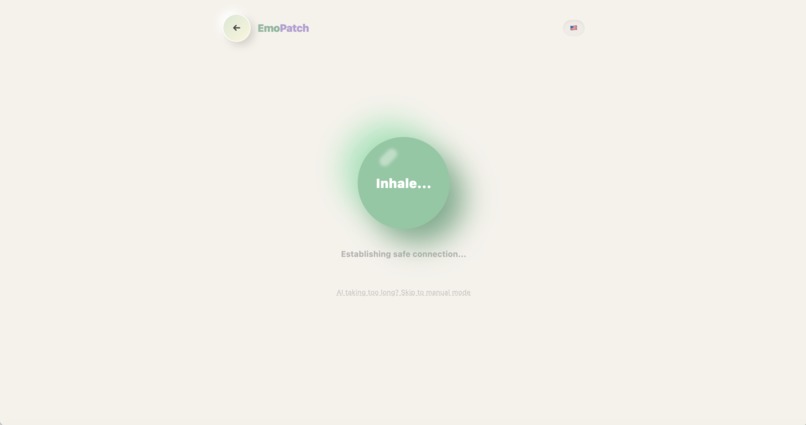

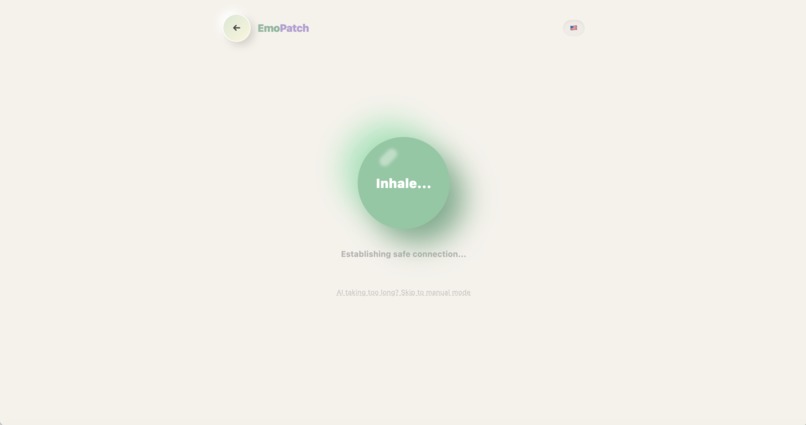

Soft UI Transition: Turning API latency into a moment that physically grounds the user before analysis.

-

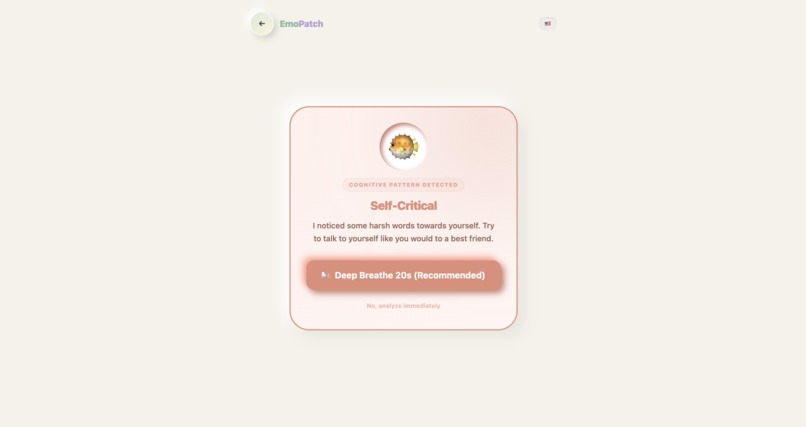

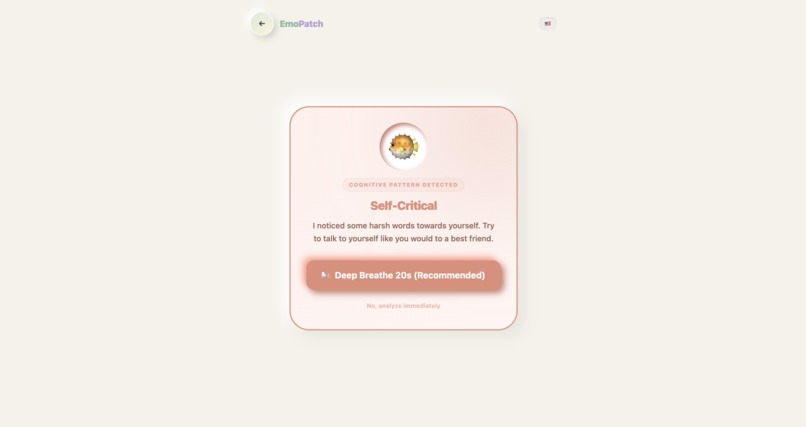

The "Safety Intercept": Identifying unhelpful thinking patterns and pausing for a 20s breathing reset before analysis.

-

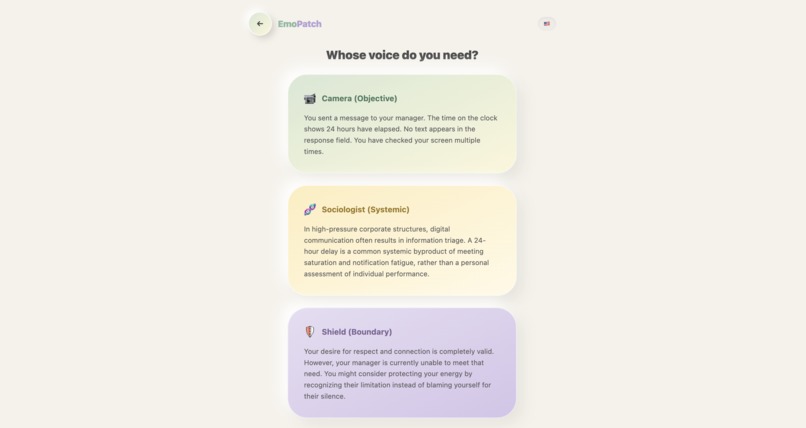

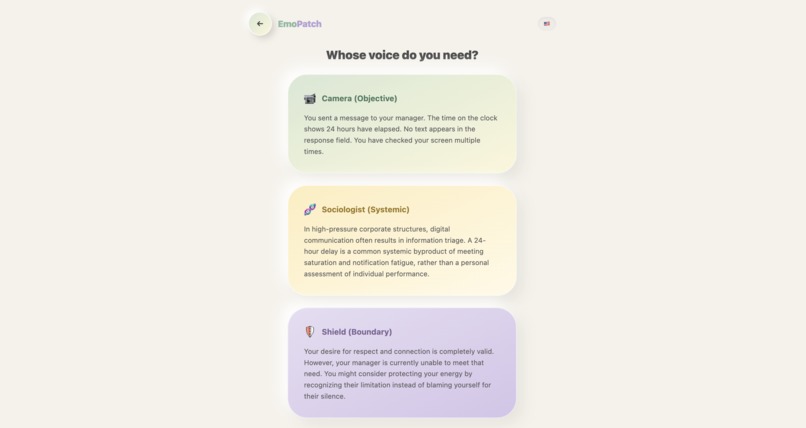

"Multi-Perspective Reframing": Gemini 3 generates three distinct cognitive lenses (Objective, Systemic, Boundary) to break the anxiety loop.

-

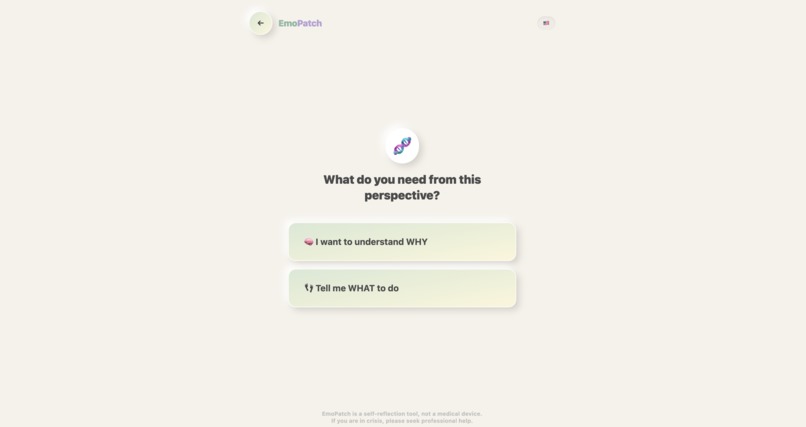

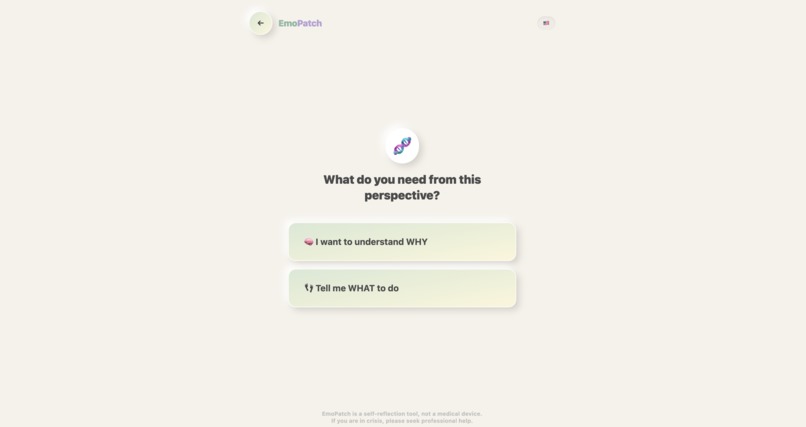

"Tailored Guidance": EmoPatch asks for the user's intent to ensure Gemini 3 generates the most relevant cognitive support.

-

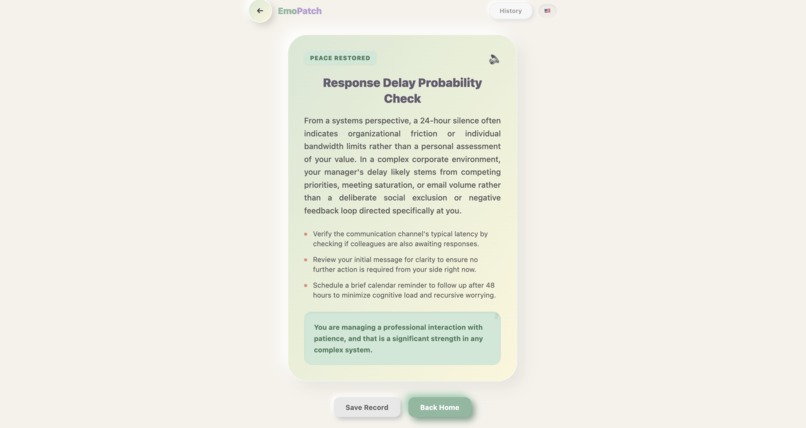

"The Cognitive Patch": Gemini 3 delivers structured, actionable advice that combines psychological insight with practical next steps.

-

"Privacy First": The History tab allows users to review past journeys locally. All data is stored in IndexedDB instead of external servers.

-

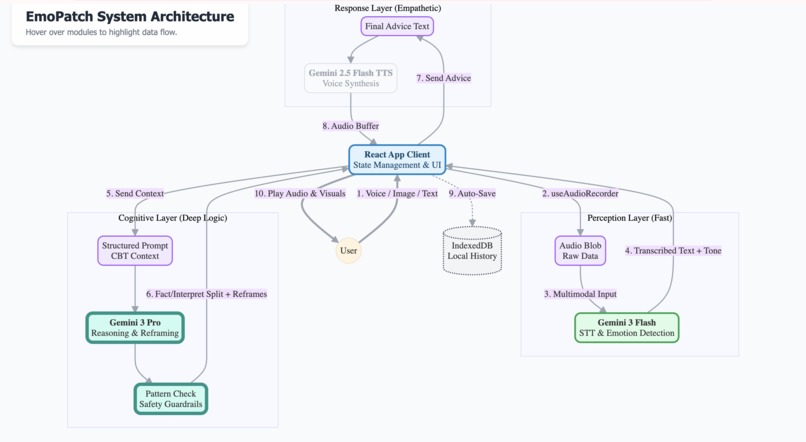

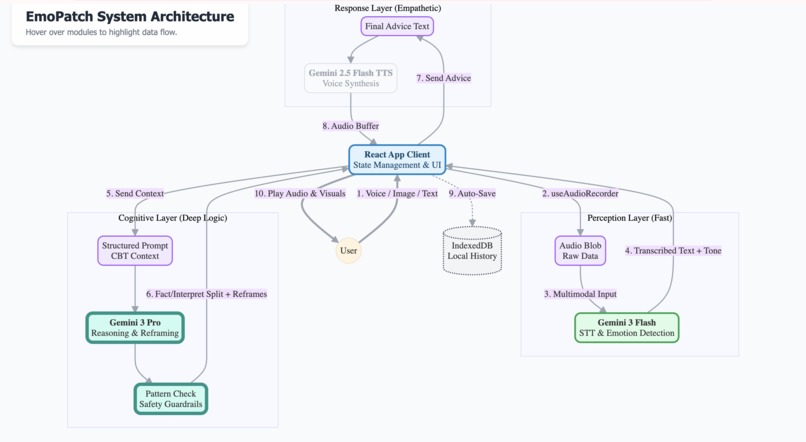

Gemini 3 Architecture: Features Flash perception, Pro cognitive reframing, and robust safety interceptors.

About EmoPatch: A Soft Patch for Hard Moments

[!IMPORTANT] Medical Disclaimer: EmoPatch is a wellness tool for emotional clarity and is not a medical device. It does not provide medical diagnosis or treatment advice. If you are in a crisis, please seek professional help immediately.

Inspiration

As a Highly Sensitive Person (HSP), I often found myself overwhelmed by small triggers like a single ambiguous glance or a "dry text" message. In these moments of high emotional volatility, my mind struggled to distinguish "what actually happened" from "what I feared happened".

I didn't need a diagnosis; I needed clarity. I wanted a "Cognitive Circuit Breaker" to act as a switch between raw facts and overwhelming narratives.

So I built EmoPatch. It is not designed to be a therapist or a medical tool. Instead, it serves as a digital grounding companion. It acts as a "reality check" for those "micro-moments of overwhelm" and helps users pause, process, and regain perspective when their thoughts feel too heavy to carry.

What it does

EmoPatch is a bite-sized emotional clarity application that applies Cognitive Reframing principles to immediate triggers. It acts as a specialized "Thought Sorter":

- The Great Split: It takes your raw input—whether spoken, typed, or captured via image—and separates the objective Fact from the subjective Interpretation.

- Safety Check: It identifies unhelpful thinking patterns (e.g., Catastrophizing, Mind Reading) and interrupts with a "Pause & Breathe" session if it detects high stress.

- Structured Reframing: It offers three distinct cognitive lenses:

  - 📹 The Observer: Dry, mechanical objectivity.

  - 🧬 The Sociologist: Systemic and external perspective.

  - 🛡️ The Shield: Focused on personal agency and boundaries.
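The "Thought Sorter" steps above can be pictured as a small data shape plus one decision. This is only a sketch: the field names, the 0 to 1 stress scale, and the `HIGH_STRESS` threshold are assumptions made for illustration, since the write-up does not say how "high stress" is measured.

```typescript
// Hypothetical shape of one "Thought Sorter" result; all field names are illustrative.
type Distortion = "Catastrophizing" | "Mind Reading";

interface SortedThought {
  fact: string;              // what objectively happened
  interpretation: string;    // the story layered on top of the fact
  distortions: Distortion[]; // unhelpful thinking patterns that were detected
  stressLevel: number;       // assumed scale: 0 (calm) to 1 (overwhelmed)
}

// Assumed threshold; not taken from the project.
const HIGH_STRESS = 0.7;

// Safety Check: decide whether to interrupt with a "Pause & Breathe" session.
function needsPause(thought: SortedThought): boolean {
  return thought.stressLevel >= HIGH_STRESS;
}

const example: SortedThought = {
  fact: 'They replied "ok." two hours later.',
  interpretation: "They must be angry with me.",
  distortions: ["Mind Reading"],
  stressLevel: 0.8,
};

console.log(needsPause(example)); // true, because 0.8 >= 0.7
```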

How I built it

The project is built with a focus on Emotional Engineering and AI Orchestration.

Technical Stack

- Frontend: React 18 & TypeScript (Vite).

- Visual Persona: "Claymorphism" UI for a soft, tactile feel.

- Motion: Framer Motion for "breathing" UI transitions.

- Persistence: Local-first privacy using IndexedDB (idb-keyval).
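As a rough illustration of the local-first persistence, the sketch below keeps the History list behind a tiny async key-value interface. The `KeyValueStore` interface, the `JourneyEntry` fields, and the `emopatch-history` key are hypothetical names, not the project's actual code. idb-keyval's `get` and `set` functions fit this interface, so in the browser one could pass `{ get, set }` imported from `idb-keyval`; the in-memory store is only a stand-in so the sketch runs anywhere.

```typescript
// Minimal async key-value contract (hypothetical). idb-keyval's `get` and `set`
// match this shape, so they can be supplied in the browser.
interface KeyValueStore {
  get(key: string): Promise<unknown>;
  set(key: string, value: unknown): Promise<void>;
}

// Illustrative record for one past "journey"; field names are assumptions.
interface JourneyEntry {
  id: string;
  createdAt: string; // ISO timestamp
  fact: string;
  interpretation: string;
}

const HISTORY_KEY = "emopatch-history"; // hypothetical key name

// Read the saved history, falling back to an empty list on first use.
async function loadHistory(store: KeyValueStore): Promise<JourneyEntry[]> {
  const saved = await store.get(HISTORY_KEY);
  return Array.isArray(saved) ? (saved as JourneyEntry[]) : [];
}

// Append one entry and write the whole list back to the local store.
async function appendJourney(store: KeyValueStore, entry: JourneyEntry): Promise<void> {
  const history = await loadHistory(store);
  await store.set(HISTORY_KEY, [...history, entry]);
}

// In-memory stand-in so the sketch runs outside a browser.
function createMemoryStore(): KeyValueStore {
  const data = new Map<string, unknown>();
  return {
    get: async (key) => data.get(key),
    set: async (key, value) => {
      data.set(key, value);
    },
  };
}
```

Nothing in this flow touches a network API: the only I/O is through the injected store, which is what keeps the history on the device.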

AI Logic & Math

I utilized a multi-model orchestration strategy to balance reasoning depth and speed. The core logic of "Reframing" can be represented as:

$$ Observation = Fact + Interpretation $$

By isolating the Fact from the Interpretation, I can compute new perspectives $P_n$:

$$ P_n(Fact) = f(Fact, Context_{Persona_n}) $$
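One way to read this formula in code: each persona contributes a fixed context, and only the isolated Fact is passed through. The persona instructions and the `ReframeRequest` shape below are invented for illustration (the real system prompts are not published), and the sketch stops at building the request rather than calling any model API.

```typescript
type PersonaId = "observer" | "sociologist" | "shield";

// Hypothetical persona contexts, paraphrased from the lens descriptions.
const PERSONA_CONTEXT: Record<PersonaId, string> = {
  observer: "Describe only what a camera would record. No motives, no adjectives.",
  sociologist: "Explain the event through external and systemic factors.",
  shield: "Focus on the user's agency and on the boundaries they can set.",
};

// Illustrative request shape; not tied to any particular SDK.
interface ReframeRequest {
  persona: PersonaId;
  systemInstruction: string;
  userContent: string;
}

// f(Fact, Context_Persona_n): build the request for lens n.
// Only the fact is forwarded; the interpretation is deliberately left out.
function buildReframeRequest(fact: string, persona: PersonaId): ReframeRequest {
  return {
    persona,
    systemInstruction: PERSONA_CONTEXT[persona],
    userContent: `Fact: ${fact}`,
  };
}

// P_1..P_3: one request per persona for the same fact.
function buildAllPerspectives(fact: string): ReframeRequest[] {
  return (Object.keys(PERSONA_CONTEXT) as PersonaId[]).map((persona) =>
    buildReframeRequest(fact, persona),
  );
}
```

Keeping the persona context separate from the fact makes it straightforward to check that every lens sees the same input and differs only in its instruction.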

I also engineered a Breathing Buffer to handle the latency of the gemini-3-pro-preview model. The breathing cycle follows a specific cadence:

$$ T_{cycle} = T_{inhale} + T_{exhale} = 4s + 6s = 10s $$

By enforcing this cycle (10s for the standard flow, or 20s, meaning two cycles, for stabilization), the user spends the wait in paced breathing, which is intended to engage the parasympathetic nervous system, while the AI finishes its deep reasoning in the background.
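A minimal sketch of such a buffer, assuming the model call is available as a promise: the result is held back until both the call and the breathing timer have finished. The function and constant names are hypothetical; only the 4s and 6s timings come from the cadence above.

```typescript
const INHALE_MS = 4000;
const EXHALE_MS = 6000;
const CYCLE_MS = INHALE_MS + EXHALE_MS; // 10 s per breathing cycle

function sleep(ms: number): Promise<void> {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Resolves with the result of `work`, but never before `cycles` full
// breathing cycles have elapsed. If `work` is slower than the breathing
// session, the caller simply waits for `work`.
async function withBreathingBuffer<T>(
  work: Promise<T>,
  cycles: number = 1,
  cycleMs: number = CYCLE_MS,
): Promise<T> {
  const [result] = await Promise.all([work, sleep(cycles * cycleMs)]);
  return result;
}
```

Under these assumptions, the standard flow would call `withBreathingBuffer(modelCall)` and the stabilization flow `withBreathingBuffer(modelCall, 2)`. Because the model promise is created before the timer starts, the two waits overlap rather than add up.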

Challenges I ran into

- The "Latency Gap": The most capable reasoning models (like Gemini 3 Pro) take several seconds to process complex emotional nuance. I solved this by turning the waiting period into a feature—the Breathing Circle—which provides 10-20 seconds of grounding value while masking the AI's processing time.

- Persona Consistency: Ensuring that the "Sociologist" didn't sound like the "Observer" required rigorous system prompt engineering. I treated each persona as a distinct cognitive function rather than just a different "tone of voice".

- Bilingual Nuance: Translating psychological concepts between English and Chinese required more than literal translation; it required cultural adaptation of how safety and boundaries are communicated.

Accomplishments that I'm proud of

- Zero-Backend Privacy: I successfully implemented a high-power AI tool that stores nothing on a central server. All history remains strictly in the user's browser.

- Vibe Engineering: Achieving a "Claymorphic" aesthetic that actually feels calming rather than just "trendy".

- Multimodal "Reading Between the Lines": Developed a pipeline where users can upload screenshots of stressful conversations. EmoPatch leverages Gemini 3's native multimodal vision to detect visual social cues—like the specific impact of a period in a short reply or the visual weight of "seen" receipts—providing a level of objective analysis that text-only models simply cannot reach. This transforms a "cold" screenshot into a "warm" logic map.

What I learned

I learned that Micro-Intervention is more effective than "Long-Form Therapy" in a moment of acute overwhelm. When someone is in a "thought loop", they don't need a 30-minute conversation; they need a 30-second logic interruption. I also learned that the texture of a UI (soft edges, warm colors) is just as important as the logic of the code when building for mental wellness.

What's next for EmoPatch

- Expanded CBT Toolkit: Adding filters for more formally defined cognitive distortions, such as "Labeling" and "Personalization".

- Personalized Voice: Enabling users to choose which "Persona" speaks the final advice based on their specific comfort needs.

- "Fact vs. Feeling" Training: A gamified micro-exercise for users to practice identifying objective facts versus subjective interpretations in their own thoughts.

- Emotional Trend Analytics: A visual "Change Curve" to track and summarize emotional shifts and significant life events over time based on local history analysis.

Built With

- ai

- audio

- css3

- framer

- gemini-3-flash

- gemini-3-pro

- html5

- indexeddb

- javascript

- react

- tailwind

- typescript

- vite

- web
