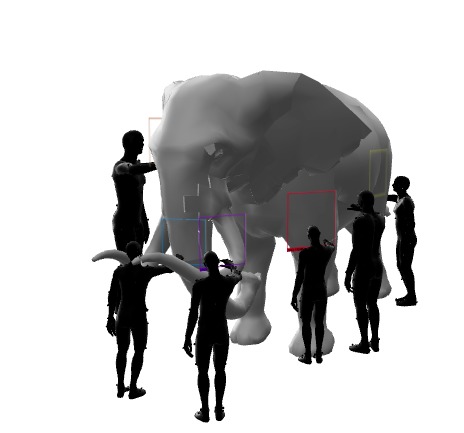

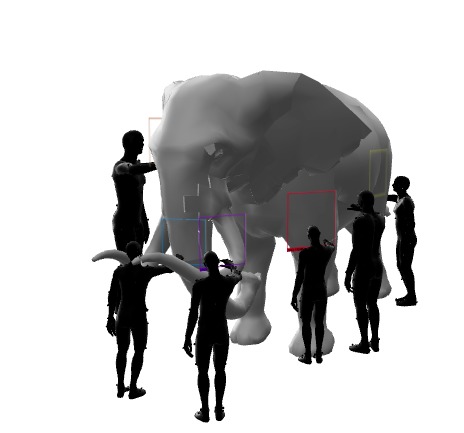

seventh AR experience: 3D model of the whole elephant

-

example AR marker. The pattern is written in Braille letters. The marker unlocks the first AR experience when scanned with a phone.

-

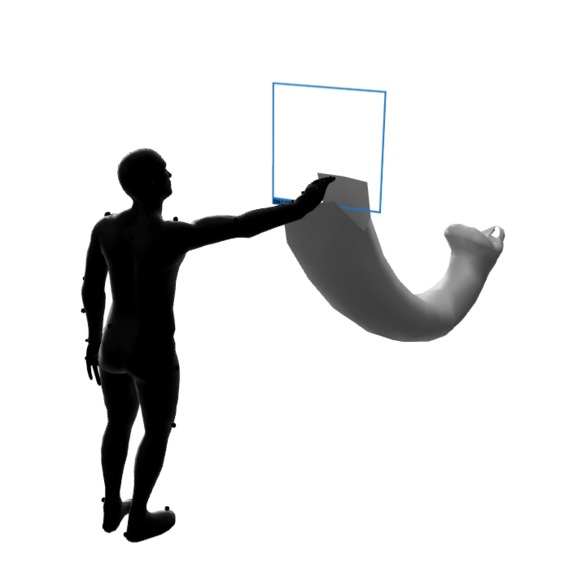

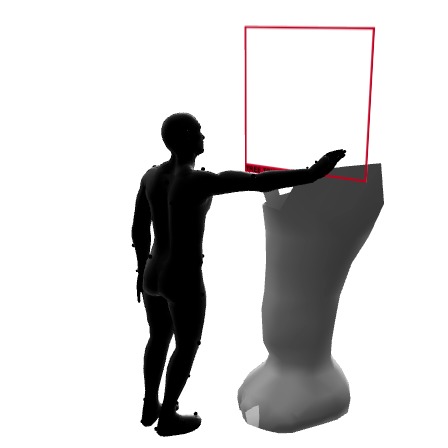

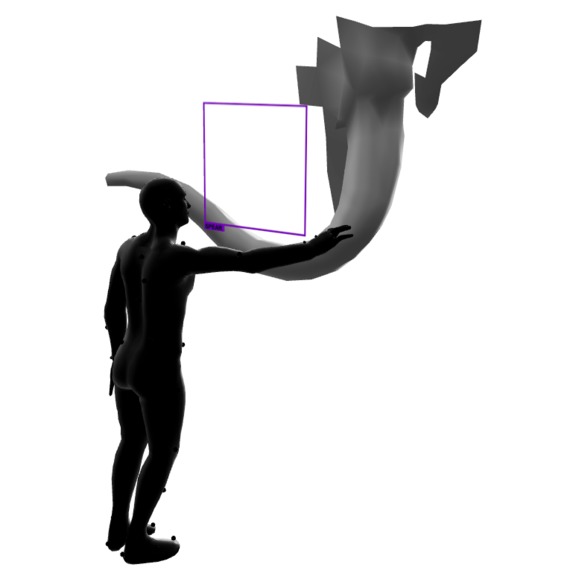

first AR experience: ear of the elephant, the blind person thinks he is touching a fan.

-

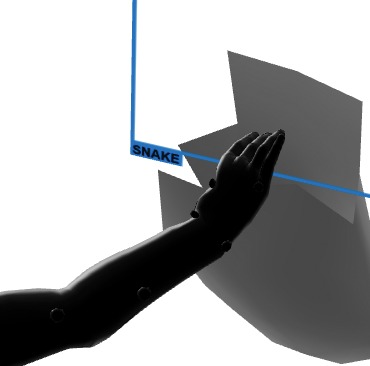

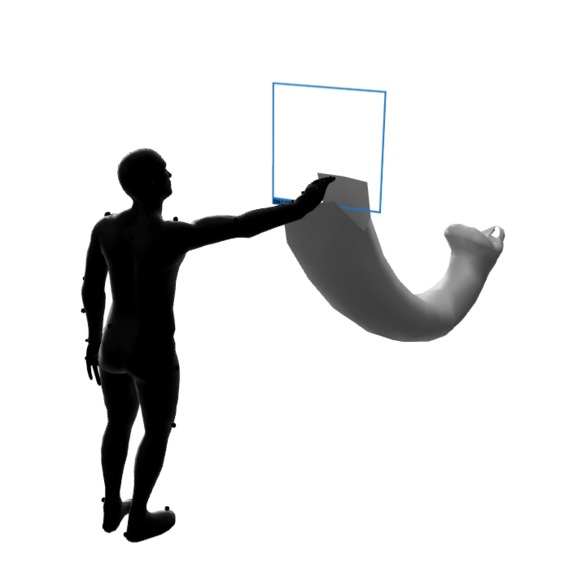

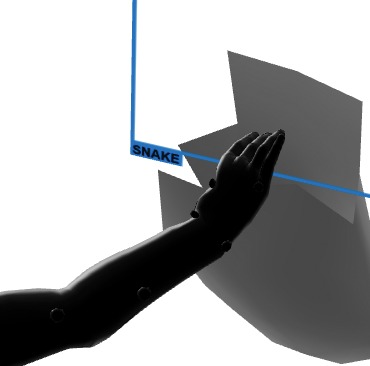

second AR experience: trunk of the elephant, the blind person thinks he is touching a snake.

-

detail of the second AR experience: the label "snake"

-

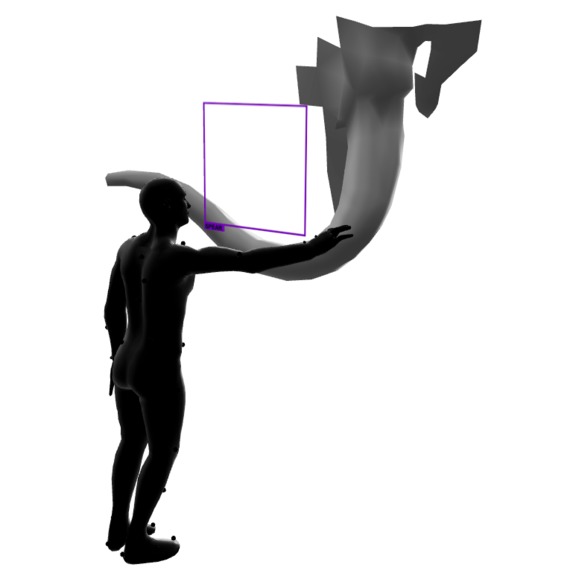

third AR experience: tail of the elephant, the blind person thinks he is touching a rope.

-

fourth AR experience: tusk of the elephant, the blind person thinks he is touching a spear.

-

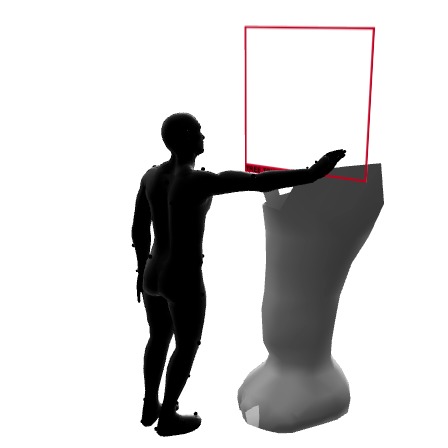

fifth AR experience: a foot of the elephant, the blind person thinks he is touching a tree trunk.

-

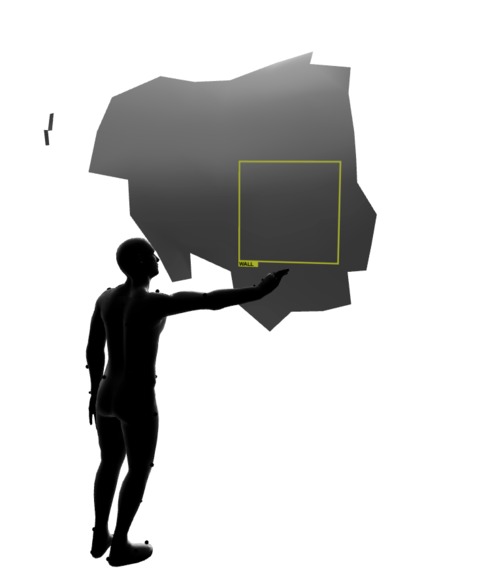

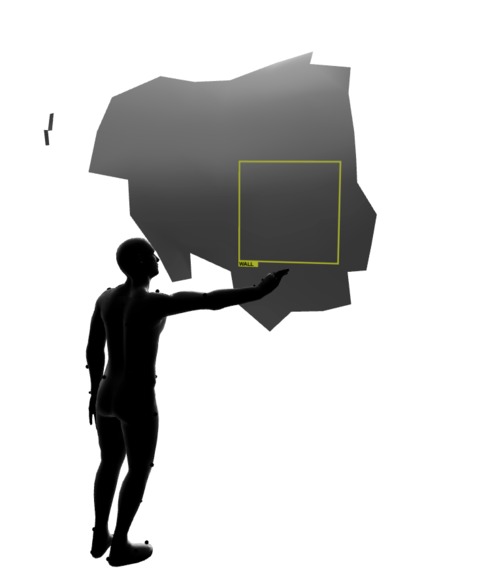

sixth AR experience: side of the elephant, the blind person thinks he is touching a wall.

COMPUTER VISION, THE BLIND MEN AND THE ELEPHANT

Concept:

In the parable of the blind men and the elephant*, each man touches only one part of the animal and fails to perceive the whole. Object detection in computer vision works in a similar way: the computer tries to find and recognize a pattern, detect an object, and display the object's label. To detect objects, the computer first has to be taught what an object looks like so that it can tell pixel patterns apart, and which labels to assign; those labels are defined by a human being. The computer's knowledge is limited, shaped and biased by what we feed it.

There are 6 AR (Augmented Reality) markers with 6 different patterns, each displaying text (tusk, foot, side, tail, trunk, elephant) written in Braille. Each marker has a different AR experience embedded in it, which is triggered and deciphered when the user points the phone camera at the marker. Each marker-based AR experience shows a different fragment of the elephant (ear, trunk, tail, side, foot and tusk). Similar to object detection, where content is linked to a label, each marker and its pattern are attached to a unique AR experience with a label. The AR experiences represent the pixel patterns on the markers as 3D models; they are the content behind the marker's machine-readable, decipherable code. Each AR elephant fragment carries the label of the object that a blind man mistook that part of the elephant for (labels: rope, tree trunk, fan, spear, wall and snake). Only one marker is in the spotlight at any moment, with one AR experience that can be unlocked and viewed with the phone; the other markers are hidden, and the fragments they contain remain blind spots. Like each of the blind men touching only one puzzle piece of the elephant, the phone can see only one marker at a time and fails to see the whole elephant puzzle. A video loop displays the markers one at a time and switches to another marker after 20 seconds. A 7th marker, which appears at the end of the video, shows the whole elephant in AR.
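The link between fragment, label and video loop can be sketched as a small lookup. This is only an illustration, not the project's code: the order follows the gallery captions, the 20-second interval comes from the description, and it is an assumption that the 7th marker is also shown for 20 seconds. The names `experiences` and `experienceAt` are made up for this sketch.

```javascript
// Experiences in the order of the gallery captions: the fragment shown in AR
// and the label the blind man gives it.
const experiences = [
  { fragment: "ear", label: "fan" },
  { fragment: "trunk", label: "snake" },
  { fragment: "tail", label: "rope" },
  { fragment: "tusk", label: "spear" },
  { fragment: "foot", label: "tree trunk" },
  { fragment: "side", label: "wall" },
  // 7th marker at the end of the loop; no label is stated in the description.
  { fragment: "whole elephant", label: null },
];

const SECONDS_PER_MARKER = 20; // interval given in the description

// Return the experience whose marker is on screen at a given playback time.
// Assumption: every marker, including the 7th, is shown for the same 20 s.
function experienceAt(seconds) {
  const loopLength = experiences.length * SECONDS_PER_MARKER;
  // Wrap the time into one loop, also for negative inputs.
  const t = ((seconds % loopLength) + loopLength) % loopLength;
  return experiences[Math.floor(t / SECONDS_PER_MARKER)];
}
```

For example, `experienceAt(45)` returns the third entry (tail, labeled "rope"): at that moment the phone can unlock only this fragment, and the other six stay blind spots.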

*parable of the blind men and the elephant: “A group of blind men heard that a strange animal, called an elephant, had been brought to the town, but none of them were aware of its shape and form. Out of curiosity, they said: “We must inspect and know it by touch, of which we are capable”. So, they sought it out, and when they found it they groped about it. The first person, whose hand landed on the trunk, said, “This being is like a thick snake”. For another one whose hand reached its ear, it seemed like a kind of fan. As for another person, whose hand was upon its leg, said, the elephant is a pillar like a tree-trunk. The blind man who placed his hand upon its side said the elephant, “is a wall”. Another who felt its tail, described it as a rope. The last felt its tusk, stating the elephant is that which is hard, smooth and like a spear.” (Wikipedia)

Inspiration

Parable of the blind men and the elephant https://en.wikipedia.org/wiki/Blind_men_and_an_elephant

What it does

The work is a multi-marker AR experience that reflects on object detection and the bias that results from labeling. Each AR experience is the visual representation, the embodied content, of an AR marker's pattern. What content is attached to a marker remains a mystery to the human eye until the phone scans the marker's machine-readable code.

How I built it

I built it using AR.js, created custom AR markers, and used the 3D modeling software Blender.
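A minimal sketch of how several pattern markers can live in one AR.js scene, assuming the A-Frame flavor of AR.js. `a-marker` with `type="pattern"` and `url`, the `gltf-model` component and `a-text` are existing AR.js / A-Frame features; the file paths (`markers/ear.patt`, `models/ear.glb`) and the helper names are hypothetical and do not come from the project.

```javascript
// Hypothetical asset layout: markers/<part>.patt and models/<part>.glb are
// illustrative names, not the project's actual files.
const parts = [
  { part: "ear", label: "fan" },
  { part: "trunk", label: "snake" },
  { part: "tail", label: "rope" },
  { part: "tusk", label: "spear" },
  { part: "foot", label: "tree trunk" },
  { part: "side", label: "wall" },
];

// Build one <a-marker> per elephant fragment. Each marker carries its own
// 3D model and text label, so several experiences can share one page.
function markerMarkup({ part, label }) {
  return [
    `<a-marker type="pattern" url="markers/${part}.patt">`,
    `  <a-entity gltf-model="url(models/${part}.glb)"></a-entity>`,
    `  <a-text value="${label}" align="center" position="0 1 0"></a-text>`,
    `</a-marker>`,
  ].join("\n");
}

// Wrap all markers in a single AR.js scene with one shared camera.
function sceneMarkup(list) {
  const markers = list.map(markerMarkup).join("\n");
  return [
    `<a-scene embedded arjs>`,
    markers,
    `<a-entity camera></a-entity>`,
    `</a-scene>`,
  ].join("\n");
}
```

The string returned by `sceneMarkup(parts)` could be pasted into the body of a page that already loads A-Frame and AR.js. Models exported from Blender as glTF (`.glb`) can be loaded by `gltf-model` directly.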

Challenges I ran into

Integrating multiple AR markers and their different AR experiences under a single HTML link.

Accomplishments that I am proud of

I managed to figure out how to use multiple markers within the same window.

What I learned

I learned more about AR.js.

What's next for Computer vision, the blind men and the elephant

Add another sensory component to the visual AR experience: I plan to add spatial audio to each AR experience that tells, from the blind person's perspective, what he thinks he is touching and how he came to that conclusion. I also have to resolve a current bug: the custom AR markers with Braille letters don't work yet.
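When checking the marker artwork, it can help to have each marker's text in a form that can be compared programmatically. The following is a minimal sketch that converts a word into Unicode Braille cells, assuming uncontracted English Braille and lowercase letters only. It says nothing about the cause of the bug, and `toBraille` is a hypothetical helper, not part of AR.js.

```javascript
// Dot numbers (1-6) for each letter in uncontracted English Braille.
const DOTS = {
  a: "1", b: "12", c: "14", d: "145", e: "15", f: "124", g: "1245",
  h: "125", i: "24", j: "245", k: "13", l: "123", m: "134", n: "1345",
  o: "135", p: "1234", q: "12345", r: "1235", s: "234", t: "2345",
  u: "136", v: "1236", w: "2456", x: "1346", y: "13456", z: "1356",
};

// Unicode Braille Patterns start at U+2800; dot n sets bit (n - 1).
function toBraille(word) {
  return [...word.toLowerCase()]
    .map((ch) => {
      if (ch === " ") return "\u2800"; // blank Braille cell
      const dots = DOTS[ch];
      if (!dots) throw new Error(`No Braille mapping for "${ch}"`);
      const bits = [...dots].reduce(
        (acc, d) => acc | (1 << (Number(d) - 1)),
        0
      );
      return String.fromCodePoint(0x2800 + bits);
    })
    .join("");
}
```

Calling `toBraille("tusk")` yields four Braille cells, one per letter, which can be compared against the dots drawn on the corresponding marker.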

Built With

- ar

- ar.js

- augmented-reality

- blender

- html
