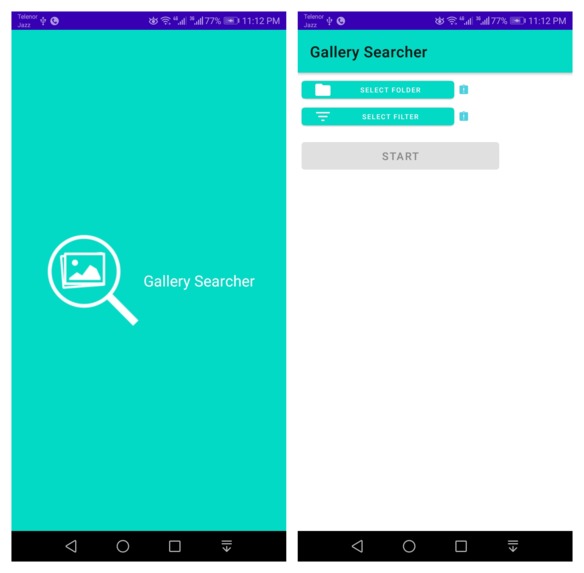

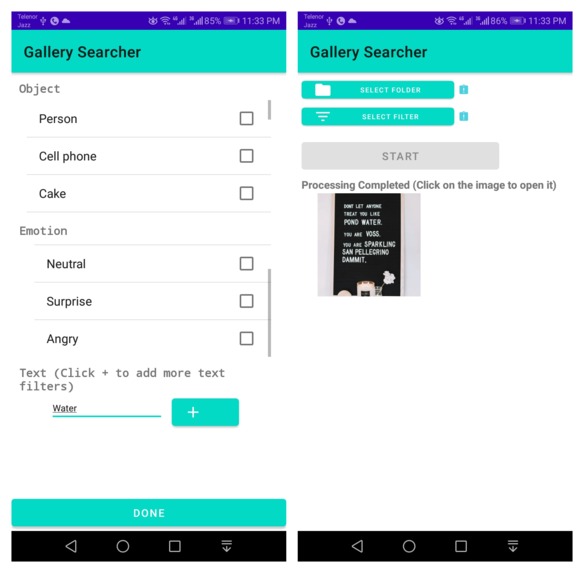

Splash Screen and Main menu

-

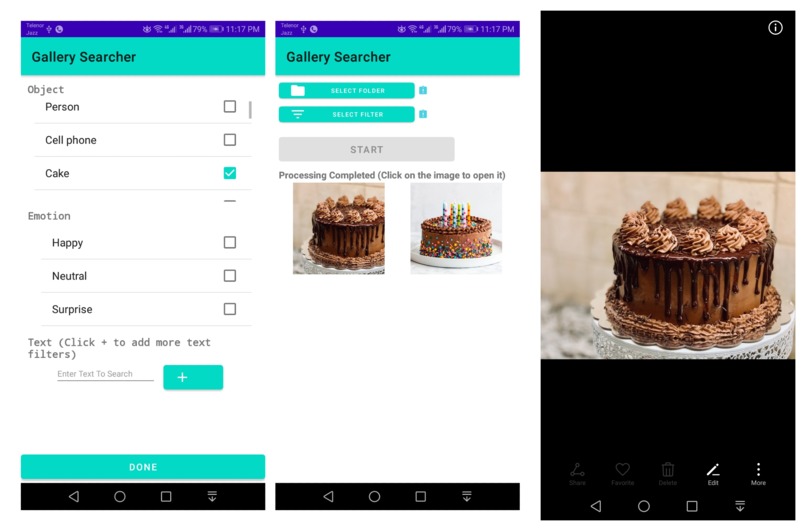

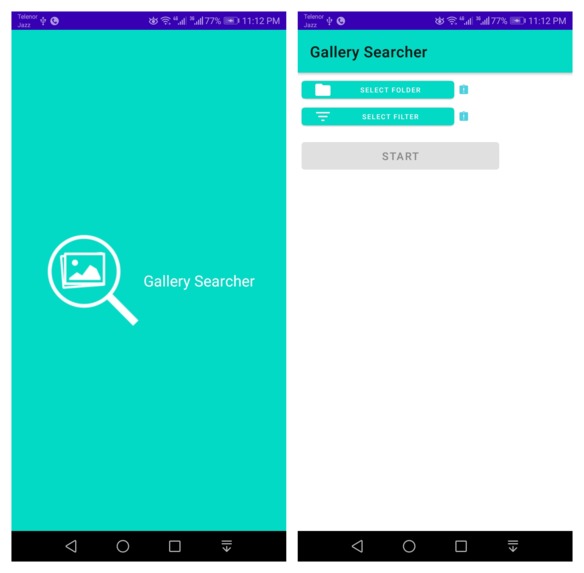

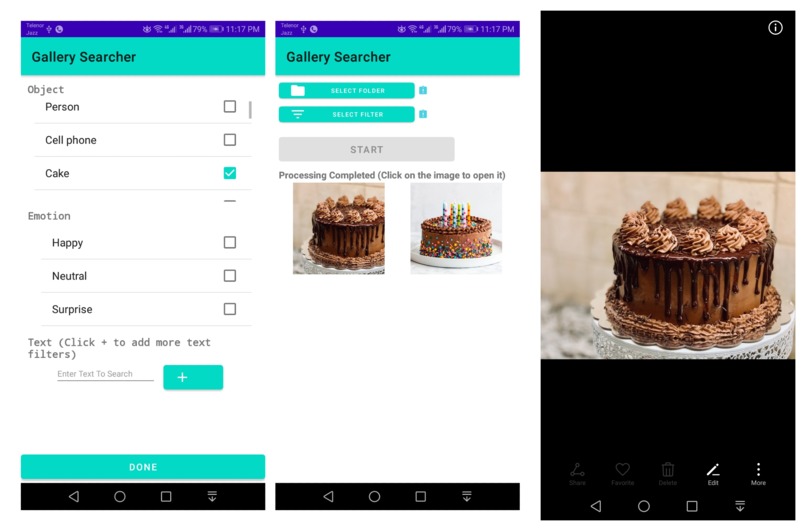

Object Detection Filter for "Cake" with results. Tapping the first image result opens it in the gallery, as shown in the rightmost image

-

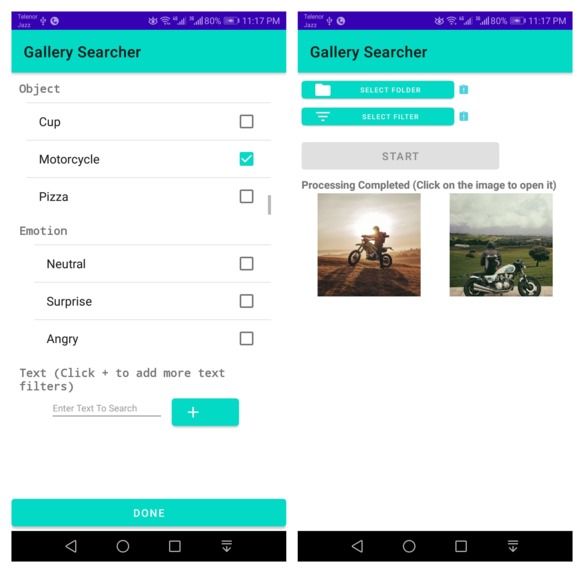

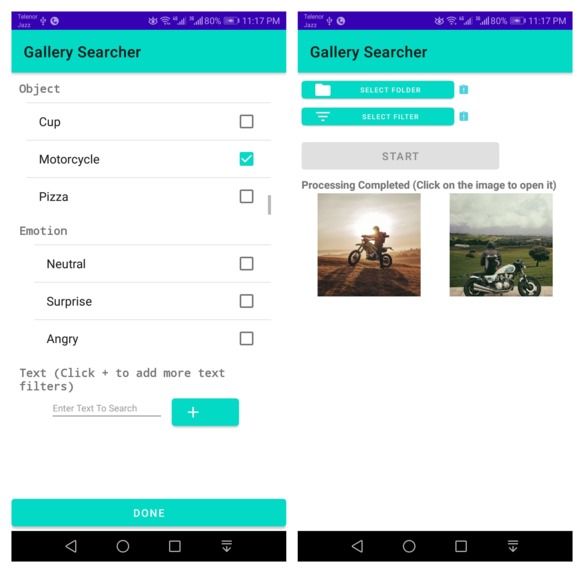

Object Detection Filter for "Motorcycle" with results

-

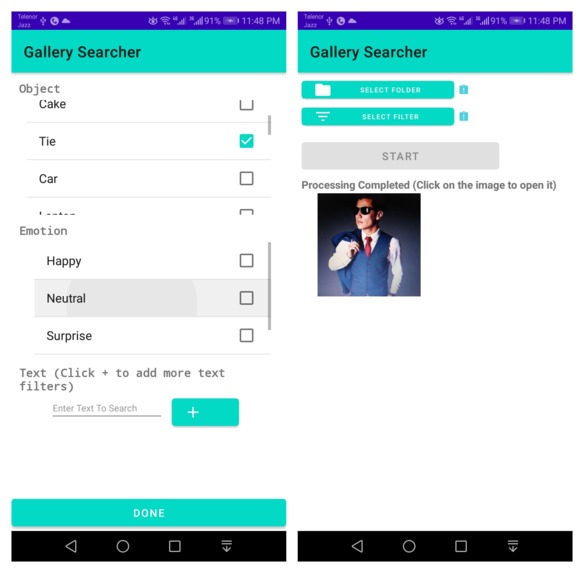

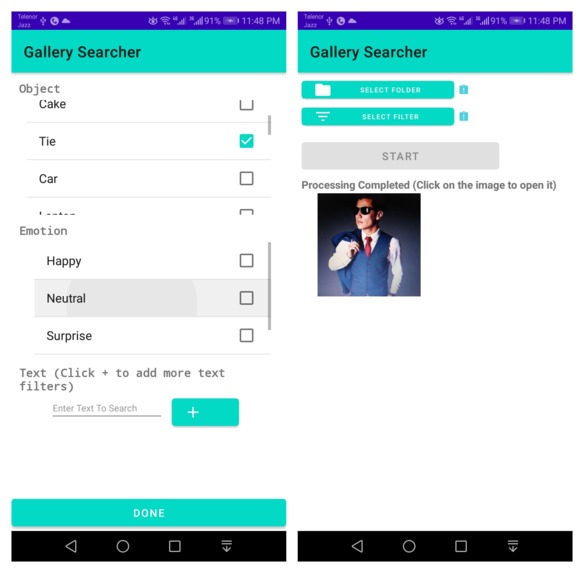

Object Detection Filter for "Tie" with results (can be used to find formal images)

-

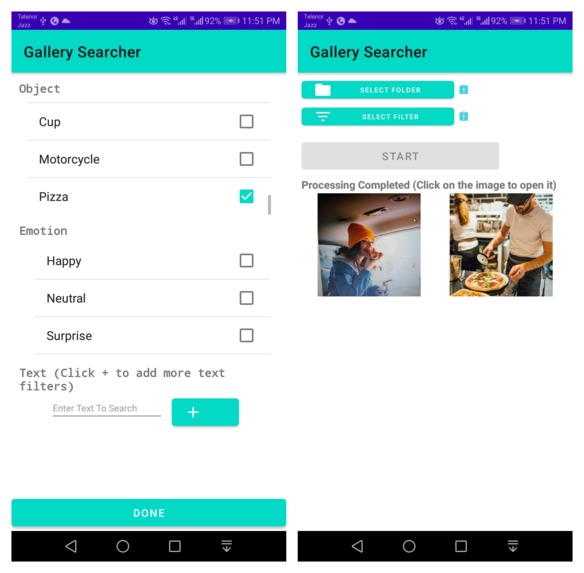

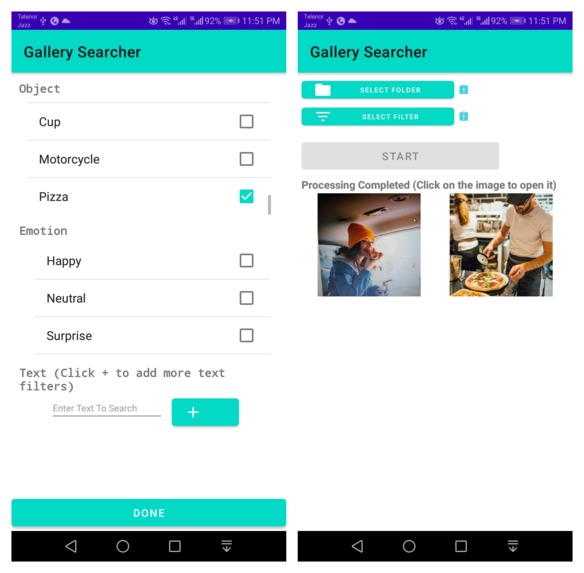

Object Detection Filter for "Pizza" with results

-

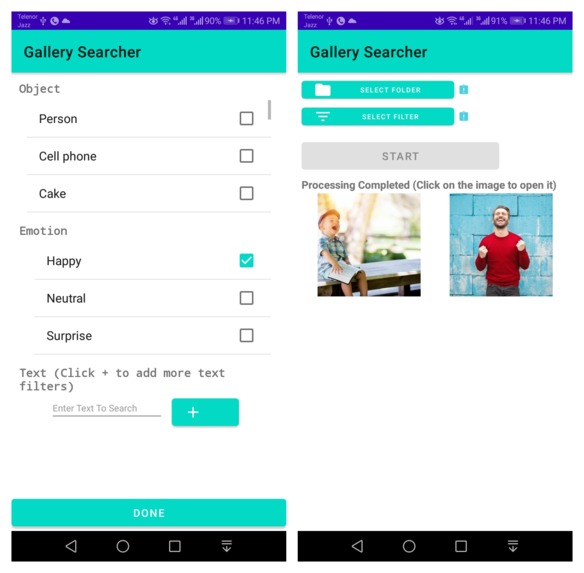

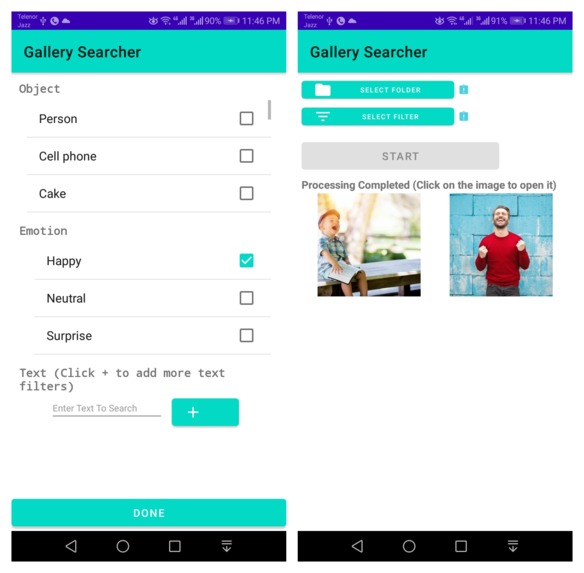

Emotion Detection Filter for "Happy" emotion with results

-

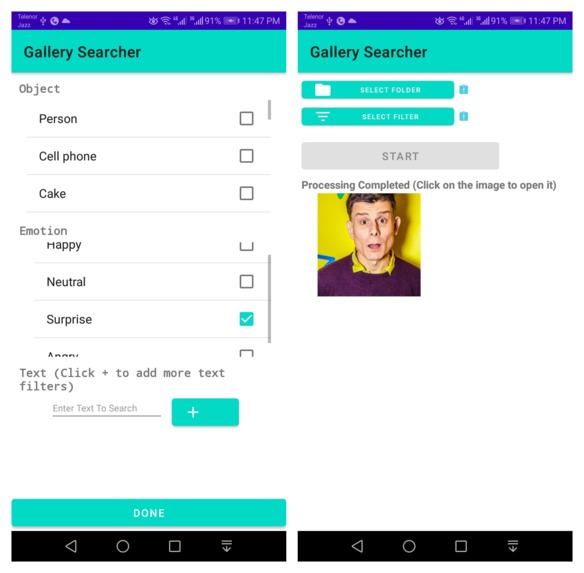

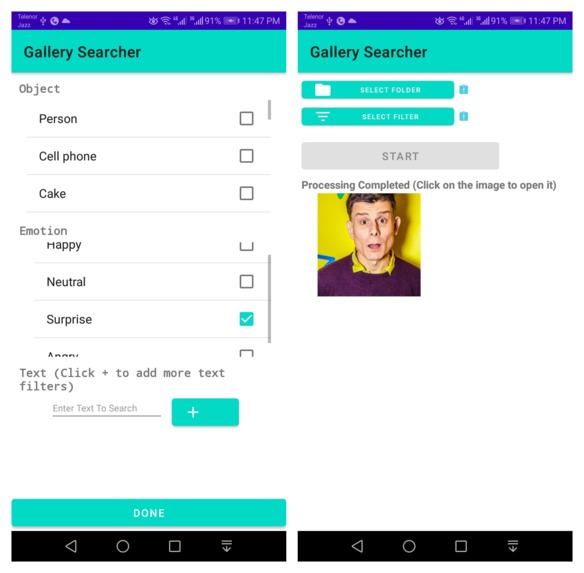

Emotion Detection Filter for "Surprise" emotion with results

-

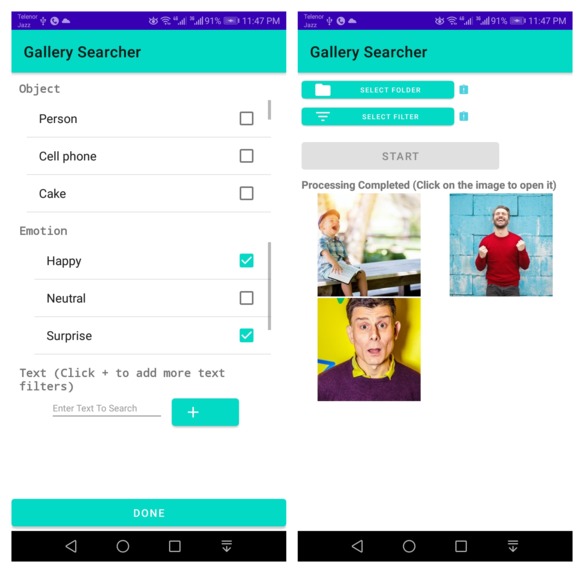

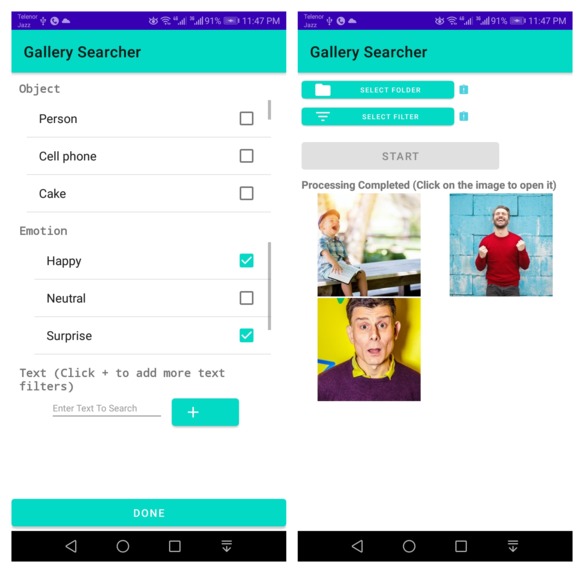

Emotion Detection Filter for "Happy" emotion and "Surprise" emotion with results

-

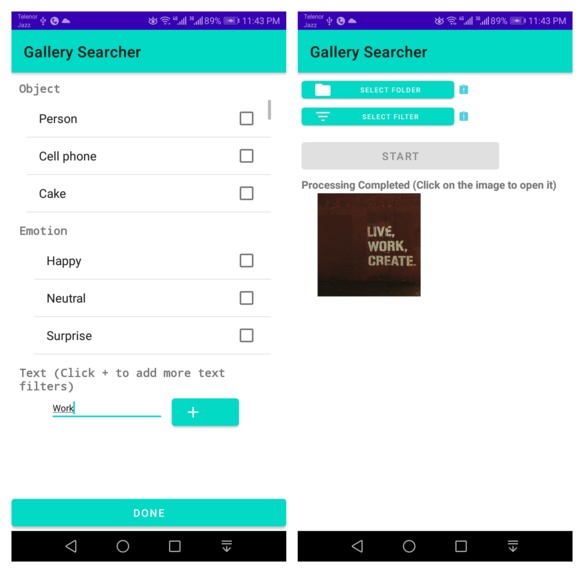

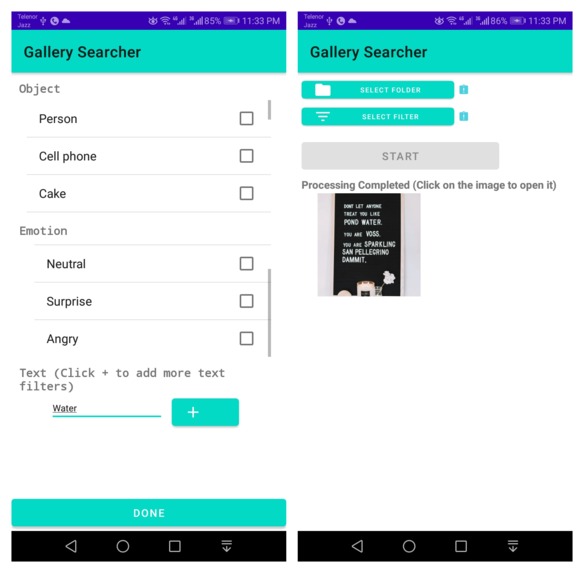

Text Detection for word "Water" with results

-

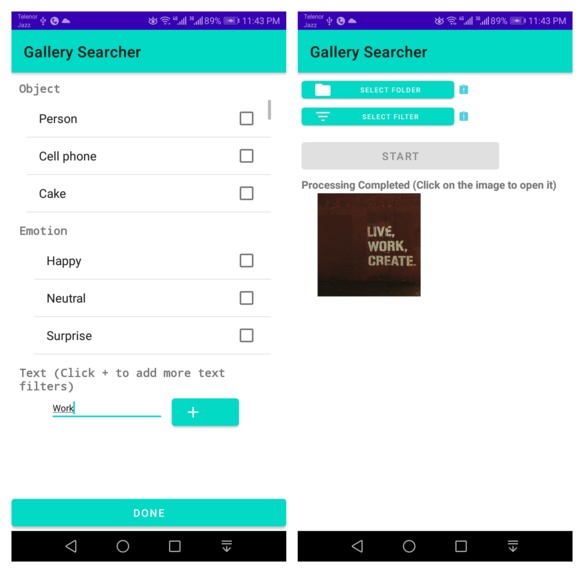

Text Detection for word "Work" with results

-

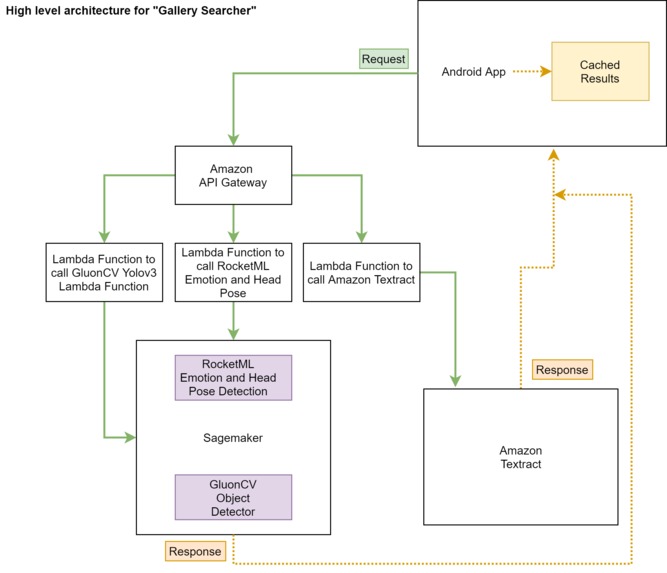

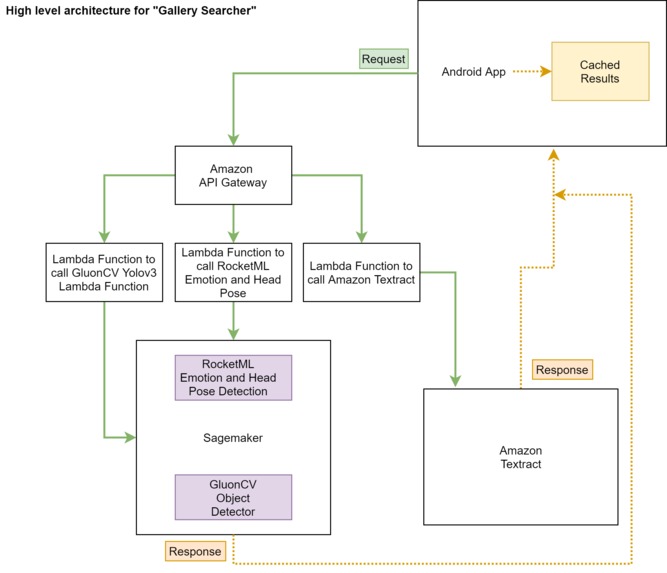

High-level architecture diagram showing the complete flow of a request, including the AWS services used.

Inspiration

I took part in this hackathon because I wanted to build something special: an app that solves a common problem using "Machine Learning", but without going through the lengthy process of training models myself. I also wanted to learn about AWS, and building something usable in real life seemed like a good way to get started.

What it does

Sam likes to take pictures because he loves to revisit past moments, whether it is a special meal he cooked or an important event such as cutting a cake. He also keeps a record of important documents by taking snapshots of them. But finding the one particular picture he needs can be a real hassle. For example, he may want to see all the pictures where he is eating a pizza or cutting a cake, the pictures where he is in formal dress (wearing a tie), the pictures where he looks happy, or the snapshot of an important document such as a certificate or a medical report. Going through the whole gallery every time to find the required picture is a lot of trouble.

Here is "Gallery Searcher". Launch the app, choose a gallery folder, apply filters, wait for the results, done! Yes, it's as simple as it sounds. Here is what Sam would need to do:

- Want to see pictures of cake in my gallery: choose filter, select "cake" in the objects filter category.

- Want to see my pictures in formal dress (tie): choose filter, select "tie" in the objects filter category.

- Want to see the pictures where I look happy: choose filter, select "happy" in the emotions filter category.

- Want to see the picture of the certificate I earned a few months ago: choose filter, enter "certificate" or some text that was written on the certificate, such as "Sam", in the text filter category.

- Want to see the pictures of cars in my gallery: choose filter, select "car" in the objects filter category.

Results are also cached after a search, which shortens the time the next search takes.

How I built it

I built it using several AWS services, including Amazon SageMaker, AWS Lambda, and Amazon API Gateway. Below are the tools and services I used and what each one is for:

- Android Studio: to develop the Android app, which gives the user an interface for selecting the gallery folder and the filters to apply.

- GluonCV YOLOv3 Object Detector model deployed on Amazon SageMaker: to detect objects in images.

- RocketML Emotion and Head Pose Detection model deployed on Amazon SageMaker: to detect emotions in images.

- Amazon Textract: to detect text in images.

- AWS Lambda and Amazon API Gateway: to call SageMaker and Textract from the Android app.

The user selects the folder and the filters and taps the "Start" button. Calls then go to the respective Amazon API Gateway URLs. The responses are parsed and compared against the filters the user selected. If the response for a particular image matches, that image is shown in the results.
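A minimal sketch of the matching step, under some assumptions: the parsed responses have already been reduced to a list of label strings (or one block of detected text) per image, and an image counts as a match when any one of the selected labels is detected. The class and method names (`FilterMatcher`, `matchesAny`, `containsText`, `filterImages`) are hypothetical and only illustrate the idea; they are not taken from the app's code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;

// Hypothetical helper: decides whether an image's detections satisfy the chosen filters.
final class FilterMatcher {

    // True if any detected label equals one of the wanted labels, ignoring case.
    static boolean matchesAny(Set<String> wanted, List<String> detected) {
        for (String label : detected) {
            for (String w : wanted) {
                if (w.equalsIgnoreCase(label.trim())) {
                    return true;
                }
            }
        }
        return false;
    }

    // True if the detected text contains the query, ignoring case. An empty query never matches.
    static boolean containsText(String query, String detectedText) {
        String q = query.trim().toLowerCase(Locale.ROOT);
        return !q.isEmpty() && detectedText.toLowerCase(Locale.ROOT).contains(q);
    }

    // Returns the image paths whose detected labels match, in the map's iteration order.
    static List<String> filterImages(Set<String> wanted, Map<String, List<String>> detectedByImage) {
        List<String> results = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : detectedByImage.entrySet()) {
            if (matchesAny(wanted, entry.getValue())) {
                results.add(entry.getKey());
            }
        }
        return results;
    }
}
```

With this shape, selecting both "Happy" and "Surprise" would return images showing either emotion. Requiring every selected label instead would be a small change to `matchesAny`.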

To improve performance, results are cached after a search, so images can be matched very quickly the next time.
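The caching idea can be sketched as follows. This is an in-memory illustration only: how the app actually stores its cache is not described here, and `DetectionCache`, its cache key (image path plus last-modified time), and the `detector` callback are all assumptions made for the example.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;

// Hypothetical cache: remembers detected labels per image so repeat searches skip the remote call.
final class DetectionCache {
    private final Map<String, List<String>> labelsByKey = new HashMap<>();
    private int remoteCalls = 0;

    // Assumed key format: the path plus the file's last-modified time, so edited files are re-detected.
    static String key(String imagePath, long lastModifiedMillis) {
        return imagePath + "@" + lastModifiedMillis;
    }

    // Returns cached labels if present; otherwise runs the detector once and stores its result.
    List<String> labelsFor(String imagePath, long lastModifiedMillis,
                           Function<String, List<String>> detector) {
        String k = key(imagePath, lastModifiedMillis);
        List<String> cached = labelsByKey.get(k);
        if (cached != null) {
            return cached;
        }
        remoteCalls++;
        List<String> fresh = detector.apply(imagePath);
        labelsByKey.put(k, fresh);
        return fresh;
    }

    // Number of times the detector was actually invoked (useful for checking cache hits).
    int remoteCalls() {
        return remoteCalls;
    }
}
```

Because the cache stores the detected labels rather than the final search results, a later search with a different filter (say "pizza" after "cake") could reuse the same entries without another detection call.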

Challenges I ran into

Calling SageMaker directly from the Android app was a bit challenging. I then found a nice way around it: a Lambda function that calls SageMaker, exposed to the app through Amazon API Gateway.
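From the app's side, this arrangement reduces to a plain HTTPS call. The sketch below shows roughly what such a call could look like using `HttpURLConnection`. The request format is an assumption: the JSON field name `image` and the base64 encoding are made up for illustration, `GatewayClient` is a hypothetical class, and the endpoint URL would be whatever API Gateway assigns to the deployed stage.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical client for an API Gateway endpoint that forwards to a Lambda function.
final class GatewayClient {

    // Assumed payload shape: {"image":"<base64 bytes>"}. The real field name may differ.
    static String buildBody(byte[] imageBytes) {
        return "{\"image\":\"" + Base64.getEncoder().encodeToString(imageBytes) + "\"}";
    }

    // POSTs the JSON body and returns the raw response text; throws on a non-2xx status.
    static String post(String endpointUrl, String jsonBody) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(endpointUrl).openConnection();
        try {
            conn.setRequestMethod("POST");
            conn.setRequestProperty("Content-Type", "application/json");
            conn.setDoOutput(true);
            try (OutputStream out = conn.getOutputStream()) {
                out.write(jsonBody.getBytes(StandardCharsets.UTF_8));
            }
            int status = conn.getResponseCode();
            if (status < 200 || status >= 300) {
                throw new IOException("Gateway returned HTTP " + status);
            }
            try (InputStream in = conn.getInputStream()) {
                ByteArrayOutputStream buffer = new ByteArrayOutputStream();
                byte[] chunk = new byte[4096];
                int n;
                while ((n = in.read(chunk)) != -1) {
                    buffer.write(chunk, 0, n);
                }
                return new String(buffer.toByteArray(), StandardCharsets.UTF_8);
            }
        } finally {
            conn.disconnect();
        }
    }
}
```

On Android a call like `post` has to run off the main thread. The benefit of this design is that the app never needs AWS credentials or the AWS SDK for SageMaker; it only needs the gateway URL.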

Accomplishments that I'm proud of

- I don't know much about machine learning or data mining, but I am inspired by the solutions they provide to problems. That is why I am really proud of building something that makes good use of machine learning (without doing any model training work) and solves a real-life problem.

What I learned

One of the reasons I participated in this hackathon was to get to know AWS. Companies are shifting to cloud platforms for many reasons, so knowing AWS is very important these days. By participating in this hackathon, I learned about AWS Lambda functions, API Gateway, and SageMaker. I also refreshed my Android knowledge. Most importantly, I learned that "It is actually possible to make use of Machine Learning without knowing it".

What's next for Gallery Searcher

A lot more could be done in Gallery Searcher:

- Besides objects, human emotions, and text, face filters could also be included, so that a specific person could be searched for across the whole gallery.

- Automatic recognition could be an option: as soon as a new picture is taken with the camera, a service could be triggered to perform detection, so that the next time the user performs a search, the results for that picture are already cached.

- Filters could also be extended to videos.

- There could be small bugs that must be fixed.

Built With

- amazon-api-gateway

- amazon-textract

- amazon-web-services

- android-studio

- aws-lambda

- github

- gluoncv-yolov3-object-detector

- java

- rocketml-emotion-and-head-pose-detector

- sagemaker
