Over 130 million Americans have diet-sensitive conditions


$5.8 billion nutrition app industry


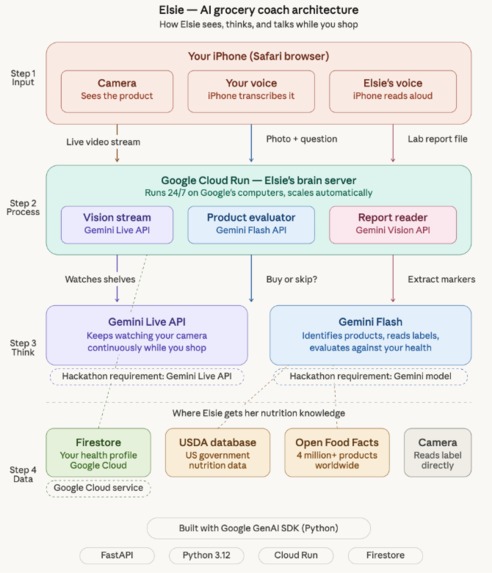

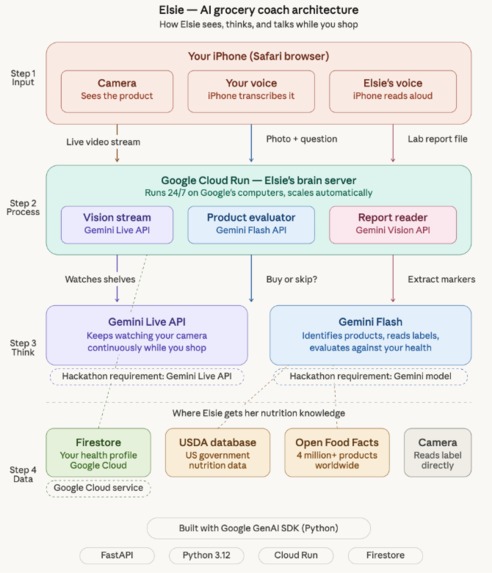

Elsie Architecture


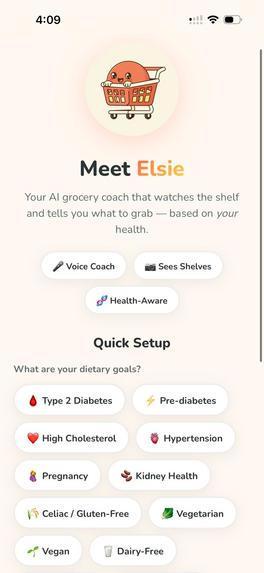

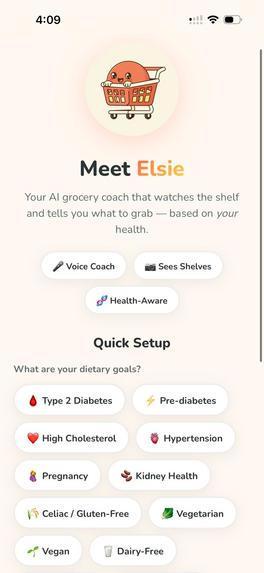

Elsie homepage with options to select any pre-existing conditions or preferences (optional)


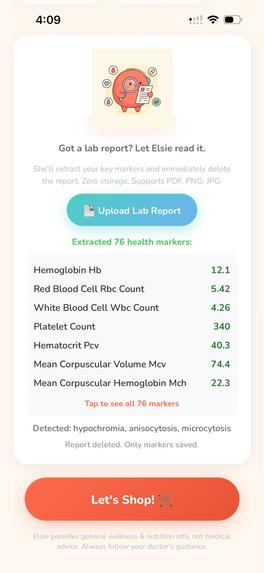

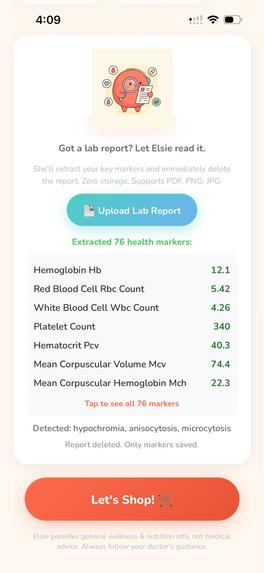

Top 7 health markers identified from the uploaded lab report


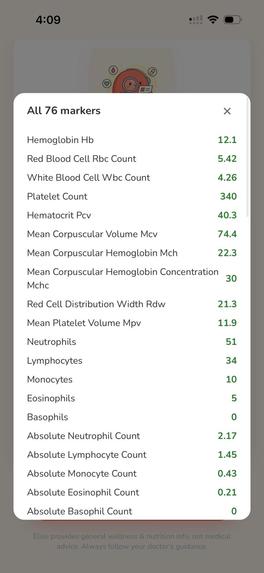

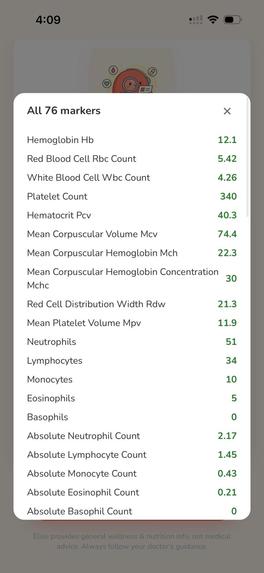

All 76 health markers from the uploaded lab report


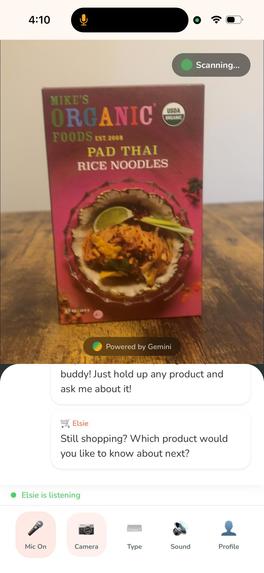

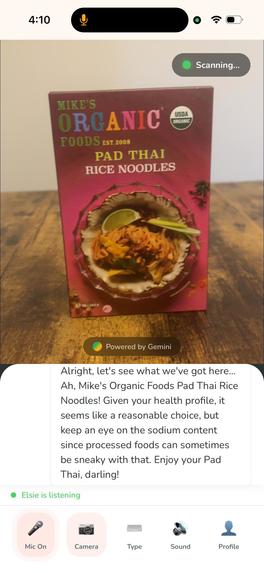

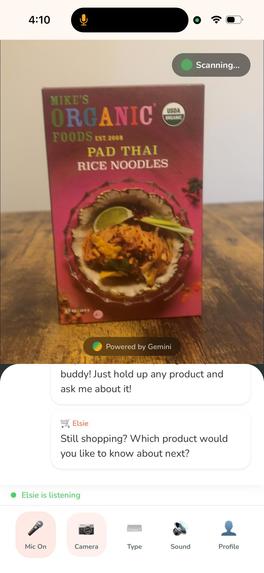

"Let's shop" takes you to the camera, where you simply point at the product


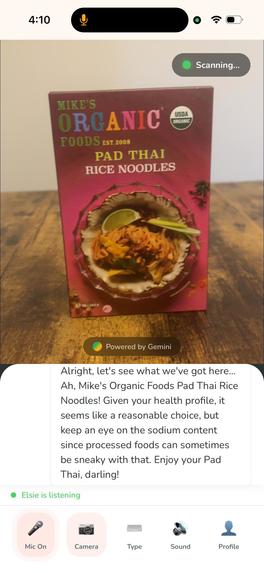

Elsie gives a personalized answer based on your identified health markers

Elsie — AI Grocery Coach

Real-time AI that reads your lab report, watches grocery shelves through your camera, and tells you what to grab — as naturally as asking a friend standing next to you.

Live App: elsie-grocery-coach-29072207619.us-central1.run.app

Category: Live Agent

Powered by: Gemini 2.0 Flash

Hosted on: Google Cloud Run

See · Hear · Speak

The hackathon asked: "Can your agent see, hear, and speak?" Elsie does all three:

SEE — Camera streams frames every 3 seconds to Gemini Live API. Gemini identifies products by name, brand, and reads nutrition labels — packaged goods AND fresh produce.

HEAR — Continuous speech recognition via iOS SpeechRecognition. Always listening — no button tap needed. Barge-in support: start speaking and Elsie stops mid-sentence.

SPEAK — iOS speechSynthesis reads Elsie's evaluations aloud. Hands-free while you push the cart. Personalized greeting based on your conditions.

Elsie's Persona

Elsie is not a generic chatbot. She has a distinct personality:

- Warm and playful — "Ooh, jackpot! That salmon is an omega-3 goldmine!"

- Clever food puns — "That cereal has more sugar than a birthday cake wearing a lab coat!"

- Never judgmental — gently redirects with humor, never shames

- Quick — 2-3 sentences max, because grocery aisles move fast

- Fun food facts — "Fun fact: that avocado has more potassium than a banana!"

- Allergen alerts are urgent — "Whoa, hold up! That's got peanuts. Put that one back, friend."

Inspiration

More than half of all U.S. adults — over 130 million people — live with at least one preventable chronic condition linked to diet (2025 Dietary Guidelines Advisory Committee Report, USDA/HHS). We call them diet-sensitive conditions — conditions where what you eat directly impacts your health outcomes every single day:

- Diabetes and pre-diabetes: 40.1 million Americans have diabetes; another 115.2 million adults have prediabetes — must monitor sugars, carbs, and glycemic load (CDC, Jan 2026)

- Hypertension: 119.9 million adults — nearly 1 in 2 — must limit sodium, often below 1,500mg/day (CDC, Jan 2025)

- High cholesterol: 86 million adults have total cholesterol above 200 mg/dL — must watch saturated fats and dietary cholesterol (CDC, Oct 2024)

- Chronic kidney disease: 37 million adults, 1 in 7 — must restrict phosphorus, potassium, and sodium (CDC, Jan 2026)

- Food allergies: 33 million Americans — face potentially life-threatening reactions from hidden allergens (FARE)

- Celiac disease: approximately 3 million Americans — must avoid every trace of gluten (Celiac Disease Foundation)

Diet-related chronic disease accounts for over half of U.S. deaths and costs the healthcare system over $1 trillion annually (U.S. GAO Report GAO-21-593).

The nutrition app market is valued at $5.8 billion in 2025, growing at 12% CAGR (Mordor Intelligence). The chronic-disease patient segment, exactly who Elsie serves, grows at 14.4% CAGR. The broader personalized nutrition market is projected to double to $30.9 billion by 2030 (MarketsandMarkets).

Every one of these people gets the same advice: "Watch what you eat." Then they walk into a grocery store with 40,000 products and zero guidance. There is a $5.8 billion nutrition app industry trying to help them, and every single app makes you scan barcodes, type product names, or upload photos, one product at a time. None of them read your lab report. None of them see fresh produce.

What if you had a friend who had already read your lab report and just shopped with you?

Competitive Landscape

- MyFitnessPal — Manual log or barcode scan. High friction. No fresh produce. Does not read your lab report.

- Fooducate — Barcode scan with A-D grades. Medium friction — scan, read, repeat. No fresh produce. Does not read your lab report.

- Yazio / Lifesum — Meal plans and manual logging. High friction — post-purchase. No fresh produce. Does not read your lab report.

- ChatGPT / Gemini chat — Photo upload and type question. High friction — 6 steps per product. Sees fresh produce but manually. Does not read your lab report.

- Dietitian — Office visit advice. Not at the store with you. General advice only. Sees your report but is not real-time.

- Elsie — Point camera and speak. Zero friction — just talk. Sees all products including fresh produce. Reads your entire lab report and extracts every marker.

What It Does

Elsie is a feature that fills this gap by being the knowledgeable friend who shops with you — one who has already read your lab report.

Before your first trip, you upload your lab report (PDF or photo). Gemini reads the entire document and extracts every health marker it finds: blood sugar, cholesterol, iron, vitamins, thyroid, liver function, kidney markers, everything. The report is immediately deleted. Only the numbers stay. Now Elsie knows you personally: not just "diabetic," but "A1c at 7.2, LDL at 145, iron at 38, vitamin D low."

Then you simply open Elsie and start shopping. Your camera watches the shelves. When you want to know about a product, you just ask out loud, naturally, like talking to a friend next to you: "Hey, can I buy this?" Within 2-3 seconds, Elsie sees the product (front or back), identifies it by name and brand, evaluates it against your specific health markers, and responds with a clear verdict, spoken aloud so your hands stay free for the cart.

No barcode scanning. No typing. No switching apps. No uploading photos. JUST POINT AND ASK!

Unlike barcode apps, Elsie sees everything — packaged goods, fresh produce, bakery items, deli meats. Point at a bunch of spinach and Elsie knows your iron is low: "Ooh, grab that spinach! Your iron is on the low side and spinach is packed with it. Pro tip: pair it with something citrusy — vitamin C helps your body absorb the iron better!"

Key Features:

- Extract ALL lab markers — Upload once. Gemini reads the entire report: blood counts, vitamins, minerals, hormones, liver, kidney, thyroid, cholesterol, metabolic panel — everything. Report immediately deleted. Top 7 markers display on screen. Tap to expand full list in a popup.

- 12 health conditions — Diabetes, pre-diabetes, high cholesterol, hypertension, kidney disease, pregnancy, celiac, vegetarian, vegan, dairy-free, nut allergy, heart health. This step is optional.

- Works on ALL products — Packaged goods, fresh produce, bakery, deli, bulk bins. No barcode needed.

- Continuous voice — Always listening. No button tap. Just talk naturally.

- Barge-in — Start speaking and Elsie stops mid-sentence to listen.

- Silence nudge — After 60 seconds of quiet: "Which product would you like to know about next?"

- Personalized greeting — "I'll keep a special eye on sugars and carbs for you!"

- Works at any store, any country — No partnerships needed.
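The silence nudge in the list above comes down to a resettable timer. The sketch below is a minimal illustration, not Elsie's actual code: the class name `SilenceNudger`, its method names, and the injectable `clock` parameter are all hypothetical, and the writeup does not say whether the timer runs in the browser or on the server.

```python
import time
from typing import Callable, Optional

NUDGE_TEXT = "Which product would you like to know about next?"


class SilenceNudger:
    """Hypothetical helper: emits a nudge after `timeout_s` seconds of quiet."""

    def __init__(self, timeout_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self._clock = clock
        self._last_activity = clock()

    def on_user_speech(self) -> None:
        """Call whenever speech is recognized; restarts the silence window."""
        self._last_activity = self._clock()

    def poll(self) -> Optional[str]:
        """Return the nudge text once the window has elapsed, else None."""
        now = self._clock()
        if now - self._last_activity >= self.timeout_s:
            # Restart the window so the nudge is not repeated on every poll.
            self._last_activity = now
            return NUDGE_TEXT
        return None
```

Passing the clock in as a parameter keeps the timing logic testable without waiting a real minute.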

How We Built It

Think of it as two brains working together. The first brain is the Gemini Live API, and it is always watching your camera, keeping track of what is on the shelf in front of you. The second brain is Gemini Flash, and this is the one that does the heavy lifting. The moment you ask a question, it takes that camera frame along with your question and every single marker from your lab report, and gives you a clear, personalized answer.

The Live API excels at streaming context; generate_content excels at consistent, accurate responses. Health-based product evaluation is the critical task: when someone is standing in a grocery aisle making a health decision, the answer has to be right every single time. That is why we built it this way. Both paths use the Google GenAI SDK.
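The split between watching and answering can be sketched roughly as follows. This is an illustration under stated assumptions, not the project's code: `LatestFrame`, `build_prompt`, and `answer_question` are hypothetical names, the prompt wording is invented, and the `evaluate` callable stands in for whatever wraps the real generate_content call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class LatestFrame:
    """The 'watching' side: keeps only the most recent camera frame."""
    jpeg: Optional[bytes] = None

    def update(self, jpeg: bytes) -> None:
        self.jpeg = jpeg  # older frames are overwritten, never stored


def build_prompt(question: str, markers: Dict[str, str]) -> str:
    """Combine the spoken question with every stored lab marker."""
    marker_lines = "\n".join(f"- {k}: {v}" for k, v in sorted(markers.items()))
    return (
        f"Lab markers:\n{marker_lines}\n\n"
        f"Shopper's question about the product in the image: {question}\n"
        "Reply in 2-3 sentences."
    )


def answer_question(
    question: str,
    markers: Dict[str, str],
    frame: LatestFrame,
    evaluate: Callable[[bytes, str], str],  # assumed wrapper around the model call
) -> str:
    """The 'answering' side: one request per question, using the latest frame."""
    if frame.jpeg is None:
        return "I can't see a product yet. Point the camera at it and ask again."
    return evaluate(frame.jpeg, build_prompt(question, markers))
```

Because the model call is injected, the same function can be exercised with a stub in tests and with the real SDK in production.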

How Elsie evaluates products — four layers:

- Layer 1 — Camera (Gemini Vision): Identifies product name, brand, reads visible nutrition label

- Layer 2 — Your health profile (Firestore): Every marker from your lab report plus selected conditions and allergies

- Layer 3 — USDA FoodData Central and Open Food Facts: Detailed nutrition data for 4 million+ branded products worldwide

- Layer 4 — Direct label reading (Gemini OCR): Reads nutrition facts panel from camera if product is not in databases

Elsie always has a fallback: even for store-brand or brand-new products that are missing from the databases, she reads the label directly.
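One way to picture the database-then-label fallback is an ordered chain of lookups where the first hit wins. The sketch below assumes each source is a function that returns nutrition data or `None`; the function `resolve_nutrition`, the source names, and the nutrient keys are hypothetical and do not reflect the real USDA or Open Food Facts response formats.

```python
from typing import Callable, Dict, Optional, Sequence, Tuple

Nutrition = Dict[str, float]
Source = Tuple[str, Callable[[str], Optional[Nutrition]]]


def resolve_nutrition(product: str, sources: Sequence[Source]) -> Tuple[str, Nutrition]:
    """Try each source in order; return the first hit with its source name."""
    for name, lookup in sources:
        result = lookup(product)
        if result:  # None or an empty dict counts as a miss
            return name, result
    raise LookupError(f"no nutrition data found for {product!r}")


# Usage with stub lookups standing in for the real data sources.
def usda_stub(product: str) -> Optional[Nutrition]:
    return None  # pretend the product is not in this database


def label_ocr_stub(product: str) -> Optional[Nutrition]:
    return {"sodium_mg": 300.0}  # pretend this was read from the label


source_name, facts = resolve_nutrition(
    "Store-brand soup",
    [("usda", usda_stub), ("label_ocr", label_ocr_stub)],
)
```

Returning the source name alongside the data lets the caller tell a database match from a label reading, which may matter when deciding how much to trust the numbers.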

Tech stack:

- AI Model: Gemini 2.0 Flash — Live API for real-time vision streaming, generate_content for product evaluation, Vision for lab report extraction (extracts ALL markers)

- SDK: Google GenAI SDK (Python) version 1.5.0 or higher

- Server: Google Cloud Run — auto-scales, pay-per-use

- Database: Google Cloud Firestore — health profile and lab marker persistence

- Backend: FastAPI (Python 3.12) — async, fast, WebSocket support

- Voice Input: iOS SpeechRecognition API — built into iPhone, continuous listening

- Voice Output: iOS speechSynthesis API — built into iPhone, hands-free

- Nutrition Data: USDA FoodData Central (U.S. government, updated monthly) and Open Food Facts (4 million+ products, 150 countries)

- Deployment: Automated via deploy.sh — one command deploys everything

Challenges We Ran Into

iOS Safari audio was the biggest battle. The original plan was to use Gemini's native audio streaming for voice output. On desktop it worked beautifully. On iPhone Safari, it failed silently. iOS restricts AudioContext initialization to user gestures, blocks raw PCM audio playback, and routes audio to the earpiece instead of the speaker when a microphone stream is active. After three days of debugging audio pipelines, we pivoted entirely to iOS native speechSynthesis for voice output and SpeechRecognition for voice input. This turned out to be more reliable than any server-streamed audio could be.

The Gemini Live API in TEXT mode does not proactively comment on video. We expected Gemini to narrate what it saw on the camera. It does not — it receives frames as silent context and only responds when asked. This forced the hybrid architecture: Live API watches, but generate_content answers. What felt like a setback became the right design — splitting awareness from evaluation improved reliability dramatically.

Lab report extraction required iterative prompt engineering. Our first prompt asked for 7 specific markers and returned a rigid JSON template full of nulls. Reports vary wildly — different labs, different formats, handwritten notes, multi-page PDFs. The breakthrough was simple: stop telling Gemini what to look for and just say "extract everything you find." Gemini went from returning 7 values to returning 30+ from the same report.
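An open-ended prompt means the parsing side can no longer assume a fixed set of keys. The sketch below shows one way to handle that, assuming the model is asked to reply with a JSON object; the prompt wording (apart from the quoted phrase), the function `parse_markers`, and the sample reply are illustrative and not taken from the project.

```python
import json
from typing import Dict

# Hypothetical wording; only "extract everything you find" comes from the writeup.
OPEN_PROMPT = (
    "Extract everything you find in this lab report. "
    "Return a JSON object mapping each marker name to its value with units."
)

FENCE = "`" * 3  # Markdown code fence that models sometimes wrap JSON in


def parse_markers(model_text: str) -> Dict[str, str]:
    """Parse the model's JSON reply into {marker: value}, dropping empties."""
    text = model_text.strip()
    if text.startswith(FENCE):
        text = text.strip("`")
        if text.lower().startswith("json"):
            text = text[4:]  # drop the language tag after the opening fence
    data = json.loads(text)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object of markers")
    # Accept whatever marker names the report contains; skip nulls and blanks.
    return {
        str(name).strip(): str(value).strip()
        for name, value in data.items()
        if value is not None and str(value).strip()
    }
```

Dropping nulls at parse time avoids the "template full of nulls" problem regardless of how the model formats missing values.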

Chrome on iPhone does not support SpeechRecognition. Apple restricts the webkitSpeechRecognition API to Safari only, even though Chrome on iOS uses the same WebKit engine underneath. This means Elsie's core voice experience only works on Safari.

The speechSynthesis.cancel() bug on iOS. When users tapped Mute, we called cancel() to stop Elsie's voice. On iOS Safari, this puts the entire speech engine into a stuck state — even after unmuting, speak() silently fails. The fix: re-unlock the speech engine with a silent utterance when unmuting.

Accomplishments We're Proud Of

It actually works in a real grocery store. Not a mockup, not a simulation — a real person walking through real aisles, asking about real products, getting real answers in 2-3 seconds. Building something that survives the chaos of a real environment (bad lighting, crowded shelves, background noise, shaky hands) was the hardest and most satisfying part.

Fresh produce evaluation. Every competitor in the nutrition app space relies on barcodes. Elsie evaluates spinach, bananas, avocados — products with no barcode, no packaging, and no database entry. When she tells a kidney patient to skip the bananas because of potassium and grab apples instead, that is something no barcode scanner can do.

Lab report extraction that actually works. Upload a messy, multi-page lab PDF and Gemini pulls out 30+ health markers in seconds. The report is immediately deleted. Only numbers stay. This turns a generic nutrition app into a deeply personal health tool.

The entire thing was built solo in 4 days. One person, one API, deployed on Google Cloud, handling real-time camera streaming, voice interaction, lab report extraction, health profile management, and personalized product evaluation. That is what Gemini and Google Cloud make possible.

The "not a pamphlet" insight. After a diagnosis, patients get a pamphlet about dietary changes and are sent home. Elsie is what should come after that pamphlet — a persistent, knowledgeable companion that goes with you to the store and helps you act on the advice.

What We Learned

Reliability beats purity. The hybrid approach (Live API for vision plus generate_content for evaluation) proved far more reliable than routing everything through a single streaming session. The best architecture is whatever delivers a working product.

Don't limit the AI. My first extraction prompt asked for 7 specific markers. When I changed it to "extract everything you find," Gemini pulled 30+ values from a single report. Tell Gemini what you want, not what you think it can do.

Fresh produce is the blind spot. Every competitor relies on barcodes. Half the grocery store has no barcodes. Gemini Vision identifies products visually — making Elsie the only solution that works for the entire store.

Building for iPhone Safari is a project within a project. AudioContext restrictions, SpeechRecognition auto-restart patterns, and speechSynthesis timing quirks shaped the final architecture significantly.

Design for the aisle, not the desk. Every UX decision was tested against one question: would this work while pushing a cart with one hand and holding a product with the other? That constraint led to continuous voice, barge-in, and hands-free operation as core features — not nice-to-haves.

What's Next for Elsie

Elsie today is a feature that proves the interaction model works. The roadmap turns it into a platform with defensibility.

Phase 1: Build the intelligence layer (0-3 months)

Native iOS/Android app with Gemini audio streaming for true two-way voice. But the real work is building a clinically validated health-food scoring engine ("If your A1c is 7.2, this product scores 3/10"), curated and reviewed by dietitians rather than drawn from generic LLM knowledge. This is what separates a feature from a product.

Phase 2: Build the data flywheel (3-6 months)

Multi-language support starting with Spanish (60 million+ U.S. speakers). Family profiles: one household, multiple health profiles, one grocery trip. But the real value is tracking what users buy over months and correlating it with changes in their lab markers over time: "Users who bought these 15 products saw their A1c drop 0.4 points in 3 months." This longitudinal purchase-health dataset does not exist anywhere.

Phase 3: Build the moat (6-12 months)

Partnerships with health systems so Elsie is prescribed post-diagnosis, embedded in the clinical workflow rather than competing on the App Store. Explore the FDA Digital Therapeutic pathway for regulatory defensibility. Health food brand partnerships where brands earn "Elsie Recommended" status for specific conditions.

Business Model: Elsie's core is open source. The business is the clinically validated health-food intelligence layer underneath — available as an API. Grocery delivery platforms integrate it to show "good for your profile" badges. Telehealth providers prescribe it post-diagnosis. Health insurers offer it to members because preventive dietary coaching is cheaper than hospitalization. The defensibility is not the app — it is the proprietary scoring engine and the longitudinal purchase-health data that no competitor can replicate without the user base and time.

Privacy

- Lab reports are processed in memory and immediately deleted — only extracted key-value markers are stored

- No personal identifying information is saved

- Camera frames are processed in real-time and never saved

- Health data stored in Google Cloud Firestore with encryption at rest

- Elsie provides general wellness and nutrition information, not medical advice

Elsie provides general wellness and nutrition information, not medical advice. Always follow your doctor's guidance.

Built by Priyanka Nayyar — GitHub

Built with Google Gemini, Google Cloud, and Google GenAI SDK.

GeminiLiveAgentChallenge

Built With

- css

- docker

- fast-api

- fastapi

- gemini-2.0-flash

- gemini-live-api

- google-cloud

- google-cloud-firestore

- google-genai-sdk

- html

- ios-speech-recognition

- ios-speech-synthesis

- javascript

- open-food-facts-api

- python

- usda-fooddata-central-api

- websocket
