Main Screen

Uses the iPhone's camera, modified to allow switching cameras, focusing, and using the flash.

People changing the camera. JK! Making sure the photo is good.

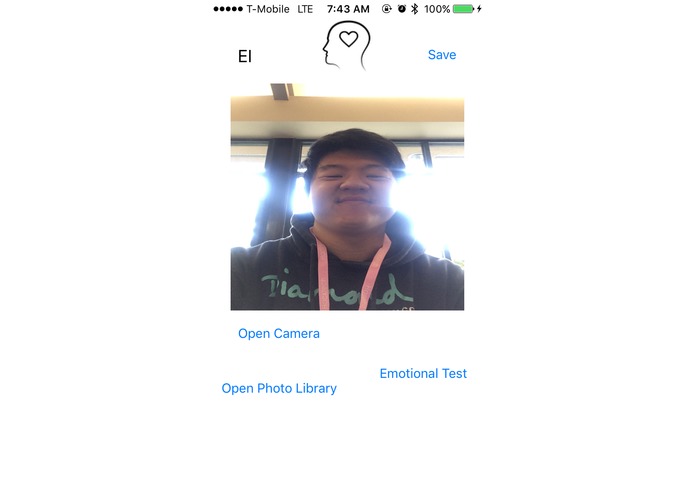

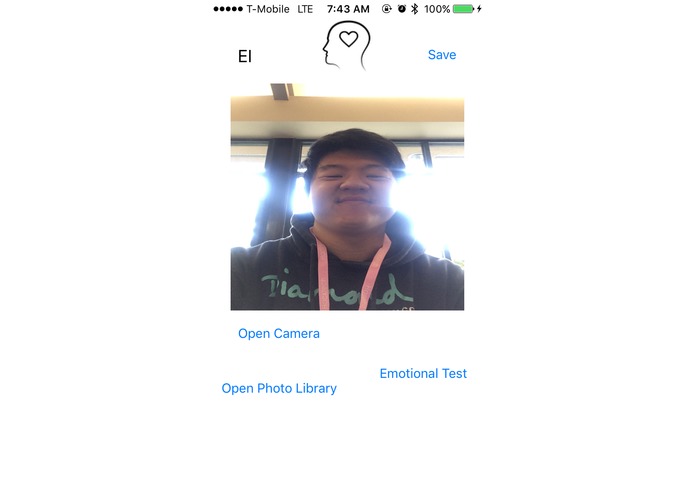

Back on the main screen with the photo, ready to start the 'Emotional Test'.

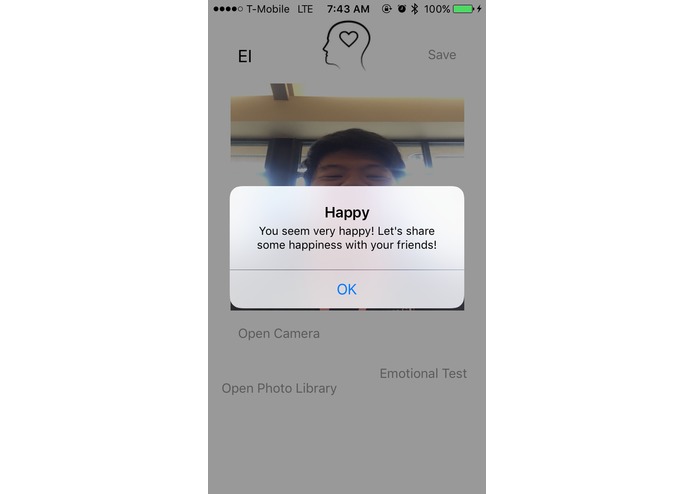

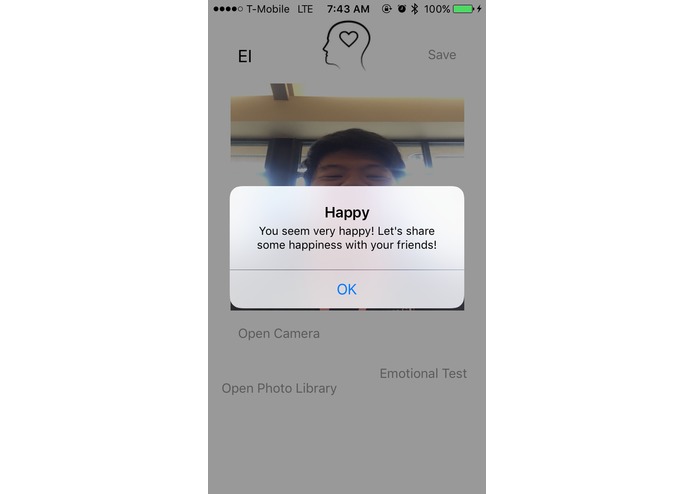

Replies based on the user's mood. This prompt leads to Facebook for sharing happiness.

Inspiration

This app was inspired by the use of AI to understand humans better. Using Microsoft's Emotion API, I was able to detect people's moods and emotions and give them suggestions based on their mood.

What it does

To get started, the user opens the camera from inside the app or chooses an existing photo, then taps the 'Emotional Test' button. The app sends the photo to the Emotion API to find out the person's mood and, based on that mood, suggests jokes, songs, or articles, or leads you to Facebook to share your feelings. If it senses fear, it also calls 911.

How I built it

We built it using Xcode and Microsoft's Emotion API. We took the values the Emotion API returns for each emotion and used them to create suggestions based on the user's mood.
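As a rough illustration of how per-emotion values can be turned into a suggestion, here is a minimal Swift sketch. It assumes the scores arrive as a dictionary keyed by emotion name with values between 0 and 1. The `Suggestion` type, the function names, the 0.5 fear threshold, and most of the emotion-to-suggestion mapping are hypothetical; only "happiness leads to Facebook" and "fear triggers an emergency call" come from the description above.

```swift
// Hypothetical types and names for illustration; not the app's actual code.
enum Suggestion: Equatable {
    case joke, song, article, shareOnFacebook, callEmergency
}

/// Returns the emotion with the highest score, or nil if there are no scores.
/// If two emotions tie, which one is returned is unspecified.
func dominantEmotion(in scores: [String: Double]) -> String? {
    return scores.max { $0.value < $1.value }?.key
}

/// Maps a set of emotion scores to a suggestion.
/// The fear threshold (0.5) is an assumed value, not one from the app.
func suggestion(for scores: [String: Double],
                fearThreshold: Double = 0.5) -> Suggestion? {
    // Fear is checked first so that it overrides every other mood.
    if let fear = scores["fear"], fear >= fearThreshold {
        return .callEmergency
    }
    guard let emotion = dominantEmotion(in: scores) else { return nil }
    switch emotion {
    case "happiness": return .shareOnFacebook // from the write-up
    case "sadness":   return .joke            // assumed mapping
    case "anger":     return .song            // assumed mapping
    default:          return .article         // assumed fallback
    }
}
```

Calling `suggestion(for: ["happiness": 0.9, "fear": 0.05])` yields `.shareOnFacebook` under these assumptions, while a high fear score short-circuits to `.callEmergency`.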

Challenges I ran into

We started off with React Native, hoping it would be faster and easier, but ran into more bugs. We therefore had to start again from scratch and build our first iOS app natively. Because there were few resources covering Microsoft APIs together with Swift and the iOS platform, we had to dig deeper into how JSON and REST APIs work.

Accomplishments that I'm proud of

Completing the first version of an app that can detect people's emotions. It often seems that technology is pulling us further apart, so I was proud to use it to understand humans better.

What I learned

We learned resilience and perseverance. We learned it is never too late to pivot and start over from scratch. We also learned how JSON is used across multiple programming languages, since we had to draw on what we knew about JSON in other languages to work with it in Swift.
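For readers facing the same JSON hurdle in Swift, here is a minimal sketch of decoding an emotion response with `Codable`. It assumes the response is a JSON array with one object per detected face, each carrying a `scores` object; the `FaceResult` type, the `decodeFaces` helper, and the sample payload are made up for illustration and are not the app's code.

```swift
import Foundation

// Hypothetical model: only the "scores" object is decoded.
// Other keys in each face object (such as a face rectangle) are ignored.
struct FaceResult: Decodable {
    let scores: [String: Double]
}

/// Decodes a JSON array of face results from raw response data.
func decodeFaces(from data: Data) throws -> [FaceResult] {
    return try JSONDecoder().decode([FaceResult].self, from: data)
}

// Made-up sample payload in the assumed response shape.
let sampleJSON = """
[
  {
    "faceRectangle": { "left": 68, "top": 97, "width": 64, "height": 97 },
    "scores": { "happiness": 0.93, "neutral": 0.05, "fear": 0.02 }
  }
]
"""

do {
    let faces = try decodeFaces(from: Data(sampleJSON.utf8))
    for face in faces {
        print(face.scores)
    }
} catch {
    print("Could not decode response: \(error)")
}
```

Decoding `scores` as a dictionary rather than a struct with fixed fields keeps the sketch tolerant of emotions being added or missing, at the cost of looking values up by string key.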

What's next for EI

Adding other APIs and AI technology to give the user more options and better suggestions. The ability to add your own emergency contacts instead of '911'. Better UI/UX design. Real-time scanning and suggestions (the app currently analyzes only still images). Expansion to other mobile platforms.
