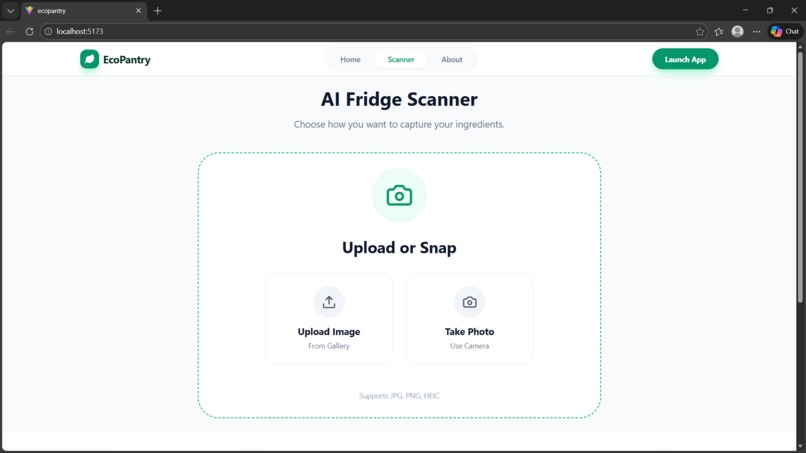

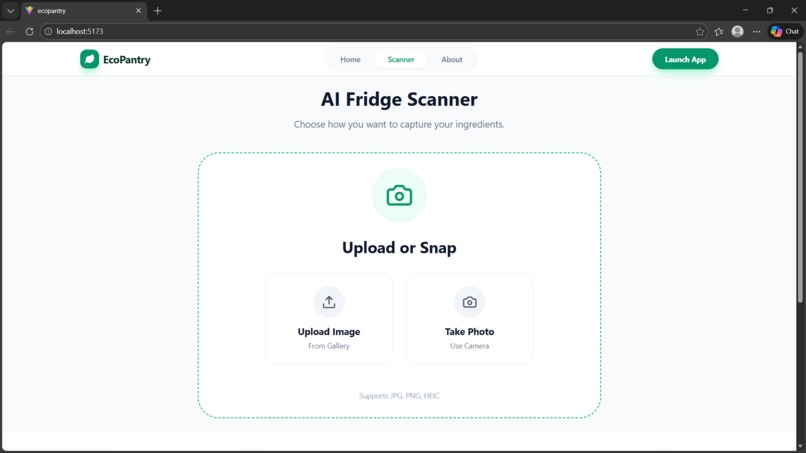

Scanner Interface: Simple options to upload photos or snap a picture of your fridge.

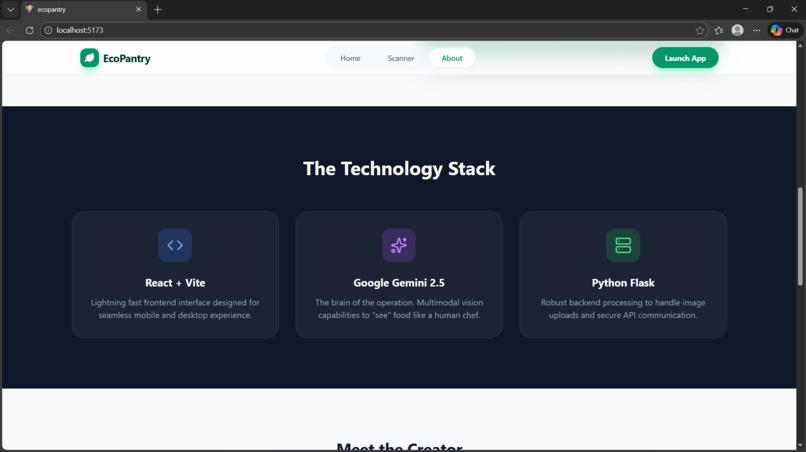

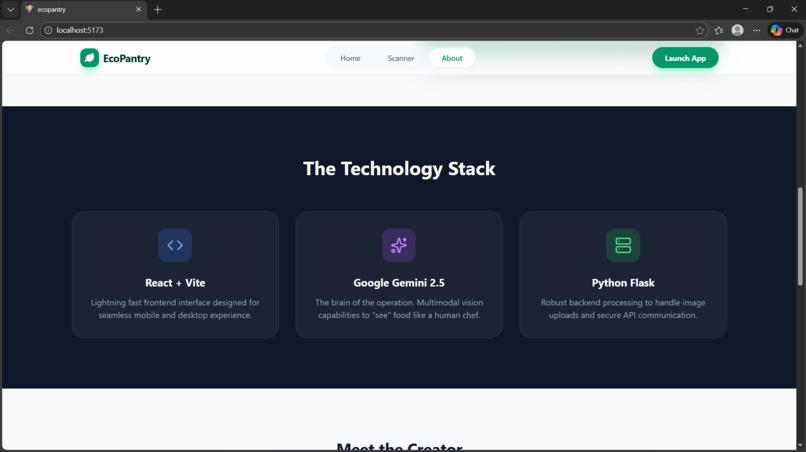

Tech Stack: Built with React, Python Flask, and the Gemini 2.5 Flash model.

Meet the Creator: The developer profile and the mission behind EcoPantry.

Our Mission: Combining AI technology with sustainability to fight global food waste.

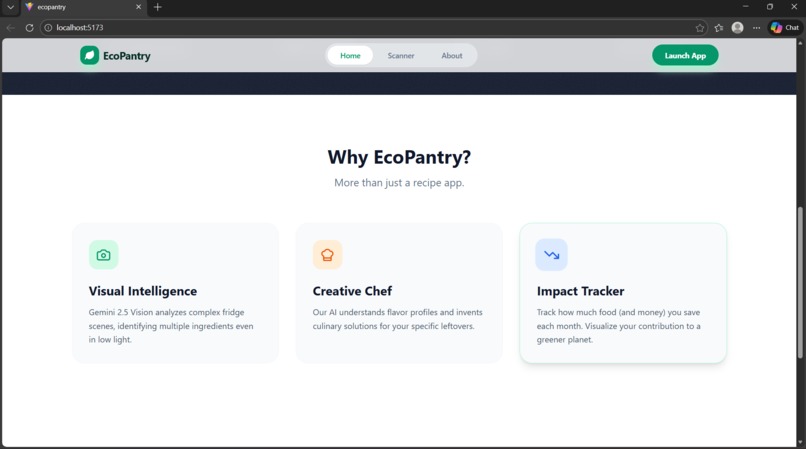

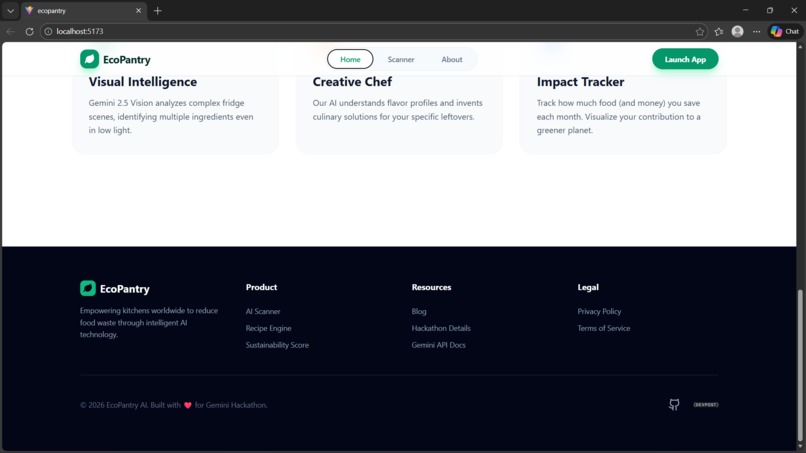

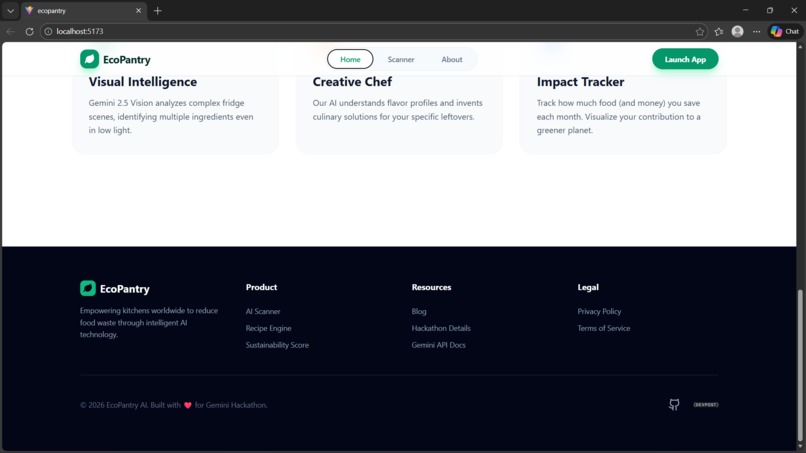

Key Features: Highlighting Visual Intelligence, Creative Chef, and Impact Tracking.

Landing Page: A modern, responsive interface inviting users to 'Scan My Fridge'.

The Mission: Addressing the 1.3 billion tons of annual global food waste.

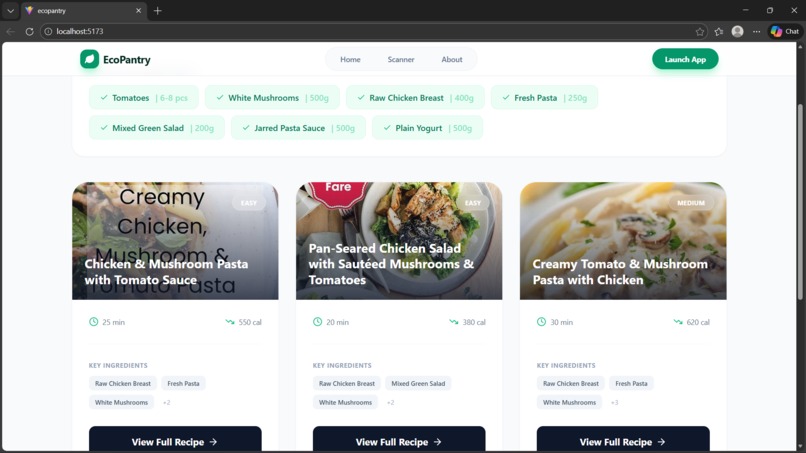

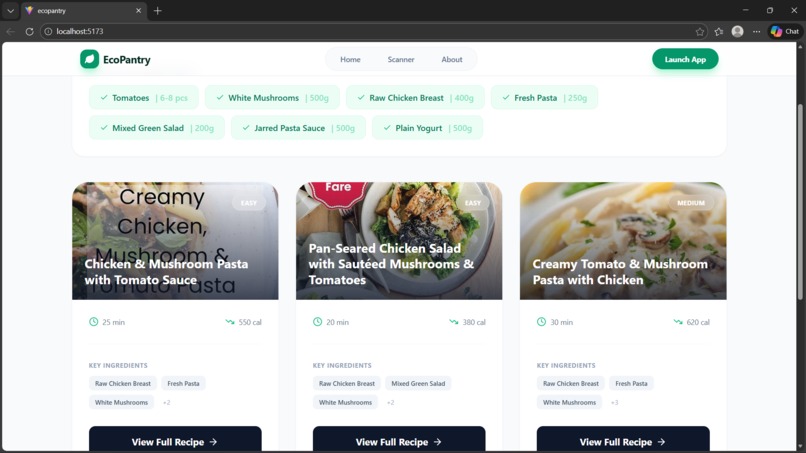

Chef's Suggestions: Real-time ingredient detection and personalized zero-waste recipes.

Project Resources: Quick access to documentation, recipe engine, and source code.

💡 Inspiration

Every year, approximately 1.3 billion tons of food is wasted globally. That is nearly one-third of all food produced for human consumption.

As students and developers, we often found ourselves in a familiar situation: staring at a messy fridge full of random ingredients, tired from coding, and having absolutely no idea what to cook. The easiest option was often to order takeout, letting the fresh groceries go bad.

We realized this wasn't just an "us" problem; it's a global sustainability issue. We wanted to build a bridge between "what I have" and "what I can eat." That inspired EcoPantry, an intelligent kitchen assistant that gives you superpowers to reduce waste and cook delicious meals in minutes.

🥗 What it does

EcoPantry is a full-stack web application that acts as your personal AI Chef.

- Scan: Users upload a photo of their open fridge, pantry, or a pile of groceries.

- Analyze: The app uses Google Gemini 2.5 Flash to "see" and identify ingredients, even in cluttered or low-light images.

- Create: It instantly generates personalized, zero-waste recipes based only on the detected ingredients.

- Visualize: The app provides cooking times, calorie estimates, and visual previews of the final dish.
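To make the "detected ingredients only" rule concrete, here is a minimal sketch of how one recipe could be represented and checked against a scan. The `Recipe` fields and the `uses_only_detected` helper are hypothetical names chosen for illustration; the actual EcoPantry schema is not shown on this page.

```python
from dataclasses import dataclass


@dataclass
class Recipe:
    """Hypothetical shape of one generated recipe."""
    title: str
    ingredients: list[str]
    cook_minutes: int
    calories: int


def normalize(name: str) -> str:
    """Lowercase and trim so 'Tomato ' and 'tomato' compare equal."""
    return name.strip().lower()


def uses_only_detected(recipe: Recipe, detected: list[str]) -> bool:
    """Return True if every recipe ingredient was detected in the photo."""
    available = {normalize(item) for item in detected}
    return all(normalize(item) in available for item in recipe.ingredients)


# Example scan result and two candidate recipes (made-up values).
detected = ["Tomato", "egg", "spinach"]
omelette = Recipe("Spinach omelette", ["egg", "spinach"], cook_minutes=10, calories=250)
curry = Recipe("Chickpea curry", ["chickpeas", "tomato"], cook_minutes=30, calories=420)

# Keep only recipes that need nothing beyond what is already in the fridge.
zero_waste = [r.title for r in (omelette, curry) if uses_only_detected(r, detected)]
print(zero_waste)
```

A check like this could run on the backend after the model responds, so that a recipe calling for an ingredient the user does not have never reaches the frontend.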

⚙️ How we built it

We built EcoPantry using a modern, robust tech stack:

- Artificial Intelligence: The core brain is Google Gemini 2.5 Flash. We chose the "Flash" model for its incredible speed and cost-efficiency without sacrificing multimodal accuracy. We utilized the Python SDK to send image data and receive structured JSON responses.

- Backend: We used Python Flask to handle API requests, manage image uploads, and communicate securely with the Gemini API.

- Frontend: The user interface was built with React.js and Vite for lightning-fast performance, styled with Tailwind CSS for a clean, eco-friendly aesthetic.

- Prompt Engineering: We spent significant time refining the system instructions to ensure Gemini outputs strictly valid JSON data instead of conversational text, making it easy to parse on the frontend.
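The flow described above (image in, structured JSON out) can be sketched roughly as follows. This is an illustration under assumptions, not the project's actual code: it assumes the `google-genai` Python SDK and a `GEMINI_API_KEY` environment variable, and `SYSTEM_PROMPT`, `call_gemini`, and `analyze_fridge` are hypothetical names. The model call is passed in as a parameter so the parsing logic can be exercised without network access.

```python
import json
import os
from typing import Callable

# Hypothetical system instruction; the team's real prompt is not published here.
SYSTEM_PROMPT = (
    "You are a kitchen assistant. Identify the ingredients in the image and "
    "reply with JSON only, shaped as "
    '{"ingredients": [string], "recipes": [{"title": string, '
    '"ingredients": [string], "cook_minutes": number, "calories": number}]}. '
    "Use only the detected ingredients. No markdown, no commentary."
)

# Signature of a model call: (system prompt, image bytes, MIME type) -> raw text.
ModelCall = Callable[[str, bytes, str], str]


def call_gemini(prompt: str, image_bytes: bytes, mime_type: str) -> str:
    """Send the image and prompt to Gemini and return the raw text reply."""
    # Imported lazily so the rest of the sketch runs without the SDK installed.
    from google import genai
    from google.genai import types

    client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])
    response = client.models.generate_content(
        model="gemini-2.5-flash",
        contents=[
            types.Part.from_bytes(data=image_bytes, mime_type=mime_type),
            "List the ingredients you see and suggest recipes.",
        ],
        config=types.GenerateContentConfig(
            system_instruction=prompt,
            # Ask the API for a JSON body rather than free-form text.
            response_mime_type="application/json",
        ),
    )
    return response.text


def analyze_fridge(
    image_bytes: bytes, mime_type: str, call_model: ModelCall = call_gemini
) -> dict:
    """Run one scan: call the model, then decode its JSON reply."""
    raw = call_model(SYSTEM_PROMPT, image_bytes, mime_type)
    return json.loads(raw)
```

In a Flask route, the image bytes would typically come from the uploaded file, for example `request.files["image"].read()`, where the form field name `image` is an assumption.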

🚧 Challenges we faced

- Hallucinations vs. Structured Data: Getting a Large Language Model (LLM) to output consistent, error-free JSON was tricky. Early versions often included markdown text or conversational filler. We solved this by implementing strict prompt constraints and robust error handling in Python.

- Image Recognition Complexity: Fridges are messy! Distinguishing between a tomato and a red pepper in a dark corner of a shelf was difficult. However, the vision capabilities of Gemini 2.5 Flash proved to be surprisingly powerful in handling these edge cases.

- CORS & Connectivity: Connecting a local Flask backend with a Vite frontend initially caused some cross-origin resource sharing issues, which we resolved by configuring the Flask-CORS library properly.
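As an illustration of the "robust error handling" mentioned for the structured-data challenge, here is a minimal sketch of a defensive parser that tolerates markdown fences and conversational filler around the JSON. The names `parse_model_json` and `ModelOutputError`, and the required `recipes` key, are assumptions made for this example rather than the project's actual code.

```python
import json


class ModelOutputError(ValueError):
    """Raised when the model reply cannot be turned into usable data."""


def parse_model_json(raw: str) -> dict:
    """Extract and validate the JSON object embedded in a model reply."""
    # Take everything between the first '{' and the last '}' so that any
    # surrounding markdown fences or chatty text are discarded.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        raise ModelOutputError("no JSON object found in model reply")
    try:
        data = json.loads(raw[start:end + 1])
    except json.JSONDecodeError as exc:
        raise ModelOutputError(f"invalid JSON from model: {exc}") from exc
    # Minimal schema check: the frontend expects a list of recipes.
    if not isinstance(data.get("recipes"), list):
        raise ModelOutputError("reply is missing a 'recipes' list")
    return data


# A reply with filler before and after the JSON still parses.
reply = 'Sure! Here you go: {"recipes": [{"title": "Omelette"}]} Enjoy!'
print(parse_model_json(reply)["recipes"][0]["title"])
```

Raising a dedicated exception lets the Flask layer turn a malformed reply into a clear error response, or retry the request, instead of passing broken data to the frontend.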

🏅 Accomplishments that we're proud of

- Seamless Integration: Connecting a React frontend, a Python backend, and the Gemini API into a single, smooth flow.

- Speed: The application analyzes images and returns recipes in under 3 seconds, thanks to the efficiency of the Gemini 2.5 Flash model.

- Accuracy: The AI successfully identifies multiple ingredients from a single photo, even when items are partially obscured.

🧠 What we learned

- Multimodal AI is the Future: We learned firsthand how powerful it is to combine text and vision. It opens up endless possibilities for solving real-world physical problems with software.

- Prompt Engineering is Coding: We learned that writing a good prompt is just as important as writing good Python code. The way we instruct the model dictates the stability of the entire app.

- Full Stack Architecture: We deepened our understanding of how to structure a project where the frontend and backend are decoupled but communicate effectively.

🚀 What's next for EcoPantry

- Nutritional Tracking: Using Gemini to estimate the nutritional value (macros) of the scanned ingredients.

- Pantry History: Saving users' inventory so they don't have to scan every time.

- Shopping Lists: Automatically suggesting missing spices or ingredients to complete a specific recipe.

- Mobile App: Porting the React web app to React Native for a native mobile experience.

Built With

- computer-vision

- flask

- generative-ai

- git

- google-cloud

- google-gemini

- python

- react

- vercel
