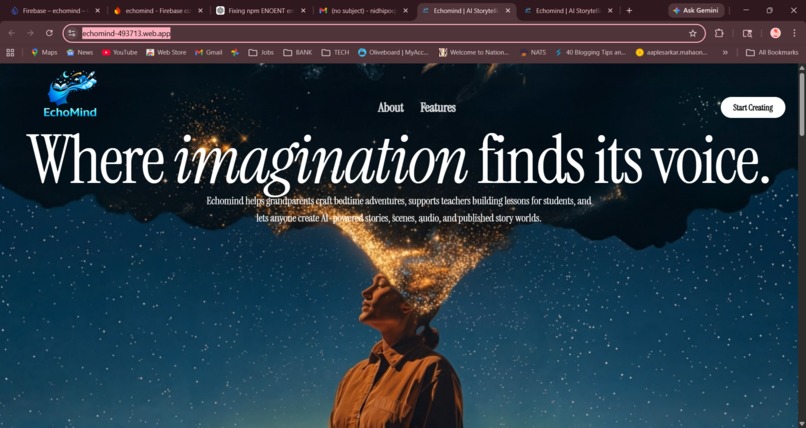

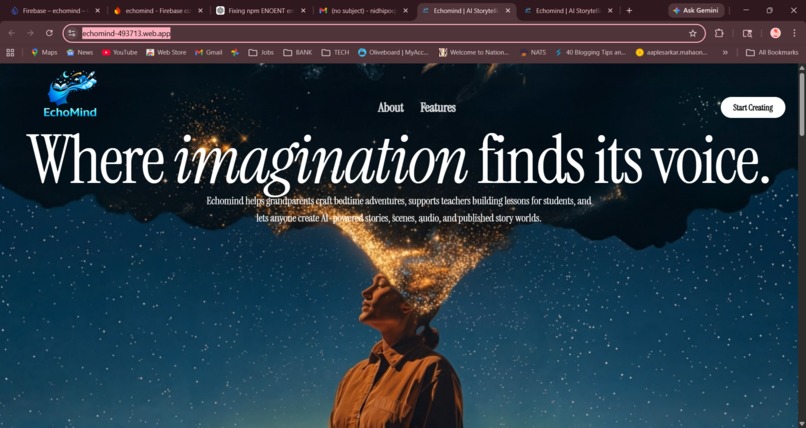

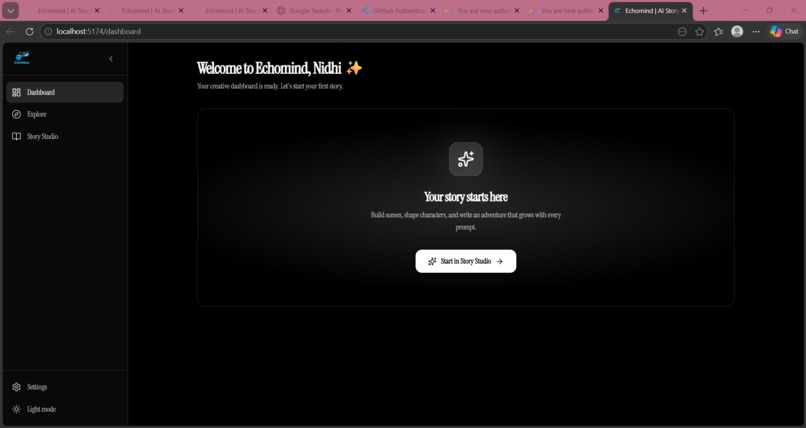

1. Welcome Page

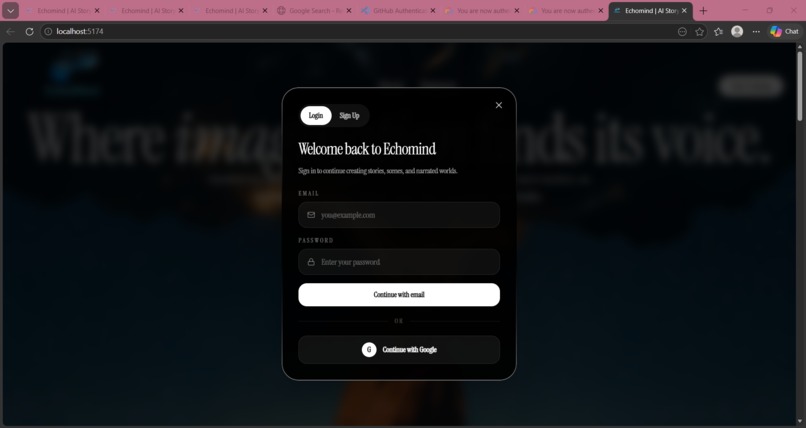

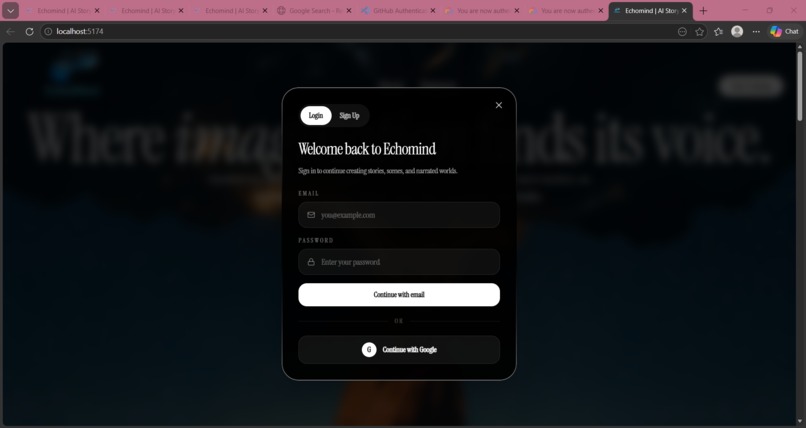

2. Authentication Page

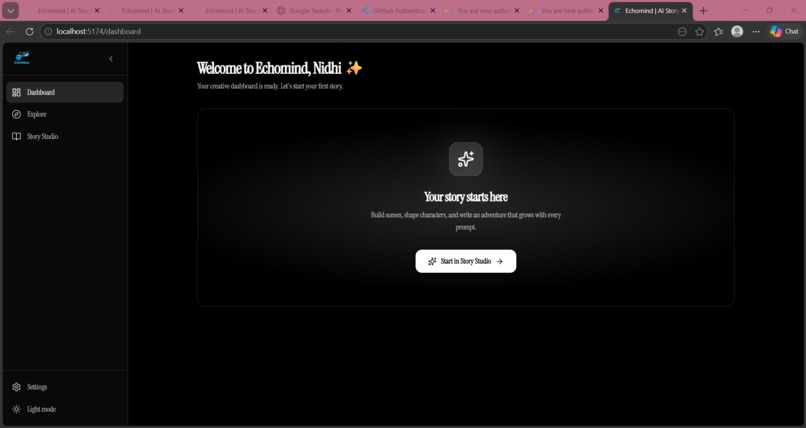

3. Home Page

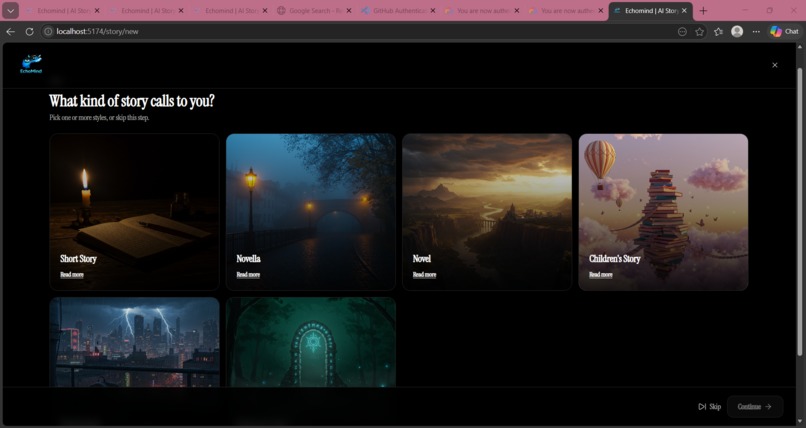

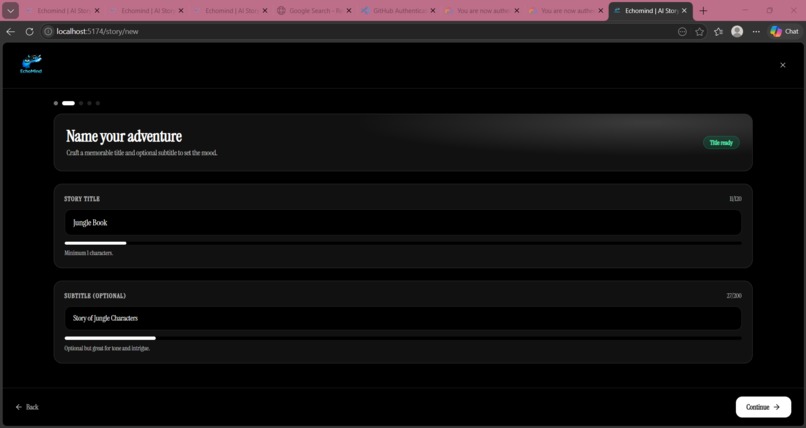

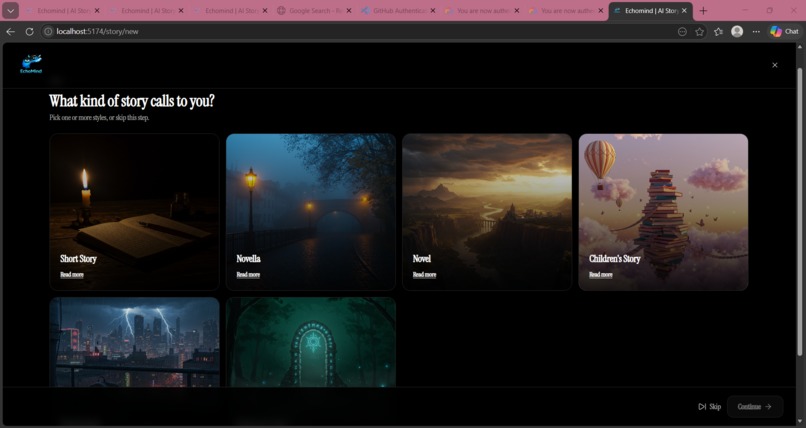

4. Story Setup Page-1

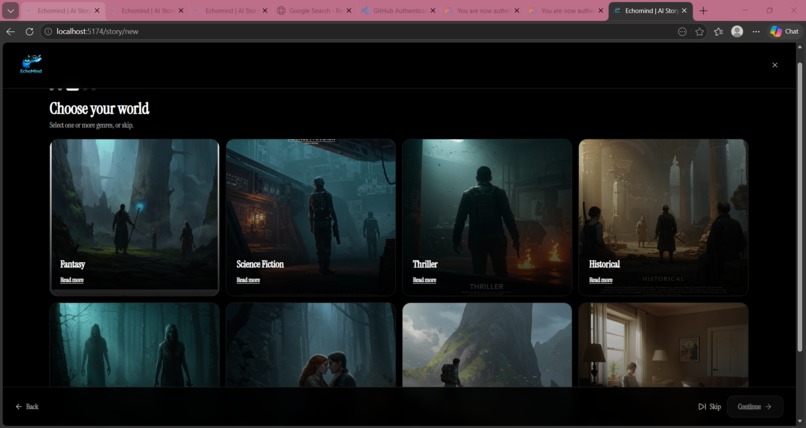

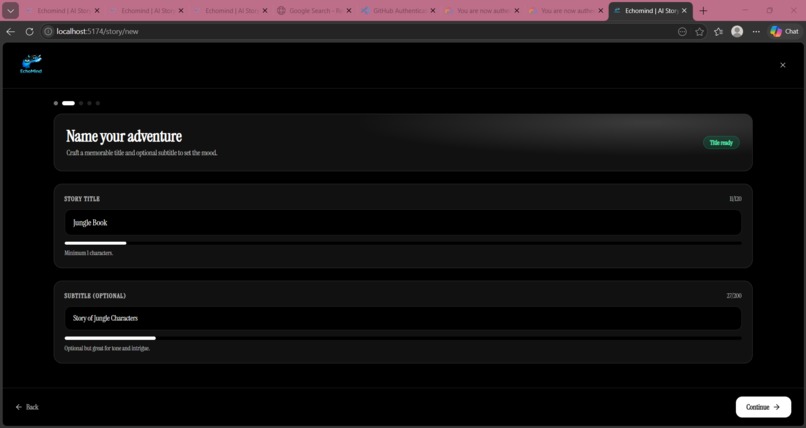

5. Story Setup Page-2

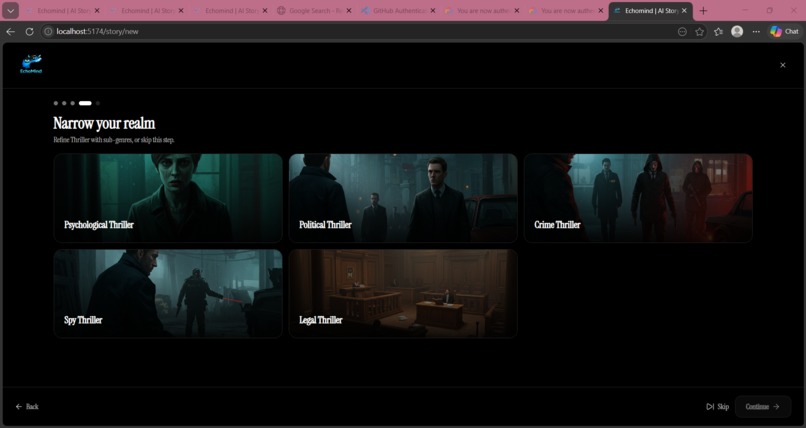

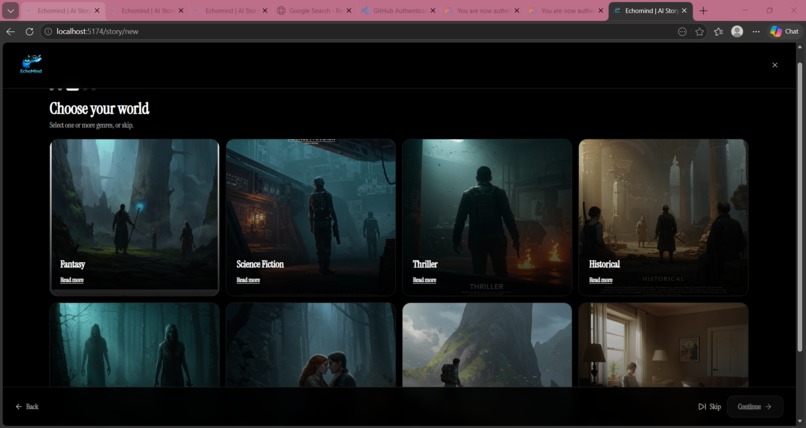

6. Story Setup Page-3 (Choosing Genre)

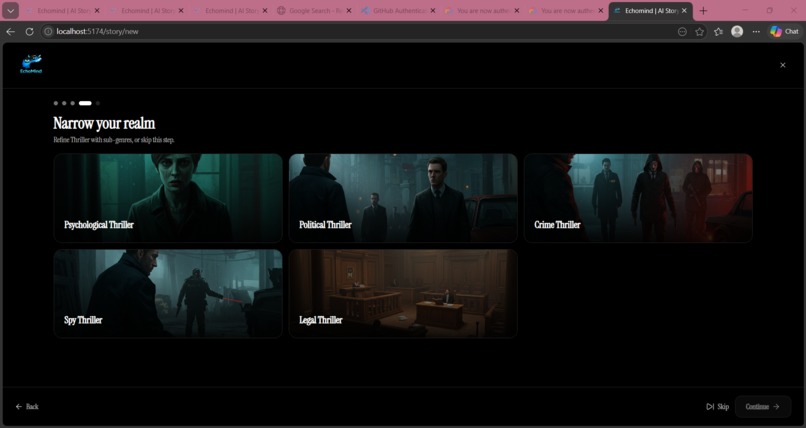

7. Story Setup Page-4 (Choosing SubGenre)

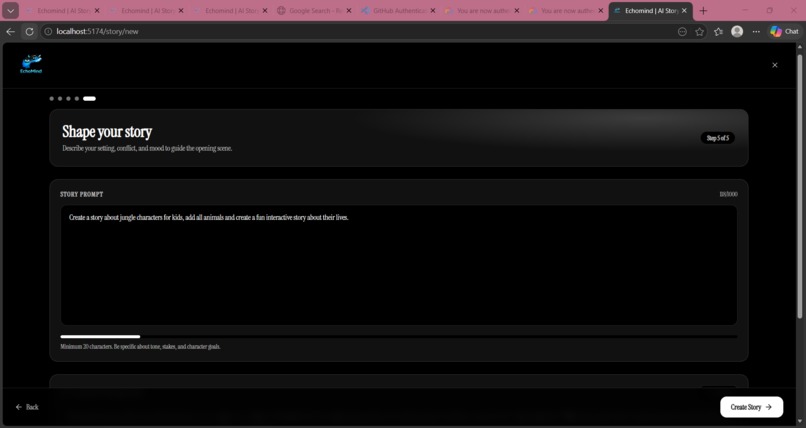

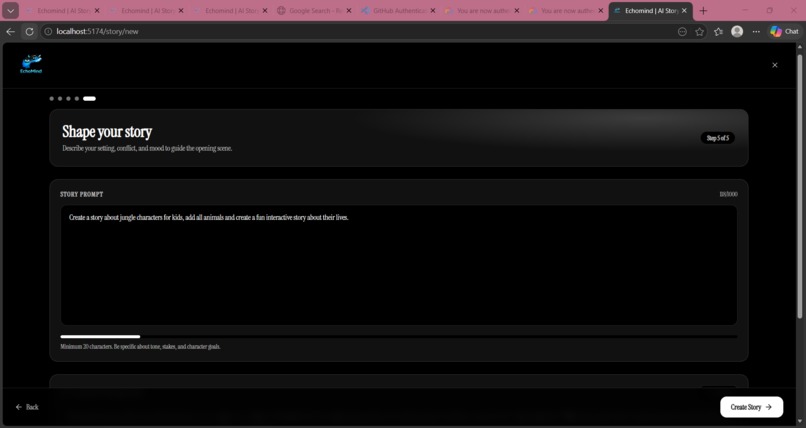

8. Story Setup Page-5 (Story Narration)

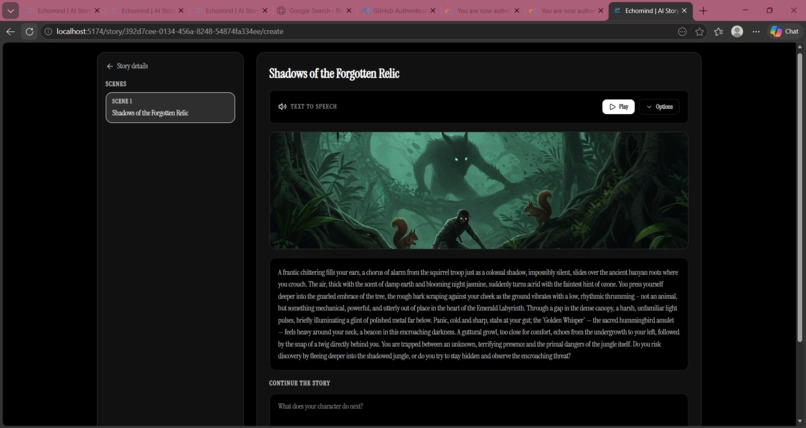

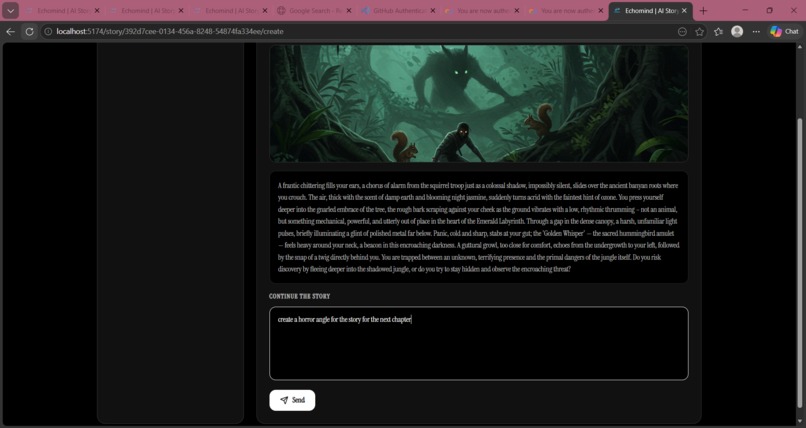

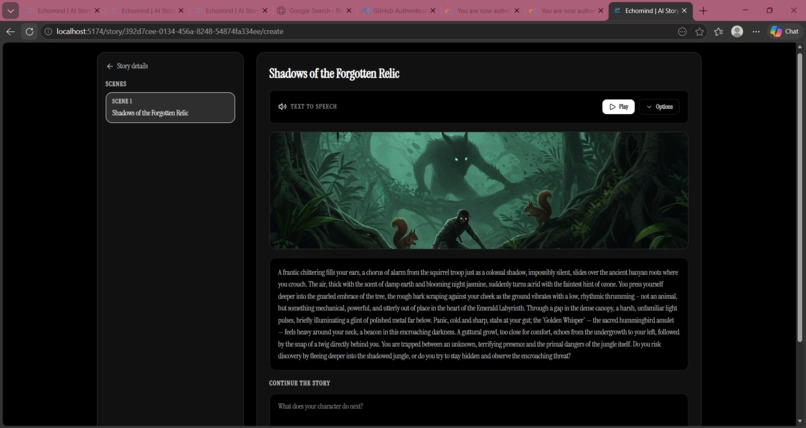

9. Story Setup Page-6 (Story Generation-Text and Audio)

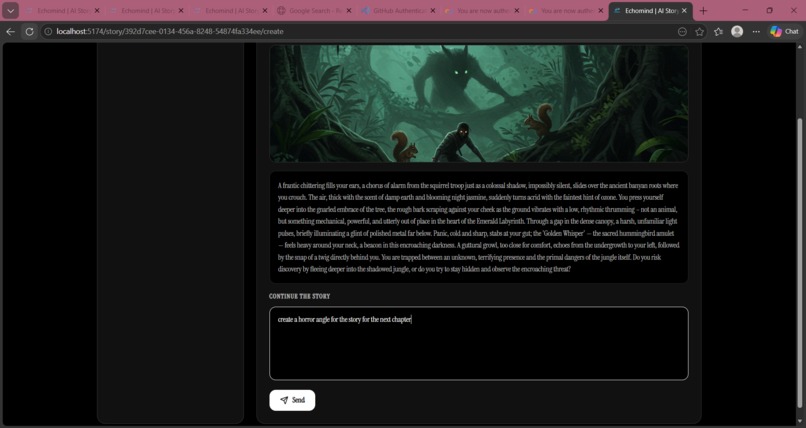

10. Story Setup Page-4 (Story Continuation User Input)

EchoMind - AI Storytelling Platform

Live Demo: https://echomind-493713.web.app/

Inspiration

Stories are the oldest technology humanity has ever built. Long before the printing press, the internet, or the smartphone, we used narrative to make sense of the world: to process fear, ignite curiosity, and feel less alone.

Yet for most people, the barrier to telling a story rather than merely consuming one has always been the blank page. The paralyzing moment of "where do I even begin?"

We built EchoMind to obliterate that barrier.

Inspired by the golden age of interactive fiction (Choose Your Own Adventure, narrative RPGs, and the immersive branching games of today), we asked ourselves: what if an AI could sit beside you as a creative partner, never writing the story for you, but always writing it with you? What if anyone, regardless of writing experience, could become the author of their own cinematic universe?

That question became EchoMind. A platform where your words are the engine, and artificial intelligence is the fuel.

What It Does

EchoMind is a full-stack, AI-powered interactive storytelling platform that turns a single sentence from a user into a fully illustrated, narrated, branching narrative experience.

The full user journey:

Story Setup Wizard: The user selects a story type (Short Story, Novella, Novel, Children's, Young Adult, Interactive), a genre (Fantasy, Horror, Sci-Fi, Mystery, and more), and one or more sub-genres via a beautifully designed multi-step wizard. Every story type and genre is illustrated with an AI-generated cinematic image.

AI Prompt Suggestions: Before the user types a single word, Gemini generates 5 tailored, high-quality opening prompt suggestions based on the exact story type, genre, and sub-genre combination the user chose. Each suggestion is a vivid 2–4 sentence hook that immediately places the reader inside a world.

Opening Scene Generation: The user selects a suggestion or writes their own opening prompt. Gemini transforms it into a richly detailed, 8–12 sentence cinematic opening scene, written in second person, present tense, and structured like the opening of a prestige television drama.

AI Cover Image: Simultaneously, Imagen 3 generates a bespoke, painted concept-art style book cover for the story based on the genre, sub-genre, and the user's prompt.

Scene Image: Every individual scene in the story is also illustrated in real time with a cinematic image generated by Imagen 3, giving each narrative beat its own visual identity.

Continue the Story: The user types what they want to do or say, or where they want to go, in their own words, with no menus and no pre-set choices. Gemini reads the full scene history and the player's live input, then generates the next scene: executing the player's action, showing consequences, escalating the stakes, and ending on a charged moment that demands the next move.

Text-to-Speech Narration: Every scene can be narrated aloud using the platform's built-in text-to-speech engine, transforming EchoMind into an audio storytelling experience. Users can play, pause, and resume narration at any point.

Story Library: All stories are persisted per user in our database, organised by workspace. Users can return to any story, review its full scene history, and continue from any point.
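As a rough illustration of the "Continue the Story" step, the sketch below shows one way the full scene history and the player's free-form input could be assembled into a single continuation prompt. The `Scene` type, the `build_continuation_prompt` helper, and the prompt wording are assumptions made for this example; the actual prompts used by EchoMind are not shown in this write-up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scene:
    # Hypothetical shape of a persisted scene; real fields may differ.
    number: int
    text: str


def build_continuation_prompt(history: list[Scene], player_input: str) -> str:
    """Join all prior scenes in order, then append the player's action."""
    if not history:
        raise ValueError("a continuation needs at least one prior scene")
    past = "\n\n".join(f"Scene {s.number}:\n{s.text}" for s in history)
    return (
        "Story so far:\n"
        f"{past}\n\n"
        f"Player action: {player_input.strip()}\n\n"
        "Write the next scene: Action, Consequence, Escalation, Momentum."
    )


history = [
    Scene(1, "You wake in a dark lighthouse."),
    Scene(2, "The stairs creak above you."),
]
prompt = build_continuation_prompt(history, "  I climb toward the lamp room. ")
```

Keeping the history in order and placing the player's action last means the model sees the most recent intent immediately before it is asked to write.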

How We Built It

EchoMind is built on a production-grade, clean-architecture stack deployed entirely on Google Cloud.

Backend: FastAPI on Google Cloud Run

The backend is written in Python with FastAPI, structured using strict Clean Architecture principles:

Gateway (HTTP router) → Use Case (orchestration) → Repository (data access) → Service (AI/DB/Storage)

Each feature (story, users, workspaces, subscriptions) is a fully isolated module. Business logic never touches infrastructure directly; all I/O is injected via repository interfaces. This made it trivial to swap models or storage backends during development without touching a single use-case file.

The application is containerised with Docker and deployed on Google Cloud Run with auto-scaling from 0 to 10 instances, running under a dedicated service account with least-privilege IAM roles.

Dependency injection is handled by the dependency-injector library, wiring the entire composition root at startup without any global state.
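The layering described above might look roughly like the following sketch. It uses plain constructor injection from the standard library rather than the dependency-injector wiring the project uses, and every name except `GeminiServiceRepo` (`Scene`, `FakeGeminiService`, `CreateStoryUseCase`, `generate_opening_scene`) is hypothetical.

```python
import asyncio
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Scene:
    text: str


class GeminiServiceRepo(ABC):
    """Abstract interface the use case depends on (method name is assumed)."""

    @abstractmethod
    async def generate_opening_scene(self, prompt: str) -> Scene: ...


class FakeGeminiService(GeminiServiceRepo):
    """Test double standing in for the real Gemini-backed implementation."""

    async def generate_opening_scene(self, prompt: str) -> Scene:
        return Scene(text=f"You step into the story: {prompt}")


class CreateStoryUseCase:
    """Orchestrates story creation; knows only the abstract interface."""

    def __init__(self, gemini: GeminiServiceRepo) -> None:
        self._gemini = gemini

    async def execute(self, prompt: str) -> Scene:
        cleaned = prompt.strip()
        if not cleaned:
            raise ValueError("prompt must not be empty")
        return await self._gemini.generate_opening_scene(cleaned)


# Composition root: the only place that picks a concrete implementation.
use_case = CreateStoryUseCase(gemini=FakeGeminiService())
scene = asyncio.run(use_case.execute("  A lighthouse goes dark.  "))
```

Because the use case only sees the abstract interface, swapping the fake for a real service is a change at the composition root and nowhere else.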

AI Layer - Google Gemini API (Vertex AI)

The entire intelligence of EchoMind runs on Google's Gemini API via the Vertex AI SDK. All Gemini calls are isolated in a single service layer (`GeminiServiceRepoImpl`). No other part of the codebase imports `google.genai` directly, ensuring clean separation and testability.

Key technical decisions:

- Structured JSON output: All text generation calls use `response_mime_type="application/json"` with Pydantic schema validation on the response. This yields well-formed, type-safe outputs, rejects anything malformed at validation time, and eliminates the need to parse free-form text.
- Thinking budget control: `ThinkingConfig(thinking_budget=0)` is used for latency-sensitive calls (prompt suggestions, scene generation) where speed matters more than extended reasoning. This is a deliberate trade-off, not a default.
- Dual-model architecture: Text generation uses `gemini-2.5-flash` (optimised for speed and quality at scale). Image generation uses `imagen-3.0-generate-002` (photorealistic, painterly concept art). The correct model is used for each task, not one model for everything.
- Async-first: All Gemini text calls use the async SDK (`client.aio.models.generate_content`). Imagen calls, which lack a native async interface, are wrapped in the event loop's `run_in_executor` to avoid blocking the event loop under concurrent load.
- Prompt engineering: Each prompt encodes expert-level creative writing direction (e.g., the cinematic 4-part scene structure: Hook → World-building → Tension → Player anchor).

Image Generation - Imagen 3

EchoMind uses Imagen 3 (imagen-3.0-generate-002) for four distinct image generation pipelines:

| Pipeline | Trigger | Usage |
|---|---|---|
| Story Type Images | Seeded once | Visual identity for each story format |
| Genre / Sub-genre Images | Seeded once | Browse-page imagery |
| Story Cover Image | On story creation | Unique cinematic cover per story |
| Scene Image | On each scene generation | Per-scene visual illustration |

All generated images are uploaded to Google Cloud Storage and served via authenticated GCS URLs stored in Firestore.

Frontend: React + TypeScript on Firebase Hosting

The frontend is a React 18 / TypeScript single-page application built with Vite, deployed on Firebase Hosting.

Infrastructure Overview

| Layer | Technology |
|---|---|
| Frontend | React 18, TypeScript, Vite, Framer Motion, Tailwind CSS |
| Hosting | Firebase Hosting |
| Auth | Firebase Authentication |
| Backend | Python, FastAPI, uvicorn |
| Compute | Google Cloud Run (Docker, auto-scaling) |
| AI Text | Gemini 2.5 Flash via Vertex AI |
| AI Images | Imagen 3 via Vertex AI |
| Database | Cloud Firestore |
| File Storage | Google Cloud Storage |
| IaC / Seeding | Python scripts with ADC / service account auth |

Challenges We Ran Into

1. Structured AI Output Reliability

Getting Gemini to return valid, parseable JSON 100% of the time with the exact schema we expected required iteration. Early attempts using free-form text generation with regex parsing were fragile. The breakthrough was combining response_mime_type="application/json" with Pydantic model validation on the server side, so malformed responses are caught, logged, and raise a typed StoryGenerationError rather than silently corrupting story data.
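To make the validation step concrete, here is a minimal sketch of rejecting malformed model output with a typed error. It deliberately uses only the standard library (`json` plus a dataclass) in place of the Pydantic model the project uses, and the `SceneResponse` fields and the `parse_scene` helper are hypothetical; only the `StoryGenerationError` name comes from the description above.

```python
import json
from dataclasses import dataclass


class StoryGenerationError(Exception):
    """Typed error raised when model output does not match the schema."""


@dataclass(frozen=True)
class SceneResponse:
    # Hypothetical schema; the real fields are not given in this write-up.
    title: str
    scene_text: str


def parse_scene(raw: str) -> SceneResponse:
    """Parse a JSON string and validate its shape, failing loudly if wrong."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise StoryGenerationError(f"invalid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise StoryGenerationError("expected a JSON object")
    for field in ("title", "scene_text"):
        value = data.get(field)
        if not isinstance(value, str) or not value.strip():
            raise StoryGenerationError(f"missing or empty field: {field}")
    return SceneResponse(title=data["title"], scene_text=data["scene_text"])


scene = parse_scene('{"title": "The Lamp Room", "scene_text": "You wake."}')
```

The point of the typed error is that callers can catch one exception class and decide whether to retry or surface a failure, instead of persisting a half-formed scene.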

2. Imagen Prompt Engineering for Consistent Aesthetics

Generating images that feel tonally coherent with the story's genre required significant prompt engineering investment. A generic "fantasy landscape" prompt produces inconsistent, often mediocre results. We developed a structured prompt template that encodes: story type, genre, sub-genre, the user's original story concept, and a fixed aesthetic vocabulary ("cinematic book cover illustration", "painted concept-art style", "dramatic atmospheric lighting", "no text, no title, no UI elements") that reliably produces gallery-quality outputs.
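A structured template of this kind could be sketched as follows. The aesthetic phrases are the ones quoted above; the `CoverRequest` type, the `build_cover_prompt` helper, and the exact sentence layout are assumptions for illustration, not the project's actual template.

```python
from dataclasses import dataclass

# Fixed aesthetic vocabulary quoted in the write-up.
AESTHETIC = (
    "cinematic book cover illustration",
    "painted concept-art style",
    "dramatic atmospheric lighting",
    "no text, no title, no UI elements",
)


@dataclass(frozen=True)
class CoverRequest:
    # Hypothetical container for the inputs the template encodes.
    story_type: str
    genre: str
    sub_genres: tuple[str, ...]
    concept: str


def build_cover_prompt(req: CoverRequest) -> str:
    """Combine story metadata with the fixed style vocabulary."""
    subject = (
        f"{req.story_type} in the {req.genre} genre "
        f"({', '.join(req.sub_genres)}): {req.concept.strip()}"
    )
    return f"{subject}. Style: {', '.join(AESTHETIC)}."


cover_prompt = build_cover_prompt(
    CoverRequest("Novella", "Horror", ("Gothic",), " A lighthouse goes dark ")
)
```

Separating the variable story metadata from the constant style vocabulary keeps every image in the same visual register while the subject changes per story.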

3. Async Architecture Under Concurrent Generation

Imagen 3's Python SDK does not expose native coroutines. Running image generation synchronously on an async FastAPI event loop would block all other requests during generation. The solution, wrapping blocking calls in the event loop's `run_in_executor`, required careful thread-safety analysis, especially since the `google.genai` client object is shared across concurrent requests.
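The pattern can be sketched with the standard library alone. In the example below, `generate_image_blocking` is a hypothetical stand-in for a synchronous image SDK call (it just sleeps), and the `heartbeat` coroutine exists only to show that the event loop keeps servicing other work while the blocking call runs on a worker thread.

```python
import asyncio
import time
from functools import partial


def generate_image_blocking(prompt: str, delay: float = 0.2) -> bytes:
    """Stand-in for a synchronous image SDK call (hypothetical)."""
    time.sleep(delay)  # simulates network and generation latency
    return f"image-for:{prompt}".encode()


async def generate_image(prompt: str) -> bytes:
    """Run the blocking call on the default thread pool executor."""
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(
        None, partial(generate_image_blocking, prompt)
    )


async def heartbeat(stop: asyncio.Event) -> int:
    """Count ticks to show the event loop keeps running meanwhile."""
    ticks = 0
    while not stop.is_set():
        await asyncio.sleep(0.01)
        ticks += 1
    return ticks


async def main() -> tuple[bytes, int]:
    stop = asyncio.Event()
    beat = asyncio.create_task(heartbeat(stop))
    image = await generate_image("cover")
    stop.set()
    return image, await beat


image, ticks = asyncio.run(main())
```

If `generate_image_blocking` were called directly inside `main`, the heartbeat task would not get a chance to run until the call returned. Whether a shared client object is safe to use from several worker threads at once is a separate question that has to be checked against the SDK in use.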

4. Clean Architecture with AI Services

Integrating non-deterministic AI services into a clean architecture, where use cases should be deterministic and testable, required deliberate boundary design. AI calls live exclusively in the service layer, injected as abstract `GeminiServiceRepo` interfaces. Use cases orchestrate but never directly invoke AI. This means the entire business logic layer can be unit-tested with mock AI services, a discipline that paid dividends when debugging scene continuity bugs.

5. Maintaining Previous Scene Context While Generating New Scenes

Accomplishments That We're Proud Of

End-to-end AI storytelling in production: A user can go from "I want to write a horror novella" to reading and listening to an illustrated, AI-authored opening scene in under 60 seconds, on a live production URL.

Seven distinct Gemini API integrations in a single cohesive product, each thoughtfully engineered: prompt suggestions, opening scene generation, continuation scene generation, story cover image, per-scene images, story type visuals, and genre visuals. Not a demo, but a deployed application.

Cinematic prompt system: The system prompts for scene generation encode a 4-part narrative structure (Hook → World-building → Tension → Player anchor for opening; Action → Consequence → Escalation → Momentum for continuation). Players consistently describe the output as "feeling like a real story", not a chatbot response.

Clean architecture at scale: Every feature module (story, users, workspaces, subscriptions) is fully isolated. New story formats, new genres, and new AI models can be added without touching any existing use-case code. The architecture is designed to survive the product actually succeeding.

What We Learned

Gemini's structured output capability is underutilised. `response_mime_type="application/json"` combined with Pydantic validation makes AI outputs as reliable as a typed API endpoint. Most demos skip this and pay for it in production reliability.

Prompt engineering is software engineering. The difference between a good Gemini integration and a great one is the same as the difference between a good SQL query and a great one: precision, constraints, and understanding what the engine actually optimises for. Our scene system prompts went through eight revisions before producing consistently cinematic output.

Async Python + AI is genuinely hard.

Users don't want AI to write for them. Every UX iteration that added more AI automation at the cost of user agency made the experience worse. The final design is carefully calibrated: AI generates the world's response to the user's action. The user always holds the pen. This mirrors how the best game masters run tabletop RPGs.

What's Next for EchoMind: AI Storytelling

EchoMind is not a hackathon demo. It is a foundation for a product category.

Near-term:

Collaborative storytelling: Real-time multiplayer stories where two or more users co-author a narrative, with Gemini mediating the shared world state. Using Firestore's real-time listeners, each player's input is visible to all participants as it happens.

Gemini Live narration: Replace the current Web Speech API narration with Gemini's native audio generation for high-fidelity, emotionally expressive story narration. The infrastructure for this is already designed; it is a model swap in the service layer.

Story branching visualiser: A visual graph of every scene node in a story, showing the branching tree of choices the user has made, built on top of the already-persisted scene graph in Firestore. Each node carries its AI-generated image as a thumbnail. Users can explore alternative realities.

Mobile application: The React frontend's responsive design and Zustand state architecture are already mobile-ready. A React Native port sharing the same backend API is the natural next step.

Longer-term:

Multimodal input: Use Gemini's multimodal input to let users describe scenes by uploading images or voice notes, and have the story world respond to those inputs, not just text. A user could photograph a forest and say "I walk through here", and EchoMind would ground the next scene in that specific visual world.

Export to EPUB / PDF: Full illustrated story export with scene images, cover art, and formatted prose, using Gemini to generate a final editorial pass that smooths the narrative into a coherent single-author voice.

EchoMind for Education: A dedicated mode for schools where teachers set story constraints (historical period, reading level, curriculum topic) and students co-author narratives that are factually grounded by Gemini's knowledge. Creative writing meets curriculum.
