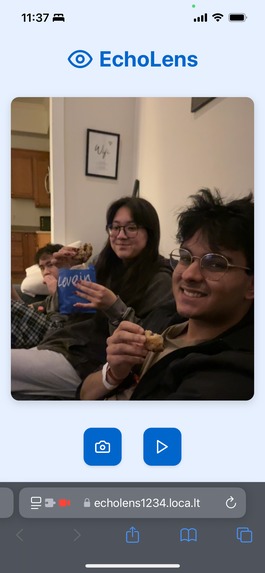

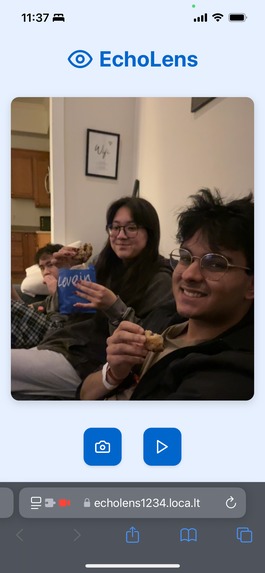

What it does

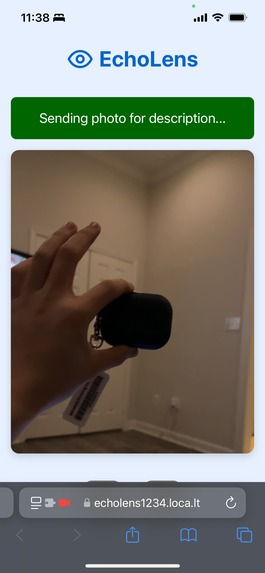

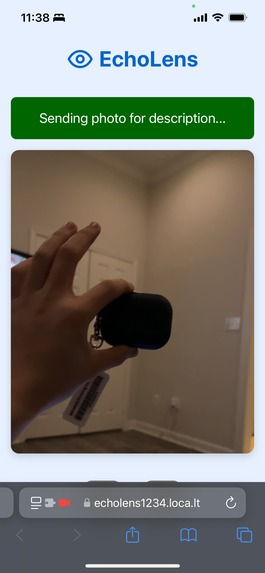

- Describes your surroundings and the objects in them aloud, using your phone's camera.

How we built it

- Full Stack Web-App

- Flask Backend

- React Frontend

- Ngrok to tunnel frontend requests to the backend over HTTPS

- Local Tunnel to expose the frontend to the internet over HTTPS

Challenges we ran into

- We found that on phones, the camera's video stream can't be used unless the page is served over HTTPS.

- Ngrok allows only one tunnel on the free tier. We needed two tunnels (one for the frontend, one for the backend), which is why we used both Ngrok and Local Tunnel.

- Debugging a web app meant for mobile was extra difficult because the browser console isn't readily accessible on a phone.
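The HTTPS requirement comes from browsers exposing camera access only in secure contexts, and an HTTPS page generally cannot call a plain-HTTP backend either, which is why both sides needed a tunnel. The following is a minimal sketch of that rule, not our actual code: the function names `camera_allowed` and `backend_call_allowed` are hypothetical, and the checks are a simplification of what real browsers do.

```python
from urllib.parse import urlparse

# Hostnames that browsers generally treat as secure even over plain HTTP.
LOCAL_HOSTS = {"localhost", "127.0.0.1", "::1"}


def camera_allowed(page_url: str) -> bool:
    """Approximate whether a page at page_url counts as a secure context."""
    parsed = urlparse(page_url)
    host = parsed.hostname or ""
    if parsed.scheme == "https":
        return True
    # Plain HTTP is only acceptable for the local machine, not a LAN address.
    return parsed.scheme == "http" and host in LOCAL_HOSTS


def backend_call_allowed(page_url: str, api_url: str) -> bool:
    """Approximate the mixed-content rule: an HTTPS page needs an HTTPS API."""
    if urlparse(page_url).scheme == "https":
        return urlparse(api_url).scheme == "https"
    return True
```

Under these assumptions, a phone that opens the dev server through a LAN address such as `http://192.168.1.20:3000` is not a secure context, so the camera is unavailable until the frontend is reached through an HTTPS tunnel.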

Accomplishments that we're proud of

- It works

- It remembers previous pictures and the differences between them, so if things change or move, it points that out.
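One way to picture the change tracking is as a comparison between the objects found in consecutive captures. The sketch below is illustrative only and assumes each picture has already been reduced to a mapping from object label to a rough position; the `SceneMemory` class and that data format are hypothetical, not a description of how EchoLens stores its history.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SceneMemory:
    # Objects seen in the previous capture: label -> rough position.
    previous: Dict[str, str] = field(default_factory=dict)

    def update(self, current: Dict[str, str]) -> List[str]:
        """Compare the new capture with the last one and list the changes."""
        changes = []
        for label, position in current.items():
            if label not in self.previous:
                changes.append(f"{label} appeared ({position})")
            elif self.previous[label] != position:
                changes.append(
                    f"{label} moved from {self.previous[label]} to {position}"
                )
        for label in self.previous:
            if label not in current:
                changes.append(f"{label} is gone")
        # Remember this capture for the next comparison.
        self.previous = dict(current)
        return changes
```

Calling `update` once per capture returns short phrases that could then be read aloud; an empty list means nothing changed since the last picture.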

What we learned

- Full stack development in a team

- Tunneling

- HTTP Methods

What's next for EchoLens

- Full deployment

- Adjusting the automatic capture intervals per user
