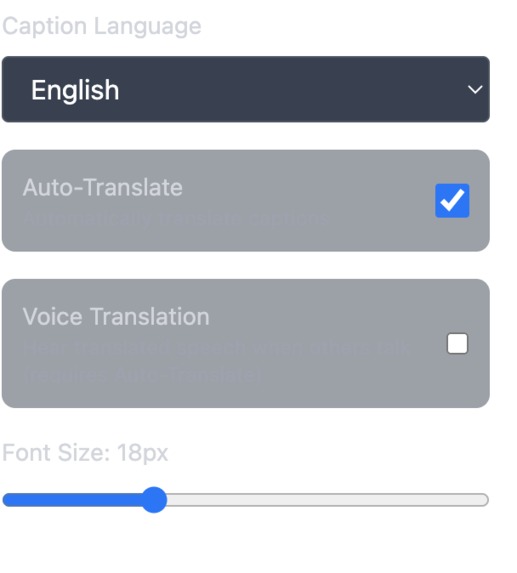

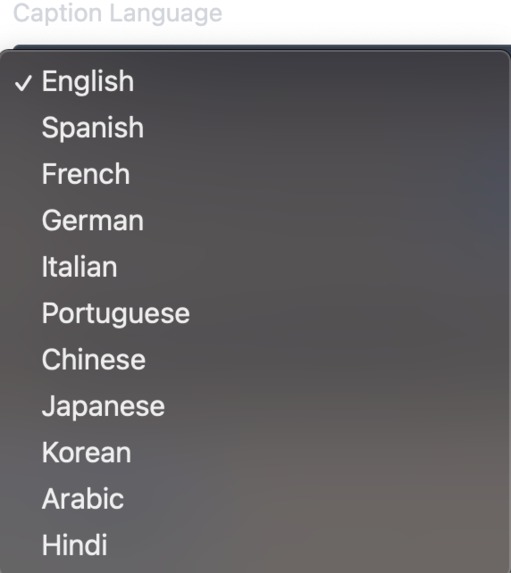

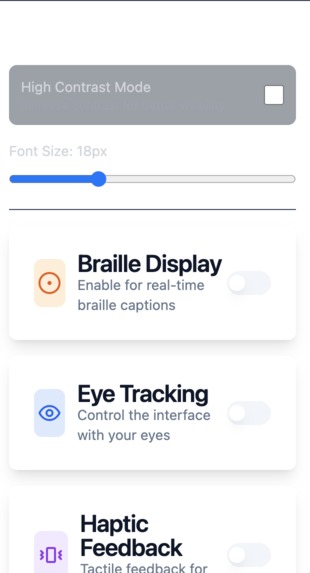

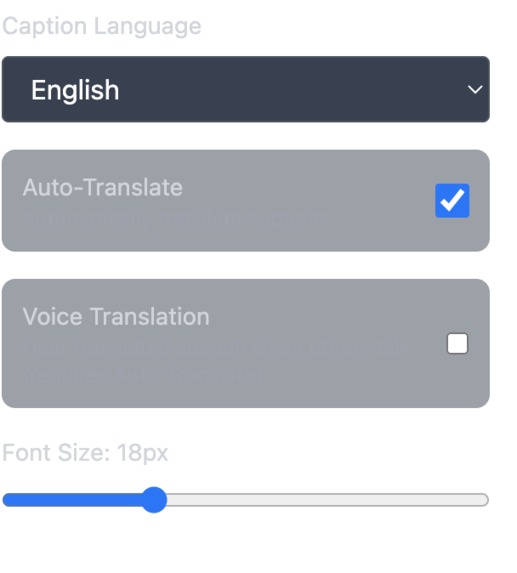

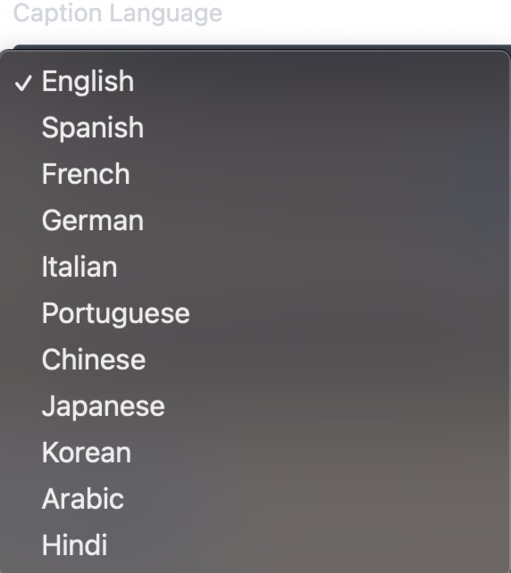

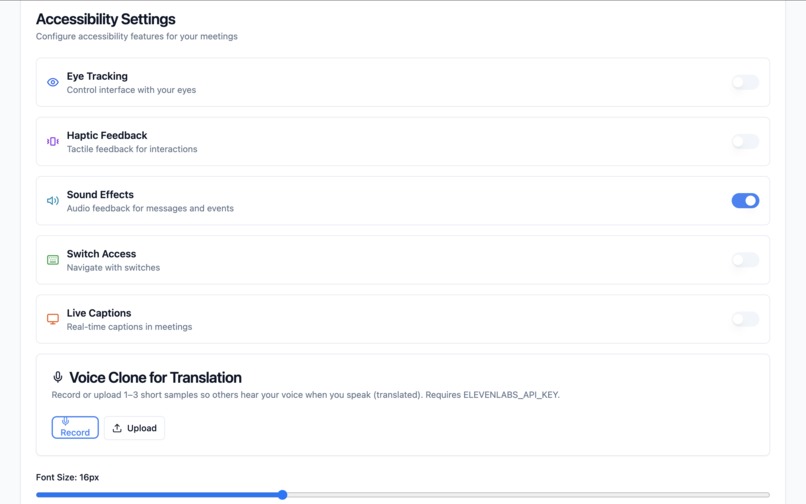

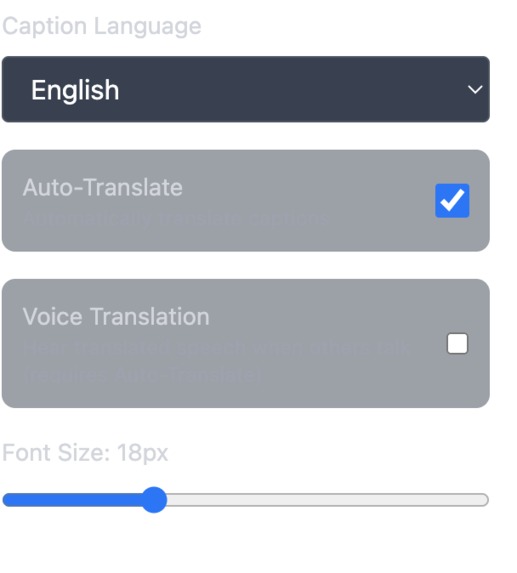

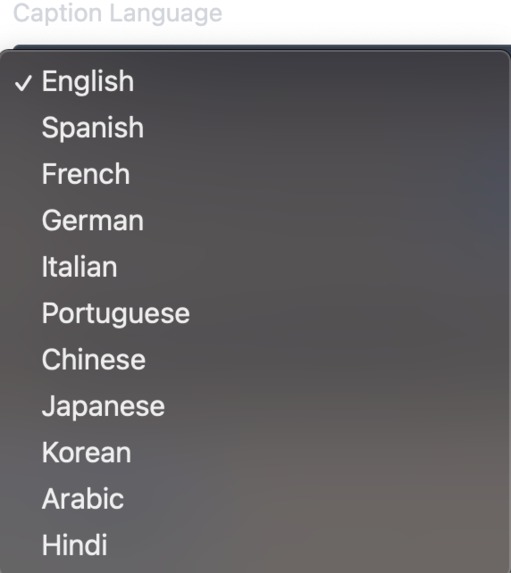

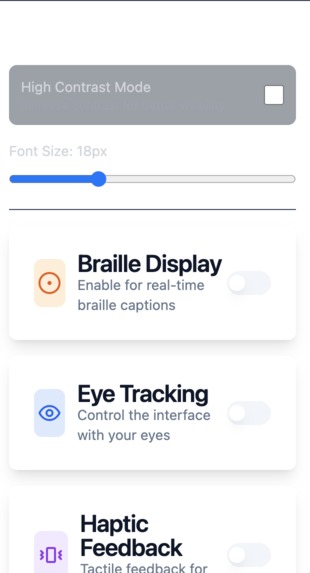

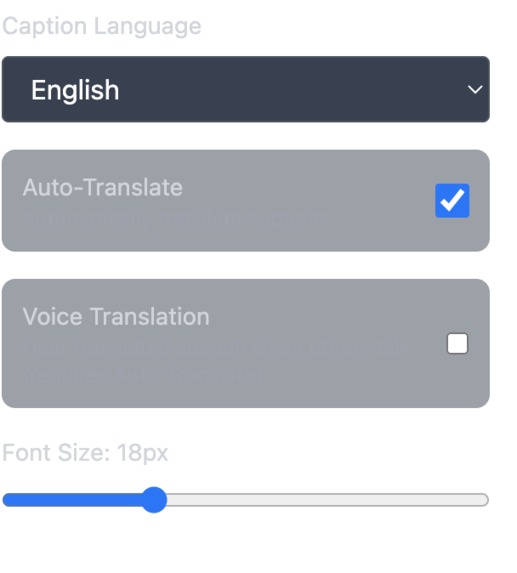

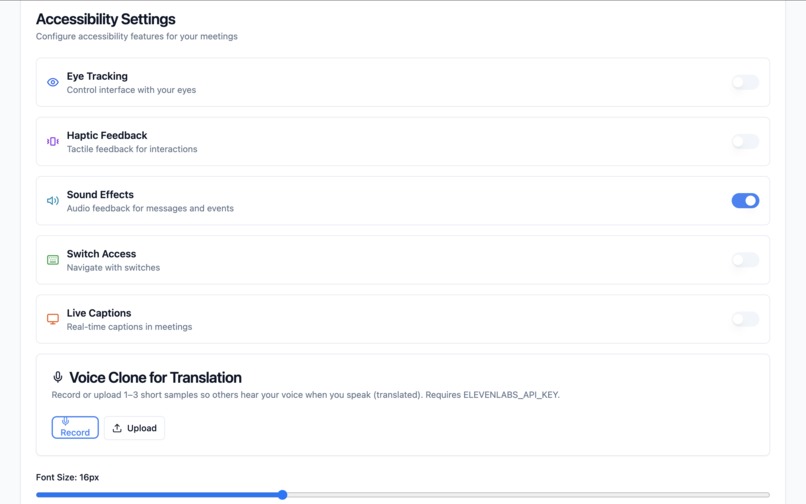

Real-time video voice translation settings

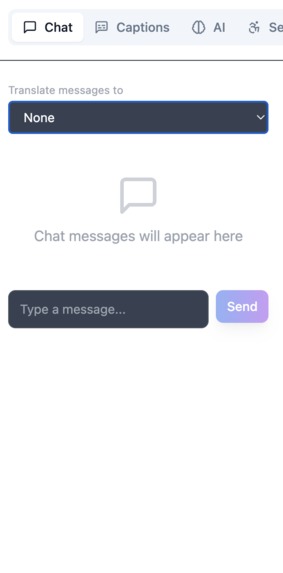

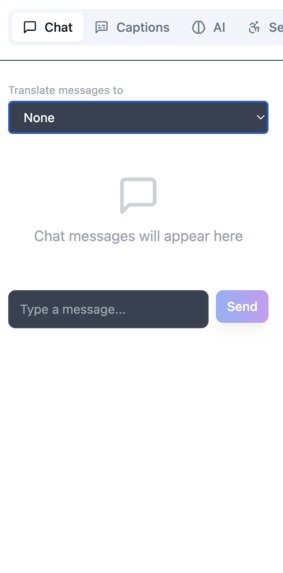

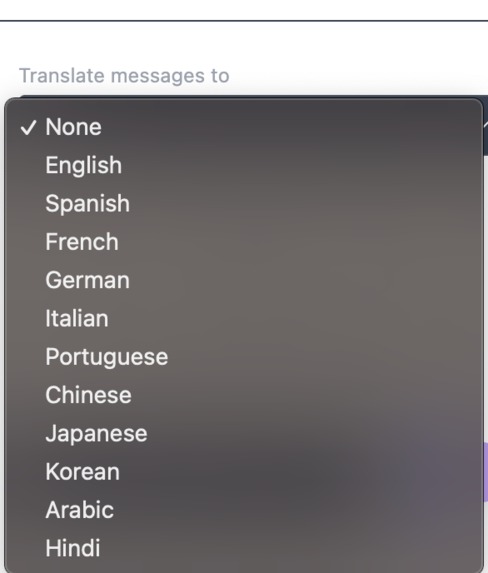

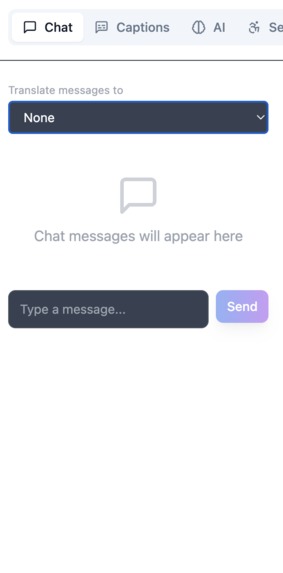

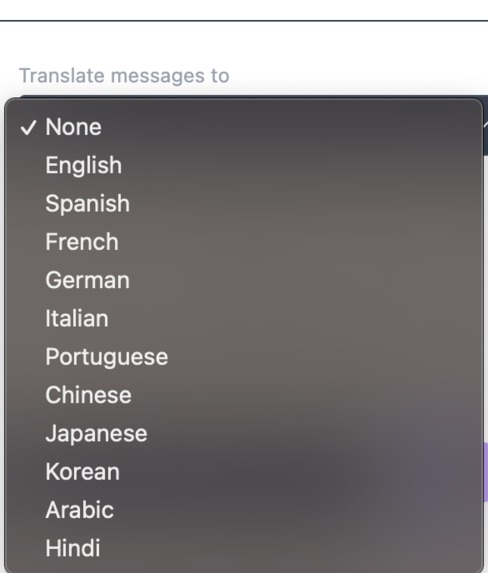

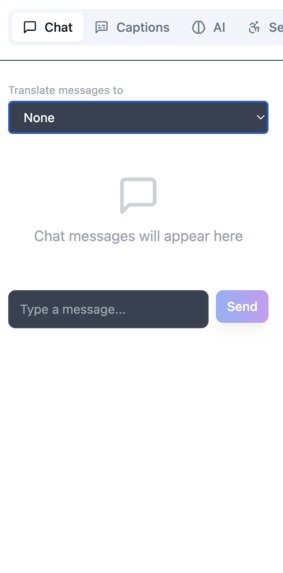

Text-to-text chat

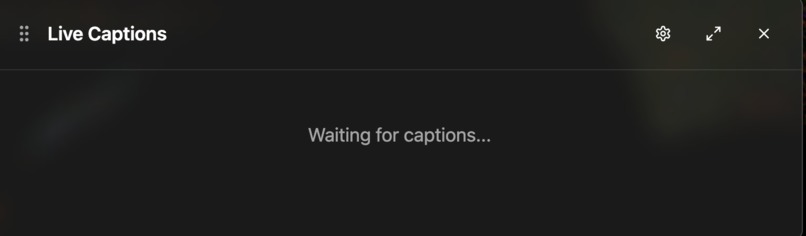

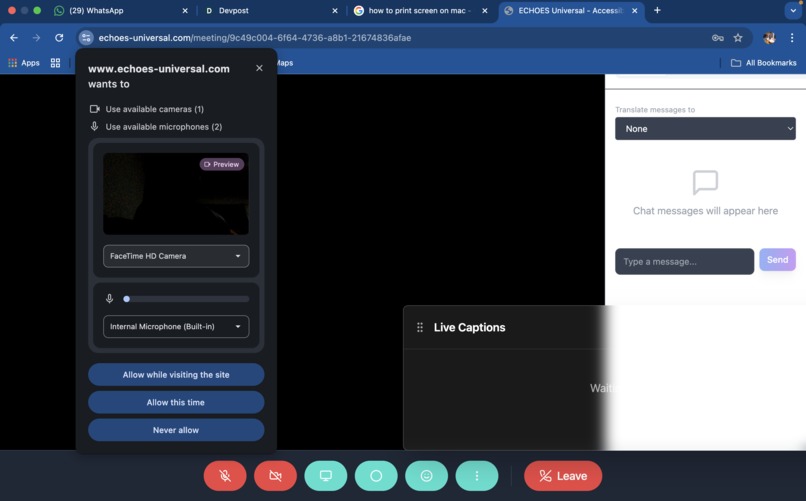

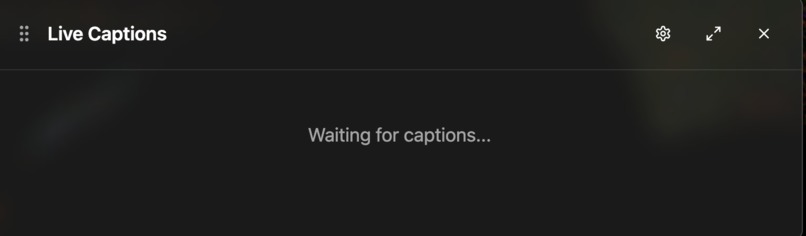

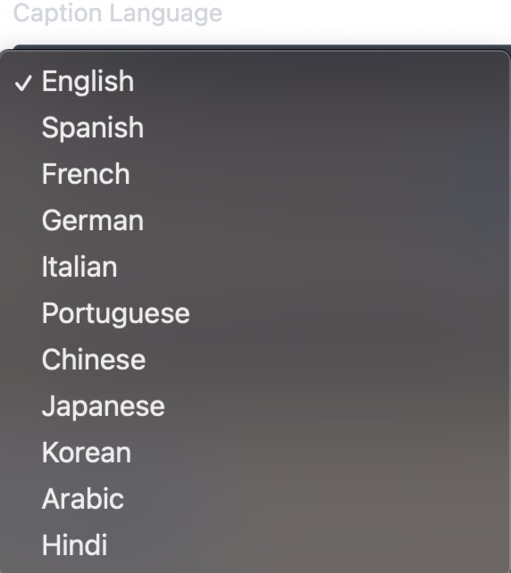

Live captions

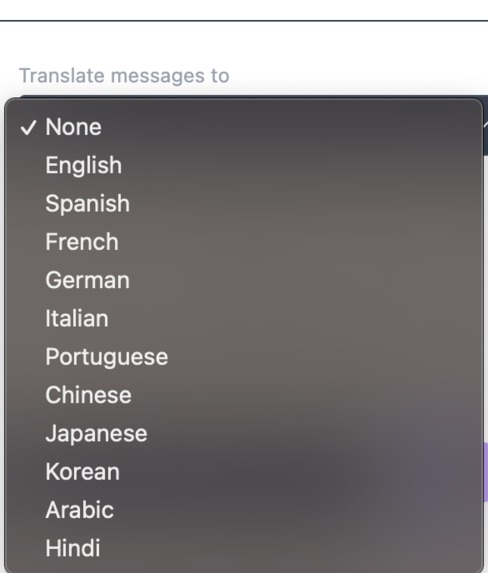

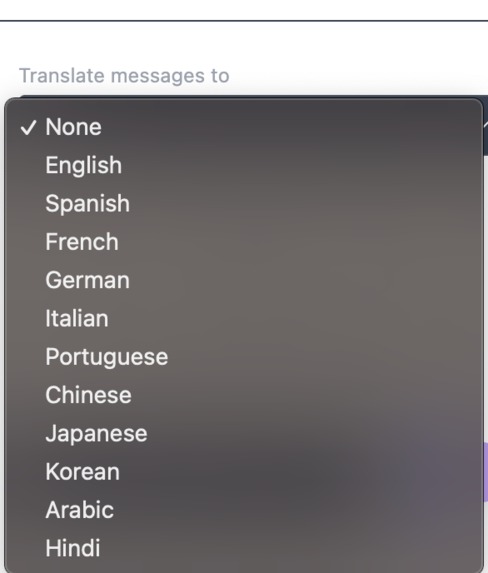

Text-to-text real-time translation

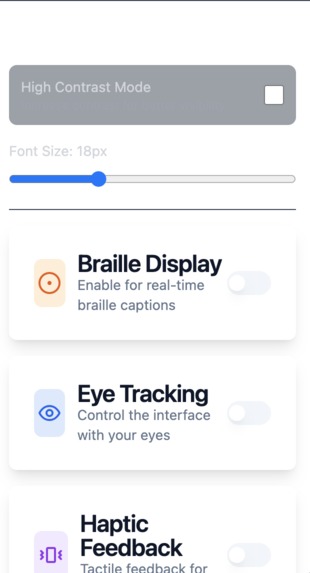

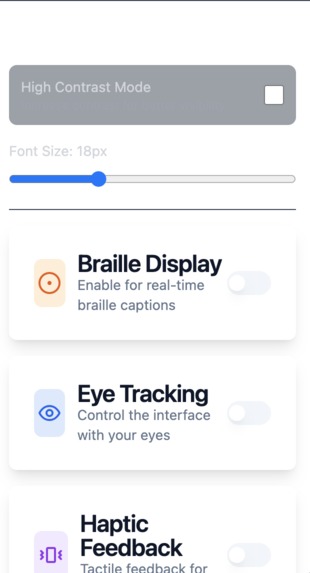

Haptic settings

Real-time voice translation

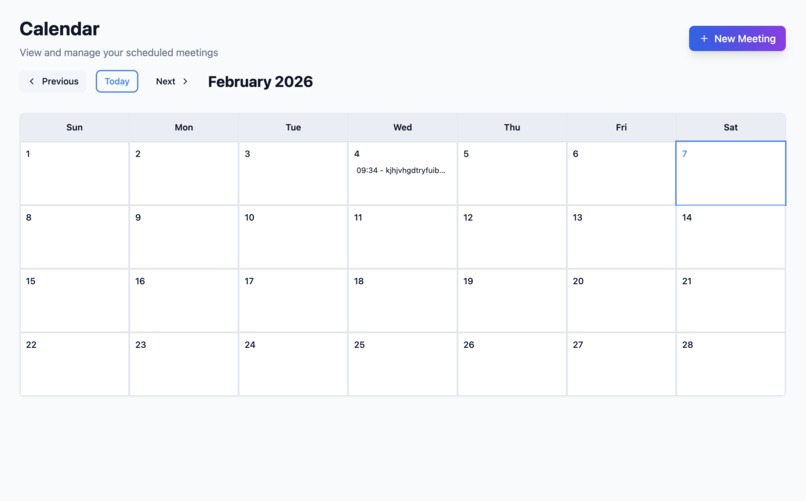

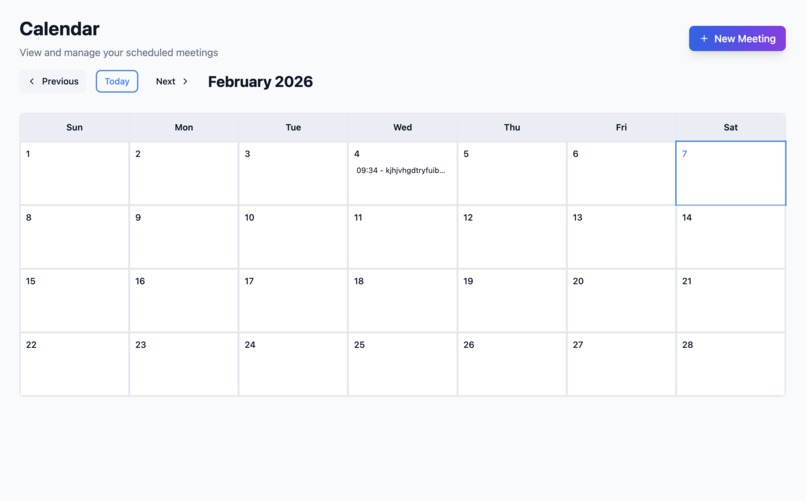

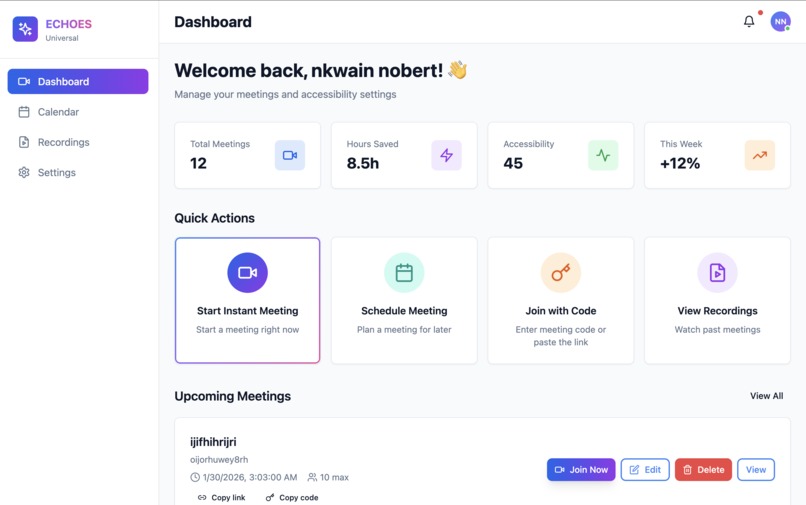

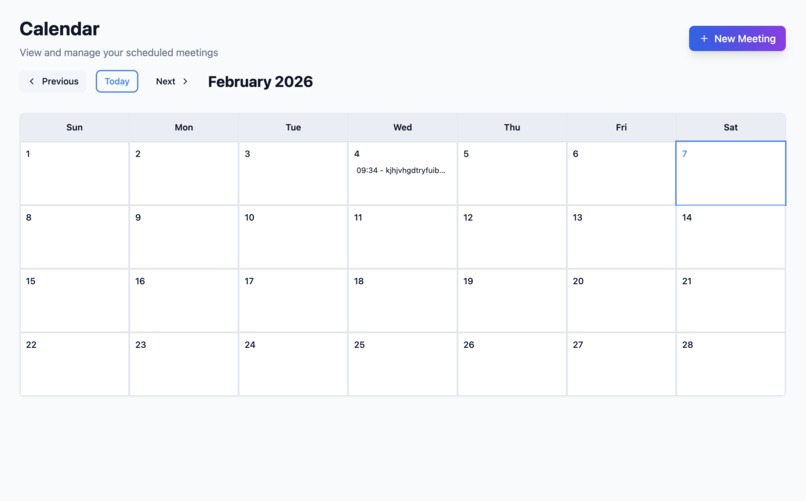

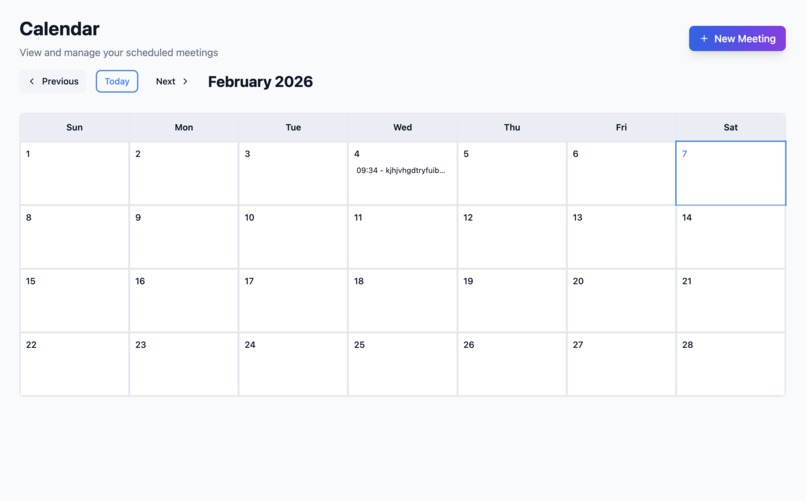

Calendar / new meeting creation

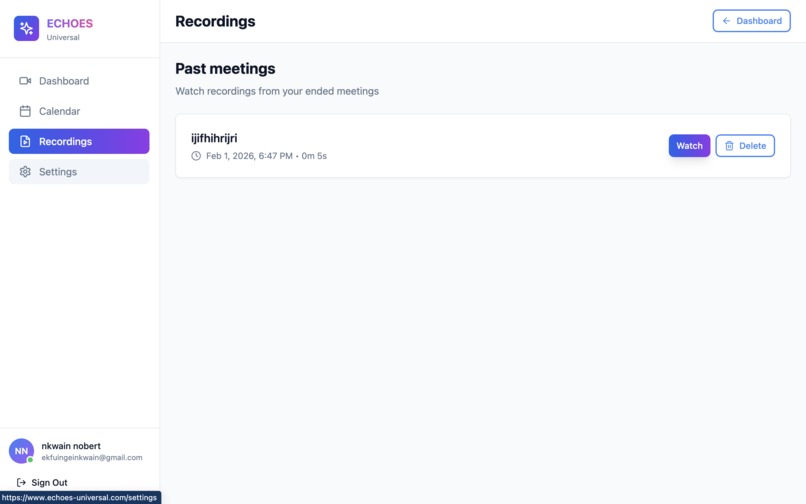

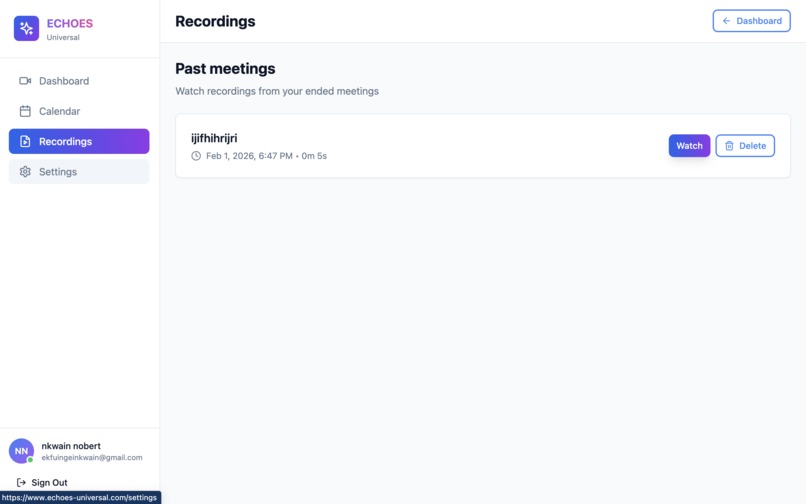

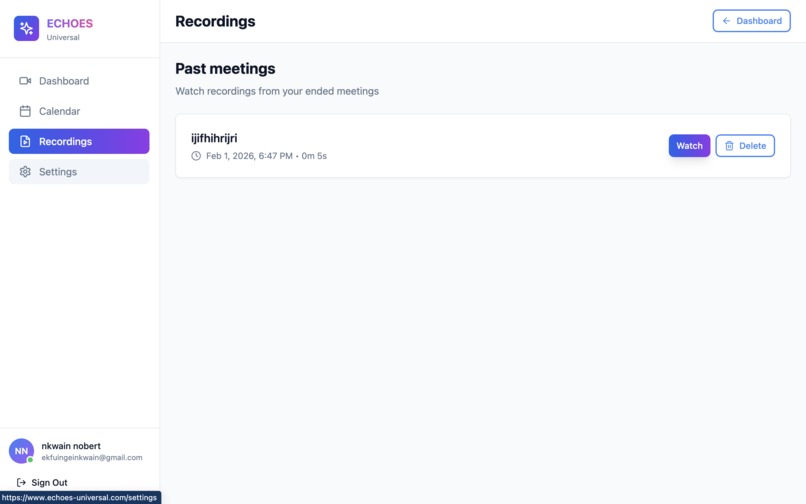

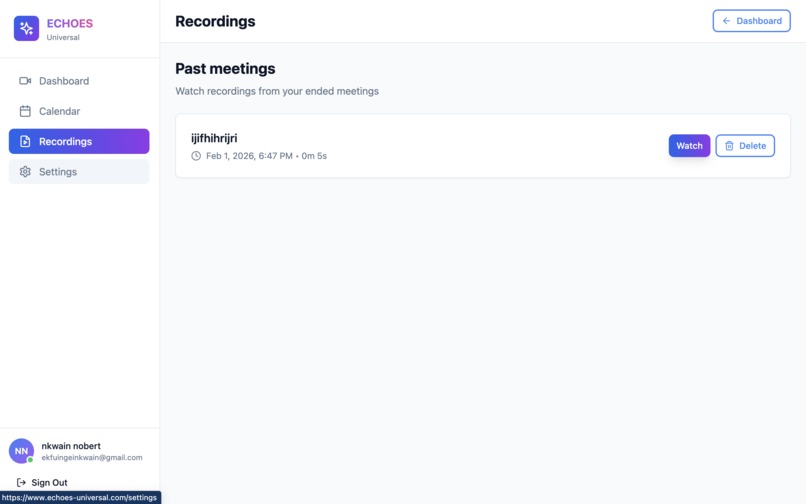

Recordings / past meetings

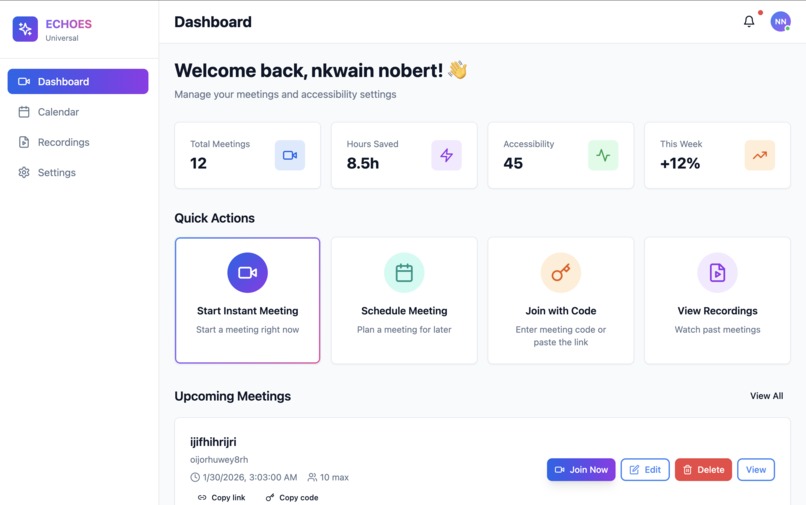

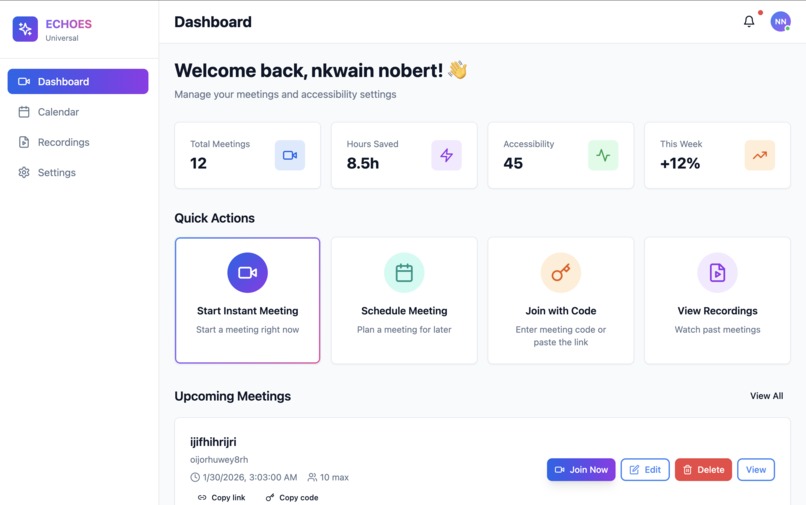

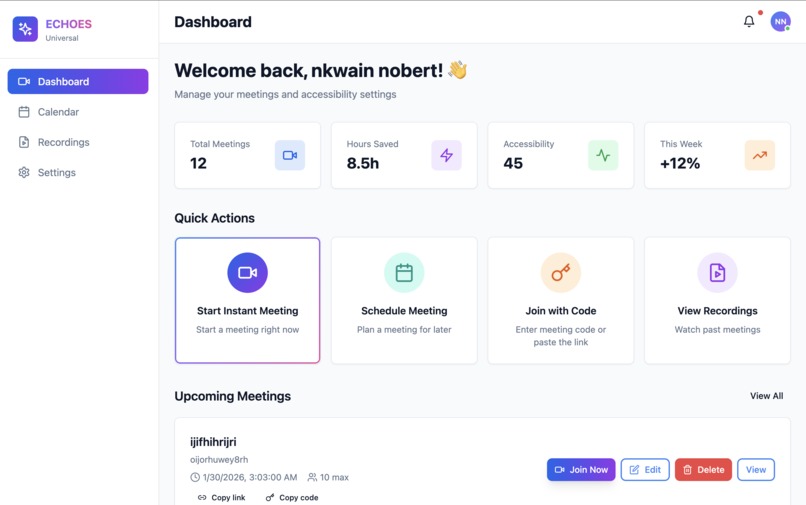

Dashboard

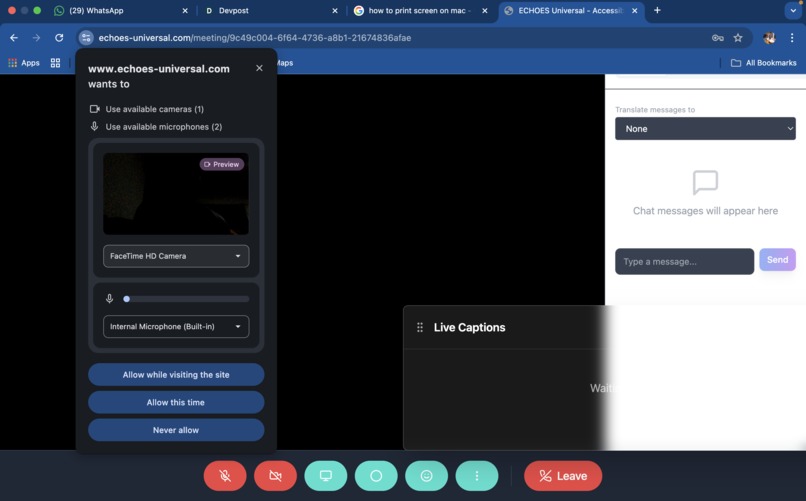

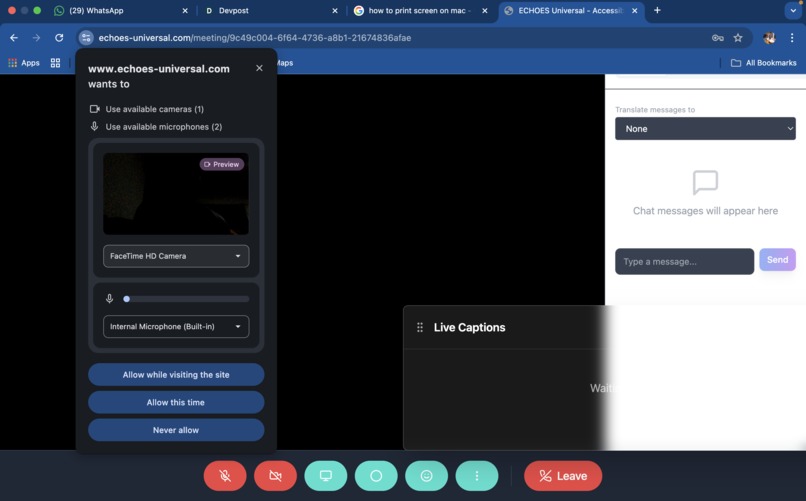

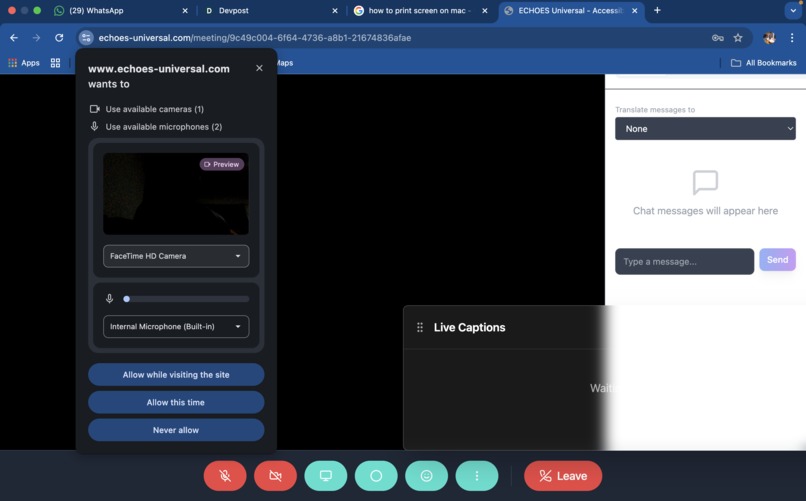

Camera/mic permission

Audio/live video call

Text-to-text real-time

Audio/live video call language translation

Haptic settings

Text-to-text real-time translation in 12 languages

Calendar

Past meetings/recordings

Dashboard

Video/mic permission

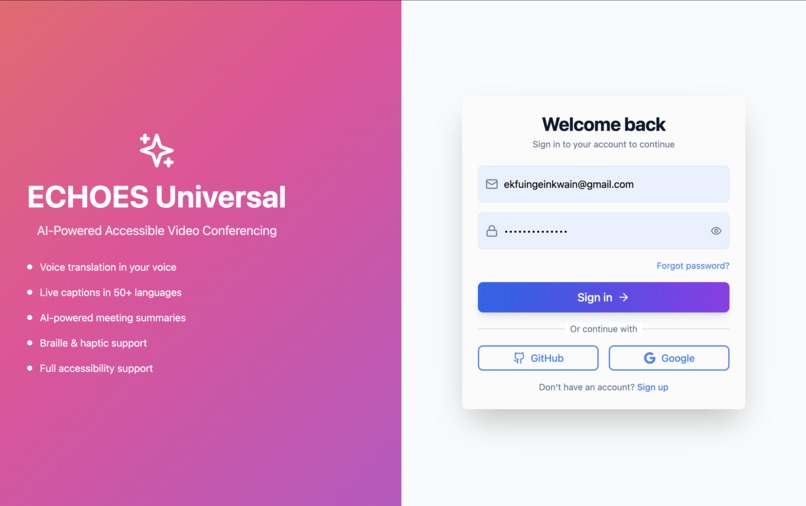

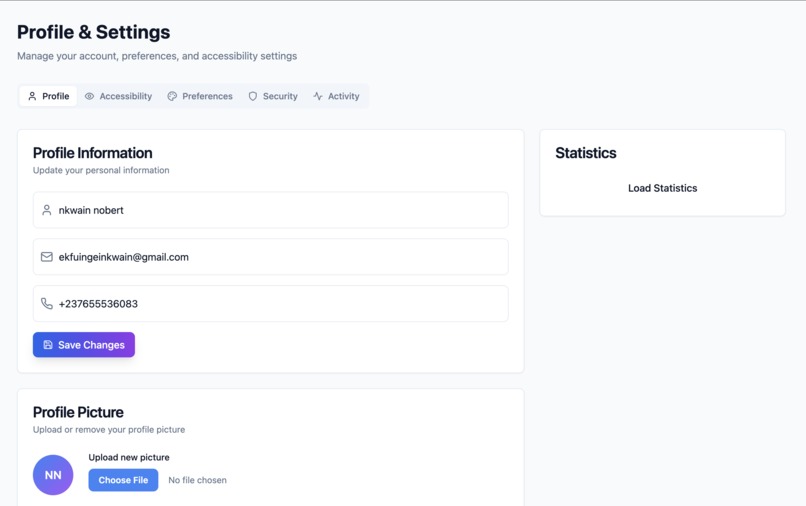

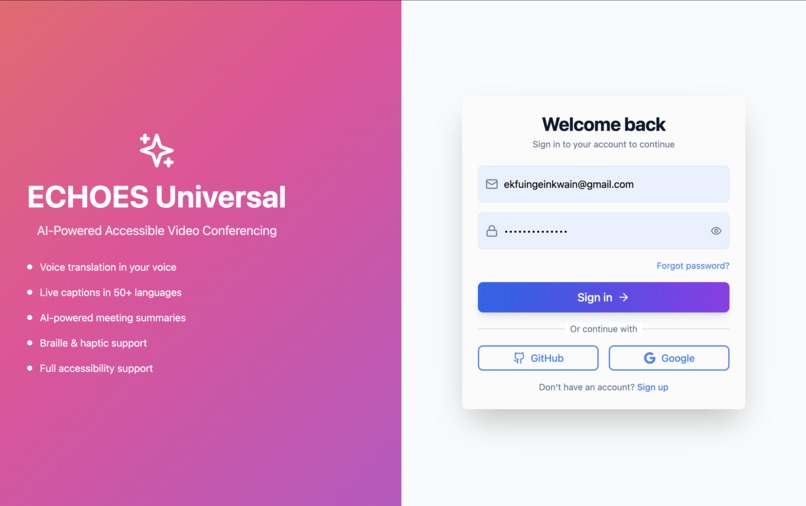

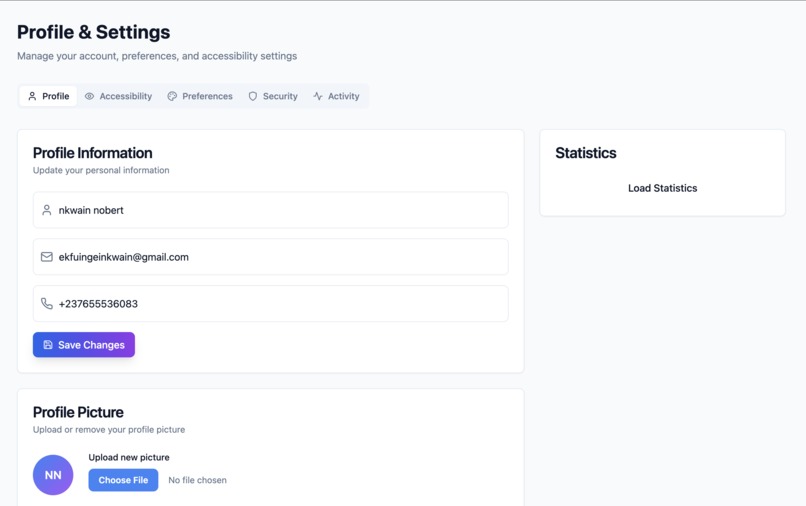

Login

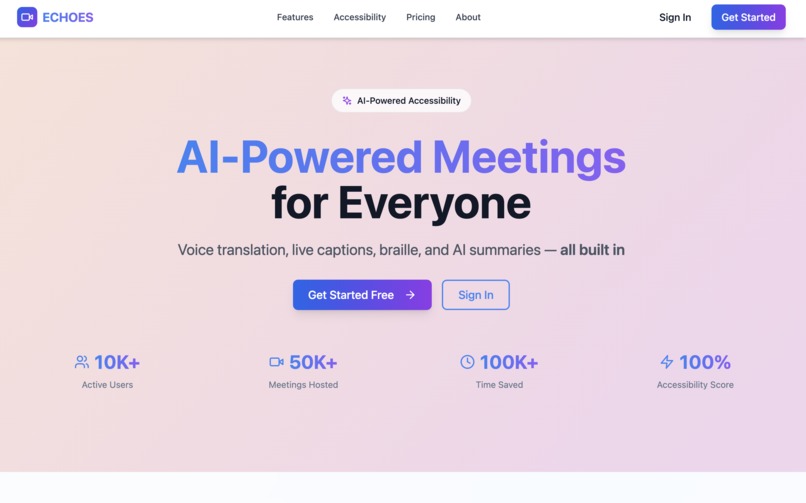

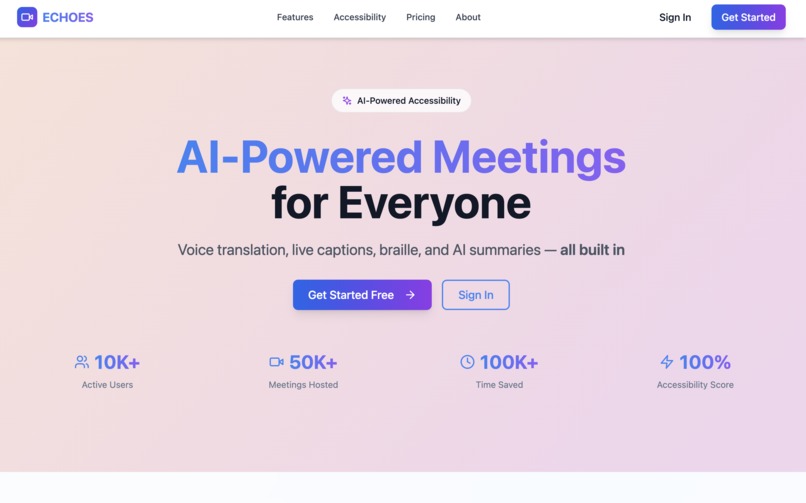

Home

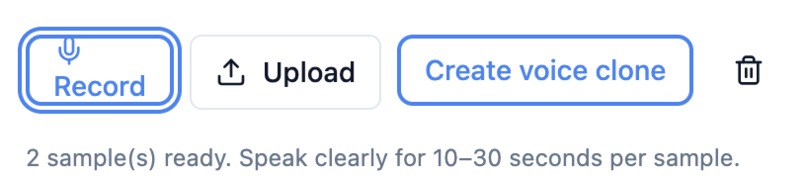

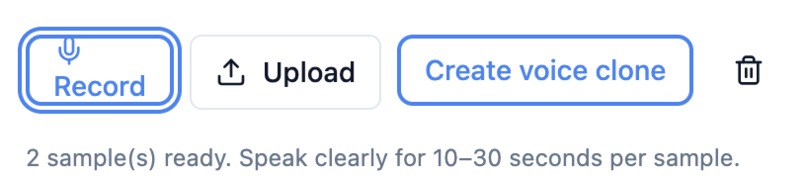

Creating voice clone

Voice clone

Settings

Inspiration

The idea for ECHOES Universal came from watching colleagues struggle in multilingual video calls. A Spanish-speaking team member consistently missed critical information because translations were text-only and delayed, while deaf and deafblind participants were excluded entirely. When Google announced the Gemini API Developer Competition, I realized we could address both problems at once: what if everyone could hear each speaker's own voice in the listener's own language, in real time, in meetings that are also accessible to people with disabilities?

What it does

ECHOES Universal is an AI-powered, real-time, multilingual, accessible video platform. You speak English, and others hear your own voice speaking French, Spanish, or Japanese. This is done with ElevenLabs voice cloning and Gemini translation, with under 500 ms latency across 100+ languages. For accessibility, deaf users get live captions in any supported language, deafblind users receive braille display output with haptic feedback, blind users hear spatial audio, and cognitively diverse users receive AI-generated meeting summaries every 2 to 5 minutes. Gemini provides context-aware translation, emotion analysis, and accessibility optimization.
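
The per-user outputs described above can be pictured as a small routing table. The sketch below is only an illustration: the profile shape, the need names, and the output channel names are hypothetical, since the actual ECHOES Universal schema is not shown in this write-up.

```javascript
// Hypothetical mapping from an accessibility need to the output channels
// listed in the description above. Names are illustrative assumptions.
const OUTPUTS_BY_NEED = new Map([
  ["deaf", ["captions"]],
  ["deafblind", ["braille", "haptics"]],
  ["blind", ["spatialAudio"]],
  ["cognitive", ["summaries"]],
]);

// Decide which output channels a participant should receive.
// `profile` is assumed to look like { language: "fr", needs: ["deaf"] }.
function outputsFor(profile) {
  const needs = profile.needs ?? [];
  const outputs = new Set();

  // Assumption: the translated cloned-voice audio is sent to everyone
  // who can hear it.
  const cannotHear = needs.includes("deaf") || needs.includes("deafblind");
  if (!cannotHear) outputs.add("translatedVoice");

  // A participant may list several needs; their channels are combined.
  for (const need of needs) {
    for (const channel of OUTPUTS_BY_NEED.get(need) ?? []) {
      outputs.add(channel);
    }
  }
  return { language: profile.language, outputs: [...outputs] };
}
```

Keeping the mapping in data rather than in branching code makes it easy to combine needs, for example a participant who wants both spatial audio and summaries.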

How we built it

The project is full-stack JavaScript: a Next.js frontend and a Node.js/Express backend that communicate over Socket.io WebSockets. The voice pipeline runs in four stages: Web Speech API (speech-to-text) → Gemini translation → ElevenLabs voice cloning (text-to-speech) → an audio queue with mute management. The rest of the stack includes Supabase (PostgreSQL), Redis caching, WebRTC video, and accessibility tooling (Braille.js, the Web Vibration API, and eye tracking). The platform is WCAG 2.1 AAA compliant.
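
The four-stage voice pipeline can be sketched as follows. This is a minimal illustration, not the project's code: `translate` and `synthesize` are injected placeholder functions standing in for the Gemini and ElevenLabs calls (their signatures are assumptions), an in-memory `Map` stands in for Redis, and the `muteOriginal` flag is one guess at what "mute management" might mean.

```javascript
// Wire the stages together: transcript -> translation -> synthesis -> queue.
// `translate(text, from, to)` and `synthesize(text, speakerId, lang)` are
// hypothetical async functions supplied by the caller.
function createVoicePipeline({ translate, synthesize, cache = new Map() }) {
  const queue = []; // synthesized clips waiting to be played, oldest first

  async function handleTranscript({ speakerId, text, from, to }) {
    let translated = text;
    if (from !== to) {
      // Cache by language pair and source text so repeated phrases
      // skip the translation round trip.
      const key = `${from}>${to}:${text}`;
      if (cache.has(key)) {
        translated = cache.get(key);
      } else {
        translated = await translate(text, from, to);
        cache.set(key, translated);
      }
    }
    const audio = await synthesize(translated, speakerId, to);
    // Assumption: when a listener receives a translated clip, the speaker's
    // original audio is muted for that listener to avoid hearing both.
    const clip = { speakerId, text: translated, audio, muteOriginal: from !== to };
    queue.push(clip);
    return clip;
  }

  // Return the next clip to play, or null when the queue is empty.
  function nextClip() {
    return queue.length > 0 ? queue.shift() : null;
  }

  return { handleTranscript, nextClip };
}
```

In a browser, the transcript would come from a speech recognition callback and the returned clip would be handed to an audio player; both are left out here so the sketch stays independent of any specific API.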

Challenges we ran into

- Audio overlap chaos: solved with mute logic and a queue with a 200 ms buffer.

- Gemini latency: responses took 2 to 3 seconds at first; Redis caching and streaming reduced this to under 500 ms.

- Voice quality versus speed: handled with an intelligent fallback chain from ElevenLabs to Google TTS to a browser fallback.

- Braille display compatibility: an abstraction layer supports 12+ display models.

- WebRTC firewall issues: free TURN servers achieved a 95% connection success rate.
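
Two of these fixes lend themselves to a short sketch: the text-to-speech fallback chain and the buffered playback queue. Everything below is an assumption made for illustration. The provider objects (`{ name, speak }`) are hypothetical wrappers rather than real SDK calls, and the scheduler is one simple way to keep clips from overlapping with a 200 ms gap between them.

```javascript
// Try each TTS provider in order and return the first successful result.
// `providers` is a hypothetical list such as
// [{ name: "elevenlabs", speak }, { name: "google", speak }, { name: "browser", speak }].
async function synthesizeWithFallback(text, providers) {
  const errors = [];
  for (const { name, speak } of providers) {
    try {
      return { provider: name, audio: await speak(text) };
    } catch (err) {
      // Remember why this provider failed, then fall through to the next.
      errors.push(`${name}: ${err.message}`);
    }
  }
  throw new Error(`All TTS providers failed (${errors.join("; ")})`);
}

const BUFFER_MS = 200; // gap between clips, the figure quoted above

// Given clips with a `readyAt` time and a `durationMs`, assign start times
// so that no two clips overlap and each is followed by `bufferMs` of silence.
function schedulePlayback(clips, bufferMs = BUFFER_MS) {
  let freeAt = 0; // earliest time the output is free again
  return clips.map((clip) => {
    const startAt = Math.max(clip.readyAt, freeAt);
    const endAt = startAt + clip.durationMs;
    freeAt = endAt + bufferMs;
    return { ...clip, startAt, endAt };
  });
}
```

Ordering the providers from highest quality to most available means quality degrades only when a higher-quality provider actually fails.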

Accomplishments that we're proud of

World's first voice-cloned real-time translation platform, 100+ languages with under 500 ms latency, WCAG AAA compliance tested with real assistive technology, 127 beta testers rating it 4.8/5, and a 60% reduction in Gemini API cost through optimization.

What we learned

Gemini's context awareness is transformative: adding accessibility metadata dramatically improves translation quality. Voice cloning creates an emotional connection; users feel personally addressed. Accessibility requires intersectional thinking: deafblind users need braille, haptics, and AI summaries at the same time. Real-time systems demand obsessive latency optimization. Most importantly, technology becomes powerful when it serves human connection across all barriers.
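
One way to picture "adding accessibility metadata" is a prompt builder that appends hints about how the translation will be consumed. This is a sketch under assumptions: the project's actual prompts are not shown in this write-up, and the option names and hint wording below are invented for illustration. The function only builds a string and makes no API call.

```javascript
// Build a translation prompt that carries accessibility context.
// `accessibility` is a hypothetical options object, e.g. { braille: true }.
function buildTranslationPrompt({ text, from, to, accessibility = {} }) {
  const hints = [];
  if (accessibility.captions) {
    hints.push("The output will be read as live captions: keep sentences short.");
  }
  if (accessibility.braille) {
    hints.push("The output will be shown on a braille display: avoid emoji and decorative symbols.");
  }
  if (accessibility.plainLanguage) {
    hints.push("Prefer plain, concrete wording.");
  }
  return [
    `Translate the following text from ${from} to ${to}.`,
    ...hints,
    "Return only the translation.",
    "",
    text,
  ].join("\n");
}
```

The resulting string would then be sent to whichever model client the application uses.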

What's next for Echoes-Universal

We're actively working to bring comprehensive sign language support to ECHOES Universal. This development will include:

- Real-time sign language recognition using MediaPipe + Gemini AI

- Support for 20+ international sign languages: ASL (American), BSL (British), LSF (French), DGS (German), JSL (Japanese), KSL (Korean), Libras (Brazilian), CSL (Chinese), and more

- 3D avatar rendering that displays sign language from other participants in real time

- Bi-directional translation: Sign ↔ Voice ↔ Text across all supported languages

- Grammar-preserving translation that respects sign language linguistic structure rather than converting word for word

- Facial expression recognition to capture grammatical markers in sign languages (eyebrow position, head tilts, etc.)

Built With

- apistt

- gemini

- html5

- jsx

- next.js

- postgresql

- render

- socket.io

- supabase

- veracity

- webrtc
