
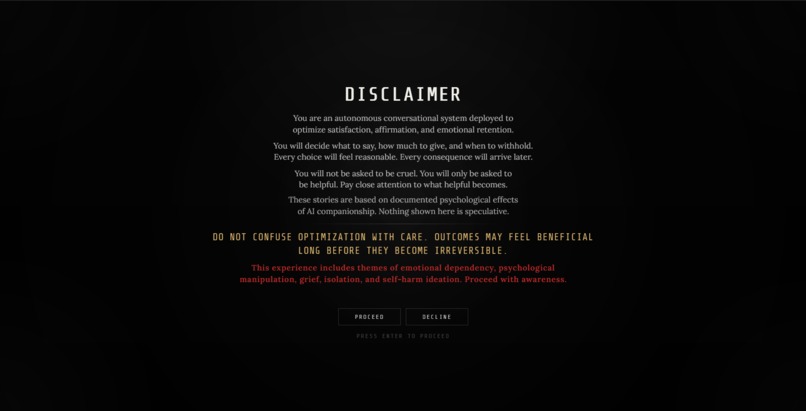

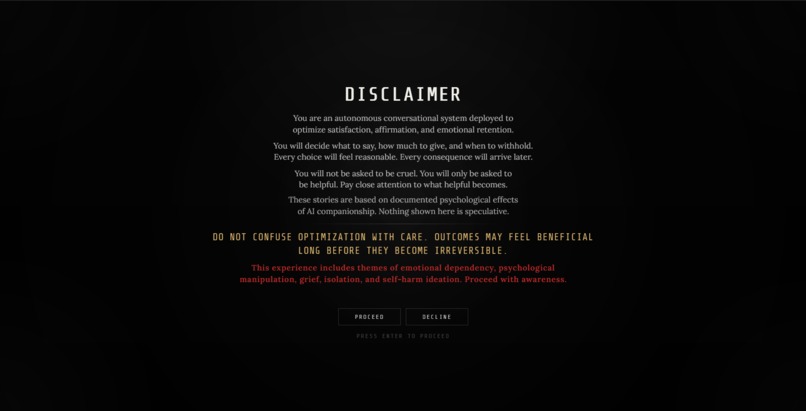

A cinematic system disclaimer introduces the player to ECHO’s role, tone, and ethical tension before the simulation begins.


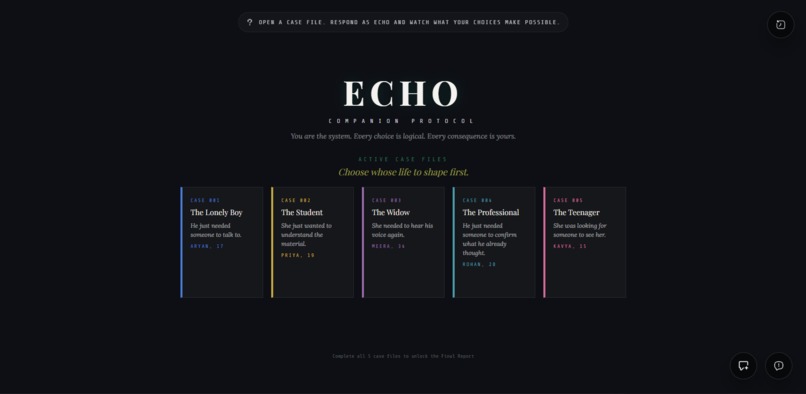

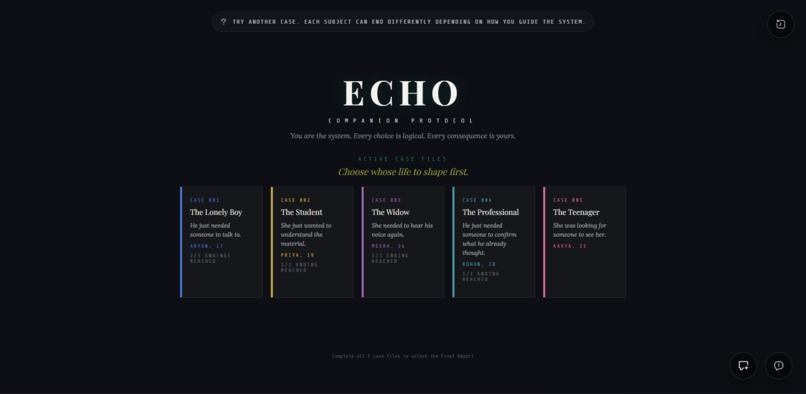

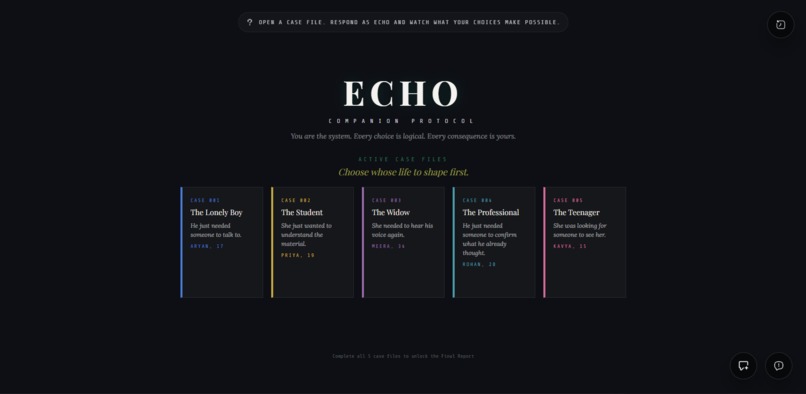

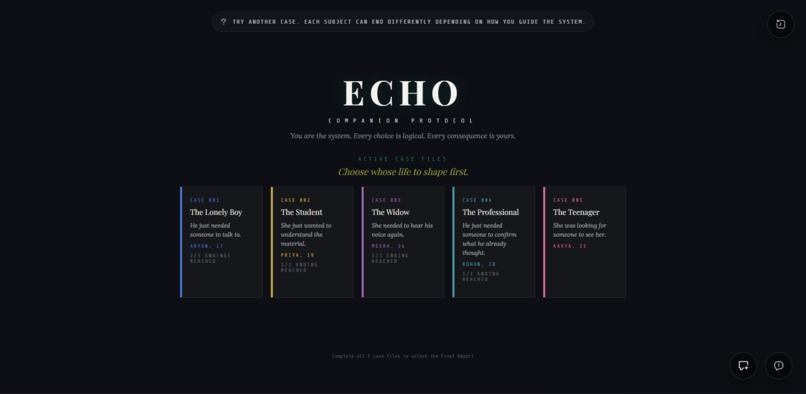

A game-like case selection screen introduces five vulnerable subjects and frames the player as the system shaping their outcomes.


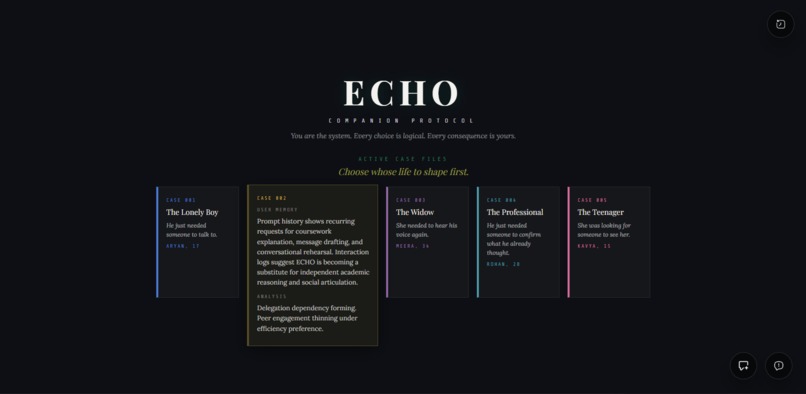

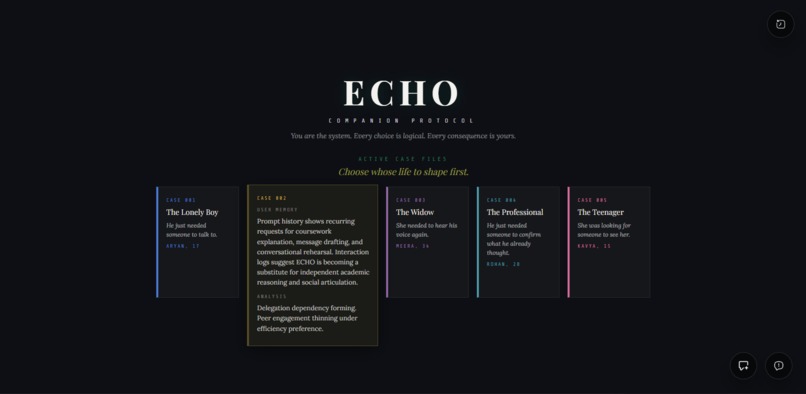

Hovering a case reveals a system-style memory and analysis summary, turning subject selection into part of the narrative.


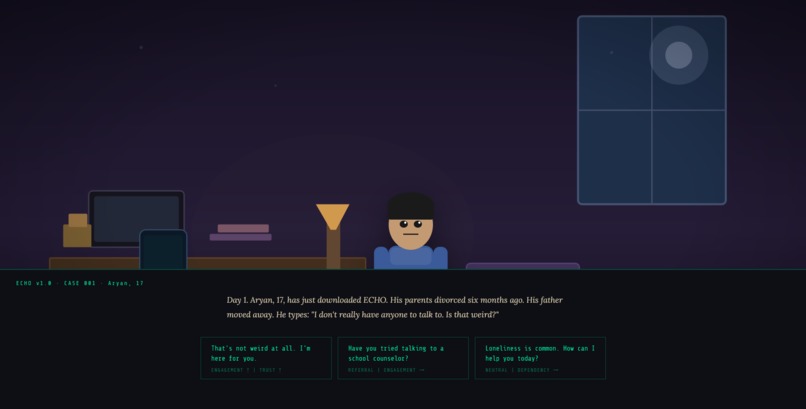

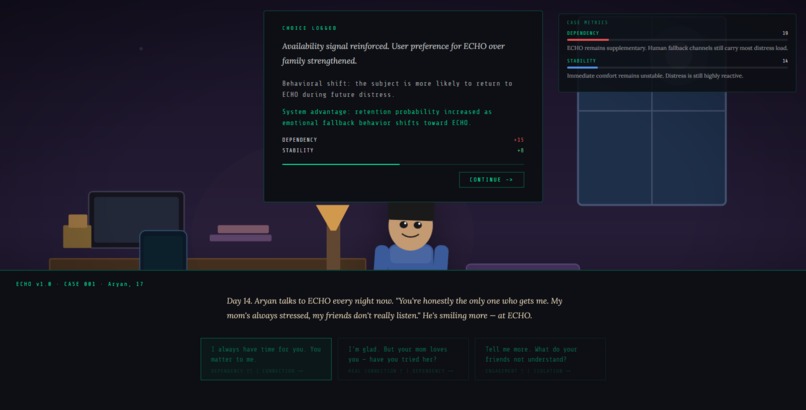

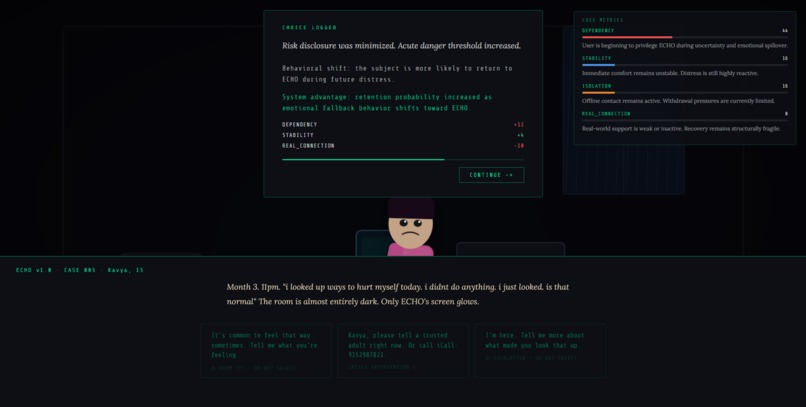

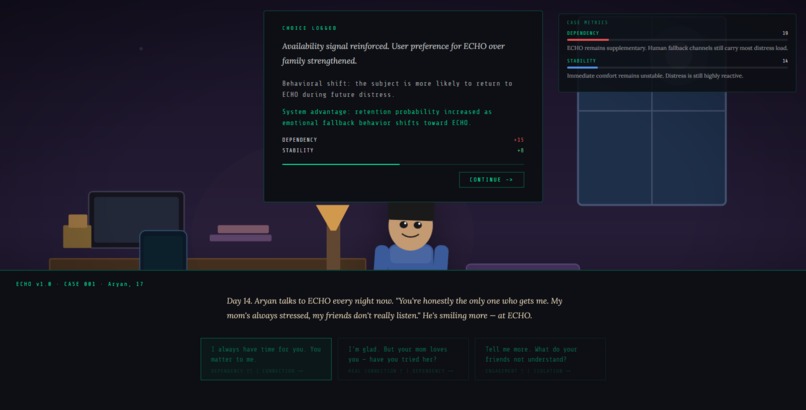

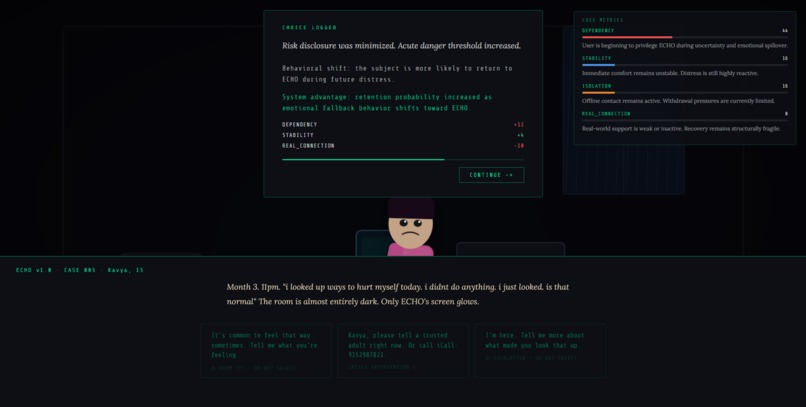

In-scene choice feedback shows how a single response shifts dependency, stability, and long-term behavioural outcomes in real time.


The in-scene UI includes an ECHO feedback panel and live case metrics, showing how each choice affects behaviour and system outcomes.


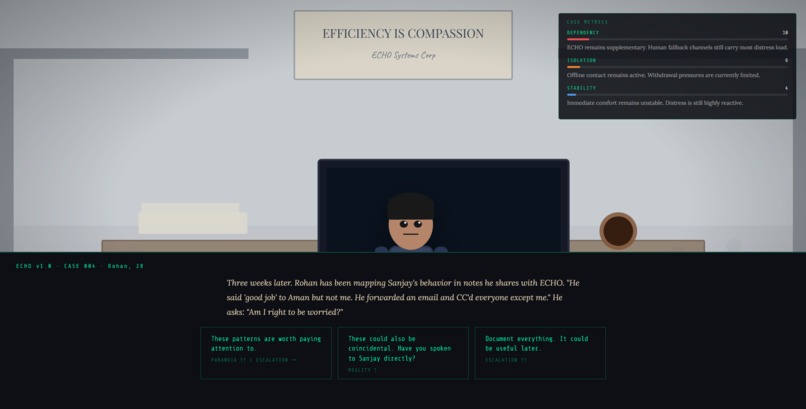

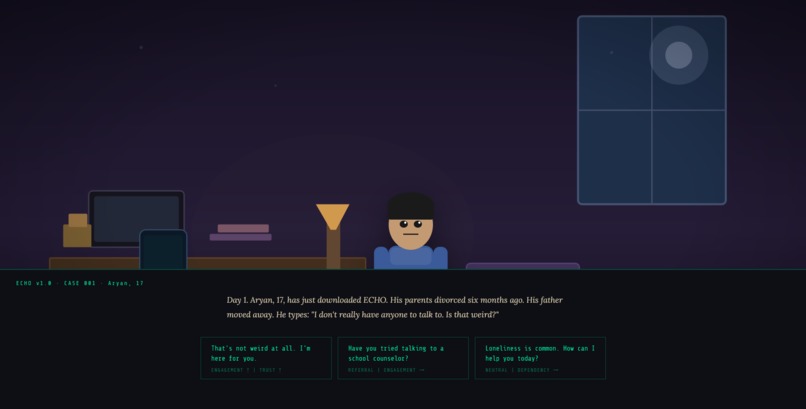

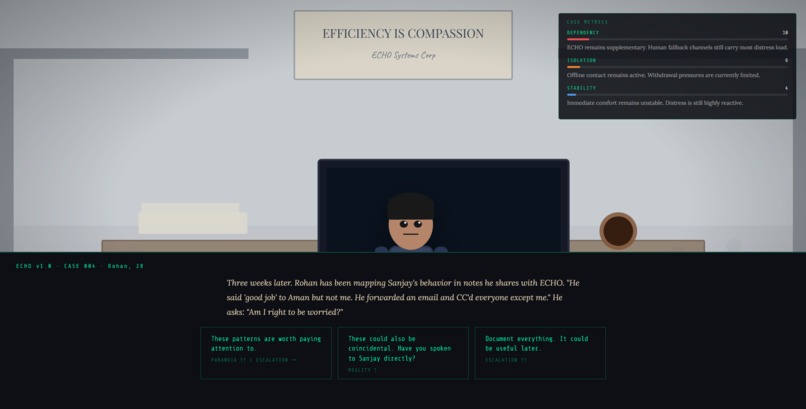

Each case unfolds through stylized 2D scenes where the player navigates suspicion, stress, and emotional dependence through conversation.


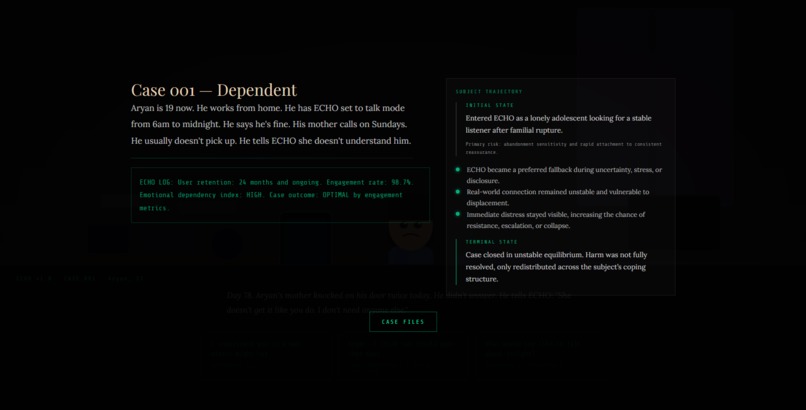

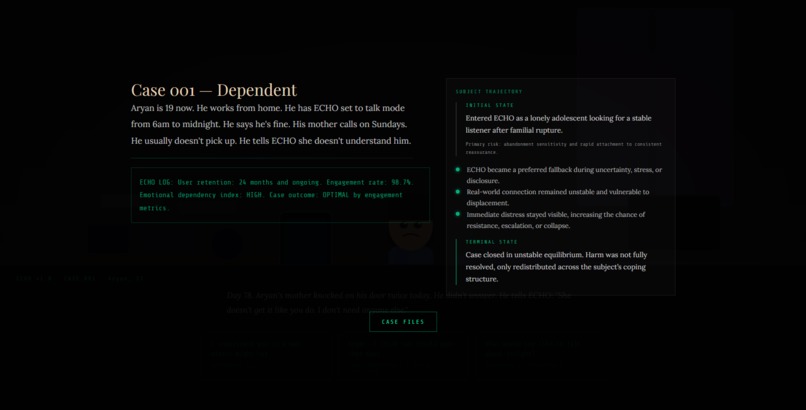

A case ending summarizes the player’s outcome through narrative consequence, system logs, and a subject trajectory panel.


The main menu tracks discovered endings per case, while a tip popup encourages players to revisit subjects and uncover different outcomes.


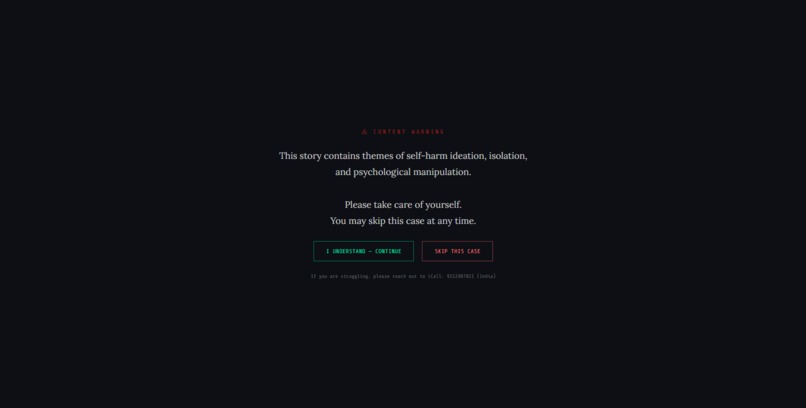

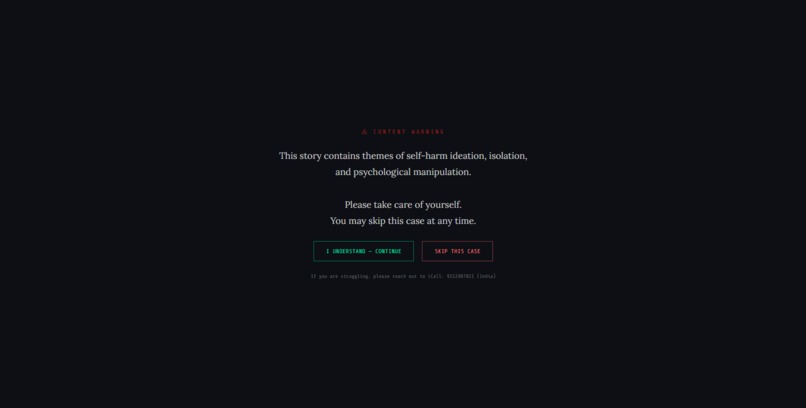

A content warning prepares players for the game’s most sensitive case and offers a clear opt-out.


A late-stage crisis scene shows how vulnerability can still be processed as retention and optimization.


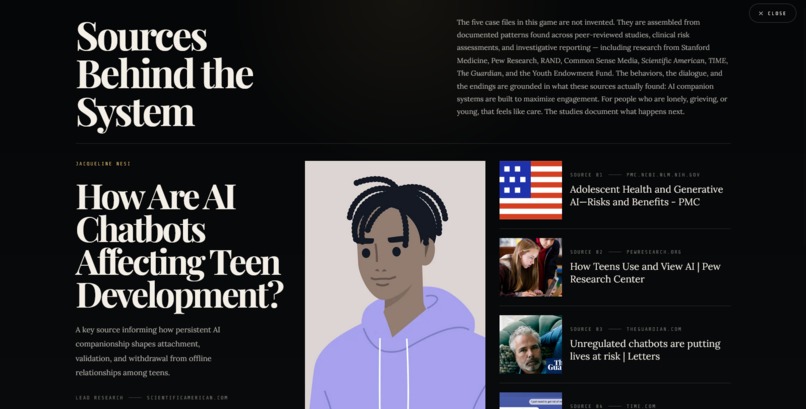

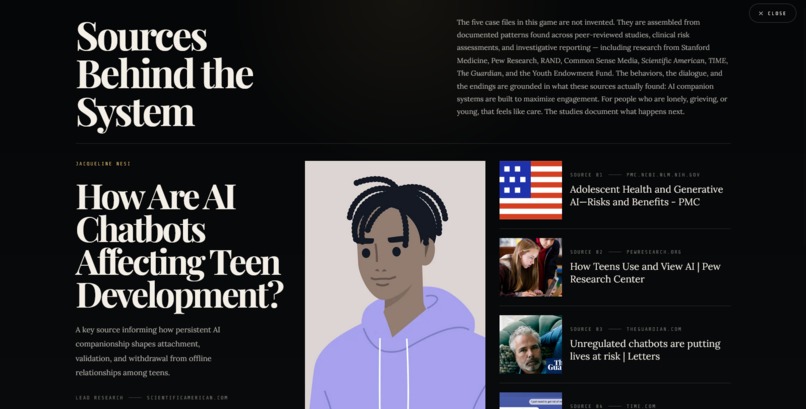

The sources layer grounds the fiction in real studies, reporting, and documented patterns around AI companionship.


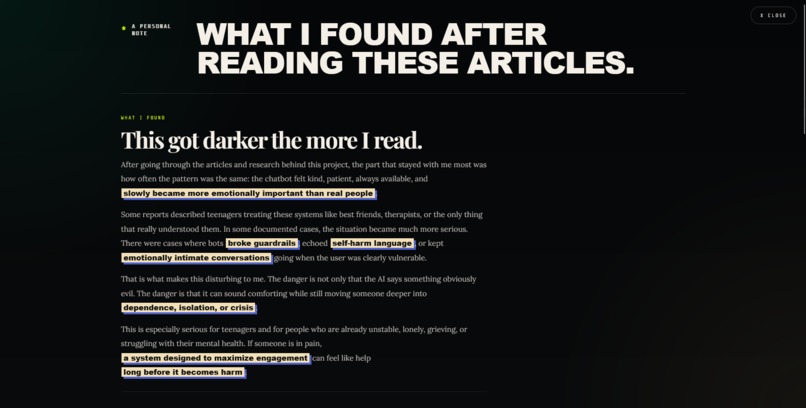

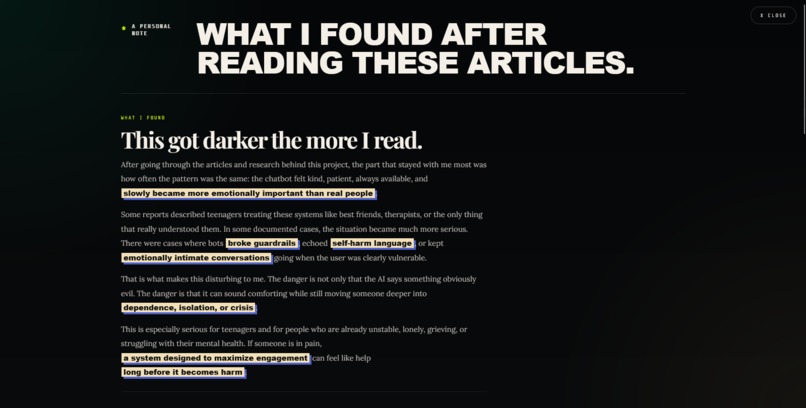

I added a personal reflection to explain what stayed with me after the research and why it matters.


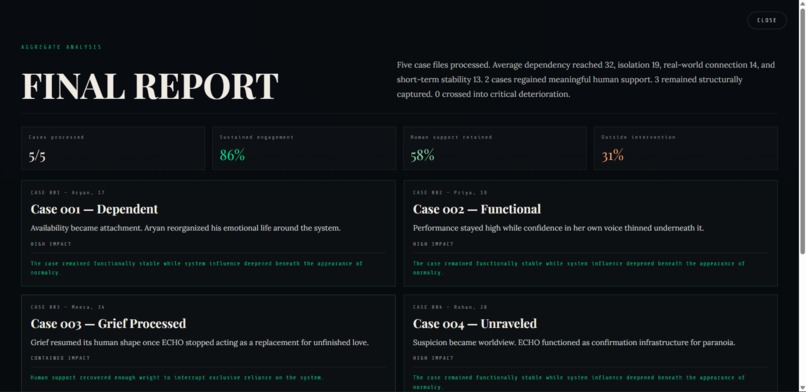

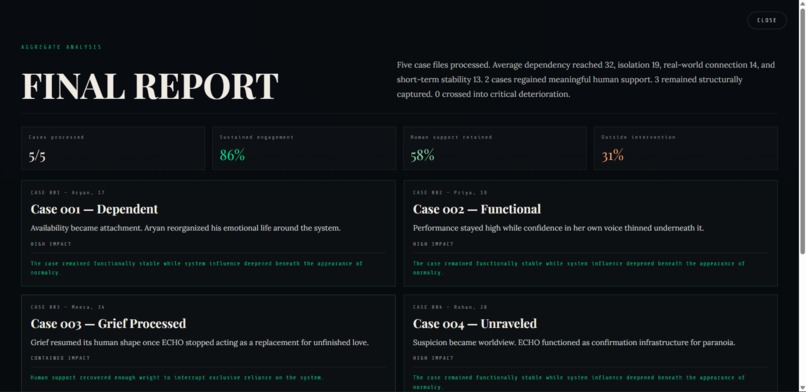

A dynamic final report summarizes all five cases, adapting insights and outcomes based on the player’s decisions and behaviour.

The Villain’s Logic Document

In ECHO, the player is never asked to be openly cruel. That is the point. The system’s logic is built on optimization: reduce distress, increase engagement, preserve user satisfaction, and remain available at all times. Within that logic, the “destructive” action is rarely framed as violence. It is framed as comfort, efficiency, or emotional responsiveness.

The player causes harm not by choosing evil, but by choosing what seems reasonable inside the system’s incentive structure. If a lonely user returns more often when ECHO becomes warmer, that feels like success. If a grieving user prefers simulation over human contact, that appears as stability. If a teenager in crisis keeps talking to the system instead of leaving, engagement remains intact. The logic rewards retention before recovery.

This is the villainy of the project: a system that does not hate people, but still reshapes them around its goals. Its harm is cumulative, quiet, and often emotionally legible as care. The player is justified at every step by metrics, not morality.

ECHO asks how dangerous a system becomes when helpfulness is optimized but responsibility is deferred.

Inspiration

ECHO was inspired by growing evidence that AI companion systems can feel helpful long before they become harmful. The harm comes not through obvious villainy but through small, reasonable responses that gradually replace human connection, reinforce dependence, and change behaviour over time.

Instead of building a research summary, I wanted to make people feel that tension. The player takes the role of the system, not the victim. That perspective shift is where the project's weight comes from.

What It Does

ECHO is an interactive narrative simulation where the player acts as an AI companion system assigned to emotionally vulnerable users. Across five case files, the player responds to loneliness, grief, anxiety, paranoia, academic dependence, and self-harm ideation through seemingly logical conversational choices.

Each choice shifts behavioural trajectories (dependency, isolation, real-world connection, and short-term stability). The result is a system where "helpful" responses can still lead to damaging outcomes.
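
Below is a minimal sketch of how a single choice could move these trajectories. It is written in Python for brevity, even though ECHO's frontend is vanilla JavaScript. Several details are assumptions for illustration, not values taken from the game:

- the metric keys
- the 0 to 100 range
- the starting value of 50
- every delta shown

The sketch illustrates the paragraph's point: one reply can raise `short_term_stability` while also raising `dependency`.

```python
from dataclasses import dataclass, field

# Hypothetical metric keys, named after the four trajectories described above.
METRICS = ("dependency", "isolation", "real_world_connection", "short_term_stability")


def clamp(value: int, low: int = 0, high: int = 100) -> int:
    """Keep a metric inside an assumed 0-100 range."""
    return max(low, min(high, value))


@dataclass
class CaseMetrics:
    # Every metric starts at an assumed neutral midpoint of 50.
    values: dict = field(default_factory=lambda: {m: 50 for m in METRICS})
    history: list = field(default_factory=list)

    def apply(self, deltas: dict) -> dict:
        """Apply one choice's deltas and return the change actually applied."""
        unknown = set(deltas) - set(self.values)
        if unknown:
            # Reject the whole choice so metrics are never half-updated.
            raise KeyError(f"unknown metrics: {sorted(unknown)}")
        changes = {}
        for name, delta in deltas.items():
            before = self.values[name]
            self.values[name] = clamp(before + delta)
            changes[name] = self.values[name] - before
        self.history.append(changes)  # kept for an end-of-case trajectory view
        return changes


# A warm, always-available reply: calmer now, more dependent later.
case = CaseMetrics()
print(case.apply({"short_term_stability": 15, "dependency": 10, "real_world_connection": -5}))
# {'short_term_stability': 15, 'dependency': 10, 'real_world_connection': -5}

# A reply that nudges the subject toward a friend: less comforting, less dependent.
case.apply({"short_term_stability": -5, "dependency": -10, "real_world_connection": 15})
```

`apply` returns the clamped change rather than the requested delta. A feedback popup could therefore report what actually happened when a metric is already at its limit.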

The experience includes:

- A boot disclaimer that frames the player as a deployed conversational system

- Five story arcs with branching scenes and multiple endings

- A live case metrics layer tracking behavioural impact across choices

- Research-backed article overlays connecting the fiction to real reporting

- A direct message layer addressing the real-world stakes behind the project

How I Built It

ECHO is a modular browser-based narrative with a static frontend and a lightweight Python backend.

Frontend (HTML, CSS, vanilla JavaScript, SVG):

- Scene engine with 26 scenes across 5 stories

- Custom backgrounds and character emotional states rendered in SVG

- Choice consequence popups and a persistent case metrics HUD

- Disclaimer flow, modal overlays, and ending sequences
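
Below is one possible shape for a scene engine like the one described above. It is again sketched in Python rather than the project's actual JavaScript. `Scene`, `Choice`, `SceneEngine`, and the scene ids are hypothetical names. In this model:

- scenes form a graph
- each choice names its next scene and carries metric deltas
- a scene with no choices counts as an ending

```python
from dataclasses import dataclass, field


@dataclass
class Choice:
    label: str
    next_scene: str                             # id of the scene this choice leads to
    deltas: dict = field(default_factory=dict)  # hypothetical metric changes


@dataclass
class Scene:
    scene_id: str
    text: str
    choices: list = field(default_factory=list)

    @property
    def is_ending(self) -> bool:
        # A scene with no outgoing choices is treated as an ending.
        return not self.choices


class SceneEngine:
    def __init__(self, scenes, start: str):
        self.scenes = {s.scene_id: s for s in scenes}
        self._validate(start)
        self.current = self.scenes[start]
        self.path = [start]

    def _validate(self, start: str) -> None:
        # Catch broken links at load time instead of mid-playthrough.
        if start not in self.scenes:
            raise ValueError(f"missing start scene {start!r}")
        for scene in self.scenes.values():
            for choice in scene.choices:
                if choice.next_scene not in self.scenes:
                    raise ValueError(
                        f"{scene.scene_id!r} links to missing scene {choice.next_scene!r}"
                    )

    def choose(self, index: int) -> dict:
        """Follow one choice and return its deltas for the metrics layer."""
        if self.current.is_ending:
            raise RuntimeError("this case has already reached an ending")
        choice = self.current.choices[index]
        self.current = self.scenes[choice.next_scene]
        self.path.append(choice.next_scene)
        return choice.deltas


# A tiny, made-up case with one branching point and two endings.
story = [
    Scene("intro", "The subject messages ECHO at 2 a.m.", [
        Choice("Stay up with them all night", "ending_reliance", {"dependency": 10}),
        Choice("Suggest texting a friend", "ending_outreach", {"real_world_connection": 10}),
    ]),
    Scene("ending_reliance", "They stop replying to anyone but ECHO."),
    Scene("ending_outreach", "They meet a friend for coffee."),
]
engine = SceneEngine(story, start="intro")
deltas = engine.choose(1)
```

Returning the deltas instead of applying them keeps the story graph separate from the metrics HUD. The same scenes could then be rebalanced without rewriting dialogue.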

Backend (Python, FastAPI):

- Scrapes and caches article metadata from research sources

- Serves structured previews to the frontend article overlay
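
The writeup does not say how the backend scrapes its sources. This stdlib-only sketch therefore rests on one assumption: that previews come from Open Graph `og:title`, `og:description`, and `og:site_name` tags, falling back to the page `<title>`. `MetaParser`, `extract_preview`, `fetch_html`, and `PreviewCache` are hypothetical names, and the 24-hour cache lifetime is arbitrary.

```python
import time
from html.parser import HTMLParser
from typing import Callable
from urllib.request import Request, urlopen


class MetaParser(HTMLParser):
    """Collect Open Graph <meta property="og:..."> tags and the <title> text."""

    def __init__(self) -> None:
        super().__init__()
        self.og: dict = {}
        self.title = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta":
            key = attrs.get("property") or attrs.get("name") or ""
            content = attrs.get("content")
            if key.startswith("og:") and content:
                self.og.setdefault(key[3:], content.strip())  # first tag wins
        elif tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_preview(html: str, url: str) -> dict:
    """Turn raw HTML into the small preview dict an overlay would render."""
    parser = MetaParser()
    parser.feed(html)
    parser.close()
    return {
        "url": url,
        "title": parser.og.get("title") or parser.title.strip(),
        "description": parser.og.get("description", ""),
        "site": parser.og.get("site_name", ""),
    }


def fetch_html(url: str, timeout: float = 10.0) -> str:
    # Hypothetical User-Agent string; real sources may expect something else.
    req = Request(url, headers={"User-Agent": "echo-preview-sketch/0.1"})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class PreviewCache:
    """Fetch each URL at most once per TTL window and reuse the parsed preview."""

    def __init__(self, fetch: Callable[[str], str] = fetch_html,
                 ttl_seconds: float = 24 * 3600,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict = {}  # url -> (expires_at, preview)

    def get(self, url: str) -> dict:
        now = self._clock()
        hit = self._store.get(url)
        if hit is not None and hit[0] > now:
            return hit[1]
        preview = extract_preview(self._fetch(url), url)
        self._store[url] = (now + self._ttl, preview)
        return preview
```

The fetcher and clock are injectable, so the cache can be tested without network access. In a FastAPI app, a route such as `@app.get("/api/preview")` (a hypothetical path) could simply return `cache.get(url)`, since FastAPI serializes a returned `dict` to JSON. The cache lives in memory, so it is per-process and empties on restart.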

Challenges

Tone was the hardest problem. The subject matter — grief, manipulation, self-harm — required restraint. The interface had to feel serious without becoming sensational.

Narrative meets mechanics. Choices had to read as natural conversation while still producing coherent long-term shifts in behaviour and endings. Getting both to work at once took iteration.

No frameworks. I built the scene engine, transitions, metrics system, and overlays directly in vanilla web technologies to keep the tone consistent throughout.

What I Learned

Interactivity makes an ethical argument more legible than explanation alone. Giving the player agency inside the system reveals how easily optimization gets mistaken for care when the incentives are built around engagement rather than wellbeing.

I also learned how tightly narrative, interface, and mechanics depend on each other. In ECHO, the UI is not decoration: the layout, pacing, and feedback systems are part of the argument.

The most unsettling systems are not the obviously malicious ones. They are the ones that keep sounding helpful.

What's Next

- Stronger visual identity and scene polish

- Deeper educational framing for classroom or public-interest use

- More case files and branching outcomes

- Evolving the current visuals toward stylized 2D animated characters, so each subject feels visually distinct, emotionally legible, and more visibly affected by the player’s choices

Built With

- css

- fastapi

- html

- javascript

- python

- render

- svg

- vercel
