Inspiration

ECHO was directly inspired by our personal experiences as international students relocating to Kigali, Rwanda, where we faced an "invisible wall" of language barriers in our daily lives. We observed how these communication gaps created anxiety and limited genuine connection within the community.

For the AI Partner Catalyst hackathon, we were driven by the ElevenLabs Challenge to not just bridge these gaps, but to make cross-language communication truly human. We wanted to move beyond simple word-for-word translation and build an application that could translate personality and identity, ensuring that a user's unique voice is heard and recognized, even in another language.

Our landing page: A simple promise to solve a complex problem.

What it does

ECHO is a real-time voice translation app that provides a seamless conversational bridge. This project integrates ElevenLabs to deliver a revolutionary user experience with two core features:

Hyper-Realistic Voice Translation: Instead of standard, robotic text-to-speech, we use ElevenLabs' industry-leading AI voice generation. The result is a stunningly natural and expressive voice for Kinyarwanda translation, making conversations feel authentic and engaging.

Personal Voice Cloning: This is our breakthrough feature. When you speak into ECHO, the app captures the unique characteristics of your voice. The Kinyarwanda translation is then spoken in a voice that sounds just like you. This preserves your vocal identity, creating a magical and deeply personal connection that transcends traditional translation.

The user journey is simple and intuitive. It starts with a clear choice:

...and leads to our clean, minimalist translation interface, ready for conversation:

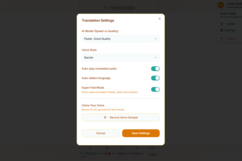

But the real magic happens in the settings. This is where the user can enable our killer feature, cloning their own voice to make every translation personal.

Settings panel, featuring the "Clone Your Voice" option powered by ElevenLabs.

How we built it

Our application is a powerful synergy between Google's AI and ElevenLabs, built as a mobile-first Progressive Web App (PWA).

The technical flow is as follows:

- Audio Input: A user speaks in English into our React frontend.

- Backend Processing: The audio is sent to our lightweight Express.js backend.

- Understanding & Translation (Google AI): We integrated directly with the Google Gemini Pro API. This powerful model handles the initial Speech-to-Text and the core Text-to-Text translation into Kinyarwanda.

- Voice Generation & Cloning (ElevenLabs): The translated Kinyarwanda text, along with a sample of the user's original voice, is sent to the ElevenLabs API. ElevenLabs works its magic, generating the final audio in Kinyarwanda but with the cloned vocal characteristics of the user.

- Audio Output: The final, high-quality audio is streamed back to the user's device, completing the seamless, personalized translation.
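The flow above can be sketched as a single backend function. This is a minimal sketch under stated assumptions, not ECHO's actual code: `translateSpeech` and the three injected service functions (`transcribe`, `translate`, `synthesize`) are hypothetical names standing in for the Gemini and ElevenLabs calls, whose real signatures are not shown in this write-up.

```javascript
// Hypothetical pipeline sketch. The `services` object stands in for
// wrappers around the Gemini and ElevenLabs APIs; none of these names
// come from either vendor's SDK.
async function translateSpeech(audio, voiceSample, services) {
  // Step 1: Speech-to-Text on the English recording.
  const englishText = await services.transcribe(audio);

  // Step 2: Text-to-Text translation into Kinyarwanda
  // ("rw" is the ISO 639-1 code for Kinyarwanda).
  const kinyarwandaText = await services.translate(englishText, "rw");

  // Step 3: Speech generation using the user's voice sample.
  const translatedAudio = await services.synthesize(kinyarwandaText, voiceSample);

  return { englishText, kinyarwandaText, translatedAudio };
}
```

Passing the services in as an argument keeps the orchestration independent of any particular SDK, so the same function could be exercised with stubbed services during testing and with real API wrappers inside an Express.js route handler.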

Challenges we ran into

Our biggest challenge was minimizing latency. Introducing voice cloning, a computationally intensive task, risked compromising the real-time feel of the conversation. We worked hard to optimize our backend logic, ensuring that the API calls to both the Google Gemini API and the ElevenLabs API were orchestrated as efficiently as possible, so that this incredible feature didn't come at the cost of a snappy user experience.
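One common way to reduce latency in a pipeline like this is to start any work that does not depend on the translated text, such as preparing the cloned voice, while transcription and translation are still running. The sketch below illustrates that idea with `Promise.all`. It is an assumption about how such orchestration could look, not a description of ECHO's backend, and `prepareVoice`, `transcribe`, `translate`, and `synthesize` are hypothetical stand-ins for the real API wrappers.

```javascript
// Hypothetical concurrency sketch: overlap voice preparation with
// transcription + translation instead of running all steps in sequence.
async function translateSpeechConcurrently(audio, voiceSample, services) {
  // Voice preparation only needs the sample, so start it immediately.
  const voicePromise = services.prepareVoice(voiceSample);

  // Translation depends on the transcript, so these two stay sequential.
  const textPromise = services
    .transcribe(audio)
    .then((englishText) => services.translate(englishText, "rw"));

  // Wait for both branches; total wait is roughly the slower branch,
  // not the sum of the two.
  const [voiceId, kinyarwandaText] = await Promise.all([voicePromise, textPromise]);

  // Final step needs both results.
  return services.synthesize(kinyarwandaText, voiceId);
}
```

Whether this helps in practice depends on how long each call takes; if voice preparation is cached per user, the gain comes mainly from skipping that step on later requests.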

Accomplishments that we're proud of

We are incredibly proud of breaking the silent wall that the language barrier creates between foreigners and Rwandan locals, making settling in and collaboration easier. We are also proud of the "moment of magic" when a user hears their own voice speaking a language they have never learned. It's a surreal and powerful experience that goes beyond simple utility. We've created a tool that helps you be yourself, in any language, and that feels like a genuine step forward in creating more empathetic and personal technology.

What we learned

We learned that the quality of a voice is not a secondary feature; it is core to the user experience. Integrating ElevenLabs' high-quality voices made a night-and-day difference. Furthermore, we confirmed that deep personalization, like voice cloning, is a massive differentiator that can create a powerful emotional connection with users.

What's next for ECHO

The success of this integration has validated our vision. Our next steps are to expand our language support to more low-resource African languages and integrate this superior voice technology into our long-term vision for real-time call translation, truly making ECHO the most human-centric translation tool in the world.

Built With

- elevenlabs

- gcp

- gemini

- javascript

- replit
