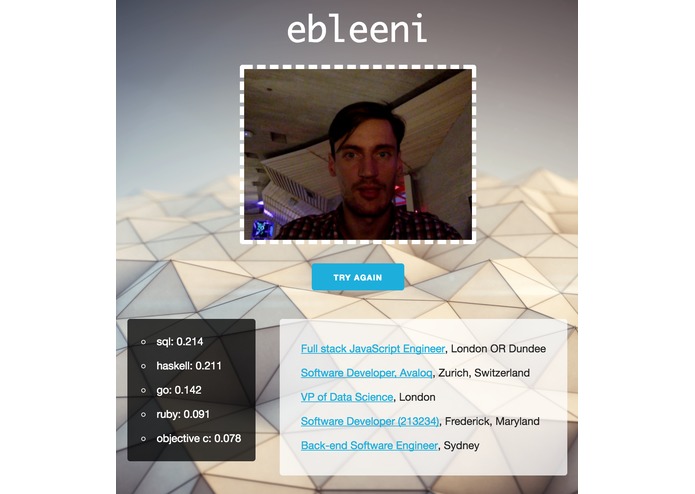

Programmer skills prediction by photo

-

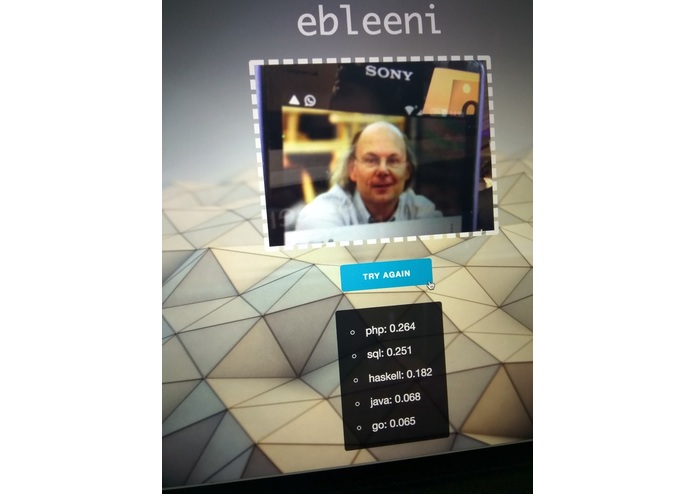

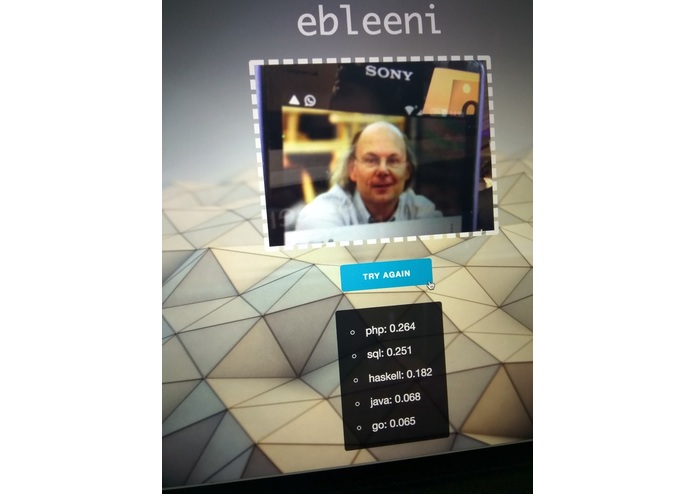

Bjarne Stroustrup's secret love is PHP

-

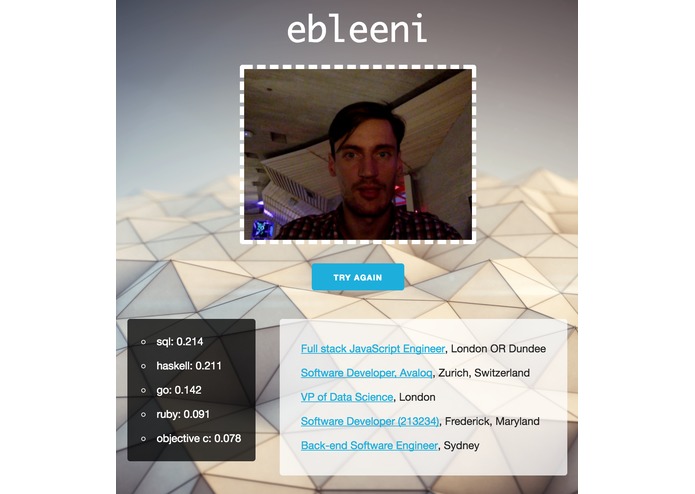

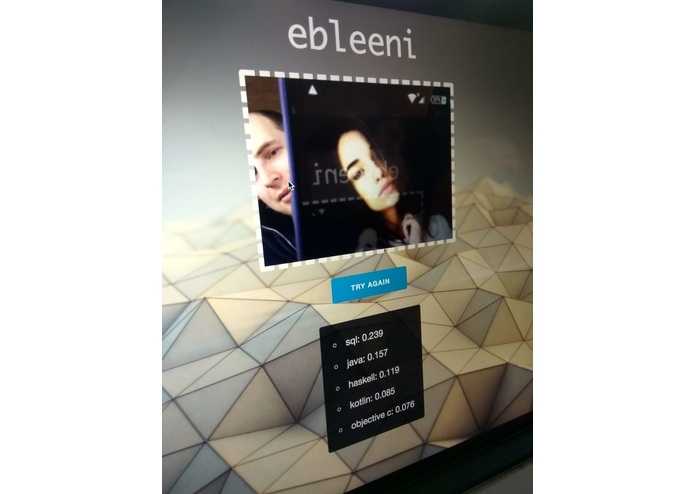

Somebody's career could have been different

-

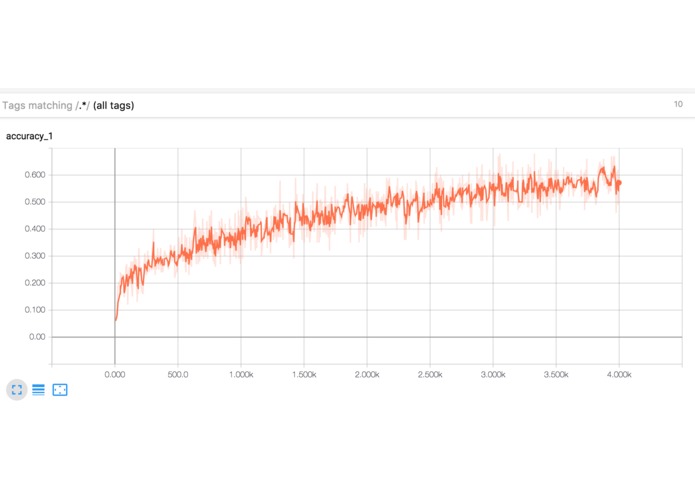

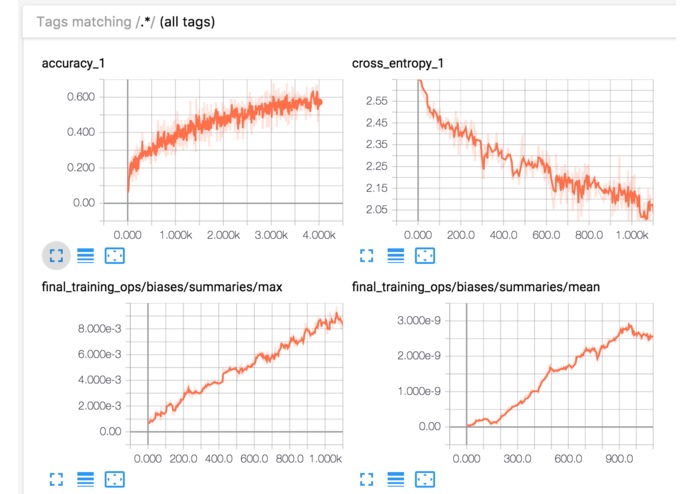

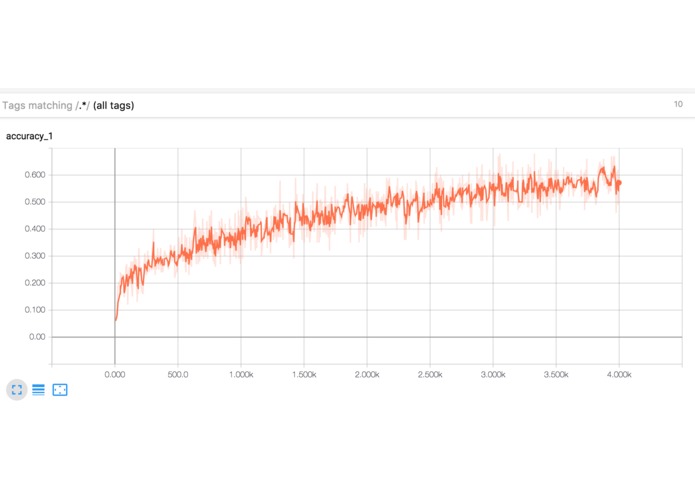

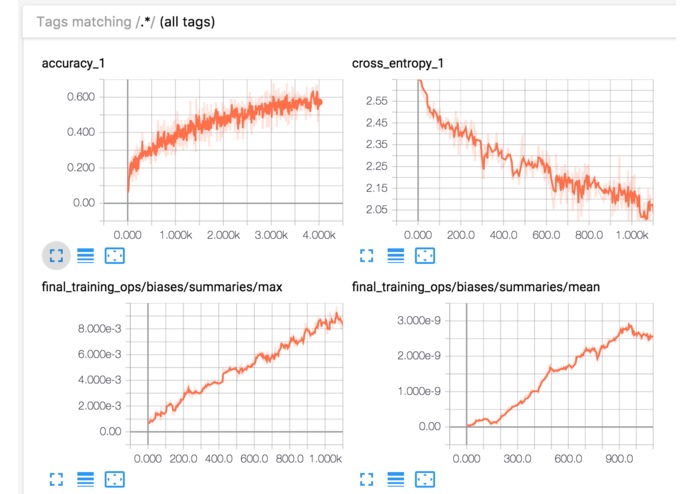

Tensorflow neural network training

-

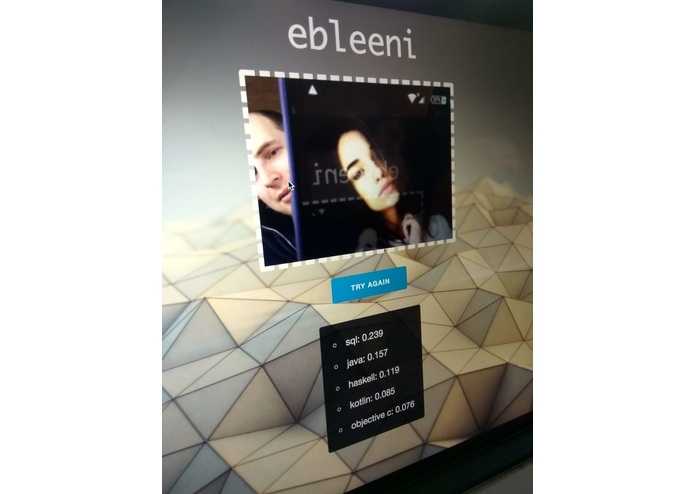

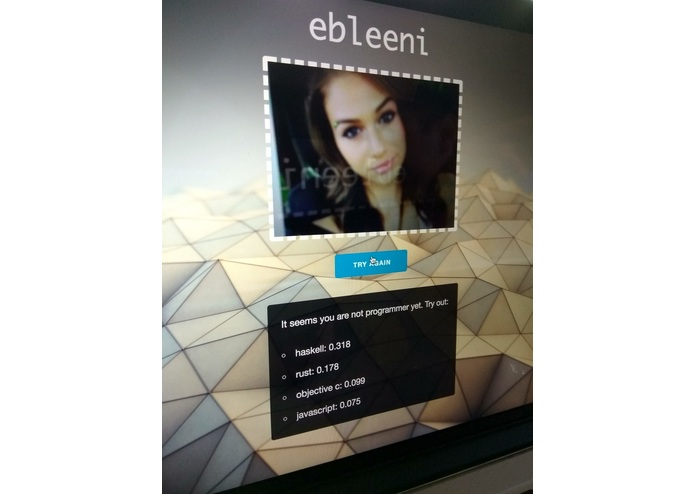

Ebleeni development: when you need a girl for testing

-

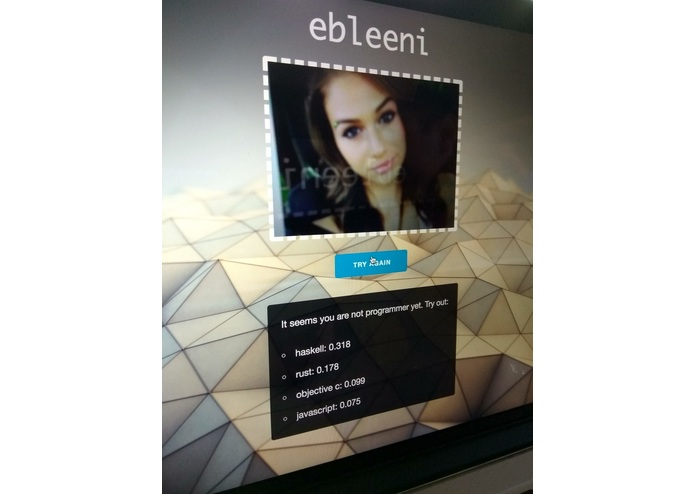

Ebleeni development: everybody should try coding

-

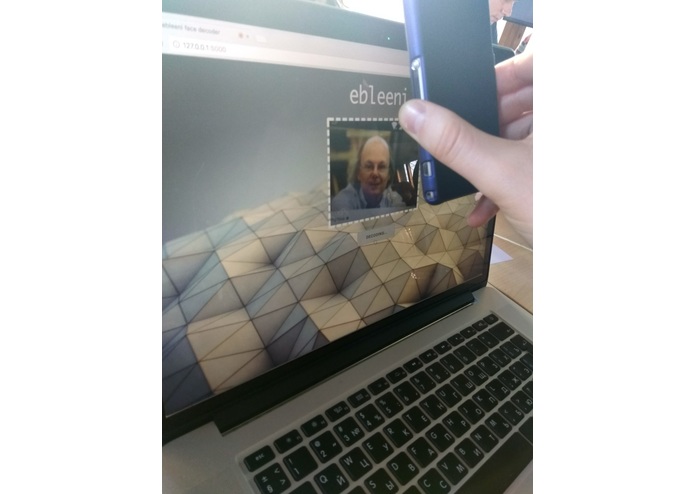

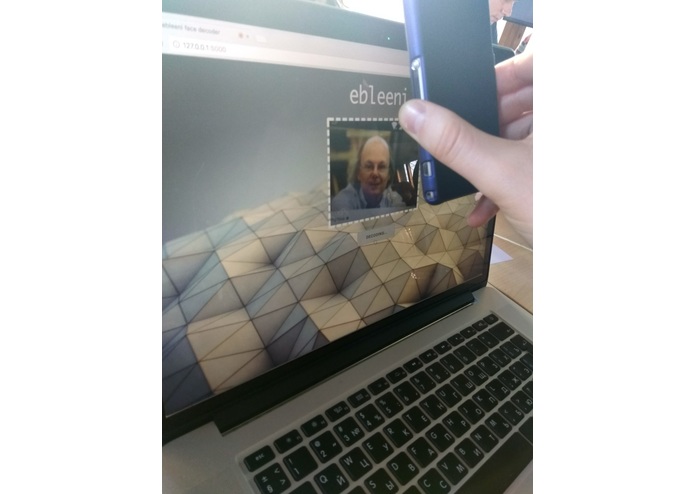

Ebleeni development: Bjarne on line

WARNING

Works in Google Chrome only. No Safari support yet, sorry.

Inspiration

Machine learning has made a lot of progress in recent months, but it is still far from wide use in day-to-day tasks. We tried to improve parts of the job-finding process, contextual advertising and educational recommendations by adding biometric data.

Idea

We believe biometric data collection may become a game changer in the hiring process, in advertising and in a whole range of recommendation systems.

Hiring and job recommendations. If we collect enough biometric data from people working in different industries and define some criteria of success, we should be able to find groups of professionals who do their work well. Using that information, we can check whether a user has attributes similar to the people in one group or another. Our system would then be able to recommend suitable vacancies to the person based on their biometric data.
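The group-matching idea above can be sketched as a nearest-centroid comparison. This is only an illustration under assumptions: the feature vectors, the group names and the choice of cosine similarity are hypothetical and are not taken from the prototype.

```python
import math


def centroid(vectors):
    """Average a list of equal-length feature vectors component-wise."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def closest_group(user_vector, groups):
    """Return (group_name, similarity) for the most similar group centroid."""
    scored = {
        name: cosine_similarity(user_vector, centroid(members))
        for name, members in groups.items()
    }
    best = max(scored, key=scored.get)
    return best, scored[best]


# Hypothetical toy data: each professional is a 3-number feature vector.
GROUPS = {
    "backend": [[0.9, 0.1, 0.2], [0.8, 0.2, 0.1]],
    "design": [[0.1, 0.9, 0.7], [0.2, 0.8, 0.9]],
}

group, similarity = closest_group([0.85, 0.15, 0.1], GROUPS)
print(group, round(similarity, 3))
```

A real system would need far richer features and a validated notion of "success"; the sketch only shows the shape of the comparison.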

The hiring process can also be simplified notably. HR staff can use recommendations from our system to find candidates among large crowds, for example at conferences or other mass events. An "HR booth" could automatically collect the required biometric data: appearance, movement, behaviour during puzzle solving and so on. The collected data would then be used to decide whether the person matches any open vacancy.

Advertising. Ad targeting can be greatly improved if we include biometric data. A few examples: people with ginger hair and pale skin sunburn easily, so we can suggest a strong sunscreen if we know that such a sun-wary customer is going on vacation.

We could also determine which sports a customer does and recommend sports nutrition and equipment based on that.

Prototype

We aimed to collect some basic biometric data about programmers and classify them by their success in one programming language or another. In GitHub we trust. After that, we could check whether a user looks like the programmers in a given group and so guess which programming languages the user writes in. Additionally, we recommend vacancies matching the skills we found.

What it does

A neural network tries to determine whether the person in front of the camera can write code in one of several programming languages: C++, Python, Go and a bunch of others. After that, the program suggests vacancies from GitHub Jobs that match the recognized skills.
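The flow from classifier output to job suggestions might look roughly like the sketch below. It assumes the network returns one raw score per language; the label list, the scores and the in-memory `JOBS` records are made up for illustration and do not reproduce the GitHub Jobs API.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(value - shift) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_languages(logits, labels, k=2):
    """Return the k most probable (label, probability) pairs, best first."""
    ranked = sorted(zip(labels, softmax(logits)), key=lambda p: p[1], reverse=True)
    return ranked[:k]


def matching_jobs(jobs, languages):
    """Keep jobs that list at least one of the given languages as a skill."""
    wanted = {language.lower() for language in languages}
    return [job for job in jobs if wanted & {s.lower() for s in job["skills"]}]


# Hypothetical labels, scores and job records.
LABELS = ["C++", "Python", "Go"]
JOBS = [
    {"title": "Backend engineer", "skills": ["Go", "Docker"]},
    {"title": "Data engineer", "skills": ["Python"]},
    {"title": "Game developer", "skills": ["C++"]},
]

ranked = top_languages([0.2, 2.1, 1.3], LABELS, k=2)
suggested = matching_jobs(JOBS, [name for name, _ in ranked])
print([name for name, _ in ranked], [job["title"] for job in suggested])
```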

Usage

- Look into the camera.

- Press the "Decode face" button.

- Get a list of programming languages that suit you well.

- Get a list of jobs matching the skills found.

- Share your results on social networks.

How we built it

We trained a neural network with TensorFlow to recognize which kind of face it faces. To train it, we downloaded hundreds of photos, located the portrait areas and created many portrait sets split by programming language. Photos of hackers were taken from GitHub avatars. Photos of "normal" people were taken from Google.
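One way to organize portrait sets split by language is a directory per label, indexed before training. The sketch below assumes a hypothetical layout such as `portraits/python/*.jpg` and `portraits/go/*.jpg`; the folder names, the helper names and the 80/20 split are illustrative and not the layout we actually used.

```python
import random
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def index_portraits(root):
    """Return (path, label) pairs, using each subdirectory name as the label."""
    samples = []
    for class_dir in sorted(Path(root).iterdir()):
        if not class_dir.is_dir():
            continue
        for image in sorted(class_dir.iterdir()):
            # Skip anything that is not an image file.
            if image.suffix.lower() in IMAGE_SUFFIXES:
                samples.append((image, class_dir.name))
    return samples


def split_samples(samples, validation_fraction=0.2, seed=0):
    """Shuffle reproducibly and return (training, validation) lists."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_validation = int(len(shuffled) * validation_fraction)
    return shuffled[n_validation:], shuffled[:n_validation]
```

Calling `split_samples(index_portraits("portraits"))` would then give the two lists to feed into whatever training pipeline is used.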

Challenges we ran into

It was our first ML project, and it uses a technology stack that was new for our team. We had to learn TensorFlow and data mining basics, as well as video capture via the HTML camera API. Our demo application is hosted on Google App Engine and DigitalOcean servers, so deploying it required some juggling with Docker, setting up HTTPS with Let's Encrypt, and uploading gigabytes of files to make our neural network breathe.

Accomplishments that we're proud of

97% tulip recognition accuracy. A funny deployment process. A complete web application with a neural network, created in less than two days.

What we learned

How to recognize faces, daisies, roses and tulips.

What's next for ebleeni

Integration into hiring and advertising platforms. More detailed feature collection. Biometric ad recommendations.

Built With

- digitalocean

- docker

- github

- github-jobs

- google-app-engine

- html5

- imagenet

- python

- tensorflow
