-

-

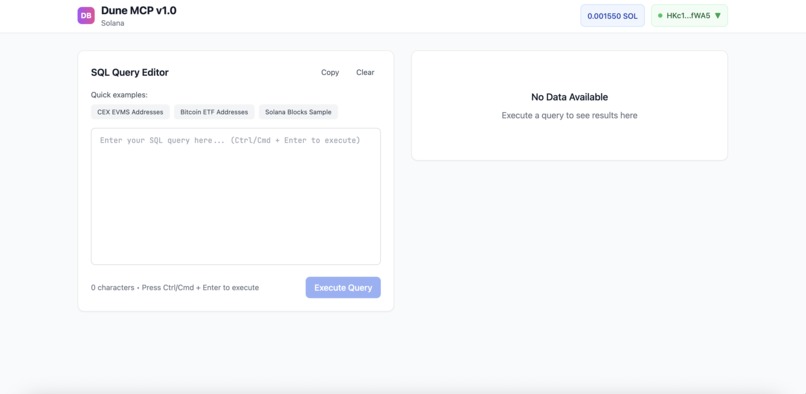

1) Initial MCP tool state with just Phantom wallet connected

-

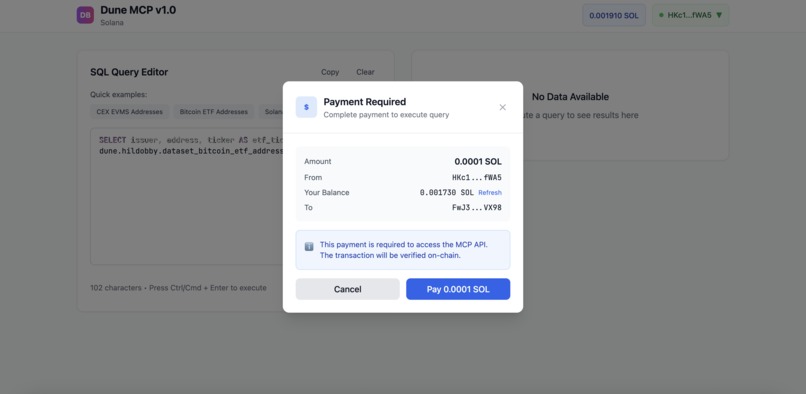

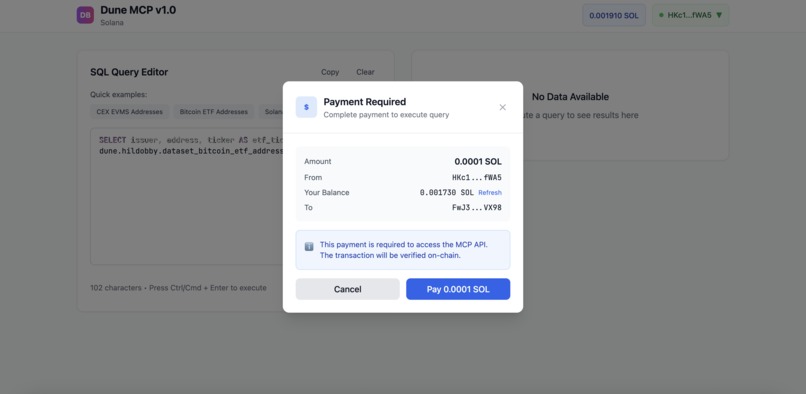

2) Payment middleware (x402) enforced prior to MCP call to Dune API

-

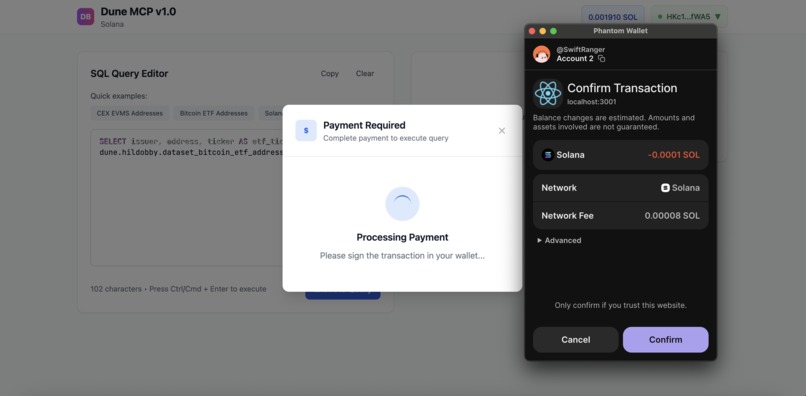

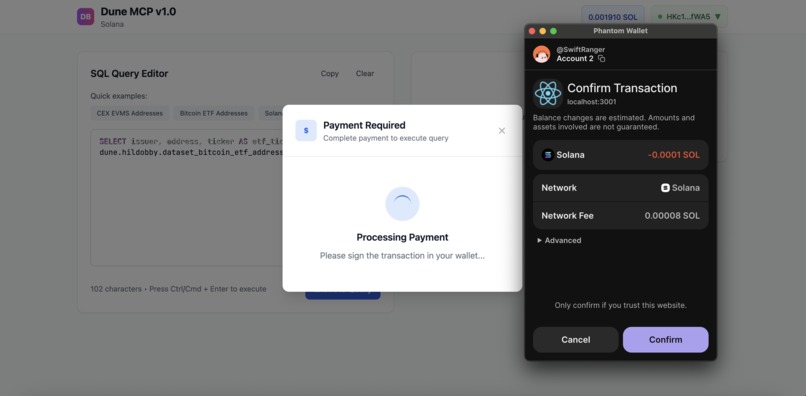

3) Connection to Phantom wallet to confirm transaction

-

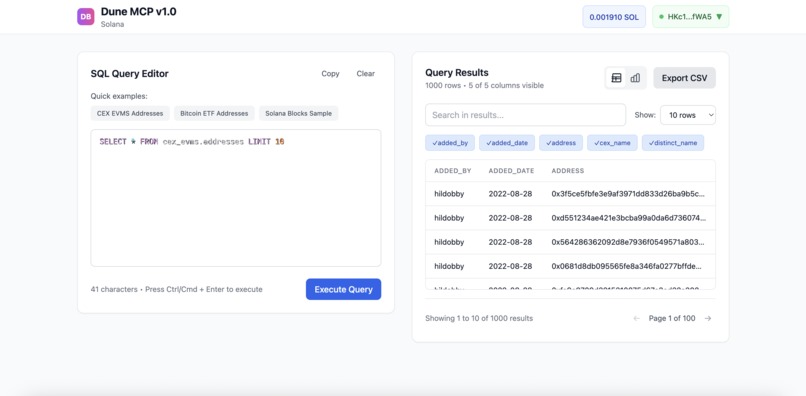

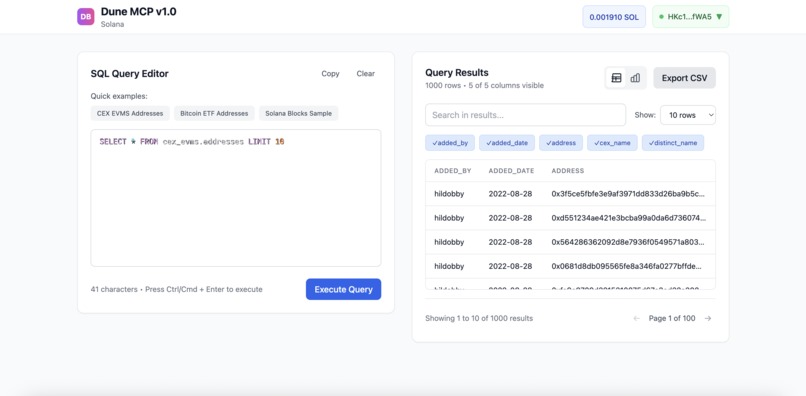

4) Initial Dune API data return after payment auth through x402

-

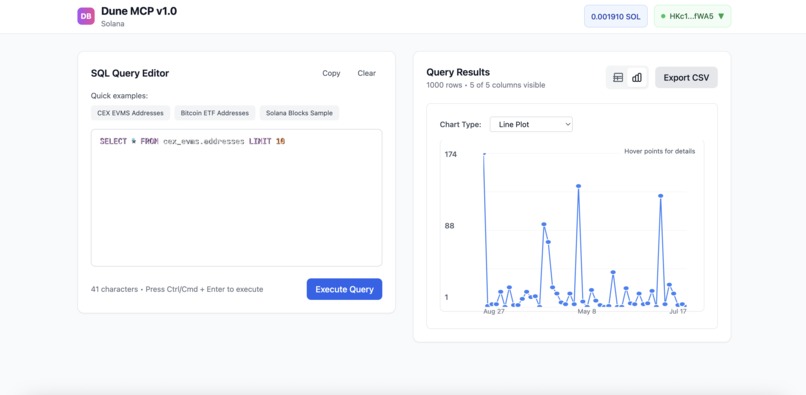

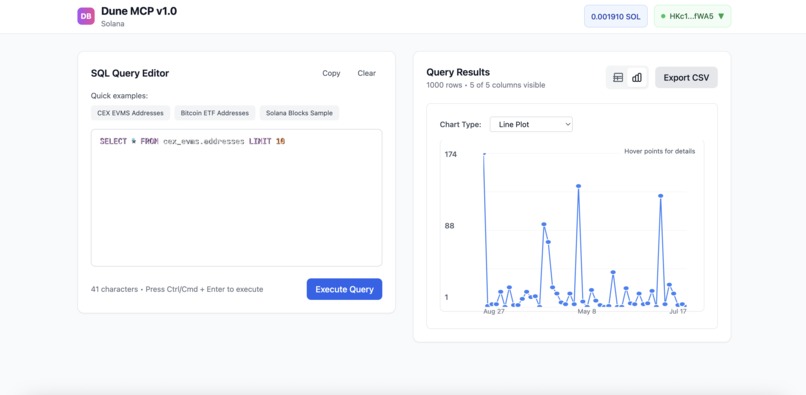

5) Time series analysis based off Dune API query return

-

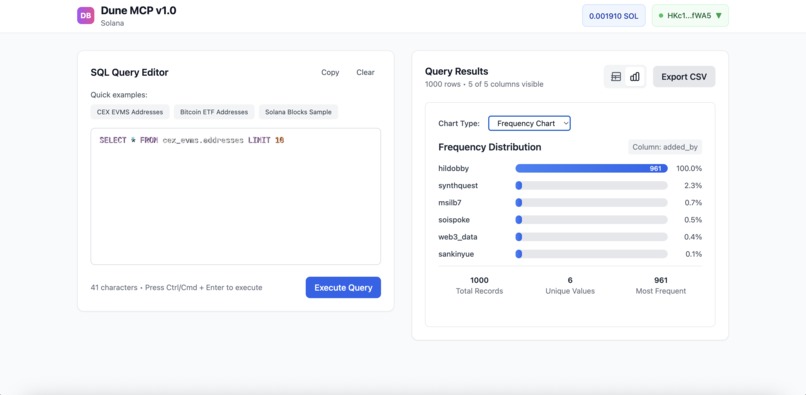

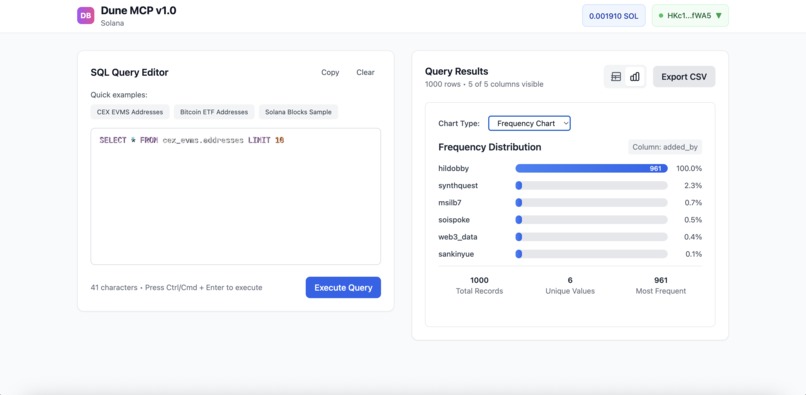

6) Frequency analysis based off Dune API query return

-

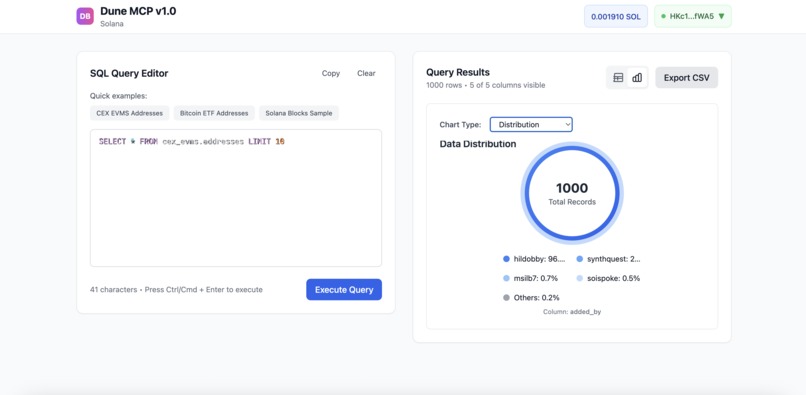

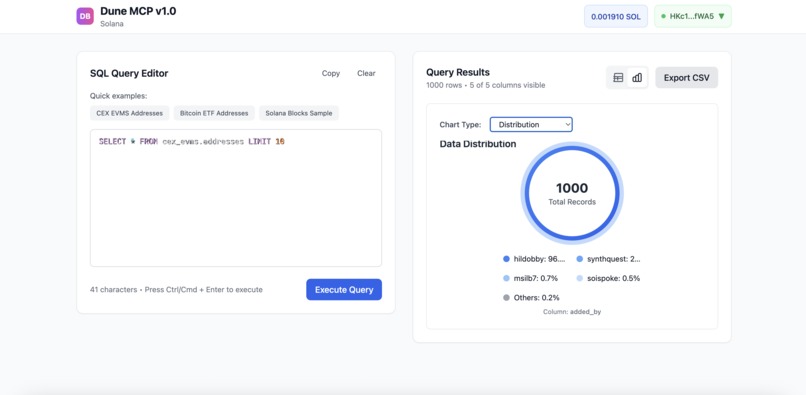

7) Distribution analysis based off Dune API query return

Inspiration

As someone who is chronically on Dune (Dune Analytics), a site for checking out any and all incredible raw on chain data from token transfers to DeFi volumes to literal NFT sales, I always thought there were many limitations to their discovery workflow. Let's say I was interested in looking into many questions like "what was the largest NFT flash loan in the last 24 hours?" Or "did the new DeFi protocol’s TVL dip after the upgrade?" The process of searching for the right query takes a LONG time, so this is where my inspiration really began to form. I wanted to make a MCP for Dune so that instead of humans (who are objectively slower at search and querying tasks than "AI agents"), LLMs or a personal fine-tuned model could just gather that data instantly with a simple HTTP request to the Dune MCP server. As I kept thinking, I realized that in order to keep costs minimal, I could integrate x402 payment auth within Solana to enforce micro-transactions as a pay-per-use model. This project means even more to me than just for the hackathon since it's something I would use myself to gather data from Dune in a much faster, cheaper (sometimes) way.

What it does

The best and easiest way to explain the Dune MCP is with the following example: Let's say there's an AI agent whose task is to figure out "What was the total daily trading volume for the USDC or SOL on Solana yesterday?" The AI agent would then structure a request to the Dune MCP to essentially query the data. As a side note, this query would be preset such that the agent's query would be matched on a "most close" basis to the queries Dune currently has. Once the MCP server intercepts the request, a valid payment auth header will prompt the agent to fill the payment details like "amount" (a preset amount I have set), "receiver_wallet address", etc. In a real-life scenario, the agent would get a HTTP 402 payment required error since the agent wouldn't have known to pay within the MCP. However, to simulate x402 payments in my MCP, I made it so the agent aka user would need to connect a wallet (like Phantom) with enough funds and then pay for the Dune API use. Once this payment is verified, the agent can retrieve the data from the respective query. This is the main part of the tool I built, but I also added on graphical visualizations as well as an option for the agent to use.

How we built it

The first few steps of building the tool was actually creating a DuneService for API communication to the actual Dune API. This consisted of combing through the Dune API docs and using the execute_query method as well as get query to ensure the correct query was being executed. Then, I created a query matching system through a simple JSON for testing, where a sample user/agent SQL query was related to a respective query_id based off of how close the user query was to the actual query scraped from Dune itself. Then I began building on Solana by implementing the Solana web3.js integration. This was also used to work on the payment middleware (x402) to essentially verify transactions (If that doesn't sound like x402, I'll explain why in the challenges next). For this, the essential parts were building the paymentModal and transaction signing components. I then optimized for payment response to ensure that as soon as the payment transaction began on Solana, the data would render. The way this MCP was built around was using the transaction hash/id to verify that a payment had successfully went through and then that transaction hash was used as an argument for the API I created that pulled from the execute_query from Dune. While all this backend work was going, I worked on basic frontend components to ensure the user could see what was happening with the data table population. In the case of the data table, I implemented pagination to ensure if 9000 row table was loaded it wouldn't exceed a user's machine memory. Finally, I also implemented graph visualizations within JS and TailwindCSS based on the table's data.

Challenges we ran into

The biggest challenge I ran into was with x402 and the Solana integration with it. x402 is extremely new so it is only supported by Base and ETH networks as far as I know. While working, I had seen Coinbase's Github docs on added Solana support as well within x402. However, when I tried to use their Config, the x402 functioned ALMOST like a facilitator in that it had verified and signed the transaction. But, the caveat and honestly the bottleneck in my program was that when a transaction occurred, even though the transaction hash would be used for getting access to the Dune API through the MCP, what was happening was that the transaction hash wasn't given a state or stored. What this meant was that a user could theoretically (and I tested this too) keep using the same transaction hash (through a CURL to my API) and keep getting the data over and over again without any issue. This contradicted the entire point of the x402 standard and the pay per use idea. So my solution was to create a local postgres database to store transaction hashes and essentially give them a state by saying that whenever a request to my API was made, the API would only return success if the hash was NOT in my database.

Accomplishments that we're proud of

I think the biggest accomplishment I'm proud of is in a way my biggest challenge too. I was proud of being able to think of a way to solve the problem I was having an issue with in a speedy manner honestly. I'm also happy with all the testing I have done to ensure there are no wedges to access my API without having to go through the MCP server.

What we learned

I think the biggest learning for me was trying to understand a new service/tool like x402 on chain and try to implement it. Even though the initial integration was confusing to say the least, I enjoyed reading through the whitepaper, other docs, and even Brian Amrstrong's X post about Solana and x402. I think a big takeaway for me in this entire hackathon is to never be afraid of trying something new. Even if you have a thousand errors in the journey of building, the end result I worked towards and the experience I gained was amazing.

What's next for Dune MCP

I think the next steps for the Dune MCP is to use a more automated way of grabbing all the queries (query_ids) from Dune with their paid subscription because currently I only have scraped a few to test with. This would mean just using the create_query API on Dune's paid plan. Another development is actually working on the x402 system to make it more robust. While I implemented a safety feature to ensure transaction hashes can't be reused, there's still potential for improvement like looking into the facilitator docs for Base and configuring them towards Solana. Solana makes the most sense for x402 and can be scaled very fast if done properly because of Solana's extremely low latency, so that's something I'm definitely looking forward to working on!

Built With

- css

- duneanalytics

- html

- http

- javascript

- postgresql

- rpc

- solana

- tailwind

- typescript

Log in or sign up for Devpost to join the conversation.